Month: June 2015

Separating 5G fact from hype: Is Massive MIMO a Solution or Dead End?

Background:

Despite having only a “vision” from ITU-R, with no definition or specs for fifth generation wireless networks (5G), mobile vendors and wireless network operators keep talking about 5G-ready products and deployments, even though they’re not really sure what 5G is yet. In the last year, we have seen carriers announce “4.5G” and “Pre-5G” technology, and islands vying for the first 5G network. In reality, carriers are simply marketing 5G packages with speeds that barely reach what is defined today as 4G.

For the record, true 4G is LTE Advanced (see reference below), of which only a few features, such as Carrier Aggregation, have been deployed. Almost all the “4G-LTE” deployments are really 3.5G.

Karl Bode reported previously that the ITU-R declared no major wireless carrier was technically deploying 4G networks since none were capable of speeds over 100 Mbps. Yet many wireless carriers simply ignored the declaration, with T-Mobile arguing their HSPA+ build was the “largest 4G network,” while Sprint, AT&T and Verizon also made “4G” part of marketing for their respective Mobile WiMax, HSPA+ and LTE networks.

To turn their hype into reality, carriers pressured the ITU-R to redefine 4G so that almost every current wireless network was deemed 4G. Consequently, it’s looking more likely that over the next few years, the definition of 5G will be lowered to make sure that improved 4G networks are included in the definition of 5G.

ITU-R Status on 5G now called IMT-2020:

In early 2012, ITU-R embarked on a programme to develop “IMT for 2020 and beyond”, setting the stage for “5G” research activities that are emerging around the world. As of June 19, 2015, ITU-R finally established the overall roadmap for the development of 5G mobile and defined the term it will apply to it as “IMT-2020.”

With the finalization of its work on the “Vision” for 5G systems at a meeting of ITU-R Working Party 5D in San Diego, CA, ITU defined the overall goals, process and timeline for the development of 5G mobile systems. This ongoing 5G work plan is being done by ITU in close collaboration with governments and the global mobile industry.

The ITU-R Working Party 5D San Diego, CA meeting also agreed that the work should be conducted under the name of IMT-2020, as an extension of the ITU’s existing family of global standards for International Mobile Telecommunication systems (IMT-2000 and IMT-Advanced) which serve as the basis for all of today’s 3G and 4G mobile systems.

The next step is to establish detailed technical performance requirements for the radio systems to support 5G, taking into account the needs of a wide portfolio of future scenarios and use cases, and then to specify the evaluation criteria for assessment of candidate radio interface technologies to join the IMT-2020 family. The ITU-R Radiocommunication Assembly, which meets in October 2015, is expected to formally adopt the term “IMT-2020.” These new systems, set to become available in 2020, will usher in new paradigms in connectivity in mobile broadband wireless systems to support, for example, extremely high definition video services, real time low latency applications and the expanding realm of the Internet of Things (IoT).

We think a huge segment of the IoT market will require cellular communications (mostly 3G or LTE, but NOT LTE Advanced or 5G).

“The buzz in the industry on future steps in mobile technology – 5G – has seen a sharp increase, with attention now focused on enabling a seamlessly connected society in the 2020 timeframe and beyond that brings together people along with things, data, applications, transport systems and cities in a smart networked communications environment,” said ITU Secretary-General Houlin Zhao. “ITU will continue its partnership with the global mobile industry and governmental bodies to bring IMT-2020 to realization.”

Ongoing ITU information on the development of IMT-2020 will be available here.

5G NORMA project to help define 5G radio architecture:

The 5G Infrastructure Public-Private Partnership (5GPPP) initiative has started the 5G NORMA (5G Novel Radio Multiservice adaptive network Architecture) project, set up to define the overall 5G mobile network architecture, including radio and core networks, needed to meet 5G multiservice requirements. The consortium is composed of 13 partners among leading industry vendors, operators, IT companies, small and medium-sized enterprises and academic institutions.

5G NORMA will propose an end-to-end architecture that takes into consideration both radio access network (RAN) and core network aspects. The consortium will work over a period of 30 months from July, to meet the key objectives of creating and disseminating innovative concepts on the mobile network architecture for the 5G era.

The project will seek to underpin Europe’s leadership position in 5G by breaking from the rigid legacy network paradigm. The architecture will adapt the use of mobile network (RAN and core network) resources on demand to the service requirements, to variations in traffic demand over time and location, and to the network topology, including the available front/backhaul capacity. The consortium envisions that the architecture will enable unprecedented levels of network customisability to ensure that stringent performance, security, cost and energy requirements are met. It will also provide API-driven architectural openness, fueling economic growth through over-the-top innovation.

The project partners, spread across six countries, include leading mobile operators (Orange, Deutsche Telekom, Telefonica), network vendors and IT companies (Nokia Networks, Alcatel-Lucent, ATOS), SMEs (Nomor Research, Azcom Technology) and universities (King’s College London, Technische Universität Kaiserslautern, and Universidad Carlos III de Madrid). More info on NORMA is here.

Massive MIMO for 5G:

The next generation wireless networks need to accommodate 1000x more data traffic than contemporary wireless networks. Since the spectrum is scarce in the bands suitable for coverage, the main improvements need to come from spatial reuse of spectrum; many concurrent transmissions per unit area. This is made possible by the massive MIMO technology, where the access points are equipped with hundreds of antennas. These antennas are phase-synchronized and can thus radiate the data signals to multiple users such that each signal only adds up coherently at its intended user.
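The coherent-combining idea in the paragraph above can be illustrated with a small numerical sketch. It assumes conjugate (matched-filter) precoding, which is only one simple way to steer signals in a massive MIMO array, and it models each antenna-to-user channel as a unit-magnitude coefficient with a random phase. The function names, the 128-antenna array size, and the channel model are illustrative assumptions, not taken from any 5G specification.

```python
import math
import random


def random_channel(num_antennas, rng):
    """One unit-magnitude channel coefficient with a random phase per antenna (assumed model)."""
    return [complex(math.cos(p), math.sin(p))
            for p in (rng.uniform(0.0, 2.0 * math.pi) for _ in range(num_antennas))]


def received_amplitude(channel, weights):
    """Magnitude of the sum of all antenna signals as seen by one user."""
    return abs(sum(h * w for h, w in zip(channel, weights)))


rng = random.Random(0)          # fixed seed so the sketch is repeatable
num_antennas = 128              # assumed array size ("hundreds of antennas")

h_intended = random_channel(num_antennas, rng)   # channel to the intended user
h_other = random_channel(num_antennas, rng)      # channel to some other user

# Conjugate precoding: each antenna pre-rotates its signal by the opposite
# of its channel phase, so all contributions arrive in phase at the intended user.
weights = [h.conjugate() for h in h_intended]

coherent = received_amplitude(h_intended, weights)  # phases align: grows like N
leakage = received_amplitude(h_other, weights)      # phases random: grows like sqrt(N)

print(f"intended user amplitude: {coherent:.1f}")
print(f"other user amplitude:    {leakage:.1f}")
```

At the intended user the amplitude grows in proportion to the number of antennas, while at any other user the contributions arrive with unrelated phases and largely cancel. That gap is what allows many concurrent transmissions in the same spectrum.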

Over the last couple of years, massive MIMO has gone from being a theoretical concept to becoming one of the most promising ingredients of the emerging 5G technology. This is because it provides a way to improve the area spectral efficiency (bit/s/Hz/area) under realistic conditions, by upgrading existing base stations. Many experts think that massive MIMO is a commercially attractive solution since 100x higher efficiency is possible without installing 100x more base stations.

Maybe not? According to co-authors Jin Liu and Hlaing Minn, there are serious analog front end design challenges for Massive MIMO as noted in this just published IEEE ComSoc Technology News article.

More HYPE- does it ever end?

Telecom firm Ericsson is testing a new 5G device on the streets of Stockholm, Sweden and Plano, Texas, which the company says will revolutionize mobile technology.

“5G technology will also tap into new high-frequency spectrum known as the millimeter waves, which today are unusable for mobile communications. However, in the future the spectrum could open up thousands of megahertz of new frequencies for wireless broadband use, thereby adding tremendous amounts of bandwidth to mobile networks.”

Much of the work being done on 5G has little to do with raw speed. Researchers are trying to bring down the latency of the network (the delay users experience after clicking on a link and before a web page loads), cutting it from 30 milliseconds today to less than a single millisecond in the future. By reducing the network’s reaction time, researchers open the door for a whole new set of real-time applications. For instance, autonomous cars could communicate their intentions to other vehicles over the network almost instantaneously, allowing them to coordinate their speed and lane position or avoid potential accidents.
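To see why the latency target matters for the autonomous car example, here is a rough back-of-the-envelope sketch of how far a vehicle travels while a message is still in flight. The 100 km/h speed is an assumption chosen for illustration; the 30 ms and 1 ms figures are the ones quoted above.

```python
def distance_during_latency(speed_kmh, latency_ms):
    """Metres travelled by a vehicle during one network delay."""
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0
    return speed_m_per_s * latency_ms / 1000.0


ASSUMED_SPEED_KMH = 100.0  # illustrative highway speed, not from the article

for latency_ms in (30.0, 1.0):
    metres = distance_during_latency(ASSUMED_SPEED_KMH, latency_ms)
    print(f"{latency_ms:4.0f} ms of latency -> {metres:.3f} m travelled")
```

Under that assumption the car covers a bit under a metre during a 30 ms delay, but only a few centimetres at 1 ms.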

“This is not only yet another system for mobile broadband,” says Sara Mazur, Ericsson’s head of research. “The 5G system is the system that will help create a networked society.”

5G could be all of these different technologies, or it could wind up being only one or two of them. The fact is 5G doesn’t yet have an official definition, and won’t for several years, while the mobile industry and global regulators settle on a standard. 5G is viewed by some as a way of bridging the digital divide between poor and rich countries or big cities and rural towns. Still others want 5G to become the glue connecting every conceivable device and application to the Internet.

To this author 5G is all about vision, hype, and speculation. We advise you to not believe ANY vendor or wireless network operator claims about their 5G network or products!

References:

ITU defines vision and roadmap for 5G mobile development

IMT Vision towards 2020 and Beyond

ICC 2015 Globecom Tutorial: 5G Evolution and Candidate Technologies

Cutting through the Hype: Will the real 4G (LTE-A) please stand up and tell us what 5G is?

Upcoming webinar:

5G: Separating hype from fact – understanding where the market really is at today, July 8, 2015 at 2pm BST (9am EDT)

Highlights of 2015 Open Network Summit (ONS) & Key Take-Aways

Executive Summary:

There were many significant announcements at this year’s Open Networking Summit (ONS), held June 15-18, 2015 in Santa Clara, CA. Unlike past years’ conferences, there were no vendor sales pitches or infomercials. That was certainly refreshing! However, ONS continues to focus on “pure SDN” (as per the ONF definition) vs. various other SDN reference architectures, such as the network virtualization/overlay model. That’s largely because the ONS is closely aligned with the Open Networking Foundation (ONF) and ON.Lab (which developed the open source Open Network Operating System). Surprisingly, there were several companies (Google, Microsoft, AT&T) that said they were using OpenFlow (but not how) in their proprietary versions of SDN.

THE MAJOR THEME OF THE CONFERENCE: Most SDN and NFV SOFTWARE IS GOING TO BE OPEN SOURCE – from organizations like OpenDaylight, ONOS lab, OPNFV, and ONF.

The open source movement will drastically disrupt many companies that had planned on their own vendor specific implementations of SDN Controllers, OpenFlow, and NFV virtual appliances. The key missing piece of vendor neutral SDN and NFV software is MANAGEMENT & ORCHESTRATION (which includes scheduling and service insertion/chaining). Until that’s provided by one or more open source communities, proprietary implementations will inhibit wide-scale deployment of SDN and, even more so, NFV.

I believe the Atrium project (see description below) is a milestone for ONF in that it expands their scope to include open source software for SDN. Atrium will likely complement the OpenDaylight and ONOS open source projects. It’s still very early in the SDN open source space, while OPNFV (for NFV) is in its infancy. Some efforts will succeed; others will fail. IMHO, commercial, supported open source SDN/NFV code will be critically important to the open networking industry.

Key Take-Aways:

- The ONF’s Atrium open source software was demo’d at the ONF SDN showcase on the exhibit floor. ONF Executive Director Dan Pitt described its significance and the ongoing trend-setting work within ONF during an interview with this author.

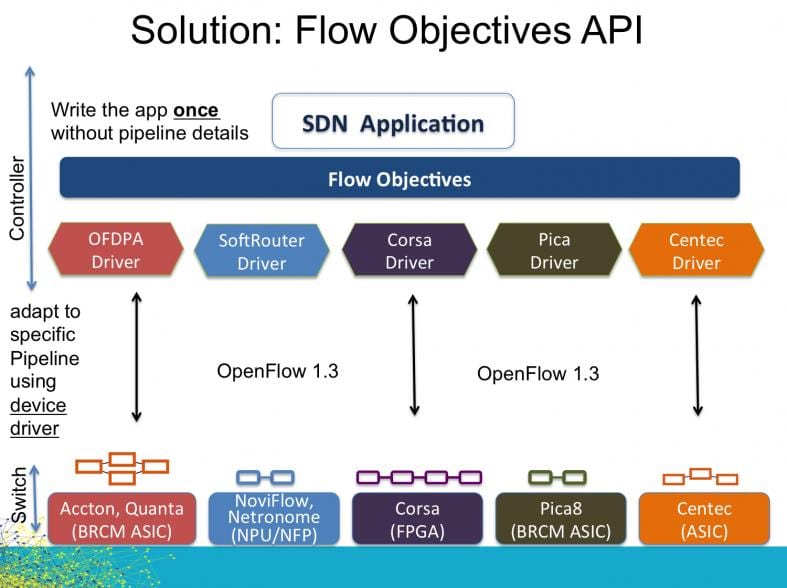

- ONF also demonstrated OpenFlow hardware interoperability using SDN data plane switches from multiple vendors (Accton, Quanta, NoviFlow, Netronome, Corsa, Pica8, and Centec). They achieved this by defining a mediation sublayer called “Flow Objectives,” with which each switch vendor’s I/O driver software/firmware interfaces. The sublayer thus masks the hardware differences of each data plane switch vendor’s product.

- ONOS, the community developing the open source SDN network operating system and control plane, announced the software’s first wide-scale production deployment, on the Internet2 research network. It demo’d an open source SDN router running on white box hardware. The Internet2 deployment is the first big deployment of ONOS on a live network. ONOS sponsors fund the open source software development, which is done in Menlo Park, CA by programmers who work there.

- Dell and Huawei expressed interest in the commercial open source business for SDN, with Huawei favoring ONOS for telco/carrier SDN and OpenDaylight for enterprise SDN as well as smart cities and IoT.

- John Donovan, AT&T’s senior EVP for technology and operations, said the company only uses open source now for ~5% of its software, but would like to increase that to 50% in the next few years. He said AT&T’s “secret sauce” (proprietary) software should be used sparingly, “like tabasco sauce.” AT&T is an active participant in OpenDaylight and Open Platform for NFV (OPNFV). AT&T is also an active participant in TM Forum’s ZOOM program, which is actively building relationships with both OpenDaylight and OPNFV. AT&T is contributing a design tool to OpenDaylight that the company has been using in its quest to “disaggregate” its network as part of its Domain 2.0 initiative. That means disentangling all the various subsystems and stripping down to core components, Donovan explained. “We have to rethink how they’re constructed,” he said. Note that AT&T is not, and has never been, a member of the ONF.

- AT&T’s goal is to convert 75% of the network equipment hardware boxes in its network to software running on generic/commodity compute servers by 2020, according to Mr. Donovan. From a base of zero, 5% of AT&T’s network equipment will be implemented in software by the end of 2015, Donovan said.

- AT&T is on an “aggressive schedule” to make the optical transport layer more flexible, according to Andre Fuetsch, AT&T’s senior vice president of architecture and design. “We want to start pushing NFV down the stack. We want to push it to Layer 1,” Fuetsch said during a keynote panel at the Open Networking Summit (ONS) on Tuesday.

- CORD for GPON: AT&T, along with chip vendors PMC-Sierra and Sckipio Technologies, is using the ONOS SDN software on a proof-of-concept project called Central Office Re-architected as Data Center (CORD). The basic premise of CORD is to transform AT&T’s Central Offices (COs) so that they look like cloud resident data centers with compute servers doing all the heavy lifting. AT&T plans to disaggregate the functions of each network element, in this case a GPON Optical Line Terminal (OLT), so that as many software functions as possible can run on generic compute servers. The white box hardware for CORD-GPON is a “stackable OLT” with GPON blades that are connected via Ethernet to a top-of-rack switch. Here’s a paper on the CORD fabric, an open source leaf-spine Clos fabric.

- CORD for G.fast: AT&T also demo’d a CORD proof of concept project for G.fast. An OpenFlow-enabled G.fast distribution point unit (DPU) was connected to a G.fast CPE bridge from Sckipio. [G.fast is an ITU-T DSL standard for short local loops (<500 meters) with performance targets between 150 Mbit/s and 1 Gbit/s.]

- For the first time anywhere, Google disclosed its internal data center network architecture and Jupiter jumbo data center switch. Scale-out, performance and availability are Google’s key challenges in network design. The company designed its own routing protocol and didn’t use any open source software. Google’s current data center network design has a maximum capacity of 1.13 petabits per second using merchant silicon with its own routing protocols and software. For comparison, the current data center throughput is more than 100 times that of the first data center network Google developed 10 years ago. The current network is a hierarchical design with three tiers of switches, but they all use the same “off the shelf” chips. Google’s control software treats all the switches as if they were a single switch.

- The Jupiter jumbo switch leverages the latest generation of merchant silicon, has 80 Tbit/s bandwidth, and uses some form of SDN (Google proprietary) with OpenFlow used in an undisclosed manner.

Google’s Jupiter “cluster switches” provide 40 terabits per second of bandwidth—about as much as 40 million home internet connections. SOURCE: Google.

- We strongly suggest the reader view/hear the keynote by Amin Vahdat, PhD on Google’s evolving data center network architecture. Amin is one of the best NO NONSENSE presenters on networking that this author has ever observed. His keynote speech is here. We also recommend you read Amin’s related blog: A look inside Google’s Data Center Networks

- Microsoft Azure public cloud uses its own unique version of SDN, which was described by CTO Mark Russinovich during his keynote speech. Fifty-seven percent of the Fortune 500 use Azure (we find that remarkable as Amazon’s AWS is by far the #1 public cloud service provider for Infrastructure as a Service -IaaS). Microsoft’s cloud storage and compute usage doubles every six months, and Azure adds 90,000 new subscribers a month, and this places unprecedented demands on its network, Russinovich said. The number of host computers quickly grew from 100,000 to millions. Microsoft Azure needs a virtualized, partitioned and scale-out design, delivered through software, in order to keep up with the explosive growth of users and data. Microsoft uses virtual networks (Vnets) built from overlays and Network Functions Virtualization (NFV) services running as software on commodity servers. [Note that virtual networks and overlays are NOT permitted in “pure SDN” and are a completely orthogonal SDN reference architecture].

-Vnets are partitioned through Azure controllers established as a set of interconnected services, and each service is partitioned to scale and run protocols on multiple instances for high availability.

-SDN Controllers are established in regions where there could be 100,000 to 500,000 hosts. Within those regions are smaller clustered controllers which act as stateless caches for up to 1,000 hosts.

-Microsoft builds its SDN controllers using the internally developed Azure Service Fabric, which has what Microsoft refers to as a “microservices-based architecture” that allows customers to update individual application components without having to update the entire application. Microsoft’s Azure Service Fabric SDK is available for download here.

-Microsoft Azure’s SDN doesn’t use any open-source software. Russinovich said that’s because open-source communities don’t provide the functionality Azure requires.

-Russinovich said Azure’s SDN gets a hardware assist from a SmartNIC FPGA. SmartNIC covers those functions that need a hardware boost, or that Microsoft would just prefer to offload from the CPU. User data traffic flows through the SmartNIC – for functions such as encryption, quality-of-service processing, and storage acceleration. “The sky’s the limit, really, with what we can do with an FPGA given its flexible programming,” Russinovich said. He said that compute servers are better at running virtual machines to serve Azure customers, rather than to take on mundane network processing tasks that could be better implemented in silicon. Mark’s keynote can be watched here.

-->NOTE that this is the exact opposite of AT&T’s position on disaggregating network element functions and moving as much software as possible to generic compute servers, leaving only physical layer transport in network equipment. Microsoft evidently believes that hardware assist from smart NICs is still a valuable proposition for SDN.

- Service provider adoption of SDN/NFV: From Talk to Action (?): Andre Fuetsch of AT&T, Kang-Won Lee of SK Telecom, and Yukio Ito of NTT Communications shared their work, experiences, challenges and roadmaps in the keynote panel at ONS 2015, moderated by Guru Parulkar, Chair of ONS. The common aspects among all three telcos were: (a) the importance of open source, (b) the presence of both technical and non-technical challenges in adopting SDN/NFV, (c) experience in real-world deployments of NFV use cases, and (d) the importance of SDN’s role in transport networks, especially optical transport.

-->This author noted that the “attack surface” increases exponentially with SDN and NFV, so asked a question on mitigation of cyber security attacks. As that issue has been “swept under the rug” for a long time, it seemed to stun the moderator, and the three carrier panelists offered no clear solutions. Check out the video clip yourself to see if the answers were “on the mark” or not.

- Alibaba, the Chinese e-commerce giant, disclosed that it was using BOTH the pure SDN/OpenFlow and the Network Virtualization/Overlay model in the same network. Panel moderator Guido Appenzeller, PhD of VMware said that was the first time he’d heard of both SDN reference architectures being used within the same network. How do they inter-connect, if at all?

- During a Thursday, June 18th Opening Keynote Panel titled SDN in Enterprises, NSA’s Brian Larish said, “centralized control via OpenFlow is key” and “we are all in on OpenFlow.”

- Cavium’s XPliant® Switch was the winner of SDN Idol 2015, the open networking industry’s top award for the year’s hottest SDN solutions. The four finalists for SDN Idol 2015 were: Cavium, XPliant Switch; Huawei, SDN-based IP & Optical Synergy; NEC, SDN Cyber Attack Auto Protection; and Pluribus Networks, Integrated Network Analytics.
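The Azure controller hierarchy described in the Microsoft take-away above (regions of 100,000 to 500,000 hosts, with clustered controllers each caching state for up to 1,000 hosts) implies a rough lower bound on the number of clustered controllers per region. The sketch below is only that arithmetic; the function and constant names are hypothetical, and Microsoft did not state actual controller counts.

```python
HOSTS_PER_CLUSTER_CONTROLLER = 1_000  # "up to 1,000 hosts" per clustered controller


def min_cluster_controllers(region_hosts,
                            hosts_per_controller=HOSTS_PER_CLUSTER_CONTROLLER):
    """Fewest clustered controllers needed if every one is filled to capacity."""
    # Integer ceiling division, so a partially filled controller still counts.
    return -(-region_hosts // hosts_per_controller)


for region_hosts in (100_000, 500_000):
    needed = min_cluster_controllers(region_hosts)
    print(f"{region_hosts:,} hosts -> at least {needed} clustered controllers")
```

On those figures a single region would need somewhere between 100 and 500 clustered controllers at minimum, which helps explain why the regional layer is itself built as a partitioned, scale-out service.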

Selected Quotes:

- “Embrace Open Source SDN or become irrelevant going forward.” Guru Parulkar, PhD and Chair of the ONS (each year since its inception)

- “Open Networking is here now and encompasses disaggregation of networking technologies including hardware and software similar to Servers 20 years ago. We expect the momentum of ON/SDN/NFV to pick up in 2015 for both Carriers and Enterprises and we are excited that Dell was a leader in creating and embracing this disruption.” Arpit Joshipura, VP of Dell Enterprise Networking & NFV and a long time colleague who this author truly respects.

- “SDN and NFV are speeding up innovation, as seen in projects like CORD,” said Tom Anschutz, Distinguished Member of Technical Staff at AT&T in a press release. “These technologies create systems that do not need new standards to function and enable new behaviors in software, which decreases development time. Faster development time leads to rapid innovation, something the industry needs to continue satisfying data-hungry customers.”

- “Data Center Anchored Communication Services (DACS) is one of the key motivations for using SDN….We are all on a leash when we use the public network. Sometimes we like it because we get “move around” with IP, sometimes we would have wanted more bandwidth. But there is a leash tied to an anchor for each communication service – stateful inline control.” Sharon Barkai, founder of ConteXtream, which is now part of HP. [Note that ConteXtream has historically gone after service providers to deploy its software into data centers and centralize management of networks.]

- “The (networking) industry is porting anchors from network appliances, and Application Specific Integrated Circuits (ASICs), to software on standard (x86) compute servers. Getting these DACS to factory mode in a row of clusters involves flows, granularity, federation, overlays, mapping, Overlay Descriptor Lists, etc. The challenge is to map traffic to computed anchors dynamically per flow. Virtualization frees any given hardware from ties to specific service functions and specific subscribers. This is where the capex (utilization) and opex (factory) savings come from and how feature velocity is enabled. SDN really determines what gets processed where, and therefore, done right, holds the key.” Sharon Barkai of HP (see previous quote).

- “Open-source may, in the long-run, be more secure, but it’s a tough one to buy. I guess one would have to look historically at open-source software projects and ask if the mere fact that a project was open-source contributed to its robustness, or was it that the robustness was introduced by someone who took the open-source effort and really provided that additional software that made things secure.” Vishal Sharma, PhD, Principal, Metanoia, Inc & IEEE ComSoc SCV officer.

- “Software Defined Networking is now migrating from a discussion to actual implementation in the real world….The XPliant protocol independent programmable (Ethernet) switch silicon is providing a platform for innovation to further this reality.” Eric Hayes, VP/GM, Switch Platform Group at Cavium (winner of SDN Idol award as noted above).

- “This year’s ONS is rife with SDN and OpenFlow solutions such as the Atrium Project that demonstrate how these technologies provide a whole new tool-set for solving network problems. We now face the challenge of making the benefits of this technology known to a wider audience, and effecting a truly fundamental change in how networking is done! It’s a fascinating time to be in the networking business!” Marc LeClerc, VP Strategy and Marketing at NoviFlow Inc. [Marc described the Atrium OpenFlow (ONF control plane to 5 different data plane switches) interop demo to this author and Ken Pyle – see above illustration].

Acknowledgement:

The author would like to thank Elise Vue, Publicist for EngagePR who was in charge of the media for this conference. Unlike most PR agencies that ignore or neglect accredited media, Elise went out of her way to ensure I was getting everything I possibly could from ONS 2015 each and every day (Tues, Wed, and Thurs). It was a truly refreshing and positive change from most conferences I’ve attended recently. Elise also followed up after the conference to provide feedback on the unscripted video interview I did with ONF’s Dan Pitt: “I agree with Dan, it was seamless! Great work, keep it up.” THANKS for your excellent support, Elise!

References:

Alan’s interview with Dan Pitt, Executive Director of ONF:

How ONF is Accelerating the Open Software Defined Networking (SDN) Movement

http://www.archives.opennetsummit.org/archives-ons/archives-2015/

Cuba to expand Internet access and lower price of WiFi connections

The Cuban government announced plans to expand internet access throughout the island and lower the per-hour price of Wi-Fi. Cuba plans to add Wi-Fi to 35 state-run computer centers across the island, where internet access remains tightly controlled and illegal at home for most Cubans. Cuba will also lower the price of Wi-Fi based Internet access from $4.50 to $2 per hour.

The Internet initiatives, announced in Juventud Rebelde, an official newspaper aimed at the island’s youth, came amid new pressures to increase Internet access as the nation edges toward normalizing diplomatic relations with the United States. By this July, the state-run telecommunications company, Etecsa, will open 35 hot spots, mainly in parks and boulevards of cities, the company’s spokesman told Juventud Rebelde. Connection will cost just over $2 per hour, half of what it currently costs in an Internet cafe. However, it’s unclear exactly how quickly the Cuban government will conduct the expansion and how well the connections will actually work.

Gizmodo says: “Since the average salary in Cuba is only about $20 a month, that’s still pretty damn expensive. Nevertheless, it’s an improvement over a couple years ago when there was just one internet cafe in Old Havana. Things have been so bad there in recent years that young Cubans have taken things into their own hands and built an elaborate mesh network to create their own thriving underground internet. They also pass internet content around via USB sticks.”

Ted Henken, a professor at Baruch College in New York who has studied social media and the Internet in Cuba, said the decision could mark a “turning point. Their model was, ‘Nobody gets Internet,’ ” he said in a telephone interview. “Now their model is, ‘We’re going to bring prices down and expand access, but we are going to do it as a sovereign decision and at our own speed.’ ”

Nothing was announced for wireline Internet access to homes or businesses, probably because of very limited DSL deployment throughout the island. While the number of residential DSL Internet access lines has not been made public, that service was supposed to have been initiated last year as per this article:

Cuba’s ETECSA to provide residential DSL services in 2014

Also refer to:

Cuba’s WiFi access plan raises interesting questions

http://laredcubana.blogspot.com/2015/06/cubas-wifi-access-plan-raises.html

Addendum – Cuba’s Internet Infrastructure:

The country’s Internet capability was greatly boosted by the completion of an undersea fiber-optic cable from Venezuela that came online in January 2013. Venezuela financed it, a French company built it, and Doug Madory, the guy at Dyn Research who spotted that underwater cable, says it’s got potential you haven’t even tapped: “It’s improved their connectivity to the outside world. However, the improvement of greater access to the people of Cuba, that’s still slow going.”

Authorities say Cuba must prioritize its bandwidth for uses that are deemed to benefit society, such as schools and workplaces. Critics say government prohibitions are the main obstacle to access, although the state has gradually been loosening some controls.

Cuba’s poor Internet access is a grievance increasingly shared across political lines, by entrepreneurs and computer programmers as well as journalists and ordinary citizens who want to communicate with relatives overseas.

It is a source of frustration for young people, a growing number of whom, especially in Havana, own a smartphone that they cannot use to get online. The city’s one hot spot, at the workshop of the artist Kcho, is constantly packed.

Over the past two years, the government has opened dozens of Internet cafes and introduced email service for the island’s million or so cellphone users. It signaled its willingness to expand connectivity this month in a leaked report that argued that lack of Internet access was holding back the economy. The report outlined plans to get broadband — albeit slow broadband — to half of Cuban homes by 2020.

According to Wikipedia, “The Internet in Cuba is among the most tightly controlled in the world. It is characterized by a low number of connections, limited bandwidth, censorship, and high cost. The Internet in Cuba stagnated since its introduction in the 1990s because of lack of funding, tight government restrictions, the U.S. embargo, and high costs. Starting in 2007 this situation began to slowly improve. In 2012, Cuba had an Internet penetration rate of 25.6%.”

Reference:

https://techblog.comsoc.org/2014/12/21/u-s-cuba-rapprochement-telecom-internet-infrastructure-is-a-top-priority-for-the-cuban-government

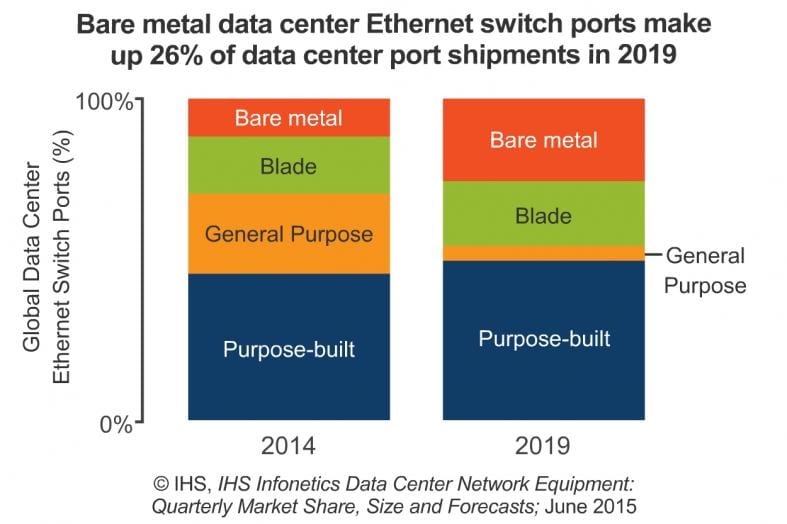

Open Networking/SDN Reference Models + Bare Metal Switches increase market share in Data Centers

Introduction:

“Open networking, which leverages open source software and open hardware designs and allows anyone to innovate, is set to change networking, just as open source changed the server and OS marketplace,” said Cliff Grossner, Ph.D., research director for data center, cloud and Software Defined Networking (SDN) at IHS.

“This move to open networking is heightening the importance of bare metal switches, as evidenced by all the vendor announcements at Interop in April. Dell is expanding its open networking portfolio with three new branded bare metal switches providing options from 1GE to 100GE Ethernet. And Citrix entered the SD-WAN market with Cloudbridge Virtual WAN Edition, which allows enterprises to create a virtualized WAN,” Grossner said.

Open Networking/SDN Reference Models:

Mr. Grossner and this author are collaborating on a report/article defining Open Networking Reference Architectures, Business Models, and Vendors Market Share. We’ve identified the following reference architectures:

DATA CENTER MARKET HIGHLIGHTS:

- The global data center network equipment market, which includes data center Ethernet switches, application delivery controllers (ADCs) and WAN optimization appliances (WOAs), declined 14% sequentially in 1Q15, to $2.6 billion.

- The data center Ethernet switch segment continues to grow on a year-over-year basis (+4% in 1Q15 from 1Q14); positive forces include large enterprises and the public sector.

- F5 took the #1 spot for virtual ADC appliance revenue from Citrix in 1Q15.

DATA CENTER REPORT SYNOPSIS:

The quarterly IHS-Infonetics Data Center Network Equipment market research report tracks data center Ethernet switches, bare metal Ethernet switches, Ethernet switches sold in bundles, application delivery controllers (ADCs) and WAN optimization appliances (WOA). The research service provides worldwide and regional market size, vendor market share, forecasts through 2019, analysis and trends. Vendors tracked include A10, ALE, Arista, Array Networks, Aryaka, Barracuda, Blue Coat, Brocade, CloudGenix, Cisco, Citrix, Dell, Exinda, F5, HP, Huawei, IBM, Ipanema, Juniper, Kemp, NEC, Radware, Riverbed, Siaras, Silver Peak, Talari, VeloCloud, Viptela and others.

To purchase the report, please visit: www.infonetics.com/contact.asp

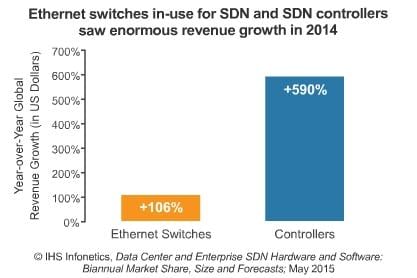

In an IHS-Infonetics report released earlier this month (June 3, 2015), Cliff forecast that the in-use software-defined networking (SDN) market (Ethernet switches and controllers) will reach $13 billion in 2019, up from $781 million in 2014, as the availability of branded bare metal switches and use of SDN by enterprises and smaller cloud service providers (CSPs) drive growth.
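For a sense of scale, the growth rate implied by that forecast can be worked out from its two endpoints. The sketch below assumes a simple compound annual growth rate (CAGR) over the five years from 2014 to 2019; the function name `cagr` is illustrative, and the resulting rate is a back-of-the-envelope figure, not one taken from the IHS report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Forecast endpoints quoted above, in millions of U.S. dollars.
sdn_2014 = 781.0
sdn_2019 = 13_000.0

implied_rate = cagr(sdn_2014, sdn_2019, years=2019 - 2014)
print(f"Implied CAGR, 2014 to 2019: {implied_rate:.1%}")
```

Under those assumptions, the forecast implies growth of roughly 75% per year.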

“The SDN market is still forming, and the top market share slots will change hands frequently, but currently the segment leaders are Dell, HP, VMware and White Box,” said Cliff Grossner, Ph.D., research director for data center, cloud and SDN at IHS.

“SDN will cross the chasm in 2016, with SDN in-use physical Ethernet switches accounting for 10 percent of Ethernet switch market revenue,” Grossner said.

SD-WAN WEBINAR:

Join analyst Cliff Grossner June 16 at 11:00 AM ET for Adopting Software-Defined WAN for Agility and Cost Savings, an event providing recommendations for buyers of new SD-WAN products and services.

Register at: http://w.on24.com/r.htm?e=993468&s=1&k=5A40BFBD0F05E1431D43F6D4D920370A

End Note: This author will be covering the annual Open Networking Summit this week in Santa Clara, CA. Email any questions/concerns/issues to: [email protected]

For previous articles by this author, kindly refer to this website (https://techblog.comsoc.org/author/aweissberger/) and to http://viodi.com/category/weissberger

Does Verizon want to abandon copper landlines to focus on wireless & FiOS?

Ever since Verizon bought out Vodafone’s stake to take 100% ownership of Verizon Wireless, the largest U.S. wireless carrier has taken steps to divest its wireline operations so it can focus on its more lucrative wireless business. This past March, Verizon sold its wireline operations (including FiOS) in California, Florida and Texas to Frontier Communications for $10 billion. This May, Verizon bought AOL for $4.4 billion in cash, a deal aimed at advancing the telecom giant’s growth ambitions in mobile video and advertising.

On June 9th, the WSJ reported that Verizon’s largest union, the Communications Workers of America (CWA), claims the company is refusing to fix broken landlines. CWA is accusing the carrier of abandoning its copper landline networks in portions of the northeastern United States. It says that Verizon isn’t making necessary repairs and instead is pushing customers in parts of New York, Delaware, Pennsylvania, New Jersey, Maryland, Virginia, and Washington, D.C., toward wireless home phone service.

CWA, which represents about 35,000 Verizon employees, announced intentions to file Freedom of Information Act (FOIA) requests to pull up data on Verizon’s maintenance of legacy networks. The dispute comes as Verizon and the union are about to enter negotiations later this month for a new contract.

“As a public utility in these states, Verizon has a duty to maintain services for all customers. But we’ve seen how the company abandons users, particularly on legacy networks, and customers across the country have noticed their service quality is plummeting,” Dennis Trainor, CWA vice president for District 1, said in a statement.

“Verizon is systematically abandoning the legacy network and as a consequence the quality of service for millions of phone customers has plummeted,” said Bob Master, CWA’s political director for the union’s northeastern region. Mr. Master said the union’s interests are aligned with those of consumers. Many customers want to keep their copper landline phones because in the event of a power outage the lines will keep running, while wireless and fiber phone systems will stop working as soon as the batteries die, according to Mr. Master. [Of course that’s correct, because copper phone lines are powered from the 48 V DC battery in the telco central office, which runs for several hours or days after a regional power failure.]

Caption: Are copper phone lines for the birds?

Verizon spokesman Rich Young countered those remarks by saying that the CWA’s allegations are aimed at pressuring the carrier in advance of the contract negotiation talks and he denied the union’s claims. “It’s pure nonsense to say we’re abandoning our copper networks,” Mr. Young told the WSJ. Mr. Young said the company is investing in its copper network, and it only offers Voice Link, which delivers service over Verizon’s cellular network, as a temporary replacement while repairs are being done. About 13,000 customers have decided to keep the Voice Link service, Mr. Young said.

Spending on Verizon’s wireline network has declined. In the last year, the company invested $5.8 billion on its wirelines (copper and fiber), a 7.7% reduction from the year before. Mr. Young attributed the drop in spending not to reduced maintenance but to a slowdown in its FiOS build out.

In the wake of Hurricane Sandy, Verizon’s copper lines on parts of the East Coast were damaged. The carrier drew criticism from organizations like AARP for planning to turn off those legacy networks in favor of offering wireless Voice Link technology.

Verizon has expressed a desire to shut off its copper line system in the future in favor of cheaper wireless and higher-speed fiber network access technology. In addition to being faster and in some cases cheaper to build, those technologies face fewer regulations than services delivered over copper infrastructure. AT&T Inc. has also said it wants to eventually shut off its copper network. That’s despite advances in DSL technology like “vectoring,” which mitigates interference and thereby increases upload/download speeds.

Verizon has about 10.5 million residential landline voice customers, about half of whom are on copper. In the past few years, the company has moved about 800,000 people off its copper network onto its newer, fiber-to-the-premises based FiOS access network.

In its 1st Quarter 2015 earnings report, Verizon noted a 10.2% year-over-year increase in FiOS revenues with 133,000 FiOS Internet and 90,000 FiOS Video net additions. Total FiOS revenues in the 1st quarter were $3.4 billion. Verizon had a total of 6.7 million FiOS Internet and 5.7 million FiOS Video connections at the end of the 1st quarter 2015, representing year-over-year increases of 9.4% and 7.9%, respectively.

Clearly, Verizon is not abandoning FiOS, but it is not saying much about maintaining its existing copper wire plant. It’s not correct to conclude that Verizon wants to focus solely on wireless, as it sees a good business in offering FiOS-based triple and quadruple play service bundles to residential customers.

References:

http://consumerist.com/2015/06/09/telecom-union-says-verizon-is-neglecting-landlines/

Cable Broadband Equipment Market: DOCSIS channels shipped increase, but revenue down, says IHS

CABLE BROADBAND MARKET OVERVIEW:

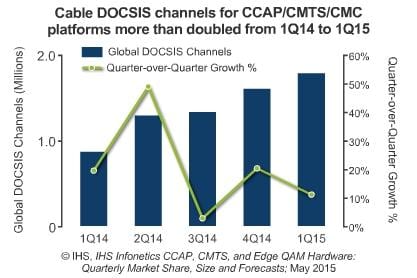

As MSOs continued major upgrades to their broadband networks, cable DOCSIS (Data Over Cable Service Interface Specification) channel shipments grew to 1.8 million in the first quarter of 2015 (1Q15), a 14% sequential increase and a gain of 48% from the year-ago first quarter, according to the latest CCAP, CMTS, and Edge QAM Hardware report from IHS (formerly Infonetics).

“The cable broadband market got off to a mixed start in the first quarter. Despite the first quarter typically being a slow one, DOCSIS channels increased yet again. But revenue was down due to a combination of aggressive pricing and a higher proportion of software licenses,” said Jeff Heynen, research director for broadband access and pay TV at IHS.

CABLE BROADBAND MARKET HIGHLIGHTS:

- CCAP, CMTS, CMC and edge QAM revenue fell 7% sequentially in 1Q15, to $474 million.

- The now-dead merger between Comcast and Time Warner Cable was expected to dampen the key North American region in Q1, but despite the headwinds, the overall cable broadband market remained healthy, setting the stage for a strong 2015.

- To date, very few cable operators have deployed video QAM channels on their CCAP-capable platforms, tamping the long-term outlook for these channels.

- ARRIS dominated the cable broadband market again in 1Q15, supported in part by the early availability of its E6000 CCAP-capable product.

CABLE BROADBAND REPORT SYNOPSIS:

The quarterly IHS Infonetics CCAP, CMTS, and Edge QAM Hardware market research report tracks cable broadband subscribers and equipment including converged cable access platforms (CCAPs), cable modem termination systems (CMTSs), coaxial media converters (CMCs) and edge quadrature amplitude modulation (QAM) channels. The research service provides worldwide and regional market size, vendor market share, forecasts through 2019, analysis and trends. Vendors tracked include Arris, Casa Systems, Cisco, Ericsson, Harmonic, Huawei, Sumavision and others.

To purchase the report, please visit: www.infonetics.com/contact.asp

Charts & Reference:

https://www.ncta.com/industry-data

IHS RELATED RESEARCH:

GPON, VDSL Drive 14 Percent YoY Increase in Broadband Equipment Market:

http://www.infonetics.com/pr/2015/1Q15-PON-FTTH-DSL-Aggregation-Highlights.asp

Telcos, Cablecos Ramp Spending on Premium Broadband CPE in Race to Gigabit:

http://www.infonetics.com/pr/2015/4Q14-Broadband-CPE-Market-Highlights.asp

Fixed Broadband Subscribers Projected to Approach 1 Billion in 2019, Led by China:

http://www.infonetics.com/pr/2015/FTTH-DSL-Cable-Subscribers-Market-Highlights.asp

Broadband Operators Reveal Ambitious Network Upgrade Plans:

http://www.infonetics.com/pr/2014/Fixed-Broadband-SP-Leadership-Survey-Highlights.asp

Implications of a T-Mobile – Dish Networks Merger

Dish Network, the satellite television company controlled by billionaire Charles Ergen, is in talks to acquire wireless telco T-Mobile US, according to people briefed on the matter. T-Mobile US has a market value of $31 billion, while Dish Network is worth nearly $35 billion. Details of the price, and the cash and stock mix, were still being worked out. Both Dish and T-Mobile declined to comment.

A merger of Dish and T-Mobile US would provide a definitive answer to what Dish plans to do with its huge wireless spectrum holdings, which Mr. Ergen has been adding to for years without revealing any clear plan for how Dish would use them. Speculation has persisted that Dish would build an LTE or (true 4G) LTE Advanced network, either on its own or by acquiring a wireless network operator with LTE/mobile broadband engineering expertise.

According to a Wells Fargo report, Dish’s full spectrum license collection is likely worth a total of between $40 billion and $44 billion. That figure includes Dish’s 700 MHz, AWS-4 and H Block licenses, as well as the AWS-3 licenses the company won in the FCC’s recent spectrum auction. (However, those AWS-3 licenses are somewhat in question because they are tied to Dish via the company’s two designated entities (DEs)–Northstar Wireless and SNR Wireless–which could pay around $10 billion in the auction for 702 licenses. Both regulators and Dish’s rivals have blasted Dish’s use of DEs–and the 25 percent small business discount they are to receive–as unfair). It’s also worth noting that Dish’s spectrum portfolio could be worth as little as $28.1 billion or as much as $56.7 billion, Wells Fargo said, depending on buyers’ eagerness.

Following the close of the recent FCC AWS-3 auction, Dish commands an average of around 80 MHz of licensed spectrum nationwide, putting it just behind T-Mobile in terms of spectrum depth. Sprint leads in overall spectrum owned due to its extensive 2.5 GHz licenses, while AT&T comes in second place, Verizon comes in third, and T-Mobile comes in fourth (even though it acquired all of MetroPCS’ spectrum in May 2013).

NOTE: Low-band spectrum can cover large geographic areas, while high-band spectrum can transmit larger amounts of data. Therefore, buyers of licensed spectrum might only be looking for spectrum licenses that fit in with their overall network rollout strategy. The value of spectrum is directly tied to demand from actual mobile broadband users, and demand is a hard metric to calculate. But the rising value of spectrum was clearly on display during the FCC’s recent AWS-3 spectrum auction, which raised almost $45 billion in total gross bids–double even the highest forecasts before the event.

A merger would also give T-Mobile US a clear path to grow and continue its role as a market disrupter (“the uncarrier”), giving it both the spectrum and financial resources it needs to build out its mobile broadband network to cover more of the U.S. T-Mobile would be able to use Dish’s accumulated spectrum, adding more and faster broadband wireless service across the U.S. That, combined with the continuation of its industry-disruption pricing strategy, could turn T-Mobile into a real threat for Verizon and AT&T for the first time. As the proposed deal wouldn’t reduce the number of wireless carriers, regulators are likely to approve it.

Braxton Carter, T-Mobile’s chief financial officer, in March complimented Mr. Ergen’s push to accumulate unused licensed spectrum. “You look at what he is doing with some of his technologies, and that type of marriage could be very, very, very interesting, or partnership,” he said.

Implications for Real Time Mobile Video:

Finally, a merged entity would be a serious competitive threat to the combined AT&T-DirecTV company, because it would sell both satellite TV and mobile broadband/wireless voice service. It would probably accelerate the trend toward delivery of REAL TIME MOBILE VIDEO, which both merged entities will likely pursue aggressively.

Here’s what DirecTV said about its acquisition by AT&T in a press release:

Creates Content Distribution Leader Across Mobile, Video & Broadband Platforms

- The premier pay TV brand with the best content relationships now poised to deliver video to multiple screens – mobile, TV, laptops and more – to meet consumers’ future viewing and programming preferences

- Unparalleled video content distribution scale in U.S. – nationwide mobile and video networks; broadband to cover 70 million customer locations with our broadband expansion

“DIRECTV is the premier pay TV provider in the United States and Latin America, with a high-quality customer base, the best selection of programming, the best technology for delivering and viewing high-quality video on any device and the best customer satisfaction among major U.S. cable and satellite TV providers. AT&T has a best-in-class nationwide mobile network and a high-speed broadband network that will cover 70 million customer locations with the broadband expansion enabled by this transaction.”

We can expect a very similar press release if and when the Dish/T-Mobile US deal is completed and announced.

In summary, a new Dish/T-Mobile would be able to offer customers a full array of mobile Internet-based services along with its fast-growing “skinny-bundle” service called Sling TV. The combined entity could provide mobile Internet, pay-TV and cell phone services, resulting in a “poor man’s triple play.” That’s because faster and more reliable wireline broadband Internet wouldn’t be available from the new entity, so it couldn’t credibly compete with AT&T’s U-verse, Verizon’s FiOS, or Comcast’s Xfinity triple play. However, we see tremendous competition in wireless-only triple plays between the Dish/T-Mobile and AT&T/DirecTV entities.

Analysis of $37B Avago-Broadcom Deal: Risky Financing & Uncertain Synergies

Introduction:

“We have seen a slowdown in top buying (economic) growth rates. So one of your options to at least generate growth on the bottom line is to do accretive deals... This deal could mark the start of a new string of mega-mergers in the tech industry,” said Christopher Rolland of FBR & Co. He was referring to Avago Technologies’ proposed $37 billion buyout of semiconductor heavyweight Broadcom. “Money is still cheap. So those dynamics are sort of coming together to cause this consolidation,” Rolland added.

The value of a combined Avago-Broadcom would be approximately $77 billion. The new company (which is to be called Broadcom Ltd) will have annual revenue of approximately $15 billion.

Broadcom Backgrounder:

Broadcom, based in Irvine, Calif., was founded in 1991 by Henry Samueli, PhD, an electrical engineering professor at the University of California, Los Angeles (UCLA), and Henry Nicholas III (Samueli’s PhD student), who left the company in 2003. Mr. Samueli, the owner of the Anaheim Ducks hockey team, is Broadcom’s chairman and chief technology officer.

Broadcom’s revenues last year were nearly twice the size of Avago’s. The acquisition would take Avago into new semiconductor markets, including cable modems, TV set-top boxes, Wi-Fi and data center switching systems. Broadcom is far and away the leader in Ethernet switch chips for equipment in both premises and cloud-based data centers as well as telco and campus networks.

The mainstream media, including the NY Times and WSJ, incorrectly reported that Broadcom has a very large share of the mobile communications chip market. In fact, it has zero percent share of that market. The company does have a very large market share in WiFi chips, but Qualcomm’s Atheros Communications Division is closing in fast.

Broadcom acquired WiMAX chip leader Beceem Communications in 2010 for $316M, believing Beceem could transition its OFDMA-based WiMAX technology to LTE, but that didn’t happen. Broadcom then acquired Renesas Mobile, which gave it a fully qualified LTE modem and related products. However, facing very tough competition, Broadcom ultimately shut down its cellular efforts, leaving it with 0% market share in the “mobile communications” chip market. [The leader in wireless chips, especially cellular, is Qualcomm. There are no close competitors.]

Furthermore, Broadcom took an impairment charge of $501 million related to the NetLogic acquisition in 2013. Not every deal works out!

Where are the Synergies?

Junko Yoshida, Chief International Correspondent for EE Times, wrote:

“I need to be enlightened on how this (deal) advances the companies’ technologies. How will this deal make it better for engineers at the two companies working on new products and technologies? Am I cynical in suspecting that Avago wanted Broadcom purely for the sake of getting bigger?

Where is the affinity – or any apparent good vibe – connecting Avago to Broadcom? Aren’t the “soft” factors of corporate culture at least as important for successful mergers as a momentary splash on the stock market?”

Continuing, Yoshida wrote:

“Avago’s big appetite for acquisitions is well known. But this sort of abrupt switch from one target to another suggests recklessness. Or maybe I’m not cynical at all, but naïve. After all, company M&As aren’t personal. It’s not like Avago and Broadcom are engaged to be married.

I’ve always felt a great respect for Broadcom. I’ve admired savvy business strategy, and more significantly, a technology vision led by Henry Samueli, Broadcom’s co-founder, chairman of the board, and chief technology officer.

Without Broadcom, we might well be bereft of all the Ethernet, broadband connections the company has enabled. Broadcom, like a well-oiled machine, has stayed true to its motto – “integration, integration, integration” – to dominate the digital SoC world.

I don’t think I’m alone in viewing Broadcom as the best of the best among U.S. fabless chip companies. But this merger begs a big question: Where will Broadcom integration vision turn? Will Broadcom’s team be allowed to sustain its discipline in execution? Most important, will Henry Samueli stay?”

Quotes from Broadcom & Avago CEOs:

“This is a landmark day in the history of the industry,” said Scott McGregor, Broadcom’s 58-year-old CEO, during a conference call after the deal was announced last Thursday.

“Today’s announcement marks the combination of the unparalleled engineering prowess of Broadcom with Avago’s heritage of technology from HP, AT&T (Microelectronics), and LSI Logic in a landmark transaction for the semiconductor industry,” Avago CEO Hock Tan (62 years old) said in a statement. “Together with Broadcom, we intend to bring the combined company to a level of profitability consistent with Avago’s long-term target model.”

Financing the Deal:

Avago Technologies plans to finance its $37 billion purchase of Broadcom with $15.5 billion of new syndicated term loans. Financing will come from Bank of America Merrill Lynch, Credit Suisse, Deutsche Bank, Barclays, and Citigroup, sources said. The issuer expects to refinance $6.5 billion of existing debt facilities and raise $9 billion of new money. A $500 million revolver would be undrawn at closing. The transaction would leverage Avago at roughly 2.7x, giving full credit for $750 million of synergies. Net of $1.3 billion of cash on hand, adjusted leverage would fall to 2.5x, according to an investor presentation.
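The leverage figures above can be cross-checked with simple arithmetic. The sketch below assumes that "leverage" means total debt divided by adjusted EBITDA (including the synergy credit) and that total debt equals the $15.5 billion of term loans; both are assumptions for illustration, since the investor presentation's exact definitions are not given here. The variable names are hypothetical.

```python
# Figures quoted above, in billions of U.S. dollars.
refinanced_debt = 6.5   # existing debt facilities to be refinanced
new_money = 9.0         # newly raised debt
cash_on_hand = 1.3
gross_leverage = 2.7    # quoted multiple, assumed to be debt / adjusted EBITDA

# The two pieces should add up to the $15.5 billion of term loans.
total_term_loans = refinanced_debt + new_money

# Assumption: adjusted EBITDA can be backed out of the quoted gross multiple.
implied_ebitda = total_term_loans / gross_leverage

# Net leverage subtracts cash on hand from debt before dividing.
net_leverage = (total_term_loans - cash_on_hand) / implied_ebitda

print(f"Total term loans: ${total_term_loans:.1f}B")
print(f"Implied adjusted EBITDA: ${implied_ebitda:.2f}B")
print(f"Net leverage: {net_leverage:.2f}x")
```

Under these assumptions the implied adjusted EBITDA is a little under $6 billion, and the net multiple rounds to the 2.5x cited.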

Avago Built on Debt Fueled Takeovers:

Avago has used takeovers and mergers as an engine for economic growth and increased market capitalization. Some analysts have compared the company to Valeant Pharmaceuticals, a drug maker whose meteoric growth has been powered by serial acquisitions. Avago’s relatively short company history is truly amazing and one for the record books.

- In 2005, private equity firms KKR and Silver Lake Partners acquired Agilent’s Semiconductor Products Group (SPG) for $2.66 billion. (Agilent was spun off by HP). In December of that year, Avago Technologies was established, creating the world’s largest privately held independent semiconductor company.

- From 2007 to its IPO on August 6, 2009, Avago acquired the fiber optic component and Bulk Acoustic Wave businesses from Infineon (formerly Siemens Microelectronics) and then Nemicon to complement its motion control product line. When AVGO went public in 2009, it seemed like a modest player in the semiconductor industry with a market value of just $3.5 billion. But few anticipated its future growth through debt-funded deal making.

- Since Avago became a public company in 2009, its management team has pursued a half-dozen acquisitions, the biggest of which was its $6.6 billion takeover of the LSI Corporation (formerly LSI Logic), a networking and storage chip manufacturer, in late 2013. It was funded by a $4.6 billion leveraged loan, which was ~70% of the price paid for LSI. The purchase price for LSI was more than six times Avago’s cash on hand at the time.

- Note that LSI had previously acquired Agere (formerly AT&T Microelectronics, then part of Lucent Technologies, IPO in March 2001) which at one time had a market cap of > $10 billion!

- Early this year, Avago struck a $606 million takeover deal for Emulex.

- Aiding Avago’s acquisitions are several rare factors, including a roughly 5% tax rate that comes from being based in Singapore and ready access to low-cost debt financing. Note that Singapore is the company’s headquarters ONLY for tax reasons. Neither the original company nor any of its acquisitions were ever based there.

Meteoric Rise in Avago Stock Price:

AVGO went public August 6, 2009 at $15 a share. The stock closed Friday at $148.07. That’s an increase of 887.13% (the closing price is 987.13% of the IPO price) and triple its value of December 2013. Evidently, Avago’s aggressive acquisition strategy has paid off big time for its shareholders.
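When checking figures like these, it helps to separate the ratio of the two prices from the percentage gain. The sketch below uses the IPO and closing prices quoted above; the function name `percent_increase` is illustrative.

```python
def percent_increase(old_price: float, new_price: float) -> float:
    """Percentage gain from old_price to new_price."""
    return (new_price - old_price) / old_price * 100.0


ipo_price = 15.00        # AVGO IPO price per share, August 6, 2009
closing_price = 148.07   # closing price quoted above

gain_pct = percent_increase(ipo_price, closing_price)
price_ratio_pct = closing_price / ipo_price * 100.0

print(f"Closing price as a share of IPO price: {price_ratio_pct:.2f}%")
print(f"Gain over IPO price: {gain_pct:.2f}%")
```

With these inputs, the closing price is about 987% of the IPO price, which corresponds to a gain of about 887%.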

For more financial implications of this merger, please read this blog post by the Curmudgeon and Victor Sperandeo.

Addendum: Intel Buys Altera for close to $17B

On Monday, June 1st, Intel announced it would buy FPGA chip maker Altera Corp. for $16.7B, the costliest acquisition in the Santa Clara, CA, company’s 47-year history. Some Wall Street analysts question whether Intel paid too much.

http://www.wsj.com/articles/intel-ceo-accelerates-shift-from-pcs-1433201084

Meager Telco Capital Spending Entering New Era of Desynchronized Cycles; Dell’Oro & AT&T on CAPEX

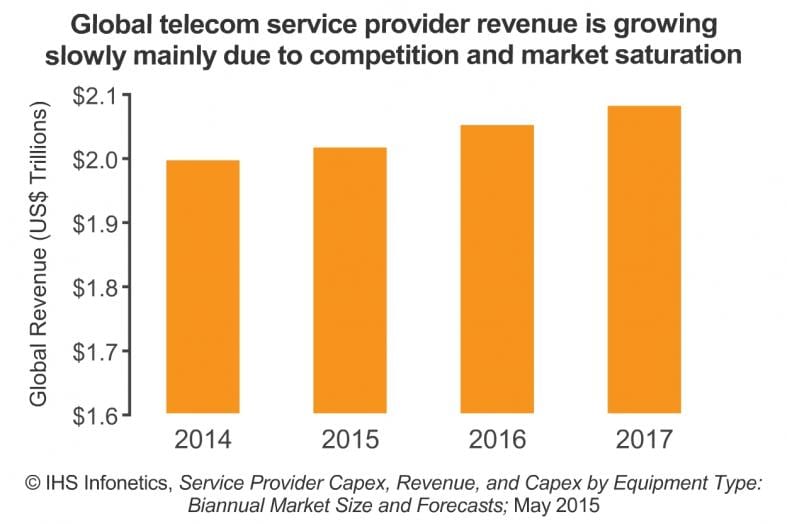

The latest IHS Infonetics Service Provider Capex, Revenue, and Capex by Equipment Type report notes that worldwide telecom service provider capital expenditures (CAPEX) grew 2.9% year-over-year in 2014, to US$352 billion.

CAPEX REPORT HIGHLIGHTS:

- In 2014, expenditures for every type of equipment except TDM voice slowly grew or stayed flat from the year prior.

- Europe posted 3.3% year-over-year growth in carrier CAPEX, mainly fueled by Deutsche Telekom and Vodafone.

- China more than offset spending declines in Japan and South Korea to drive Asia Pacific’s capex growth to 4.2 percent year-over-year in 2014.

- Meanwhile, a World Cup hangover slowed telecom spending in Latin America.

- IHS forecasts worldwide telecom capex to total a cumulative US$1.8 trillion in the 5 years from 2015 to 2019.

“We’re entering a new era of desynchronized cycles in telecom spending. Regions and economic powers have their own investment agendas and pace, and this will result in overall flat-to-low-single-digit capex growth through 2019 unless a major event occurs,” said Stéphane Téral, research director for mobile infrastructure and carrier economics at IHS.

CAPEX REPORT SYNOPSIS:

IHS Infonetics’ biannual service provider CAPEX report provides worldwide and regional market size, forecasts through 2019, analysis, and trends for revenue and capex by service provider type (incumbent, competitive, cable operators, independent wireless, satellite) and CAPEX by equipment type (broadband aggregation equipment; wireless infrastructure; IP routers and CES; optical equipment; IP and TDM voice infrastructure; video infrastructure; all other telecom/datacom network equipment; and CPE non-telecom/datacom network equipment).

To purchase the report, please visit: www.infonetics.com/contact.asp

RELATED RESEARCH

- Europe Exiting Optical Network Spending Slump

- Hotspot 2.0 and Virtualization Key to New Revenue for WiFi Carriers

- Carrier Voice-over-IP Market Off to Strong Start in 2015, Led by U.S.

- Mobile Operators Using EDGE, HSPA+ to Improve User Experience on Road to LTE

- Big Jump in Carrier WiFi Gear Expected as 802.11ac and Hotspot 2.0 Accelerate

From Dell’Oro Group’s Carrier Economics report: Dollar Strength to Wipe Out $20 B in Telecom Capex During 2015:

“We have not made any major changes to our constant currency Capex projections for 2015 and continue to expect the market will grow at a low-single-digit pace in 2015 driven primarily by China and Europe,” said Stefan Pongratz, Dell’Oro Group Carrier Analyst. “But in U.S. Dollar terms, assuming rates remain at current levels, the strengthening U.S. Dollar will unequivocally impact Telecom Capex, and we have revised our 2015 Capex in U.S. Dollar terms downward rather significantly to adjust for currency fluctuations,” continued Pongratz.

AT&T says that by 2020, 75% of its network will be software-centric, using network functions virtualization (NFV) and software defined networking (SDN) technologies. Note, however, that AT&T’s definition of SDN has nothing to do with the original definition, which includes strict separation of the data and control planes, a centralized controller with a global view of the network (not just adjacent network elements), and use of the OpenFlow API/protocol as the southbound API from the control plane to the data plane. AT&T is NOT a member of the Open Networking Foundation, which is specifying SDN architectures and protocols.

As part of AT&T’s ongoing Domain 2.0 effort, about 40% of its strategic IT applications have been migrated to the cloud, and the migration continues at a rate of one application a day. According to the company, that move has enabled greater operational efficiencies over applications running on dedicated hardware. In addition, about 400,000 processor cores are running the cloud IT apps and operating 50% more efficiently than on dedicated hardware, the company claims.

AT&T expects future networking deployments to further reduce capex over the next five years. “This is a big opportunity for change and will allow us to look at our network model in a different way,” said Susan Johnson, senior vice president of global supply chain at AT&T.