Month: March 2016

2016 OCP Summit: Highlights of Bell Labs Peter Winzer’s Talk & Conversation with FB’s Katharine Schmidtke

Backgrounder:

Fiber optic transmission, both short haul within a Data Center (DC) and long haul for WAN transport, has made great progress in recent years. While it took 12 to 14 years (from 2000 to 2012-2014) to go from 1G to 10G Ethernet in the DC, it’s taken only a couple of years to go to 40G in mega DCs and in the core WAN. Some DCs, Internet Exchanges, and WAN backbones now support 100G.

[For today’s DCs, the uplinks and switch-to-switch traffic use 10G/40G Ethernet, while WANs generally use OTN framing, channels and speeds.]

A Long History of Innovation at Bell Labs:

During a March 9th Open Compute Project (OCP) Summit Keynote, Peter Winzer, Ph.D & Head of the Optical Transmissions Systems & Networks Research Department at (now Nokia) Bell Labs shared insights around how innovation has evolved along with the fiber optic foundation for telecom infrastructure and long haul transport. After his 11 minute presentation, Winzer was interviewed by Katharine Schmidtke, PhD & Head of Optical Network Strategy at Facebook (see Q&A summary below).

Winzer shared key insights about how the “Transistor” was developed at Bell Labs (patent in 1947) and then given away for free to stimulate its uptake in design of electronic devices. That so called “culture of openness” spread to the area now known as Silicon Valley, ever since William Shockley set up his Mt View, CA lab in 1956, followed by Bob Noyce & company leaving to found Fairchild in 1957. “Silicon Valley is a spin-out of Bell Labs…” he said. We agree!

Peter covered Bell Labs from its glory days to its accomplishments after divestiture by AT&T (to Lucent, then Alcatel-Lucent and now Nokia) with a chart showing all the important discoveries. Please refer to this chart of Innovation at Work which continues with a video window of Peter speaking.

Peter then described some of the more recent work he and his team conducted around the 100G field trial with Verizon in 2007 on a live link in Florida, which proved that capacity limits of optical networks could be drastically increased (from 10G or 40G to 100G) to help meet exponential growth in traffic. Winzer maintains that 100G in the WAN is feasible today as an upgrade to 10G/40G long haul networks.

Please refer to chart of Making 100G a Reality- A Recent Example of Bell Labs Innovation. One little Bell Labs developed DSP chip does all the signal processing for 100G transmission. Next was a Terabit per second per fiber optic wavelength/channel (which assumes DWDM transmission) lab demo in 2015.

Why was that important? Because data/video traffic is continuing to grow (exponentially). Traffic growth vs fiber speed per wavelength was illustrated by a table showing how much capacity/speed is needed depending on traffic growth rates. For example, with a 40% annual traffic growth rate, a 10X increase in fiber channel speed will be needed in 7 years. That in turn implies 1 terabit per second transmission will be needed in 2017, since 100G bit/sec was commercially available (but rarely deployed) in 2010.
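The 40% and 7 year figures above follow from simple compound growth. The short Python sketch below reproduces that arithmetic; the function names are illustrative, and the 20% and 60% rates are assumptions added only for comparison (they are not taken from Winzer's table).

```python
import math


def years_to_multiply(growth_rate: float, factor: float) -> float:
    """Years of compound annual growth needed for traffic to grow by `factor`."""
    return math.log(factor) / math.log(1.0 + growth_rate)


def multiple_after(growth_rate: float, years: float) -> float:
    """Traffic multiple reached after `years` of compound annual growth."""
    return (1.0 + growth_rate) ** years


if __name__ == "__main__":
    # 0.40 is the rate quoted in the talk; 0.20 and 0.60 are illustrative assumptions.
    for rate in (0.20, 0.40, 0.60):
        years = years_to_multiply(rate, 10)
        print(f"{rate:.0%} annual growth: 10X after {years:.1f} years")
```

At 40% annual growth the 10X point arrives after roughly 6.8 years, which is where the "7 years" and the 2010 to 2017 span come from.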

Capacity Limits of Optical Networks:

It was long believed that fiber capacity was effectively infinite, but growth in capacity has been slowing since 2000. Looking ahead, the Bell Labs team will focus on the challenges around fiber capacity limits, as we are now approaching the Shannon limits of a fiber optic channel based on transmission distance.

Winzer stated: “We are at the Shannon limits. Is there life beyond DWDM? Bell Labs is looking at physical dimensions available and, with the help of frequency and space, we hope to find solutions that will help you scale your networks further (e.g. attain higher speeds per optical channel/wavelength/fiber).”
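To see why frequency and space are the dimensions of interest, it helps to recall the textbook Shannon capacity formula, C = B log2(1 + SNR). The sketch below is a simplified illustration under stated assumptions: a single linear channel with additive Gaussian noise, which ignores the fiber nonlinearities that set the real limit, and example numbers (a 50 GHz channel at 20 dB SNR) chosen by the editor, not taken from the talk.

```python
import math


def shannon_capacity_bps(bandwidth_hz: float, snr_db: float) -> float:
    """Shannon capacity C = B * log2(1 + SNR) of a linear Gaussian-noise channel."""
    snr_linear = 10 ** (snr_db / 10)  # convert dB to a linear power ratio
    return bandwidth_hz * math.log2(1 + snr_linear)


if __name__ == "__main__":
    # Assumed example values, not figures from Winzer's presentation.
    base = shannon_capacity_bps(50e9, 20.0)
    more_snr = shannon_capacity_bps(50e9, 23.0)         # double the signal power
    more_bandwidth = shannon_capacity_bps(100e9, 20.0)  # double the bandwidth
    print(f"baseline:         {base / 1e9:.0f} Gb/s")
    print(f"+3 dB SNR:        {more_snr / 1e9:.0f} Gb/s")
    print(f"2x bandwidth:     {more_bandwidth / 1e9:.0f} Gb/s")
```

Doubling signal power adds only about one bit per second per hertz, while doubling the bandwidth (frequency) or adding a second parallel channel (space) doubles capacity. That asymmetry is the reason more spectrum and more spatial paths are the remaining ways to scale.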

This topic was picked up again during the Q&A conversation described below.

Facebook’s Katharine Schmidtke’s Remarks + Q&A with Peter Winzer:

Dr. Schmidtke has been previously profiled in a 2015 Hot Interconnects article detailing Facebook’s (FB’s) intra DC optical network strategy. Katharine said that while most of FB’s DC internal interconnects were at 40G bit/sec links, one FB DC would be upgraded to 100G bit/sec using Duplex Single Mode Fiber (SMF) this year. SMF, while more expensive than Multi Mode Fiber (MMF), can be upgraded to support much higher speeds such as 400G bit/sec and even 1 Tera bit/sec.

Katharine said that all FB DC internal interconnects would be upgraded to 100G by January of 2017. Finally, Katharine said that it’s now possible to build efficient green infrastructure for better designed DCs.

Note: Katharine didn’t talk about fiber speeds needed to interconnect FB’s DCs, e.g. their internal WAN backbone. (Google refused to answer that very same question from this author during several of their talks on Google’s inter-DC fiber optic based backbone WAN.)

In a follow up conversation with Peter Winzer, Katharine asked a number of questions. Here’s a summary of the Q&A:

1. What’s the capacity needed in the near future? Fiber optic capacity is very relative, dependent on operator demand and their traffic growth rates.

2. What should the industry be doing to address the capacity challenges? There are five physical dimensions that can be used to scale a network. Only Frequency and Space haven’t been exploited yet, but those will need to be researched. Several approaches were suggested for Frequency improvements. Space implies parallel transmission systems (TBD).

3. How does coherence technology (e.g. 100G Coherent DSP chip from Bell Labs) help on the network scale? With Coherent DSPs you can electronically compensate for various types of impairments such that 100G becomes a “piece of cake” where 10G previously had trouble (due to uncompensated fiber impairments). You can also monitor and open up the network to sense polarization rotation in the signal, which could be caused by a backhoe digging up the system. That’s predictive fault detection and isolation. Please also see the IEEE Spectrum article excerpt on coherence below.

4. What about “Alien Wavelengths” (sending wavelengths generated by a different vendor over the fiber line system built by the incumbent vendor)? Coherent technology can compensate for impairments much more easily providing for a lot more openness.

5. Business case? Peter referenced Bell Labs giving away the transistor to stimulate electronic designs to use it. The effect was that the transistor began to be used for switching (and other applications) besides the linear amplifier it was originally intended to replace. It had a huge impact on the electronics industry. The implication here is that openness and collaboration as per the OCP will lead to important innovations in fiber optics transmission. Time will tell.

In a March 9th afternoon OCP Summit session, Katharine described Optical Interconnects within the DC and Beyond using FB DCs as examples. That was then followed by a panel that included representatives from Microsoft, Google and Equinix. You can watch that video here.

Has Fiber Optic Transmission Speed Reached Its Limits?

In an email to this author related to Winzer’s comments about fiber optic transmission capacity limits, Katharine wrote: “You could say that fiber optic transmission (speed) is predicted to plateau or is predicted to reach the Shannon limit. I do agree that the capacity crunch challenge is important and research needs to be started right away. It’s a great topic to start a discussion.”

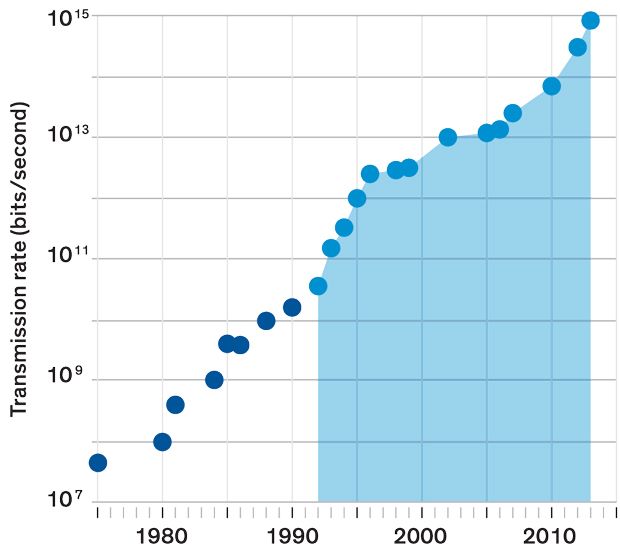

Indeed, the discussion has already started as per an excellent February 2016 IEEE Spectrum article: After decades of exponential growth, fiber-optic capacity may be facing a plateau. The graph below is extracted from that article:

Data source: Donald Keck Fiber-optic capacity has made exponential gains over the years. The data in this chart, compiled by Donald Keck, tracks the record-breaking “hero experiments” that typically precede commercial adoption. It shows the improvement in fiber capacity before and after the introduction of wavelength-division multiplexing [light blue section]

The article goes on to reference Peter Winzer’s work:

“Peter Winzer, a distinguished member of technical staff at Bell Labs and a leader in high-speed fiber systems, agrees that installing new cables with even more fibers is the simplest approach. But in a recent article, he warned that this approach, which will add to the cost of a cable, might not be popular among telecommunications companies. It wouldn’t reduce the cost per transmitted bit as much as they had come to expect from earlier technological improvements.”

The article also defines coherence and why it’s an important and intrinsic property of laser light.

“Coherence means that if you cut across the beam at any point, you’ll find that all its waves will have the same phase. The peaks and troughs all move in concert, like soldiers marching on parade. Coherence can be used to drastically improve a receiver’s ability to extract information. The scheme works by combining an incoming fiber signal with light of the same frequency generated inside a receiver. With its clean phase, the locally generated light can be used to help determine the phase of the noisier incoming signal. The carrier wave can then be filtered out, leaving the signal that was added to it. The receiver converts that remaining signal into an electronic form carrying the 1s and 0s of the information that was sent.”

Space and time do not permit any more discussion here about the intriguing subject of how to increase fiber optic transmission capacity as the Shannon limit is reached. The obvious answer is to just use more fibers or wavelengths.

We invite blog posts, referenced articles and comments (log in using your IEEE web account credentials to post a comment below this article).

Verizon, FCC Push Mm Wave 5G -Threat to Cable Broadband Service, Reinhardt Krause, INVESTOR’S BUSINESS DAILY

Bottom Line: Could high frequencies let AT&T or Verizon outdo cable broadband service? Wireless carriers could one day boast data-transfer speeds up to a gigabit per second with 5G, about 50 times faster than cellular networks around the U.S. have now. That opens up new markets for competition.

Federal regulators and Verizon Communications have zeroed in on airwaves that could make the U.S. the global leader in rolling out 5G wireless services. One market opportunity for 5G may be as challenger to the cable TV industry’s broadband dominance. Think Verizon Wireless, not Verizon’s FiOS-branded landline service, vs. the likes of Comcast or Charter Communications.

First, though, airwaves need to be freed up for 5G. That’s where high-frequency radio spectrum, also called millimeter wave or mm-Wave, comes in. In particular, U.S. regulators are focused on the 28 gigahertz frequency band, analysts say. Most wireless phone services use radio frequencies below 3 GHz.

If 28 GHz or millimeter wave rings a bell, that’s because several fixed wireless startups (WinStar, Teligent, NextLink, Terabeam) tried and failed to commercialize products relying on high-frequency airwaves during the dot-com boom of the late 1990s. Business models were suspect, and their LMDS (local multipoint distribution services) were susceptible to interference from rain and other environmental conditions.

When the tech bubble burst in 2000-01, the LMDS startups perished. Technology advances, however, could now make the high-frequency airwaves prime candidates for 5G.

“In the 1990s, with LMDS, mobile data wasn’t mature, and neither was the Internet, and neither was the electronics industry — it couldn’t make low-cost, mmWave devices,” said Ted Rappaport, founding director of NYU Wireless, New York University’s research center on millimeter-wave technologies.

“Wi-Fi was really brand new then, and broadband backhaul (long-distance) was not even built out. LMDS was originally conceived to be like fiber, to serve as backhaul or point-to-multipoint, and was not for mobile services,” he said.

“Fast forward to today: backhaul is in place to accommodate demand, and electronics at mmWave frequencies are being mass-produced in cars,” Rappaport continued. “Demand for data is increasing more than 50% a year, and the only way to continue to supply capacity to users is to move up to (millimeter wave).”

The Federal Communications Commission in October opened a study looking at 28, 37, 39, and 60 GHz as the primary bands for 5G. While the FCC says that 28 GHz airwaves show promise, some countries have been focused on higher frequencies. FCC Chairman Tom Wheeler, speaking at a U.S. Senate committee hearing on March 2, said: “While international coordination is preferable, I believe we should move forward with exploration of the 28 GHz band.”

Wheeler said that the U.S. will lead the world in 5G and allocate spectrum “faster than any nation on the planet.”

Verizon Makes Deals

Verizon, meanwhile, on Feb. 22 agreed to buy privately held XO Communications’ fiber-optic network business for about $1.8 billion. In a side deal, Verizon will also lease XO’s wireless spectrum in the 28 GHz to 31 GHz bands, with an option to buy for $200 million by the end of 2018. XO’s spectrum covers some of the largest U.S. metro areas, including New York, Boston, Chicago, Minneapolis, Atlanta, Miami, Dallas, Denver, Phoenix, San Francisco and Los Angeles, as well as Tampa, Fla., and Austin, Texas. Verizon CFO Fran Shammo commented on the XO deal at a Morgan Stanley conference on March 1st.

“Right now we have licenses issued to us from the FCC for trial purposes at 28 GHz. The XO deal gave us additional 28 GHz,” he said. “The rental agreement enables us to include that (XO spectrum) in some of our R&D development with 28 GHz. So that just continues the path that we’re on in launching 5G as soon as the FCC clears spectrum.” He noted that Japan and South Korea plan to test 5G services using 28 GHz and 39 GHz airwaves.

Some analysts doubt that 28 GHz airwaves will be on a fast track. “We are skeptical not only on the timing of the availability of 28 GHz but also its ultimate viability in a mobile wireless network,” Walter Piecyk, analyst at BTIG Research, said in a report. Boosting signal strength at higher frequencies is a challenge for wireless firms. Low-frequency airwaves travel over long distances and also through walls, improving in-building services.

One approach to increase propagation in millimeter wave bands, analysts say, is using more “small cell” radio antennas, which increase network capacity. Wireless firms generally use large cell towers to connect mobile phone calls and whisk video and email to mobile phone users. They also install radio antennas on building rooftops, church steeples and billboards. Suitcase-sized antennas used in small-cell technology often go on lamp posts or utility poles. Verizon has been testing small cell technology in Boston, MA.

When Will 5G Happen?

Verizon says that it will begin rolling out 5G commercially in 2017, though its plans are still vague. While many wireless service providers touted 5G plans and tests at the Mobile World Congress (MWC) in February, makers of telecom network equipment are being cautious.

“General consensus (at MWC) seemed to indicate that the 2020 time-frame will mark full-scale 5G deployments,” Barclays analyst Mark Moskowitz said in a report. Verizon has said that it doesn’t expect 5G networks to replace existing 4G ones.

While 5G is expected to provide much faster data speeds, wireless firms also expect applications that require always-on, low-data-rate connections. The apps involve data gathering from industrial sensors, home appliances and other devices often referred to as part of the Internet of Things (IoT).

Both Verizon and AT&T have recently touted 5G speeds up to one gigabit per second. That’s roughly 50 times faster than the average speeds of 4G wireless networks in good conditions. AT&T CEO Randall Stephenson recently said that 5G speeds could match fiber-optic broadband connections to homes.

5G Vs. Broadband

At the Morgan Stanley conference, Verizon’s Shammo also said that 5G could be a “substitute product for broadband.” Regulators would like to create new competition for cable TV companies. But, Verizon says, it’s still early days.

“With trials, we’ll figure out exactly what we can deliver, what the base cases are,” said Shammo. “5G has the capability to be a substitute for broadband into the home with a fixed wireless solution. The question is, can you deploy that technology and actually make money at a price that the consumer would pay?”

Sanyogita Shamsunder, Verizon’s director of network infrastructure planning, says that high frequencies can support 5G. “Radio frequency components today are able to support much wider bandwidth (think wide lanes on the highway) when compared to even 10 years ago. What it means is we are able to pump more bits at the same time,” Shamsunder said in an email to IBD. “Due to improvements in antenna and RF technology,” she added, “we are able to support 100s of small, tiny antennas on a small die the size of a quarter.”

Another point of view from Alan Gatherer of IEEE ComSoc:

Fresh from Mobile World Congress, my favourite “tell it like it is” curmudgeon-cum-analyst Richard Kramer has kindly agreed to share his thoughts on the state of the industry and on 5G in particular. While reading his article, I had two thoughts that align with his position:

1) How long will it take to really do VoLTE well?

2) 3G’s lifespan was quite short and we should probably expect a lot more runway for 4G.

Like Alice in Wonderland, the mobile world has been turned topsy-turvy with an accelerated push to 5G. One would think the lessons on 3G, 3.5G (HSPA), 4G, and its many variants, were never (painfully) learned: that the ideal approach for operators and vendors is to leave time to “harvest” profits from investments, not race to the next node. This was true in the earliest discussions of LTE (stretching back, if one recalls, to 2006/7), and in the interim fending off the noisy interventions of WiMax (remember those embarrassing forecasts from some analysts, which we fondly recall dubbing “technical pornography” for the 802.xxx variants garnering oohs and aahs from radio engineers). Bear in mind that 3G was commercially launched in UK on 03.03.03, and LTE was demo’ed at the 2008 Beijing Olympics. Isn’t there a lesson here about leaving the cake in the oven long enough to bake?

That 5G is theoretically using the same, or at least similar, air interfaces, is hardly a saving grace. For now, the thought of deploying a heap of non-standard equipment is highly unappealing to telco customers. Neither is sufficient attention paid to the lack of spectrum, or the potential perils of relying on unlicensed spectrum for commercial services. There seems to be a blind, marketing-led rush to be the first to announce milestones that are effectively rigged lab trials, and that convince few of the sceptical buyers to shift long-standing vendor allegiances. So what do we have to hang our hats on? A series of relatively disjointed and often proprietary innovations building on LTE, specifically many bands of carrier aggregation and millimetre wave, including unlicensed bands, to get support for (and make a smash and grab raid on) much wider blocks of spectrum and therefore better throughput and capacity; a further extension of decades of work on MIMO to further boost capacity; and a similar pendulum swing towards edge caching to reduce latency (while at the same time trying to centralise resource in baseband-in-the-cloud, to reduce processing overheads in networks). The astonishing leap of faith is that by providing gigabit wireless speed at low latency, one will enable “new business models,” for now largely unimagined.

This leaves us with the farcical purported “business cases” for 5G. First, we have the Ghost of 2G Past, in the form of telematics, rebranded M2M, and now rebranded once more as “IoT”. To be sure, there are many industries that have long had the aim of wirelessly connecting all sorts of devices without voice or high-speed data connectivity. Yet these applications tend to work just fine at 2G or even 3G speeds. The notion that we need vast infrastructure upgrades to send tiny amounts of data with lower latency smells of desperation. Then there are all the low-latency video-related services – which again can be made more than workable with a combination of cellular plus WiFi. Meanwhile, just to muddy the waters and prevent any smooth sailing towards the mythical 5G world, we have a slew of new variants: LTE-A, LTE-U, low-energy LTE, MulteFire, LTE-QED (sorry, I made that one up), etc. And the aims of gigabit wireless have to be to supplant wireline, though that is hardly acting in isolation, as cablecos adopt DOCSIS 3.1 and traditional telcos bring on G.fast and other next-generation copper or fibre technology. As always, these advances are not being made in isolation, even if the plans of individual vendors seem to have done so.

Desperation is not confined to equipment vendors; chipmakers such as Qualcomm, MediaTek and others are facing the first year of a declining TAM for smartphone silicon, partly due to weak demand from emerging markets, and also due to a rising influence of second hand smartphones being sold after refurbishment. We also see a trend of leading smartphone vendors internalising their silicon requirements, be it with apps processors (Apple’s A-series, Samsung Exynos), or modems (HiSilicon). Our view is that smartphone unit demand will be flattish overall this year, with most of the growth coming from low-end vendors desperate to ramp volumes to stay relevant. This should drive Qualcomm and MediaTek to continue addressing more and more “adjacent” segments within smartphones, to prevent chip sales from shrinking. Qualcomm is looking to make LTE much more robust to overtake WiFi and get traction in end-markets it does not address today.

Thus we have another of the “inter-regnum” MWCs, in which we are mired in a chaotic economic climate where investment commitments will be slow in coming, while vendors pre-position themselves for the real action in two or three years when the technologies are actually closer to being standardised and then working. We have, like Alice, dropped into the Rabbit Hole, to wander amidst the psychedelic lab experiments of multiple hues of 5G, before reality sets in and everything fades to grey, or at least the black and white of firm roadmaps and real technical solutions.

Editor-in-Chief: Alan Gatherer

Comments are welcome!

http://www.comsoc.org/ctn/5g-down-rabbit-hole

Reference to presentation & slides: 5G and the Future of Wireless by Jonathan Wells, PhD

https://californiaconsultants.org/event/5g-and-the-future-of-wireless/

2016 OCP Summit Solidifies Mega Trend to Open Hardware & Software in Mega Data Centers

The highlight of the 2016 Open Compute Project (OCP) Summit, held March 9-10, 2016 at the San Jose Convention Center, was Google’s unexpected announcement that it had joined OCP and was contributing “a new rack specification that includes 48V power distribution and a new form factor to allow OCP racks to fit into our data centers.” With Facebook and Microsoft already contributing lots of open source software (e.g. Microsoft’s SONiC, more below) and hardware (compute server and switch designs), Google’s presence puts a solid stamp of authenticity on OCP and ensures the trend of open IT hardware and software will prevail in cloud resident mega data centers.

Google hopes it can go beyond the new power technology in working together with OCP, Urs Hölzle, Google’s senior vice president for technical infrastructure said in a surprise Wednesday keynote talk at the OCP Summit. Google published a paper last week calling on disk manufacturers “to think about alternate form factors and alternate functionality of disks in the data center,” Hölzle said. Big data center operators “don’t care about individual disks, they care about thousands of disks that are tied together through a software system into a storage system.” Alternative form factors can save costs and reduce complexity.

Hölzle noted the OCP had made great progress (in open hardware designs/schematics), but said the organization could do a lot more in open software. He said there’s an opportunity for OCP to improve software for managing the servers, switch/routers, storage, and racks in a (large) Data Center. That would replace the totally outdated SNMP with its set of managed objects per equipment type (MIBs).

Jason Taylor, PhD, the OCP Foundation chairman and president + vice president of Infrastructure for Facebook, said that the success of the OCP concept depends upon its acceptance by the telecommunications industry. Taylor said: “The acceptance of OCP from the telecommunications industry is a particularly important sign of momentum for the community. This is another industry where infrastructure is core to the business. Hopefully we’ll end up with a far more efficient infrastructure.”

This past January, the OCP launched the OCP Telco Project. It’s specifically focused on open telecom data center technologies. Members include AT&T, Deutsche Telekom (DT), EE (UK mobile network operator and Internet service provider), SK Telecom, Verizon, Equinix and Nexius. The three main goals of the OCP Telco Project are:

- Communicating telco technical requirements effectively to the OCP community.

- Strengthening the OCP ecosystem to address the deployment and operational needs of telcos.

- Bringing OCP innovations to telco data-center infrastructure for increased cost-savings and agility.

See OCP Telco Project, Major Telcos Join Facebook’s Open Compute Project and Equinix Looks to Future-Proof Network Through Open Computing.

In late February, Facebook started a parallel open Telecom Infra Project (TIP) for mobile networks which will use OCP principles. Facebook’s Jay Parikh wrote in a blog post:

“TIP members will work together to contribute designs in three areas — access, backhaul, and core and management — applying the Open Compute Project models of openness and disaggregation as methods of spurring innovation. In what is a traditionally closed system, component pieces will be unbundled, affording operators more flexibility in building networks. This will result in significant gains in cost and operational efficiency for both rural and urban deployments. As the effort progresses, TIP members will work together to accelerate development of technologies like 5G that will pave the way for better connectivity and richer services.”

TIP was referenced by Mr. Parikh in his keynote speech, which was preceded by a panel session (see below) in which wireless carriers DT, SK Telecom, AT&T and Verizon shared how they planned to use and deploy OCP built network equipment. Jay noted that Facebook contributed the designs of Wedge 100 and 6-pack, its next-generation open networking switches, to OCP. Facebook is also working with other companies on standardizing data center optics and inter-data center (WAN) transport solutions to help the industry move faster on networking. Microsoft, Verizon, and Equinix are all part of that effort.

At the beginning of his keynote speech, Microsoft’s Azure CTO Mark Russinovich asked the OCP Summit audience how many believed Microsoft was an “open source company?” Very few hands were raised. That was to change after Russinovich announced the release of SONiC (Software for Open Networking in the Cloud) to the OCP. It is based on the idea that a fully open sourced switch platform could be serviceable by sharing the same software stack across various hardware from multiple switch vendors/ ASIC switch silicon. The new software extends and opens the Linux-based ACS switch that Microsoft has been using internally in its Azure cloud, and will be offered for all to use through the OCP. It also includes software implementations for all the popular protocol stacks for a switch-router.

Source: Microsoft – Positioning SONiC within a 3 layer stack

The SONiC platform builds on the Switch Abstraction Interface (SAI), a software layer launched last year by Microsoft that translates the APIs for multiple network ASICs, so they can be run by the same software instead of requiring proprietary code. With SAI, cloud service providers had to provide or find code to carry out actual network jobs on top of the interface. These utilities included some open source software. SONiC combines those open source components (for jobs like BGP routing) and Microsoft’s own utilities, all of which have been open sourced.
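The layering described above can be pictured with a small Python sketch. It is only a conceptual illustration: the class and method names (`SwitchAsic`, `VendorAAsic`, `VendorBAsic`, `RoutingApp`) are hypothetical and are not the real SAI or SONiC APIs. The point is that an application written against one abstract interface runs unchanged on different switch silicon.

```python
from abc import ABC, abstractmethod
from typing import Dict, List, Tuple


class SwitchAsic(ABC):
    """Hypothetical vendor-neutral interface, standing in for the role SAI plays."""

    @abstractmethod
    def add_route(self, prefix: str, next_hop: str) -> None:
        """Program one route into the forwarding hardware."""

    @abstractmethod
    def routes(self) -> Dict[str, str]:
        """Return the programmed routes as {prefix: next_hop}."""


class VendorAAsic(SwitchAsic):
    """Imaginary ASIC driver that keeps routes in a dictionary."""

    def __init__(self) -> None:
        self._table: Dict[str, str] = {}

    def add_route(self, prefix: str, next_hop: str) -> None:
        self._table[prefix] = next_hop

    def routes(self) -> Dict[str, str]:
        return dict(self._table)


class VendorBAsic(SwitchAsic):
    """Imaginary ASIC driver with a different internal layout (a list of entries)."""

    def __init__(self) -> None:
        self._entries: List[Tuple[str, str]] = []

    def add_route(self, prefix: str, next_hop: str) -> None:
        # Drop any older entry for the same prefix, then append the new one.
        self._entries = [e for e in self._entries if e[0] != prefix]
        self._entries.append((prefix, next_hop))

    def routes(self) -> Dict[str, str]:
        return dict(self._entries)


class RoutingApp:
    """Stand-in for a network application (for example a BGP daemon)."""

    def __init__(self, asic: SwitchAsic) -> None:
        self.asic = asic

    def learn(self, prefix: str, next_hop: str) -> None:
        # The application only ever calls the abstract interface.
        self.asic.add_route(prefix, next_hop)


if __name__ == "__main__":
    for asic in (VendorAAsic(), VendorBAsic()):
        app = RoutingApp(asic)
        app.learn("10.0.0.0/24", "192.0.2.1")
        print(type(asic).__name__, asic.routes())
```

In this picture, SAI corresponds to the abstract base class, each ASIC vendor supplies one implementation, and SONiC supplies the applications that sit on top.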

More than a simple proposal, SONiC is already receiving contributions from companies such as Arista, Broadcom, Dell, and Mellanox. Russinovich closed by asking the audience how many NOW think Microsoft is an “open source company?” Hundreds of hands went up in the air which affirms the audience’s recognition of SONiC as a key contribution to the open source networking software movement.

Rachael King, a reporter at the Wall Street Journal, moderated a panel of telecommunications executives, including Ken Duell from AT&T, Mahmoud El Assir from Verizon, Kang-Won Lee from SK Telecom, and Daniel Brower from Deutsche Telekom, to discuss some of the common infrastructure challenges related to shifting to 5G cellular networks quickly and without disrupting service. The central theme of the session was “driving innovation at a much greater speed,” as Daniel Brower, VP and chief architect of infrastructure cloud for DT, put it. The goal is improved service velocity so carriers can deploy and realize revenues from new services much more quickly.

Most telco network operators are focused on shifting to “white box” switches and routers and virtualizing their networks. Taking an open approach to infrastructure will make the transition to 5G more efficient and will accelerate the speed of delivery and configuration of networks.

Ken Duell, AVP of new technology product development and engineering at AT&T concisely summarized the carrier’s dilemma: “In our case, it’s a matter of survival. Our customers are expecting services on demand anywhere they may be. We’re finding that the open source platform … provides us a platform to build new services and deliver with much faster velocity.”

Duell said a major challenge facing AT&T and other telecom companies is network operating system software. “When we think of white boxes, the hardware eco-system is maturing very quickly. The challenge is the software, especially network OS software, to run on these systems with WAN networking features. One of the things we hoped … is to create enough of an ecosystem to create these network OS software platforms.”

There’s also a huge learning and retraining effort for network engineers and other employees, which AT&T is addressing with new on-line learning courses.

Verizon SVP and CIO Mahmoud El Assir hit on the ability of open source and virtualization of functions (e.g. virtualized CPE) to create true network personalization for future wireless customers. That was somewhat of a surprise to the WSJ moderator and to this author. El Assir compared the new telco focus to the now outdated historical concerns with providing increased speed/throughput and supporting various protocols on the same network.

“Now it’s exciting that the telecom industry, the networking industry, everything is becoming more software,” El Assir said. “Everything in the network becomes more like an app. This allows us to kind of unlock and de-aggregate our network components and accelerate the speed of innovation. … Getting compute everywhere in the network, all the way to the edge, is a key element for us.”

El Assir added OCP-based switches and routers will allow for “personalized networks on the edge. You can have your own network on the edge. Today that’s not possible. Today everybody is connected to the same cell. We can change that. Edge compute will create this differentiation.”

Kang-Won Lee, director of wireless access network solution management for SK Telecom, looked ahead to “5G” and the various high-capacity use cases that will usher in a new type of network that will require white box hardware due to cost models.

“It was more about the storage and the bandwidth and how you support people moving around to make sure their connections don’t drop,” Lee said. “That was the foremost goal of mobile service providers. In Korea, we have already achieved that.” With 5G the network “will be a lot of different types of traffic that need to be able to connect. In order to support those different types of traffic … it will require a lot of work. That’s why we are looking at data centers, white boxes, obviously, I mean, creating data centers with the brand name servers is not going to be cost efficient.”

Moderator Rachel King asked: “So what about Verizon and AT&T, fierce rivals in the U.S. mobile market, sharing research and collaborating – how does that work?”

“Our current focus is on the customer,” El Assir replied. “I think now with what OCP is bringing to the table is really unique. We’ve moved from using proprietary software to open source software and now we’re at a good place where we can transition from using proprietary hardware to open source hardware. We want the ecosystem to grow in order for the ecosystem to be successful.”

“There’s a lot of efficiencies in having many companies collaborate on open source hardware,” Duell added. “I think it will help drive the cost down and the efficiency up across the entire industry. AT&T will still compete with Verizon, but the differentiation will come with the software. The hardware will be common. We’ll compete on software features.”

You can watch the video of that panel session here.

We close with a resonating quote from Carl Weinschenk, who covers telecom for IT Business Edge:

“Reconfiguring how IT and telecom companies acquire equipment is a complex and long-term endeavor. OCP appears to be taking that long road, and is getting buy-in from companies that can help make it happen.”

IDC Directions 2016: IoT (Internet of Things) Outlook vs Current Market Assessment

The 11th annual IDC Directions conference was held in San Jose, CA last week. The saga of the 3rd platform (Cloud, Mobile, Social, Big Data/Analytics) continues unabated. One of many IT predictions was that artificial intelligence (AI) and deep learning/machine learning will be a big part of new application development. IDC predicts 50% of developer teams will build AI/cognitive technologies into apps by 2018, up from only 1% in 2015.

Vernon Turner, senior vice president of enterprise systems at IDC, presented a keynote speech on IoT. IDC forecasts that by 2025, approximately 80 billion devices will be connected to the Internet. To put that in perspective, approximately 11 billion devices connect to the Internet now. The figure is expected to nearly triple to 30 billion by 2020 and then nearly triple again to 80 billion five years later.

To illustrate that phenomenal IoT growth rate, consider that approximately 4,800 devices are currently being connected to the network every minute. Ten years from now, the figure will balloon to 152,000 a minute. Overall, IoT will be a $1.46 trillion market by 2020, according to IDC.
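As a quick sanity check on the IDC figures quoted above, the short Python sketch below computes the growth multiples and the implied compound annual growth rates. The device counts and connection rates come from the keynote as reported here; treating "now" as 2016 and reading the connection rates as per-minute figures are assumptions, and the helper name `cagr` is purely illustrative.

```python
def cagr(start, end, years):
    """Compound annual growth rate implied by two endpoint values."""
    return (end / start) ** (1 / years) - 1


# Connected-device forecasts quoted from IDC.
# Assumption: "now" is taken to be 2016, the year of the conference.
devices = {2016: 11e9, 2020: 30e9, 2025: 80e9}

years = sorted(devices)
for y0, y1 in zip(years, years[1:]):
    multiple = devices[y1] / devices[y0]
    rate = cagr(devices[y0], devices[y1], y1 - y0)
    print(f"{y0}->{y1}: x{multiple:.2f}, about {rate:.0%} per year")

# Connection rates quoted from IDC (assumed to be devices per minute).
rate_now, rate_in_ten_years = 4_800, 152_000
print(f"connection rate multiple: x{rate_in_ten_years / rate_now:.1f}")
```

Under these assumptions, each "nearly triple" step works out to a multiple of about 2.7, and the installed base grows roughly sevenfold between now and 2025.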

“If you don’t have scalable networks for the IoTs, you won’t be able to connect,” Turner said. “New IoT networks are going to have to be able to handle various requirements of IoT (e.g. very low latency).”

Turner also provided a quick update to IDC’s predictions for the growth of (big) digital data. A few years ago, the market research firm made headlines by predicting that the total amount of digital data created worldwide would mushroom from 4.4 zettabytes in 2013 to 44 zettabytes by 2020. IDC now believes that by 2025 the total worldwide digital data created will reach 180 zettabytes. The astounding growth comes from both the number of devices generating data and the number of sensors in each device.

- The Ford GT car, for instance, contains 50 sensors and 28 microprocessors and is capable of generating up to 100GB of data per hour. Granted, the GT is a finely-tuned race car, but even pedestrian household items will contain arrays of sensors and embedded computing capabilities.

- Smart thermometers will compile thousands of readings in a few seconds.

- Cars, homes and offices will likely be equipped with IoT gateways to manage security and connectivity for the expanding armada of devices.
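For a rough sense of scale, the data figures quoted above can be turned into growth multiples and an average bit rate. The sketch below is only back-of-the-envelope arithmetic: it assumes decimal units (1 GB = 10^9 bytes) and a constant data rate over the hour, neither of which is stated by IDC, and the function names are illustrative.

```python
# Worldwide digital data created, in zettabytes, as quoted from IDC.
data_zb = {2013: 4.4, 2020: 44.0, 2025: 180.0}


def multiple(start_year, end_year):
    """Growth multiple between two of the quoted years."""
    return data_zb[end_year] / data_zb[start_year]


def gb_per_hour_to_mbps(gb_per_hour):
    """Average rate in Mbit/s for a given volume in GB per hour.

    Assumption: decimal gigabytes (1 GB = 1e9 bytes) and a constant rate.
    """
    bits_per_hour = gb_per_hour * 1e9 * 8
    return bits_per_hour / 3600 / 1e6


print(f"2013->2020: x{multiple(2013, 2020):.1f}")
print(f"2020->2025: x{multiple(2020, 2025):.1f}")
print(f"100 GB/hour is about {gb_per_hour_to_mbps(100):.0f} Mbit/s on average")
```

Under those assumptions, the Ford GT's peak of 100GB per hour corresponds to an average of roughly 222 Mbit/s, which helps explain the interest in summarizing or discarding data near the device.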

How this huge amount of newly generated data gets used and where it’s stored remains an open debate in the industry. A substantial portion of it will consist of status data from equipment or personal devices reporting on routine tasks: the current temperature inside a cold storage unit, the RPMs of a wheel on a truck, etc. Some tech execs believe that a large segment of this status data can be summarized and discarded.

Industrial customers (like GE, Siemens, etc.) will likely invest more heavily in IoT (sometimes referred to as “the Industrial Internet”) than other market segments/verticals over time, but at the moment retail customers are the most active in implementing new systems. In North America, a substantial amount of interest in IoT revolves around “digital transformation,” i.e. developing new digital services on top of existing businesses like car repair or hotel reservation. In Europe and Asia, the focus trends toward improving energy consumption and efficiency.

Turner noted that the commercialization of IoT is still in the experimental phase. When examining the IoT projects underway at big companies, IDC found that most of the budgets are in the $5 million to $10 million range. The $100 million contracts aren’t here yet, he added. Retail and manufacturing are the two leading IoT industry verticals, based on IDC findings.

In a presentation titled “A Market Maturing: The Reality of IoT,” Carrie MacGillivray, Vice President, IoT & Mobile, made the following key points related to the IoT market:

- Early adopters are plentiful, but ROI cases are few & far between

- Vendors refining story, making solutions more “real”

- Standards, regulation, scalability and cost (!!!) are still inhibitors (as they have been for years)

IDC has created a model to measure IoT market maturity and placed various categories of users in buckets. Their survey findings are as follows:

- 2% are IoT Experimenters/Ad Hoc

- 31.9% are IoT Explorers/Opportunistic

- 31.3% are IoT Connectors/Repeatable

- 24.2% are IoT Transformers/Manageable

- 10.7% are IoT Disruptors/Optimized solution

Carrie revealed several other important IoT findings:

- Vision is still a struggle for organizations, but it’s moving in the right direction. Executive teams must set the pace for IoT innovation.

- Still more technology maturity is needed. Investment extends beyond connecting the “thing” to ensuring backend technology is enabled.

- IoT plans/process are still not captured in the strategic plan. They need to be integrated into production environments holistically.

Carrie’s Closing Comments for IoT Market Outlook:

- Security, regulatory, standards…and cost (!!!) are still inhibitors to IoT market maturity [IDC will be publishing a report next month on the status of IoT standards and Carrie publicly offered to share it with this author.]

- Vision still needs to be set at the executive level.

- Thoughtful integration of process has to be driven by a vision with measurable objectives.

- People “buy-in” will determine success or failure of these connected “things.”

Above graphic courtesy of IDC: http://www.idc.com/infographics/IoT


MWC-2016: CDNs for Mobile Networks?

One of the more interesting trends from MWC-2016 in Barcelona last month is the use of content delivery networks (CDNs) by mobile operators. [A CDN is an interconnected system of cache servers that deliver web content based on geographical proximity. The CDN concept originated in the wire-line Internet world for traditional video services over “best effort” Internet transport.]

A Mobile CDN is a network of servers – systems, computers or devices – that cooperate transparently to optimize the delivery of content to end users on any type of wireless or mobile network. Mobile CDNs offer an edge-based path to maintaining consumers’ Quality of Experience (QoE), aimed at optimizing content delivery on the last-mile link from cell to device.

“Sustaining a good user experience is extremely costly for operators and, despite their efforts to increase network capacity, video quality degrades as content gets more and more popular,” said Expway co-founder and CMO Claude Seyrat. “15 percent of videos never successfully start, and 25 percent of users give up when facing buffering. The mobile video market is at a crossroads: video traffic is accelerating and mobile operators have outmost difficulty to tame it.”

A number of vendors were showing CDN technologies at MWC-2016, including Expway, Quickplay and Ericsson. The latter is also working to create a sort of global “super CDN” ecosystem among content providers (Brightcove, DailyMotion, EchoStar, Deluxe, LeTV and QuickPlay) and telcos (Hutchison, Telstra, AIS, and Vodafone). There’s also CDNetworks, which claims to have a mobile CDN solution.

LTE Broadcast, a 3GPP feature available on some commercial LTE networks, is a one-to-many approach that allows operators to deliver the content once to a thousand users or more. Expway says it leads to ‘enormous’ bandwidth savings for the delivery of popular content. The company added that with its new offering called FastLane, mobile network operators can enhance their own CDNs or offer additional services with a guaranteed quality of service.
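To see why a one-to-many approach can save so much bandwidth, consider a deliberately simplified model of a single cell in which every viewer watches the same stream at the same bit rate. The Python sketch below is an illustration only, not Expway's or 3GPP's method: the function names and the 2 Mbit/s stream rate are hypothetical, and the model ignores real-world factors such as the more conservative radio settings a broadcast channel may need to reach every user.

```python
def unicast_load_mbps(viewers, stream_mbps):
    """Unicast: the cell carries one copy of the stream per viewer."""
    return viewers * stream_mbps


def broadcast_load_mbps(viewers, stream_mbps):
    """Broadcast: the cell carries a single copy, however many viewers tune in."""
    return stream_mbps if viewers > 0 else 0.0


def savings_fraction(viewers, stream_mbps):
    """Share of unicast load avoided by broadcasting (0.0 when nobody is watching)."""
    unicast = unicast_load_mbps(viewers, stream_mbps)
    if unicast == 0:
        return 0.0
    return 1 - broadcast_load_mbps(viewers, stream_mbps) / unicast


# Hypothetical example: 1,000 viewers of one 2 Mbit/s stream in the same cell.
viewers, stream_mbps = 1000, 2.0
print(f"unicast:   {unicast_load_mbps(viewers, stream_mbps):.0f} Mbit/s")
print(f"broadcast: {broadcast_load_mbps(viewers, stream_mbps):.0f} Mbit/s")
print(f"savings:   {savings_fraction(viewers, stream_mbps):.1%}")
```

In this idealized case, 1,000 unicast copies would need 2,000 Mbit/s while a single broadcast needs 2 Mbit/s. With only one viewer the two approaches cost the same, which is why the benefit is tied to popular content.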

Related video tech at MWC-2016 included open caching solutions from such vendors as PeerApp and Qwilt. Those vendors are trying to lighten the CDN load on the core network by moving popular content closer to end-users.

Akamai, the world’s leading CDN provider, which claims to deliver between 15% and 30% of all Web traffic, demonstrated a CDN for mobile video delivery at MWC-2015. At this year’s MWC, Akamai announced the commercial availability of its predictive content delivery solutions, intended to help solve mobile video quality challenges. Akamai’s predictive content delivery (PCD) solutions were designed specifically to address the requirements of content providers, video platform providers and mobile network operators, and are available in two configurations: an SDK that can be integrated into existing or new media apps, or the turnkey, white-labelled Akamai WatchNow application. Both allow for pre-positioning or caching of new videos on the end-user device based on user preferences and viewing behavior. This is said to make searching for content easier and to allow for offline viewing.

Other top-rated CDN providers can be viewed here and here.

Exactly how many mobile operators will actually buy this new CDN/video technology is not clear. Getting into the video delivery business entails a pretty steep learning curve, plus major capital and operational expense, and not every mobile operator will be able to afford to participate. Most likely it’ll be restricted to the largest wireless telcos for the foreseeable future, i.e. AT&T, VZW, Vodafone, etc. Barriers to entry will be much higher for 2nd and 3rd tier wireless telcos like Sprint and T-Mobile.

Verizon CFO on 5G for landline services to homes? VZ to sell Data Centers?

More 5G Talk the Talk:

5G networks will provide speeds that could compete with landline service inside homes, but it’s not yet known whether wireless telcos could profit from offering the service, Verizon Communications Chief Financial Officer Fran Shammo told an investor conference Tuesday. Mr. Shammo spoke at the Morgan Stanley Technology, Media and Telecom Conference, which was also webcast.

Verizon has been experimenting with 5G technology from multiple vendors in five different markets where the FCC gave the company permission to use 28 GHz spectrum for trial purposes. Based on what Verizon has learned, Shammo said the company believes 5G service could be launched in 2017 if spectrum were available.

Author’s Note: Brilliant! Deploy 5G service in 2017 when the standards won’t be completed till the end of 2020!

“We’re trying to accelerate the FCC to clear spectrum,” Shammo said. He added that FCC Chairman Tom Wheeler recently visited Verizon’s location in Basking Ridge, N.J. – one of the places where the 5G technology has been deployed.

Shammo noted that when wireless carriers upgraded from 3G to 4G technology, costs decreased four- to five-fold (presumably on a per-bit basis). 5G has the potential to provide a similar cost advantage over 4G technology – at least with regard to video delivery, he said.

Separately, Shammo said:

- Verizon might sell data centers to raise cash to “do something else to increase shareholder value.”

- The take rate for Verizon Custom TV has risen to about 40% since the company rebundled the offering, up from a previous level of about one-third.

- There’ll be more conflicts between content and linear video providers, and more channels being dropped from video service provider lineups. He believes Verizon’s Go90 offering gives the company negotiating power with content providers, though, because Go90 offers an additional way to monetize content.

Reference

http://www.verizon.com/about/investors/morgan-stanley-technology-media-and-telecom-conference-2016