Month: September 2016

Google Expands Broadband Internet Access in India

This is an update of an earlier article on Google’s managed Wi-Fi network for Indian Railways:

Google today announced a collection of updates aimed at helping get ‘the next billion’ internet users in India and emerging markets online. One of the more subtle yet interesting components to that push is the launch of Google Station, a project to enable free public Wi-Fi hotspots, which is now open for new partners.

In India alone, Google estimates that 10,000 people go online for the first time each hour, while in Southeast Asia the figure is 3.8 million per month.

The company made a big push on the free Wi-Fi initiative, and today it revealed that it now covers 50 national stations, providing internet access to 3.5 million people each month. (That’s up from 1.5 million in June.) Google and RailTel are targeting 400 stations nationwide, and, in addition to that, it has now opened the program up to other public organizations.

Google Station is aimed at all manner of public venues, from malls to bus stops, city centers, and cafes, and not only those in India, Google said.

“We’re just getting started and are looking for a few strategic, forward-thinking partners to work with on this effort,” it added in a statement.

For all the excitement around how Google is disrupting U.S. internet access with Project Fi and Google Fiber, Google Station has the potential to take things even further by giving the hundreds of millions who lack decent-quality internet access a reliable connection to get online regularly for the first time. That is potentially life-changing for many.

No doubt the project is in its early days, but it has the potential to do an incredible amount of good — and hopefully without running into conflicts of interest, as the free internet project from Facebook did.

https://googleindia.blogspot.com/2016/09/google-for-india-making-our-products.html

IHS Markit: 90% of Mobile Operators Surveyed Have Deployed Small Cells

By Stéphane Téral, senior research director, mobile infrastructure and carrier economics, IHS Markit

Bottom Line

- The vast majority of mobile operators participating in the IHS Markit small cells survey have already deployed small cells, mostly in outdoor metro areas and in homes as residential femtocells

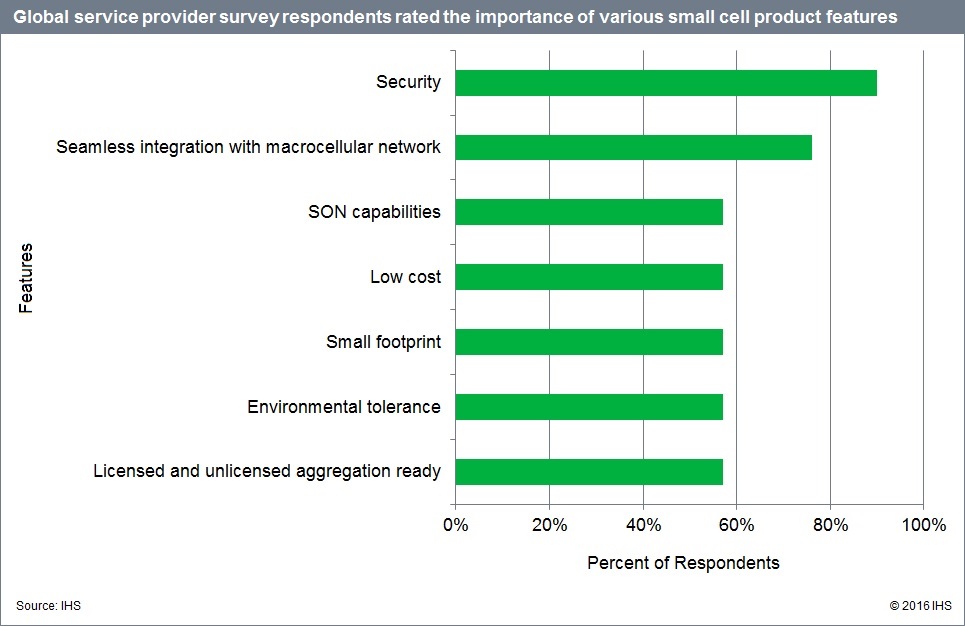

- Security is the number-one small cell product feature, rising from the bottom of respondents’ list five years ago

- Nokia emerged as the top small cell manufacturer among operators surveyed; its acquisition of Alcatel-Lucent has raised the “new” Nokia’s profile in the small cell space

Our Analysis

For our fifth annual small cell strategies study, we asked mobile operators if they have deployed small cells or plan to deploy them. Ninety percent of our respondents—compared to eighty-six percent last year—have already deployed small cells, while the rest plan to do so by the end of 2017. This illustrates the continued importance of adding small cells into existing macrocellular networks to close up coverage gaps and increase capacity. The principal business drivers behind operators’ deployment of small cells are lower subscriber churn and increased revenue.

Survey respondents are mostly connecting small cells to the nearest base station (BTS), but the use of gateways is rising. The bulk of small cells are being deployed in the 1.5GHz to 2.2GHz spectrum, where 3G and Long Term Evolution (LTE) reside.

When it comes to small cell product features, security has consistently risen from the bottom of respondents’ list five years ago to the top-rated feature this year after placing second last year. This is attributable to the increasing frequency and severity of security breaches and network hacking. Generally speaking, a small cell is easier to hack than a macro site that is typically not easily accessible to the public—and this will continue despite their camouflage. However, innovation in small cell installation in public spaces has led to small cells being embedded into furniture in public places such as bus stops in Amsterdam and London.

The integration of Alcatel-Lucent has led to the new Nokia emerging as the top small cell manufacturer among those surveyed, followed by Ericsson, Huawei and SpiderCloud, which continues its ascension. Nokia has the largest installed base, Ericsson leads under evaluation, and in China a flurry of homegrown players are under evaluation as well.

Small Cell Survey Synopsis

For its 30-page small cells study, IHS Markit conducted in-depth interviews with mobile operators worldwide about their small cell buildout plans. The study helps manufacturers and operators better understand the use cases for small cells, the number and types of small cells needed, the frequency bands required, and best-suited architectures.

For information about purchasing this report, contact the sales department at IHS Markit in the Americas at (844) 301-7334 or [email protected]; in Europe, Middle East and Africa (EMEA) at +44 1344 328 300 or [email protected]; or Asia-Pacific (APAC) at +604 291 3600 or [email protected]

Separately, Acute Market Reports announces that it has published a new study Small Cells and Femtocells Market, Shares, Strategies and Forecasts, Worldwide, 2013 to 2019. The 2013 study has 413 pages, 162 tables and figures. Worldwide markets are poised to achieve significant growth as small cell and femtocells and trucks permit users to implement automated driving.

AT&T’s CEO Talks Integrated Communications, 5G, CAPEX Dependency on "Regulator"

Executive Summary:

Randall Stephenson, AT&T chairman and chief executive officer (CEO), said that the company has made a commitment to have a fully integrated suite of assets available. That includes mobile, video, business communications and others.

In the 2019-2020 timeframe, 5G can “intercept the current LTE network” if AT&T has enough spectrum, Stephenson said. “Our target architecture is a wireless network, which may be a viable substitute for fixed broadband (wireline),” he added. Stephenson spoke at the Goldman Sachs 25th Annual Communacopia Conference on September 21st.

Stephenson said the FCC Chairman position has tremendous power and the uncertainty of who will replace current FCC Chair Tom Wheeler will impact capital spending (more details below).

AT&T’s CEO made a lot of other interesting comments during his Q&A with the Goldman moderator. You can listen to an audio replay of the webcast here (Registration Required). T-Mobile USA also presented at this conference.

Separately, AT&T announced that the company’s third-quarter 2016 financial results will be released after the New York Stock Exchange closes on Tuesday, October 25, 2016. AT&T will host a conference call to discuss the results that same day. The company’s earnings release, Investor Briefing and related materials will be available at AT&T Investor Relations.

AT&T CAPEX Dependent on Policies of Next FCC Chairman:

“As you start to make capital investment decisions over the next three or four years, it’s hard to make those decisions until you know who is sitting in that chair, as Chairman of the FCC,” Stephenson said. “That will determine how these services and these investments are regulated in the foreseeable future.”

Stephenson said that a recent court decision to defer any issues related to net neutrality to the FCC illustrates how much power the FCC chairman has in deciding how the overall telecom industry is regulated.

“Why is that so important? It’s important, because what we have just demonstrated is the individual who is Chairman of the FCC kind of has somewhat ultimate rule making authority under this kind of purview.”

Stephenson added that the regulatory environment has created a situation in which AT&T’s board of directors has to consider capital spending plans based on the FCC regulator’s policies.

“I have never been in a situation where my board was more active in discussing capital allocation by virtue of who is the regulator,” Stephenson said. “The regulator is very determinative in terms of how my board thinks about capital investment and capital allocation.”

CenturyLink: Slow Movement to Software-Defined Wide-Area Networks (SD-WANs)

Customer transitions to software-defined wide-area network technology are taking longer than expected, according to CenturyLink, as networks are being upgraded on a piecemeal basis, not all at once. This is no surprise to this author, who has pounded the table that SD-WANs would be deployed very slowly because existing networking infrastructure could not easily be replaced and the lack of a complete set of standards inhibited multi-vendor interoperability.

“What we didn’t understand is most customers are themselves multi-tenant,” CenturyLink’s Eric Nowak said during a SD-WAN panel organized by Light Reading.

When CenturyLink deployed its SD-WAN service at the beginning of the year, it had expectations about how customers would view the importance of cost savings, the role SD-WAN would play on customer networks and more. All those expectations were subverted by reality. CenturyLink expected customers to be interested in getting cost savings from SD-WAN. In reality, customers weren’t so much looking to save money as to get more for the same spending, Nowak said. SD-WAN can provide five to ten times the bandwidth of a conventional WAN with the same security and application performance, for the same money, he said.

CenturyLink thought its customers would transition their entire networks to SD-WAN. In reality, the transition is taking time, with new locations going on the new network and traditional connections remaining in place, Nowak said.

Again, no surprise to us, as we totally rejected Prof. Guru Parulkar’s ONS assertions/demands that classical SDN (as defined by the Open Networking Foundation) must start with a clean slate and deploy all new equipment (a centralized SDN Controller for the Control plane, with L2/L3 data forwarding engines for the Data Plane). Overlay networks (that overlay SDN on the existing network infrastructure) were excluded and referred to as “SDN washing,” along with all the network vendor proprietary schemes that claimed to be “SDN compatible.”

“What we didn’t understand is most customers are themselves multi-tenant,” Nowak said. They have franchises, divisions and their own customers, all of which need to be managed independently.

“We as a service provider have customers with their own end customers,” Nowak said. “We sell to customers that have their own end customers downstream.” SD-WAN needs to be tuned to allow Skype for Business and other applications to work, and that ability — and need — to customize “may be one of the things that limits adoption,” Nowak added.

CenturyLink not only uses multi-tenant capabilities internally, but it also delivers those capabilities as a service to help customers manage their own customers. CenturyLink learned a lot from its first 50 customers. “Those are a lot of lessons in a short amount of time,” said Heavy Reading Senior Analyst Sterling Perrin, who moderated the panel.

Service providers need to “co-opt” SD-WAN into their own offerings, rather than simply reselling SD-WAN “off a catalog,” said Andrew Coward, vice president of strategy at Brocade Communications Systems Inc. Simply reselling SD-WAN is a race to the bottom, as enterprises will group together circuits from multiple providers and go with the one that offers the best price performance, a pattern Perrin tagged “MPLS arbitrage.” In that case, customers benefit by saving money and vendors benefit, but service providers lose out on revenue, Mr. Perrin said. Service providers can avoid this fate by combining SD-WAN into a suite of vCPE and other services (what might those be?).


References from CenturyLink:

http://www.centurylink.com/business/enterprise/services/data-network/sd-wan.html

http://news.centurylink.com/news/centurylink-unveils-fully-managed-sd-wan-service

http://www.centurylink.com/asset/business/enterprise/data-sheet/sd-wan-sellsheet-ss160184.pdf

Related posts:

- IHS Markit: 75% of Carriers Surveyed Have Deployed or Will Deploy SDN This Year

- HOTi Summary Part II. SDN & NFV Impact on Interconnects & Cloud Orchestration Lacking

- IDC Forecasts Strong Growth for Software-Defined WAN As Enterprises Seek to Optimize Their Cloud Strategies

- Level 3: Focus SDN/NFV on Enterprise Issues

- Heavy Reading: Faster Services Driving NFV

- Heavy Reading: SDN Bringing Capex, Opex Savings to Optical Networks

- Masergy Promises Differentiated SD-WAN

IHS Markit SD-WAN Webcast Presentation Slides:

Author’s Note:

On this webcast, Telstra (Australia) was said to be the first carrier to deploy SDN in the WAN. Windstream said that we are in an early “journey” to SDN WAN. AT&T’s Network on Demand was cited as being an SDN WAN, but it’s actually confined to the Management Plane for provisioning and reconfiguration, which the Open Networking Foundation (creators of the OpenFlow “southbound API”) doesn’t address, let alone specify.

AT&T’s Network on Demand offers customers automated provisioning and rate changes for a switched Ethernet WAN. I was told that it used NETCONF and the YANG modeling language for configuration/provisioning, along with some form of network orchestration software (possibly from Tail-f/Cisco).
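To make the NETCONF/YANG idea concrete, here is a minimal sketch of what a rate-change request could look like on the wire. Only the NETCONF base namespace and the `rpc`/`edit-config`/`target`/`config` envelope come from the NETCONF standard (RFC 6241). The service namespace, the `service`, `id` and `bandwidth-mbps` element names, and the function `build_rate_change` are hypothetical and are not taken from AT&T’s actual data model.

```python
import xml.etree.ElementTree as ET

NETCONF_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"  # NETCONF base namespace (RFC 6241)
SERVICE_NS = "urn:example:ethernet-service"              # hypothetical YANG module namespace


def build_rate_change(service_id, mbps, message_id="101"):
    """Build a NETCONF <edit-config> RPC that sets a new rate on one service."""
    rpc = ET.Element(f"{{{NETCONF_NS}}}rpc", {"message-id": message_id})
    edit = ET.SubElement(rpc, f"{{{NETCONF_NS}}}edit-config")
    target = ET.SubElement(edit, f"{{{NETCONF_NS}}}target")
    ET.SubElement(target, f"{{{NETCONF_NS}}}running")   # edit the running datastore
    config = ET.SubElement(edit, f"{{{NETCONF_NS}}}config")
    # Everything below <config> would be defined by a YANG model; these names are made up.
    service = ET.SubElement(config, f"{{{SERVICE_NS}}}service")
    ET.SubElement(service, f"{{{SERVICE_NS}}}id").text = service_id
    ET.SubElement(service, f"{{{SERVICE_NS}}}bandwidth-mbps").text = str(mbps)
    return ET.tostring(rpc, encoding="unicode")


if __name__ == "__main__":
    print(build_rate_change("branch-42", 500))
```

In a real deployment the orchestration layer, not the customer portal, would typically send such a message to the network element over an SSH session.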

Copy/paste from AT&T’s website referenced above:

AT&T Network on Demand is the first of its kind software-defined networking solution in the U.S. It makes network management easier than ever with an online self-service portal. Business customers can now easily add or change services, scale bandwidth to meet their changing needs and manage their network all in near real time. This translates into time savings and less complexity for companies of all sizes.

“We’re working with Cisco to bring the next-generation network technology benefits to our customers. Cisco is an industry leader in networking. Their extensive SDN and NFV capabilities will broaden and enhance our Network on Demand platform,” said Ralph de la Vega, president and CEO, AT&T Mobile and Business Solutions.

We’re continuing to make our network dynamic and adaptive with these new Network on Demand capabilities. This will enable the agility, speed and efficiency customers need.

What Innovation Do Telecom Carriers Need Today?

This is a question the Telecom Council of Silicon Valley asks of global carriers every September – and they answer at the TC3 Summit.

The Council is a bridge between entrepreneurs and telecom giants. It strives to understand the specific technology gaps of the network operators, so they can be clearly communicated to both start-ups and established vendors. Every year, a consolidated report is freely offered to entrepreneurs worldwide, which can help guide them towards possible deals with operators.

The Council reports that draft versions of the carriers’ innovation demands are starting to roll in for this year’s TC3 summit. Many carriers are seeking solutions in the following areas:

- Virtualization, NFV and VNFs, including MANO (Management & Orchestration) solutions

- SDN and SON (Self Organizing Networks) solutions

- IoT platforms, narrowband solutions

- “5G-ready” technologies (in advance of standardized “5G”)

- Media integration. Ways of integrating winning content with the network

- Solutions for handling Video with aplomb

- Big Data and analytics

- Digital Transformations, from the core right to the Customer Experience

- Shifting focus away from wireless access to SDN

- Improving the digital customer experience

Unfortunately for Tier 1 vendors, this does not translate into more revenue, since one of the main goals of virtualization is reduction in the Capital Expenditure (CAPEX) on dedicated hardware solutions. But on the other hand, it does open up the market, allowing new entrants to compete in these newly opened technology gaps. This is good news for entrepreneurs, VCs, and startups.

Editor’s Note: The 9th Annual TC3 Summit in Silicon Valley will take place Sept 28-29, 2016 at the Computer History Museum in Mt. View, CA. TC3 gathers innovation scouts from global telcos and communication vendors and will introduce over 50 innovation solutions to the telecom/ICT industry.

You can learn more about TC3 carrier road maps, innovations presented, sponsors and start-up vendor demos here.

To participate and attend TC3, use our IEEE ComSoc Community reader discount of $200 off: IEEE200 at the Registration web page.

Blog Posts on 2013 TC3 (last one this author attended):

http://viodi.com/2013/09/25/tc3-part-2-likely-strategic-goals-for-next-fcc-chairman-obstacles-ahead/

“Real” 5G Is Far in Future; Service Providers Struggling to Find Compelling Use Cases

By Stéphane Téral, senior research director, mobile infrastructure and carrier economics, IHS Markit

Bottom Line

- 5G will come in two waves: first, sub-6GHz in 2017, followed by the “real” 5G at higher spectrum bands—millimeter wave (mmWave)—in 2020

- Sub-6GHz spectrum is not new: it’s where existing mobile and wireless communications already coexist

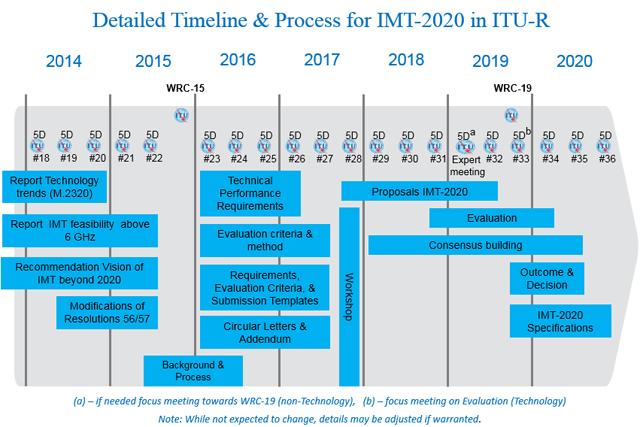

- Service providers in the 5G race are struggling to find compelling use cases that substantially benefit from the proposed International Telecommunication Union (ITU) IMT-2020 standard

Our Analysis

The 5G race is on and raging. The very first discussions about 5G started in 2012, and in 2013 NTT DoCoMo garnered attention by seriously discussing the possibility of rolling out 5G in time for the Tokyo Olympics in 2020.

Soon after, South Korea set the target of showcasing pre-commercial, pre-standard evolutionary 5G (in reality, something like 4.5G) at the PyeongChang Winter Olympics in 2018. Since then, major 5G R&D developments have happened in China, Europe, Japan and South Korea, fueling the hype to a level never seen for 3G and 4G. And finally, Verizon caught the mobile industry off guard last year at CTIA by announcing its aggressive 5G plan, with first commercial deployments in 2017.

So What Is 5G, Anyway?

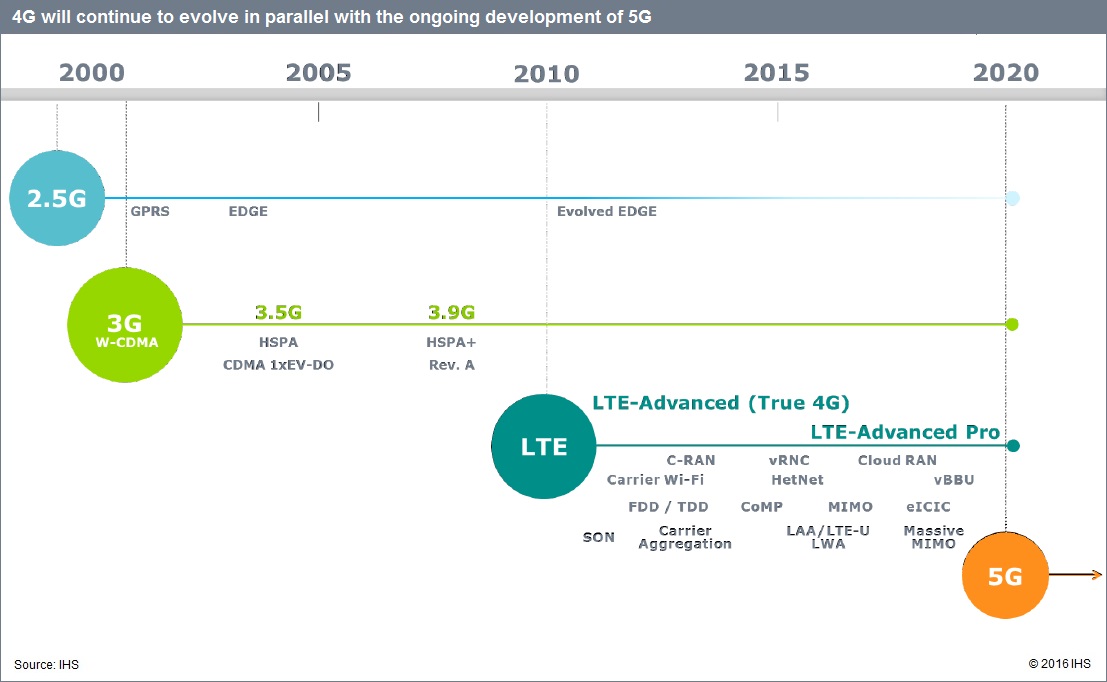

5G means a lot of different things to different people in various industries. Every stakeholder in the game—vendors, mobile operators, 5GPPP, the ITU and others—has its own ideas and thoughts about what 5G should be, and there’s currently a split between two schools of thought. Evolutionary 5G is an extension of current Long Term Evolution (LTE) and LTE-Advanced networks and is backward compatible with all 3GPP technologies. Revolutionary 5G, meanwhile, is a brand-new network architecture that requires a new air interface and radio access technology (RAT), moving away from current cellular designs.

In the end, 5G is the evolution of wireless and mobile technologies to create a new mode of connectivity that will not only provide humans with an enhanced broadband experience, but also address a wide range of industrial applications. What it is not is a continuing development of 4G and current technologies. 5G is already on a path that goes beyond cellular, shaping up as a multilink architecture that enables direct device-to-device communications.

4G is just ramping up, and LTE as we know it is just a 3G transitional technology. As such, 4G will continue to evolve in parallel with the ongoing development of 5G.

“Pre-5G”

Driven by the pre-5G race agenda spearheaded by Verizon, KT, SK Telecom, NTT DOCOMO, KDDI and Softbank, all radio access network (RAN) vendors are developing 5G air interfaces and new RAT ahead of the release of spectrum. But these early 5G systems will be “pre-5G” and operating in sub-6GHz spectrum. And let’s face it: there really isn’t anything new in sub-6GHz spectrum—it’s where existing mobile and wireless communications already coexist. Still, there is simply no choice, as mmWaves are far from being ready for prime time. So as a result, “real” 5G at higher spectrum bands isn’t expected until 2020.

Use Cases? What Use Cases?

The entire mobile ecosystem is trying to figure out the use cases for 5G. Every service provider on this planet that has jumped into the 5G race is trying to find convincing use cases that can greatly benefit from the proposed ITU IMT-2020 enhancements. And although the usual suspects are already in the game, companies such as Google and would-be entrepreneurs may come to the table with disruptive ideas.

Backing up the notion that industry will drive 5G, three-quarters of the global operators that participated in our recent 5G Strategies Global Service Provider Survey rated the Internet of Things (IoT) as the top use case for 5G.

5G Report Synopsis

The 60-page IHS Markit 5G market report examines how 5G technology can evolve in terms of network architecture, topology, waveform and modulation schemes.

For information about purchasing this report, contact the sales department at IHS Markit in the Americas at (844) 301-7334 or [email protected]; in Europe, Middle East and Africa (EMEA) at +44 1344 328 300 or [email protected]; or Asia-Pacific (APAC) at +604 291 3600 or [email protected].

IHS Reference:

https://technology.ihs.com/582964/research-note-the-5g-race-is-on-with-iot-as-the-top-use-case

The International Telecommunication Union (ITU) has outlined three use cases for what is expected to be the 5G standard, as well as applications and industries that could benefit from the new network. Those include enhanced mobile broadband, ultra-reliable and low-latency communications, and massive machine-type communications.

ITU-R 5G References:

https://www.itu.int/dms_pubrec/itu-r/rec/m/R-REC-M.2083-0-201509-I!!PDF-E.pdf

IHS Markit: 75% of Carriers Surveyed Have Deployed or Will Deploy SDN This Year

By Michael Howard, senior research director, carrier networks, IHS Markit

Bottom Line

- Three-quarters of carriers participating in the IHS Markit software-defined networking (SDN) survey say they have already deployed or will deploy SDN in 2016; 100 percent plan to deploy the technology at some point

- The top drivers for service provider SDN investments and deployments are simplification and automation of network and service provisioning, as well as service automation

- Most operators are moving from SDN proof-of-concept (PoC) evaluations to commercial deployments in 2016 and 2017

IHS Analysis

In our fourth annual survey of carrier SDN strategies, it’s clear that service providers around the globe are investing in SDN as part of a larger move to automate their networks and transform not only their networks, but also internal processes, operations and service offerings.

Service providers believe that SDN will fundamentally change telecom network architecture and deliver benefits in service agility, time to revenue, operational efficiency and capex savings.

And these operators want SDN in most parts of their networks. Survey respondents’ top three SDN-targeted network domains for deployment by the end of 2017 are within data centers, between data centers and access for businesses.

Still, carriers are starting carefully with SDN, biting off small chunks of their networks called “contained domains” in which they will explore, trial, test and make initial deployments. Momentum is strong, but it will be many years before bigger parts of networks or entire networks are controlled by SDN, although a few operators are leading the way, including AT&T, Level 3, Colt, Orange Business Services, SK Telecom and Telefónica, among others.

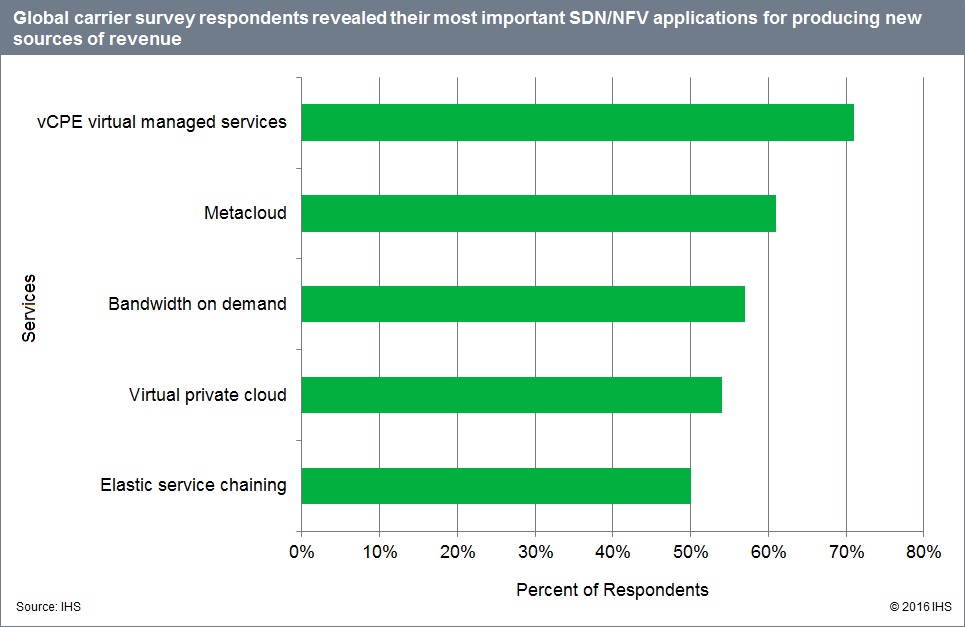

The industry is still in the early stages of a long-term transition to SDN and network functions virtualization (NFV) architected networks. Much continues to be learned as each year passes, and various barriers and drivers have become more prominent as operators inch closer to commercial deployment. In this year’s survey, for example, respondent operators indicated that their top barriers to deployment are the lack of carrier-grade products and integration of virtual networking into their existing physical networks. Nonetheless, they rated virtual customer premises equipment (vCPE) managed services as the top SDN/NFV application for producing new sources of revenue.

Carriers are learning that some avenues are not as fruitful as expected, and telecom equipment manufacturers and software suppliers may well invent new approaches that open up new applications. IHS Markit will conduct the fifth annual SDN survey in 2017, and it will be interesting to see what new issues emerge and which problems get resolved in the additional commercial deployments planned for 2016.

SDN Survey Synopsis

The 22-page IHS Markit SDN strategies survey is based on interviews with purchase-decision makers at 28 service providers around the world that control 53 percent of the world’s telecom capex and are deploying or plan to deploy SDN in their networks in the future. Operators were asked about their strategies and timing for SDN, including deployment drivers and barriers, target use cases, applications and more.

How to Buy this Report

For information about purchasing this report, contact the sales department at IHS Markit in the Americas at (844) 301-7334 or [email protected]; in Europe, Middle East and Africa (EMEA) at +44 1344 328 300 or [email protected]; or Asia-Pacific (APAC) at +604 291 3600 or [email protected].

Previous IHS SDN Forecast Chart:

Author’s Opinion:

While there may be pockets of SDN deployed in carrier networks this year and next, we maintain it won’t present a large business opportunity for any carrier vendors/suppliers. That’s because each network (and cloud service) provider has its own version of SDN, with none compatible with any others! That in turn reflects a market fragmented by too many versions of SDN and no solid standards for multi-vendor interoperability.

We think the CORD project and mobile CORD have a much better opportunity because vendors are collaborating under various open source consortiums to disaggregate the hardware of network elements and to specify functional groupings and inter-module communications. IMHO, the disaggregation/network equipment virtualization trend is irreversible.

Here are a few references:

CORD: REINVENTING CENTRAL OFFICES FOR EFFICIENCY & AGILITY

Threat of Disaggregated Network Equipment – Part 3

CORD (Central Office Re-architected as a Datacenter): the killer app for SDN & NFV

Highlights of Light Reading’s White Box Strategies for Communications Service Providers (CSPs)

HOTi Summary Part II. Optimal Network Topologies for HPC/DCs; SDN & NFV Impact on Interconnects

Introduction:

Two talks are summarized in this second HOTi summary article. The first examines different topologies for High Performance Computing (HPC) networks with an assumption that Data Center (DC) networks will follow that topology. The second talk was a keynote on the impact of SDN, NFV and other Software Defined WAN/ open networking schemes.

1. Network topologies for large-scale compute centers: It’s the diameter, stupid! Presented by Torsten Hoefler, ETH Zurich

Overview: Torsten discussed the history and design tradeoffs for large-scale topologies used in high-performance computing (HPC). He observed that Cloud Data Centers (DCs) are slowly following HPC attributes due to a variety of similarities, including: more East-West (vs North-South) traffic, the growing demand for low latency, high throughput, and lowest possible cost per node/attached endpoint.

- Torsten stated that optical transceivers are the most expensive equipment used in DCs (I think he meant per attachment or per network port). While high speed copper interconnects are much cheaper, their range is limited to about 3 meters for high bandwidth (e.g. N x Gb/sec) interfaces.

- HPC state of the art topology is known as Dragon Fly, which is based on a collection of hierarchical meshes. It effectively tries to make the HPC network look “fatter.”

- A high-performance, cost-effective network topology called Slim Fly approaches the theoretically optimal (minimized) network diameter. With a smaller-diameter topology, packets traverse fewer cables between nodes and fewer routers/switches are needed, resulting in lower system cost.

- Slim Fly topology was analyzed and compared to both traditional and state-of-the-art networks in Torsten’s HOTi presentation slides.

- Torsten’s analysis shows that Slim Fly has significant advantages over other topologies in: latency, bandwidth, resiliency, cost, and power consumption.

- In particular, Slim Fly has the lowest diameter topology, is 25% “better cost at the same performance” than Dragon Fly and 50% better than a Fat Tree topology (which is very similar to a Clos network).

- Furthermore, Slim Fly is resilient, has the lowest latency, and provides full global bandwidth throughout the network.

- Here’s a Slim Fly topology illustration supplied by Torsten via post-conference email:

- Torsten strongly believes that the Slim Fly topology will be used in large cloud resident Data Centers as their requirements and scale seem to be following those of HPC.

- Conclusion: Slim Fly enables constructing cost effective and highly resilient DC and HPC networks that offer low latency and high bandwidth under different workloads.
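To illustrate why diameter matters, the sketch below computes the diameter (the worst-case number of hops between any two switches) of two small 10-node graphs using breadth-first search. This is not the Slim Fly construction from Torsten’s talk. The Petersen graph is used here only as a convenient textbook example of a diameter-2 graph that meets the Moore bound, and all function names are illustrative.

```python
from collections import deque


def diameter(adj):
    """Longest shortest-path hop count over all node pairs (graph must be connected)."""
    worst = 0
    for src in adj:
        dist = {src: 0}
        queue = deque([src])
        while queue:                      # plain breadth-first search from src
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        worst = max(worst, max(dist.values()))
    return worst


def ring(n):
    """n switches, each cabled to its two neighbours."""
    return {i: [(i - 1) % n, (i + 1) % n] for i in range(n)}


def petersen():
    """10 switches with 3 links each: outer 5-cycle, spokes, inner pentagram."""
    adj = {i: [] for i in range(10)}
    for i in range(5):
        for a, b in [(i, (i + 1) % 5), (i, i + 5), (5 + i, 5 + (i + 2) % 5)]:
            adj[a].append(b)
            adj[b].append(a)
    return adj


def moore_bound(degree, diam):
    """Upper bound on node count for a given switch degree and diameter."""
    return 1 + degree * sum((degree - 1) ** i for i in range(diam))


if __name__ == "__main__":
    print("ring(10) diameter:", diameter(ring(10)))
    print("petersen diameter:", diameter(petersen()))
    print("Moore bound (degree 3, diameter 2):", moore_bound(3, 2))
```

With one extra port per switch, the worst-case path drops from five hops to two, which is the kind of trade-off between router radix and diameter that the talk was about.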

Slim Fly and Dragon Fly References:

https://htor.inf.ethz.ch/publications/img/sf_sc_slides_website.pdf

http://htor.inf.ethz.ch/publications/index.php?pub=251

http://htor.inf.ethz.ch/publications/index.php?pub=187

https://www.computer.org/csdl/mags/mi/2009/01/mmi2009010033-abs.html (Dragon Fly)

2. Software-Defined Everything (SDe) and the Implications for Interconnects, by Roy Chua, SDx Central

Overview: Software-defined networking (SDN), software-defined storage, and the all encompassing software-defined infrastructure have generated a tremendous amount of interest the past few years. Roy described the emerging trends, hot topics and the impact SDe will have on interconnect technology.

Roy addressed the critical question of why interconnects (see Note 1) matter. He said that improvements are needed in many areas, such as:

- Embedded memory performance gap vs CPU (getting worse)

- CPU-to-CPU communications

- VM-to-VM and container-to-container

It’s hoped and expected that SDx will help improve cost-performance in each of the above areas.

Note 1. In this context, “interconnects” are used to describe the connections between IT equipment (e.g. compute, storage, networking, management) within a Data Center (DC) or HPC center. This is to be distinguished from DC Interconnect (DCI) which refers to the networking of two or more different DCs. When the DC is operated by the same company, fiber is leased or purchased to interconnect the DCs in a private backbone network (e.g. Google).

When DCs operated by different companies (e.g. Cloud Service Provider DCs, Content Provider DCs or Enterprise DCs) need to be interconnected, a third party is used via ISP peering, colocation, cloud exchange (e.g. Equinix), or Content Delivery Networks (e.g. Akamai).

SDx Attributes and Hot Topics:

- SDx provides: agility, mobility, scalability, flexibility (especially for configuration and turning up/down IT resources quickly).

- Other SDx attributes include: visibility, availability, readability, security and manageability.

- SDx hot topics include: SDN and NFV (Network Function Virtualization) in both DCs and WANs, policy-driven networking, and application-driven networking (well-accepted APIs are urgently needed!)

Important NFV Projects:

- Virtualization across a service provider’s network (via virtual appliances running on compute servers, rather than physical appliances/specialized network equipment)

- CORD (Central Office Re-architected as a Data center)

- 5G Infrastructure (what is 5G?)

Note: Roy didn’t mention the Virtualized Packet Core for LTE networks, which network equipment vendors like Ericsson, Cisco and Affirmed Networks are building for wireless network operators.

SDx for Cloud Service Providers may address:

- Containers

- Software Defined Storage

- Software Defined Security

- Converged Infrastructure

Other potential areas for use of SDx:

- Server to server/ToR switch communications: orchestration, buffer management, convergence, service chaining

- Inter DC WAN communications (mostly proprietary to Google, Amazon, Facebook, Microsoft, Alibaba, Tencent, and other large cloud service providers)

- Software Defined WAN [SD-WAN is a specific application of SDN technology applied to WAN connections, which are used to connect enterprise networks – including branch offices and data centers – over large geographic distances.]

- Bandwidth on Demand in a WAN or Cloud computing service (XaaS)

- Bandwidth calendaring (changing bandwidth based on time of day)

- XaaS (Anything as a Service, including Infrastructure, Platform, and Applications/Software)

- 5G (much talk, but no formal definition or specs)

- Internet of Things (IoT)
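
The bandwidth calendaring item in the list above (changing bandwidth based on time of day) can be pictured with a minimal sketch. The time windows, rates and the `scheduled_bandwidth` helper are all hypothetical and chosen only for illustration; Roy's talk did not describe a specific scheduling scheme.

```python
from datetime import time

# Hypothetical calendar: (start_hour, end_hour, Mb/s). Values are illustrative only.
CALENDAR = [
    (0, 6, 10_000),   # overnight window, e.g. bulk backups between sites
    (6, 18, 2_000),   # business hours
    (18, 24, 5_000),  # evening replication
]


def scheduled_bandwidth(now: time) -> int:
    """Return the bandwidth (Mb/s) that the calendar assigns to the time `now`."""
    for start_hour, end_hour, mbps in CALENDAR:
        if start_hour <= now.hour < end_hour:
            return mbps
    raise ValueError("calendar does not cover this hour")


if __name__ == "__main__":
    for t in (time(3, 0), time(12, 30), time(21, 15)):
        print(t, scheduled_bandwidth(t), "Mb/s")
```

In an SDx setting, a controller would presumably push the returned rate to the WAN or cloud service at each window boundary, rather than an operator changing it by hand.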

NFV Deployment and Issues:

Roy said, “There are a lot of unsolved problems with NFV which have to be worked out.” Contrary to a report by IHS Markit that claims most network operators would deploy NFV next year, Roy cautioned that there will be limited NFV deployments in 2017 with “different flavors of service chaining” and mostly via single vendor solutions.

–>This author strongly agrees with Roy!

Open Networking Specifications Noted:

- OpenFlow® v1.4 from the Open Networking Foundation (ONF) is the “southbound” API from the Control plane to the Data Forwarding plane in classical SDN. OpenFlow® allows direct access to and manipulation of the Data Forwarding plane of network devices such as switches and routers, both physical and virtual (hypervisor-based).

[Note that the very popular network virtualization/overlay model of SDN does not use OpenFlow.]

- P4 is an open source programming language designed to allow programming of packet forwarding dataplanes. In particular, P4 programs specify how a switch processes packets. P4 is suitable for describing everything from high-performance forwarding ASICs to software switches. In contrast to a general purpose language such as C or Python, P4 is a domain-specific language with a number of constructs optimized around network data forwarding.

- Switch Abstraction Interface (SAI) from the Open Compute Project: Microsoft, Dell, Facebook, Broadcom, Intel, Mellanox are the co-authors of the final spec (v2) which was accepted by the OCP in July 2015.
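
To make “how a switch processes packets” more concrete, here is a minimal sketch of a match-action table, the kind of construct a dataplane language such as P4 is built around. It is written in Python rather than P4, and the `LpmTable` class, the prefixes and the action tuples are hypothetical names used only for illustration.

```python
import ipaddress


class LpmTable:
    """A longest-prefix-match table that maps IPv4 prefixes to actions."""

    def __init__(self, default_action):
        self.entries = []  # list of (network, action) pairs
        self.default_action = default_action

    def add(self, prefix, action):
        """Install an entry: packets whose destination falls in `prefix` get `action`."""
        self.entries.append((ipaddress.ip_network(prefix), action))

    def lookup(self, dst):
        """Return the action of the most specific prefix containing `dst`."""
        addr = ipaddress.ip_address(dst)
        matches = [(net.prefixlen, action) for net, action in self.entries if addr in net]
        if not matches:
            return self.default_action
        return max(matches, key=lambda m: m[0])[1]


# Hypothetical forwarding state: the action is ("forward", egress_port) or ("drop",).
table = LpmTable(default_action=("drop",))
table.add("10.0.0.0/8", ("forward", 1))
table.add("10.1.0.0/16", ("forward", 2))

if __name__ == "__main__":
    for dst in ("10.1.2.3", "10.2.0.1", "192.168.0.1"):
        print(dst, table.lookup(dst))
```

In the classical SDN model described above, a controller would populate such a table through a southbound API, while the switch only performs the lookups.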

Roy’s Conclusions:

- SDx is an important approach/mindset to the building of today’s and tomorrow’s IT infrastructure and business applications.

- Its requirement of agility, flexibility and scalability drives the need for higher-speed, higher-capacity, lower latency, lower cost, greater reliability interconnects at all scales (CPU-memory, CPU-CPU, CPU-network/storage, VM to VM, machine to machine, rack to rack, data center to data center).

- Interconnect technology enables new architectures for SDx infrastructure and innovations at the interconnect level can impact or flip architectural designs (faster networks help drive cluster-based design, better multi-CPU approaches help vertical scalability on a server).

- The next few years will see key SDx infrastructure themes play out in data centers as well as in the WAN, across enterprises and service providers (SDN and NFV are just the beginning).

Postscript:

I asked Roy to comment on an article titled: Lack of Automated Cloud Tools Hampers IT Teams. Here’s an excerpt:

“While most organizations are likely to increase their investments in the cloud over the next five years, IT departments currently struggle with a lack of automated cloud apps and infrastructure tools, according to a recent survey from Logicworks. The resulting report, “Roadblocks to Cloud Success,” reveals that the vast majority of IT decision-makers are confident that their tech staffers are prepared to address the challenges of managing cloud resources. However, they also feel that their leadership underestimates the time and cost required to oversee cloud resources. Without more cloud automation, IT is devoting at least 17 hours a week on cloud maintenance. Currently, concerns about security and budget, along with a lack of expertise among staff members, is preventing more automation.”

One would think that SDx, with its promise and potential to deliver agility, flexibility, zero touch provisioning, seamless (re-)configuration, scalability, orchestration, etc., could be effectively used to automate cloud infrastructure and apps. When might that happen?

Roy replied via email: “Interesting article—my short immediate reaction is that we’re going through a transitionary period and managing the number of cloud resources can bring its own nightmares, plus the management tools haven’t quite caught up yet to the rapid innovation across cloud infrastructures.”

Thanks Roy!

HOT Interconnects (HOTi) Summary- Part I. Building Large Scale Data Centers

Introduction:

The annual IEEE Hot Interconnects conference was held August 24-25, 2016 in Santa Clara, CA with several ½ day tutorials on August 25th. We review selected presentations in a series of conference summary articles. In this Part I. piece, we focus on design considerations for large scale Data Centers (DCs) that are operated by Cloud Service Providers (CSPs). If there is reader demand (via email requests to this author: [email protected]), we’ll generate a summary of the tutorial on Data Center Interconnection (DCI) methodologies.

Building Large Scale Data Centers: Cloud Network Design Best Practices, Ariel Hendel, Broadcom Limited

In his invited HOTi talk, Ariel examined the network design principles of large scale Data Centers designed and used by CSPs. He later followed up with additional information via email and phone conversations with this author. See Acknowledgment at the end of this article.

It’s important to note that this presentation was focused on intra-DC communications and NOT inter-DC or the cloud network access (e.g. type of WAN/Metro links) between cloud service provider DC and the customer premises WAN/metro access router.

Mr. Hendel’s key points:

- The challenges faced by designers of large DCs include: distributed applications, multi-tenancy, number and types of virtual machines (VMs), containers [1], large volumes of East-West (server-to-server) traffic, delivering low cost per endpoint, choice of centralized (e.g. SDN) or distributed (conventional hop by hop) Control plane for routing/path selection, power, cabling, cooling, satisfying large scale and incremental deployment.

Note 1. Containers are a solution to the problem of how to get software to run reliably when moved from one computing environment to another. This could be from a developer’s laptop to a test environment, from a staging environment into production and perhaps from a physical machine in a data center to a virtual machine in a private or public cloud.

- Most large-scale Data Centers have adopted a “scale-out” network model. This model is analogous to the “scale-out” server model for which the compute infrastructure is one size fits all. The network scale-out model is a good fit for the distributed software programming model used in contemporary cloud based compute servers.

- Distributed applications are the norm for public cloud deployments as application scale tends to exceed the capacity of any multi-processor based IT equipment in a large DC.

- The distributed applications are decomposed and deployed across multiple physical (or virtual) servers in the DC, which introduces network demands for intra-application communications.

- This programming and deployment model is evolving to include parallel software clusters, micro-services, and machine learning clusters. All of these have ramifications for the corresponding network attributes.

- The cloud infrastructure further requires that multiple distributed applications coexist on the same server infrastructure and network infrastructure.

- The network design goal is a large-scale network that satisfies the workload requirements of cloud-distributed applications. This task is ideally accomplished by factoring in the knowledge of cloud operators regarding their own workloads.

- Post talk add-on: L3 (Network layer) routing has replaced L2 multi-port bridging in large scale DC networks, regardless of the type of Control plane (centralized/distributed) or routing protocol used at L3 (in many cases it’s BGP). Routing starts at the TOR and the subnets are no larger than a single rack. Therefore, there is no need for the L2 Spanning Tree protocol, as each subnet comprises only a single switch. There is absolute consensus on intra DC networks operating at L3, as opposed to HPC fabrics (like InfiniBand and Omni-Path) which are L2 islands.

- Other DC design considerations include: North-South WAN Entry Points, Route Summarization, dealing with Traffic Hot Spots (and Congestion), Fault Tolerance, enabling Converged Networks, Instrumentation for monitoring, measuring and quantifying traffic, and Ethernet PMD sublayer choices for line rate (speed) and physical media type.
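
The point above that routing starts at the TOR and that subnets are no larger than a single rack can be sketched as an address plan. This is only an illustration: the `10.0.0.0/16` POD prefix, the rack count, the servers per rack and the `rack_subnets` helper are assumptions, not figures given for this part of the talk.

```python
import ipaddress


def rack_subnets(pod_prefix: str, racks: int, hosts_per_rack: int):
    """Split a POD prefix into one IPv4 subnet per rack, each just large enough for the rack."""
    pod = ipaddress.ip_network(pod_prefix)
    # Host bits needed to hold the servers plus the network and broadcast addresses.
    host_bits = (hosts_per_rack + 2 - 1).bit_length()
    subnets = list(pod.subnets(new_prefix=32 - host_bits))
    if len(subnets) < racks:
        raise ValueError("POD prefix is too small for the requested number of racks")
    return subnets[:racks]


if __name__ == "__main__":
    # Hypothetical POD: 32 racks with 40 servers each.
    plan = rack_subnets("10.0.0.0/16", racks=32, hosts_per_rack=40)
    for rack, subnet in enumerate(plan[:3]):
        print(f"rack {rack}: {subnet}")
```

Because each subnet terminates at one TOR, that switch is the only L2 device in its subnet, and everything above it is routed. That is why Spanning Tree is unnecessary in this design.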

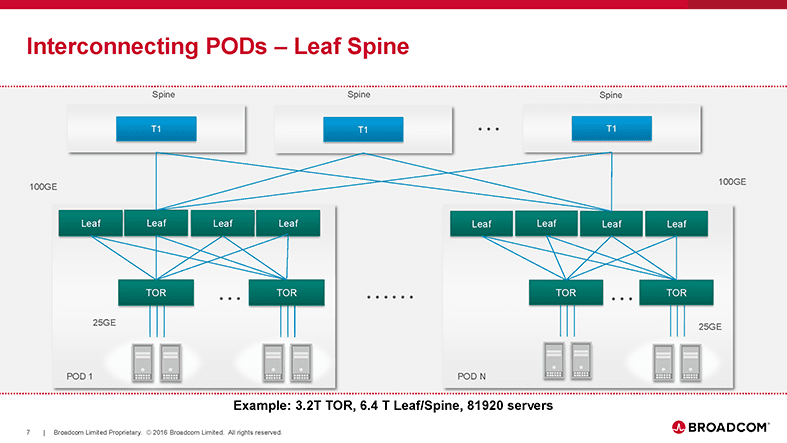

The concept of a DC POD [2] was introduced to illustrate a sub-network of IP/Ethernet switches and compute servers. It consists of Leaf switches which are fully mesh connected via 100GE to Top of Rack (TOR) switches, each of which connects to many compute servers in the same rack via 25GE copper.

Note 2. PODs provide a unit of incremental deployment of compute resources whose chronological order is independent of the applications they eventually host. PODs can also be the physical grouping of two adjacent tiers in the topology so that such tiers can be deployed with sets of switches that are in close physical proximity and with short average and maximum length cable runs. In the diagrams shown, the adjacent tiers are Leaf and TOR plus the compute servers below each TOR (in the same rack).

Author’s Note for clarification: Most storage area networks use Fibre Channel or InfiniBand (rather than Ethernet) for connectivity between storage server and storage switch fabric or between fabrics. Ariel showed Ethernet used for storage interconnects in his presentation for consistency. He later wrote this clarifying comment:

“The storage network part (of the DC) differs across cloud operators. Some have file servers connected to the IP/Ethernet network using NAS protocols, some have small SANs with a scale of a rack or a storage array behind the storage servers providing the block access, and others have just DAS on the servers and distributed file systems over the network. Block storage is done at a small scale (rack or storage array) using various technologies. It’s scalable over the DC network as NAS or distributed file systems.”

In the POD example given in Ariel’s talk, the physical characteristics were: 3.2T TOR, 6.4T Leaf, 100G uplinks, 32 racks, 1280 servers. Ariel indicated there were port speed increases ongoing to provide optimal “speeds and feeds” with no increase in the fiber/cabling plant. In particular:

- Compute servers are evolving from 10GE to 25GE interfaces (25GE uses the same number of 3m copper or fiber lanes as 10GE). That results in a 2.5x Higher Server Bandwidth Efficiency.

- Storage equipment (see note above about interface type for storage networks) is moving from 40GE to 50GE connectivity, where 50GE uses half as many copper or fiber lanes as 40GE. That results in 2x Storage Node Connectivity, 25% More Bandwidth Per Node and 50% Fewer Cabling Elements versus 40GE.

- Switch Fabrics are upgrading from 40GE to 100GE backbone links, which provides a 2.5x performance increase for every link in a 3-tier leaf-spine. There’s also Better Load Distribution and Lower Application Latency, which results in an effective 15x increase in Fabric Bandwidth Capacity.
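
The per-link ratios quoted in the three items above follow from simple arithmetic on lane counts and per-lane rates. The sketch below assumes the lane configurations commonly used for these Ethernet speeds (for example, 40GE as 4 x 10G and 50GE as 2 x 25G). Those lane counts are an assumption on my part, not something stated in the talk. The effective 15x fabric figure depends on load distribution and is not derived here.

```python
# Assumed lane configurations: speed name -> (number of lanes, Gb/s per lane).
LANES = {
    "10GE": (1, 10),
    "25GE": (1, 25),
    "40GE": (4, 10),
    "50GE": (2, 25),
    "100GE": (4, 25),
}


def link_speed(name: str) -> int:
    """Total link speed in Gb/s."""
    lanes, rate = LANES[name]
    return lanes * rate


def compare(old: str, new: str) -> dict:
    """Bandwidth and lane-count ratios when moving from `old` to `new`."""
    return {
        "bandwidth_ratio": link_speed(new) / link_speed(old),
        "lane_ratio": LANES[new][0] / LANES[old][0],
    }


if __name__ == "__main__":
    for old, new in (("10GE", "25GE"), ("40GE", "50GE"), ("40GE", "100GE")):
        print(f"{old} -> {new}: {compare(old, new)}")
```

Under these assumptions, 10GE to 25GE gives 2.5x the bandwidth on the same lanes, 40GE to 50GE gives 25% more bandwidth on half the lanes, and 40GE to 100GE gives 2.5x per link.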

Ariel said there is broad consensus on the use of the “Leaf-Spine” topology model for large DC networks. In the figure below, Ariel shows how to interconnect the PODs in a leaf-spine configuration.

In a post conference phone conversation, Ariel added: “A very wide range of network sizes can be built with a single tier of spines connecting PODs. One such network might have as many as 80K endpoints that are 25GE or 50GE attached. More than half of the network cost, in this case, is associated with the optical transceivers.”

Ariel noted in his talk that the largest DCs have two spine tiers which are (or soon will be) interconnected using 100GE. The figure below illustrates how to scale the spines via either a single tier or dual spine topology.

“It is important to note that each two tier Spine shown in the above figure can be packaged inside a chassis or can be cabled out of discrete boxes within a rack. Some of the many ramifications of a choice between Spine chassis vs. Fixed boxes were covered during the talk.”

Ariel’s Acute Observations:

• Cost does not increase significantly with increase in endpoints.

• Endpoint speed and ToR oversubscription determine the cost/performance tradeoffs even in an all-100G network.

• A 100G network is “future proofed” for incremental or wholesale transition to 50G and 100G endpoints.

• A 50G endpoint is not prohibitively more expensive than a 25G endpoint and is comparable at 2.5:1 oversubscription of network traffic.
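
The ToR oversubscription ratio in these observations is the total server-facing bandwidth divided by the total uplink bandwidth. The sketch below uses the POD example from the talk (32 racks, 1280 servers, 100G uplinks, 3.2T TOR). The count of 8 uplinks per TOR is an assumption, chosen because it reproduces the 2.5:1 ratio quoted for 50G endpoints. The actual uplink count is not given in this summary.

```python
def oversubscription(servers_per_rack: int, server_gbps: int,
                     uplinks: int, uplink_gbps: int = 100) -> float:
    """Ratio of server-facing (downlink) bandwidth to uplink bandwidth at one TOR."""
    return (servers_per_rack * server_gbps) / (uplinks * uplink_gbps)


SERVERS_PER_RACK = 1280 // 32   # 40 servers per rack, from the POD example
ASSUMED_UPLINKS = 8             # hypothetical; not stated in the talk summary
TOR_CAPACITY_GBPS = 3200        # 3.2T TOR from the POD example

if __name__ == "__main__":
    for endpoint_gbps in (25, 50):
        ratio = oversubscription(SERVERS_PER_RACK, endpoint_gbps, ASSUMED_UPLINKS)
        used = SERVERS_PER_RACK * endpoint_gbps + ASSUMED_UPLINKS * 100
        print(f"{endpoint_gbps}G endpoints: {ratio}:1 oversubscription, "
              f"{used} of {TOR_CAPACITY_GBPS} Gb/s TOR capacity used")
```

With these numbers, 25G endpoints give 1.25:1 and 50G endpoints give 2.5:1, and both fit within the 3.2T TOR.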

Our final figure is an illustration of the best way to connect to Metro and WAN – Edge PODs:

“When connecting all external traffic through the TOR or Leaf tier traffic is treated as any other regular endpoint traffic, it is dispersed, load balanced, and failed over completely by the internal Data Center network.”

Author’s Note: The type(s) of WAN-Metro links at the bottom of the figure are dependent on the arrangement between a cloud service and network provider. For example:

- AT&T’s NetBond lets a partner CSP’s PoP be one or more endpoints of a customer’s IP VPN.

- Equinix Internet Exchange™ allows networks including ISPs, Content Providers and Enterprises to easily and effectively exchange Internet traffic.

- Amazon Virtual Private Cloud (VPC) lets the customer provision a logically isolated section of the Amazon Web Services (AWS) cloud, where one can launch AWS resources in a virtual network that the customer defines.

Other Topics:

Mr. Hendel also mapped network best practices down to salient (Broadcom) switch and NIC silicon at the architecture and feature level. Congestion avoidance and management were discussed along with DC visibility and control. These are all very interesting, but that level of detail is beyond the scope of this HOTi conference summary.

Add-on: Important Attribute of Leaf-Spine Network Topology:

Such networks can provide “rearrangeable non-blocking” capacity by using traffic dispersion. In this case, traffic flows between sources and destinations attached to the leaf stage can follow multiple alternate paths. To the extent that such flows are placed optimally on these multiple paths, the network is non-blocking (it has sufficient capacity for all the flows).
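
One common way to disperse traffic over the alternate paths is to hash each flow's identifying fields and use the result to pick a path, in the style of ECMP. The sketch below is an assumption about mechanism, since this summary does not say which dispersion scheme the talk had in mind. The `pick_path` helper and the sample flows are hypothetical.

```python
import hashlib
from collections import Counter


def pick_path(flow: tuple, num_paths: int) -> int:
    """Map a flow (src IP, dst IP, protocol, src port, dst port) to one of `num_paths` paths."""
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    # All packets of a flow hash to the same path, which preserves packet order.
    return int.from_bytes(digest[:4], "big") % num_paths


# Hypothetical workload: 1000 TCP flows between two servers, differing in source port.
flows = [("10.0.0.1", "10.0.1.1", 6, 40000 + i, 443) for i in range(1000)]
load = Counter(pick_path(flow, 4) for flow in flows)

if __name__ == "__main__":
    for path in sorted(load):
        print(f"path {path}: {load[path]} flows")
```

Hashing spreads flows statistically rather than optimally, so a few large flows can still collide on one path. That is the gap between hash-based dispersion and the optimal placement that the non-blocking property assumes.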

Add-on: Other Network Topologies:

Actual network topologies for a given DC network are more involved than this initial concept. Their design depends on the following DC requirements:

1. Large (or ultra-large) Scale

2. Flat/low cost per endpoint attachment

3. Support for heavy E-W traffic patterns

4. Provide a logical as opposed to a physical network abstraction

5. Incrementally deployable (support a “pay as you grow” model)

Up Next:

We’ll review two invited talks on 1] DC network topology choices analyzed (it’s the diameter, stupid!) and 2] the impact of SDN and NFV on DC interconnects. An article summarizing the tutorial on Inter-DC networking (mostly software oriented) will depend on reader interest expressed via emails to this author.

Acknowledgment: The author sincerely thanks Ariel Hendel for his diligent review, critique and suggested changes/clarifications for this article.