Google Cloud Platform

Google Expands Cloud Network Infrastructure via 3 New Undersea Cables & 5 New Regions

Google plans to build three new undersea cables in 2019 to support its Google Cloud customers. The company plans to co-commission the Hong Kong-Guam (HK-G) cable system as part of a consortium. Ben Treynor Sloss, vice president of Google’s cloud platform, announced the three undersea cables and five new regions in a blog post.

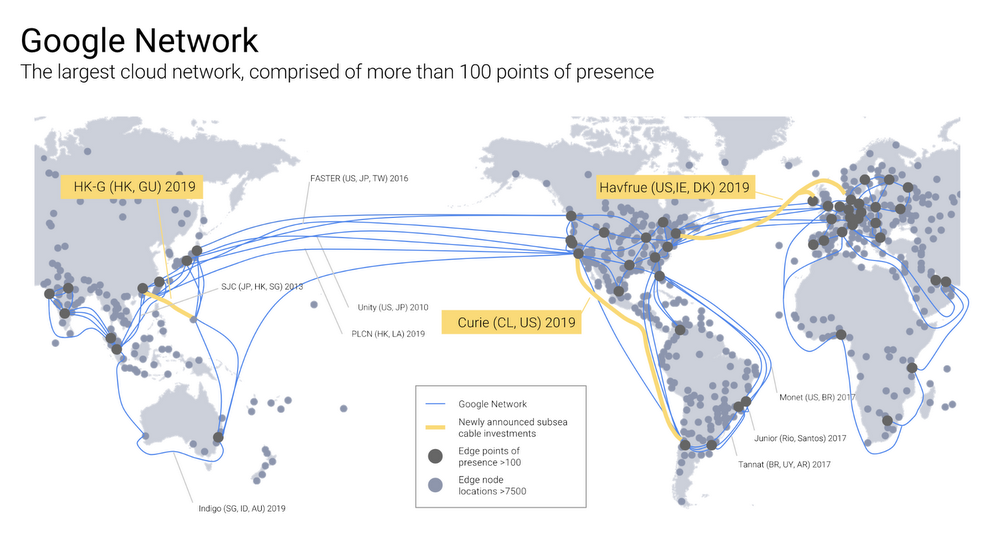

HK-G will be an extension of the SEA-US cable system and will have a design capacity of more than 48Tbps. It is being built by RTI-C and NEC. Google said that together with Indigo and other cable systems, HK-G will create multiple scalable, diverse paths to Australia. In addition, Google plans to commission Curie, a private cable connecting Chile to Los Angeles, and Havfrue, a consortium cable connecting the US to Denmark and Ireland, as shown in the figure below.

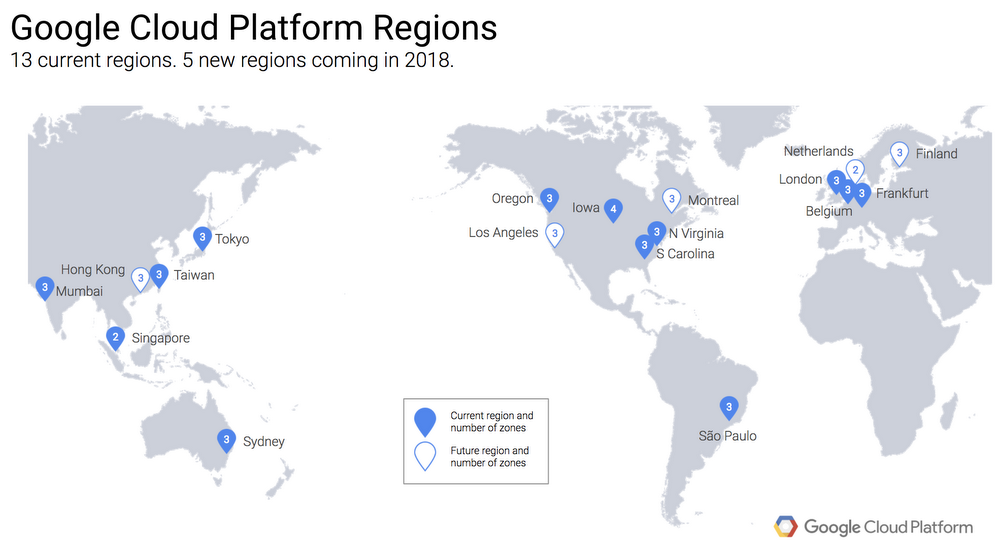

Late last year, Google also revealed plans to open a Google Cloud Platform region in Hong Kong in 2018 to join its recently launched Mumbai, Sydney, and Singapore regions, as well as Taiwan and Tokyo.

Of the five new Google Cloud regions, the Netherlands and Montreal will come online in the first quarter of 2018. The other three, in Los Angeles, Finland, and Hong Kong, will come online later this year. The Hong Kong region will be designed for high availability, launching with three zones to protect against service disruptions. The HK-G cable will provide improved network capacity for the cloud region. Google says it is not done yet and that more regions will be announced.

In an earlier announcement last week, Google revealed that it has implemented a compile-time patch for its Google Cloud Platform infrastructure to address the major CPU security flaw disclosed by Google’s Project Zero zero-day vulnerability unit at the beginning of this year.

Diane Greene, who heads up Google’s cloud unit, often marvels at how much her company invests in Google Cloud infrastructure. It’s with good reason. Over the past three years since Greene came on board, the company has spent a whopping $30 billion beefing up the infrastructure.

Google has direct investment in 11 cables, including those planned or under construction. The three cables highlighted in yellow in the figure are the ones announced in Google’s blog post. (In addition to these 11 cables where Google has direct ownership, the company also leases capacity on numerous additional submarine cables.)

In the referenced Google blog post, Mr Treynor Sloss wrote:

At Google, we’ve spent $30 billion improving our infrastructure over three years, and we’re not done yet. From data centers to subsea cables, Google is committed to connecting the world and serving our Cloud customers, and today we’re excited to announce that we’re adding three new submarine cables, and five new regions.

We’ll open our Netherlands and Montreal regions in the first quarter of 2018, followed by Los Angeles, Finland, and Hong Kong – with more to come. Then, in 2019 we’ll commission three subsea cables: Curie, a private cable connecting Chile to Los Angeles; Havfrue, a consortium cable connecting the U.S. to Denmark and Ireland; and the Hong Kong-Guam Cable system (HK-G), a consortium cable interconnecting major subsea communication hubs in Asia.

Together, these investments further improve our network—the world’s largest—which by some accounts delivers 25% of worldwide internet traffic …

Simply put, it wouldn’t be possible to deliver products like Machine Learning Engine, Spanner, BigQuery and other Google Cloud Platform and G Suite services at the quality of service users expect without the Google network. Our cable systems provide the speed, capacity and reliability Google is known for worldwide, and at Google Cloud, our customers are able to make use of the same network infrastructure that powers Google’s own services.

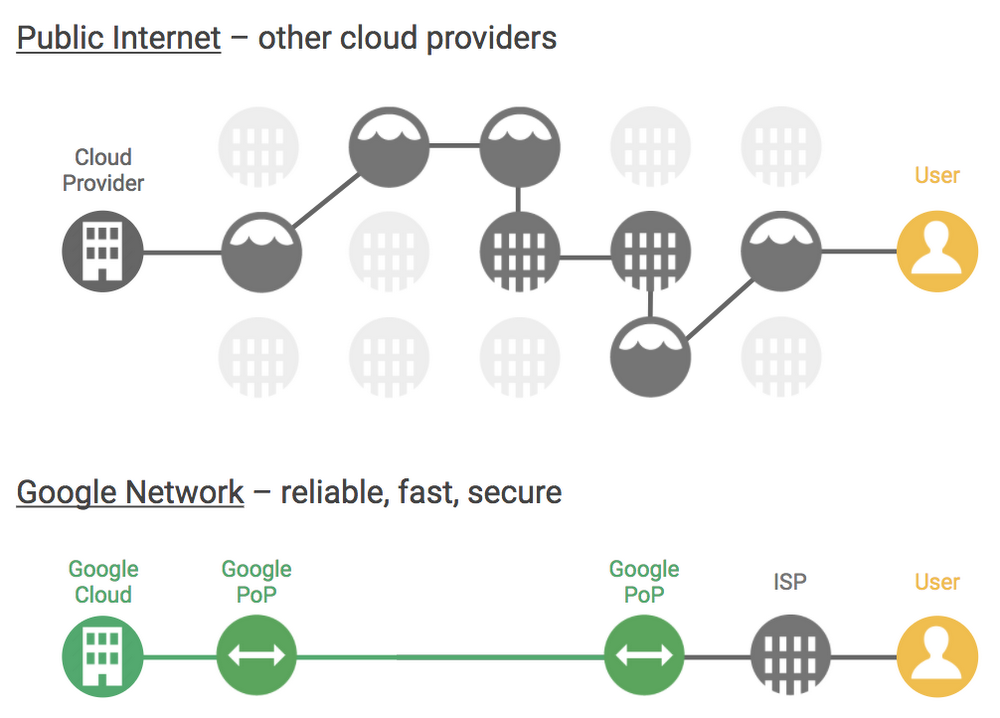

While we haven’t hastened the speed of light, we have built a superior cloud network as a result of the well-provisioned direct paths between our cloud and end-users, as shown in the figure below.

According to Treynor Sloss: “The Google network offers better reliability, speed and security performance as compared with the nondeterministic performance of the public internet, or other cloud networks. The Google network consists of fiber optic links and subsea cables between 100+ points of presence, 7500+ edge node locations, 90+ Cloud CDN locations, 47 dedicated interconnect locations and 15 GCP regions.”


Reference: