AT&T Yahoo/Verizon accounts? Analysis of AT&T’s Business & Earnings, by David Dixon of FBR Group

What will AT&T do about its AT&T-Yahoo accounts (including email) now that the Yahoo Internet portal has been sold to Verizon? AT&T-Yahoo email has been deteriorating for months. Will AT&T now offer AT&T-Verizon email accounts?

Growing International Focus Bears Fruit, but Ongoing Domestic Weakness Is a Concern, David Dixon of FBR

Summary

AT&T reported largely in-line 2Q16 financial results and mixed subscriber results. Its focus on growing the newly acquired Mexican operations appeared to bear fruit, helping offset ongoing U.S. weakness. AT&T added 742,000 wireless net subscribers in Mexico in the quarter, and total Mexican wireless subscribers now approach 10M.

AT&T’s Mexican LTE deployment now serves 65M PoPs and is expected to reach 75M by year-end. While the LatAm macroeconomic environment is expected to remain challenging, we expect the upcoming Rio Olympics to build on recent subscriber momentum. In the U.S., total 2Q net adds of 1.36M were meaningfully below consensus of 1.78M. Management attributed net add weakness to the planned shutdown of the 2G network and a network outage caused by an equipment vendor. We believe the domestic net add weakness is more likely the result of a shift in focus toward profitability rather than subscriber count. With the LTE network buildout complete and AT&T diversifying into Mexico to alleviate churn pressures, further changes to smartphone upgrade eligibility are likely.

We think AT&T is challenged by T-Mobile USA (TMUS) for the low-end customer and is focused on maintaining share of the more profitable enterprise market as well as improving ARPU in the interim, ahead of TMUS facing capacity challenges in the coming one to two years, based on our vendor checks.

Key Points:

■ 2Q16 results recap: Including DIRECTV results, consolidated revenues increased 22.7% YOY to $40.5B, modestly below the consensus estimate of $40.6B and higher than our estimate of $40.0B. By segment: business solutions delivered revenues of $17.6B, entertainment and Internet services had revenues of $12.7B, consumer mobility had revenues of $8.2B, and international had revenues of $1.8B.

Adjusted EBITDA of $13.4B was just shy of our Street-comparable estimate of $13.6B. EBITDA margins declined 30 bps YOY to 33.0%. Consumer mobility postpaid net adds were 72,000, prepaid net adds were 365,000, and postpaid churn was 1.09%.

■ Further expansion of GigaPower: GigaPower now reaches 2.2M homes and is expected to increase to 2.6M or more by year-end. We believe AT&T’s fiber investment is a negative NPV decision but necessary to continue over many years until it equates to cable because a decision not to invest would drive a more negative NPV outcome due to the superior competitive positioning from the cable sector. Combined with a shift toward software-defined network (SDN), the expanded fiber footprint afforded by GigaPower should benefit T as the Internet of Things (IoT) becomes more prevalent and as 5G is deployed. We believe AT&T is the market leader in the burgeoning IoT segment, which was largely standardized this year and should underpin faster growth rates.

■ FY16 guidance maintained. Management reiterated prior issued guidance of double-digit consolidated revenue growth, adjusted EPS growth of mid single digits or better, stable consolidated margins, and $22.0B in capex.

■ We believe AT&T will benefit from the Olympics through cross-selling DIRECTV products in Latin America, partially offset by continued competition in the U.S. carrier market.

Q&A:

1. Can AT&T drive earnings growth? 6 to 18 months

Smartphone activations remain significant. Strategic initiatives with Samsung and Google, coupled with support of the Windows Phone ecosystem by MSFT, NOK, and other OEMs, are key to lower wireless subsidy pressure, but it is early days. We think AT&T will continue to consider pricing action to augment growth once the LTE network build is complete, but competitive intensity is likely to increase in FY16, so this will prove difficult absent consolidation or until T-Mobile US becomes spectrum challenged, which we think is still one year away and a function of T-Mobile US commitment to continue network investment.

2. How will AT&T fare in the changing wireless landscape in 2016 and beyond?

Our strategic concerns for AT&T include (1) the Apple eSIM impact, should Apple be successful in striking wholesale agreements; (2) the Google MVNO impact, which could strip the company of the last bastion of connectivity revenue; and (3) a Wi-Fi first network from Comcast, coupled with a wholesale agreement with a carrier, which would enable a competitor and increase pricing pressure.

3. Does AT&T have a sustainable spectrum advantage compared with other carriers?

AT&T is behind Verizon in spectrum and out of spectrum in numerous major markets, according to our vendor checks. However, with additional density investment, it is reasonably well positioned to benefit from the combination of coverage layer (700 MHz and 850 MHz) and capacity layer (1,700 MHz, 1,900 MHz, and soon-to-be-confirmed 2,300 MHz) spectrum and will focus on LTE and LTE Advanced, as well as refarming 850 MHz/1,900 MHz spectrum for additional coverage and capacity. Yet this nonstandard LTE band will cost more capex and take longer to implement. In the short run, aggressive cell splitting is expected, and metro Wi-Fi and small-cell solutions with economic backhaul solutions are becoming available, allowing for greater surgical reuse of existing spectrum. Sprint’s differentiation through Clearwire spectrum in FY16 is only likely to modestly affect AT&T relative to Verizon. Furthermore, with 70% to 80% of wireless data traffic on Wi-Fi and only 20% of capacity utilized, this suggests a focus in this area to manage data usage growth.

Conclusions:

We expect the wireless segment to continue to be challenged by a resurgent T-Mobile USA. We are less bullish on near-term improvements in capex intensity, due to cultural challenges associated with the much-needed migration to software-centric networks, coupled with the need to upgrade its fiber plant aggressively to improve its competitive positioning and lay the foundation for efficiency improvement.

ONF and ON.Lab advance SDN with scalable and customizable Leaf-spine fabric solution

The networking world continues to advance open source software solutions. The ONOS® Project, a software defined networking (SDN) operating system for service providers, today announced availability of an ONOS-based leaf-spine fabric solution for data centers and service provider Central Offices. This is the first L2/L3 leaf-spine fabric on bare-metal switching hardware that is built with SDN principles and open source software. It is a result of a productive collaboration between the Open Networking Foundation (ONF), a non-profit organization dedicated to accelerating the adoption of open SDN, and Open Networking Lab (ON.Lab), a nonprofit building open source communities to realize the full potential of SDN and network functions virtualization (NFV).

Leaf-spine fabric technology is ideal for any enterprise in which the underlay fabric plays a key role in the infrastructure or service provider network operator interested in utilizing a hardware-based, modern data center fabric that leverages white boxes and open source for easy customization. Service providers and vendors are beginning to field test the fabric as part of the Central Office Re-architected as a Data Center (CORD™) initiative from ON.Lab.

This generic leaf-spine fabric can be used and customized for any of the following architectures:

1) Webscale datacenter

2) Enterprise datacenter

3) Telco datacenter

4) Telco central offices undergoing transformation to data centers

Project CORD:

The last use case reflects CORD (Central Office Rearchitected as a Datacenter), which is a major use case of the fabric and strongly supported by AT&T and other telecom industry titans.

“SDN and NFV are speeding up innovation, as seen in projects like CORD,” said Tom Anschutz, Distinguished Member of Technical Staff at AT&T. “These technologies create systems that do not need new standards to function and enable new behaviors in software, which decreases development time. Faster development time leads to rapid innovation, something the industry needs to continue satisfying data-hungry customers.”

CORD combines SDN, NFV and cloud with commodity infrastructure and open building blocks to bring in data center economies of scale and cloud-like agility to service providers. The CORD solution POC spans the Telco Central Office, access including Gigabit-capable Passive Optical Networks (GPON) and G.fast as well as home/enterprise customer premises equipment (CPE).

“Underlay and overlay fabrics represent important ONOS use cases,” said Guru Parulkar, executive director of ON.Lab. “ONOS Project, in partnership with ONF and several active ONOS collaborators, have delivered a highly flexible, economical and scalable solution as software defined data centers gain momentum. This is also a great example of collaboration between ONF and ON.Lab to create open source solutions for the industry.”

Fabric Enables a Truly Integrated SDN-Based Solution

The fabric is built on Edgecore bare-metal hardware from the Open Compute Project (OCP) and switch software, including OCP’s Open Network Linux and Broadcom’s OpenFlow Data Plane Abstraction (OF-DPA) API. It leverages earlier work from ONF’s Atrium and SPRING-OPEN projects that implemented segment-routed networks using SDN.

“This is an L2/L3 SDN fabric with state-of-the-art white box hardware and completely open source switch, controller and application software,” said Saurav Das, principal architect at the Open Networking Foundation. “No traditional networking protocols found in commercial solutions are used inside the fabric, which instead uses an integrated SDN-based solution. In the past, the promise of SDN has fallen short in delivering HA, scale and performance. The fabric control application design, together with ONOS, and the full use of modern merchant silicon ASICs solve all of these problems. In addition, the use of SDN affords a high degree of customizability for rapidly introducing newer features in the fabric. CORD’s usage of the fabric is an excellent example of such customization.”

Besides bridging and routing, new features include:

- HA and scale support with multi-instance ONOS controller cluster (previous work was with single-controller)

- Integration with vRouter for interfacing with traditional networks using BGP and/or OSPF

- Integration with CORD’s vOLT for residential access network support

- Support for IPv4 Multicast forwarding for residential IPTV streams in CORD

- Integration with CORD’s XOS-based orchestration framework

“Edgecore open network switches are deployed as the underlay network in leaf-spine topologies for data center and telecom infrastructures,” said Jeff Catlin, vice president technology, Edgecore Networks. “ONOS and the fabric control application design, with ONOS and open network switches, provides a more highly scalable and resilient network fabric. The deployment of OCP switches in open SDN deployments is critical for accelerating the continued development of the open SDN ecosystem.”

The number of service providers, developers and networking professionals experimenting with and contributing to the fabric continues to grow. Ciena Blue Planet, a network specialist and ONOS partner, is adding test cases for build and deployment automation. Service providers, enterprises and individual developers interested in getting involved may download, test, contribute new features and initiate lab and production trials to make the fabric solution even stronger. To join the active discussion, send an email to [email protected].

Whether an individual or an organization, all are encouraged to get involved with the growing open source CORD community. Both the ONOS and CORD Projects are hosted by The Linux Foundation, the nonprofit advancing professional open source management for mass collaboration.

IHS: Network Functions Virtualization Market Worth Over $15 Billion by 2020

The global network functions virtualization (NFV) market, which includes NFV hardware, software and services, will be worth $15.5 billion by 2020, according to the latest NFV Hardware, Software, and Services Annual Market Report from IHS Markit (Nasdaq: INFO), a world leader in critical information, analytics and solutions.

“Between 2015 and 2020, the service provider NFV market will grow at a robust compound annual growth rate (CAGR) of 42 percent — from $2.7 billion in 2015 to $15.5 billion in 2020,” said Michael Howard, Senior Research Director, Carrier Networks, at IHS Markit.
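As a quick sanity check, the growth rate quoted above can be recomputed from the two endpoint figures. The sketch below is illustrative arithmetic only; the function name `cagr` is our own and does not come from the IHS Markit report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted by IHS Markit: $2.7B in 2015 growing to $15.5B in 2020.
nfv_cagr = cagr(2.7, 15.5, 2020 - 2015)
print(f"Implied NFV CAGR: {nfv_cagr:.1%}")
```

The result comes out at roughly 42 percent, consistent with the figure in the quote.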

NFV represents the shift in the telecom industry from a hardware focus to a software focus, with operators making much larger investments in software than in server, storage and switch hardware.

“NFV software will comprise 80 percent of the $15.5 billion total in 2020 — or around $4 out of every $5 spent on NFV,” said Howard.

In 2020, only 11 percent of NFV revenue will be attributable to new software and services. Sixteen percent will come from network functions virtualization infrastructure (NFVI) — servers, storage, switches — acquired in place of purpose-built network hardware such as routers, deep packet inspection (DPI) products and firewalls. The remaining 73 percent will originate from existing market segments, primarily virtual network functions (VNFs).
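Applying the percentage shares above to the $15.5 billion forecast for 2020 gives approximate dollar figures for each category. This is simple arithmetic on the quoted numbers, not additional data from the report, and the category labels are abbreviated here for illustration.

```python
TOTAL_2020_BILLIONS = 15.5  # IHS Markit forecast for the 2020 NFV market

# Shares of 2020 NFV revenue as quoted in the text (percent).
shares_percent = {
    "new software and services": 11,
    "NFVI (servers, storage, switches)": 16,
    "existing segments (primarily VNFs)": 73,
}

# The three shares should account for the whole market.
assert sum(shares_percent.values()) == 100

# Convert each percentage share into billions of dollars.
revenue_billions = {
    name: TOTAL_2020_BILLIONS * pct / 100 for name, pct in shares_percent.items()
}

for name, value in revenue_billions.items():
    print(f"{name}: ${value:.2f}B")
```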

The main value of NFV is in its applications, that is, the VNFs. “The service provider NFV market is larger than the software-defined networking (SDN) market throughout our forecast horizon of 2020, due to the pre-existing and ongoing VNF market,” said Howard. “We expect strong growth in NFV markets in 2020 and beyond, driven by service providers’ desire for service agility and operational efficiency.”

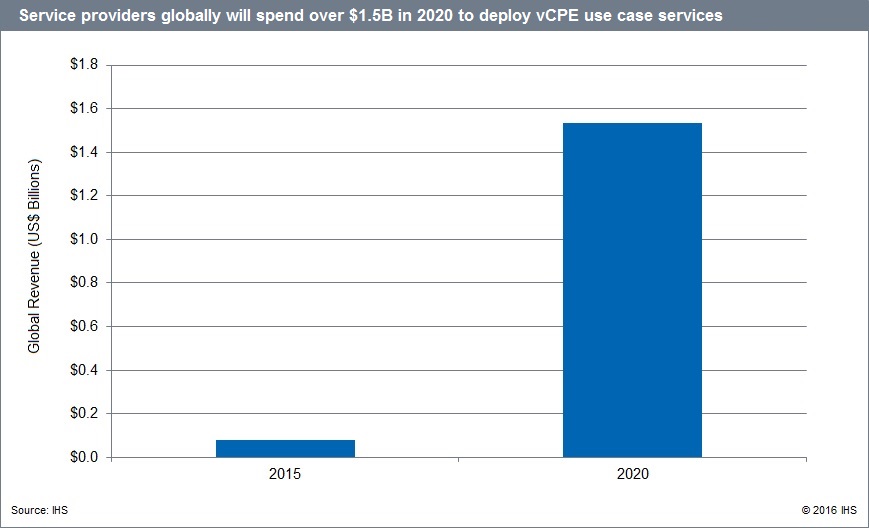

This latest NFV report tracks, for the first time, what service providers spend on NFV hardware and software to deliver software-based services to customers via the consumer virtual customer premises equipment (vCPE) and enterprise vCPE use cases. The vCPE use case opportunity, including spending to deploy consumer and enterprise services, is forecast to reach over $1.5 billion worldwide by 2020.

About the NFV Report

The 2016 IHS Markit NFV Hardware, Software, and Services Annual Market Report tracks service provider NFV hardware, including NFVI servers, storage and switches; NFV software split out by NFV MANO and VNF software, including vRouters and the software-only functions of mobile core and EPC, IMS, PCRF and DPI, security, video CDN and other VNF software; NFV services (outsourced services for NFV projects); and NFV use cases. The report provides worldwide and regional market size, forecasts through 2020, in-depth analysis and trends.

About IHS Markit (www.ihsmarkit.com)

IHS Markit (Nasdaq: INFO) is a world leader in critical information, analytics and solutions for the major industries and markets that drive economies worldwide. The company delivers next-generation information, analytics and solutions to customers in business, finance and government, improving their operational efficiency and providing deep insights that lead to well-informed, confident decisions. IHS Markit has more than 50,000 key business and government customers, including 80 percent of the Fortune Global 500 and the world’s leading financial institutions. Headquartered in London, IHS Markit is committed to sustainable, profitable growth.

Here’s an excerpt of an SDxCentral report on SDN and NFV market size:

SDN and NFV Market Size Report 2015 Executive Summary

The adoption of software-defined networking (SDN), network function virtualization (NFV), network virtualization (NV), and white box (gray box and brite box) initiatives has dramatically changed the networking landscape over the past few years. In response to the demand for data around the size and scope of the market, SDxCentral has updated its 2013 Market Sizing Report to reflect the realities of today. This 2015 Market Sizing Report not only provides market data, but also includes a comprehensive analysis of the SDx Networking trends, influences and use cases that are impacting uptake. Our updated model predicts the combined revenue of SDN, NFV and other next-generation networking initiatives (SDx Networking) will exceed $105B per annum by 2020. The rapid emergence of SDxN as an influencer in network purchasing decisions will dramatically impact what has been a largely stagnant network vendor competitive landscape. It’s expected these technologies will influence almost 80% of the purchasing decisions associated with all networking revenue by the end of 2020, affecting virtually every customer segment within the networking space. New SDxN use cases could open the market to new entrants for the first time in more than a decade. Combined with the trend towards more converged IT organizations, SDx Networking could enable a new type of networking decision maker who has less experience with and weaker ties to incumbent vendors. Cloud providers have been leading the charge into the new network paradigm and are expected to remain the largest consumer of new network technologies through 2020.

FCC’s Forward Auction Attracts 62 Bidders with August 16th Start

The FCC announced that a total of 62 bidders will take part in the forward auction portion of its spectrum incentive auction. Only 62 of the 99 bidders with approved applications submitted qualifying upfront payments by the July 1 deadline. Those bidders will move on to participate in the forward auction starting on August 16, the FCC said.

Note: I reviewed the FCC reverse auction and noted the importance of the forward auction in a recent Viodi.com article.

Among those who have been deemed “qualified” through the successful submission of a complete application and advance payment are telecommunication industry giants like AT&T, Verizon, T-Mobile, U.S. Cellular and Comcast.

Dish Network also appears to be participating under the name ParkerB.com Wireless, which shares a Colorado address with Dish. The company did not previously respond to a request for comment on its participation.

The WSJ reported today that former Facebook executive Chamath Palihapitiya won’t be executing his plan to participate in the auction after all. Though the name of the dark horse player’s firm, Social Capital, was among bidders with initially approved applications, it appears the firm failed to submit an upfront payment in time to qualify for participation.

Twenty of the final bidders have qualified for small business bidding credits and 28 bidders have qualified for rural service provider bidding credits.

A clock phase practice auction for qualified bidders will be held July 25 through July 29, the FCC said. A final clock phase mock auction will be held just before the start of the auction, on August 11 and 12.

At the start of the auction, the FCC said bids must be sufficient to cover the minimum opening bid amounts set by the commission previously. Clock prices for subsequent bidding rounds will be set by adding a fixed percentage of between one and 15 percent to the previous round’s price, with the initial increment set at five percent, the FCC said. The increments may be changed during the auction on a PEA-by-PEA or category-by-category basis as stages and rounds continue, but any changes will be announced in advance, the commission said.
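The clock-price rule described above can be sketched as follows. This is a simplified illustration, not the FCC's auction software: the function name, the $1,000,000 opening price, and the assumption that the increment stays constant across rounds are all hypothetical.

```python
MIN_INCREMENT = 0.01      # lower bound stated by the FCC (1 percent)
MAX_INCREMENT = 0.15      # upper bound stated by the FCC (15 percent)
INITIAL_INCREMENT = 0.05  # initial increment stated by the FCC (5 percent)


def next_clock_price(previous_price: float, increment: float = INITIAL_INCREMENT) -> float:
    """Return the next round's clock price: previous price plus a fixed percentage."""
    if not MIN_INCREMENT <= increment <= MAX_INCREMENT:
        raise ValueError("increment must be between 1 and 15 percent")
    return previous_price * (1 + increment)


# Hypothetical opening price for one product; real minimum opening bids are set by the FCC.
price = 1_000_000.0
for round_number in range(2, 5):
    price = next_clock_price(price)
    print(f"Round {round_number} clock price: ${price:,.0f}")
```

Because the FCC may change the increment per PEA or per category, a fuller model would pass a different `increment` for each product and round.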

Bidders in the forward auction will be up against a towering $86.4 billion clearing target price for 126 MHz of broadcaster spectrum – a figure analysts have been skeptical bidders will reach.

“The bar has been set high for the wireless industry,” Dan Hays, principal of PwC’s Strategy&, said after the target was announced. “Given the current financial profile of the industry, this number may have to move significantly lower. A second stage of the reverse auction later this year is likely. Indeed, we could well see the proceedings drag on into early 2017 before coming to a final conclusion.”

References:

https://www.fcc.gov/about-fcc/fcc-initiatives/incentive-auctions/primer-bidders

https://www.wirelessweek.com/news/2016/07/fcc-announces-62-bidders-mid-august-start-forward-auction

http://www.nasdaq.com/article/us-fccs-incentive-auction-witnesses-stepped-up-bidding-cm650949

Telco Coalition Opposes FCC Business Broadband Proposal: ‘flying blind’ into a bad decision

Frontier Communications, CenturyLink, Cincinnati Bell, Consolidated Communications, and FairPoint have partnered to create the “Invest in Broadband for America” coalition to oppose the Federal Communications Commission’s proposed special-access market reforms. The coalition says the FCC’s proposed reforms of the special access market are questionable and sweeping.

Special access is the business services market of dedicated connections used by businesses and institutions to deliver voice and data traffic, including for ATMs and credit card transactions. It also includes wireless backhaul services, so the move also ties to the FCC’s promotion of wireless broadband. The FCC has rebranded special access as “BDS” (business data services).

“First and foremost, it is crucial that the FCC get the data right on competition in the marketplace before flying blindly into a major policy decision,” said John Jones, CenturyLink SVP, in announcing the new effort. “Important decisions are best made with accurate data. What is at stake here is the definition of ‘competition.’ That definition will have a substantial impact on the telecom and national economy for years to come. Think investment, suppliers, employees, infrastructure and contractors.”

The FCC BDS proposal is being questioned by cable operators providing BDS competition.

References:

https://www.fcc.gov/document/fcc-releases-business-data-services-order-and-further-notice

Worldwide Radio Access Network (RAN) hits $5B; China’s booming RAN & IoT Markets

Outlook and Analysis:

Operators worldwide are deploying Centralized (C)-RAN architectures to bring simplicity, agility, flexibility and efficiency to mobile networks. This is nowhere more apparent than in Asia Pacific, where China has overtaken Japan in C-RAN deployments.

In 2015, China Mobile and China Unicom finally managed to convince their government that C-RAN was the right way to go to achieve energy savings of about 60 percent and have a green footprint. The Chinese operators have aggressive 5G research and development initiatives that include C-RAN. China Mobile has deployed C-RAN in three cities and one province, which led to a rollout of 2,000 sites.

A few C-RAN deployments also happened in EMEA (Europe, Middle East, Africa) and Canada in 2015.

In 2016, IHS expects more C-RAN rollouts in China, along with a few in CALA (Caribbean and Latin America) and Europe, where operators will start to adopt C-RAN as a means to expand their existing footprints by connecting remote radio heads (RRHs) to BBUs of installed base transceiver stations (BTSs).

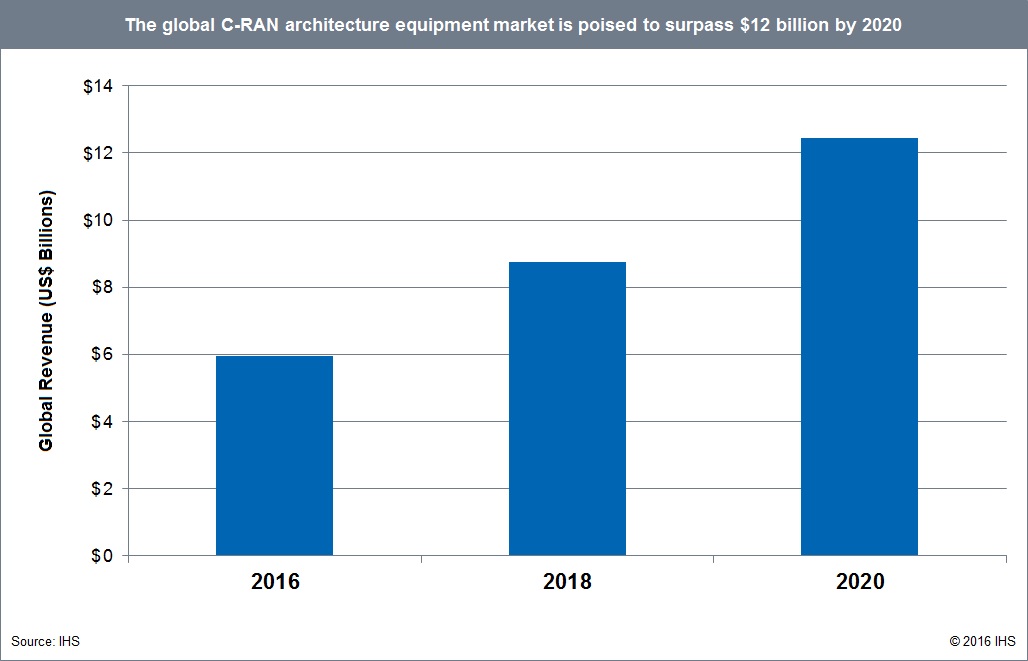

Worldwide C-RAN architecture revenue is forecast to top $12 billion in 2020—a compound annual growth rate (CAGR) of 19.8 percent from 2015 to 2020—primarily driven by RAN expansion in the West and the beginning of 5G rollouts in Japan and South Korea.

Key Points:

- Global centralized RAN (C-RAN) architecture equipment revenue reached $5 billion in 2015, a gain of 18 percent from $4.3 billion in 2014

- The market remains mainly driven by Asia Pacific—with China now in the driver’s seat thanks to China Mobile’s and China Unicom’s C-RAN deployments, followed by Japan with NTT DOCOMO’s “advanced C-RAN architecture” rollout

- The most expensive component in a C-RAN architecture, baseband units (BBUs) accounted for the bulk of C-RAN revenue in 2015

- Nokia Networks once again led the C-RAN market in 2015, and Ericsson remained in second place—but Huawei gained 4 percentage points year-over-year to move into third place, displacing Samsung

C-RAN Report Synopsis:

The IHS Technology C-RAN Architecture Equipment Annual Market Report tracks centralized RAN equipment revenue and units, covering the migration from decomposed to C-RAN architectures including baseband units (BBUs) and remote radio heads (RRHs). The report provides worldwide and regional market size, vendor market share, forecasts through 2020, analysis and trends, and includes a C-RAN Deployment Tracker that shows C-RAN announcements and major developments by region, country, service provider and strategy. Vendors tracked include Alcatel-Lucent, Eblink, Ericsson, Fujitsu, Huawei, NEC, Nokia Networks, Samsung and ZTE.

To buy the IHS report in the Americas, call +1 844 301 7334 or email [email protected]; in Europe, Middle East and Africa (EMEA), call +44 1344 328 300 or email [email protected]; in Asia-Pacific (APAC), call +604 291 3600 or email [email protected].

Separately, New GSMA Report Predicts Chinese IoT Market Will Exceed One Billion Connections by 2020

Underpinned by Licensed Low Power, Wide Area Market

Verizon, KT to work together on 5G standard; US Telecom says FCC could impede 5G Deployment

1. Verizon and KT to collaborate on 5G standard and technology:

Verizon Communications (VZ) and Korea Telecom (KT) will collaborate on development of 5G wireless technology ahead of a planned 2018 demonstration of the proposed standard.

KT Chairman Hwang Chang-gyu noted in a statement: “Global partnership for the 5G standardization is very crucial ahead of its planned commercialization in 2020.”

Until recently, it appeared VZ didn’t agree, as the US carrier had announced 5G trials before ITU-R had even defined what 5G is, and the specs aren’t to be finalized until the end of 2020. VZ inked a partnership with Samsung to make its 5G trials real.

Carriers and manufacturers are making their 5G speed tests public, but until a global standard is established, it is difficult to gauge the accuracy of the 5G speed tests by the companies.

The 5G service is expected to roll out by 2020, but Korea Telecom aims to be ahead of the pack and deliver 5G capabilities by 2018 during the PyeongChang Winter Olympics. The deal between KT and Verizon means that the latter will pool its efforts to push out the next generation of wireless service, which should help KT meet its objective. Keep in mind that Verizon was the first carrier to introduce 4G LTE service in the United States, and it has a good chance of repeating the feat with 5G.

Although 5G remains a debatable subject, a tight partnership of the two large global carriers can make sure that the technical infrastructure is ready quicker than expected.


AT&T to use LTE, Cat-M1 & M2 for IoT Applications

AT&T is moving forward with an LTE future for its cellular Internet of Things (IoT) applications, despite earlier suggestions that the network operator could consider other low-power, wide-area (LPWAN) specifications. The LTE-only decision is consistent with AT&T’s existing LTE-based IoT platform, which we described in an earlier article:

AT&T claims to have the largest share of the connected device market with 19.8M IoT devices or 47% of the U.S. total IoT market in 2013. There are GSM location tracking capabilities in over 100 countries with roaming access in more than 200 countries.

AT&T is a founding member of the Industrial Internet Consortium where over 100 companies are now involved. As part of that effort:

- IBM and AT&T are collaborating on IoT solutions for cities, institutions, and enterprises.

- GE and AT&T are working on remotely controlled industrial machines.

“I think the decision that we have made as a company is that AT&T is going to standardize on the LTE stack as opposed to unlicensed bands,” Mobeen Khan, AVP of AT&T IoT Solutions, at AT&T Mobile and Business Solutions, told Light Reading Wednesday, June 22nd. AT&T has “many reasons” for the decision: The specialized IoT LTE technologies use AT&T’s existing spectrum, are more secure, and can be managed using existing infrastructure. “It has a lot of benefits for our customers,” Khan said.

AT&T is going to standardize on Cat-M1 (a.k.a. LTE-M) for devices like smart meters and wearables. Cat-M1 is optimized to offer a 1Mbit/s connection but with superior battery life compared to the typical 4G smartphone radio chipset. The operator has just approved its first modules for this specification. There will be trials in the fourth quarter.

For even lower-power applications, AT&T will use Cat-M2 modules in units like smoke detectors and networked monitors. Cat-M2 is “still being specced out” but is anticipated to go to kilobits-per-second connection rates to further extend battery life, Khan said. AT&T will test Cat-M2 devices on the network in 2017 and hopes to go commercial early in 2018.

This doesn’t mean that AT&T won’t support any other types of networking for IoT. WiFi, Bluetooth and mesh networking will all be part of the mix. “We’re living in a multi-network world,” Khan said.

References:

http://www.lightreading.com/iot/iot-strategies/atandt-settles-on-lte-for-cellular-iot/d/d-id/724289

http://www.lightreading.com/iot/m2m-platforms/atandt-readies-low-power-lte-for-iot/d/d-id/720150

Energy versus security–the IoT tradeoff:

AT&T and other leading telcos are highlighting the security advantages of using cellular networks in licensed spectrum to connect IoT devices. They point to the benefits of having a SIM card authenticate the device on the network, such as being able to remotely bar devices, where necessary. Without a secure link, IoT applications may be more vulnerable to attacks, such as spoofing, where a fraudulent end device injects false data into the network or a fraudulent access point hijacks the data captured by a device.

AT&T Focus on Smart City Development (part of IoT)

An AT&T executive offered an update on the carrier’s “smart city” program, in which the company is competing against other providers in the Internet of Things space.

‘Secure connectivity’ is the common thread in smart city technologies

Matt Foreman, lead product marketing manager for the smart cities business unit, discussed the differences between selling to governments and enterprises, noting that cities are especially wary of introducing new technologies that could prove risky or insecure. Foreman said:

“AT&T is super excited about this space. Really, secure connectivity is the common thread woven through any smart city technology,” which can range from smart electric and water meters to connected garbage cans, street lights, irrigation systems and even acoustic leak detection.

“All of those are anchored on secure connectivity whether that be LTE, whether that be fiber,” or satellite, Foreman said. “We want to make sure we’re the connectivity provider of choice, taking our best in breed practices and capabilities to help cities solve problems for their citizens.”

He emphasized that, when dealing with government, derisking a deployment is key. “The technology that’s influencing and changing the direction of how cities can operate, interact with their citizens and create that compelling place to live is tried, true and proven. There’s a huge startup ecosystem that we want to solve for and bring into that fold, but at the same time we don’t want cities to think these are new technologies.”

Foreman also highlighted the differences between selling to government and selling to the enterprise. “When you think about the enterprise side versus government, there’s different sales cycles and different procurement considerations. When you think about some of these account teams and relationships that are out there…AT&T is really well positioned to come in and help bubble up decision making and strategy out of the individual departments to a higher level so you get incremental, exponential value.”

More, including video clip, at: http://industrialiot5g.com/20160617/channels/news/att-smart-city-tag17

Separately, AT&T vowed to appeal a federal court decision upholding the FCC’s network neutrality rules

“We have always expected this issue to be decided by the Supreme Court, and we look forward to participating in that appeal,” AT&T Senior Executive Vice President and General Counsel David McAtee said in a statement.

“Today’s ruling is a victory for consumers and innovators who deserve unfettered access to the entire web, and it ensures the internet remains a platform for unparalleled innovation, free expression and economic growth,” FCC Chairman Tom Wheeler said in a statement. “After a decade of debate and legal battles, today’s ruling affirms the Commission’s ability to enforce the strongest possible internet protections – both on fixed and mobile networks – that will ensure the internet remains open, now and in the future.”

Highlights of Ericsson Mobility Report

The latest Ericsson Mobility Report covers the period to 2021. Ericsson has forecast significant growth in a wide range of factors. Some of the highlight figures include:

- Mobile broadband subscriptions: CAGR of 15%

- LTE subscriptions: CAGR of 25%

- Data traffic per smartphone: CAGR of 35%

- Total mobile data traffic: CAGR of 45%
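To put these rates in perspective, a compound annual growth rate can be converted into an overall growth multiple. The sketch below is illustrative only: it assumes a six-year horizon (2015 to 2021), which is an assumption and not a figure stated above, and the function and variable names are hypothetical.

```python
# Convert a CAGR into the total growth multiple over a number of years.
# ASSUMED_YEARS is an assumption (2015 -> 2021); the text only says "to 2021".

def growth_multiple(cagr: float, years: int) -> float:
    """Return the factor by which a quantity grows at `cagr` for `years` years."""
    return (1.0 + cagr) ** years

ASSUMED_YEARS = 6

# CAGR figures as listed in the highlights above.
FORECAST_CAGRS = {
    "Mobile broadband subscriptions": 0.15,
    "LTE subscriptions": 0.25,
    "Data traffic per smartphone": 0.35,
    "Total mobile data traffic": 0.45,
}

for name, rate in FORECAST_CAGRS.items():
    multiple = growth_multiple(rate, ASSUMED_YEARS)
    print(f"{name}: about {multiple:.1f}x over {ASSUMED_YEARS} years")
```

Under that six-year assumption, a 45% CAGR compounds to roughly a ninefold increase in total mobile data traffic, while a 15% CAGR a little more than doubles mobile broadband subscriptions.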

Video to dominate mobile traffic growth:

Ericsson expects video to continue to play a large part in data traffic growth, mainly due to teenagers streaming video. In 2015, video was some 40–55% of total mobile data traffic, depending on the device type, and is forecast to have a CAGR of 55% to 2021. By 2021, Ericsson forecasts that video will account for some 70% of mobile data traffic. As the report notes: “Today’s teens… have no experience of a world without online video streaming.”

To meet such growth, LTE continues to provide fast speeds, with current deployments providing up to 600Mbps (Cat 11), which will grow to 1Gbps LTE (Cat 16) with deployments in 2016, according to Ericsson.

5G to start in 2020:

Looking beyond 4G and the massive growth, Ericsson forecasts that 5G services will commence in 2020 based on ITU IMT2020 standards, and that there will be 150 million 5G subscribers by 2021 led by rollouts in South Korea, Japan, China and the US.

IoT endpoints to overtake mobile phones:

In one of the most eye-catching predictions, Ericsson suggests that the number of IoT connected end points (such as cars, machines, smart meters and consumer tech) will overtake the number of mobile phones in 2018. IoT devices are forecast to grow at a CAGR of 23% over the period. Also worth noting are the connectivity types covered, which include non-cellular IoT connectivity and the various proprietary low-power wide-area (LPWA) systems such as SIGFOX, LoRa and Ingenu. Ericsson forecasts non-cellular IoT to be almost 10 times the cellular IoT by 2021.

VoLTE growth:

Voice over LTE (VoLTE) also features in the report. Ericsson forecasts that the 100 million VoLTE subscriptions at the end of 2015 will increase to 2.3 billion by 2021 — representing over 50% of all LTE subscriptions. In the US, Canada, South Korea and Japan this figure rises to over 80%.
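The VoLTE forecast is given as start and end values and not as a growth rate, so the implied CAGR can be back-calculated. The following is a minimal sketch, assuming the 100 million figure is the end-2015 base and that "by 2021" means six years of growth (the six-year span is an assumption); the function name is hypothetical.

```python
# Back out the compound annual growth rate implied by a start and end value.

def implied_cagr(start: float, end: float, years: float) -> float:
    """Return the CAGR that grows `start` into `end` over `years` years."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end and years must all be positive")
    return (end / start) ** (1.0 / years) - 1.0

# Figures from the paragraph above; the six-year span is an assumption.
volte_2015 = 100e6   # VoLTE subscriptions at the end of 2015
volte_2021 = 2.3e9   # forecast VoLTE subscriptions by 2021
years = 6

rate = implied_cagr(volte_2015, volte_2021, years)
print(f"Implied VoLTE CAGR: {rate:.1%}")
```

On those assumptions the implied rate is a little under 70% per year, well above the 25% CAGR quoted for LTE subscriptions overall.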

The article on managing user experience describes how high traffic load in less than a tenth of the mobile radio cells in metropolitan areas can affect more than half of the user activity over the course of 24 hours.

In the last article, Ericsson discusses the need for global spectrum harmonization to secure early 5G deployments.

Illustrations:

Mobile subscriptions

State of the networks

In analyzing the report, John Okas of Real Wireless wrote:

One of the key conclusions from the report is that managing the user experience is key for network operators and infrastructure providers – and all of the trends highlighted above are making that an increasingly complex challenge. As such, data analytics are increasingly being applied to find the relationship between user experience and network performance statistics. Such an understanding is vital for operators to prioritise network investment as well as keep churn low. As the data from the report shows, operators face many calls on capex and opex as new technology, combined with new use cases (and hopefully more spectrum), gives operators new opportunities as well as new challenges.

Of course, vendors put time and effort into these reports to bring these challenges into sharp focus for the operators, along with whatever solutions the vendor may have to offer. Real Wireless provides deep independent expertise in all of the areas and topics covered in such vendor reports, including LTE, 5G and IoT. We’re involved in the business, technology, regulation and markets, working with all parts of the ecosystem including vendors, operators, regulators and end users. We help bring clarity and understanding to the challenges as well as the opportunities in the wireless world, without bias.