AI Echo Chamber: “Upstream AI” companies’ huge spending fuels profit growth for “Downstream AI” firms

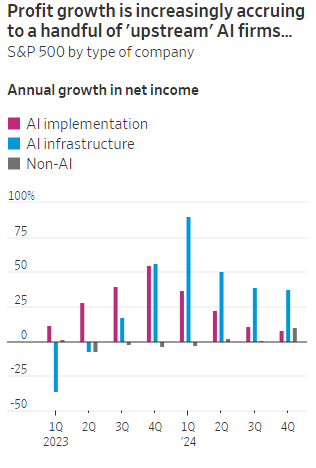

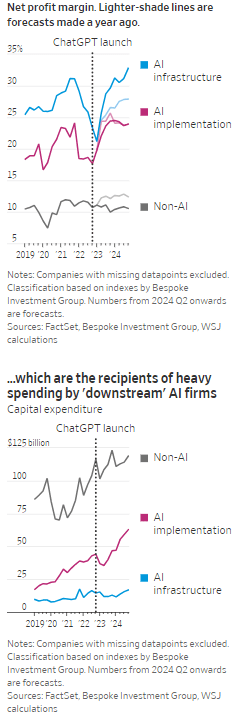

According to the Wall Street Journal, the AI industry has become an “Echo Chamber,” where huge capital spending by the AI infrastructure and application providers has fueled revenue and profit growth for everyone else. Market research firm Bespoke Investment Group recently created baskets of “downstream” and “upstream” AI companies.

- The Downstream group covers “AI implementation”: firms that sell AI development tools, such as the large language models (LLMs) popularized by OpenAI’s ChatGPT since the end of 2022, or that run products that can incorporate them. This group includes Google/Alphabet, Microsoft, Amazon and Meta Platforms (FB), along with IBM, Adobe and Salesforce.

- Higher up the supply chain, the Upstream group comprises the “AI infrastructure” providers, which sell AI chips, applications, data centers and training software. The undisputed leader is Nvidia, which has seen its sales triple in a year, but the group also includes other semiconductor companies, database developer Oracle and data center owners Equinix and Digital Realty.

The Upstream group of companies has posted profit margins far above what analysts expected a year ago. In the second quarter, and pending Nvidia’s results on Aug. 28, Upstream AI members of the S&P 500 are set to have delivered a 50% annual increase in earnings. For the remainder of 2024, they will be increasingly responsible for the profit growth that Wall Street expects from the stock market, even accounting for Intel’s huge problems and restructuring.

It should be noted that the lines between the two groups can be blurry, particularly for giants such as Amazon, Microsoft and Alphabet, which provide both AI implementation (e.g. LLMs) and infrastructure. Their cloud-computing businesses turned these companies into the early winners of the AI craze last year and reported breakneck growth during this latest earnings season. Crucially, it is their role as the ultimate developers of AI applications that has led them to make huge capital expenditures, and those expenditures are responsible for the profit surge in the rest of the ecosystem. So there is a definite trickle-down effect: the big tech players’ AI-directed capex is boosting revenue and profits for the infrastructure companies higher up the supply chain.

As the path to monetizing this technology gets longer and harder, the benefits seem to be increasingly accruing to companies higher up in the supply chain. Meta Platforms Chief Executive Mark Zuckerberg recently said the company’s coming Llama 4 language model will require 10 times as much computing power to train as its predecessor. Were it not for AI, revenues for semiconductor firms would probably have fallen during the second quarter, rather than rising 18%, according to S&P Global.

………………………………………………………………………………………………………………………………………………………..

A paper by researchers from institutions including Cambridge and Oxford reported that the large language models (LLMs) behind some of today’s most exciting AI apps may have been trained on “synthetic data,” that is, data generated by other AI. This raises ethical and quality concerns. If an AI model is trained primarily or even partially on synthetic data, it might produce outputs that lack the richness and reliability of human-generated content. It could be a case of the blind leading the blind, with AI models reinforcing the limitations or biases inherent in the synthetic data they were trained on.

In this paper, the team coined the phrase “model collapse,” claiming that models trained this way will answer user prompts with low-quality outputs. The idea of “model collapse” suggests a sort of unraveling of the machine’s learning capabilities, where it fails to produce outputs with the informative or nuanced characteristics we expect. This poses a serious question for the future of AI development. If AI is increasingly trained on synthetic data, we risk creating echo chambers of misinformation or low-quality responses, leading to less helpful and potentially even misleading systems.
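As a toy illustration of the feedback loop described above (not the experiment from the paper), the Python sketch below repeatedly fits a normal distribution to a small sample drawn from the previous generation's fit. The function name `simulate_collapse`, the sample size, the number of generations and the seed are all assumptions chosen for illustration.

```python
import random
import statistics


def simulate_collapse(generations=200, sample_size=10, seed=0):
    """Refit a normal distribution on its own samples, generation after generation.

    Returns the fitted standard deviation at each generation,
    starting with the "real data" distribution (std = 1.0).
    """
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    mu, sigma = 0.0, 1.0       # generation 0: the original data distribution
    history = [sigma]
    for _ in range(generations):
        # "Synthetic data": samples generated by the current model
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        # "Training": the next model is fitted only to that synthetic data
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)
        history.append(sigma)
    return history


history = simulate_collapse()
print(f"std at generation 0: {history[0]:.3f}")
print(f"std at generation {len(history) - 1}: {history[-1]:.3e}")
```

In this toy setup the fitted spread tends to shrink from one generation to the next, so later "models" cover less and less of the original distribution. Real LLM training is far more complex, so this should be read only as intuition for why training on a model's own output can degrade it.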

……………………………………………………………………………………………………………………………………………

In a recent working paper, Massachusetts Institute of Technology (MIT) economist Daron Acemoglu argued that AI’s knack for easy tasks has led to exaggerated predictions of its power to enhance productivity in hard jobs. He also noted that some of the new tasks created by AI may have negative social value (such as the design of algorithms for online manipulation). Indeed, data from the Census Bureau show that only a small percentage of U.S. companies outside of the information and knowledge sectors are looking to make use of AI.

References:

https://deepgram.com/learn/the-ai-echo-chamber-model-collapse-synthetic-data-risks

https://economics.mit.edu/sites/default/files/2024-04/The%20Simple%20Macroeconomics%20of%20AI.pdf

One thought on “AI Echo Chamber: “Upstream AI” companies’ huge spending fuels profit growth for “Downstream AI” firms”

Big Tech companies are engaged in an artificial-intelligence arms race, each building data centers at a blistering rate. Altogether, the four giants spent $95 billion on capex in the second quarter, and much more than that is in the pipeline.

The companies have been giving annual capex guidance. Microsoft, whose fiscal year ended this quarter, declined to issue a fiscal 2026 projection, but put out a big number—over $30 billion—for the current quarter’s investments. That would see expenses rise by 50% on the year, but Chief Financial Officer Amy Hood cautioned that the growth rate would moderate through the fiscal year.

By contrast, Meta isn’t slowing down. After raising its 2025 capex guidance last quarter and inching it up this past week, Chief Financial Officer Susan Li said on the earnings call that the company “expects to ramp our investments significantly in 2026.” This is exceptional because Meta is the only one from this group that doesn’t operate a cloud to rent out these AI servers; it’s all for its own use.

Amazon is also proceeding full steam ahead. It used almost every penny of its second-quarter operational cash flows for $31 billion of capex, and guided to around $60 billion for the second half, putting it on pace for a stunning $115 billion for the year. Amazon leads the pack here, but unlike the other AI contenders, its number is inflated by large retail investments for warehouses, vehicles, and robots.

Alphabet isn’t slowing down either, raising its 2025 capex guidance considerably. And though Apple spends much less—$3.5 billion in the quarter—that’s still 61% higher than last year.
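The capex figures quoted above imply a few numbers that are not stated directly. The short Python sketch below works them out. The variable names and the helper `implied_prior` are hypothetical, and the results are back-of-envelope values derived from the quoted figures, not reported data. Microsoft's figure is quoted as "over $30 billion," so its result is only a lower bound.

```python
# All amounts are in billions of US dollars, taken from the text above.
amazon_q2 = 31.0               # second-quarter capex
amazon_h2_guidance = 60.0      # guidance for the second half ("around $60 billion")
amazon_full_year_pace = 115.0  # full-year pace

# What remains for the first quarter once Q2 and the second half are subtracted
amazon_q1_implied = amazon_full_year_pace - amazon_q2 - amazon_h2_guidance


def implied_prior(current, growth_pct):
    """Back out the year-ago value from a current value and its percentage growth."""
    return current / (1 + growth_pct / 100)


# Microsoft: "over $30 billion" this quarter, described as a 50% rise on the year
microsoft_prior = implied_prior(30.0, 50.0)

# Apple: $3.5 billion in the quarter, described as 61% higher than last year
apple_prior = implied_prior(3.5, 61.0)

print(f"Amazon implied Q1 capex: ${amazon_q1_implied:.0f}B")
print(f"Microsoft implied year-ago quarter: at least ${microsoft_prior:.0f}B")
print(f"Apple implied year-ago quarter: about ${apple_prior:.2f}B")
```

On these figures, Amazon's implied first-quarter capex is about $24 billion, Microsoft's year-ago quarter is at least $20 billion, and Apple's year-ago quarter is about $2.17 billion.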

https://www.barrons.com/articles/takeaways-big-tech-earnings-ai-68402f1a?mod=hp_WIND_A_3_2