OCP 2025 Meta keynote: Scaling the AI Infrastructure to Data Center Regions

At the OCP Global Summit 2025 in San Jose, CA, Meta detailed its strategy for scaling AI infrastructure to regional data center deployments, emphasizing open, collaborative, and highly scalable designs to support growing AI workloads. In the October 14th keynote, Dan Rabinovitsj, Meta’s VP of Data Center Infrastructure, discussed strategies for deploying and operating AI at scale across data center regions. The session highlighted innovations for building AI-ready data centers, focusing on open hardware, power innovation, and the challenges of next-generation AI infrastructure.

Initiatives discussed included: new Ethernet standards for AI clusters, integration of the Ultra Ethernet Consortium standard, Meta’s vision for open networking hardware, AMD’s “Helios” rack-scale AI platform, MSI’s integrated OCP solutions, next-gen liquid cooling, and solutions for distributed and edge AI.

Rabinovitsj highlighted Meta’s contributions to open standards and hardware innovations, including the Open Rack Wide standard and advanced networking concepts for AI clusters.

Meta also announced several new milestones for data center networking:

- The evolution of Disaggregated Scheduled Fabric (DSF) to support scale-out interconnect for large AI clusters that span entire data center buildings.

- A new Non-Scheduled Fabric (NSF) architecture based entirely on shallow-buffer, disaggregated Ethernet switches that will support Meta’s largest AI clusters, such as Prometheus.

- The addition of Minipack3N, based on NVIDIA’s Spectrum-4 Ethernet ASIC, to Meta’s portfolio of 51 Tbps OCP switches that use OCP’s SAI and Meta’s FBOSS software stack.

- The launch of the Ethernet for Scale-Up Networking (ESUN) initiative, focused on making Ethernet suitable for connecting high-performance processors, such as GPUs, within a single rack by emphasizing requirements like low latency, high bandwidth, and lossless transfers. Meta has been working with other large-scale data center operators and leading Ethernet vendors to advance the use of Ethernet for scale-up networking (specifically, the high-performance interconnects required for next-generation AI accelerator architectures).

OCP Summit 2025: The Open Future of Networking Hardware for AI

- Open Rack Wide (ORW) standard: Meta introduced the ORW specification, a new open standard for double-wide equipment racks designed to meet the extreme power, cooling, and serviceability demands of next-generation AI systems. AMD, a partner of Meta, showcased its “Helios” rack-scale platform, which is built to comply with this new standard.

- Networking fabrics for AI clusters: Meta detailed its networking architecture, revealing the following innovations:

  - Disaggregated Scheduled Fabric (DSF): An updated version of DSF was discussed (see below), which now provides non-blocking interconnects for clusters of up to 18,432 XPUs (AI processors), enabling communication between a larger number of GPUs.

  - Non-Scheduled Fabric (NSF): Meta unveiled NSF, a new fabric for its largest AI clusters, which runs on shallow-buffer, disaggregated Ethernet switches to reduce latency. NSF is planned for Meta’s upcoming multi-gigawatt “Prometheus” clusters. See next section below for details.

  - FBNIC: Meta announced FBNIC, a network ASIC of its own design.

  - 51T switches: Meta revealed new 51T network switches, which utilize Broadcom and Cisco ASICs.

- Next-generation optical connections: For faster and higher-capacity optical interconnections, Meta discussed its adoption of 2x400G FR4-LITE and 400G/2x400G DR4 optics for its 400G and 800G connectivity.

- Sustainable hardware: As part of its 2030 net-zero goals, Meta presented a new AI-powered methodology for tracking and estimating the carbon emissions of its IT hardware. The methodology will be open-sourced for the wider industry.

……………………………………………………………………………………………………………………………………………………………………………………………………………………………………

Deep Dive into DSF and NSF:

- Non-blocking scale: An updated, two-stage architecture for DSF can now support a non-blocking fabric for up to 18,432 XPUs (AI processors). This allows all-to-all communication between a significantly larger number of GPUs without performance degradation.

- Proactive congestion avoidance: DSF uses a Virtual Output Queue (VOQ)-based system to manage traffic flow. By scheduling traffic between endpoints, it proactively avoids congestion before it occurs, which improves bandwidth delivery and overall network efficiency.

- Open and standardized: The fabric is built on open standards like the OCP-SAI (Switch Abstraction Interface) and is managed by Meta’s own network operating system, FBOSS. This vendor-agnostic approach allows Meta to use components from different suppliers and avoid vendor lock-in.

- Optimal load balancing: Traffic is “sprayed” across all available links and switches, ensuring an equal load and smooth performance for bandwidth-intensive workloads like AI training.

- Low latency: Unlike DSF, which relies on scheduling, NSF operates on shallow-buffer, disaggregated Ethernet switches. This reduces round-trip latency, making it ideal for the most latency-sensitive AI workloads.

- Adaptive routing: The NSF architecture is a three-tier fabric that supports adaptive routing for effective load-balancing. This helps minimize congestion and ensure optimal utilization of GPUs, which is critical for maximizing performance in Meta’s largest AI factories.

- Disaggregated design: Like DSF, NSF is built on a disaggregated design. This allows Meta to scale its network by using interchangeable, industry-standard components instead of a single vendor’s closed system.

- DSF: Provides a high-efficiency, highly scalable network for Meta’s large, but still modular, AI clusters.

- NSF: Optimized for the extreme demands of Meta’s largest, gigawatt-scale “AI factories” like Prometheus, where low latency and robust adaptive routing are paramount.
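The VOQ-based scheduling described for DSF can be illustrated with a toy model. The sketch below keeps one virtual output queue per (ingress, egress) pair and lets each egress port hand out a fixed number of credits per round, so an egress never receives more than it can accept. The class name `VoqFabric`, the `egress_capacity` parameter, and the round-based credit scheme are assumptions made for illustration only; this is not Meta's DSF implementation and does not model any particular switch ASIC.

```python
from collections import deque


class VoqFabric:
    """Toy scheduled fabric (hypothetical): one VOQ per (ingress, egress) pair."""

    def __init__(self, num_ports, egress_capacity):
        self.num_ports = num_ports
        # Assumed: packets each egress port can accept per scheduling round.
        self.egress_capacity = egress_capacity
        # voq[ingress][egress] holds packets waiting at the ingress side.
        self.voq = [[deque() for _ in range(num_ports)] for _ in range(num_ports)]

    def enqueue(self, ingress, egress, packet):
        """Queue a packet at its ingress port, sorted by destination egress."""
        self.voq[ingress][egress].append(packet)

    def backlog(self):
        """Total number of packets still waiting in all VOQs."""
        return sum(len(q) for row in self.voq for q in row)

    def schedule_round(self):
        """Run one round: each egress grants at most egress_capacity credits.

        Credits are offered to ingress ports in index order, one packet per
        ingress per pass, until credits run out or no ingress has traffic.
        """
        delivered = []
        for egress in range(self.num_ports):
            credits = self.egress_capacity
            progress = True
            while credits > 0 and progress:
                progress = False
                for ingress in range(self.num_ports):
                    if credits == 0:
                        break
                    queue = self.voq[ingress][egress]
                    if queue:
                        delivered.append((ingress, egress, queue.popleft()))
                        credits -= 1
                        progress = True
        return delivered


if __name__ == "__main__":
    fabric = VoqFabric(num_ports=3, egress_capacity=2)
    # Two ingress ports both target egress port 2 (an incast pattern).
    for i in range(3):
        fabric.enqueue(0, 2, f"a{i}")
        fabric.enqueue(1, 2, f"b{i}")
    rounds = 0
    while fabric.backlog() > 0:
        sent = fabric.schedule_round()
        rounds += 1
        print(f"round {rounds}: delivered {len(sent)} packets")
```

In this model the excess traffic waits in the ingress-side queues rather than overflowing a buffer at the congested egress, which is the intuition behind avoiding congestion before it occurs.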

Image Credit: Meta

………………………………………………………………………………………………………………………………………………………..

References:

OCP Summit 2025: The Open Future of Networking Hardware for AI

Networking at the Heart of AI — @Scale: Networking 2025 Recap

Big tech spending on AI data centers and infrastructure vs the fiber optic buildout during the dot-com boom (& bust)

Gartner: AI spending >$2 trillion in 2026 driven by hyperscalers data center investments

AI Data Center Boom Carries Huge Default and Demand Risks

Analysis: Cisco, HPE/Juniper, and Nvidia network equipment for AI data centers

Qualcomm to acquire Alphawave Semi for $2.4 billion; says its high-speed wired tech will accelerate AI data center expansion

Cisco CEO sees great potential in AI data center connectivity, silicon, optics, and optical systems

Data Center Networking Market to grow at a CAGR of 6.22% during 2022-2027 to reach $35.6 billion by 2027

TechCrunch: Meta to build $10 billion Subsea Cable to manage its global data traffic

Meta, the parent company of Facebook, Instagram, and WhatsApp, is reportedly planning to build its first fully owned, large-scale fiber-optic subsea cable extending around the globe. The project, spanning over 40,000 kilometers, is expected to require an investment of more than $10 billion. The purpose is to enhance Meta’s infrastructure to meet the growing demand for data usage driven by its artificial intelligence (AI) products and services, according to a report by TechCrunch. Meta accounts for 10% of all fixed and 22% of all mobile traffic, and its AI investments promise to boost that usage even further.

TechCrunch has confirmed with sources close to the company that Meta plans to build a new, major, fiber-optic subsea cable extending around the world — a 40,000+ kilometer project that could total more than $10 billion of investment. Critically, Meta will be the sole owner and user of this subsea cable — a first for the company and thus representing a milestone for its infrastructure efforts.

Image Credit: Sunil Tagare


………………………………………………………………………………………………………………………………………….

Meta’s infrastructure work is overseen by Santosh Janardhan, who is the company’s head of global infrastructure and co-head of engineering. The company has teams globally who look at and plan out its infrastructure, and it has had some significant industry figures work for it in the past. In the case of this upcoming project, it is being conceived out of the company’s South Africa operation, according to sources.

Fiber-optic subsea cables have been a part of communications infrastructure for the last 40 years. What’s significant here is who is putting the money down to build and own it — and for what purposes.

Meta’s plans underscore how investment in and ownership of subsea networks have shifted in recent years from consortiums involving telecoms carriers to now also include big tech giants. According to telecom analysts TeleGeography, Meta is part-owner of 16 existing networks, including most recently the 2Africa cable that encircles the continent (others in that project are carriers including Orange, Vodafone, China Mobile, Bayobab/MTN and more). However, this new cable project would be the first wholly owned by Meta itself.

That would put Meta into the same category as Google, which has involvement in some 33 different routes, including a few regional efforts in which it is the sole owner, per TeleGeography’s tracking. Other big tech companies that are either part owners or capacity buyers in subsea cables include Amazon and Microsoft (neither of which is the sole owner of any route).

There are a number of reasons why building subsea cables would appeal to big tech companies like Meta.

First, sole ownership of the route and cable would give Meta first dibs on capacity to support traffic on its own properties.

Meta, like Google, also plays up the lift it has provided to regions by way of its subsea investments, claiming that projects like Marea in Europe and others in Southeast Asia have contributed more than “half a trillion dollars” to economies in those areas.

Yet there is a more pragmatic impetus for these investments: tech companies — rather than telecoms carriers, traditional builders and owners of these cables — want to have more direct ownership of the pipes needed to deliver content, advertising and more to users around the world.

According to its earnings reports, Meta makes more money outside of North America than in its home market itself. Having priority on dedicated subsea cabling can help ensure quality of service on that traffic. (Note: this only covers long-haul traffic; the company still has to negotiate with carriers within countries and for ‘last-mile’ delivery to users’ devices, which can have its challenges.)

References:

Meta plans to build a $10B subsea cable spanning the world, sources say

Google’s Bosun subsea cable to link Darwin, Australia to Christmas Island in the Indian Ocean

“SMART” undersea cable to connect New Caledonia and Vanuatu in the southwest Pacific Ocean

Telstra International partners with: Trans Pacific Networks to build Echo cable; Google and APTelecom for central Pacific Connect cables

Orange Deploys Infinera’s GX Series to Power AMITIE Subsea Cable

NEC completes Patara-2 subsea cable system in Indonesia

SEACOM telecom services now on Equiano subsea cable surrounding Africa

Google’s Equiano subsea cable lands in Namibia en route to Cape Town, South Africa

China seeks to control Asian subsea cable systems; SJC2 delayed, Apricot and Echo avoid South China Sea

HGC Global Communications, DE-CIX & Intelsat perspectives on damaged Red Sea internet cables

AI Echo Chamber: “Upstream AI” companies huge spending fuels profit growth for “Downstream AI” firms

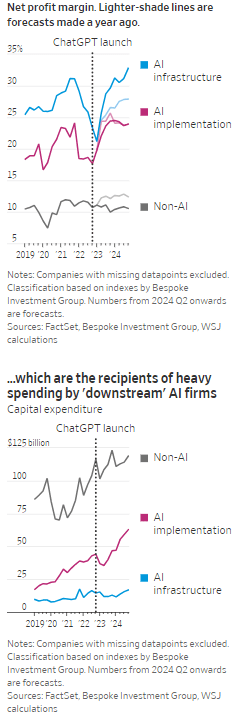

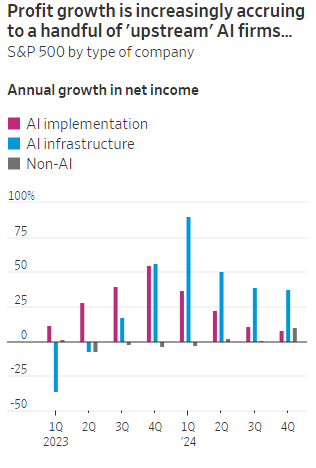

According to the Wall Street Journal, the AI industry has become an “Echo Chamber,” where huge capital spending by the AI infrastructure and application providers has fueled revenue and profit growth for everyone else. Market research firm Bespoke Investment Group has recently created baskets for “downstream” and “upstream” AI companies.

- The Downstream group involves “AI implementation,” which consists of firms that sell AI development tools, such as the large language models (LLMs) popularized by OpenAI’s ChatGPT since the end of 2022, or run products that can incorporate them. This includes Google/Alphabet, Microsoft, Amazon, Meta Platforms (FB), along with IBM, Adobe and Salesforce.

- Higher up the supply chain (Upstream group), are the “AI infrastructure” providers, which sell AI chips, applications, data centers and training software. The undisputed leader is Nvidia, which has seen its sales triple in a year, but it also includes other semiconductor companies, database developer Oracle and owners of data centers Equinix and Digital Realty.

The Upstream group of companies has posted profit margins that are far above what analysts expected a year ago. In the second quarter, and pending Nvidia’s results on Aug. 28th, Upstream AI members of the S&P 500 are set to have delivered a 50% annual increase in earnings. For the remainder of 2024, they will be increasingly responsible for the profit growth that Wall Street expects from the stock market, even accounting for Intel’s huge problems and restructuring.

It should be noted that the lines between the two groups can be blurry, particularly when it comes to giants such as Amazon, Microsoft and Alphabet, which provide both AI implementation (e.g. LLMs) and infrastructure: Their cloud-computing businesses are responsible for turning these companies into the early winners of the AI craze last year and reported breakneck growth during this latest earnings season. A crucial point is that it is their role as ultimate developers of AI applications that has led them to make very large capital expenditures, which are responsible for the profit surge in the rest of the ecosystem. So there is a definite trickle-down effect, where the big tech players’ AI-directed CAPEX is boosting revenue and profits for the companies down the supply chain.

As the path for monetizing this technology gets longer and harder, the benefits seem to be increasingly accruing to companies higher up in the supply chain. Meta Platforms Chief Executive Mark Zuckerberg recently said the company’s coming Llama 4 language model will require 10 times as much computing power to train as its predecessor. Were it not for AI, revenues for semiconductor firms would probably have fallen during the second quarter, rather than rising 18%, according to S&P Global.

………………………………………………………………………………………………………………………………………………………..


A paper written by researchers from the likes of Cambridge and Oxford uncovered that the large language models (LLMs) behind some of today’s most exciting AI apps may have been trained on “synthetic data,” or data generated by other AI. This revelation raises ethical and quality concerns. If an AI model is trained primarily or even partially on synthetic data, it might produce outputs lacking the richness and reliability of human-generated content. It could be a case of the blind leading the blind, with AI models reinforcing the limitations or biases inherent in the synthetic data they were trained on.

In this paper, the team coined the phrase “model collapse,” claiming that models trained this way will answer user prompts with low-quality outputs. The idea of “model collapse” suggests a sort of unraveling of the machine’s learning capabilities, where it fails to produce outputs with the informative or nuanced characteristics we expect. This poses a serious question for the future of AI development. If AI is increasingly trained on synthetic data, we risk creating echo chambers of misinformation or low-quality responses, leading to less helpful and potentially even misleading systems.

……………………………………………………………………………………………………………………………………………

In a recent working paper, Massachusetts Institute of Technology (MIT) economist Daron Acemoglu argued that AI’s knack for easy tasks has led to exaggerated predictions of its power to enhance productivity in hard jobs. Also, some of the new tasks created by AI may have negative social value (such as design of algorithms for online manipulation). Indeed, data from the Census Bureau show that only a small percentage of U.S. companies outside of the information and knowledge sectors are looking to make use of AI.

References:

https://deepgram.com/learn/the-ai-echo-chamber-model-collapse-synthetic-data-risks

https://economics.mit.edu/sites/default/files/2024-04/The%20Simple%20Macroeconomics%20of%20AI.pdf

AI wave stimulates big tech spending and strong profits, but for how long?

AI winner Nvidia faces competition with new super chip delayed

SK Telecom and Singtel partner to develop next-generation telco technologies using AI

Telecom and AI Status in the EU

Vodafone: GenAI overhyped, will spend $151M to enhance its chatbot with AI

Data infrastructure software: picks and shovels for AI; Hyperscaler CAPEX

AI wave stimulates big tech spending and strong profits, but for how long?

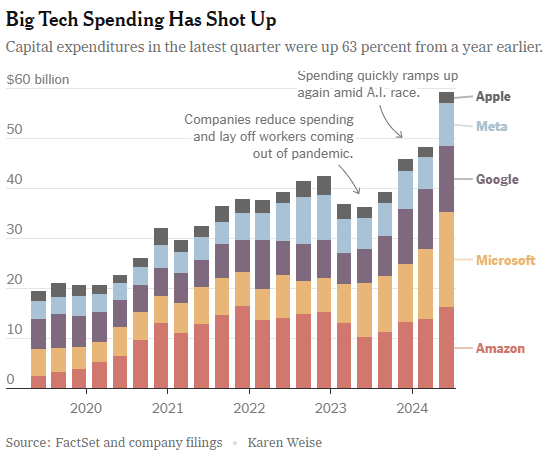

Big tech companies have made it clear over the last week that they have no intention of slowing down their stunning levels of spending on artificial intelligence (AI), even though investors are getting worried that a big payoff is further down the line than most believe.

In the last quarter, Apple, Amazon, Meta, Microsoft and Google’s parent company Alphabet spent a combined $59 billion on capital expenses, 63% more than a year earlier and 161% more than four years ago. A large part of that was funneled into building data centers and packing them with new computer systems to build artificial intelligence. Only Apple has not dramatically increased spending, because it does not build the most advanced AI systems and is not a cloud service provider like the others.
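The growth percentages above imply what the combined spending was in the earlier periods. A minimal sketch of that arithmetic follows, assuming both percentages are simple increases over the earlier quarter's combined total. The function name `baseline_from_growth` is introduced here purely for illustration, and the derived figures are back-of-the-envelope estimates, not reported numbers.

```python
def baseline_from_growth(current, pct_increase):
    """Return the earlier value, given the current value and the percent increase since then."""
    return current / (1 + pct_increase / 100)


# Figures quoted in the text: $59 billion combined capex, up 63% and 161%.
current_capex_bn = 59.0
year_ago_bn = baseline_from_growth(current_capex_bn, 63)
four_years_ago_bn = baseline_from_growth(current_capex_bn, 161)

print(f"Implied capex a year earlier:   ${year_ago_bn:.1f} billion")
print(f"Implied capex four years ago:   ${four_years_ago_bn:.1f} billion")
```

Under those assumptions, the quoted figures imply roughly $36 billion of combined quarterly capital spending a year earlier and roughly $23 billion four years ago.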

At the beginning of this year, Meta said it would spend more than $30 billion in 2024 on new tech infrastructure. In April, the company raised that to $35 billion. On Wednesday, it increased the figure to at least $37 billion. CEO Mark Zuckerberg said Meta would spend even more next year. He said he’d rather build too fast “rather than too late,” which would allow competitors to get a big lead in the A.I. race. Meta gives away the advanced A.I. systems it develops, but Mr. Zuckerberg still said it was worth it. “Part of what’s important about A.I. is that it can be used to improve all of our products in almost every way,” he said.

………………………………………………………………………………………………………………………………………………………..

This new wave of Generative A.I. is incredibly expensive. The systems work with vast amounts of data and require sophisticated computer chips and new data centers to develop the technology and serve it to customers. The companies are seeing some sales from their A.I. work, but it is barely moving the needle financially.

In recent months, several high-profile tech industry watchers, including Goldman Sachs’s head of equity research and a partner at the venture firm Sequoia Capital, have questioned when or if A.I. will ever produce enough benefit to bring in the sales needed to cover its staggering costs. It is not clear that AI will come close to having the same impact as the internet or mobile phones, Goldman’s Jim Covello wrote in a June report.

“What $1 trillion problem will AI solve?” he wrote. “Replacing low wage jobs with tremendously costly technology is basically the polar opposite of the prior technology transitions I’ve witnessed in my 30 years of closely following the tech industry.”

“The reality right now is that while we’re investing a significant amount in the AI space and in infrastructure, we would like to have more capacity than we already have today,” said Andy Jassy, Amazon’s chief executive. “I mean, we have a lot of demand right now.”

That means buying land, building data centers and all the computers, chips and gear that go into them. Amazon executives put a positive spin on all that spending. “We use that to drive revenue and free cash flow for the next decade and beyond,” said Brian Olsavsky, the company’s finance chief.

There are plenty of signs the boom will persist. In mid-July, Taiwan Semiconductor Manufacturing Company, which makes most of the in-demand chips designed by Nvidia (the ONLY tech company that is now making money from AI – much more below) that are used in AI systems, said those chips would be in scarce supply until the end of 2025.

Mr. Zuckerberg said AI’s potential is super exciting. “It’s why there are all the jokes about how all the tech C.E.O.s get on these earnings calls and just talk about A.I. the whole time.”

……………………………………………………………………………………………………………………

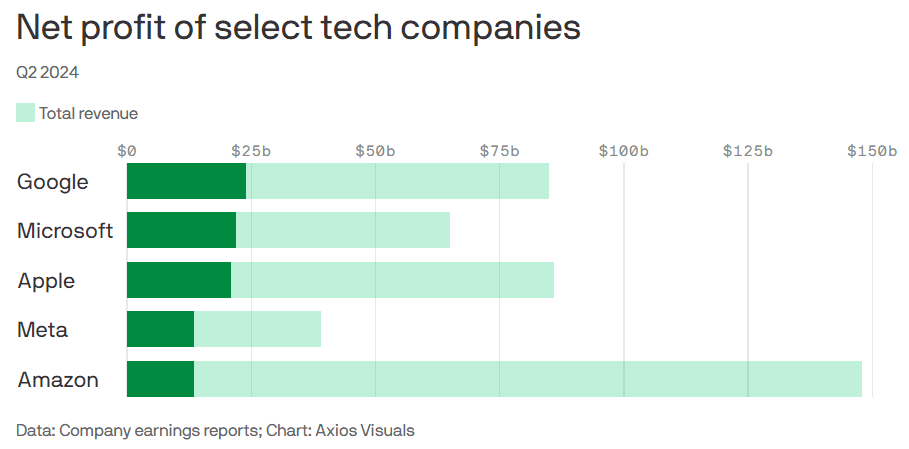

Big tech profits and revenue continue to grow, but will massive spending produce a good ROI?

Last week’s Q2-2024 results:

- Google parent Alphabet reported $24 billion net profit on $85 billion revenue.

- Microsoft reported $22 billion net profit on $65 billion revenue.

- Meta reported $13.5 billion net profit on $39 billion revenue.

- Apple reported $21 billion net profit on $86 billion revenue.

- Amazon reported $13.5 billion net profit on $148 billion revenue.
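To compare these results on a like-for-like basis, one can compute each company's net profit margin (net profit divided by revenue). The short sketch below does this with the rounded figures listed above; the dictionary layout and the helper name `net_margin_pct` are illustrative choices, and the margins are only as precise as the rounded inputs.

```python
# Q2-2024 figures from the list above: (net profit, revenue) in billions of USD.
results_bn = {
    "Alphabet": (24.0, 85.0),
    "Microsoft": (22.0, 65.0),
    "Meta": (13.5, 39.0),
    "Apple": (21.0, 86.0),
    "Amazon": (13.5, 148.0),
}


def net_margin_pct(net_profit, revenue):
    """Net profit as a percentage of revenue."""
    return net_profit / revenue * 100


margins = {name: net_margin_pct(p, r) for name, (p, r) in results_bn.items()}

# Combined margin across all five companies.
total_profit = sum(p for p, _ in results_bn.values())
total_revenue = sum(r for _, r in results_bn.values())
combined_margin = net_margin_pct(total_profit, total_revenue)

for name, margin in sorted(margins.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:<10} {margin:5.1f}%")
print(f"{'Combined':<10} {combined_margin:5.1f}%")
```

On these rounded numbers, Meta and Microsoft come out with the highest margins (about 35% and 34%), while Amazon's is the lowest (about 9%), reflecting its much larger, lower-margin retail revenue base.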

This chart sums it all up:

………………………………………………………………………………………………………………………………………………………..

References:

https://www.nytimes.com/2024/08/02/technology/tech-companies-ai-spending.html

https://www.axios.com/2024/08/02/google-microsoft-meta-ai-earnings

https://www.nvidia.com/en-us/data-center/grace-hopper-superchip/

AI Frenzy Backgrounder; Review of AI Products and Services from Nvidia, Microsoft, Amazon, Google and Meta; Conclusions

Cloud Service Providers struggle with Generative AI; Users face vendor lock-in; “The hype is here, the revenue is not”

Everyone agrees that Generative AI has great promise and potential. Martin Casado of Andreessen Horowitz recently wrote in the Wall Street Journal that the technology has “finally become transformative:”

“Generative AI can bring real economic benefits to large industries with established and expensive workloads. Large language models could save costs by performing tasks such as summarizing discovery documents without replacing attorneys, to take one example. And there are plenty of similar jobs spread across fields like medicine, computer programming, design and entertainment….. This all means opportunity for the new class of generative AI startups to evolve along with users, while incumbents focus on applying the technology to their existing cash-cow business lines.”

A new investment wave caused by generative AI is starting to loom among cloud service providers, raising questions about whether Big Tech’s spending cutbacks and layoffs will prove to be short lived. Pressed to say when they would see a revenue lift from AI, the big U.S. cloud companies (Microsoft, Alphabet/Google, Meta/FB and Amazon) all referred to existing services that rely heavily on investments made in the past. These range from AWS’s machine learning services for cloud customers to AI-enhanced tools that Google and Meta offer to their advertising customers.

Microsoft offered only a cautious prediction of when AI would result in higher revenue. Amy Hood, chief financial officer, told investors during an earnings call last week that the revenue impact would be “gradual,” as the features are launched and start to catch on with customers. The caution failed to match high expectations ahead of the company’s earnings, wiping 7% off its stock price (ticker: MSFT) over the following week.

When it comes to the newer generative AI wave, predictions were few and far between. Amazon CEO Andy Jassy said on Thursday that the technology was in its “very early stages” and that the industry was only “a few steps into a marathon”. While many customers of Amazon’s cloud arm, AWS, see the technology as transformative, Jassy noted that “most companies are still figuring out how they want to approach it, they are figuring out how to train models.” He insisted that every part of Amazon’s business was working on generative AI initiatives and the technology was “going to be at the heart of what we do.”

There are a number of large language models that power generative AI, and many of the AI companies that make them have forged partnerships with big cloud service providers. As business technology leaders make their picks among them, they are weighing the risks and benefits of using one cloud provider’s AI ecosystem. They say it is an important decision that could have long-term consequences, including how much they spend and whether they are willing to sink deeper into one cloud provider’s set of software, tools, and services.

To date, model makers like OpenAI, Anthropic, and Cohere have led the charge in developing proprietary large language models that companies are using to boost efficiency in areas like accounting and writing code, or adding to their own products with tools like custom chatbots. Partnerships between model makers and major cloud companies include OpenAI and Microsoft Azure, Anthropic and Cohere with Google Cloud, and the machine-learning startup Hugging Face with Amazon Web Services. Databricks, a data storage and management company, agreed to buy the generative AI startup MosaicML in June.

If a company chooses a single AI ecosystem, it could risk “vendor lock-in” within that provider’s platform and set of services, said Ram Chakravarti, chief technology officer of Houston-based BMC Software. This paradigm is a recurring one, where a business’s IT system, software and data all sit within one digital platform, and it could become more pronounced as companies look for help in using generative AI. Companies say the problem with vendor lock-in, especially among cloud providers, is that they have difficulty moving their data to other platforms, lose negotiating power with other vendors, and must rely on one provider to keep its services online and secure.

Cloud providers, partly in response to complaints of lock-in, now offer tools to help customers move data between their own and competitors’ platforms. Businesses have increasingly signed up with more than one cloud provider to reduce their reliance on any single vendor. Companies could end up taking the same strategy with generative AI: by using a “multiple generative AI approach,” they can avoid getting too entrenched in a particular platform. To be sure, many chief information officers have said they willingly accept such risks for the convenience, and potentially lower cost, of working with a single technology vendor or cloud provider.

A significant challenge in incorporating generative AI is that the technology is changing so quickly, analysts have said, forcing CIOs to not only keep up with the pace of innovation, but also sift through potential data privacy and cybersecurity risks.

A company using its cloud provider’s premade tools and services, plus guardrails for protecting company data and reducing inaccurate outputs, can more quickly implement generative AI off-the-shelf, said Adnan Masood, chief AI architect at digital technology and IT services firm UST. “It has privacy, it has security, it has all the compliance elements in there. At that point, people don’t really have to worry so much about the logistics of things, but rather are focused on utilizing the model.”

For other companies, it is a conservative approach to use generative AI with a large cloud platform they already trust to hold sensitive company data, said Jon Turow, a partner at Madrona Venture Group. “It’s a very natural start to a conversation to say, ‘Hey, would you also like to apply AI inside my four walls?’”

End Quotes:

“Right now, the evidence is a little bit scarce about what the effect on revenue will be across the tech industry,” said James Tierney of Alliance Bernstein.

Brent Thill, an analyst at Jefferies, summed up the mood among investors: “The hype is here, the revenue is not. Behind the scenes, the whole industry is scrambling to figure out the business model [for generative AI]: how are we going to price it? How are we going to sell it?”

………………………………………………………………………………………………………………

References:

https://www.ft.com/content/56706c31-e760-44e1-a507-2c8175a170e8

https://www.wsj.com/articles/companies-weigh-growing-power-of-cloud-providers-amid-ai-boom-478c454a

https://www.techtarget.com/searchenterpriseai/definition/generative-AI?Offer=abt_pubpro_AI-Insider

Global Telco AI Alliance to progress generative AI for telcos

Curmudgeon/Sperandeo: Impact of Generative AI on Jobs and Workers

Bain & Co, McKinsey & Co, AWS suggest how telcos can use and adapt Generative AI

Generative AI Unicorns Rule the Startup Roost; OpenAI in the Spotlight

Generative AI in telecom; ChatGPT as a manager? ChatGPT vs Google Search

Generative AI could put telecom jobs in jeopardy; compelling AI in telecom use cases

Qualcomm CEO: AI will become pervasive, at the edge, and run on Snapdragon SoC devices

Summary of Facebook Connectivity Projects

Facebook Connectivity works with partners to develop these technologies and bring them to people across the world. Since 2013, Facebook Connectivity has accelerated access to a faster internet for more than 300M people around the world. Earlier this week, during an event called Inside the Lab, our engineers shared the latest developments on some of our connectivity technologies, which aim to improve internet capacity across the world by sea, land and air:

- Subsea cables connect continents and are the backbone of the global internet. Our first-ever transatlantic subsea cable system will connect Europe to the U.S. This new cable provides 200X more internet capacity than the transatlantic cables of the 2000s. This investment builds on other recent subsea expansions, including 2Africa PEARLS which will be the longest subsea cable system in the world connecting Africa, Europe and Asia.

- To slash the time and cost required to roll out fiber-optic internet to communities, Facebook developed a robot called Bombyx that moves along power lines, wrapping them with fiber cable. Since we first unveiled Bombyx, it has become lighter, faster and more agile, and we believe it could have a radical effect on the economics of fiber deployment around the world.

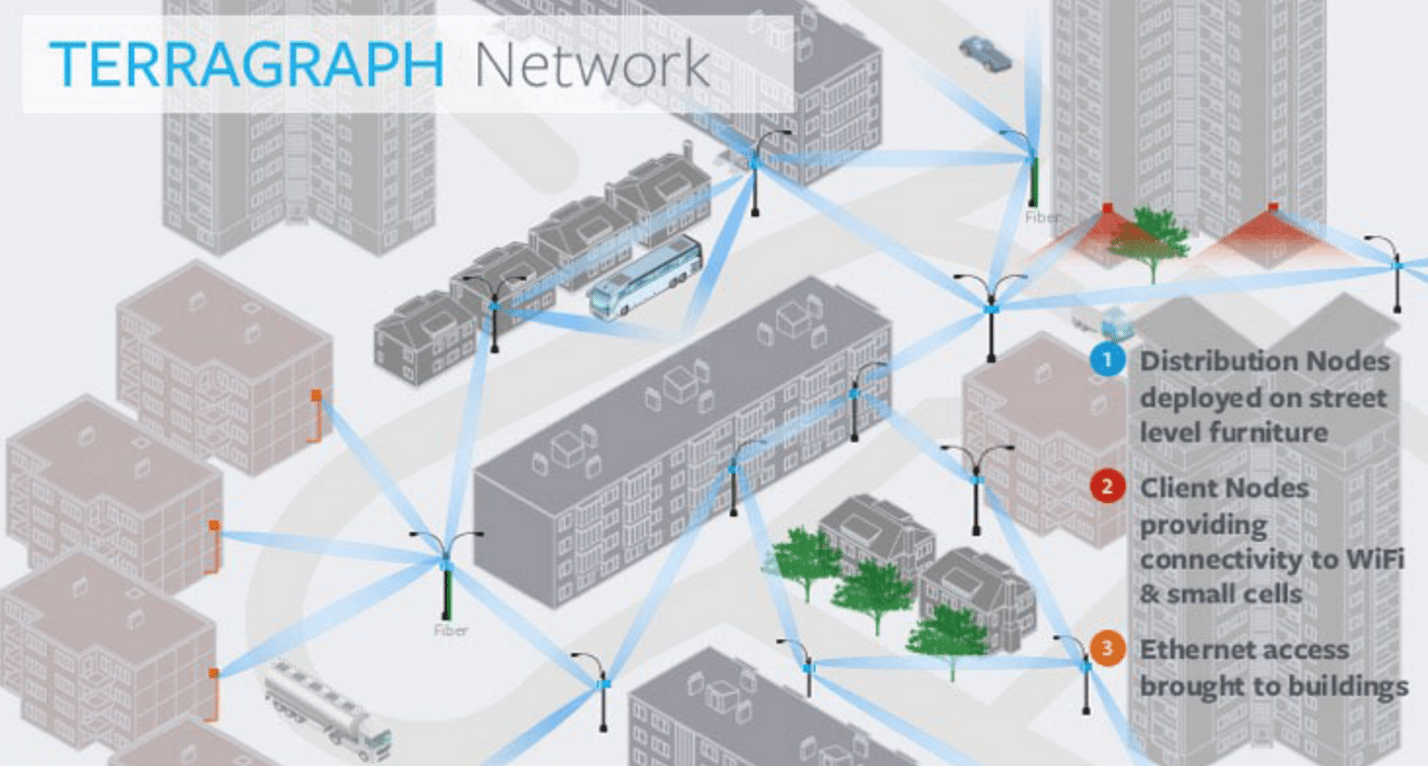

- Facebook also developed Terragraph, a wireless technology that delivers internet at fiber speed over the air. This technology has already brought high-speed internet to more than 6,500 homes in Anchorage, Alaska, and deployment has also started in Perth, Australia, one of the most isolated capital cities in the world.

Bombyx wraps fiber around existing telephone wires, clearing obstacles and flipping as it needs to along its route. (Source: Facebook)

Facebook wants to bring high-speed reliable internet to more than 300M people — but the work doesn’t stop there. Connecting the next billion will require many different approaches. And as people look for more immersive experiences in new virtual spaces like the metaverse, we need to increase access to a more reliable and affordable internet for everyone. The company believes this work is fundamental for creating greater equity where everyone can benefit from the economic, education and social benefits of a digitally connected world.

“High speed, reliable Internet access that connects us to people around the world is something that’s lacking for billions of people around the world,” Mike Schroepfer, Facebook’s chief technology officer, declared during the company’s “Inside the Lab” roundtable discussion. “Business as usual will not solve it. We need radical breakthroughs to provide radical improvements – 10x faster speeds, 10x lower costs.”

Facebook and its partners are in the process of building 150,000 kilometers of subsea cables, and working on new sea-based power stations that will provide those cables with power.

“This will have a major impact on underserved regions of the world, notably in Africa, where our work is set to triple the amount of Internet bandwidth reaching the continent,” Dan Rabinovitsj, Facebook’s VP of connectivity, explained. That activity partly ties into a new segment of subsea cables called 2Africa PEARLS that will connect three continents: Africa, Europe and Asia.

(Source: Facebook)

2Africa PEARLS, a new segment of subsea cable that connects Africa, Europe and Asia, will bring the total length of the 2Africa cable system to more than 45,000 kilometers, making it the longest subsea cable system ever deployed, the company said.

Cynthia Perret, Facebook’s infrastructure program manager, noted that every transatlantic cable Facebook connects will contain 24 fiber pairs. “Capacity alone isn’t enough,” she said, adding that Facebook is also working on ways to configure and adapt the amount of capacity provided to each landing point. Facebook is also using a model called “Atlantis” to help forecast and optimize where subsea cable routes need to be built. An integrated adaptive bandwidth system will likewise allow Facebook to shift capacity based on traffic patterns, reducing congestion and improving reliability, Perret explained.
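As a rough illustration of shifting capacity toward traffic, the sketch below splits a fixed amount of cable capacity across landing points in proportion to demand. Facebook has not published how its adaptive bandwidth system works, so the proportional rule, the `reallocate` function, the landing-point names and the numbers are all hypothetical.

```python
from typing import Dict


def reallocate(total_capacity: float, demand: Dict[str, float]) -> Dict[str, float]:
    """Split capacity across landing points in proportion to demand.

    Hypothetical rule for illustration only; units are arbitrary.
    """
    if not demand:
        raise ValueError("at least one landing point is required")
    total_demand = sum(demand.values())
    if total_demand <= 0:
        # No traffic information: fall back to an equal split.
        share = total_capacity / len(demand)
        return {site: share for site in demand}
    return {site: total_capacity * d / total_demand for site, d in demand.items()}


# The same cable capacity is divided differently as the traffic pattern changes.
morning = reallocate(240.0, {"landing_a": 1.0, "landing_b": 3.0})
evening = reallocate(240.0, {"landing_a": 3.0, "landing_b": 1.0})
print(morning)
print(evening)
```

A real system would presumably also respect per-route limits and minimum guarantees, which this sketch ignores.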

References:

Facebook tests voice and video calls in its main app

Facebook and Liquid Intelligent Technologies to build huge fiber network in Africa

Facebook Inc. and Africa’s largest fiber optics company, Liquid Intelligent Technologies, are extending their reach on the continent by laying 2,000 kilometers (1,243 miles) of fiber in the Democratic Republic of Congo. The two companies intend to build an extensive long-haul and metro fiber network. Apparently, this is part of Facebook’s effort to “connect the unconnected,” especially in developing countries.

The move will make Facebook one of the biggest investors in fiber networks in the region. The cable will eventually extend the reach of 2Africa, a major subsea cable that Facebook also co-developed, the two companies said in a July 5th statement.

Facebook will invest in the fiber build and support network planning. Liquid Technologies will own, build and operate the fiber network, and provide wholesale services to mobile network operators and internet service providers. The network will help create a digital corridor from the Atlantic Ocean through the Congo Rainforest, the world’s second-largest rainforest after the Amazon, to East Africa, and on to the Indian Ocean. Liquid Technologies has been working for more than two years on the digital corridor, which now reaches Central DRC. This corridor will connect DRC to its neighboring countries including Angola, Congo Brazzaville, Rwanda, Tanzania, Uganda, and Zambia.

The new build will stretch from Central DRC to the Eastern border with Rwanda and extend the reach of 2Africa, a major undersea cable that will land along both the East and West African coasts, and better connect Africa to the Middle East and Europe. Additionally, Liquid will employ more than 5,000 people from local communities to build the fiber network.

“This is one of the most difficult fiber builds ever undertaken, crossing more than 2,000 kilometers of some of the most challenging terrain in the world,” said Nic Rudnick, Group CEO of Liquid Intelligent Technologies. “Liquid Technologies and Facebook have a common mission to provide affordable infrastructure to bridge connectivity gaps, and we believe our work together will have a tremendous impact on internet accessibility across the region.”

Liquid Intelligent Technologies is present in more than 20 countries in Africa, with a vision of a digitally connected future that leaves no African behind.

“This fiber build with Liquid Technologies is one of the most exciting projects we have worked on,” said Ibrahima Ba, Director of Network Investments, Emerging Markets at Facebook. “We know that deploying fibre in this region is not easy, but it is a crucial part of extending broadband access to under-connected areas. We look forward to seeing how our fibre build will help increase the availability and improve the affordability of high-quality internet in DRC.”

Facebook has been striving to improve connectivity in Africa to take advantage of a young population and the increasing availability and affordability of smartphones. The social-media giant switched to a predominantly fiber strategy following the failed launch of a satellite to beam signal around the continent in 2016.

About Liquid Intelligent Technologies:

Liquid Intelligent Technologies is a pan-African technology group present in more than 20 countries, mainly in Sub-Saharan Africa. Liquid has firmly established itself as the leading provider of pan-African digital infrastructure with an extensive network covering over 100,000 km. Liquid Intelligent Technologies is redefining network, cloud, and cybersecurity offerings through strategic partnerships with leading global players, innovative business applications, smart cloud services and world-class security on the African continent. Liquid Intelligent Technologies is now a comprehensive, one-stop technology group that provides customized digital solutions to public and private sector companies across the continent under several business units including Liquid Networks, Liquid Cloud and CyberSecurity and Africa Data Centers.

FCC Grants Facebook permission to test converged WiFi/LTE indoor network in Menlo Park, CA

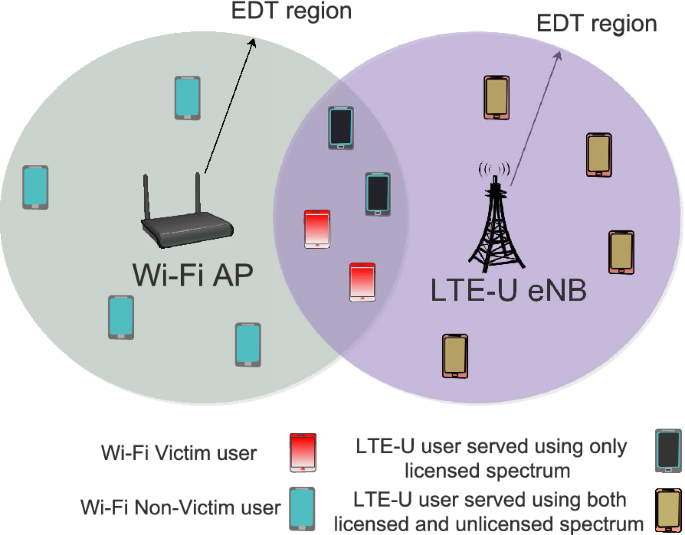

Following last month’s FCC filing to test a small 5G network, Facebook has filed another FCC Special Temporary Authority (STA) petition to test a “converged wireless system” that could potentially support concurrent communications across Wi-Fi and cellular networks in Menlo Park, CA (Facebook corporate headquarters).

In its FCC filing (granted June 23, 2021), Facebook said: “The experiment involves short-term testing of a LTE over-the-air setup for an indoor demonstration that is not likely to last more than six months, making an STA more appropriate than a conventional experimental license.”

Facebook also said it is researching a “proof of concept for a converged wireless system that will operate at the 2.4GHz Wi-Fi band and at Band 3 (1710MHz to 2495 MHz). The goal of the proof of concept is to create a demonstration and see if such a system may be viable. The system that will be tested will have a simple radio head that will be able to operate as a Wi-Fi Radio at 2.4 GHz and as a Band 3 cellular radio (LTE) concurrently. We will wirelessly connect dedicated client devices to demonstrate performance.”

The FCC approved Facebook’s request on June 23, 2021. It will remain in effect until its scheduled expiration date of November 10, 2021. Facebook’s petition was filed under the “FCL Tech” name, which the company has used for previous wireless tests in the 6 GHz band.

Facebook will be using five units of unspecified AVX wireless network gear (E 102289 model). AVX is a Kyocera Group company. Their website states:

AVX Corporation is a leading international manufacturer and supplier of advanced electronic components and interconnect, sensor, control and antenna solutions with 33 manufacturing facilities in 16 countries around the world.

We offer a broad range of devices including capacitors, resistors, filters, couplers, sensors, controls, circuit protection devices, connectors and antennas. AVX components can be found in many electronic devices and systems worldwide.

Since Wi-Fi at 2.4 GHz is in unlicensed spectrum (and being used indoors), one would assume that Facebook would also like to operate LTE in unlicensed spectrum in its converged network.

LTE in unlicensed spectrum (LTE-Unlicensed, LTE-U) is a proposed extension of the 4G LTE wireless standard, originally developed by Qualcomm, that would let cellular network operators offload some of their data traffic to the unlicensed 5 GHz band used by IEEE 802.11a and 802.11ac compliant Wi-Fi equipment. It would serve as an alternative to carrier-owned Wi-Fi hotspots. Currently, there are a number of variants of LTE operation in the unlicensed band, namely LTE-U, License Assisted Access (LAA), and MulteFire.

License Assisted Access (LAA) is a feature of LTE that leverages the unlicensed 5 GHz band in combination with licensed spectrum to increase performance. It uses carrier aggregation in the downlink to combine LTE in the unlicensed 5 GHz band with LTE in the licensed band to provide better data rates and a better user experience.

However, Facebook’s STA covers only 1710 MHz to 2495 MHz, not the 5 GHz band.
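The frequency argument here can be checked with simple interval arithmetic. In the sketch below, the STA range comes from the filing as quoted; the 2.4 GHz and 5 GHz band edges are approximate, commonly cited values added as assumptions, and the helper names are hypothetical.

```python
from typing import Tuple

Band = Tuple[float, float]  # (low edge, high edge) in MHz

STA_RANGE: Band = (1710.0, 2495.0)      # range stated in the STA
WIFI_2G4: Band = (2400.0, 2483.5)       # assumption: common 2.4 GHz ISM band edges
UNLICENSED_5G: Band = (5150.0, 5850.0)  # assumption: approximate 5 GHz unlicensed range


def contains(outer: Band, inner: Band) -> bool:
    """True if `inner` lies entirely within `outer`."""
    return outer[0] <= inner[0] and inner[1] <= outer[1]


def overlaps(a: Band, b: Band) -> bool:
    """True if the two bands share any spectrum."""
    return a[0] < b[1] and b[0] < a[1]


print(contains(STA_RANGE, WIFI_2G4))       # True: 2.4 GHz Wi-Fi falls inside the STA range
print(overlaps(STA_RANGE, UNLICENSED_5G))  # False: the STA range never reaches 5 GHz
```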

References:

https://apps.fcc.gov/oetcf/els/reports/GetApplicationInfo.cfm?id_file_num=0769-EX-ST-2021

https://apps.fcc.gov/oetcf/els/reports/STA_Print.cfm?mode=current&application_seq=107558

Facebook to test 5G small cell network with SON features; Combine 5G access with Terragraph wireless backhaul?

The FCC today approved Facebook’s application to test a 5G small cell network across a wide range of mid-band spectrum bands (see below) at its Menlo Park, California headquarters.

Facebook told the FCC in its application:

The experiment involves short-term testing of a 5G over-the-air setup for an outdoor demonstration that is not likely to last more than six months, making an STA (Special Temporary Authority) more appropriate than a conventional experimental license.

The purpose of operation is to demonstrate the self-organizing network (“SON”) features in a 5G over-the-air setup operating in a small cell configuration. Lab testing does not allow feature realization. The outdoor test setup aims at validating the improvements done to 5G cellular networks.

The improvements involve:

(1) Load balancing between the cells in an attempt to optimize the resource utilization, reduce call drops, and create a better user experience by means of improved quality of service; and

(2) Run time selection and updates of the 5G cell physical layer cell identifiers (“PCIs”) to avoid conflict between neighboring cells, thereby avoiding UE drops and reducing network signaling traffic.
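The second improvement, run-time PCI selection, can be illustrated with a small sketch. The filing does not describe Facebook's algorithm, so the greedy approach, the `assign_pcis` and `is_conflict_free` names and the toy four-cell topology below are all assumptions made for illustration. The sketch avoids two well-known problems: PCI collision (two neighboring cells share a PCI) and PCI confusion (one cell has two neighbors with the same PCI).

```python
from typing import Dict, List, Set


def assign_pcis(neighbors: Dict[str, Set[str]], pci_pool: List[int]) -> Dict[str, int]:
    """Greedily assign a PCI to every cell (hypothetical helper, not Facebook's method).

    `neighbors` must be symmetric: if B is in neighbors[A], A is in neighbors[B].
    """
    assignment: Dict[str, int] = {}
    # Handle the most-connected cells first; ties are broken by name for determinism.
    for cell in sorted(neighbors, key=lambda c: (-len(neighbors[c]), c)):
        blocked: Set[int] = set()
        for n in neighbors[cell]:
            if n in assignment:
                blocked.add(assignment[n])  # reusing it would cause a collision
            for second in neighbors[n]:
                if second != cell and second in assignment:
                    blocked.add(assignment[second])  # reusing it would confuse cell n
        free = [p for p in pci_pool if p not in blocked]
        if not free:
            raise ValueError(f"PCI pool too small for cell {cell}")
        assignment[cell] = free[0]
    return assignment


def is_conflict_free(neighbors: Dict[str, Set[str]], assignment: Dict[str, int]) -> bool:
    """Check that no cell collides with a neighbor or sees duplicate neighbor PCIs."""
    for cell, nbrs in neighbors.items():
        pcis = [assignment[n] for n in nbrs]
        if assignment[cell] in pcis:      # collision
            return False
        if len(pcis) != len(set(pcis)):   # confusion
            return False
    return True


# Toy topology: four small cells in a row, A - B - C - D.
topology = {"A": {"B"}, "B": {"A", "C"}, "C": {"B", "D"}, "D": {"C"}}
pcis = assign_pcis(topology, pci_pool=list(range(1008)))
print(pcis, is_conflict_free(topology, pcis))
```

5G NR defines 1,008 PCIs (0 to 1007), which is why the pool above has that size. A production SON function would presumably also use radio measurements and re-run the assignment as neighbor relations change, which this sketch does not attempt.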

The frequency bands to be used are: 2.496-2.690 GHz, 3.3-3.6 GHz, 3.7-3.8 GHz, and 4.8-4.9 GHz. A directional antenna will be used to beam the 5G signals.

Facebook did not name the network equipment suppliers for this test, nor did it state why it needed to perform these tests. The only hint given was the stated aim to test “self-organizing network (“SON”) features in a 5G over-the-air setup operating in a small cell configuration.”

One could speculate that Facebook might want to deploy a private 5G network across its sprawling Menlo Park campus. Or it might want to provide 5G access to municipalities using mid-band spectrum.

The company does have some recent experience designing and deploying millimeter wave wireless distribution networks (based on Terragraph) which could be combined with a 5G access network.

- Facebook’s Terragraph wireless backhaul technology is being used by Cambium Networks in their 60 GHz cnWave solution. Terragraph is a high-bandwidth, low-cost wireless solution to connect cities. Rapidly deployed on street poles or rooftops to create a mmWave wireless distribution network, Terragraph is capable of delivering fiber-like connectivity at a lower cost than fiber, making it ideally suited for applications such as fixed wireless access and Wi-Fi backhaul.

- In June 2018, Magyar Telekom, a subsidiary of Deutsche Telekom, deployed its first Terragraph network in Mikebuda, Hungary. Terragraph improved local network speeds from 5 Mbps to 650 Mbps.

- Common Networks, a California-based Internet Service Provider, deployed a Terragraph network to serve customers in Alameda, CA. Local businesses and customers of Common Networks saw an immediate improvement in internet speeds. Common Networks presented their approach at a 2018 IEEE ComSoc SCV technical meeting in Santa Clara, CA.

References:

https://apps.fcc.gov/oetcf/els/reports/STA_Print.cfm?mode=current&application_seq=106515

https://apps.fcc.gov/oetcf/els/reports/GetApplicationInfo.cfm?id_file_num=0482-EX-ST-2021

https://connectivity.fb.com/terragraph/

Facebook’s Terragraph gains momentum with operator, vendor buy-in