The enterprise network stack is collapsing; AI’s impact; comparison with “Batch Pipelines Break AI Agents”

by Shashi Kiran with Alan J Weissberger, ScD

Abstract:

This article presents the primary author’s point of view on networking technology and market evolution, as he has experienced it directly with customers at Nile, where he serves as Chief Marketing Officer (CMO). A key theme is the impact of AI on a new network stack and its implications for network and network security architecture. We focus specifically on the complexity and heterogeneity of the LAN, while drawing inferences for other areas of the broader enterprise network.

The article draws no information from other publications or references, except for the security breach data points derived from IDC, Gartner, and market surveys. Hence, the References listed at the end of the piece are from related IEEE Techblog posts and Nile press releases chosen by this website’s content manager.

Definitions:

The enterprise network stack is much more than a protocol stack. It is the layered architecture of physical infrastructure, forwarding devices, control protocols, management systems, and security enforcement functions that interconnect users, endpoints, workloads, and cloud services across campus, branch, WAN, data center, and cloud domains. It typically includes access, distribution, core, and edge segments, along with overlay, orchestration, telemetry, identity, and policy planes that govern how traffic is admitted, routed, segmented, monitored, and secured.

A useful way to think about the stack is in terms of planes:

- Data plane: forwards packets, enforces QoS, and applies access-control functions close to the traffic path.
- Control plane: discovers topology and capabilities, computes paths, and reacts to failures.
- Management plane: handles configuration, monitoring, troubleshooting, reporting, and performance management.
- Security stack: includes firewalls, IDS/IPS, secure web gateways, threat intelligence, and related inspection or enforcement tools.

At the device level, the stack typically includes physical media and network hardware such as cabling, Wi-Fi, NICs, switches, routers, gateways, servers, and dedicated security appliances. At higher layers, it includes protocols and services for addressing, routing, transport, application connectivity, identity, and policy enforcement, often mapped loosely to OSI/TCP-IP concepts rather than a strict textbook stack.

In an enterprise environment, the network stack extends across LAN, WAN, data center, cloud, and security domains, so “the stack” is less a single product and more an integrated system of infrastructure, software, telemetry, and policy. That is why discussions of enterprise architecture usually separate forwarding, orchestration, assurance, and security functions even when they are delivered in a unified platform.

Structural Limits of the Enterprise Network Stack:

The enterprise network stack is approaching a structural inflection point and may already be at a “breaking point.” The failure is structural and architectural; it is not a matter of incremental shortfalls. The enterprise network stack was architected for a world that no longer exists, and most of the pain organizations feel today is the cost of pretending otherwise. The interesting question isn’t whether it breaks but rather when, and along which seams. Here’s why:

The network stack most enterprises still run was designed around five assumptions that were partly true in 2010 but mostly false in 2026. Users sit at desks on managed devices. Applications live in a corporate data center. Traffic flows north-south through a perimeter. Identity equals a user with a session. Trust derives from network location. Every one of those is gone. Users are hybrid, apps are SaaS and multi-cloud, traffic is increasingly east-west and machine-driven, identity now includes non-human agents acting with delegated authority, and zero trust has formally retired the idea that being inside the network means anything.

So, the enterprise stack isn’t failing because any single piece is bad. Rather, it’s failing because the architecture it was based on no longer matches the workload, the threat model, or the operational reality it’s asked to serve. AI is the forcing function, but the cracks were already there. The choice in front of most enterprises isn’t whether to rebuild but whether to do it deliberately or by accident. Will reinvention and self-disruption be intentional or forced?

Today, many enterprise environments represent layered extensions of legacy architectures rather than cohesive designs. AI acts as an accelerant, exposing pre-existing architectural limitations. The resulting fragmentation increases operational complexity, reduces agility, and amplifies security risk.

Complexity is a Primary Risk Vector:

Complexity has evolved from an operational burden into a primary source of systemic risk. Modern network environments often exceed the capacity for deterministic human understanding, creating conditions where failures and vulnerabilities emerge at the intersections between systems rather than within individual components.

Empirical evidence suggests that many successful breaches exploit misconfigurations and integration gaps rather than novel vulnerabilities. In this context, complexity itself becomes the effective attack surface.

This challenge is particularly acute in the LAN, which often retains legacy architectural elements, heterogeneous device ecosystems, and fragmented management models. Combined with constrained IT resources, this environment can become a disproportionate source of exposure.

Reducing complexity—through architectural simplification, integrated control planes, and automation—is therefore not merely an operational objective but a core security strategy. In AI-driven environments, simplicity directly contributes to resilience and risk reduction.

An Architectural Reset is Needed:

An architectural reset is increasingly necessary. While incremental upgrades remain feasible, their marginal returns are diminishing relative to the growing mismatch between legacy designs and emerging requirements. Many organizations continue to extend existing architectures due to cost constraints or perceived transition risks. However, this approach often compounds technical debt and increases long-term exposure. The more fundamental question is not whether incremental evolution is possible, but whether it represents effective capital allocation in the context of AI-driven workloads and threat models.

Forward-looking architectures are converging around several principles: AI-native workload support, identity-centric security, zero-trust enforcement, and tightly integrated operational models. Organizations that proactively redefine their network architectures around these principles are more likely to achieve sustainable performance, security, and operational efficiency gains.

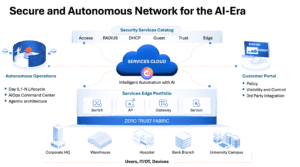

The illustrations show two conceptual architectural constructs for a unified, secure fabric with AI orchestration, autonomous operation, and service delivery, which replaces the fragmented network stack and operations of the legacy network. The first illustration is more functional; the second is a more theoretical stack.

Security and the Network Fabric:

Security is neither fully “moving into” nor “remaining outside of” the network fabric; rather, it is being restructured across distinct functional planes, including identity, policy, enforcement, and detection.

Historically, network-centric security relied on in-path inspection mechanisms (e.g., firewalls, intrusion prevention systems, and proxies). This model proved difficult to scale due to encryption, cloud decentralization, and traffic patterns that bypass centralized inspection points.

In contemporary architectures, the network fabric is evolving into a high-performance enforcement plane. Policy definition and decision-making are increasingly centralized in identity and control-plane systems, while enforcement is distributed across the network and applied at line rate to identity-associated flows.

This separation of concerns improves scalability and composability. Identity-centric policy models define “who can do what,” while the network enforces those decisions efficiently and locally. The result is a more adaptable and performant security architecture.
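The split between central policy decisions and local enforcement can be illustrated with a minimal Python sketch. It is not drawn from any product: the class names (`PolicyDecisionPoint`, `EnforcementPoint`), the role and destination labels, and the port numbers are all hypothetical, and a real fabric would also need cache invalidation when policy changes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    role: str         # identity attribute (hypothetical label)
    destination: str  # logical destination group (hypothetical label)
    port: int


class PolicyDecisionPoint:
    """Central, identity-aware policy store: defines who can do what."""

    def __init__(self, rules):
        self._allowed = set(rules)

    def decide(self, role, destination, port):
        # Default deny: only explicitly listed combinations are allowed.
        return Rule(role, destination, port) in self._allowed


class EnforcementPoint:
    """Fabric-side enforcement: asks the decision point once per flow
    type, then applies the cached verdict locally."""

    def __init__(self, pdp):
        self._pdp = pdp
        self._cache = {}

    def permit(self, role, destination, port):
        key = (role, destination, port)
        if key not in self._cache:
            self._cache[key] = self._pdp.decide(*key)
        return self._cache[key]


pdp = PolicyDecisionPoint([Rule("hvac-sensor", "building-mgmt", 8883)])
switch = EnforcementPoint(pdp)
print(switch.permit("hvac-sensor", "building-mgmt", 8883))  # True
print(switch.permit("hvac-sensor", "finance-db", 5432))     # False
```

The enforcement point holds no policy logic of its own; it only caches and applies verdicts, which is the property that lets decisions stay centralized while enforcement scales out.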

However, the effectiveness of this approach depends on architectural discipline. Designs that treat the fabric as one component within a broader, identity-driven security framework tend to reduce complexity. Conversely, attempts to re-centralize security entirely within the network risk recreating earlier limitations in a more complex form.

AI’s Impact on Telecommunications Networks:

Artificial intelligence (AI) is influencing telecom network architectures along two orthogonal dimensions:

1. AI introduces a new class of workloads that impose stringent and atypical requirements on network infrastructure.

AI workloads fundamentally challenge legacy network design assumptions. Traditional enterprise networks were optimized for north–south traffic patterns, human-driven interactions, and best-effort delivery models. In contrast, AI workloads generate predominantly east–west traffic, operate at machine timescales, and exhibit low tolerance for latency, jitter, and packet loss. Simultaneously, AI-enabled control and management planes enable higher degrees of automation and operational efficiency, particularly in campus and branch environments where autonomous operations are beginning to reduce manual intervention.

2. AI is increasingly being embedded within the network itself, enhancing operations, optimization, fault diagnosis and recovery, and security functions. The interaction between these two roles is driving many of the architectural shifts observed today. Wide-area networks (WANs) must now interconnect AI-intensive data center environments with distributed enterprise domains, effectively bridging heterogeneous traffic models and service requirements.
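To make the low tolerance for latency, jitter, and packet loss concrete, the following Python sketch summarizes a series of probe measurements and compares them against a budget. It rests on stated assumptions: jitter is computed here simply as the mean absolute difference between consecutive received samples (one of several common definitions), and the function names and threshold values are hypothetical rather than taken from any standard or product.

```python
from statistics import mean


def summarize_probes(latencies_ms):
    """Summarize probe results; None marks a lost probe."""
    if not latencies_ms:
        raise ValueError("at least one probe is required")
    received = [x for x in latencies_ms if x is not None]
    loss_pct = 100.0 * (len(latencies_ms) - len(received)) / len(latencies_ms)
    # Jitter (assumed definition): mean absolute change between
    # consecutive received samples.
    deltas = [abs(b - a) for a, b in zip(received, received[1:])]
    return {
        "avg_latency_ms": mean(received) if received else None,
        "jitter_ms": mean(deltas) if deltas else 0.0,
        "loss_pct": loss_pct,
    }


def within_budget(summary, max_latency_ms, max_jitter_ms, max_loss_pct):
    """Return True only if every metric is inside its (hypothetical) budget."""
    if summary["avg_latency_ms"] is None:
        return False  # nothing was received at all
    return (
        summary["avg_latency_ms"] <= max_latency_ms
        and summary["jitter_ms"] <= max_jitter_ms
        and summary["loss_pct"] <= max_loss_pct
    )


samples = [10.0, 12.0, None, 11.0, 15.0]
summary = summarize_probes(samples)
print(summary)
# A best-effort budget passes; a tighter, machine-timescale budget does not.
print(within_budget(summary, max_latency_ms=50, max_jitter_ms=10, max_loss_pct=25))
print(within_budget(summary, max_latency_ms=15, max_jitter_ms=1, max_loss_pct=0))
```

The same measurements can satisfy a best-effort budget and fail a tighter one, which is the practical meaning of a workload with low tolerance for these impairments.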

AI-Driven Changes in Traffic and Risk:

AI is reshaping both the structure of network traffic and its associated risk profile. From a traffic perspective, flows are becoming increasingly east–west, bursty, and machine-generated, with reduced visibility due to encryption and abstraction layers. From a security standpoint, AI introduces new classes of actors (e.g., non-human identities and autonomous agents), as well as new attack vectors, including adversarial AI and data exfiltration via model interactions.

These shifts are tightly coupled. The same properties that define AI-driven traffic—distribution, dynamism, and opacity—also complicate detection and enforcement. As a result, security architectures are evolving toward:

- Identity-centric models that extend zero-trust principles to non-human entities.
- Data loss prevention mechanisms adapted to AI-generated and AI-consumed data flows.
- Fine-grained segmentation within network fabrics, subject to latency constraints.
- Increased reliance on AI-driven detection and response systems to counter AI-enabled threats.

Importantly, these dynamics vary across network domains (LAN, WAN, and data center/cloud), requiring domain-specific adaptations while maintaining consistent policy frameworks.
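One way to picture zero-trust principles applied to a non-human identity is a time-bound delegation whose effective permissions never exceed those of the delegating principal. The Python sketch below is illustrative only: the `Delegation` record, the scope strings, and the `authorize` function are hypothetical and do not describe any specific identity system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Delegation:
    agent_id: str         # non-human identity (hypothetical)
    on_behalf_of: str     # human or service principal that delegated
    scopes: frozenset     # what the agent was granted
    expires_at: datetime  # delegations are time-bound


def authorize(delegation, principal_scopes, requested_scope, now=None):
    """Allow a request only if the delegation is still valid and the scope
    is held by BOTH the agent's grant and the delegating principal."""
    now = now or datetime.now(timezone.utc)
    if now >= delegation.expires_at:
        return False  # expired delegations are never honored
    effective = delegation.scopes & principal_scopes
    return requested_scope in effective


issued = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
grant = Delegation(
    agent_id="agent-42",
    on_behalf_of="alice",
    scopes=frozenset({"tickets:read", "switch:config"}),
    expires_at=issued + timedelta(minutes=15),
)
alice_scopes = frozenset({"tickets:read", "tickets:write"})

five_min = issued + timedelta(minutes=5)
print(authorize(grant, alice_scopes, "tickets:read", now=five_min))   # True
print(authorize(grant, alice_scopes, "switch:config", now=five_min))  # False
print(authorize(grant, alice_scopes, "tickets:read",
                now=issued + timedelta(minutes=20)))                  # False
```

Intersecting the agent's grant with the principal's own scopes keeps an agent from acquiring authority its delegator never had, and the expiry check means trust is re-established rather than assumed.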

Alignment with “Why Batch Pipelines Break AI Agents: The Case For Streaming-First Network Operations”:

The key points made in this article are highly consistent with the above-referenced IEEE Techblog post written by Shazia Hasnie, Ph.D. Both articles treat AI as an architectural forcing function: Shazia’s article focuses on the data/telemetry layer, while this post extends the same logic to the broader enterprise network stack. The core claim in both pieces is that legacy architectures were built for human-operated, latency-tolerant workflows, not autonomous AI systems. In Shazia’s article, batch pipelines fail because they deliver stale, incomplete, and inconsistent context to AI agents. Here, the same mismatch appears at the network level, where legacy enterprise designs were optimized for north–south traffic, perimeter trust, and static operational assumptions. Both arguments are fundamentally about architectural mismatch rather than isolated product shortcomings.

A particularly strong point of overlap is the emphasis on real-time context. Shazia’s article argues that AI agents require continuous data freshness and an ordered event stream to function safely, while this piece frames AI networking as a shift toward machine-timescale traffic, streaming telemetry, and identity-aware enforcement. In both cases, the network is no longer just a transport layer; it becomes part of the control loop that determines whether AI decisions are accurate and timely.
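The requirement for fresh, ordered context can be sketched as a small guard that classifies each telemetry event before an agent is allowed to act on it. This is a simplified illustration under stated assumptions: the `Event` fields, the per-source sequence numbers, and the `ContextGuard` class are hypothetical and are not taken from either article.

```python
from dataclasses import dataclass


@dataclass
class Event:
    source: str  # emitting device (hypothetical identifier)
    seq: int     # per-source sequence number, assumed to increase by 1
    ts: float    # event time in epoch seconds


class ContextGuard:
    """Classifies events as ok, stale, out_of_order, or gap."""

    def __init__(self, max_age_s):
        self.max_age_s = max_age_s
        self._last_seq = {}

    def check(self, event, now):
        if now - event.ts > self.max_age_s:
            return "stale"         # too old to act on safely
        last = self._last_seq.get(event.source)
        if last is not None and event.seq <= last:
            return "out_of_order"  # duplicate or late arrival
        missed = last is not None and event.seq > last + 1
        self._last_seq[event.source] = event.seq
        return "gap" if missed else "ok"


guard = ContextGuard(max_age_s=5.0)
print(guard.check(Event("sw1", 1, 100.0), now=101.0))  # ok
print(guard.check(Event("sw1", 3, 101.0), now=102.0))  # gap
print(guard.check(Event("sw1", 2, 101.5), now=102.0))  # out_of_order
print(guard.check(Event("sw1", 4, 90.0), now=102.0))   # stale
```

An agent that acts only on `ok` events, and pauses or re-synchronizes on anything else, treats freshness and ordering as preconditions for a decision, not as something to verify afterward.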

The failure models are also similar. Shazia identifies five failure modes of batch-to-agent mismatch: stale data, memory gaps, delete blindness, schema fragility, and coordination failure. While not using that taxonomy explicitly, we share the same underlying diagnosis by arguing that complexity, fragmentation, and legacy operational models are now the primary sources of risk. Our discussion of east–west traffic, non-human identities, zero trust, and observability mirrors Shazia’s broader point that autonomous systems fail when their surrounding infrastructure cannot preserve state, sequence, and policy consistency.

These two articles work well together because they address different layers of the same transition. Shazia’s article is mainly about the data plane of AI operations: how telemetry, event streams, and agent inputs must move from batch to streaming to avoid operational failure. This article is about the network and security architecture around that data plane: how the enterprise stack, LAN, WAN, and fabric must evolve to support AI-native workloads and enforcement. Hence, the reader can consider the two articles companion pieces.

…………………………………………………………………………………………………………………………………………………………………………………………

About the Author:

Shashi Kiran has nearly 30 years of experience in network, security and cloud technologies, primarily as an operator and executive in public and private B2B companies, where he has held global product management and marketing positions. He’s adopting a protopian view of AI, while being both fascinated and frightened by it at the same time.

Shashi is currently the CMO at Nile, whose network architecture aligns with what AI-era networks require: identity-centric control, embedded security, and autonomous operations. He previously held executive roles at Cisco, Check Point Software, and Broadcom, as well as at venture-backed startups, and is based in San Jose, CA. He can be reached at http://www.linkedin.com/in/

…………………………………………………………………………………………………………………………………………………………………………………………

References:

Why Batch Pipelines Break AI Agents: The Case For Streaming-First Network Operations

Nile launches a Generative AI engine (NXI) to proactively detect and resolve enterprise network issues

Fiber Optic Networks & Subsea Cable Systems as the foundation for AI and Cloud services

Dell’Oro: Bright Future for Campus Network As A Service (NaaS) and Public Cloud Managed LAN

Cisco Plus: Network as a Service includes computing and storage too

https://nilesecure.com/press-releases/networking-and-security-in-higher-ed

https://nilesecure.com/press-releases/nile-powers-black-hat-mea-2025-with-zero-reported-incidents

Thank you to Shashi Kiran and Alan J Weissberger, ScD for this rigorous and timely analysis. I’m honored to have my article referenced as a companion piece.

The authors make a critical point that deserves to be underlined: AI is not a feature upgrade. It’s an architectural stress test—and most legacy stacks are failing it. My article examined what happens when the data pipeline feeding AI agents is still architected for human consumers—stale data, memory gaps, coordination failures. This piece extends the same logic to the broader enterprise network stack, and the diagnosis is consistent. The networks most enterprises are running today were designed for a world of managed devices, perimeter trust, and north-south traffic. That world is gone. What’s replacing it—east-west machine traffic, non-human identities, zero-trust enforcement, streaming telemetry—requires a fundamentally different architectural foundation.

The two articles work together because they address different layers of the same transition: the data plane of AI operations, and the network and security architecture around it. Neither layer can be retrofitted. Both must be rebuilt deliberately.

I hope this conversation continues. The enterprise stack is not going to fix itself, and AI will not wait.