Month: May 2026

Goldman Sachs report: Optical Networking is the next mega trend in AI infrastructure

Goldman Sachs analysts forecast a $154 billion opportunity in optical networking driven by skyrocketing capacity demands from hyperscale cloud and AI workloads. Carriers and vendors are integrating 10GbE edge networking and AI-RAN (Artificial Intelligence Radio Access Network) trials on live 5G networks.

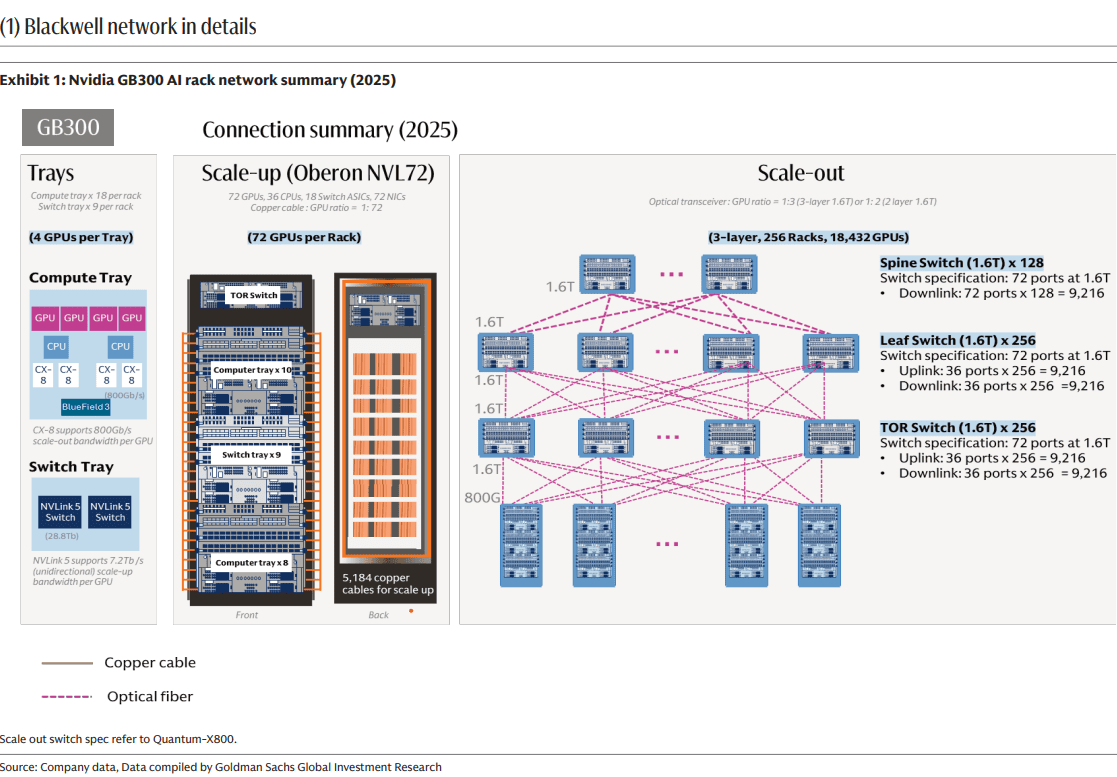

Goldman argues that AI infrastructure is creating a networking bottleneck phase, where optical interconnects become essential to connect more chips, keep latency low, and let AI clusters scale efficiently. The forecast 9x increase in the total optical networking market to $154 billion is driven by growth in both scale-up and scale-out AI data center architectures.

AI compute gains are no longer just about faster GPU and HBM chips; they depend on moving data fast enough between chips, racks, and super-nodes. Goldman Sachs emphasizes that networking now “unlocks computing capability” by enabling seamless exchange across multiple AI chips, which is exactly where copper-based links start to fall short. That makes fiber-optic connectivity, pluggable optics, and co-packaged optics central to the next phase of AI build-out. The report splits opportunity across scale-up and scale-out networking, plus component categories such as copper cables, pluggable optical modules, CPO, and PCB midplanes. External coverage of the report says Goldman Sachs sees scale-up as the larger pool, about $106 billion or 69% of the $154 billion TAM, while CPO could represent about $91 billion or 59% of the total, assuming 29% penetration in scale-out networking. In practical terms, the report is signaling that the highest-value optical opportunity sits inside tightly coupled AI systems, not just in long-haul or metro transport.

………………………………………………………………………………………………………………………………………………………………………………………….

Goldman projects the following:

- Dollar content per computing unit increases by 16x in scale out and 45x in scale up from GB300 NVL72 (where a computing unit is one rack of 72 GPUs) to Rubin Ultra NVL576 (where a computing unit is eight racks of 72 GPUs each, 576 GPUs in total), with opportunities across pluggable optical modules, optical engines in CPO, copper cables, and PCB midplanes.

- A 13x larger addressable market for optical modules / optical engines expanding from scale out (e.g. GB300 NVL72) to scale up (e.g. Nvidia Rubin Ultra [1.] NVL576 level 2 scale up via CPO) per computing unit.

- A 10x larger value market for pluggable optical modules in scale out per computing unit from GB300 NVL72 to Rubin Ultra NVL576, even with a 29% CPO penetration rate. The number of pluggable optical modules (1.6T equivalent) per computing unit would increase from 216 units in GB300 NVL72 to 2.5k units in Rubin Ultra NVL576.

Note 1. Nvidia Rubin Ultra is a flagship, next-generation AI and high-performance computing (HPC) processor succeeding the standard Rubin architecture. Scheduled to debut in late 2027, it utilizes massive multi-die chiplet designs and unprecedented memory configurations to power the next wave of generative and agentic AI.

………………………………………………………………………………………………………………………………………………………………………….

Market Forecasts:

The investment bank expects the aggregate dollar content per computing unit across scale up and scale out to increase by 29x, from US$315k in GB300 NVL72 to US$9.4mn in Rubin Ultra NVL576. Assuming full-product-cycle volumes of 48k racks for GB300 NVL72 and 16.5k computing units for Rubin Ultra NVL576, the aggregate value TAM across scale up and scale out would increase by 9x, from US$15bn for GB300 NVL72 (mainly in 2026) to US$154bn for Rubin Ultra NVL576 (mainly in 2028).

Among the US$154bn value TAM, 69% goes to scale up, or US$106bn, and CPO contributes US$91bn, or 59% of the US$154bn value TAM, assuming CPO at 29% penetration rate in scale out.
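The forecast figures above can be cross-checked with simple arithmetic. The sketch below is only a sanity check on the quoted numbers, not Goldman's model: the variable names are illustrative, and the per-unit Rubin Ultra content is derived from the TAM and unit count rather than taken as an input.

```python
# Inputs quoted in the text above; variable names are illustrative only.
GB300_CONTENT_PER_RACK = 315e3   # US$ per GB300 NVL72 rack
GB300_RACKS = 48e3               # racks over the full product cycle
RUBIN_UNITS = 16.5e3             # Rubin Ultra NVL576 computing units
RUBIN_TAM = 154e9                # US$ aggregate TAM for Rubin Ultra NVL576
SCALE_UP_TAM = 106e9             # US$ attributed to scale up
CPO_TAM = 91e9                   # US$ attributed to CPO

# TAM is dollar content per computing unit multiplied by unit volume.
gb300_tam = GB300_CONTENT_PER_RACK * GB300_RACKS
# Working backwards: the per-unit content implied by the Rubin Ultra TAM.
rubin_content_per_unit = RUBIN_TAM / RUBIN_UNITS
scale_up_share = SCALE_UP_TAM / RUBIN_TAM
cpo_share = CPO_TAM / RUBIN_TAM

print(f"GB300 NVL72 TAM:             US${gb300_tam / 1e9:.1f}bn")
print(f"Implied Rubin Ultra content: US${rubin_content_per_unit / 1e6:.1f}mn per unit")
print(f"TAM multiple:                {RUBIN_TAM / gb300_tam:.1f}x")
print(f"Scale-up share:              {scale_up_share:.0%}")
print(f"CPO share:                   {cpo_share:.0%}")
```

On these inputs the GB300 NVL72 TAM comes out at roughly US$15bn and the implied Rubin Ultra content per computing unit at roughly US$9.3mn. The end-to-end TAM multiple is about 10x, which is an increase of roughly 9x over the starting value.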

For network architects, the important takeaway is that AI clusters are becoming optics-heavy at more layers of the network stack, not just at the edge of the rack. The likely winners are suppliers that can reduce power, improve density, and simplify packaging for very high-bandwidth links, especially around CPO and advanced pluggables. This is less a story about traditional telecom optics and more about datacenter interconnects optimized for GPU fabrics and AI training/inference throughput.

The most consistently cited “top beneficiaries” are Coherent, Lumentum, and Fabrinet. These companies sit close to the core optical component modules and manufacturing layers that scale with higher AI interconnect demand. That makes them the most straightforward proxies for the forecasted optics expansion. The report’s thesis favors companies with strong exposure to high-end optical transport, coherent optics, and data-center interconnect rather than the broader optical networking/PON equipment companies like Ciena, Nokia/Infinera, Cisco/Acacia, ADVA, or Calix.

Conclusions:

Strategically, Goldman Sachs maintains that optical networking is no longer a niche enabling layer; it is becoming a core enabler of AI capex scaling. That shifts investor attention toward optical component vendors, silicon photonics, transceiver suppliers, and adjacent packaging ecosystems. The report’s core message is simple: as AI clusters grow, the network fabric becomes a first-order constraint, and optics are the most likely answer.

Inside Nokia’s new AI Networking Innovation Lab

- Silicon & Compute: Collaborating with AMD to optimize enterprise AI workloads alongside Nokia data center switches.

- Testing & Infrastructure: Partnering with Keysight Technologies to emulate workloads across Ultra Ethernet Consortium (UEC) and RoCEv2 transports.

- Hardware & Servers: Integrating high-performance platforms from Lenovo and Supermicro.

- Data Storage & Cloud: Working with Weka and cloud builders like Nscale to eliminate storage bottlenecks during heavy computational training.

Nokia’s AI Networking Innovation Lab is built upon three fundamental pillars: Technology Innovation, Ecosystem Collaboration, and Validation. Image credit: Nokia

………………………………………………………………………………………………………………….

Technology Innovation: The lab provides a dedicated space for AI partners to experiment with next-gen solutions across the entire networking stack – driving emerging standards forward with pioneering approaches to new protocols, switching silicon, congestion control, real-time telemetry, and automation.

“Partnering with Nokia in the AI Networking Innovation Lab has enabled us to benchmark and optimize AI networks under real-world conditions…Together, we are helping accelerate AI network adoption by giving operators and hyperscalers the validated insights needed for confident, large-scale deployment.”

Ecosystem Collaboration: True progress depends on a strong ecosystem of technology providers – silicon manufacturers, GPU developers, system, storage and test vendors, and cloud platforms – that work together to create highly-compatible AI-ready solutions. This facilitates joint testing for interoperability, improves integration, and ensures roadmaps are aligned across different hardware, software, and orchestration layers.

Travis Karr, Corporate Vice President, HPC and Sovereign AI at AMD believes customer collaboration and an open ecosystem are fundamental to accelerating AI innovation:

“By co-developing solutions with partners, such as Nokia in their AI networking innovation lab, we ensure our AMD enterprise AI solutions are tested with Nokia data center switches on real-world workloads and network demands. An open, standards-driven approach empowers customers to integrate seamlessly across heterogeneous environments, avoiding lock-in and fostering industry-wide advancement in AI.”

Validation: This positions the lab as the testing ground for Nokia Validated Designs, where customers and partners rigorously validate multi-vendor data center architectures under authentic AI training and inference workloads. By testing failure scenarios, congestion behavior, and operational automation, the lab turns NVDs into proven, deployable solutions — enabling predictable performance, faster deployment, and reduced operational complexity and risk for organizations navigating the AI era.

Arno van Huyssteen, Vice President of Global Telecommunications for Nscale:

“Nokia is a strategic networking partner for Nscale as we build towards AI Grid, and the engineering rigour behind their Validated Designs reflects the kind of innovation needed to enable next-generation AI infrastructure. The depth of hardware, software and failure testing behind those blueprints is what will give operators the confidence to deploy complex AI environments faster, with fewer integration risks and less operational disruption. We’re excited to collaborate in the AI Networking Innovation Lab to help push the boundaries of AI-native networking and validate the next generation of solutions before they reach production.”

A primary focal point inside the lab is managing data center congestion. Unlike traditional cloud traffic, back-end AI networks feature high-density data synchronization across massive GPU clusters. The lab uses advanced automation, AIOps, and lossless Ethernet solutions—such as the Nokia 7220 IXR-H6 switches—to handle these intense uplink and synchronization demands safely.

The AI Networking Innovation Lab supports Nokia’s broader strategy to accelerate the next era of AI-driven connectivity. As demand for AI infrastructure continues to grow, data center networking has become one of the most critical foundations of the global AI ecosystem. Through this investment, Nokia is strengthening its capabilities in AI and cloud infrastructure while advancing its vision of AI-native networking.

Rudy Hoebeke, Vice President of Software Product Management at Nokia:

“The launch of Nokia’s AI Networking Innovation Lab marks a major milestone in our commitment to drive the next era of AI-native connectivity. As the industry continues to evolve with solutions like scale-across and AI-Grid, this lab is poised to accelerate AI networking technology that will not only support but optimize these emerging industry offerings. This center gives our customers and partners early access to new technologies, deeper collaboration with the world’s leading AI ecosystem players, and the confidence that their networks are validated under more realistic AI conditions. By accelerating innovation and reducing deployment risks, we’re enabling the industry to deliver faster, more reliable, and more sustainable AI experiences to people and businesses everywhere.”

………………………………………………………………………………………………………………………

2026 Fiber Connect Keynote: “The Future of Fiber Optics: AI and the Quantum”

Dr. Michio Kaku’s 2026 Fiber Connect keynote, “The Future of Fiber Optics: AI and the Quantum,” kicked off the inaugural AI & Emerging Technology Infrastructure Summit on Wednesday, May 20, 2026.

As a theoretical physicist and futurist, Dr. Kaku delivered a high-altitude roadmap framing fiber optic networks not merely as faster telecom pipes, but as the mandatory foundation for a world defined by concurrent, multi-cloud AI infrastructure and quantum mechanics.

Kaku described the convergence of AI, quantum computing, and fiber infrastructure as a critical shift toward an AI-native, quantum-enabled internet essential for national competitiveness. Kaku emphasized that fiber optics are necessary to facilitate “quantum AI” by handling high-density, low-latency data movement, moving beyond traditional networking to support exponential computing advancements.

Key Takeaways:

- Fiber as the Foundation for AI: Dr. Kaku explained that massive data sets and hyperscale AI computations cannot run efficiently over wireless or legacy networks. Fiber’s near-limitless bandwidth and sub-millisecond latency are required to process these workloads in real-time.

- The Quantum Computing Leap: He detailed how quantum networks—which compute at the atomic level—will redefine security and processing power. He emphasized that quantum data requires the stability, security, and bandwidth that only fiber optics can provide.

- National Competitiveness: Dr. Kaku framed fiber broadband as a strategic national asset. He argued that a region’s ability to evolve into an AI-native economy depends directly on robust fiber infrastructure to secure future healthcare, financial, and climate innovations.

- The “Thinking Economy”: He projected that networks are evolving to do more than just transport data. They will increasingly support “thinking economies” where intelligence moves instantly between edge computing centers, end-points, and the cloud.

The presentation and subsequent fireside chat with quantum computing firm IonQ offered several critical technological dimensions and actionable industry analysis:

The Physics of the “AI Triad” (Compute, Quantum, & Photonics):

Kaku mapped out how classical silicon-based computing is approaching its physical limits (thermodynamics and transistor gating). He explained that the future relies on a three-pronged convergence:

- AI Models: The brain processing the logic.

- Quantum Computing: The hyper-accelerator solving atomic, chemical, and multi-variable optimization issues.

- Optical Fiber: The unified nervous system. Quantum and distributed AI workloads cannot scale on traditional copper networks because they require absolute determinism, zero-jitter latency, and near-limitless bandwidth.

Upgrading to a Quantum-Ready Internet:

Drawing from themes in his book Quantum Supremacy, Kaku noted that the move toward a quantum-enabled web alters the physical network topology. Operators must plan for physical security layers (like Quantum Key Distribution) and data transmission methods that preserve quantum entanglement across distances.

–>Fiber is the only medium capable of transporting light photons over vast geographies without disrupting these states.

The Power and Cooling Crisis:

A significant focus of the analysis was the staggering energy footprint of next-generation AI factories and hyper-scale data centers. Kaku noted that moving data electronically generates heat through electrical resistance. Shifting toward all-optical (photonic) networks and in-rack fiber interconnects removes electronic bottlenecks, drastically reducing the power required to pass massive datasets between distributed data centers.

Strategic Implications for Network Operators:

During the fireside chat, the discussion moved from theoretical physics to immediate business strategy and tactics:

- National Competitiveness: Bandwidth, latency, and optical infrastructure are the new benchmarks for a country’s economic power.

- Capacity Planning: Network planners must shift from estimating consumer download speeds to calculating the throughput required for real-time, stateful AI agents and machine learning inference workloads operating at the network edge.

FBA Panel and Summit Sessions:

Following Kaku’s opening address, the Fiber Broadband Association (FBA) hosted deep-dive industry panels that put these physics concepts into operator terms:

- The Open Compute Project (OCP): Discussed open-source hardware standards for in-rack photonics to support massive AI clustering.

- Multi-Data-Center Architectures: Network engineers mapped out how dense dark fiber rings are being laid to link secondary edge facilities, allowing enterprises to run heavy inference closer to end-users without overwhelming backbone networks.

- AI data center speed and power requirements are transitioning towards 800 Gbps–1.6 Tbps node-to-node networking and gigawatt-scale power to handle distributed generative AI workloads.

- High rack densities up to 240 kW require advanced liquid or immersion cooling, with optical technologies being introduced to reduce heat generation.

…………………………………………………………………………………………………………………………………..

Big Fiber’s $250M financing deal to buildout dark fiber routes for AI Data Center expansion

Executive Summary:

Big Fiber [1.] has secured $250 million in financing from Stonepeak and Caisse de dépôt et placement du Québec (CDPQ) to expand its dark fiber footprint and increase network capacity in response to accelerating hyperscaler and large-scale data center investments in AI-driven workloads.

Note 1. Sunnyvale, CA headquartered Big Fiber was previously known as Bandwidth IG, which was originally established in 2019 as a telecom and dark-fiber infrastructure company. The rebrand to BIG Fiber was announced on May 1, 2025 when the company described it as a shift to better reflect its focus on privately owned, newly constructed dark fiber networks. The company has built privately owned metro dark fiber networks from its inception, primarily in the SF Bay Area and the Greater Portland, OR and Atlanta, GA areas.

BIG Fiber structures its dark fiber portfolio around high‑strand‑count, single‑mode, low‑loss fiber deployed in purpose‑built, underground metro and regional routes, rather than a carrier‑specific “technology” stack of its own. The company’s public materials emphasize:

- Single‑mode fiber (SMF) for metro and long‑haul connectivity, consistent with standard dark‑fiber infrastructure designed for multi‑wavelength and DWDM‑based upgrades.

- High‑density, high‑fiber‑count cables in metro corridors (often hundreds of strands) to support dense data‑center and interconnect demand, which is typical of “new‑build” dark‑fiber operators entering AI‑and‑cloud‑centric markets.

- Point‑to‑point and ring‑style topologies engineered for extreme route diversity (tri‑/quad‑versity) and low latency, rather than a legacy long‑haul backbone that relies on older fiber types or managed wavelengths.

To complement Big Fiber’s dark‑fiber infrastructure, the customer provides the optical PHY layer (e.g., coherent DWDM, 400ZR/ZR+, or other high‑speed optics), which is how dark‑fiber providers typically position their offerings.

–>More about Big Fiber at the end of this article from the company itself.

……………………………………………………………………………………………………………………………………………………………………………………………………………………………………….

Proceeds of the facility will be used to refinance existing debt, provide new capital and facilitate the necessary headroom for major fiber optic network expansions already underway. This includes a significant multi-market buildout in Greater Atlanta, adding over 205 route miles and 165,000 fiber miles to BIG Fiber’s existing market-leading footprint.
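Route miles and fiber miles measure different things, and dividing one by the other gives a rough sense of cable density. The sketch below assumes the common industry convention that one fiber mile is one strand running for one mile, so fiber miles equal route miles times strand count. The function name is illustrative, and because the announcement gives "over 205" route miles, the result is only an approximate average rather than a cable specification.

```python
def average_strand_count(fiber_miles: float, route_miles: float) -> float:
    """Average strands per route mile, assuming fiber miles = route miles x strands."""
    if route_miles <= 0:
        raise ValueError("route_miles must be positive")
    return fiber_miles / route_miles


# Greater Atlanta expansion figures quoted above (both are approximate).
atlanta_average = average_strand_count(fiber_miles=165_000, route_miles=205)
print(f"Average strands across the Atlanta expansion: about {atlanta_average:.0f}")
```

An average in the high hundreds of strands per route is consistent with the high-fiber-count construction described earlier, although actual counts will vary from segment to segment.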

“Our partnership with Stonepeak Credit and La Caisse marks a pivotal moment in our mission to empower our customers with highly scalable and purpose-built dark fiber solutions,” said Bruce Garrison, CEO of BIG Fiber. “This financing ensures we have the scale to stay ahead of the escalating demand for modernized infrastructure enabling the AI ecosystem and the necessary digital highways for decades to come.”

“BIG Fiber’s infrastructure delivers critical bandwidth to meet the insatiable demand for both data and compute capacity across its key markets,” said Arun Varanasi, Managing Director at Stonepeak Credit. “We are proud to partner with Columbia Capital, SDC Capital Partners, and La Caisse to support the company’s next leg of growth as it positions itself as one of the preeminent dark fiber operators in the country.”

“BIG Fiber is well positioned to meet the growing connectivity needs of enterprises and data centers seeking new, high-quality infrastructure options,” said Jérôme Marquis, Managing Director and Head of Private Credit at La Caisse. “Its resilient business model, underpinned by long-term contracts and strong structural demand, positions the company well for growth. Together with Stonepeak Credit, we’re providing a tailored financing solution that supports the continued buildout of essential digital infrastructure.”

The latest expansion will bring BIG Fiber’s Atlanta and San Francisco Bay Area network capacity to 850 route miles and over 3 million fiber miles. Projects are currently under construction or contract, with phased Ready for Service (RFS) dates expected in early 2027.

………………………………………………………………………………………………………………………………………………………………………………………………………………………………

According to Big Fiber Chief Commercial Officer Patton Lochridge, demand signals are particularly strong in key U.S. metros including the San Francisco Bay Area, Hillsboro, and Atlanta, where new fiber routes are being deployed to support AI-centric data center expansion. “We’re seeing customers require extreme route diversity, often moving toward triversity or quadversity networks to connect metro assets and long-haul routes,” Lochridge said. He added that inference workloads are increasing the demand for dense metro connectivity: “Traditional telecommunications networks are often too congested or lack the latency and loss tolerances required for stringent AI workloads, making purpose-built metro fiber essential.” Lochridge indicated that the majority of the new capital will be directed toward greenfield build-outs and targeted overbuilds of “exhausted legacy telecommunications corridors that need more scale.”

Industry analysts highlight a parallel geographic shift in AI infrastructure deployment. Sterling Perrin, senior principal analyst for optical networks and transport at Omdia, noted that AI campuses are expanding beyond traditional connectivity hubs such as Ashburn, Dallas, and Northern California into power-advantaged regions including West Texas, Ohio, Tennessee, Louisiana, and Georgia. “They all require massive fiber optic connectivity,” Perrin said.

Power availability is emerging as a primary constraint shaping network topology. Ron Westfall, vice president and analyst at HyperFrame Research, emphasized that grid limitations are driving hyperscalers toward distributed AI campus architectures interconnected via metro and long-haul dark fiber. “Power grid constraints have forced a material shift toward metro and long-haul dark fiber infrastructure to stitch together distributed regional data center campuses,” Westfall said. “Because this relentless GPU-to-GPU communication demands near-zero latency and unprecedented bandwidth, infrastructure planners are prioritizing the deployment of ultra-high-strand dark fiber corridors that directly link distributed, power-rich data centers.”

AI Workloads Reshape Optical Demand:

AI-driven traffic growth is now materially impacting the optical supply chain. In its April 2026 post-OFC analysis, CRU Group reported that AI-related data center demand “has overtaken traditional telecom as the primary growth engine for optical [fiber] and cable,” contributing to tightening supply conditions for high-fiber-count cables and upstream preform materials.

Despite this surge, the majority of AI traffic remains intra-data-center. Omdia estimates indicate that up to 90% of AI traffic does not exit the facility during GPU cluster operations. However, the emergence of distributed AI architectures is beginning to increase requirements for high-capacity inter-data-center interconnect (DCI).

At the Optica Executive Forum, Cisco SVP and Fellow Rakesh Chopra highlighted the scale differential between AI and conventional traffic profiles. As cited by Perrin, AI “scale-up” traffic within data centers can generate 504 times the traffic of traditional DCI flows, while “scale-out” traffic can require 56 times the bandwidth of DCI. “With AI training models at the limits of what can be processed within a data center, distributed AI clusters are inevitable,” Perrin said.

This architectural transition is reflected in NVIDIA’s AI factory designs, which decouple east-west GPU compute traffic from traditional north-south enterprise flows, leveraging low-latency leaf-spine topologies optimized for continuous GPU synchronization.

Westfall further noted that these evolving traffic patterns are fundamentally altering network design assumptions. Operators are increasingly optimizing for persistent machine-to-machine synchronization rather than burst-oriented enterprise traffic models.

Fiber as a Core AI Infrastructure Asset:

Big Fiber’s latest financing aligns with broader trends in AI infrastructure investment, where capital is being deployed across integrated stacks including energy, land, connectivity, and compute infrastructure. Utilities are expanding transmission capacity, while developers are co-locating generation resources near emerging AI hubs.

Within this context, fiber infrastructure is being revalued based on its strategic proximity to power-rich data center clusters. “Infrastructure monetization is shifting away from historical metrics such as per-megabit pricing toward asset-level valuations built around proximity to power-rich data centers,” Westfall said.

If current deployment trajectories persist, the resulting topology will consist of a dense, high-capacity mesh of metro and long-haul fiber routes interconnecting geographically distributed, power-optimized AI campuses with hyperscale cloud and interconnection ecosystems.

………………………………………………………………………………………………………………………………

About BIG Fiber:

BIG Fiber is a metro dark fiber provider that offers high capacity, strategically placed, dark fiber networks to mission critical data centers, Hyperscalers and enterprises throughout the San Francisco Bay Area, Greater Portland and Greater Atlanta areas. BIG Fiber’s 100% underground network meets critical data needs for enterprises and data centers that require new, quality infrastructure options. BIG Fiber’s San Francisco Bay Area network offers more than 320 route miles and 65 data centers. The Greater Portland network has more than 20 route miles and 15 data centers, and the Greater Atlanta network has more than 550 route miles and 30 data centers. BIG Fiber was founded in 2019 and is headquartered in Sunnyvale, California. Visit www.bigfiber.com to learn more.

………………………………………………………………………………………………………………………………

Analysis & Implications of the Communications Cybersecurity Information Sharing and Analysis Center (C2 ISAC)

The Communications Cybersecurity Information Sharing and Analysis Center (C2 ISAC), announced today, is a private sector-only nonprofit dedicated to strengthening defenses across the U.S. telecommunications industry. The founding members of C2 ISAC are: AT&T, Charter Communications, Comcast, Cox Communications, Lumen Technologies, T-Mobile, Verizon, and Zayo. The board of the nonprofit organization will comprise the chief information and security officers from each of the eight network operators, led by AT&T CISO Rich Baich as chairman.

The coalition represents a strategic imperative for major network operators to build a unified, rapid-response network to counter sophisticated, AI-driven infrastructure attacks and state-sponsored espionage. It is a meaningful structural change in how the U.S. telecom sector approaches cybersecurity—especially under pressure from increasingly coordinated, AI-enabled threats and nation-state activity.

Traditional ISACs (Information Sharing and Analysis Centers) already exist across sectors—financial services, energy, healthcare—but telecom has historically been more fragmented in how it shares threat intelligence. Operators often guarded incident data due to regulatory exposure, reputational risk, and competitive sensitivities.

C2 ISAC stands out because it is explicitly private-sector-led, rather than government-anchored or compliance-driven. It focuses on telecom infrastructure itself (RAN, core, transport, signaling systems), not just enterprise IT, and aims for real-time operational coordination, not just periodic intelligence reports.

“We’re not going to be operating in silos when a potential event occurs. There’ll be information sharing across all that…[and] coordinated response based on that information sharing,” said Baich. “We could be sharing vulnerabilities that we find to be an issue. We could be sharing information related to different types of cyber techniques that are being utilized. Most importantly, though, it is having that trusted forum and the right relationships that someone can just make a phone call to get an answer,” he added.

In effect, it’s closer to a joint cyber defense grid for carriers than a passive information-sharing forum. Several converging pressures explain why this is happening now:

- AI-enhanced attack capabilities: Adversaries are using AI for automated vulnerability discovery, polymorphic malware, and adaptive intrusion techniques targeting network infrastructure (e.g., signaling exploitation, orchestration layers, and cloud-native cores).

- State-sponsored campaigns: Groups linked to China, Russia, Iran, and DPRK have increasingly targeted telecom networks for espionage, lawful intercept bypass, metadata harvesting, and potential pre-positioning for disruption.

- Soft targets in telecom evolution: The shift to virtualized RAN (vRAN), Open RAN (multi-vendor complexity), and cloud-native 5G cores has expanded the attack surface dramatically, especially at APIs, orchestration layers, and inter-vendor interfaces.

- Regulatory pressure without operational mechanisms: Governments (e.g., via CISA, FCC, NSA advisories) have been urging collaboration, but lacked a low-friction, operator-driven mechanism for tactical data exchange.

Key C2 ISAC functions include:

- Real-time threat intelligence sharing
  - Indicators of compromise (IOCs)
  - Tactics, techniques, and procedures (TTPs)
  - Zero-day exploitation patterns in telecom-specific protocols (e.g., SS7, Diameter, 5G SBA interfaces)

- Coordinated incident response
  - Rapid cross-operator alerts when an intrusion is detected
  - Shared mitigation playbooks (e.g., blocking malicious signaling traffic patterns)
  - Potential “collective defense” actions, like synchronized filtering or patch prioritization

- Infrastructure-specific vulnerability tracking
  - Vendor equipment vulnerabilities (RAN, core, routers, optical transport)
  - Software supply chain risks (containers, orchestration stacks like Kubernetes in 5G cores)

- Simulation and preparedness
  - Joint exercises for large-scale outages or cyber-physical attacks
  - Red-teaming of inter-operator dependencies (e.g., roaming, interconnect)

Why this matters strategically:

This is less about incremental improvement and more about closing a structural asymmetry:

- Attackers collaborate and reuse tooling globally

- Defenders (telecom operators) have historically operated in silos

C2 ISAC is an attempt to match attacker coordination with defender coordination, particularly in a sector that underpins:

- National security communications

- Critical infrastructure interconnectivity

- Emergency services

- Financial transaction networks

In that sense, telecom is closer to energy than to typical enterprise IT—and requires a sector-wide defense posture, not just firm-level security.

Implications for the telecom ecosystem:

- Operators: Likely to gain faster detection and response capabilities, but must overcome internal legal/compliance barriers to share sensitive data.

- Vendors (e.g., Ericsson, Nokia, Cisco): May face stronger pressure for rapid disclosure and coordinated patching, especially if vulnerabilities affect multiple operators simultaneously.

- Cloud providers (AWS, Azure, Google Cloud): Become indirectly implicated, since 5G cores and network functions increasingly run on hyperscaler infrastructure.

- Government: Even though this is private-sector-led, agencies like CISA and NSA will likely act as intelligence feeders and backstops, not primary coordinators.

Risks and limitations:

- Trust barriers: Operators must be willing to share sensitive breach data quickly, historically a weak point.

- Legal liability concerns: Information sharing can expose firms to regulatory or litigation risk unless protected.

- Speed vs. accuracy trade-offs: Real-time sharing increases the risk of false positives propagating across networks.

- Vendor opacity: If equipment/software vendors are slow or incomplete in disclosures, the ISAC’s effectiveness is constrained.
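The speed-versus-accuracy trade-off can be made concrete with a small sketch. One possible mitigation, which is an assumption of this example and not something C2 ISAC has described, is to act automatically on a shared indicator only after a minimum number of distinct operators have reported it. The names `Report` and `corroborated`, the indicator strings, and the `min_operators` threshold are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    """One indicator sighting shared by one operator (hypothetical schema)."""
    operator: str   # reporting operator, e.g. "op-a"
    indicator: str  # e.g. a signaling source identifier or a file hash


def corroborated(reports, min_operators=2):
    """Return indicators reported by at least `min_operators` distinct operators."""
    seen_by = defaultdict(set)
    for r in reports:
        seen_by[r.indicator].add(r.operator)
    return {ind for ind, ops in seen_by.items() if len(ops) >= min_operators}


reports = [
    Report("op-a", "gt:1234567890"),
    Report("op-b", "gt:1234567890"),
    Report("op-a", "gt:1234567890"),  # duplicate from the same operator
    Report("op-c", "sha256:abcd"),    # seen by one operator only
]
actionable = corroborated(reports)
print(actionable)
```

A higher threshold slows automated response but limits how far a single operator's false positive can spread. Single-source indicators can still be shared for human review.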

A useful analogy:

C2 ISAC aims to move telecom from a model of independent air traffic control towers to a shared radar network:

Each network operator still controls its own “airspace,” but now they can all see incoming threats earlier and coordinate responses before collisions—or attacks—propagate system-wide.

References:

https://www.lightreading.com/security/eight-big-us-telcos-join-forces-on-network-cybersecurity

Key Differences Between Network Cybersecurity and Control System Cybersecurity & Why It Matters

Cybersecurity threats in telecoms require protection of network infrastructure and availability

WSJ: T-Mobile hacked by cyber-espionage group linked to Chinese Intelligence agency

GSA Meetup: Cyber Security Continues as Major Obstacle for IoT Adoption

Demythifying Cyber security: IEEE ComSocSCV April 19th Meeting Summary

Analysis: Fiber Broadband Association (FBA) whitepaper: Upgrading MSO Networks to Fiber to the Home (FTTH): A Technical Perspective

Executive Summary:

The Fiber Broadband Association (FBA) has published an economic and structural framework for Hybrid Fiber Coax (HFC)-to-Fiber to the Premises (FTTP) [1.] upgrades, which it views as a ‘strategic imperative’ for cable network operators (MSOs). The FBA’s whitepaper, “Upgrading MSO Networks to Fiber to the Home (FTTH): A Technical Perspective,” positions fiber as the long-term architectural solution for cable access networks.

The whitepaper evaluates multiple migration paths—including full overbuilds, targeted deployments, and HFC/FTTP coexistence—alongside passive optical network (PON) options and operating models. Economically, the report estimates FTTP operating expenses to be approximately 50% lower than HFC, driven by the elimination of active outside plant components. It outlines deployment options ranging from incremental overlays to full HFC replacement, each with distinct cost-performance trade-offs.

Note 1. Fiber optic access networks (sometimes referred to as fiber-to-the-home—FTTH—or fiber-to-the-premises—FTTP) are built to connect homes and businesses to lightning fast Internet connections. The fiber optic cables that make up these networks are the fastest and most reliable broadband technology and are capable of delivering vastly higher bandwidth than traditional copper wires or wireless. All-fiber networks are directly connected from the central office all the way to a subscriber’s building. There is no other technology along the path except fiber optics.

Fiber optic cables are made up of thin strands of glass that carry information by transmitting pulses of light, which are usually created by lasers. The light is turned on and off very quickly. A single fiber can carry multiple streams of information simultaneously over different wavelengths, or colors, of light, enabling more robust video, Internet, and voice services. Fiber cables are capable of transmitting multi-gigabit Internet speeds, compared to the megabit-per-second rates typical of copper connections.

Image Credit: Panther Media GmbH/Alamy Stock Photo

…………………………………………………………………………………………………………………………..

Divergent Cable Operator (MSO) Strategies:

In practice, MSO upgrade strategies remain highly variable, shaped by market competition, plant condition, density, and capital constraints. Most large operators now manage hybrid access portfolios spanning HFC, FTTP, and, in some cases, fixed wireless access (FWA).

Optimum Communications, for example, is deploying FTTP overlays in dense Northeastern markets while relying on DOCSIS 3.1 in rural areas where fiber economics are less favorable.

Comcast and Charter similarly pursue selective FTTP in greenfield and edge-out scenarios but continue to prioritize HFC evolution via DOCSIS 4.0. Both assert that upgraded HFC can deliver symmetrical multi-gigabit services at lower cost. Charter estimates upgrade costs at approximately $100 per home passed (excluding CPE), versus roughly $200 for Comcast. Both operators utilize virtualized CMTS platforms and distributed access architectures (DAA) to support converged HFC/FTTP operations and targeted fiber extensions.

Operational cost differences remain contested. Charter CEO Chris Winfrey characterized the delta as minimal—on the order of one to two dollars per passing—stating, “We’ll take that tradeoff any day.” This reinforces the view that large-scale HFC-to-FTTP overbuilds are unlikely among major incumbents in the near term.

HFC investment also continues. Comcast and Charter are deploying low-latency capabilities based on the IETF Low Latency, Low Loss, and Scalable Throughput (L4S) standard, with Charter already launching in multiple U.S. markets.

Fiber-First Deployments:

Smaller operators are, in some cases, moving more decisively toward FTTP. MCTV, serving approximately 57,000 customers in Ohio and West Virginia, determined that FTTP and DOCSIS 3.1 were cost-comparable and opted for full fiber rebuilds. The operator now passes more than 80% of its footprint with FTTP and has decommissioned roughly two-thirds of its HFC power supplies.

Competitive and Technology Outlook:

The FBA identifies FTTP as the “primary driver” of recent cable subscriber losses, citing “structural limitations” of HFC in symmetry, latency, and scalability “that DOCSIS upgrades can partially address but not fully overcome.” Cable proponents dispute this, pointing to DOCSIS 4.0 and future enhancements.

However, competitive pressure is multi-dimensional. In addition to fiber, FWA is gaining traction, particularly in lower-speed tiers. As a result, operators are increasingly focused on pricing, service bundling, and customer experience, including converged broadband-mobile offerings, rather than peak speeds alone.

On the technology roadmap, CableLabs continues to extend DOCSIS, including exploration of an “operational annex” supporting spectrum up to 3 GHz and downstream rates around 25 Gbit/s, with longer-term targets of 6 GHz and 50 Gbit/s. At the same time, it is expanding work on PON, including coherent PON, reflecting a more access-technology-agnostic posture.
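As a rough sanity check on those targets, dividing each quoted downstream rate by the quoted spectrum gives an implied aggregate spectral efficiency. This sketch assumes, for simplicity, that the full spectrum figure is available to the downstream. That overstates usable downstream bandwidth, so the results are a lower bound on the efficiency the downstream would actually need. The function name is illustrative.

```python
def implied_bits_per_hz(rate_gbit_s: float, spectrum_ghz: float) -> float:
    """Aggregate spectral efficiency in bit/s/Hz implied by a rate and a bandwidth."""
    return (rate_gbit_s * 1e9) / (spectrum_ghz * 1e9)


near_term = implied_bits_per_hz(25, 3)  # 3 GHz plant, about 25 Gbit/s downstream
long_term = implied_bits_per_hz(50, 6)  # 6 GHz plant, about 50 Gbit/s downstream
print(f"{near_term:.2f} bit/s/Hz, {long_term:.2f} bit/s/Hz")
```

Both targets work out to roughly 8.3 bit/s/Hz, so under this simplifying assumption the longer-term goal would come from doubling spectrum rather than from denser modulation.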

Migration Trajectory:

Some industry veterans align with the FBA’s long-term view. John Chapman has advocated a “fiber-first” strategy, citing projections that access networks may need to support up to 1 Tbit/s by 2040. Rather than abrupt transitions, this approach emphasizes phased migration from HFC to FTTP while leveraging existing infrastructure.
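To see what a 1 Tbit/s target in 2040 implies, the required compound annual growth rate can be computed from a starting point. The 10 Gbit/s baseline in 2026 used below is purely an illustrative assumption, not a figure from Chapman or the FBA, and the function name is hypothetical.

```python
def required_cagr(start_rate: float, end_rate: float, years: int) -> float:
    """Compound annual growth rate needed to go from start_rate to end_rate."""
    return (end_rate / start_rate) ** (1 / years) - 1


# Assumed baseline: 10 Gbit/s access capability in 2026 (hypothetical).
growth = required_cagr(start_rate=10, end_rate=1000, years=2040 - 2026)
print(f"Required growth: {growth:.1%} per year")
```

Under that assumed baseline, capacity would need to grow by a little under 40% per year, every year, through 2040. A lower starting point raises the required rate.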

Quotes:

John Chapman, a DOCSIS pioneer and former long-time Cisco Systems engineering exec, suggested last year that the cable industry should adjust its thinking and take a “fiber-first” approach as historical trends indicate that broadband access networks will need to be capable of supporting speeds of 1 Tbit/s by 2040. Rather than doing a quick cutover, he thinks MSOs should migrate from HFC to FTTP over an extended period. But they should get started now.

“The industry has reached an inflection point between the maturity of DOCSIS and the inevitability of fiber. It’s about coming up with a graceful, pragmatic solution to migrate, to transition from DOCSIS to fiber. And that migration’s going to take 20 years. But we need a strategy that accommodates the two,” he said. “It’s a cultural change.”

Jay Rolls, a former Charter CTO and current CTO of BSP, a company that conducts due diligence on various types of broadband network transactions, also believes that cable operators should be weighing whether FTTP is the right move. His recent analysis suggests that the capital spend on a DOCSIS 4.0 overhaul is comparable to an FTTP rebuild.

“Every network and market is different, but the tipping point is fast arriving where overbuilding fiber makes as much financial sense as upgrading HFC, especially when you consider what comes next,” he said on a Light Reading podcast.

References:

Upgrading MSO Networks to Fiber to the Home (FTTH): A Technical Perspective

https://fiberconnect.fiberbroadband.org/

Fiber Broadband Association Middle Mile WG: how to use “Digital Infrastructure Networks” for coordinated fiber backbone investments

Analysis: AT&T 1Q-2026 results: increased fiber penetration, FWA momentum, D2D deals, and mobile/home internet bundles

Fiber Optic Boost: Corning and Meta in multiyear $6 billion deal to accelerate U.S data center buildout

Fiber Optic Networks & Subsea Cable Systems as the foundation for AI and Cloud services

How will fiber and equipment vendors meet the increased demand for fiber optics in 2026 due to AI data center buildouts?

Automating Fiber Testing in the Last Mile: An Experiment from the Field

AI wireless and fiber optic network technologies; IMT 2030 “native AI” concept

Dell’Oro: Global RAN market stable (again) in 1Q 2026; top 5 RAN vendors are unchanged

A recently published report from Dell’Oro Group indicates that the stable trends shaping the Radio Access Network (RAN) market in 2025 extended into the first quarter of 2026. Worldwide RAN revenue, excluding services, increased at a low-single-digit year-over-year rate in 1Q 2026, marking the fifth consecutive quarter where the market remained within a relatively narrow range (-4 to +4% year-over-year). However, market fundamentals remain constrained by slower mobile broadband growth.

“This positive start does not alter the fundamentals shaping the growth prospects of this market,” said Stefan Pongratz, Vice President for RAN market research at the Dell’Oro Group. “We attribute the improved conditions primarily to a favorable regional mix and easier comparisons in markets that experienced sharp declines. Meanwhile, RAN remains growth-constrained, and operators are increasingly preparing for a slower mobile broadband growth environment,” Pongratz added.

Additional highlights from the 1Q 2026 RAN report:

- Growth in EMEA and APAC offset weaker activity in North America.

- Revenue rankings were unchanged in 1Q 2026. Based on trailing four-quarter worldwide revenue, the top five RAN suppliers are Huawei, Ericsson, Nokia, ZTE, and Samsung [1.].

- Regional imbalances continue to shape the market recovery trajectory, with APAC excluding China improving while North America and China remain under pressure.

Note 1. There were no significant market share shifts quarter-to-quarter for these five vendors whose ranking remains the same. The ongoing war in Iran, with the Strait of Hormuz closed, has disrupted supply chains for specialized components, while higher energy prices are raising operational costs for infrastructure deployment, creating an increasingly complex environment for network equipment suppliers.

Dell’Oro Group’s RAN Quarterly Report offers a complete overview of the RAN industry, with tables covering manufacturers’ and market revenue for multiple RAN segments including 5G NR Sub-7 GHz, 5G NR mmWave, LTE, Macro BTS, small cells, Massive MIMO, and Cloud RAN. The report also tracks the RAN market by region and includes a four-quarter outlook.

References:

Worldwide RAN Market Remained Stable in 1Q 2026, According to Dell’Oro Group

Dell’Oro: RAN Market Stabilized in 2025 with 1% CAG forecast over next 5 years; Opinion on AI RAN, 5G Advanced, 6G RAN/Core risks

Dell’Oro: Analysis of the Nokia-NVIDIA-partnership on AI RAN

ABI Research: mobile network spending to fall 29% from 2026-to-2031

Dell’Oro: RAN market stable, Mobile Core Network market +14% Y/Y with 72 5G SA core networks deployed

Market research firms Omdia and Dell’Oro: impact of 6G and AI investments on telcos

Mulit-vendor Open RAN stalls as Echostar/Dish shuts down it’s 5G network leaving Mavenir in the lurch

The enterprise network stack is collapsing; AI’s impact; comparison with “Batch Pipelines Break AI Agents”

by Shashi Kiran with Alan J Weissberger, ScD

Abstract:

This article presents the primary author’s point of view on networking technology and market evolution, as he has experienced it directly with customers at Nile, where he serves as Chief Marketing Officer (CMO). A key theme is the impact of AI, and its implications for network and network security architecture, on a new network stack. We focus specifically on the complexity and heterogeneity of the LAN, while drawing inferences for other areas of the broader enterprise network.

The article draws no information from other publications or references, except for the security breach data points derived from IDC, Gartner, and market surveys. Hence, the References listed at the end of the piece are from related IEEE Techblog posts and Nile press releases chosen by this website’s content manager.

Definitions:

The enterprise network stack is much more than a protocol stack. It is the layered architecture of physical infrastructure, forwarding devices, control protocols, management systems, and security enforcement functions that interconnect users, endpoints, workloads, and cloud services across campus, branch, WAN, data center, and cloud domains. It typically includes access, distribution, core, and edge segments, along with overlay, orchestration, telemetry, identity, and policy planes that govern how traffic is admitted, routed, segmented, monitored, and secured.

A useful way to think about the stack is in terms of planes:

- Data plane: forwards packets, enforces QoS, and applies access-control functions close to the traffic path.

- Control plane: discovers topology and capabilities, computes paths, and reacts to failures.

- Management plane: handles configuration, monitoring, troubleshooting, reporting, and performance management.

- Security stack: includes firewalls, IDS/IPS, secure web gateways, threat intelligence, and related inspection or enforcement tools.

At the device level, the stack typically includes physical media and network hardware such as cabling, Wi-Fi, NICs, switches, routers, gateways, servers, and dedicated security appliances. At higher layers, it includes protocols and services for addressing, routing, transport, application connectivity, identity, and policy enforcement, often mapped loosely to OSI/TCP-IP concepts rather than a strict textbook stack.

In an enterprise environment, the network stack extends across LAN, WAN, data center, cloud, and security domains, so “the stack” is less a single product and more an integrated system of infrastructure, software, telemetry, and policy. That is why discussions of enterprise architecture usually separate forwarding, orchestration, assurance, and security functions even when they are delivered in a unified platform.

Structural Limits of the Enterprise Network Stack:

The enterprise network stack is approaching a structural inflection point and may already be at a “breaking point.” What is failing is architectural, not incremental. The enterprise network stack was architected for a world that no longer exists, and most of the pain organizations feel today is the cost of pretending otherwise. The interesting question isn’t whether it breaks but when, and along which seams. Here’s why:

The network stack most enterprises still run was designed around five assumptions that were partly true in 2010 but mostly false in 2026. Users sit at desks on managed devices. Applications live in a corporate data center. Traffic flows north-south through a perimeter. Identity equals a user with a session. Trust derives from network location. Every one of those is gone. Users are hybrid, apps are SaaS and multi-cloud, traffic is increasingly east-west and machine-driven, identity now includes non-human agents acting with delegated authority, and zero trust has formally retired the idea that being inside the network means anything.

So, the enterprise stack isn’t failing because any single piece is bad. Rather, it’s failing because the architecture it was based on no longer matches the workload, the threat model, or the operational reality it’s asked to serve. AI is the forcing function, but the cracks were already there. The choice in front of most enterprises isn’t whether to rebuild but whether to do it deliberately or by accident. Will reinvention and self-disruption be intentional or forced?

Today, many enterprise environments represent layered extensions of legacy architectures rather than cohesive designs. AI acts as an accelerant, exposing pre-existing architectural limitations. The resulting fragmentation increases operational complexity, reduces agility, and amplifies security risk.

Complexity is a Primary Risk Vector:

Complexity has evolved from an operational burden into a primary source of systemic risk. Modern network environments often exceed the capacity for deterministic human understanding, creating conditions where failures and vulnerabilities emerge at the intersections between systems rather than within individual components.

Empirical evidence suggests that many successful breaches exploit misconfigurations and integration gaps rather than novel vulnerabilities. In this context, complexity itself becomes the effective attack surface.

This challenge is particularly acute in the LAN, which often retains legacy architectural elements, heterogeneous device ecosystems, and fragmented management models. Combined with constrained IT resources, this environment can become a disproportionate source of exposure.

Reducing complexity—through architectural simplification, integrated control planes, and automation—is therefore not merely an operational objective but a core security strategy. In AI-driven environments, simplicity directly contributes to resilience and risk reduction.

An Architectural Reset is Needed:

An architectural reset is increasingly necessary. While incremental upgrades remain feasible, their marginal returns are diminishing relative to the growing mismatch between legacy designs and emerging requirements. Many organizations continue to extend existing architectures due to cost constraints or perceived transition risks. However, this approach often compounds technical debt and increases long-term exposure. The more fundamental question is not whether incremental evolution is possible, but whether it represents effective capital allocation in the context of AI-driven workloads and threat models.

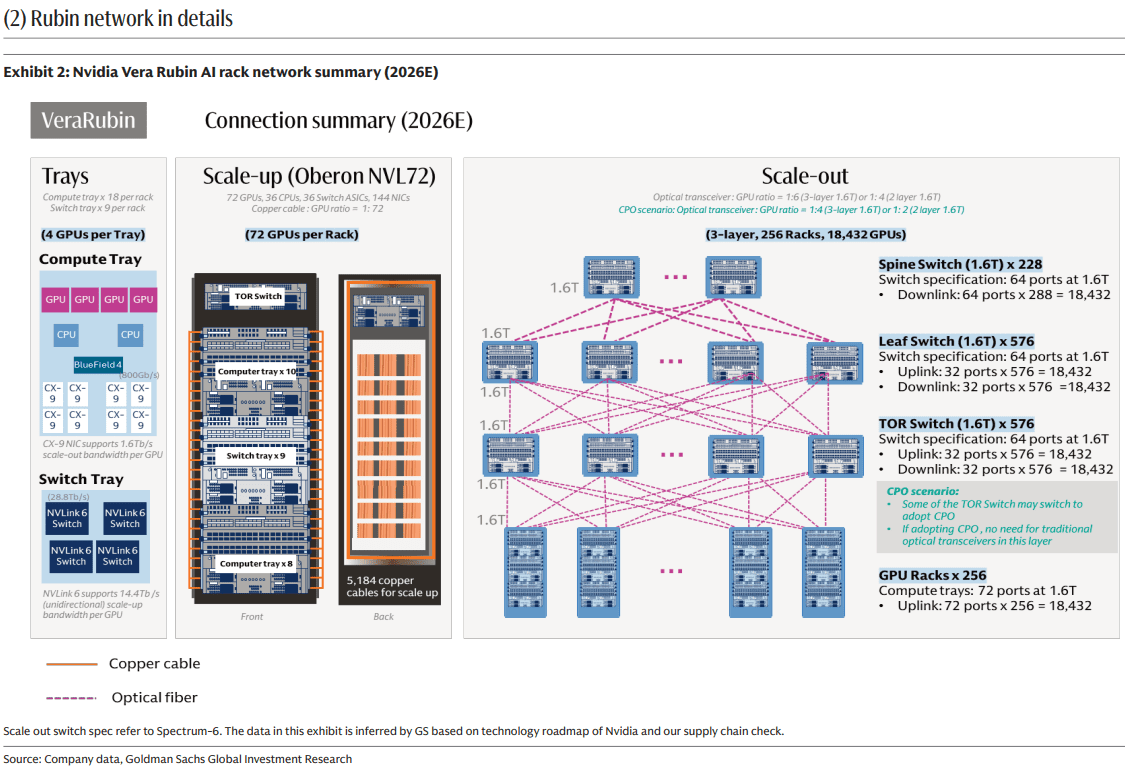

Forward-looking architectures are converging around several principles: AI-native workload support, identity-centric security, zero-trust enforcement, and tightly integrated operational models. Organizations that proactively redefine their network architectures around these principles are more likely to achieve sustainable performance, security, and operational efficiency gains.

Here are a couple of conceptual architectural constructs for a unified, secure fabric with AI orchestration, autonomous operation and service delivery, which replaces the fragmented network stack and operations of the traditional/legacy network. The first illustration is more functional; the second is a more theoretical stack.

Security and the Network Fabric:

Security is neither fully “moving into” nor “remaining outside of” the network fabric; rather, it is being restructured across distinct functional planes, including identity, policy, enforcement, and detection.

Historically, network-centric security relied on in-path inspection mechanisms (e.g., firewalls, intrusion prevention systems, and proxies). This model proved difficult to scale due to encryption, cloud decentralization, and traffic patterns that bypass centralized inspection points.

In contemporary architectures, the network fabric is evolving into a high-performance enforcement plane. Policy definition and decision-making are increasingly centralized in identity and control-plane systems, while enforcement is distributed across the network and applied at line rate to identity-associated flows.

This separation of concerns improves scalability and composability. Identity-centric policy models define “who can do what,” while the network enforces those decisions efficiently and locally. The result is a more adaptable and performant security architecture.
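The split between central policy decisions and local enforcement can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of any vendor's fabric: the class names `PolicyDecisionPoint` and `EnforcementPoint`, the role and resource labels, and the default-deny behavior are all hypothetical.

```python
class PolicyDecisionPoint:
    """Central, identity-driven policy: decides who can do what."""

    def __init__(self, allowed):
        # allowed: iterable of (role, resource) pairs; anything else is denied.
        self._allowed = set(allowed)

    def decide(self, role: str, resource: str) -> bool:
        return (role, resource) in self._allowed


class EnforcementPoint:
    """Fabric-side enforcement: applies cached decisions to each flow."""

    def __init__(self, pdp: PolicyDecisionPoint):
        self._pdp = pdp
        self._cache = {}  # (role, resource) -> bool, held locally at the edge

    def permit(self, role: str, resource: str) -> bool:
        key = (role, resource)
        if key not in self._cache:   # first flow: ask the central policy
            self._cache[key] = self._pdp.decide(role, resource)
        return self._cache[key]      # later flows: local lookup only


pdp = PolicyDecisionPoint({("hvac-sensor", "building-mgmt"), ("ai-agent", "telemetry-api")})
edge_switch = EnforcementPoint(pdp)

print(edge_switch.permit("ai-agent", "telemetry-api"))  # True
print(edge_switch.permit("hvac-sensor", "payroll-db"))  # False
```

The sketch omits cache invalidation. A real design would need a way to revoke cached decisions when identity or policy changes.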

However, the effectiveness of this approach depends on architectural discipline. Designs that treat the fabric as one component within a broader, identity-driven security framework tend to reduce complexity. Conversely, attempts to re-centralize security entirely within the network risk recreating earlier limitations in a more complex form.

AI’s Impact on Telecommunications Networks:

Artificial intelligence (AI) is influencing telecom network architectures along two orthogonal dimensions:

1. AI introduces a new class of workloads that impose stringent and atypical requirements on network infrastructure.

AI workloads fundamentally challenge legacy network design assumptions. Traditional enterprise networks were optimized for north–south traffic patterns, human-driven interactions, and best-effort delivery models. In contrast, AI workloads generate predominantly east–west traffic, operate at machine timescales, and exhibit low tolerance for latency, jitter, and packet loss.

2. AI is increasingly being embedded within the network itself, enhancing operations, optimization, fault diagnosis and recovery, and security functions. AI-enabled control and management planes enable higher degrees of automation and operational efficiency, particularly in campus and branch environments where autonomous operations are beginning to reduce manual intervention.

The interaction between these two roles is driving many of the architectural shifts observed today. Wide-area networks (WANs), for example, must now interconnect AI-intensive data center environments with distributed enterprise domains, effectively bridging heterogeneous traffic models and service requirements.

AI-Driven Changes in Traffic and Risk:

AI is reshaping both the structure of network traffic and its associated risk profile. From a traffic perspective, flows are becoming increasingly east–west, bursty, and machine-generated, with reduced visibility due to encryption and abstraction layers. From a security standpoint, AI introduces new classes of actors (e.g., non-human identities and autonomous agents), as well as new attack vectors, including adversarial AI and data exfiltration via model interactions.

These shifts are tightly coupled. The same properties that define AI-driven traffic—distribution, dynamism, and opacity—also complicate detection and enforcement. As a result, security architectures are evolving toward:

- Identity-centric models that extend zero-trust principles to non-human entities.

- Data loss prevention mechanisms adapted to AI-generated and AI-consumed data flows.

- Fine-grained segmentation within network fabrics, subject to latency constraints.

- Increased reliance on AI-driven detection and response systems to counter AI-enabled threats.

Importantly, these dynamics vary across network domains (LAN, WAN, and data center/cloud), requiring domain-specific adaptations while maintaining consistent policy frameworks.

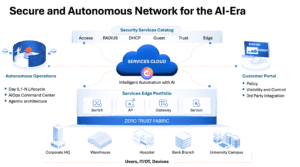

Alignment with “Why Batch Pipelines Break AI Agents: The Case For Streaming-First Network Operations”:

The key points made in this article are highly consistent with the above-referenced IEEE Techblog post written by Shazia Hasnie, Ph.D. Both articles treat AI as an architectural forcing function: Shazia’s article focuses on the data/telemetry layer, while this post extends the same logic to the broader enterprise network stack. The core claim in both pieces is that legacy architectures were built for human-operated, latency-tolerant workflows, not autonomous AI systems. In Shazia’s article, batch pipelines fail because they deliver stale, incomplete, and inconsistent context to AI agents. Here, the same mismatch appears at the network level, where legacy enterprise designs were optimized for north–south traffic, perimeter trust, and static operational assumptions. Both arguments are fundamentally about architectural mismatch rather than isolated product shortcomings.

A particularly strong point of overlap is the emphasis on real-time context. Shazia’s article argues that AI agents require continuous data freshness and an ordered event stream to function safely, while this piece frames AI networking as a shift toward machine-timescale traffic, streaming telemetry, and identity-aware enforcement. In both cases, the network is no longer just a transport layer; it becomes part of the control loop that determines whether AI decisions are accurate and timely.

The failure models are also similar. Shazia identifies five failure modes of batch-to-agent mismatch: stale data, memory gaps, delete blindness, schema fragility, and coordination failure. While not using that taxonomy explicitly, we share the same underlying diagnosis by arguing that complexity, fragmentation, and legacy operational models are now the primary sources of risk. Our discussion of east–west traffic, non-human identities, zero trust, and observability mirrors Shazia’s broader point that autonomous systems fail when their surrounding infrastructure cannot preserve state, sequence, and policy consistency.

These two articles work well together because they address different layers of the same transition. Shazia’s article is mainly about the data plane of AI operations: how telemetry, event streams, and agent inputs must move from batch to streaming to avoid operational failure. This article is about the network and security architecture around that data plane: how the enterprise stack, LAN, WAN, and fabric must evolve to support AI-native workloads and enforcement. Hence, the reader can consider the two articles companion pieces.

…………………………………………………………………………………………………………………………………………………………………………………………

About the Author:

Shashi Kiran has nearly 30 years of experience in network, security and cloud technologies, primarily as an operator and executive in public and private B2B companies, where he has held global product management and marketing positions. He’s adopting a protopian view of AI, while being both fascinated and frightened by it at the same time.

Shashi is currently the CMO at Nile, whose network architecture aligns with what AI-era networks require: identity-centric control, embedded security, and autonomous operations. He previously held executive roles at Cisco, Check Point Software, Broadcom and other venture backed startups, and is based in San Jose, CA. He can be reached at http://www.linkedin.com/in/

…………………………………………………………………………………………………………………………………………………………………………………………

References:

Why Batch Pipelines Break AI Agents: The Case For Streaming-First Network Operations

Nile launches a Generative AI engine (NXI) to proactively detect and resolve enterprise network issues

Fiber Optic Networks & Subsea Cable Systems as the foundation for AI and Cloud services

Dell’Oro: Bright Future for Campus Network As A Service (NaaS) and Public Cloud Managed LAN

Cisco Plus: Network as a Service includes computing and storage too

https://nilesecure.com/press-releases/networking-and-security-in-higher-ed

https://nilesecure.com/press-releases/nile-powers-black-hat-mea-2025-with-zero-reported-incidents

Why Batch Pipelines Break AI Agents: The Case For Streaming-First Network Operations

By Shazia Hasnie, Ph.D., editorial review by IEEE Techblog team member Sridhar Talari Rajagopal

Abstract:

The adoption of AI agents in network operations has exposed a critical architectural gap. Most enterprise data pipelines were designed for dashboards and reporting, not autonomous decision-making. When AI agents consume data from batch-oriented pipelines, five distinct failure modes emerge: stale data, memory gaps, delete blindness, schema fragility, and coordination failure. This article examines each failure mode, explains the underlying mechanism, and proposes architectural remedies grounded in streaming-first design principles. It also connects each technical failure to measurable business outcomes—extended downtime, recurring incidents, compliance exposure, silent decision degradation, and cascading impact. The result is both a diagnostic framework for I&O leaders and a financial argument for treating streaming data infrastructure as the prerequisite for autonomous operations.

Introduction: The Data Foundation Gap

Artificial intelligence is reshaping network operations. AI agents promise to detect anomalies, diagnose root causes, and execute remediation faster than human engineers. The industry has focused attention on models, GPUs, and orchestration frameworks. The data layer remains largely unexamined.

This is a critical oversight. Most enterprise data pipelines were built for human consumers. They serve dashboards, weekly reports, and historical analysis. Humans tolerate latency. Humans bring context. Humans notice when something looks wrong.

AI agents require something fundamentally different. They need real-time context. They need historical state. They need accurate representations of current reality. When these requirements are not met, agents do not complain. They act—on incomplete information, with incorrect assumptions, producing wrong outcomes.

The gap between what batch pipelines deliver and what agents require creates failure modes that most teams do not see until an agent makes the wrong decision. Recent analysis has identified the economic dimensions of this gap [1], while industry resources have begun documenting the specific failure patterns that arise when batch processing meets autonomous agents [6]. This article extends that work by identifying five distinct failure modes and proposing a streaming-first architectural response.

FIVE FAILURE MODES: ANATOMY OF BATCH-TO-AGENT MISMATCH

The following five failure modes represent the specific ways batch data pipelines undermine autonomous network operations. Each is examined through its mechanism—how the batch pipeline architecture produces the failure—its operational consequence, and the streaming-first architectural remedy that eliminates it. Together, they form a diagnostic taxonomy for any I&O team evaluating whether their data foundation is ready for Agentic AI.

Failure Mode 1: Stale Data

Mechanism: Batch telemetry pipelines poll, collect, and process data in cycles. Data is extracted on a schedule, transformed in bulk, and loaded into a destination—a warehouse, data lake, time-series database, or feature store that holds a static, point-in-time snapshot of the source. Between cycles, the pipeline holds no current state. An AI agent that spins up between cycles receives a snapshot of the past.

Consequence: The agent diagnoses an outage using telemetry from five minutes ago. The network state has changed during that interval. Routes have shifted. Traffic has been redirected. Thus, the agent’s diagnosis is based on a reality that no longer exists. Remediation actions applied to a past state can worsen the current incident. The agent becomes a liability rather than an asset. Industry documentation confirms that AI agents require continuous data freshness to function correctly [5].

Architectural Remedy: Streaming telemetry replaces cyclical polling with continuous event push. Data flows from source to consumer in real time, ingested directly into the streaming platform’s durable event log [2]. The agent consumes from a live stream, not a stale snapshot. Context acquisition takes milliseconds. The cognitive loop remains intact. This is not an add-on to the batch pipeline. It is a structural replacement of the ingestion layer.
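To make the stale-data contrast concrete, here is a minimal sketch of a freshness guard an agent might apply before acting. The `TelemetryEvent` type, the one-second `max_age_s` threshold, and the device and metric names are assumptions chosen for illustration; they do not come from any particular streaming platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TelemetryEvent:
    device: str
    metric: str
    value: float
    observed_at: float  # seconds since epoch, stamped at the source


def is_fresh(event: TelemetryEvent, now: float, max_age_s: float = 1.0) -> bool:
    """Return True when the event is recent enough to act on."""
    return (now - event.observed_at) <= max_age_s


def latest_fresh(events, now, max_age_s=1.0):
    """Keep the newest event per (device, metric), then drop stale ones."""
    newest = {}
    for ev in events:
        key = (ev.device, ev.metric)
        if key not in newest or ev.observed_at > newest[key].observed_at:
            newest[key] = ev
    return {k: ev for k, ev in newest.items() if is_fresh(ev, now, max_age_s)}


now = 1_000.0
# Hypothetical inputs: a batch snapshot taken five minutes ago,
# and a streamed event that arrived 50 ms ago.
batch_snapshot = [TelemetryEvent("r1", "if_up", 1.0, now - 300)]
stream_events = [TelemetryEvent("r1", "if_up", 0.0, now - 0.05)]

batch_view = latest_fresh(batch_snapshot, now)   # nothing usable survives
stream_view = latest_fresh(stream_events, now)   # current interface state
```

With the batch snapshot the agent either acts on nothing or, without the guard, on an interface state that is five minutes out of date. The guard only detects staleness; closing the gap still requires the streaming ingestion path described above.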

Failure Mode 2: Memory Gap

Mechanism: Batch pipelines deliver windows of data: the last hour, the last day, the last processing cycle. They do not preserve the sequence of events that led to the current moment. Historical context is stripped away with each new extract. The pipeline knows what happened within the current window. It does not know what happened before it.

Consequence: An agent responding to an interface flap cannot answer the most basic diagnostic question: has this happened before? It cannot correlate the current event with the three similar events that occurred in the preceding 24 hours. It cannot detect the pattern that would reveal a degrading optical module. Every incident appears isolated. Pattern recognition—the core value proposition of AI-driven operations—is structurally impossible. The distinction between streaming and batch architectures for these use cases has been well-documented [4].

Architectural Remedy: A durable event log with configurable retention serves as the agent’s memory [2]. Unlike a batch window, which discards history with each new extract, the event log preserves the ordered sequence of all events within the retention period. The agent seeks backward in the log on startup and replays the preceding window of telemetry. Pattern detection across time becomes native to the architecture. This is not a separate cache layered on top. It is the storage layer itself—immutable, ordered, and built for event replay from any offset.
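The replay behavior described here can be sketched with an in-memory stand-in for the durable log. The `EventLog` class, its method names, and the event fields are hypothetical and exist only for this illustration; a real streaming platform exposes its own offset and seek APIs.

```python
from dataclasses import dataclass, field


@dataclass
class EventLog:
    """Append-only, ordered log; offsets are simply list positions."""
    _events: list = field(default_factory=list)

    def append(self, ts: float, device: str, kind: str) -> int:
        self._events.append({"ts": ts, "device": device, "kind": kind})
        return len(self._events) - 1  # offset of the event just written

    def seek_by_time(self, ts: float) -> int:
        """Return the first offset whose timestamp is >= ts."""
        for offset, ev in enumerate(self._events):
            if ev["ts"] >= ts:
                return offset
        return len(self._events)

    def replay(self, from_offset: int):
        """Iterate events in order, starting at the given offset."""
        return iter(self._events[from_offset:])


def flaps_in_window(log, device, now, window_s=24 * 3600):
    """Count interface flaps for one device over the preceding window."""
    start = log.seek_by_time(now - window_s)
    return sum(
        1
        for ev in log.replay(start)
        if ev["device"] == device and ev["kind"] == "interface_flap"
    )


log = EventLog()
now = 100_000.0
log.append(5_000.0, "r1", "interface_flap")    # older than 24 hours
log.append(20_000.0, "r1", "interface_flap")
log.append(50_000.0, "r1", "interface_flap")
log.append(60_000.0, "r2", "interface_flap")   # different device
log.append(90_000.0, "r1", "interface_flap")
log.append(now, "r1", "interface_flap")        # the flap that woke the agent

flap_count = flaps_in_window(log, "r1", now)   # current flap plus three prior
```

An agent that sees four flaps on the same device in 24 hours can reason about a degrading component rather than an isolated event. A batch window that contains only the latest flap gives it no basis for that conclusion.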

Failure Mode 3: Delete Blindness

Mechanism: A batch pipeline's Extract, Transform, Load (ETL) processes compare snapshots of source data. They do not watch the database transaction log. They identify what exists at two points in time and process the difference. When a record is deleted from the source system, the pipeline has no way of distinguishing between a row that was deleted and a row that was simply omitted due to extraction error, filtering logic, or schema mismatch. The absence of a row is not an event. It is a gap. Batch pipelines are not designed to interpret gaps as meaningful signals. The record simply vanishes from the next extract. The downstream consumer, whether an AI agent or any other system, has no way of knowing the record ever existed.

Consequence: The agent queries the downstream data store and finds no record for a deactivated account, a revoked certificate, or a cancelled change order. It cannot distinguish between “never existed” and “was deleted,” so it treats the absence as neutral.

The agent makes decisions on ghosts—data that no longer exists in source systems. In access control scenarios, this is not an operational error. It is a security incident. This specific failure mode has been identified in analyses of batch processing limitations for AI agents [6].

Architectural Remedy: Change data capture (CDC), implemented through Kafka Connect with Debezium connectors, reads the database transaction log directly [2], [8]. Debezium provides CDC source connectors for MySQL, PostgreSQL, MongoDB, SQL Server, and other databases. By tailing each database's native transaction log, the connectors capture inserts, updates, and deletes as discrete events with explicit operation types. Nothing is invisible to the pipeline. The streaming architecture knows not only what exists but what ceased to exist. This is not an ETL workaround with soft-delete flags. It is a structural capability of the integration layer, converting database changes into first-class events the moment they occur.
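The difference between a snapshot diff and explicit delete events can be illustrated with a short sketch. The event dictionaries below are hand-written and loosely modeled on the Debezium change-event envelope, with `op` set to `c`, `u`, `d`, or `r` and with `before` and `after` row images. They are simplified assumptions, not output from a real connector, and the certificate records are hypothetical.

```python
def snapshot_diff(previous: dict, current: dict) -> dict:
    """What a batch job can infer: keys that went missing, with no reason attached."""
    return {"missing": sorted(set(previous) - set(current))}


def apply_change_events(events):
    """Build current state plus an explicit record of what was deleted."""
    state, deleted = {}, {}
    for ev in events:
        op = ev["op"]
        if op in ("c", "r", "u"):      # create, snapshot read, update
            row = ev["after"]
            state[row["id"]] = row
            deleted.pop(row["id"], None)
        elif op == "d":                # delete: only the 'before' image is set
            row = ev["before"]
            state.pop(row["id"], None)
            deleted[row["id"]] = row
        else:
            raise ValueError(f"unknown op: {op}")
    return state, deleted


events = [
    {"op": "c", "before": None, "after": {"id": "cert-42", "status": "active"}},
    {"op": "c", "before": None, "after": {"id": "cert-43", "status": "active"}},
    {"op": "d", "before": {"id": "cert-42", "status": "active"}, "after": None},
]
state, deleted = apply_change_events(events)

# The batch view of the same change: cert-42 is simply absent.
diff = snapshot_diff(
    {"cert-42": {"status": "active"}, "cert-43": {"status": "active"}},
    {"cert-43": {"status": "active"}},
)
```

With the change events, an agent asked about `cert-42` can answer "was deleted" rather than "never existed". The snapshot diff reports only that the key is missing, which is equally consistent with an extraction error.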

Failure Mode 4: Schema Fragility

Mechanism: Source database schemas change over time. Columns are renamed, added, deprecated, or re-typed. Batch pipelines are configured for a specific schema at extraction time. When the source schema changes, the pipeline responds in one of two ways. It fails silently and drops the affected field from every subsequent extract. Or it fails loudly and stops processing entirely.

Silent failure is the more dangerous outcome. The pipeline continues delivering data. The consumer has no indication that a critical field is missing.

Consequence: The agent continues operating without a critical data input. It makes decisions with incomplete information. It has no awareness that its reasoning is compromised. The wrong decisions accumulate. By the time the missing field is discovered—often through an operational failure rather than a monitoring alert—the cost of remediation includes auditing and correcting every decision made during the degradation window.

Architectural Remedy: A schema registry with compatibility enforcement validates schema changes before they propagate to downstream consumers [2]. Streaming platforms can enforce backward and forward compatibility rules at the producer level. A breaking schema change is rejected before any data is published. The pipeline fails loudly and immediately. This is not a documentation standard or a code review checklist. It is a structural governance layer embedded in the streaming architecture itself, preventing silent field loss at the point of ingestion.
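A much-simplified sketch of the compatibility check follows. It assumes a schema is a plain dictionary mapping field names to a type and an optional default, and it ignores details that real registries handle, such as type promotion and nested records. The `register` function and the field names are hypothetical; this is not the API of any actual schema registry.

```python
def backward_violations(old: dict, new: dict) -> list:
    """Problems a consumer on the NEW schema would hit when reading OLD data."""
    problems = []
    for name, spec in new.items():
        if name not in old and "default" not in spec:
            problems.append(f"added field '{name}' has no default")
        elif name in old and old[name]["type"] != spec["type"]:
            problems.append(
                f"field '{name}' changed type {old[name]['type']} -> {spec['type']}"
            )
    return problems


def forward_violations(old: dict, new: dict) -> list:
    """Problems a consumer on the OLD schema would hit when reading NEW data."""
    return [
        f"removed field '{name}' has no default"
        for name, spec in old.items()
        if name not in new and "default" not in spec
    ]


def register(old: dict, new: dict) -> dict:
    """Accept the new schema only if it passes both checks; otherwise fail loudly."""
    problems = backward_violations(old, new) + forward_violations(old, new)
    if problems:
        raise ValueError("; ".join(problems))
    return new


v1 = {"device": {"type": "string"}, "rx_errors": {"type": "long"}}
# A column rename looks like one removed field plus one added field.
v2_rename = {"device": {"type": "string"}, "rx_errs": {"type": "long"}}
# An additive change with a default is safe in both directions.
v2_additive = {**v1, "site": {"type": "string", "default": "unknown"}}

try:
    register(v1, v2_rename)
    rename_rejected = False
except ValueError:
    rename_rejected = True

accepted = register(v1, v2_additive)
```

The rename is the case that a batch pipeline would absorb silently by dropping `rx_errors` from every later extract. Here it is rejected at registration time, before any consumer sees data without the field.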

Failure Mode 5: Coordination Failure

Mechanism: When multiple AI agents operate on batch-derived data, each agent consumes a separate, potentially inconsistent snapshot. Agent A receives data from the 10:00 AM extract. Agent B receives data from the 10:15 AM extract. The extracts differ. Each agent holds a different version of reality. There is no shared, ordered log of events that all agents consume.

Consequence: Two agents respond to the same cascading failure. Agent A identifies a BGP routing issue and begins rerouting traffic. Agent B identifies a DNS resolution failure and begins modifying name server configurations. Neither agent knows the other acted. The redundant changes compete. The conflicting configurations create new instability. The original incident expands rather than resolves. What began as a single point of failure becomes a cascade that erodes trust in autonomous operations.

Architectural Remedy: A shared, ordered event log serves as a single source of truth for all agents in the system. Every agent consumes from the same log. Actions taken by one agent are published back to the log as events, immediately visible to all others [7]. Coordination becomes native to the architecture.

Visibility alone, however, does not prevent conflicting actions. Two agents may observe the same anomaly and both initiate remediation before either’s action becomes visible on the log. In practice, this is addressed through complementary mechanisms layered on the same event-driven model: action intent events that signal an agent is about to act, giving others a window to defer; idempotency keys that prevent duplicate remediation from causing harm; and lightweight leases for resources that should only be modified by one agent at a time. These mechanisms do not require a central coordinator. They are published to the same log, consumed by the same agents, and enforced through the same ordered stream.

This is not a separate orchestration layer or message bus bolted onto the side. It is the core of the streaming platform—a unified, ordered, multi-consumer event stream that provides both the shared state and the coordination primitives that eliminate the inconsistent snapshots batch architectures produce by default.
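One way to picture how intent events, idempotency keys, and leases can work without a central coordinator is the sketch below. It assumes that every agent replays the same ordered log and applies the same rule, so log order acts as the tie-breaker. The event shapes, agent names, resource names, and keys are all hypothetical.

```python
def resolve(log):
    """Replay the shared log; return (current lease holders, granted actions)."""
    leases = {}        # resource -> agent currently holding the lease
    seen_keys = set()  # idempotency keys that have already been granted
    granted = []
    for ev in log:
        if ev["type"] == "intent":
            # The first intent for a free resource takes the lease.
            holder = leases.setdefault(ev["resource"], ev["agent"])
            if holder != ev["agent"]:
                continue  # another agent holds the lease: defer
            if ev["key"] in seen_keys:
                continue  # duplicate of an action already granted
            seen_keys.add(ev["key"])
            granted.append((ev["agent"], ev["resource"], ev["key"]))
        elif ev["type"] == "release":
            if leases.get(ev["resource"]) == ev["agent"]:
                del leases[ev["resource"]]
    return leases, granted


log = [
    {"type": "intent", "agent": "A", "resource": "edge-router-1", "key": "inc-7/reroute"},
    {"type": "intent", "agent": "B", "resource": "edge-router-1", "key": "inc-7/dns-change"},
    {"type": "intent", "agent": "A", "resource": "edge-router-1", "key": "inc-7/reroute"},
    {"type": "release", "agent": "A", "resource": "edge-router-1"},
    {"type": "intent", "agent": "B", "resource": "edge-router-1", "key": "inc-7/dns-change"},
]
leases, granted = resolve(log)
```

Because the function is deterministic over the log, Agent A and Agent B reach the same conclusion independently: B's first intent is deferred, A's retried intent is ignored as a duplicate, and B proceeds only after A releases the resource. A production design would also need lease expiry for agents that fail mid-action, which this sketch omits.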

Figure: Batch-to-Streaming Reference Architecture: Five Failure Modes and Their Architectural Remedies

THE UNIFIED DIAGNOSTIC FRAMEWORK

The five failure modes translate into a practical audit that I&O leaders can apply to their own infrastructure. Each question corresponds to a specific architectural requirement.

The Five-Question Audit

- Can the data pipeline deliver real-time context to an agent the moment it wakes up? If not, the system is vulnerable to stale data failures.

- Can the agent access the preceding window of telemetry to detect patterns across events? If not, the system is vulnerable to memory gap failures.

- Does the pipeline capture deletes as explicit events with operation types? If not, the system is vulnerable to delete blindness.

- Does the pipeline detect schema changes before they propagate to downstream consumers? If not, the system is vulnerable to schema fragility.

- Do all agents share a single, ordered view of events with visibility into each other’s actions? If not, the system is vulnerable to coordination failure.

A negative answer to any one of these questions signals a data foundation that is not ready for autonomous operations. The model is not the bottleneck. The GPUs are not the bottleneck. The telemetry pipeline is.
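For teams that want to track the audit alongside other readiness checks, the five questions can be encoded as a small checklist. This is a minimal sketch; the question identifiers and the sample answers are assumptions made up for the example.

```python
# Each audit question, paired with the failure mode that a "no" exposes.
AUDIT = [
    ("real_time_context_on_wake", "stale data"),
    ("replayable_telemetry_window", "memory gap"),
    ("deletes_as_explicit_events", "delete blindness"),
    ("schema_changes_checked_before_propagation", "schema fragility"),
    ("shared_ordered_view_across_agents", "coordination failure"),
]


def audit(answers: dict) -> list:
    """Return the failure modes the pipeline is exposed to.

    An unanswered question is treated as a "no".
    """
    return [failure for question, failure in AUDIT if not answers.get(question, False)]


# Hypothetical answers for a pipeline with streaming ingest but no CDC or registry.
answers = {
    "real_time_context_on_wake": True,
    "replayable_telemetry_window": True,
    "deletes_as_explicit_events": False,
    "shared_ordered_view_across_agents": True,
}
exposures = audit(answers)
ready_for_autonomy = not exposures
```

Treating a missing answer as a negative mirrors the rule above: any single gap is enough to mark the data foundation as not ready.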

THE MIGRATION PATH: FROM BATCH TO STREAMING-FIRST

Adopting a streaming-first architecture does not require abandoning existing batch investments overnight. For most organizations, the transition follows a coexistence model: streaming pipelines are introduced alongside batch pipelines, not as an immediate replacement.

The practical starting point is to identify the highest-value agent—the one whose decisions carry the greatest operational or financial consequence—and convert its data pipeline first. This agent is typically the one where stale data, memory gaps, or coordination failures have produced measurable incidents. Converting this single pipeline to streaming telemetry with a durable event log delivers a targeted operational improvement while the rest of the batch estate continues to function.

From there, adoption expands incrementally. Each additional agent is migrated as operational experience with the streaming platform grows. Teams develop competence in offset management, schema governance through the registry, and backpressure handling while batch pipelines continue to serve lower-priority consumers. The streaming and batch estates coexist for a transition period measured in months, not days.

This incremental approach also reveals where streaming delivers the greatest marginal benefit. Not every data flow requires real-time treatment. Dashboards fed by hourly batch extracts may serve their purpose indefinitely. The streaming investment should be directed at the pipelines that feed autonomous agents—the flows where the five failure modes carry real operational consequence. The goal is not to stream everything. It is to stream the right things first.

THE BUSINESS IMPACT: FROM TECHNICAL FAILURE TO FINANCIAL CONSEQUENCE

Technical failures in the data pipeline do not remain technical. They cascade into business outcomes that appear on budget reviews, SLA reports, and board presentations. Each failure mode carries a distinct financial consequence.

Stale Data → Extended Downtime