IMT Future Technology Trends

Nokia and Rohde & Schwarz collaborate on AI-powered 6G receiver years before IMT 2030 RIT submissions to ITU-R WP5D

Nokia and the test and measurement firm Rohde & Schwarz have created and successfully tested a “6G” radio receiver that uses AI technologies to overcome one of the biggest anticipated challenges of 6G network rollouts: the coverage limitations inherent in 6G’s higher-frequency spectrum.

This is truly astonishing, as ITU-R WP5D doesn’t even plan to evaluate 6G RIT/SRITs until February 2027, when the first submissions are invited to be presented.

Nokia Bell Labs developed the receiver and validated it using 6G test equipment and methodologies from Rohde & Schwarz. The two companies will unveil a proof-of-concept receiver at the Brooklyn 6G Summit on November 6, 2025. Nokia says, “the machine learning capabilities in the receiver greatly boost uplink distance, enhancing coverage for future 6G networks. This will help operators roll out 6G over their existing 5G footprints, reducing deployment costs and accelerating time to market.”

Image Credit: Rohde & Schwarz

Nokia Bell Labs and Rohde & Schwarz have tested this new AI receiver under real world conditions, achieving uplink distance improvements over today’s receiver technologies ranging from 10% to 25%. The testbed comprises an R&S SMW200A vector signal generator, used for uplink signal generation and channel emulation. On the receive side, the newly launched FSWX signal and spectrum analyzer from Rohde & Schwarz is employed to perform the AI inference for Nokia’s AI receiver. In addition to enhancing coverage, the AI technology also demonstrates improved throughput and power efficiency, multiplying the benefits it will provide in the 6G era.
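To put the reported 10% to 25% range gain in perspective, the sketch below converts a fractional increase in uplink distance into the equivalent extra cell area and link-budget gain. It assumes a circular cell and a simple log-distance path-loss model. The path-loss exponent `n` (2.0 for free space, roughly 3.5 as a typical urban value) and the function names are illustrative assumptions, not figures or methods from Nokia or Rohde & Schwarz.

```python
import math

def area_gain(range_gain: float) -> float:
    """Fractional increase in circular cell area for a fractional range increase."""
    return (1.0 + range_gain) ** 2 - 1.0

def link_budget_gain_db(range_gain: float, n: float = 2.0) -> float:
    """Extra link budget (dB) needed to extend range under log-distance path loss.

    n is the assumed path-loss exponent (2.0 corresponds to free space).
    """
    return 10.0 * n * math.log10(1.0 + range_gain)

# Evaluate the two ends of the reported 10% to 25% range improvement.
for g in (0.10, 0.25):
    print(f"range +{g:.0%}: area +{area_gain(g):.1%}, "
          f"{link_budget_gain_db(g):.2f} dB (n=2.0), "
          f"{link_budget_gain_db(g, 3.5):.2f} dB (n=3.5)")
```

Under these assumptions, a 25% range increase corresponds to roughly 56% more cell area and is equivalent to about 1.9 dB of extra link budget in free space, or about 3.4 dB with an exponent of 3.5.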

“One of the key issues facing future 6G deployments is the coverage limitations inherent in 6G’s higher-frequency spectrum. Typically, we would need to build denser networks with more cell sites to overcome this problem. By boosting the coverage of 6G receivers, however, AI technology will help us build 6G infrastructure over current 5G footprints,” said Peter Vetter, President, Core Research, Bell Labs, Nokia.
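The coverage penalty of higher-frequency spectrum can be illustrated with the standard free-space path loss formula. The sketch below is a simplified illustration only: the 3.5 GHz and 7 GHz carrier frequencies are example values chosen here (the article does not name specific bands), antenna gains are assumed equal at both frequencies, and real deployments see additional losses from obstacles and building penetration.

```python
import math

def fspl_db(distance_km: float, freq_mhz: float) -> float:
    """Free-space path loss in dB (distance in km, frequency in MHz)."""
    return 20.0 * math.log10(distance_km) + 20.0 * math.log10(freq_mhz) + 32.44

def range_ratio(freq_low_mhz: float, freq_high_mhz: float) -> float:
    """Relative free-space range at the higher frequency for equal path loss."""
    return freq_low_mhz / freq_high_mhz

# Example (assumed) carrier frequencies: 3.5 GHz versus 7 GHz at 1 km.
extra_loss = fspl_db(1.0, 7000.0) - fspl_db(1.0, 3500.0)
print(f"extra loss at 7 GHz vs 3.5 GHz: {extra_loss:.2f} dB")
print(f"range at equal path loss: {range_ratio(3500.0, 7000.0):.0%} of the 3.5 GHz range")
```

In this idealized model, doubling the carrier frequency costs about 6 dB and halves the reachable distance, which is why denser networks would otherwise be needed and why receiver-side gains matter.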

“Rohde & Schwarz is excited to collaborate with Nokia in pioneering AI-driven 6G receiver technology. Leveraging more than 90 years of experience in test and measurement, we’re uniquely positioned to support the development of next-generation wireless, allowing us to evaluate and refine AI algorithms at this crucial pre-standardization stage. This partnership builds on our long history of innovation and demonstrates our commitment to shaping the future of 6G,” said Michael Fischlein, VP, Spectrum & Network Analyzers, EMC and Antenna Test, Rohde & Schwarz.

…………………………………………………………………………………………………………………………………………………………………………………………

Last month, Nokia teamed up with rival kit vendor Ericsson to work on video coding standardization in preparation for 6G. The project, which also involved Berlin’s Fraunhofer Heinrich Hertz Institute (HHI), demonstrated a new video codec that the partners claim has higher compression efficiency than the current standards (H.264/AVC, H.265/HEVC, and H.266/VVC) without significantly increasing complexity. Its wider aim, we were told at the time, is to strengthen Europe’s role in next-generation standardization.

…………………………………………………………………………………………………………………………………………………………………………………………

About Nokia:

At Nokia, we create technology that helps the world act together.

As a B2B technology innovation leader, we are pioneering networks that sense, think and act by leveraging our work across mobile, fixed and cloud networks. In addition, we create value with intellectual property and long-term research, led by the award-winning Nokia Bell Labs, which is celebrating 100 years of innovation.

With truly open architectures that seamlessly integrate into any ecosystem, our high-performance networks create new opportunities for monetization and scale. Service providers, enterprises and partners worldwide trust Nokia to deliver secure, reliable and sustainable networks today – and work with us to create the digital services and applications of the future.

About Rohde & Schwarz:

Rohde & Schwarz is striving for a safer and connected world with its Test & Measurement, Technology Systems and Networks & Cybersecurity Divisions. For over 90 years, the global technology group has pushed technical boundaries with developments in cutting-edge technologies. The company’s leading-edge products and solutions empower industrial, regulatory and government customers to attain technological and digital sovereignty. The privately owned, Munich-based company can act independently, long-term and sustainably. Rohde & Schwarz generated a net revenue of EUR 3.16 billion in the 2024/2025 fiscal year (July to June). On June 30, 2025, Rohde & Schwarz had more than 15,000 employees worldwide.

References:

ITU-R WP5D IMT 2030 Submission & Evaluation Guidelines vs 6G specs in 3GPP Release 20 & 21

ITU-R WP 5D reports on: IMT-2030 (“6G”) Minimum Technology Performance Requirements; Evaluation Criteria & Methodology

Market research firms Omdia and Dell’Oro: impact of 6G and AI investments on telcos

Nvidia pays $1 billion for a stake in Nokia to collaborate on AI networking solutions

Highlights of Nokia’s Smart Factory in Oulu, Finland for 5G and 6G innovation

Verizon’s 6G Innovation Forum joins a crowded list of 6G efforts that may conflict with 3GPP and ITU-R IMT-2030 work

Qualcomm CEO: expect “pre-commercial” 6G devices by 2028

NGMN: 6G Key Messages from a network operator point of view

ITU-R WP 5D reports on: IMT-2030 (“6G”) Minimum Technology Performance Requirements; Evaluation Criteria & Methodology

Introduction:

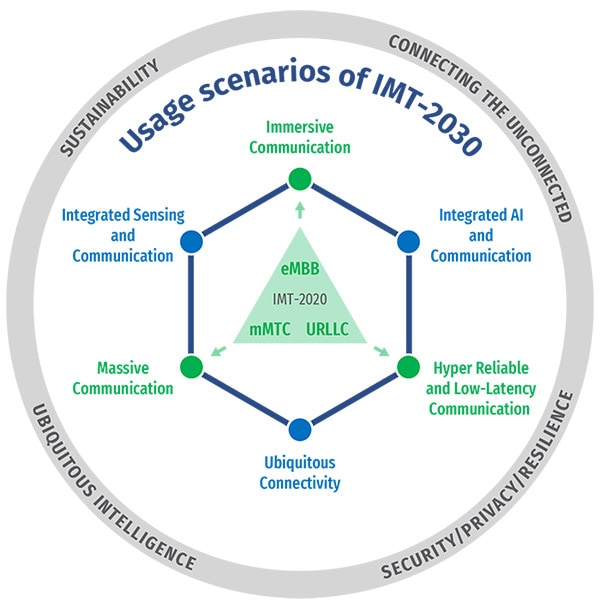

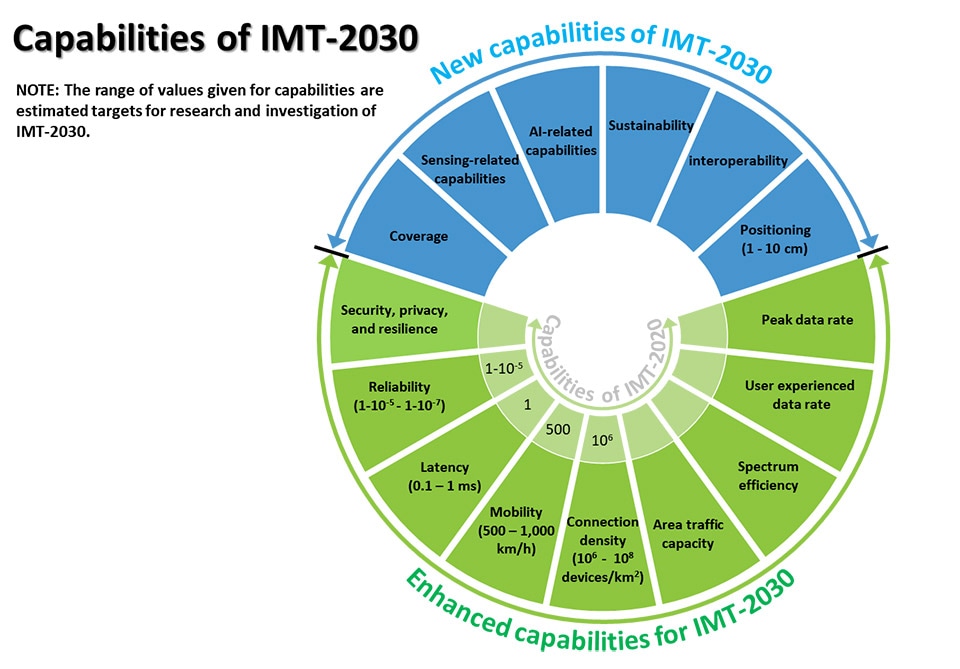

Recommendation ITU R M.2160 ‒ “Framework and overall objectives of the future development of IMT for 2030 and Beyond” identifies IMT-2030 capabilities which aim to make IMT-2030 (6G) more capable, flexible, reliable and secure than previous IMT systems when providing diverse and novel services in the intended six usage scenarios, including immersive communication, hyper reliable and low latency communication (HRLLC), massive communication, ubiquitous connectivity, artificial intelligence and communication, and integrated sensing and communication (ISAC).

IMT-2030 can be considered from multiple perspectives, including users, manufacturers, application developers, network operators, verticals, and service and content providers. Therefore, it is recognized that technologies for IMT-2030 can be applied in a variety of deployment scenarios and can support a range of environments, service capabilities, and technology options.

IMT-2030 is also expected to be built on overarching aspects which act as design principles commonly applicable to all usage scenarios. These distinguishing design principles of IMT-2030 include, but are not limited to, sustainability, security and resilience, connecting the unconnected to provide universal and affordable access to all users regardless of location, and ubiquitous intelligence for improving overall system performance.

ITU-R WP 5D February 2025 Meeting Highlights:

1. At the February 2025 meeting of ITU-R WP 5D, a large number of contributions were discussed on the development of a draft report titled “Minimum technical performance requirements (TPRs) for IMT-2030 (“6G”) radio interface(s) [IMT-2030.TECH PERF REQ].” That work is being done in the Technology Aspects WG along with all other IMT-2030 projects.

This Report describes key requirements related to the minimum technical performance of IMT-2030 candidate radio interface technologies. It also provides the necessary background information about the individual requirements and the justification for the items and values chosen. Provision of such background information is needed for a broader understanding of the requirements. After discussion of the contributions, a preliminary list of minimum TPRs was created and the working document was updated. In total, eleven sessions were held, including three Drafting Groups that addressed requirements related to artificial intelligence, energy efficiency and joint requirements. This Report is based on the ongoing development activities of external research and technology organizations.

IMT 2030 performance requirements are to be evaluated according to the criteria defined in Report ITU-R M.[IMT‑2030.EVAL] and Report ITU-R M.[IMT-2030.SUBMISSION] for the development of IMT-2030 recommendations.

2. This WP5D meeting also discussed contributions on “Evaluation criteria and methodology for IMT-2030” [IMT-2030.EVAL] and updated the working document. The discussion focused on a number of subjects including test environments, mapping between TPRs and test environments, and a high-level view of TPR evaluation methodologies.

3. WP5D SWG Coordination started revising Document IMT-2030/2 (Submission, evaluation process and consensus building) in order to incorporate decisions to be made on criteria related to test environments and other subjects.

4. At its next meeting (July 2025 in Japan), WP5D Technology Aspects WG will:

- continue working on revision of IMT-2030/2 “Process” – submission, evaluation process and consensus building process for IMT-2030;

- start to work on candidate technology submission template for IMT-2030;

- continue working on ITU-R M.[IMT-2030.TECH PERF REQ] – minimum requirements related to technical performance for IMT-2030 radio interface(s);

- continue working on M.[IMT-2030.EVAL] – Guidelines for evaluation of radio interface technologies for IMT-2030;

……………………………………………………………………………………………………………………….

During 3GPP Technical Specification Group RAN’s meeting RAN#106, in Madrid on December 12th, an important 6G study item was approved. The study represents a significant milestone in 3GPP’s interactions with ITU on 6G technical performance requirements (TPRs), as future deployment scenarios, requirements and potential directions of 6G radio access technologies are further identified and investigated in 3GPP. The 3GPP study item (details in RP-243327) aims to investigate a candidate set of items for minimum TPRs based on Recommendation ITU-R M.2160 and, where applicable, the associated target values and key assumptions for the identified minimum TPRs.

The outcome is expected to be shared by Liaison Statement with ITU-R WP5D and used as a baseline for the subsequent 6G study in RAN.

Expected output and time scale: A 38-series Technical Report, ‘Study on 6G Scenarios and requirements’, scheduled for RAN#112 in June 2026.

…………………………………………………………………………………………………………………

References:

ITU-R: IMT-2030 (6G) Backgrounder and Envisioned Capabilities

ITU-R WP5D invites IMT-2030 RIT/SRIT contributions

NGMN issues ITU-R framework for IMT-2030 vs ITU-R WP5D Timeline for RIT/SRIT Standardization

IMT-2030 Technical Performance Requirements (TPR) from ITU-R WP5D

https://www.3gpp.org/news-events/3gpp-news/ran-6g-study1

Ericsson and e& (UAE) sign MoU for 6G collaboration vs ITU-R IMT-2030 framework

IALA describes maritime use cases and applications for 5G Radio Interface Technologies (IMT 2020 RITs)

The International Organization for Marine Aids to Navigation (IALA) has been developing use cases and service requirements for Marine Aids to Navigation (Marine AtoN), including regulatory aspects for maritime safety, which may serve as input to support the development of IMT-2030 (6G RIT/SRIT) standardization. IALA has detailed some of the IMT-2020 (5G RIT/SRIT) use cases that have been applied in the maritime sector. It is requesting that ITU-R WP5D include those maritime use cases in section 5.10 of the working document for the preliminary draft revision of Report ITU-R M.2527-0, titled “Applications of the Terrestrial Component of International Mobile Telecommunications for Specific Societal, Industrial, and Other Usages.”

IMT-2020 and beyond systems can be used to address such specific needs, such as:

− secure mechanism to associate the identity of an IMT-based device with a vessel identity;

− direct communication among vessels;

− communication between shore-based operations centers and vessels/unmanned aerial vehicles (UAVs)/unmanned underwater vehicles (UUVs);

− determining accurate position, heading and speed of IMT-based devices associated with a vessel identity, e.g. for maritime emergency requests or assisting IMT-based devices associated with other vessels with safety information;

− mechanisms for distributing a maritime emergency request received from a user equipment (UE) to other UEs on a vessel;

− digitized workflows and processes, e.g. digital bunkering.

……………………………………………………………………………………………………………………..

Here are a few maritime use cases of IMT 2020:

1. Pilotage service and tug service

The use case on pilotage service is to provide shipboard users such as a pilot or a shipmaster and shore-based users such as pilot authorities, pilot organization or bridge personnel the exact information necessary to maneuver vessels over IMT systems through pilotage areas such as dangerous or congested waters and harbors or to anchor vessels in a harbor to safeguard traffic at sea and protect the environment.

A tug is a boat or ship that maneuvers vessels by pushing or towing them. Tugs move vessels that either should not move by themselves (e.g. vessels passing in a narrow canal, berthing and unberthing operations) or those that cannot move by themselves (e.g. barges, disabled ships, oil platforms). The use case of tug service is described for ship assistance (e.g. mooring), towage (in harbor/ocean), or escort operations to safeguard traffic at sea and protect the environment by IMT systems.

2. Autonomous surface ships

The autonomous surface ship is one of the main streams of the digital transformation of the maritime sector. Demand for high-performance maritime communication technologies is expected to skyrocket once autonomous surface ships become pervasive at sea or in rivers. In general, most ships are designed for a life of 25 to 30 years, which means that multiple radio access technologies are highly likely to coexist in the maritime sector across two or three generational evolutions of IMT systems, which have so far evolved every ten years. IMT-2020 technologies support multiple radio access technologies (RATs).
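The "two or three generational evolutions" figure follows from simple arithmetic, sketched below. The function name and the assumption of a fixed ten-year cycle are illustrative; actual generation timing varies.

```python
def generational_transitions(ship_life_years: int, generation_cycle_years: int = 10) -> int:
    """Number of complete IMT generation cycles that fit within a ship's service life."""
    return ship_life_years // generation_cycle_years

# Design life of 25 to 30 years, one new IMT generation roughly every 10 years.
for life in (25, 30):
    print(life, "years ->", generational_transitions(life), "generation changes")
```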

The size of autonomous ships varies, with lengths ranging from a few meters to a few hundred meters. For an autonomous ship a few hundred meters long, the communications environment on its deck or inside the ship may be similar to that of a smart factory, smart farm, or smart campus, where local networks over IMT-2020 and beyond systems provide mobile services only within their own premises. The IMT-2020 technologies related to non-public networks can provide mobile communication services for crew, passengers, or Internet of Things (IoT) devices integrated into the ship’s navigation systems, whether on deck or inside the ship.

In addition, direct communication between two ships is possible over IMT-2020 technologies. It will help autonomous surface ships efficiently exchange information related to their navigation and maritime safety, and avoid delays in information delivery that could create a risk of collision between autonomous surface ships. IMT-2020 and beyond systems are expected to continue enhancing support for direct communication among ships, providing much longer communication range that is sufficient to satisfy the requirements of the maritime sector.

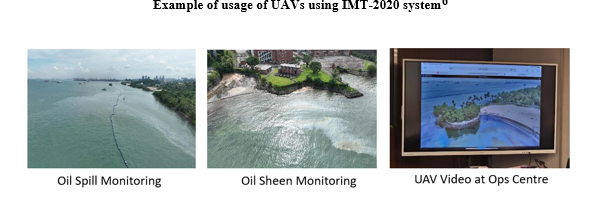

3. UAVs

As decarbonization efforts intensify in the maritime sector, novel ways are needed to reduce the carbon footprint of maritime operations in port waters. UAVs are one such example. Take, for example, the management of an oil spill within port waters, where multiple drone flights were conducted to capture high-quality video footage for transmission back to the shore-based operations center via an IMT-2020 system.

Live high quality video footage was crucial to monitor and predict the movement of oil spills affected by waves, tides and wind, validate oil spill models, and allow better deployment of response assets.

Real-time video streaming service

Maritime incidents in port waters are unavoidable, and speed is of the essence in returning port and maritime operations to normal. To ensure that emergency response teams are equipped to do so, standard operating procedures need to be periodically rehearsed and practiced to deal with such contingencies. During a ferry rescue exercise in August 2024, a simulated collision between two domestic ferries, one electric and one diesel-powered, was carried out within port waters. The collision resulted in severe damage to the hull of the diesel-powered ferry, causing the vessel to take on water. A UUV was deployed from a vessel to inspect the hull of the diesel-powered ferry, and live high-quality video footage was sent over an IMT-2020 system to the shore-based operations centre for hull “damage” assessment to aid “rescue” operations.

Virtual marine Aids to Navigation (AtoN)

The term ‘marine Aids to Navigation (AtoN)’ means a device, system, or service that is external to a vessel and is designed and operated to enhance the safe and efficient navigation of all vessels or vessel traffic. Examples of conventional AtoNs include lighthouses, buoys, and day beacons. The maritime sector has recently employed virtual marine AtoNs, whose position information is broadcast so that ships can identify them even though they do not physically exist at sea. IMT-2020 technologies can be used to broadcast the location of virtual marine AtoNs to ships sailing nearby. In addition, enhanced direct communication with a range sufficient for the maritime sector is expected to make IMT-2020 and beyond systems attractive to the maritime sector, because it may help overcome the constraints of the maritime communication environment, where network infrastructure is limited compared to the terrestrial environment.
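As a rough illustration of how a ship might use broadcast virtual AtoN positions, the sketch below filters received records by great-circle distance from the ship. The `VirtualAtoN` record, its fields, the example coordinates and the 20 km radius are all hypothetical; they are not taken from any IALA, ITU-R or 3GPP message format.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_KM = 6371.0  # mean Earth radius

@dataclass(frozen=True)
class VirtualAtoN:
    """Hypothetical broadcast record for a virtual aid to navigation."""
    name: str
    lat_deg: float
    lon_deg: float

def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in km between two points given in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def atons_in_range(ship_lat, ship_lon, atons, radius_km):
    """Return the broadcast AtoNs within radius_km of the ship's position."""
    return [a for a in atons
            if haversine_km(ship_lat, ship_lon, a.lat_deg, a.lon_deg) <= radius_km]

# Hypothetical records received over the air, and an example ship position.
atons = [VirtualAtoN("wreck-marker", 1.20, 103.80),
         VirtualAtoN("channel-edge", 1.30, 104.50)]
nearby = atons_in_range(1.25, 103.85, atons, radius_km=20.0)
print([a.name for a in nearby])
```

A real system would also need message authentication and validity periods, which are omitted here.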

Maritime services

The IMT-2020 technologies provide features that are useful for the communication among authorities, the emergency request, or the public warning. Mission critical services (e.g. mission critical push to talk, mission critical data service) over IMT-2020 system are applicable to the marine usage by enabling coast guard ships to efficiently exchange the information even in an isolated network at sea where coast guard ships are away from a shore and are unable to be connected to a core network on land.

IMT-2020 systems also provide public warning features that are related to regulatory requirements. Further enhancement of IMT-2020 technologies is expected to ensure that information related to marine regulatory requirements is integrated into these public warning features.

Digitalization of bunkering processes and documentation such as electronic bunker delivery notes (eBDN), in alignment with IMO regulation 18 of MARPOL Annex VI, can be achieved with an IMT-2020 system within port waters. This will help to improve efficiency and productivity, increase transparency, enhance crew safety and facilitate interoperability between different systems.

Other use cases in ports

Automation, worker safety and worker retention are the key motivations for IMT applications at shipping ports. The world’s largest shipping ports operate 24 hours a day, 7 days a week. In this dynamic environment, worker safety is a major concern. Another pain point for port operators is worker retention due to poor working conditions. For example, crane operators work in tight spaces, high above the ground, for extended periods. Remote control of crane operations, container trucks, and other heavy machinery in ports can alleviate these pain points. For instance, with real-time video streaming and analytics, a crane operator situated at an operations centre may be able to operate multiple lifts and cranes. As a result, remote operations can increase productivity, save labour costs, and improve worker safety.

Real-time video is critical for port security and remote control operations. Video surveillance is essential to maintaining port security, and real-time video surveillance with computer vision can be used to maintain security control and access. In addition to infrastructure security, real-time video is vital for handling heavy machinery, such as cranes and unmanned container trucks, in remote command and control operations. Private IMT-2020 networks promise superior coverage, low latency, and massive machine-type communications with fewer radios than existing RLAN-based meshing networks. While existing RLAN and meshing solutions are fine for fixed wireless applications, they are not reliable in dynamically changing mobile environments such as ports.

Drone inspection of port operations is another interesting IMT application found in shipping ports. In addition to drones, video-mounted cranes and containers tagged with sensors are used to track containers to help locate goods (within containers) in ports. Port operators are increasingly called upon to provide visibility of the supply chain to logistics and trucking companies and end customers in an increasingly connected world. As a result, port operators increasingly seek new technology solutions, such as private IMT-2020 and video analytics, to gain additional operational efficiency and compete against other port operators worldwide.

References:

International Organization for Marine Aids to Navigation LIAISON NOTE TO ITU-R WORKING PARTY 5D, 13 December 2024

3GPP TR 22.819: Feasibility Study on Maritime Communication Services over 3GPP system

3GPP TS 22.119: Maritime Communication Services over 3GPP system; Stage 1

ITU-R: IMT-2030 (6G) Backgrounder and Envisioned Capabilities

IMT Vision – Framework and overall objectives of the future development of IMT for 2030 and beyond

This ITU-R recommendation in progress will be the main focus of next week’s ITU-R WP5D meeting #43 in Geneva. It defines the framework and overall objectives for the development of International Mobile Telecommunications (IMT) for 2030 and beyond. There are contributions related to this recommendation from: Apple, Nokia, Ericsson, Wireless World Research Forum, Motorola Mobility, Orange, United Kingdom of Great Britain and Northern Ireland, Finland, Germany, GSOA, China, Qualcomm, Electronics and Telecommunications Research Institute (ETRI), Brazil, Samsung, ZTE, Huawei, InterDigital, Intel and India, with several being multi-company contributions.

The objective is to reach a consensus on the global vision for IMT-2030 (aka 6G), including identifying the potential user application trends and emerging technology trends, defining enhanced and brand-new usage scenarios and corresponding capabilities, as well as understanding the new spectrum needs.

IMT will continue to better serve the needs of the networked society, for both developed and developing countries, and this Recommendation outlines how that will be accomplished. This Recommendation also intends to encourage industry and administrations to further develop IMT for 2030 and beyond.

The framework of the development of IMT for 2030 and beyond, including a broad variety of capabilities associated with envisaged usage scenarios, is described in detail in this Recommendation.

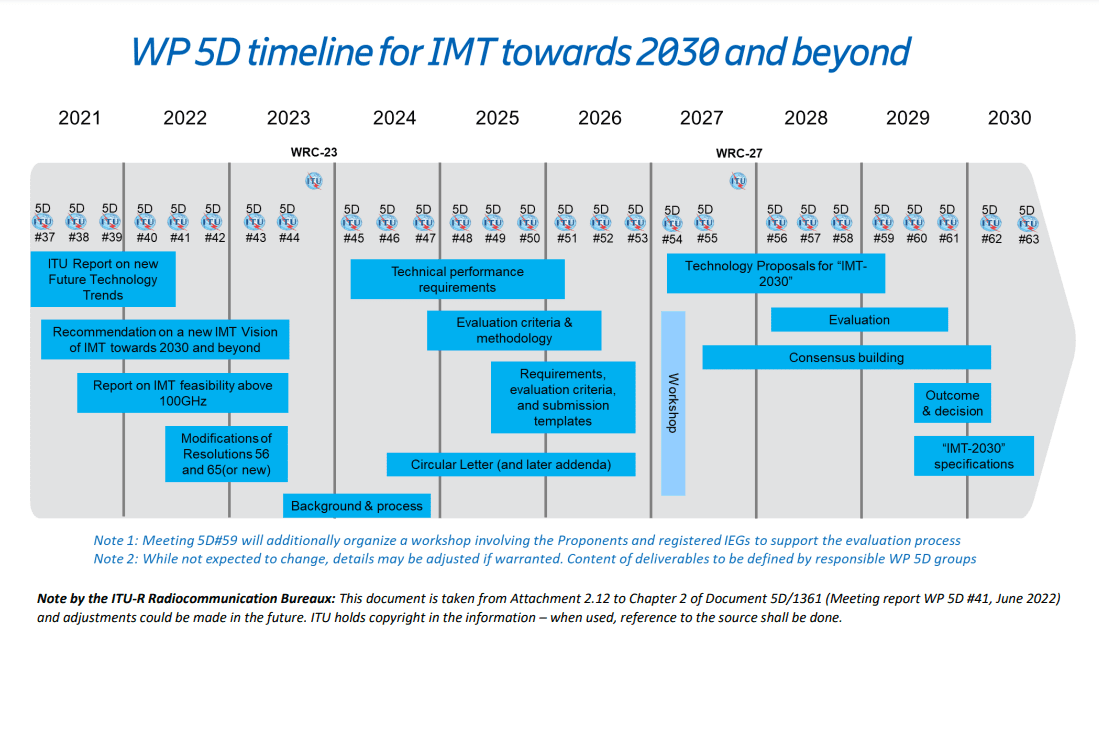

In June 2022, ITU-R decided on the overall timeline for 6G with three major stages:

- Stage 1 – vision definition to be completed in June 2023 before the World Radiocommunication Conference 2023 (WRC-23),

- Stage 2 – requirements and evaluation methodology to be completed in 2026, and

- Stage 3 – specifications to be completed in 2030.

The three-stage timeline and the tasks for each stage are summarized in the accompanying figure.

This draft Recommendation defines a [potential] framework and overall objectives for the development of the terrestrial component of International Mobile Telecommunications (IMT) for 2030 and beyond. IMT will continue to better serve the needs of the [networked] society, for both developed and developing countries in the future and this [Recommendation/document] outlines how [possibly] that could be accomplished. This [Recommendation/document] also intends to encourage further development of IMT-2030. In this [Recommendation/document], the [potential] framework of the development of IMT-2030, including a broad variety of capabilities associated with [some possible] envisaged usage scenarios[, and those yet to be developed and] described in detail. Furthermore, this [Recommendation/document] addresses the objectives for the development of IMT-2030, which includes further enhancement and evolution of existing IMT and the development of IMT-2030.

It should be noted that this Recommendation is defined considering the development of IMT to date based on Recommendation ITU-R M.2083 (approved in September 2015).

Technology Trends:

Report ITU-R M.2516 provides a broad view of future technical aspects of terrestrial IMT systems considering the timeframe up to 2030 and beyond, characterized with respect to key emerging services, applications trends, and relevant driving factors. It comprises a toolbox of technological enablers for terrestrial IMT systems, including the evolution of IMT through advances in technology, and their deployment. In the following sections a brief overview of emerging technology trends, technologies to enhance the radio interface, and technologies to enhance the radio network are presented.

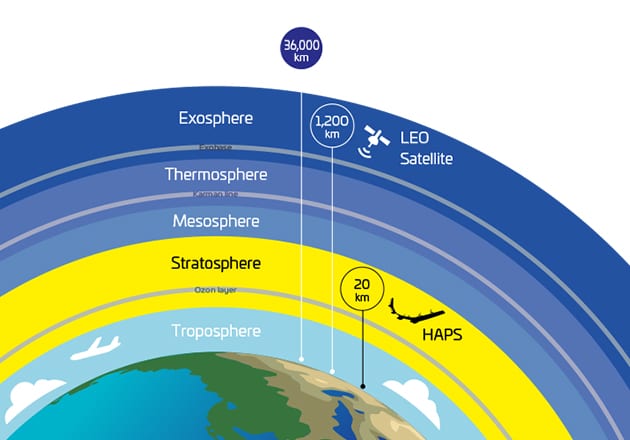

In an important breakthrough, 3GPP Rel-17 established Technical Specifications for Non-Terrestrial Networks (NTN), defining direct satellite access to devices for both 5G and IoT services. This development reflects a trend: satellite and space technologies can offer many benefits for the development and operation of future IMT-2030 networks, helping make 5G and 6G available everywhere and accessible to enterprises and citizens across the globe.

IMT-2030 will consider an AI-native new air interface, meaning the use of AI to enhance radio interface functions such as symbol detection/decoding and channel estimation. An AI-native radio network will enable automated and intelligent networking services such as intelligent data perception and on-demand supply of capabilities. A radio network that supports AI services refers to the design of IMT technologies to serve various AI applications; the proposed directions include on-demand uplink/sidelink-centric design, deep edge, and distributed machine learning. The integration of sensing and communication functions in future wireless systems will provide beyond-communication capabilities by using wireless communication systems more effectively, to the mutual benefit of both functions. Integrated sensing and communication (ISAC) systems will also enable innovative services and applications such as intelligent transportation, gesture and sign language recognition, automatic security, healthcare, air quality monitoring, and solutions with a higher degree of accuracy. Combined with technologies such as AI, network cooperation and multi-node cooperative sensing, the ISAC system will bring benefits in mutual performance, overall cost, size and power consumption of the whole system.
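For context, the sketch below shows the kind of conventional receiver chain that an AI-native air interface would aim to augment or replace: pilot-based least-squares channel estimation followed by nearest-point QPSK symbol detection. It is a deliberately minimal, single-tap, flat-fading illustration with assumed parameters (pilot count, noise level, channel value), not a description of any IMT-2030 proposal or vendor receiver.

```python
import cmath
import random

# QPSK constellation (unnormalized) used for both pilots and data.
QPSK = [complex(1, 1), complex(-1, 1), complex(-1, -1), complex(1, -1)]

def ls_channel_estimate(rx_pilots, tx_pilots):
    """Least-squares estimate of a single complex channel tap from known pilots."""
    num = sum(y * x.conjugate() for y, x in zip(rx_pilots, tx_pilots))
    den = sum(abs(x) ** 2 for x in tx_pilots)
    return num / den

def detect(rx_symbols, h_hat):
    """Equalize with the channel estimate, then pick the nearest QPSK point."""
    return [min(QPSK, key=lambda s: abs(y / h_hat - s)) for y in rx_symbols]

def simulate(num_pilots=8, num_data=32, noise_std=0.05, seed=0):
    """Send pilots and data through y = h*x + noise; return (h, h_hat, symbol errors)."""
    rng = random.Random(seed)
    h = cmath.rect(0.7, 1.1)  # assumed flat-fading tap: magnitude 0.7, phase 1.1 rad

    def noise():
        return complex(rng.gauss(0, noise_std), rng.gauss(0, noise_std))

    pilots = [rng.choice(QPSK) for _ in range(num_pilots)]
    data = [rng.choice(QPSK) for _ in range(num_data)]
    h_hat = ls_channel_estimate([h * x + noise() for x in pilots], pilots)
    detected = detect([h * x + noise() for x in data], h_hat)
    errors = sum(d != t for d, t in zip(detected, data))
    return h, h_hat, errors

h, h_hat, errors = simulate()
print(f"estimation error: {abs(h - h_hat):.4f}, symbol errors: {errors}")
```

In an AI-enhanced receiver, a learned model could stand in for `ls_channel_estimate`, `detect`, or both, for example to cope with channels and impairments that simple closed-form estimators handle poorly.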

Computing services and data services are expected to become an integral component of the future IMT system. Emerging technology trends include processing data at the network edge close to the data source for real-time response, low data transport costs, energy efficiency and privacy protection, as well as scaling out device computing capability for advanced application computing workloads.

Device-to-device (D2D) wireless communication with extremely high throughput, ultra-accurate positioning and low latency will be an important communication paradigm for the future IMT. Technologies such as THz technology, ultra-accurate sidelink positioning and enhanced terminal power reduction technology can be considered to satisfy the requirements of new applications.

Energy efficiency and low power consumption must be addressed from both the user device and the network perspectives. Promising technologies include energy harvesting, backscattering communications, and on-demand access technologies.

To achieve real-time communications with extremely low latency, two essential technology components are considered: accurate time and frequency information shared in the network, and fine-grained, proactive just-in-time radio access.

There is a need to ensure security, privacy, and resilient solutions allowing for the legitimate exchange of sensitive information through network entities. Potential technologies to enhance trustworthiness include those for RAN privacy, such as distributed ledger technologies, differential privacy and federated learning, quantum technology with respect to the RAN and physical-layer security technologies.

UPDATE: https://techblog.comsoc.org/2023/07/09/draft-new-itu-r-recommendation-not-yet-approved-m-imt-framework-for-2030-and-beyond/

References:

Summary of ITU-R Workshop on “IMT for 2030 and beyond” (aka “6G”)

https://www.itu.int/en/ITU-R/study-groups/rsg5/rwp5d/Pages/wsp-imt-vision-2030-and-beyond.aspx

Excerpts of ITU-R preliminary draft new Report: FUTURE TECHNOLOGY TRENDS OF TERRESTRIAL IMT SYSTEMS TOWARDS 2030 AND BEYOND

Development of “IMT Vision for 2030 and beyond” from ITU-R WP 5D

ITU-R: Future Technology Trends for the evolution of IMT towards 2030 and beyond (including 6G)

China’s MIIT to prioritize 6G project, accelerate 5G and gigabit optical network deployments in 2023

ITU-R WP5D: Studies on technical feasibility of IMT in bands above 100 GHz

https://www.itu.int/rec/R-REC-M.2083 (Sept 2015)

ITU-R Reports in Progress: International Mobile Telecommunications (IMT) including IMT 2020

Working documents toward preliminary draft new ITU-R reports from WP5D:

M.[IMT.MULTIMEDIA] – Capabilities of the terrestrial component of IMT-2020 for multimedia communications

M.[IMT.INDUSTRY] – Addresses the usage, technical and operational aspects and capabilities of IMT for meeting specific needs of societal, industrial and enterprise usages.

M.[IMT.AAS] – Measurements and mathematical modelling of Advanced Antenna Systems (AAS) in IMT-2020 systems

M.[HIBS-CHARACTERISTICS] – Related to WRC-23 agenda item 1.4 – Spectrum needs, usage and deployment scenarios, and technical and operational characteristics for the use of high-altitude platform stations as IMT base stations (HIBS) in the mobile service in certain frequency bands below 2.7 GHz

New draft Recommendations:

M.[FSS_ES_IMT_26GHz] – Guidelines to assist administrations to mitigate interference from FSS earth stations into IMT stations operating in the frequency bands 24.65-25.25 GHz and 27-27.5 GHz

References:

https://www.itu.int/md/R19-WP5D-C-1361/en

ITU-R Future Report: high altitude platform stations as IMT base stations (HIBS)

Excerpts of ITU-R preliminary draft new Report: FUTURE TECHNOLOGY TRENDS OF TERRESTRIAL IMT SYSTEMS TOWARDS 2030 AND BEYOND

Cautionary Note:

This ITU-R draft report is not complete or agreed upon at this time. Therefore, all of the text below is subject to change. For sure, AI will play a huge role in future IMT.

Introduction:

International Mobile Telecommunications (IMT) systems are mobile broadband systems including IMT-2000 (3G), IMT-Advanced (true 4G) and IMT-2020 (M.2150 5G RIT/SRIT/RAN, previously known as IMT-2020.specs).

IMT-2000 provides access by means of one or more radio links to a wide range of telecommunications services supported by the fixed telecommunications networks (e.g. PSTN/Internet) and other services specific to mobile users. Since the year 2000, IMT-2000 has been continuously enhanced, and Recommendation ITU-R M.1457 providing the detailed radio interface specifications of IMT-2000, has been updated accordingly. Some new features and technologies were introduced to IMT-2000 which enhanced its capabilities.

IMT-Advanced is a mobile system that includes the new capabilities of IMT that go far beyond those of IMT-2000 and also has capabilities for high-quality multimedia applications within a wide range of services and platforms, providing a significant improvement in performance and quality of the current services. IMT-Advanced systems can work in low to high mobility conditions and a wide range of data rates in accordance with user and service demands in multiple user environments. Such systems provide access to a wide range of telecommunication services including advanced mobile services, supported by mobile and fixed networks, which are generally packet-based. Recommendation ITU-R M.2012 provides the detailed radio interface specifications of IMT-Advanced.

ITU-R studied the technology trends for the preparation of development of IMT-Advanced and IMT-2020, and the results were documented in Reports ITU-R M.2038 and ITU-R M.2320, respectively.

Since the approval of Report ITU-R M.2320 in 2014, there have been significant advances in IMT technologies and the deployment of IMT systems. The capabilities of IMT systems are being continuously enhanced in line with user trends and technology developments. [813] IMT-2020 systems include new capabilities of IMT that go beyond those of IMT-2000 and IMT-Advanced and make IMT-2020 more efficient, fast, flexible and reliable when providing diverse services in the intended usage scenarios including enhanced Mobile Broadband, ultra-reliable low-latency communication and massive machine-type communication.

This Report provides information on the technology trends of terrestrial IMT systems considering the time-frame 2023-2030 and beyond. Technologies described in this Report are collections of possible technology enablers which may be applied in the future. This Report does not preclude the adoption of any other technologies that exist or appear in the future, and newly emerging technologies are expected in the future.

Scope:

This Report provides a broad view of future technical aspects of terrestrial IMT systems considering the time frame up to 2030 and beyond, characterized with respect to key attributes and alignment with relevant driving factors. It includes information on technical and operational characteristics of terrestrial IMT systems, including the evolution of IMT through advances in technology and spectrally efficient techniques, and their deployment.

New services and application trends:

The development of IMT systems for 2030 and beyond calls for a thorough reconsideration of several types of interactions [3]. The roles of modularity and complementarity of new technological solutions become increasingly important in the development of increasingly complex systems. The use of data and algorithms, such as AI, will play an important role and technological complementarities are required to ensure that the technology innovations complement each other. This is particularly important as the role of IMT for 2030 and beyond can be seen as a pervasive general-purpose system, instead of simply an enabling technology, resulting in complex technical dependencies.

The role of the users of new services and applications is important in the technology development for IMT for 2030 and beyond. Users will need access to the services, the required devices, and the knowledge to use them, and consideration should extend to non-users and the potential reasons for their exclusion. Users’ opportunities to actively participate as experientials and developers will increase through a deeper understanding of technologies and skills, allowing them to shape the technologies for personalized needs.

Key new services and application trends for IMT for 2030 and beyond can be summarized as follows:

– Networks support enabling services that help to steer communities and countries towards reaching the UN SDGs

– Customization of user experience will increase with the help of user-centric resource orchestration models

– Localized demand–supply–consumption models will become prominent at a global level

– Community-driven networks and public–private partnerships (PPP) will bring about new models for future service provisioning

– Networks will have a strong role in various vertical and industrial contexts

– Market entry barriers will be lowered by the decoupling of technology platforms, making it possible for multiple entities to contribute to innovations

– Empowering citizens as knowledge producers, users and developers will contribute to a process of human-centred innovation, contributing to pluralism and increased diversity

– Privacy will be strongly influenced by the increased platform data economy or sharing economy, emergence of intelligent assistants (AI), connected living in smart cities, transhumanism, and digital twins

– Monitoring and steering of circular economy will be possible, helping to create better understanding of sustainable data economy

– Sharing- and circular economy-based co-creation will enable promoting sustainable interaction also with existing resources and processes

– Development of products and technologies that innovate to zero are promoted, for example, zero-waste and zero-emission technologies

– Immersive digital realities will facilitate novel ways of learning, understanding, and memorizing in several fields of science.

The role of IMT for 2030 and beyond will be to connect a number of feasible devices, processes as well as humans to a global information grid in a cognitive fashion, offering new opportunities for various verticals [3]. Considering their different development cycles, a full trolley of the potential advances and vertical transformations will continue to occur in the beyond-2030 era. The trend towards higher data rates will continue going towards 2030, leading to peak data rates approaching the Tbit/s regime indoors, which will require large available bandwidths, giving rise to (sub-)THz communications. On the other hand, a large portion of the verticals’ data traffic will be measurement-based or actuation-related small data, which in many cases requires extremely low latency in rapid control loops, necessitating short over-the-air latencies to allow time for computation and decision making. At the same time, the reliability requirement in many vertical applications will be stringent. Industrial devices and processes, future haptic applications and multi-stream holographic applications require timing synchronization, setting tight requirements for transmission jitter. In the future, there will be use cases that require extreme performance as well as new combinations of requirements that do not fall into the three categories of IMT-2020: eMBB, URLLC, and massive machine type communication (mMTC). Some of these use cases will require wide coverage whereas others are confined to small areas.
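
To illustrate why Tbit/s peak rates push towards (sub-)THz bands, the following minimal sketch applies the Shannon capacity bound C = n · B · log2(1 + SNR) to estimate the bandwidth a 1 Tbit/s link would need. The function name, the SNR values and the idea of treating spatial streams as ideal parallel channels are illustrative assumptions, not figures from the draft report.

```python
import math

def required_bandwidth_hz(target_bps: float, snr_db: float, streams: int = 1) -> float:
    """Minimum bandwidth implied by the Shannon bound C = n * B * log2(1 + SNR).

    `streams` models n ideal, independent spatial streams (an idealisation).
    """
    snr_linear = 10 ** (snr_db / 10)
    spectral_efficiency = streams * math.log2(1 + snr_linear)  # bit/s/Hz
    return target_bps / spectral_efficiency

if __name__ == "__main__":
    # Hypothetical operating points for a 1 Tbit/s indoor link.
    for snr_db in (10, 20, 30):
        bandwidth = required_bandwidth_hz(1e12, snr_db)
        print(f"SNR {snr_db} dB: about {bandwidth / 1e9:.0f} GHz of bandwidth")
```

Even at an optimistic 20 dB SNR, a single stream needs roughly 150 GHz of contiguous bandwidth under this bound, which is only available well above today's mmWave allocations.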

The three usage scenarios described in IMT-2020 i.e. eMBB, mMTC and URLLC will still be important and new use cases and applications should be all taken into account for the continuing evolution, especially for those driving the technologies development and reflecting the future requirements.

Services and trend opportunities:

– Holographic Communications

Holographic displays are the next evolution in multimedia experience delivering 3D images from one or multiple sources to one or multiple destinations, providing an immersive 3D experience for the end user. Interactive holographic capability in the network will require a combination of very high data rates and ultra-low latency. The former arises because a hologram consists of multiple 3D images, while the latter is rooted in the fact that parallax is added so that the user can interact with the image, which also changes with the viewer’s position.

Holographic communication provides a real-time three-dimensional representation of people, things, and their surroundings in a remote scenario. It requires a transmission rate at least an order of magnitude higher, together with powerful 3D display capability.

– Tactile and Haptic Internet Applications

Advanced robotics scenarios in manufacturing need a maximum latency target in a communication link of 100 microseconds (µs), and round-trip reaction times of 1 millisecond (ms). Human operators can monitor the remote machines by VR or holographic-type communications, and are aided by tactile sensors, which could also involve actuation and control via kinaesthetic feedback.

Vehicle-to-vehicle (V2V) or vehicle-to-infrastructure communication (V2I) and coordination, autonomous driving can result in a large reduction of road accidents and traffic jams. Latency in the order of a few ms will likely be needed for collision avoidance and remote driving.

Tele-diagnosis, remote surgery and telerehabilitation are just some of the many potential applications in healthcare. Tele-diagnostic tools, medical expertise/consultation could be available anywhere and anytime regardless of the location of the patient and the medical practitioner. Remote and robotic surgery is an application where a surgeon gets real-time audio-visual feeds of the patient that is being operated upon in a remote location. The technical requirements for haptic internet capability cannot be fully provided by current systems.

– Network and Computing Convergence

Mobile edge compute (MEC) will be deployed as part of 5G networks, yet this architecture will continue towards IMT 2030 networks. When a client requests a low latency service, the network may direct this to the nearest edge computing site. For computation-intensive applications, and due to the need for load balancing, a multiplicity of edge computing sites may be involved, but the computing resources must be utilized in a coordinated manner. Augmented reality/virtual reality (AR/VR) rendering, autonomous driving and holographic type communications are all candidates for edge cloud coordination.
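
The coordination described above can be sketched as a simple selection routine: try edge sites in order of round-trip time, skip overloaded ones, and combine several sites when one cannot serve the request alone. The `EdgeSite` fields, the `max_load` threshold and the capacity units are hypothetical and chosen only for illustration; a real orchestrator would use far richer state.

```python
from dataclasses import dataclass

@dataclass
class EdgeSite:
    name: str
    rtt_ms: float  # round-trip time seen by the client (hypothetical measurement)
    load: float    # fraction of compute already in use, 0.0 to 1.0

def pick_sites(sites, latency_budget_ms, needed_capacity, max_load=0.8):
    """Return the closest sites whose combined spare capacity covers the request.

    Sites are tried in order of round-trip time. A site is skipped when it is
    loaded beyond max_load, and the search stops at the latency budget.
    An empty list means no feasible combination was found.
    """
    chosen, spare = [], 0.0
    for site in sorted(sites, key=lambda s: s.rtt_ms):
        if site.rtt_ms > latency_budget_ms:
            break  # every remaining site is even farther away
        if site.load >= max_load:
            continue  # load balancing: leave busy sites alone
        chosen.append(site.name)
        spare += max_load - site.load
        if spare >= needed_capacity:
            return chosen
    return []
```

For example, with sites at 2 ms (90% loaded), 3 ms (50% loaded) and 5 ms (40% loaded), a small request lands on the 3 ms site alone, while a larger one is split across the 3 ms and 5 ms sites.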

– Extremely High Rate Information Access

Access points in metro stations, shopping malls, and other public places may provide information access kiosks. The data rates for these information access kiosks could be up to 1 Tbps. The kiosks will provide fibre-like speeds. They could also serve the backhaul needs of millimeter-wave (mmWave) small cells. Co-existence with contemporaneous cellular services as well as security seem to be the major issues requiring further attention in this direction.

– Connectivity for Everything

Scenarios include real-time monitoring of buildings, cities, environment, cars and transportation, roads, critical infrastructure, water and power etc. The internet of bio-things through smart wearable devices, intra-body communications achieved via implanted sensors will drive the need of connectivity much beyond mMTC.

It is anticipated that Private networks, applications or vertical-specific networks, mini and micro, enterprises, IoT / sensor networks will increase in numbers in the coming years (based on multiple Radio technologies). Interoperability is one of the most significant challenges in such a Ubiquitous connectivity / compute environment (smart environments), where different products, processes, applications, use cases and organizations are connected. Interactions among telecommunications networks, computers, and other peripheral devices have been of interest since the earliest distributed computing systems.

– XR – Interactive immersive experience

The interactive immersive experience use case will have the ability to seamlessly blend virtual and real-world environments and offer new multi-sensory experiences to users. This use case will enable the users to interact with avatars of other remotely located users and flexibly manipulate objects from representations of real and/or virtual environments with high degree of realism. The implications of this use case are expected to be immense, given its wide-ranging applicability to social, entertainment, gaming, industry, and business sectors.

X-Reality, such as virtual reality (VR), augmented reality (AR), and mixed reality (MR) is expected to provide higher resolution, larger FoV, higher FPS, and lower MTP, which all translate into higher demand on the transmission data rate and end-to-end latency.

A key challenge to address when supporting interactive experiences in the network is the synchronized transport of multi-modality flows (e.g., visual media, audio, haptics) to and from different devices in a collaborative group serving the same XR application. Another important consideration is supporting real-time adaptations in the network relative to user movements and actions to ensure the interactions with other users and objects appear highly realistic in terms of placement and responsivity. Enabling spatial interactions will also require fast accessibility and ease of integration of content containing up-to-date and accurate representations of real/virtual environments from different content sources.

– Multidimensional sensing

Sensing based on measuring and analysing wireless signals will open opportunities for high-precision positioning, ultra-high-resolution imaging, mapping and environment reconstruction, gesture and motion recognition, which will demand high sensing resolution, accuracy, and detection rate.

– Digital Twin

Digital twin is a digital replica of entities in physical world, which demands real time and high accuracy sensing to ensure the accuracy, and low latency and high data transmission rate to guarantee the real time interaction between virtual and physical worlds.

A digital twin network is a dynamic replica of the physical network for its full life cycle. It should be capable of generating perceptive and cognitive intelligence based on collection of historical and on-line network data. It should be capable of continuously seeking the optimal state of the physical network in advance, and enforcing management operations accordingly. Digital twin enables network self-boosting, self-evolving, self-optimizing by verifying new functionalities, services and optimization features before deployment. Sensing and learning are the two fundamental functions to fuse the physical and cyber world.

– Mixed Reality and Virtual Presence

Enabling efficient Machine Type Communication (MTC) continues to be an important driver for IMT for 2030 and beyond. MTC, which allows machines and devices to communicate with each other without direct human involvement, is a major driver behind the Internet of Things (IoT) and the future digitalization of economies and society [11]. MTC encompasses critical MTC (cMTC) and massive MTC (mMTC). The former targets mission-critical connectivity with stringent requirements on key performance indicators (KPI) such as reliability, latency, dependability, and synchronization accuracy. The latter addresses connectivity needs for a massive number of potentially low-rate, low-energy simple devices, where the connection density and the energy efficiency are the most important KPIs.

For 2030 and beyond, data markets will become an increasingly important technology area connecting data suppliers and customers [11]. The data generated by the widely distributed machine-type devices (MTDs) will have enormous business and societal value. The value-added services of data marketplaces will be empowered by emerging technologies like artificial intelligence (AI) and distributed ledger technology (DLT), while adding new data-centric KPIs such as the age of information, privacy and localization accuracy.

– Proliferation of intelligence

Real-time distributed learning, joint inferring among proliferation of intelligent devices, and collaboration between intelligent robots demand a re-thinking of the communication system and networks design.

– Global Seamless Coverage

In order to connect the unconnected and provide continuously high quality mobile broadband service in various areas, it is expected that the interconnection of terrestrial and non-terrestrial networks will facilitate the provision of such services.

Technology Drivers for future technology trends towards 2030 and beyond:

The continuing evolution of the IMT systems, and the underlying technologies, must be guided by the imperative to satisfy fundamental needs, and contextualized in terms of how they can help the society, the end users, and the value creation and delivery. These necessities and key driving factors are:

– Societal goals – Future technologies should help contribute further to the success of a number of UN SDG goals including environmental sustainability, trust and inclusion, efficient delivery of health care, reduction in poverty and inequality, improvements in public safety and privacy, support for ageing populations, and managing expanding urbanization.

– Market expectations – New technologies should enable significant and novel capabilities, supporting radically new and differentiated services, opening up greater market opportunities

– Operational necessities – The need to manage complexity, drive efficiency, and reduce costs, with end to end automation and visibility, is also an imperative as a motivation and driving factor

Key considerations for IMT Systems for 2030 and beyond include:

– Sustainability/Energy efficiency

Energy efficiency has long been one important design target for both network and terminal. While improving the energy efficiency, the total energy consumption should also be kept as low as possible for the sustainable development. Power efficient technology solutions are needed both in backhaul and local access to make use of small-scale renewable energy sources.

– Peak Data Rate/Guaranteed Data Rate

The peak data rate for future system should be largely increased in order to support extremely high bandwidth services such as extremely immersive XR and holographic communication.

Guaranteed data rate usually refers to the achievable data rate at the edge of coverage area. Future system should guarantee users’ experience regardless of users’ location and network traffic conditions.
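
The difference between the two metrics can be made concrete with a small sketch that summarises a set of per-user throughput samples. Taking the 5th percentile as a proxy for the cell-edge (guaranteed) rate is an assumption made here for illustration; the draft text does not fix a percentile.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile of a list of numbers (pct in 0..100)."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

def rate_summary(user_rates_mbps):
    """Contrast the best-case rate with what users at the edge actually see."""
    return {
        "peak": max(user_rates_mbps),
        "median": percentile(user_rates_mbps, 50),
        # Assumed proxy for the guaranteed (cell-edge) rate: 5th percentile.
        "cell_edge": percentile(user_rates_mbps, 5),
    }
```

A network can advertise a very high peak value while its cell-edge value stays low, which is why the two are tracked as separate considerations.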

– Latency

Services with real-time and precise control usually have high demands on the low latency of communications, such as the air interface delay, end-to-end latency, and roundtrip latency.

– Jitter

Usually refers to the degree of latency variation. Some future services, such as time-sensitive industrial automation applications, may require jitter close to zero.
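
Since "latency variation" can be measured in more than one way, the sketch below computes three common summaries from a list of per-packet latencies. The metric names are illustrative and not taken from the draft report, and the function assumes at least two samples.

```python
import statistics

def jitter_metrics(latencies_ms):
    """Summarise latency variation for a sequence of per-packet latencies."""
    # Change in latency between each pair of consecutive packets.
    deltas = [abs(b - a) for a, b in zip(latencies_ms, latencies_ms[1:])]
    return {
        "peak_to_peak": max(latencies_ms) - min(latencies_ms),
        "std_dev": statistics.pstdev(latencies_ms),
        "mean_abs_delta": sum(deltas) / len(deltas),
    }
```

A perfectly periodic control loop would show zero on all three, whereas a link that alternates between 1 ms and 2 ms has a modest standard deviation but a large packet-to-packet delta, which matters most for tightly synchronized applications.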

– Sensing resolution and accuracy

Sensing based services, including traditional positioning and new functions such as imaging and mapping, will be widely integrated with future smart services, including indoor and outdoor scenarios. Very high accuracy and resolution will be needed to support a better service experience.

– Connection density

Refers to the number of connected or accessible devices per unit space. It is an important indicator to measure the ability of mobile networks to support large-scale terminal devices. With the popularity of the Internet of Things (IoT) and the diversification of terminal accesses in the specific applications, such as industrial automation and personal health care etc., mobile system needs to have the ability to support ultra-large connections.

– Coverage and full connectivity

The future network should be able to provide global coverage and full connectivity by wireless and wired, terrestrial and non-terrestrial coverage with heterogeneous multi-layer architecture. The full connectivity network should support intelligent scheduling of connectivity according to application requirements and network status to improve the resource efficiency and service experience. It will extend the provision of quality guaranteed services, such as MBB, massive IoT, high precision navigation services etc., from outdoor to indoor, from urban to rural areas and from terrestrial to non-terrestrial spaces.

– Mobility

Refers to the maximum speed supported under a specific Quality of Service (QoS) requirement. Future system will not only support terminals on land including high speed train, but it will also provide services to terminals in high-speed airplane, drone and so on.

– Spectrum utilization

With new services and applications towards 2030 and beyond, more spectrum is required to accommodate the explosive mobile data traffic growth. Further study will be introduced on novel usage of low and mid band, and the extension to much higher frequency band with much broader channel bandwidth. The smart utilization of multiple bands and improvement of spectrum efficiency through advanced technologies are essential to achieve high throughput in limited bandwidth.

– Simplified user-centric network

With huge amounts of new services and scenarios towards 2030 and beyond, the network is required to satisfy diversified demand and personalized performance. The soft network should be designed as a fully service-based and native cloud-based radio access network, which can guarantee the QoS and provide consistent user experience. The lite network should be constructed as a globally unified access network with the simple architecture and the powerful capabilities of robust signalling control, accurate network services and efficient transmission through the converged communication protocols and access technologies with plug-and-play, on-demand deployment. A user-centric network is required to enable a fully distributed/decentralized network mitigating single point of failure as well as to enable the user-controlled data ownership which is critical to the next generation network.

– Native AI

The future mobile system will have stronger capabilities and support more diversified services, which will inevitably increase the complexity of the network. Artificial Intelligence (AI) reasoning will be embedded everywhere in the future network including physical layer design, radio resource management, network security, and application enhancement, as well as network architecture, which results in a multi-layer deep integrated intelligent network design. Meanwhile the future network can also support distributed AI as a service for larger scale intelligence.

– Security/Trustworthiness

The future network supports more advanced system resilience for reliable operation and service provision, security to provide confidentiality, integrity and availability, privacy with self-sovereign data, and safety regarding the impact to the human being and environment etc.

The roles of trust, security and privacy are somewhat interconnected, but they are different facets of the future networks. Inherited and novel security threats in future networks need to be addressed. Diversity and volume of novel IoT and other networked devices and their control systems will continue to pose significant security and privacy risks and additional threat vectors as we move from IMT-2020 to beyond. IMT for 2030 and beyond needs to support embedded end-to-end trust such that the resulting level of information security in the networks is significantly better than in state-of-the-art networks. Trust modelling, trust policies and trust mechanisms need to be defined.

Security algorithms can use machine learning to identify attacks and respond to them. Continuous deep learning is needed at the packet/byte level, with machine learning applied to enforce policies and to detect, contain, mitigate, and prevent threats or active attacks. While IMT-2020 is still largely device/network specific, future networks envisage far more immersive engagement with the network.

Conventional trust, security and privacy solutions may not be directly applicable to specific machine type communication scenarios owing to their lack of humans in the loop, massive deployment, diverse requirements, and wide range of deployment scenarios. This motivates the design of resource-efficient unsupervised solutions to be exploited by MTD, e.g., based on distributed ledger technology (DLT).

– Dynamically controllable radio environment

To be able to dynamically change the characteristics of radio propagation environment and create favourable channel conditions to support higher data rate communication and improve the coverage.

Emerging Technology trends and enablers:

Technologies to use AI in communications:

The big success of artificial intelligence (AI) in image, video and audio signal processing, data mining and knowledge discovery, etc., has made it possible to shift wireless communication to an intelligent paradigm in a similar manner, i.e., learning from the wireless big data, which has yet to be fully exploited, to design new and efficient architectures, protocols, schemes and algorithms for the future communication system. In turn, with the wide deployment of base stations, edge servers and intelligent devices, the mobile network will provide a new and powerful platform for ubiquitous data collection, storage, exchange and computing, which are needed for future mobile / distributed / collaborative machine learning. For the future communication system, an emerging and transformative move will be providing access to AI for everyone, every business and every service, anywhere and anytime. AI is the design tablet of the future communication system, and it will be the cornerstone to create intelligence everywhere. One of the main differences of the future communication system compared to IMT-2020 is that it will use mobile technologies to enable the proliferation of AI and use the radio networks to augment ubiquitous, distributed machine learning. Furthermore, AI ethics issues, which exist in all AI-based systems and applications, have been raised and discussed in the wireless community from different aspects. Future IMT technology would therefore need to provide fairness and robustness to mitigate AI ethics issues to a certain degree.

AI native new air interface:

Applying tools from Artificial Intelligence (AI) and Machine Learning (ML), and its subset Deep Learning (DL), in wireless communications has gained a lot of traction in recent years. This trend in large part has been motivated by the significant increase in the system complexity in the IMT-2020 radio access network (RAN) and its evolution over previous wireless technology generations. Deep neural networks allow the characterization of specific or even unknown channel and network environments, i.e., the traffic, the interference and user behaviours, and can then adapt the radio signalling to the channel and network environment. With learning, the network can optimize user signalling, power consumption as well as its end-to-end connectivity, and smartly coordinate the multi-user access of radio resources, thus optimizing the data and control plane signalling and improving the overall system performance.

The most challenging issue in air interface design is to sense the communication environment, i.e. the estimation and prediction of propagation channels. To this end, the traditional air interface devotes much effort to pilot design and channel estimation. Now, with machine learning and especially the capability of black-box modelling and hyper-parameterization of a deep neural network, the unknowns of the underlying channel could be properly learned provided that sufficient data is available. Thus, we can reconstruct a physical channel rather than just estimate it. With transfer learning, the learned model can be transferred to adjacent nodes. This opens a new approach to air interface design. Several components in the transceiver chain are expected to be implemented through AI/ML based algorithms. This includes beamforming and beam management on the transmitter side and, at a minimum, channel estimation, symbol detection and/or decoding on the receiver side. Therefore, there will be a heavy focus on redesigning the physical layer of the communication protocol stack using AI. On the other hand, the implementation issues related to periodic updating of the deep learning models that are used in various blocks of the physical layer must be addressed.

In addition, radio resource management or resource allocation can also be implemented via AI/ML based methods. In a multi-user environment, with reinforcement learning, base stations and user equipment could automatically coordinate the channel access and resource allocation based on the signals they respectively received. Each of the nodes calculates its reward for each transmission, and adjusts its power, beam direction and other signalling to accomplish the distributed interference coordination and improve the system capacity. Following are some potential usages:

– For the QoE bottleneck of the last-mile radio link, RAN AI is expected to expose radio channel prediction capabilities for upper layer adaptation, for example, available bandwidth and predicted latency, by taking into account multi-user radio channel fluctuation, traffic pattern, cell load variation, etc. This interaction could be based on a subscribed request from the upper layer and would be triggered only when a predefined threshold is satisfied.

– The optimization of radio resource allocation to meet the requirements of highly demanding applications such as cloud-based interactive applications, which require low latency and high throughput. The optimization will take into account multi-dimensional metrics, for example, application-level traffic pattern, i.e., video frame level I/B/P frames distribution, transport layer congestion control, low layer buffer status, and QoS profiles (e.g. bandwidth, latency).

– For the randomness and uncertainty of traffic distribution in the vehicle network, use the deep reinforcement learning based adaptive exploration approach for the resource allocation, including offline training, online distributed learning method etc.
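
The distributed coordination idea described before this list, where each node computes its own reward and adjusts its transmission without a central scheduler, can be sketched with independent epsilon-greedy learners. This is a deliberately simplified toy: the only action is the choice of channel (not power or beam direction), the reward is 1 for a collision-free transmission and 0 otherwise, and all names and parameters are hypothetical.

```python
import random

def train_channel_access(n_nodes, n_channels, steps=5000, alpha=0.1,
                         epsilon=0.1, seed=0):
    """Independent epsilon-greedy learners sharing n_channels.

    Each node keeps its own value estimate per channel and sees only its own
    reward. Returns each node's preferred channel and the fraction of
    transmissions during training that were collision-free.
    """
    rng = random.Random(seed)
    q = [[0.0] * n_channels for _ in range(n_nodes)]
    successes = 0
    for _ in range(steps):
        choices = []
        for node in range(n_nodes):
            if rng.random() < epsilon:
                choices.append(rng.randrange(n_channels))   # explore
            else:
                best = max(q[node])
                ties = [c for c, v in enumerate(q[node]) if v == best]
                choices.append(rng.choice(ties))            # exploit
        for node, channel in enumerate(choices):
            reward = 1.0 if choices.count(channel) == 1 else 0.0
            # Local update: move the estimate towards the observed reward.
            q[node][channel] += alpha * (reward - q[node][channel])
            successes += int(reward)
    preferred = [row.index(max(row)) for row in q]
    return preferred, successes / (steps * n_nodes)
```

Calling `train_channel_access(3, 3)` returns one preferred channel per node plus the observed success rate. In a toy setting like this the nodes tend to drift towards separate channels, but convergence is not guaranteed, and practical schemes would need far richer state, actions and rewards.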

Machine learning techniques can be used for symbol detection and/or decoding. While de-modulation/decoding in the presence of Gaussian noise or interference by classical means has been studied for many decades, and optimal solutions are available in many cases, ML could be useful in scenarios where either the interference/noise situation does not conform to the assumptions of the optimal theory, or where the optimal solutions are too complex. Meanwhile, IMT for 2030 and beyond will likely utilize even shorter codewords than IMT-2020 with low-resolution hardware (which inherently introduce non-linearity that is difficult to handle with classical methods). ML could play a major role, from symbol detection, to precoding, to beam selection, and antenna selection.
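
As a toy illustration of data-driven detection when the channel does not match classical assumptions, the sketch below sends QPSK symbols through an assumed phase rotation and saturating non-linearity, learns one centroid per symbol from labelled training samples, and detects by nearest centroid. Nearest-centroid classification stands in here for the far more capable models the text has in mind, and the distortion model and all parameters are invented for illustration.

```python
import random

QPSK = [1 + 1j, -1 + 1j, -1 - 1j, 1 - 1j]

def distort(symbol, rng, noise_std=0.1):
    """Toy channel: fixed phase rotation, amplitude compression, Gaussian noise."""
    rotated = symbol * complex(0.8, 0.6)               # unit-magnitude phase offset
    compressed = rotated / (1 + 0.3 * abs(rotated))    # saturating non-linearity
    noise = complex(rng.gauss(0, noise_std), rng.gauss(0, noise_std))
    return compressed + noise

def fit_centroids(received, labels, n_classes=4):
    """Learn one centroid per symbol class from labelled training samples."""
    sums = [0j] * n_classes
    counts = [0] * n_classes
    for sample, label in zip(received, labels):
        sums[label] += sample
        counts[label] += 1
    return [s / c for s, c in zip(sums, counts)]

def detect(sample, centroids):
    """Return the index of the nearest learned centroid."""
    return min(range(len(centroids)), key=lambda k: abs(sample - centroids[k]))

def run_demo(n_train=200, n_test=400, seed=0):
    """Train on labelled symbols, then report detection accuracy on new ones."""
    rng = random.Random(seed)
    train_labels = [i % 4 for i in range(n_train)]     # every class is represented
    train_rx = [distort(QPSK[k], rng) for k in train_labels]
    centroids = fit_centroids(train_rx, train_labels)
    test_labels = [rng.randrange(4) for _ in range(n_test)]
    correct = sum(detect(distort(QPSK[k], rng), centroids) == k
                  for k in test_labels)
    return correct / n_test
```

The detector never needs an explicit model of the rotation or the compression; it only needs labelled samples that passed through the same distortion, which is the property that makes learned receivers attractive for hardware impairments that are hard to model analytically.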

Another promising area for ML is the estimation and prediction of propagation channels. Previous generations, including IMT-2020, have mostly exploited CSI at the receiver, while CSI at the transmitter was mostly based on roughly quantized feedback of received signal quality and/or beam directions. In systems with an even larger number of antenna elements, wider bandwidths, and a higher degree of time variation, the performance loss of these techniques is non-negligible. ML may be a promising approach to overcome such limitations.
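
A minimal sketch of learned channel prediction is shown below: a normalised least-mean-squares (NLMS) filter, one of the simplest adaptive learners, predicts the next complex channel sample from the most recent ones. It stands in for the neural predictors discussed in the literature, and the pure-Doppler test channel, filter order and step size are assumptions made only for illustration.

```python
import cmath

def nlms_predict(channel, order=2, mu=0.5, eps=1e-9):
    """One-step-ahead prediction of complex channel samples with NLMS.

    Returns the absolute prediction error for every predicted sample.
    """
    weights = [0j] * order
    errors = []
    for n in range(order, len(channel)):
        past = channel[n - order:n][::-1]              # most recent sample first
        prediction = sum(w * x for w, x in zip(weights, past))
        error = channel[n] - prediction
        energy = sum(abs(x) ** 2 for x in past) + eps  # normalisation term
        # Gradient step on |error|^2, scaled by the input energy.
        weights = [w + mu * error * x.conjugate() / energy
                   for w, x in zip(weights, past)]
        errors.append(abs(error))
    return errors

def doppler_channel(n_samples, phase_step=0.1):
    """Toy flat-fading tap that only rotates in phase (pure Doppler shift)."""
    return [cmath.exp(1j * phase_step * n) for n in range(n_samples)]
```

On this idealised channel the predictor's error shrinks rapidly as it adapts, whereas simply reusing the last observed sample leaves a constant error set by the phase step. Real channels with multipath, noise and abrupt changes are much harder, which is where more expressive ML models are expected to help.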

MAC layer is a major application area of AI where many of the problems that have legacy solutions can be replaced with AI based methods using supervised learning, data collection and ML model deployment options. Next generation MAC algorithms will need to consider the AI that is used in various layers of the network, especially in physical layer. This is needed because of the need to update the deployed machine learning models, collect data for supervised learning tasks and enable reinforcement learning on different blocks of the network.

AI techniques can be used to target one or more wireless domains, including non-real-time (non-RT) network orchestration and management, such as configuration of antenna parameters, and near-real-time (near-RT) network operation, such as load balancing and mobility robustness optimization. Each wireless domain involves different sets of physical and virtual components, families of parameters including key performance indicators (KPIs), underlying complexities, and time constraints for updates. Hence, there is a need to consider tailored AI solutions for different classes of the RAN and their associated problems. There already exists a rich body of research and practical demonstrations of the potential benefits of AI for Wireless, including significant network energy savings.

With progress in machine learning and information theory, the ultimate air interface may eventually support automatic semantic communications. There are many open fundamental problems in this direction for the wireless community. For example, learning algorithms usually rely heavily on wireless data, which may be hard to obtain or retain under privacy constraints. One way to address this is to learn from both practical wireless data and statistical models.

Questions related to the best-suited ML algorithms under given conditions, the required amount of training data, the transferability of parameters to different environments, and improved explainability will be major research topics for the foreseeable future. There will be various phases in the development of AI for Wireless, and it is imperative to ensure that the increased integration of the technology comes with minimum disruption to the rollout and operation of wireless systems and services. In the short and medium terms, AI models may be targeted for optimisation of specific features within RAN for IMT-2020 and its evolution, such as network operation and management functionalities. In the longer term, AI may be used to enable new features over legacy wireless systems.

AI-Native radio network

Future IMT-systems are required to support extremely reliable and performance-guaranteed services. They will introduce a multi-dimensional network topology, which will make network management and operation more difficult and raise more challenging problems. To address these problems, future IMT-systems will adopt AI technologies for automated and intelligent networking services. Consequently, to support the computation-intensive tasks in AI applications, the network will evolve into an AI-native architecture.

At the highest level, an AI-native radio network would be designed and implemented by AI as an intelligent radio network that automatically optimizes and adjusts itself according to specific requirements, objectives or commands, or to changes in the environment. Research may cover the higher-layer protocols, network architecture and networking technologies that enable such an intelligent radio network.

RAN optimization is difficult to solve because of the complexity of its mathematical formulation. The deep reinforcement learning paradigm in AI can enable zero-touch optimization of RAN elements with minimal hand-crafted engineering. In addition, radio network architecture design is often a challenging task that can be automated with the use of AI. Methods such as graph representation learning could be used to simplify the network architecture design problem.
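The learn-by-interaction loop behind such zero-touch optimization can be sketched with a much simpler stand-in for deep reinforcement learning: an epsilon-greedy bandit that tunes a single RAN parameter from noisy KPI feedback. The candidate tilt values, the `measured_kpi` environment and its optimum at 6 degrees are hypothetical and exist only to make the example runnable.

```python
import numpy as np

rng = np.random.default_rng(0)
tilts = np.arange(0, 11, 2)  # hypothetical candidate antenna tilts, in degrees

def measured_kpi(tilt):
    # Toy environment, unknown to the agent: a noisy KPI that peaks at 6 degrees.
    return 1.0 - 0.02 * (tilt - 6) ** 2 + 0.05 * rng.standard_normal()

q = np.zeros(len(tilts))            # running estimate of the KPI per tilt
counts = np.zeros(len(tilts), dtype=int)
epsilon = 0.1                       # fraction of steps spent exploring

for step in range(3000):
    if rng.random() < epsilon:
        a = int(rng.integers(len(tilts)))   # explore: try a random setting
    else:
        a = int(np.argmax(q))               # exploit: use the best setting so far
    reward = measured_kpi(tilts[a])
    counts[a] += 1
    q[a] += (reward - q[a]) / counts[a]     # incremental mean update

best_tilt = int(tilts[np.argmax(q)])
print("selected tilt:", best_tilt)
```

A deep reinforcement learning agent replaces the table `q` with a neural network so that it can handle large state and action spaces (many cells, many parameters, changing traffic), but the explore, measure and update cycle is the same.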

Various use cases of AI-empowered network automation have been proposed, including fault recovery/root cause analysis, AI-based energy optimization, optimal scheduling, and network planning. Key training-related challenges to realizing full network automation have been identified: lack of performance bounds, lack of explainability, uncertainty in generalization, and lack of interoperability. Analytics for future AI-native networks can be classified into four types: descriptive, diagnostic, predictive, and prescriptive analytics. The key to successful network automation in an AI-native network architecture is how to collect rich and reliable network data, which is typically not open to players other than network operators.

In general, an overall network architecture consists of four tiers of entities: UE, BS, core network, and application server. Application of AI can be categorized into three levels as shown in Figure 1: 1) local AI, 2) joint AI, and 3) E2E AI. This use case family consists in being present and interacting anytime, anywhere, using all senses if so desired. It enables humans to interact with each other without any limitation on physical presence.

The future RAN will be able to perceive and adapt to complex and dynamic environments by monitoring and tracking conditions in the radio network while diagnosing and restoring any RAN issues in an automated fashion. To achieve autonomy across its full life cycle management, at least the following novel networking technologies need to be considered: 1) efficient and intelligent network telemetry technologies that leverage AI to apply management operations based on a collection of historical and live network data, 2) automated network management and orchestration technologies that continuously seek the optimal state of the RAN and enforce management operations accordingly, 3) technologies that automatically perform life cycle management operations, adjust configurations on radio network elements, and optimize new services and features during and after deployment, and 4) AI-based assistance technologies, in particular for aspects such as forecasting, root cause analysis, anomaly detection and intent translation.
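Of the assistance functions listed above, anomaly detection is the easiest to illustrate. The sketch below flags KPI samples that deviate strongly from a trailing window, using the median and the median absolute deviation (MAD) as robust statistics. The function name, window length, threshold and synthetic KPI series are assumptions for this example; an operational system would use richer models and real telemetry.

```python
import numpy as np

def robust_anomalies(kpi, window=24, threshold=5.0):
    """Flag samples far from the trailing-window median, in units of scaled MAD."""
    kpi = np.asarray(kpi, dtype=float)
    flags = np.zeros(len(kpi), dtype=bool)
    for i in range(window, len(kpi)):
        hist = kpi[i - window:i]                  # only past samples are used
        med = np.median(hist)
        # 1.4826 scales the MAD to match the standard deviation of Gaussian data.
        mad = 1.4826 * np.median(np.abs(hist - med)) + 1e-9
        flags[i] = abs(kpi[i] - med) > threshold * mad
    return flags

rng = np.random.default_rng(0)
kpi = 100 + rng.normal(0.0, 1.0, 200)   # synthetic cell KPI around 100 units
kpi[150] -= 30                          # injected fault: sudden drop
flags = robust_anomalies(kpi)
print("flagged sample indices:", np.flatnonzero(flags))
```

Because the detector looks only at past samples, it can run online on streaming telemetry; the first `window` samples are never flagged since no history exists for them.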

More specifically, transporting large quantities of data will burden every network interface. In addition, data sensed from the radio environment sometimes lacks corresponding labels. Intelligent data perception, e.g., using a generative adversarial network (GAN) to generate data that simulates real data, will avoid transferring large amounts of data over interfaces and protect data privacy to a certain degree. To further this vision of zero-touch network management, an open network data set and an open ecosystem need to be established.

It is also possible to introduce user feedback into the network's decision-making process, improving the decisions made by AI algorithms and helping them better understand and reflect user preferences.

In future IMT-systems, more computation nodes will be required to support highly computation-intensive services. Thus, computation nodes will be pervasive from core to edge and from network to device. To cope with this trend, the control and user planes of the network for future IMT-systems need to be redesigned, and emerging technologies such as programmable switches and distributed/federated learning need to be aggressively adopted.

To support services in multiple application scenarios, an intelligent network is needed. In the AI-Native Radio Network, AI no longer merely optimizes the resources of the wireless network; it becomes an intelligent system integrated with the radio network that can supply capabilities on demand.

To realize the intelligence of the radio network, new Sensing and AI functions need to be supported. The data sensing function provides end-to-end collection, processing and storage of network data. The AI function can call and subscribe to these data on demand and provide capability support according to different application scenarios. In this way, AI capabilities can be used and supported more efficiently and globally.

The AI system in the AI-Native Radio Network is distributed across different network functions. AI algorithms running on different functions and AI models trained on different functions are all components of this distributed AI system, and together they form an organic whole. Under the control or coordination of a unified AI control center, each component of the distributed AI system independently completes its assigned tasks, interacts with other components, and reports measurements to the control center. The distributed AI system should be an end-to-end solution.

Edge AI is to be considered one of the key enablers for future IMT-systems, especially for sensing-communication-computing-control. In addition, a distributed deep learning architecture is to be considered for realizing URLLC in future IMT-systems. The RAN can thus be flexibly and adaptively optimized with the aid of AI to guarantee QoS, which leads to the following topics of interest: 1) adaptive RAN slicing architecture and the corresponding distributed intelligence architecture, 2) knowledge-assisted learning architecture and methods, and 3) fast training/federated learning methods.

In addition, Self-Synthesising Networks automate the actual design process, or large parts thereof. Whilst the actual invention of engineering principles may still be done by human researchers or in combination with AI, the system design, prototyping and standards development are now largely executed by machines. Given that the two phases of systems design and standardisation take years, it is hoped that the introduction of self-synthesising networking principles will accelerate feature development in telecoms by 5-8 years.

Radio network for AI:

The radio network will migrate from the over-the-top era towards the AI era. Wireless networks should consider AI applications and paradigms that require the exchange of large amounts of data, machine learning models, and inference data between different entities in the network. Long-term platform technologies must be found to better support AI services, and these will greatly affect the design of the future radio network, i.e. the radio network for AI. Distributed and collaborative machine learning is required to make full and efficient use of computing and communication resources and to comply with local data governance and data privacy requirements. Therefore, the data-split and model-split approaches will be the major focuses for future research. The impacts of this on future network design are threefold:

– Shift from downlink-centric radio to uplink-centric radio: Unlike existing downlink-centric radio, which usually supports heavier traffic and better QoS on the downlink, AI requires more frequent model and data exchanges between a base station and the different users it serves. The uplink should be reconsidered in network design to attain balanced, efficient and robust distributed machine learning.

– Shift from the core network to the deep edge: The locality of data and the computing/communication needed for deep machine learning pose big challenges for end-to-end delay. To mitigate this, the network and its corresponding protocols should be redesigned. One such research direction is to place the major learning processes and threads close to the edge, forming a deep edge that can greatly reduce system delay.

– Shift from cloudification to machine learning: Due to the distributed nature of data and computing power, the communication and computing procedures of a machine learning algorithm often take place across the whole network, from the cloud to the edge and the devices. Therefore, traditional cloudification should also be reconsidered to be application-centric, i.e., to meet the specific needs of more general distributed machine learning applications with proper deployment of computing and communication resources.
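The traffic pattern behind these three shifts can be seen in a minimal federated averaging sketch: raw data stays on each device, the base station broadcasts a model on the downlink, and every device sends an updated model back on the uplink in each round. The linear regression task, client sizes, learning rate and function names below are assumptions made for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0, 0.5])   # hypothetical model the clients try to learn

def make_client(n):
    # Each client holds private local data that never leaves the device.
    X = rng.standard_normal((n, 3))
    y = X @ true_w + 0.05 * rng.standard_normal(n)
    return X, y

clients = [make_client(n) for n in (40, 60, 80, 120)]

def local_update(w, X, y, lr=0.1, epochs=5):
    # A few gradient-descent steps on the local mean squared error.
    w = w.copy()
    for _ in range(epochs):
        grad = 2.0 * X.T @ (X @ w - y) / len(y)
        w -= lr * grad
    return w

w_global = np.zeros(3)
sizes = np.array([len(y) for _, y in clients], dtype=float)
for rnd in range(30):
    # Downlink: w_global is broadcast. Uplink: every client returns its weights.
    updates = [local_update(w_global, X, y) for X, y in clients]
    # Server aggregates, weighting each client by its number of samples.
    w_global = np.average(updates, axis=0, weights=sizes)

print("global model:", np.round(w_global, 3))
```

Every round generates as many uplink model transfers as there are participating devices, which is one reason the text argues for reconsidering uplink capacity and for placing the aggregation point close to the edge.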

In addition, future data-intensive, real-time applications require distributed ML/AI solutions deployed on the edge-cloud continuum, known for short as EdgeAI or Edge Intelligence. These solutions support augmenting human decision processes, developing autonomous systems from small devices to complete factories, optimising network performance, and marshalling the billions of IoT devices expected to be interacting in the 2030s. Distributed ML/AI has become an inseparable part of wireless networks, and increasing volumes of heterogeneous streaming data will require more advanced computing paradigms. Since heterogeneous IoT devices are not as reliable as high-performance, centralised servers, distributed and self-organising schemes are mandatory to provide strong robustness against device and link failures. The current open questions in fulfilling the requirements of true Edge Intelligence include data and resource distribution, distributed and online model training, and inference on those models across multiple heterogeneous devices, locations, and domains of varying context-awareness. The future network architecture is expected to provide native support for radio-based sensing and, through versatile connectivity, accommodate ultra-dense sensor and actuator networks, enabling hyper-local and real-time sensing, communication, and interaction across the intelligent edge-cloud continuum.

Explainable AI for RAN:

Automation principles were introduced into the telecommunications architecture as early as 2008. Despite the swath of algorithmic ML/AI/SON frameworks available, uptake was not as widespread as expected. An important reason was that, whilst the automation frameworks developed outperformed any other operational approach, they exhibited occasional and unexplainable outages which operators could not accept. Since the concept of wireless AI was proposed, there has been widespread concern about how to reconcile existing communication mechanisms with so-called “black-box” AI (machine learning, or even deep learning) models. Fusing existing expert wireless knowledge into the design of AI models is strongly encouraged as a way to improve their performance and interpretability; AI-based MIMO channel estimation, for example, has achieved significant performance gains.