AI RAN

Qualcomm CEO: expect “pre-commercial” 6G devices by 2028

During his keynote speech at the 2025 Snapdragon Summit in Maui, Qualcomm CEO Cristiano Amon said:

“We have been very busy working on the next generation of connectivity…which is 6G. Designed to be the connection between the cloud and Edge devices, The difference between 5G and 6G, besides increasing the speeds, increasing broadband, increasing the amount of data, it’s also a network that has intelligence to have perception and sensor data. We’re going to have completely new use cases for this network of intelligence — connecting the edge and the cloud.”

“We have been working on this (6G) for a while, and it’s sooner than you think. We are ready to have pre-commercial devices with 6G as early as 2028. And when we get that, we’re going to have context aware intelligence at scale.”

…………………………………………………………………………………………………………………………………………………………………………………………..

Analysis: Let’s examine that statement in light of the fact that the ITU-R IMT-2030 recommendations are not scheduled to be completed until the end of 2030:

“Pre-commercial devices” are not meant for general consumers, while “as early as” leaves open the possibility that those 6G devices might not be available until after 2028.

…………………………………………………………………………………………………………………………………………………………………………………………..

Looking ahead at the future of devices, Amon noted that 6G would play a key role in the evolution of AI technology, with AI models becoming hybrid: a combination of cloud and edge devices (like user interfaces, sensors, etc.). According to Qualcomm, 6G will make this happen. Amon envisions a future where AI agents are a crucial part of our daily lives, upending the way we currently use our connected devices. He firmly believes that smartphones, laptops, cars, smart glasses, earbuds, and more will have a direct line of communication with these AI agents, facilitated by 6G connectivity.

Opinion: This sounds very much like the hype around 5G ushering in a whole new set of ultra-low latency applications, which never happened (because the 3GPP specs for URLLC were not completed in June 2020 when Release 16 was frozen). Also, very few mobile operators have deployed a 5G SA core, without which there are no 5G features like network slicing and security.

Separately, Nokia Bell Labs has said that in the coming 6G era, “new man-machine interfaces” controlled by voice and gesture input will gradually replace more traditional inputs, like typing on touchscreens. That’s easy to read as conjecture, but we’ll have to see if that really happens when the first commercial 6G networks are deployed in late 2030 or early 2031.

With 6G, we’re sure to see faster network speeds, higher data volumes, and AI in more devices, but standardized 6G is still at least five years from being a commercial reality.

References:

https://www.androidauthority.com/qualcomm-6g-2028-3600781/

https://www.nokia.com/6g/6g-explained/

ITU-R WP5D IMT 2030 Submission & Evaluation Guidelines vs 6G specs in 3GPP Release 20 & 21

ITU-R WP 5D reports on: IMT-2030 (“6G”) Minimum Technology Performance Requirements; Evaluation Criteria & Methodology

ITU-R: IMT-2030 (6G) Backgrounder and Envisioned Capabilities

Ericsson and e& (UAE) sign MoU for 6G collaboration vs ITU-R IMT-2030 framework

Highlights of 3GPP Stage 1 Workshop on IMT 2030 (6G) Use Cases

6th Digital China Summit: China to expand its 5G network; 6G R&D via the IMT-2030 (6G) Promotion Group

MediaTek overtakes Qualcomm in 5G smartphone chip market

SoftBank’s Transformer AI model boosts 5G AI-RAN uplink throughput by 30%, compared to a baseline model without AI

SoftBank has developed its own Transformer-based AI model that can be used for wireless signal processing. SoftBank used its Transformer model to improve uplink channel interpolation, a signal processing technique in which the network essentially makes an educated guess about the characteristics and current state of a signal’s channel. Enabling this type of intelligence in a network contributes to faster, more stable communication, according to SoftBank. In an earlier demonstration using a CNN-based AI model, the Japanese wireless network operator increased uplink throughput by approximately 20% compared to a conventional signal processing method (the baseline method). In the latest demonstration, the new Transformer-based architecture was run on GPUs and tested in a live Over-the-Air (OTA) wireless environment. In addition to confirming real-time operation, the results showed further throughput gains and achieved ultra-low latency.
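SoftBank does not describe its non-AI baseline in detail. To make “channel interpolation” concrete, here is a minimal Python sketch of one conventional approach, assuming the channel is measured only on a few pilot (reference-signal) subcarriers and linearly interpolated in between. The pilot positions and channel values are hypothetical, and this is a textbook baseline, not SoftBank’s method; the AI models replace this kind of simple guess with a learned one.

```python
import bisect


def interpolate_channel(pilot_idx: list[int], pilot_h: list[complex],
                        n_subcarriers: int) -> list[complex]:
    """Estimate the channel on every subcarrier from estimates on pilot subcarriers.

    pilot_idx must be sorted in strictly increasing order. Subcarriers between two
    pilots are linearly interpolated; those outside the pilot range take the value
    of the nearest pilot.
    """
    if not pilot_idx or len(pilot_idx) != len(pilot_h):
        raise ValueError("pilot_idx and pilot_h must be non-empty and the same length")
    h = []
    for k in range(n_subcarriers):
        if k <= pilot_idx[0]:
            h.append(pilot_h[0])
        elif k >= pilot_idx[-1]:
            h.append(pilot_h[-1])
        else:
            i = bisect.bisect_right(pilot_idx, k) - 1   # last pilot at or before k
            lo, hi = pilot_idx[i], pilot_idx[i + 1]
            t = (k - lo) / (hi - lo)                    # fractional distance from lo
            h.append((1 - t) * pilot_h[i] + t * pilot_h[i + 1])
    return h


# Hypothetical example: pilots on subcarriers 0, 4 and 8 of a 9-subcarrier block.
estimate = interpolate_channel([0, 4, 8], [1 + 0j, 0 + 1j, -1 + 0j], 9)
print(estimate[2])  # (0.5+0.5j): halfway between the first two pilots
```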

Editor’s note: A Transformer model is a type of neural network architecture that emerged in 2017. It excels at interpreting streams of sequential data, such as the text processed by large language models (LLMs). Transformer models have also achieved elite performance in other fields of artificial intelligence (AI), including computer vision, speech recognition and time series forecasting. Transformer models are lightweight, efficient, and versatile, capable of natural language processing (NLP), image recognition and wireless signal processing, as this SoftBank demo shows.

Significant throughput improvement:

- Uplink channel interpolation using the new architecture improved uplink throughput by approximately 8% compared to the conventional CNN model. Compared to the baseline method without AI, this represents an approximately 30% increase in throughput, proving that the continuous evolution of AI models leads to enhanced communication quality in real-world environments.
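The ~30% figure is roughly what you get by compounding the two reported gains, assuming the earlier CNN result was ~20% over the non-AI baseline and the Transformer adds ~8% on top of the CNN. A quick check in Python (both inputs are rounded, so the result is approximate):

```python
def compound_gain(*relative_gains: float) -> float:
    """Combine successive relative improvements (0.20 = +20%) into one overall gain."""
    total = 1.0
    for gain in relative_gains:
        total *= 1.0 + gain
    return total - 1.0


# Assumed inputs: CNN ~ +20% over the non-AI baseline; Transformer ~ +8% over the CNN.
overall = compound_gain(0.20, 0.08)
print(f"Transformer vs. non-AI baseline: {overall:.1%}")  # ~29.6%, i.e. "approximately 30%"
```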

Higher AI performance with ultra-low latency:

- While real-time 5G communication requires processing in under 1 millisecond, this demonstration with the Transformer achieved an average processing time of approximately 338 microseconds, an ultra-low latency that is about 26% faster than the convolutional neural network (CNN) [1.] based approach. Generally, AI model processing speeds decrease as performance increases. This achievement overcomes the technically difficult challenge of simultaneously achieving higher AI performance and lower latency. Editor’s note: Perhaps this can overcome the performance limitations in ITU-R M.2150 for URLLC in the RAN, which is based on an uncompleted 3GPP Release 16 specification.
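SoftBank does not give the CNN’s absolute processing time, and “26% faster” can be read two ways. The back-of-envelope calculation below (an illustration, not SoftBank’s data) shows the headroom left within the 1 ms budget and the CNN time implied by each reading:

```python
BUDGET_US = 1000.0        # real-time constraint: processing in under 1 ms
TRANSFORMER_US = 338.0    # reported average Transformer processing time
SPEEDUP = 0.26            # reported "about 26% faster" than the CNN approach

headroom_us = BUDGET_US - TRANSFORMER_US

# Reading 1: the Transformer needs 26% less time than the CNN.
cnn_us_less_time = TRANSFORMER_US / (1.0 - SPEEDUP)
# Reading 2: the Transformer processes 1.26x as fast as the CNN.
cnn_us_faster_rate = TRANSFORMER_US * (1.0 + SPEEDUP)

print(f"Headroom within the 1 ms budget: {headroom_us:.0f} us")
print(f"Implied CNN time: {cnn_us_faster_rate:.0f} to {cnn_us_less_time:.0f} us")
```

Under either reading the CNN would also have fit within 1 ms; the Transformer’s gain is extra headroom.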

Note 1. CNN-based approaches to achieving low latency focus on optimizing model architecture, computation, and hardware to accelerate inference, especially in real-time applications. Rather than relying on a single technique, the best results are often achieved through a combination of methods.

Using the new architecture, SoftBank conducted a simulation of “Sounding Reference Signal (SRS) prediction,” a process required for base stations to assign optimal radio waves (beams) to terminals. Previous research using a simpler Multilayer Perceptron (MLP) AI model for SRS prediction confirmed a maximum downlink throughput improvement of about 13% for a terminal moving at 80 km/h.*2

In the new simulation with the Transformer-based architecture, the downlink throughput for a terminal moving at 80 km/h improved by up to approximately 29%, and by up to approximately 31% for a terminal moving at 40 km/h. This confirms that enhancing the AI model more than doubled the throughput improvement rate (see Figure 1). This is a crucial achievement that will lead to a dramatic improvement in communication speeds, directly impacting the user experience.
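Why does terminal speed matter for SRS prediction? A faster terminal sees a faster-changing channel, so an SRS measurement goes stale sooner. The sketch below uses the standard maximum Doppler shift f_d = v·f_c/c and the common rule of thumb T_c ≈ 0.423/f_d for coherence time. The 3.5 GHz carrier is an assumption (SoftBank’s release does not state the band used), and the last line simply checks the “more than doubled” claim for 80 km/h.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s


def max_doppler_hz(speed_kmh: float, carrier_hz: float) -> float:
    """Maximum Doppler shift f_d = v * f_c / c for a terminal moving at speed_kmh."""
    v = speed_kmh / 3.6  # km/h -> m/s
    return v * carrier_hz / SPEED_OF_LIGHT


def coherence_time_ms(f_d: float) -> float:
    """Rule-of-thumb coherence time T_c ~ 0.423 / f_d, returned in milliseconds."""
    return 0.423 / f_d * 1e3


CARRIER_HZ = 3.5e9  # assumption: a typical 5G mid-band carrier, not stated by SoftBank
for speed in (40, 80):
    fd = max_doppler_hz(speed, CARRIER_HZ)
    print(f"{speed} km/h: f_d = {fd:.0f} Hz, T_c = {coherence_time_ms(fd):.2f} ms")

# 80 km/h case: Transformer (~29%) vs. the earlier MLP (~13%) throughput improvement
print(f"Improvement ratio: {0.29 / 0.13:.2f}x")
```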

The most significant technical challenge for the practical application of “AI for RAN” is to further improve communication quality using high-performance AI models while operating under the real-time processing constraint of less than one millisecond. SoftBank addressed this by developing a lightweight and highly efficient Transformer-based architecture that focuses only on essential processes, achieving both low latency and maximum AI performance. The important features are:

(1) Grasps overall wireless signal correlations

By leveraging the “Self-Attention” mechanism, a key feature of Transformers, the architecture can grasp wide-ranging correlations in wireless signals across frequency and time (e.g., complex signal patterns caused by radio wave reflection and interference). This allows it to maintain high AI performance while remaining lightweight. Convolution focuses on a part of the input, while Self-Attention captures the relationships of the entire input (see Figure 2).
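To illustrate the difference between a convolution’s local window and Self-Attention’s whole-input view, here is a minimal scaled dot-product self-attention in plain Python. It is a simplification for illustration only: the learned query/key/value projections, multiple heads and everything else in SoftBank’s proprietary architecture are omitted (Q = K = V = the input), and the input vectors are hypothetical (real, imaginary) channel values per subcarrier.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    """Numerically stable softmax (subtract the max before exponentiating)."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def self_attention(x: list[list[float]]) -> tuple[list[list[float]], list[list[float]]]:
    """Scaled dot-product self-attention with Q = K = V = x (no learned projections).

    Each output position is a weighted mix of ALL input positions, unlike a
    convolution, which only mixes a small neighbourhood. Returns (outputs, weights).
    """
    d = len(x[0])
    scale = 1.0 / math.sqrt(d)
    outputs, weights = [], []
    for q in x:
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in x]
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(w[j] * x[j][c] for j in range(len(x))) for c in range(d)])
    return outputs, weights


# Hypothetical input: (real, imag) channel values on four subcarriers.
tokens = [[1.0, 0.0], [0.9, 0.1], [-1.0, 0.0], [0.0, 1.0]]
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(w, 2) for w in row])  # each row weights all four positions
```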

(2) Preserves physical information of wireless signals

While it is common to normalize input data to stabilize learning in AI models, the architecture features a proprietary design that uses the raw amplitude of wireless signals without normalization. This ensures that crucial physical information indicating communication quality is not lost, significantly improving the performance of tasks like channel estimation.
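A small Python example of why normalization can throw away physical information: two hypothetical received signals with the same shape but a 20 dB difference in power become indistinguishable after scaling to unit RMS power. This only illustrates the general point; it is not SoftBank’s design, which is proprietary.

```python
import math


def normalize(samples: list[complex]) -> list[complex]:
    """Scale samples to unit RMS power, a common input-normalization step."""
    rms = math.sqrt(sum(abs(s) ** 2 for s in samples) / len(samples))
    return [s / rms for s in samples]


strong = [2 + 2j, 1 - 1j, -2 + 0j]   # hypothetical received samples on a good link
weak = [s * 0.1 for s in strong]     # same pattern, amplitude x0.1 = 20 dB weaker

same = all(abs(a - b) < 1e-12 for a, b in zip(normalize(strong), normalize(weak)))
print(same)  # True: after normalization the two links look identical
```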

(3) Versatility for various tasks

The architecture has a versatile, unified design. By making only minor changes to its output layer, it can be adapted to handle a variety of different tasks, including channel interpolation/estimation, SRS prediction, and signal demodulation. This reduces the time and cost associated with developing separate AI models for each task.
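The “minor changes to its output layer” idea corresponds to a common multi-task pattern: one shared backbone, several small task-specific heads. A minimal sketch under that assumption follows; the backbone is a placeholder for the Transformer encoder, and the task names, head weights and output sizes are all hypothetical.

```python
import math
from typing import Callable

Vector = list[float]


def backbone(x: Vector) -> Vector:
    """Placeholder for the shared Transformer encoder (here a fixed nonlinearity)."""
    return [math.tanh(v) for v in x]


def linear_head(weights: list[Vector]) -> Callable[[Vector], Vector]:
    """Build a task-specific output layer with one weight row per output value."""
    def head(features: Vector) -> Vector:
        return [sum(w * f for w, f in zip(row, features)) for row in weights]
    return head


# Hypothetical task heads: only this last layer differs between tasks.
HEADS: dict[str, Callable[[Vector], Vector]] = {
    "channel_interpolation": linear_head([[1, 0, 0], [0, 1, 0], [0, 0, 1]]),
    "srs_prediction": linear_head([[0.5, 0.5, 0.0], [0.0, 0.5, 0.5]]),
    "demodulation": linear_head([[1, -1, 0]]),
}


def run(task: str, x: Vector) -> Vector:
    """Shared backbone first, then the head for the requested task."""
    return HEADS[task](backbone(x))


sample = [0.1, 0.2, 0.3]
for task in HEADS:
    print(task, run(task, sample))
```

Adding a task means adding one entry to `HEADS`; the backbone, which holds most of the parameters in a real model, is reused unchanged.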

The demonstration results show that high-performance AI models like Transformer and the GPUs that run them are indispensable for achieving the high communication performance required in the 5G-Advanced and 6G eras. Furthermore, an AI-RAN that controls the RAN on GPUs allows for continuous performance upgrades through software updates as more advanced AI models emerge, even after the hardware has been deployed. This will enable telecommunication carriers to improve the efficiency of their capital expenditures and maximize value.

Moving forward, SoftBank will accelerate the commercialization of the technologies validated in this demonstration. By further improving communication quality and advancing networks with AI-RAN, SoftBank will contribute to innovation in future communication infrastructure. The Japan-based conglomerate strongly endorsed AI RAN at MWC 2025.

References:

https://www.softbank.jp/en/corp/news/press/sbkk/2025/20250821_02/

https://www.telecoms.com/5g-6g/softbank-claims-its-ai-ran-tech-boosts-throughput-by-30-

https://www.telecoms.com/ai/softbank-makes-mwc-25-all-about-ai-ran

https://www.ibm.com/think/topics/transformer-model

https://www.itu.int/rec/R-REC-M.2150/en

Softbank developing autonomous AI agents; an AI model that can predict and capture human cognition

Dell’Oro Group: RAN Market Grows Outside of China in 2Q 2025

Dell’Oro: AI RAN to account for 1/3 of RAN market by 2029; AI RAN Alliance membership increases but few telcos have joined

Dell’Oro: RAN revenue growth in 1Q2025; AI RAN is a conundrum

Nvidia AI-RAN survey results; AI inferencing as a reinvention of edge computing?

OpenAI announces new open weight, open source GPT models which Orange will deploy

Deutsche Telekom and Google Cloud partner on “RAN Guardian” AI agent

Ericsson reports ~flat 2Q-2025 results; sees potential for 5G SA and AI to drive growth

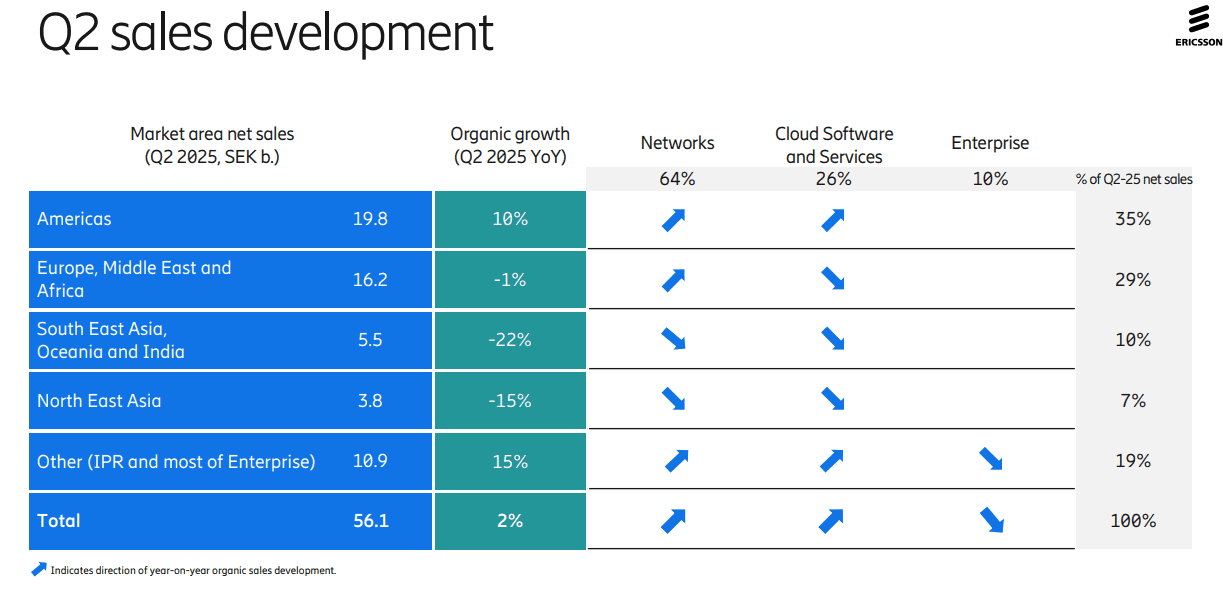

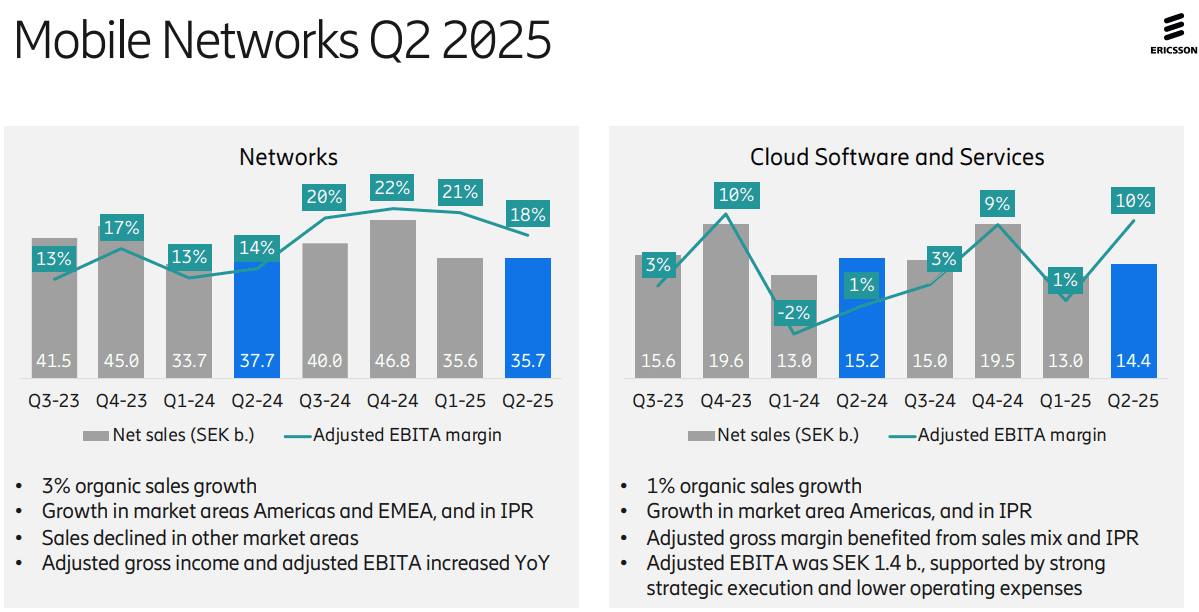

Ericsson’s second-quarter results were not impressive, with YoY organic sales growth of +2% for the company and +3% for its network division (its largest). Its $14 billion AT&T OpenRAN deal, announced in December 2023, helped lift the Swedish vendor’s share of the global RAN market by 1.4 percentage points in 2024, to 25.7%, according to new research from analyst company Omdia (owned by Informa). As a result of its AT&T contract, the U.S. accounted for a stunning 44% of Ericsson’s second-quarter sales, and organic revenues in North America rose 10% YoY to SEK19.8bn ($2.05bn). Sales dropped in all other regions of the world! The charts depict that very well.

Ericsson’s attention is now shifting to a few core markets that Ekholm has identified as strategic priorities, among them the U.S., India, Japan and the UK. All, unsurprisingly, already make up Ericsson’s top five countries by sales, although excluding the U.S., their combined contribution came to just 15% of turnover in the recent second quarter. “We are already very strong in North America, but we can do more in India and Japan,” said Ekholm. “We see those as critically important for the long-term success.”

Opportunities: With telco investment in RAN equipment having declined by 12.5% (or $5 billion) last year, the Swedish equipment vendor has had few other obvious growth opportunities. Ericsson’s Enterprise division, which is supposed to be the long-term provider of sales growth for Ericsson, is still very small: its second-quarter revenues stood at just SEK5.5bn ($570m), and even after adjusting for currency exchange effects, its sales shrank by 6% YoY.

On Tuesday’s earnings call, Ericsson CEO Börje Ekholm said that the RAN equipment sector, while stable currently, isn’t offering any prospects of exciting near-term growth. For longer-term growth, the industry needs “new monetization opportunities,” and those could come from the ongoing modest growth in 5G-enabled fixed wireless access (FWA) deployments, from 5G standalone (SA) deployments that enable mobile network operators to offer “differentiated solutions,” and from network APIs (that ultra-hyped market is not generating meaningful revenues for anyone yet).

Cost Cutting Continues: Ericsson also has continued to be aggressive about cost reduction, eliminating thousands of jobs since it completed its Vonage takeover. “Over the last year, we have reduced our total number of employees by about 6% or 6,000,” said Ekholm on his routine call with analysts about financial results. “We also see and expect big benefits from the use of AI and that is one reason why we expect restructuring costs to remain elevated during the year.”

Use of AI: Ericsson sees AI as an opportunity to enable network automation and new industry revenue opportunities. The company is now using AI as an aid in network design – a move that could have negative ramifications for staff involved in research and development. Ericsson is already using AI for coding and “other parts of internal operations to drive efficiency… We see some benefits now. And it’s going to impact how the network is operated – think of fully autonomous, intent-based networks that will require AI as a fundamental component. That’s one of the reasons why we invested in an AI factory,” noted the CEO, referencing the consortium-based investment in a Swedish AI factory that was announced in late May. At the time, Ericsson noted that it planned to “leverage its data science expertise to develop and deploy state-of-the-art AI models – improving performance and efficiency and enhancing customer experience.”

Ericsson is also building AI capability into the products sold to customers. “I usually use the example of link adaptation,” said Per Narvinger, the head of Ericsson’s mobile networks business group, on a call with Light Reading, referring to what he says is probably one of the most optimized algorithms in telecom. “That’s how much you get out of the spectrum, and when we have rewritten link adaptation, and used AI functionality on an AI model, we see we can get a gain of 10%.”
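Ericsson does not detail its AI-based link adaptation. For context, here is a minimal Python sketch of conventional (non-AI) outer-loop link adaptation (OLLA), a widely used baseline: an SINR offset is nudged up after each ACK and down after each NACK so that the block error rate (BLER) settles at a target, typically around 10%. The step size and target are illustrative assumptions, and this is not Ericsson’s algorithm.

```python
def olla_update(offset_db: float, ack: bool,
                step_down_db: float = 0.5, target_bler: float = 0.1) -> float:
    """One outer-loop link adaptation step on the SINR offset (in dB).

    NACK: lower the offset by step_down_db (choose a more robust MCS next time).
    ACK:  raise it by step_down_db * target_bler / (1 - target_bler).
    With these step sizes the offset has zero expected drift exactly when the
    NACK rate equals target_bler, so the loop steers the BLER toward the target.
    """
    if ack:
        return offset_db + step_down_db * target_bler / (1.0 - target_bler)
    return offset_db - step_down_db


# Nine ACKs and one NACK is exactly a 10% error rate: the offset ends where it began.
offset = 0.0
for ack in [True] * 9 + [False]:
    offset = olla_update(offset, ack)
print(round(offset, 6))
```

The offset is added to the measured SINR before the modulation and coding scheme (MCS) is chosen; an AI-based approach would aim to pick the MCS more accurately than this simple feedback loop.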

Ericsson hopes that AI will boost consumer and business demand for 5G connectivity. New form factors such as smart glasses and AR headsets will need lower-latency connections with improved support for the uplink, it has repeatedly argued. But analysts are skeptical, while Ericsson thinks Europe is ill-equipped for more advanced 5G services.

“We’re still very early in AI, in [understanding] how applications are going to start running, but I think it’s going to be a key driver of our business going forward, both on traffic, on the way we operate networks, and the way we run Ericsson,” Ekholm said.

Europe Disappoints: In much of Europe, Ericsson and Nokia have been frustrated by some government and telco unwillingness to adopt the European Union’s “5G toolbox” recommendations and evict Chinese vendors. “I think what we have seen in terms of implementation is quite varied, to be honest,” said Narvinger. Rather than banning Huawei outright, Germany’s government has introduced legislation that allows operators to use most of its RAN products if they find a substitute for part of Huawei’s management system by 2029. Opponents have criticized that move, arguing it does not address the security threat posed by Huawei’s RAN software. Nevertheless, Ericsson clearly eyes an opportunity to serve European demand for military communications, an area where the use of Chinese vendors would be unthinkable.

“It is realistic to say that a large part of the increased defense spending in Europe will most likely be allocated to connectivity because that is a critical part of a modern defense force,” said Ekholm. “I think this is a very good opportunity for western vendors because it would be far-fetched to think they will go with high-risk vendors.” Ericsson is also targeting related demand for mission-critical services needed by first responders.

5G SA and Mobile Core Networks: Ekholm noted that 5G SA deployments are still few and far between – only a quarter of mobile operators have any kind of 5G SA deployment in place right now, with the most notable being in the US, India and China. “Two things need to happen” for greater 5G SA uptake, said the CEO.

- “One is mid-band [spectrum] coverage… there’s still very low build out coverage in, for example, Europe, where it’s probably less than half the population covered… Europe is clearly behind on that” compared with the U.S., China and India.

- “The second is that [network operators] need to upgrade their mobile core [platforms]... Those two things will have to happen to take full advantage of the capabilities of the [5G] network,” noted Ekholm, who said the arrival of new devices, such as AI glasses, that require ultra-low latency connections and “very high uplink performance” is starting to drive interest. “We’re also seeing a lot of network slicing opportunities,” he added, to deliver dedicated network resources to, for example, police forces, sports and entertainment stadiums “to guarantee uplink streams… consumers are willing to pay for these things. So I’m rather encouraged by the service innovation that’s starting to happen on 5G SA and… that’s going to drive the need for more radio coverage [for] mid-band and for core [systems].”

Ericsson’s Summary – Looking Ahead:

- Continue to strengthen competitive position

- Strong customer engagement for differentiated connectivity

- New use cases to monetize network investments taking shape

- Expect RAN market to remain broadly stable

- Structurally improving the business through rigorous cost management

- Continue to invest in technology leadership

………………………………………………………………………………………………………………………………………………………………………………………………

References:

https://www.telecomtv.com/content/5g/ericsson-ceo-waxes-lyrical-on-potential-of-5g-sa-ai-53441/

https://www.lightreading.com/5g/ericsson-targets-big-huawei-free-places-ai-and-nato-as-profits-soar

Ericsson revamps its OSS/BSS with AI using Amazon Bedrock as a foundation

Dell’Oro: AI RAN to account for 1/3 of RAN market by 2029; AI RAN Alliance membership increases but few telcos have joined

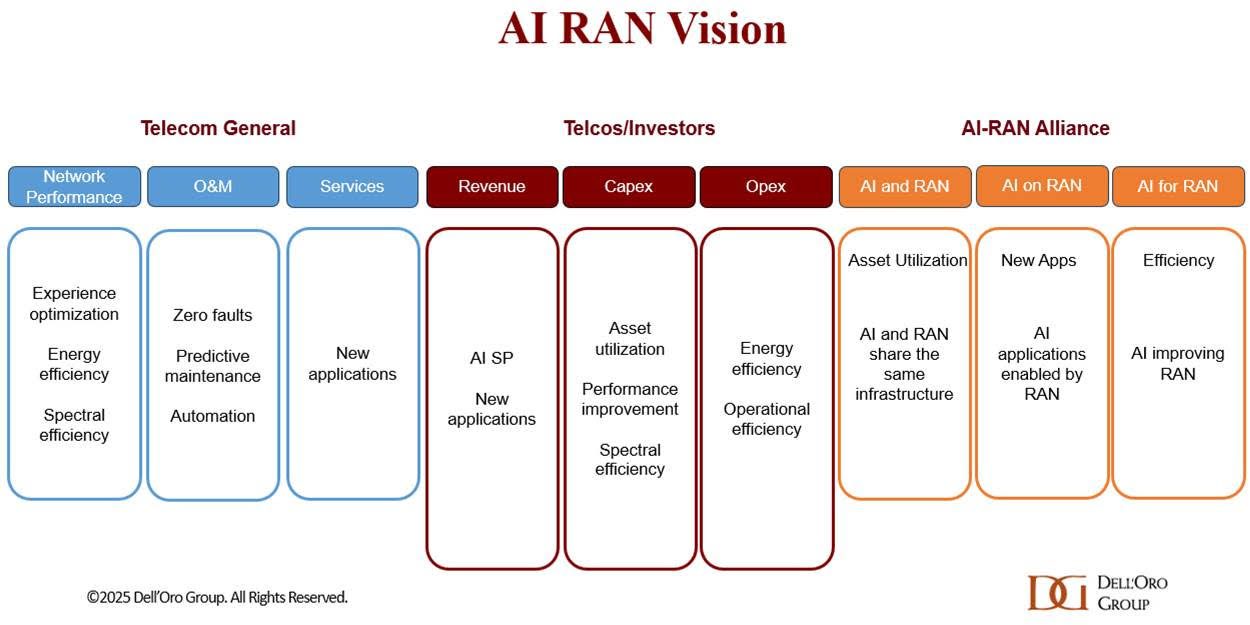

AI RAN [1.] is projected to account for approximately a third of the RAN market by 2029, according to a recent AI RAN Advanced Research Report published by the Dell’Oro Group. In the near term, the focus within the AI RAN segment will center on Distributed-RAN (D-RAN), single-purpose deployments, and 5G.

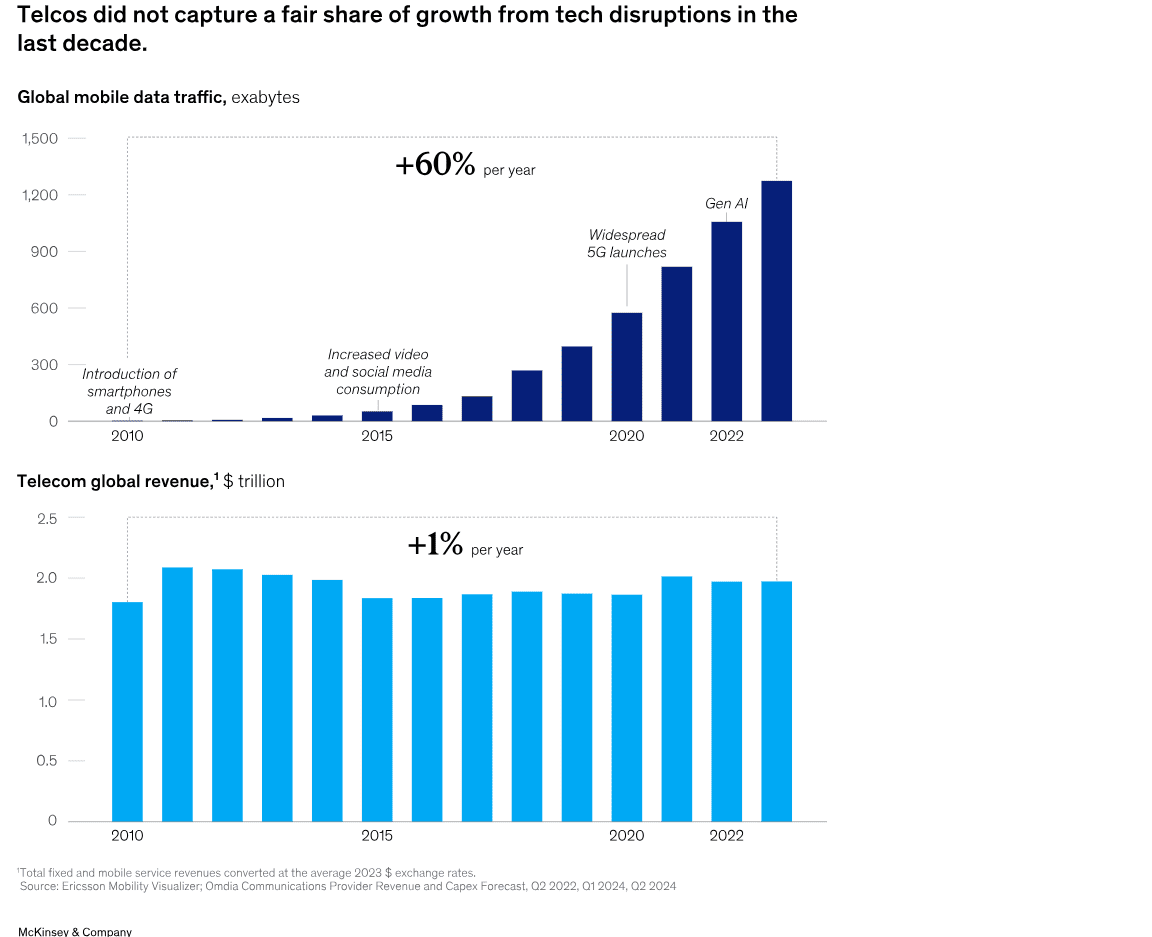

“Near-term priorities are more about efficiency gains than new revenue streams,” said Stefan Pongratz, Vice President at Dell’Oro Group. “There is strong consensus that AI RAN can improve the user experience, enhance performance, reduce power consumption, and play a critical role in the broader automation journey. Unsurprisingly, however, there is greater skepticism about AI’s ability to reverse the flat revenue trajectory that has defined operators throughout the 4G and 5G cycles,” continued Pongratz.

Note 1. AI RAN integrates AI and machine learning (ML) across various aspects of the RAN domain. The AI RAN scope in this report is aligned with the greater industry vision. While the broader AI RAN vision includes services and infrastructure, the projections in this report focus on the RAN equipment market.

Additional highlights from the July 2025 AI RAN Advanced Research Report:

- The base case is built on the assumption that AI RAN is not a growth vehicle, but that it is a crucial technology/tool for operators to adopt. Over time, operators will incorporate more virtualization, intelligence, automation, and O-RAN into their RAN roadmaps.

- This initial AI RAN report forecasts the AI RAN market based on location, tenancy, technology, and region.

- The existing RAN radio and baseband suppliers are well-positioned in the initial AI-RAN phase, driven primarily by AI-for-RAN upgrades leveraging the existing hardware. Per Dell’Oro Group’s regular RAN coverage, the top 5 RAN suppliers contributed around 95 percent of the 2024 RAN revenue.

- AI RAN is projected to account for around a third of total RAN revenue by 2029.

For the first quarter of 2025, Dell’Oro said the top five RAN suppliers by revenue outside of China were Ericsson, Nokia, Huawei, Samsung and ZTE. In terms of worldwide revenue, the ranking changes to Huawei, Ericsson, Nokia, ZTE and Samsung.

About the Report: Dell’Oro Group’s AI RAN Advanced Research Report includes a 5-year forecast for AI RAN by location, tenancy, technology, and region.

………………………………………………………………………………………………………………………………………………………………………………………………………………………………………………

Author’s Note: Nvidia’s Aerial Research portfolio already contains a host of AI-powered tools designed to augment wireless network simulations. It is also collaborating with T-Mobile and Cisco to develop AI RAN solutions to support future 6G applications. The GPU king is also working with two of those top five RAN suppliers, Nokia and Ericsson, on an AI-RAN Innovation Center. Unveiled last October, the project aims to bring together cloud-based RAN and AI development and push beyond applications that focus solely on improving efficiencies.

……………………………………………………………………………………………………………………………………………………………………………………………………………………………………………….

The one-year-old AI-RAN Alliance has now increased its membership to over 100, up from around 84 in May. However, few of its members are telcos, and Vodafone is the only one to have joined since May. The other telco members are Turkcell, Boost Mobile, Globe, Indosat Ooredoo Hutchison (Indonesia), Korea Telecom, LG Uplus, SK Telecom, T-Mobile US and SoftBank. This limited telco presence could reflect the ongoing skepticism about the goals of AI-RAN, including hopes for new revenue opportunities through network slicing, as well as hosting and monetizing enterprise AI workloads at the edge.

Francisco Martín Pignatelli, head of open RAN at Vodafone, hardly sounded enthusiastic in his statement in the AI-RAN Alliance press release. “Vodafone is committed to using AI to optimize and enhance the performance of our radio access networks. Running AI and RAN workloads on shared infrastructure boosts efficiency, while integrating AI and generative applications over RAN enables new real-time capabilities at the network edge,” he said.

Perhaps the most popular AI RAN scenario is “AI on RAN,” which enables AI services on the RAN at the network edge in a bid to support and benefit from new services, such as AI inferencing.

“We are thrilled by the extraordinary growth of the AI-RAN Alliance,” said Alex Jinsung Choi, Chair of the AI-RAN Alliance and Principal Fellow at SoftBank Corp.’s Research Institute of Advanced Technology. “This milestone underscores the global momentum behind advancing AI for RAN, AI and RAN, and AI on RAN. Our members are pioneering how artificial intelligence can be deeply embedded into radio access networks — from foundational research to real-world deployment — to create intelligent, adaptive, and efficient wireless systems.”

Choi recently suggested that now is the time to “revisit all our value propositions and then think about what should be changed or what should be built” to be able to address issues including market saturation and the “decoupling” between revenue growth and rising TCO. He also cited self-driving vehicles and mobile robots, where low latency is critical, as specific use cases where AI-RAN will be useful for running enterprise workloads.

About the AI-RAN Alliance:

The AI-RAN Alliance is a global consortium accelerating the integration of artificial intelligence into Radio Access Networks. Established in 2024, the Alliance unites leading companies, researchers, and technologists to advance open, practical approaches for building AI-native wireless networks. The Alliance focuses on enabling experimentation, sharing knowledge, and real-world performance to support the next generation of mobile infrastructure. For more information, visit: https://ai-ran.org

References:

https://www.delloro.com/advanced-research-report/ai-ran/

https://www.delloro.com/news/ai-ran-to-top-10-billion-by-2029/

Dell’Oro: RAN revenue growth in 1Q2025; AI RAN is a conundrum

AI RAN Alliance selects Alex Choi as Chairman

Nvidia AI-RAN survey results; AI inferencing as a reinvention of edge computing?

Deutsche Telekom and Google Cloud partner on “RAN Guardian” AI agent

The case for and against AI-RAN technology using Nvidia or AMD GPUs

Deloitte and TM Forum: How could AI revitalize the ailing telecom industry?

IEEE Techblog readers are well aware of the dire state of the global telecommunications industry. In particular:

- According to Deloitte, the global telecommunications industry is expected to have revenues of about US$1.53 trillion in 2024, up about 3% over the prior year. Both in 2024 and out to 2028, growth is expected to be higher in Asia Pacific and in Europe, the Middle East, and Africa, with growth in the Americas around 1% annually.

- Telco sales were less than $1.8 trillion in 2022 vs. $1.9 trillion in 2012, according to Light Reading. Collective investments of about $1 trillion over a five-year period had brought a lousy return of less than 1%.

- Last year (2024), spending on radio access network infrastructure fell by $5 billion, more than 12% of the total, according to analyst firm Omdia, imperilling the kit vendors on which telcos rely.
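The Omdia figure above and the 12.5% ($5 billion) decline cited in the Ericsson item are consistent with each other. Assuming the 12.5% is measured against 2023 spending, a back-of-envelope check gives the implied size of the RAN market (inputs are rounded, so treat the outputs as approximate):

```python
decline_bn = 5.0        # 2024 drop in RAN spending, US$ billions
decline_share = 0.125   # the drop as a share of prior-year spending (12.5%)

market_2023_bn = decline_bn / decline_share
market_2024_bn = market_2023_bn - decline_bn
print(f"Implied RAN market: 2023 ~${market_2023_bn:.0f}bn, 2024 ~${market_2024_bn:.0f}bn")
```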

Deloitte believes generative (gen) AI will have a huge impact on telecom network providers:

Telcos are using gen AI to reduce costs, become more efficient, and offer new services. Some are building new gen AI data centers to sell training and inference to others. What role does connectivity play in these data centers?

There is a gen AI gold rush expected over the next five years. Spending estimates range from hundreds of billions to over a trillion dollars on the physical layer required for gen AI: chips, data centers, and electricity [16]. Close to another hundred billion US dollars will likely be spent on the software and services layer [17]. Telcos should focus on the opportunity to participate by connecting all of those different pieces of hardware and software. And shouldn’t telcos, whose business is all about connectivity, be able to profit in some way?

There are gen AI markets for connectivity: inside the data centers there are miles of mainly copper (and some fiber) cables for transmitting data from board to board and rack to rack. Serving this market is worth billions in 2025 [18], but much of this connectivity is provided by data centers and chipmakers and has never been provided by telcos.

There are also massive, long-haul fiber networks ranging from tens to thousands of miles long. These connect (for example) a hyperscaler’s data centers across a region or continent, or even stretch along the seabed, connecting data centers across continents. Sometimes these new fiber networks are being built to support sovereign AI—that is, the need to keep all the AI data inside a given country or region.

Historically, those fiber networks were massive expenditures, built by only the largest telcos or (in the undersea case) built by consortia of telcos, to spread the cost across many players. In 2025, it looks like some of the major gen AI players are building at least some of this connection capacity, but largely on their own or with companies that are specialists in long-haul fiber.

Telcos may want to think about how they can continue to be a relevant player in this part of the connectivity space, rather than just ceding it to the gen AI behemoths. For context, it is estimated that big tech players will spend over US$100 billion on network capex between 2024 and 2030, representing 5% to 10% of their total capex in that period, up from a historical share of only about 4% to 5%.
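The estimate also indicates how large big tech’s overall capex is: if over US$100 billion is 5% to 10% of the total, total capex between 2024 and 2030 is roughly US$1 trillion to US$2 trillion or more. A quick check (since $100 billion is a floor, these are lower bounds):

```python
network_capex_bn = 100.0        # "over US$100 billion" of network capex, 2024-2030
share_min, share_max = 0.05, 0.10

# A larger network share of capex means a smaller implied total, and vice versa.
total_low_bn = network_capex_bn / share_max
total_high_bn = network_capex_bn / share_min
print(f"Implied total capex: at least ${total_low_bn:,.0f}bn to ${total_high_bn:,.0f}bn")
```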

The opportunities could be greater in connecting billions of consumers and enterprises. Telcos already serve these large markets, and as consumers and businesses start sending larger amounts of data over wireline and wireless networks, that growth might translate to higher revenues. A recent research report suggests that direct gen AI data traffic could be measured in exabytes by 2033 [24].

The immediate challenge is that many gen AI use cases for both consumer and enterprise markets are not exactly bandwidth hogs: in 2025, they tend to be text-based (so small file sizes), and users may expect answers in seconds rather than milliseconds [25], which can limit how telcos can monetize the traffic. Users will likely pay a premium for ultra-low latency, but where latency isn’t an issue, they are unlikely to do so.


A longer-term challenge is on-device edge computing. Even if users start doing a lot more with creating, consuming, and sharing gen AI video in real time (requiring much larger file transmission and lower latency), the majority of devices (smartphones, PCs, wearables, or Internet of Things (IoT) devices in factories and ports) are expected to soon have onboard gen AI processing chips [26]. These gen AI accelerators, combined with emerging small language models, may mean that network connectivity is less of an issue. Instead of a consumer recording a video, sending the raw image to the cloud for AI processing, then the cloud sending it back, the image could be enhanced or altered locally, with less need for high-speed or low-latency connectivity.

Of course, small models might not work well. The chips on consumer and enterprise edge devices might not be powerful enough, or might be so power-inefficient that battery life becomes unacceptably short. In that case, telcos may be lifted by a wave of gen AI usage. But that’s unlikely to be in 2025, or even 2026.

Another potential source of gen AI monetization is what’s being called AI Radio Access Network (RAN). At the top of every cell tower are a bunch of radios and antennas. There is also a powerful processor or processors for controlling those radios and antennas. In 2024, a consortium (the AI-RAN Alliance) was formed to look at the idea of adding the same kind of generative AI chips found in data centers or enterprise edge servers (a mix of GPUs and CPUs) to every tower. The idea would be that they could run the RAN, help make it more open, flexible, and responsive, dynamically configure the network in real time, and be able to perform gen AI inference or training as a service with any extra capacity left over, generating incremental revenues. At this time, a number of original equipment manufacturers (OEMs, including ones who currently account for over 95% of RAN sales), telcos, and chip companies are part of the alliance. Some expect AI RAN to be a logical successor to Open RAN, built on top of it, and perhaps even to be what 6G turns out to be.

…………………………………………………………………………………………………………………………………………………………………………….

The TM Forum has three broad “AI initiatives,” which are part of their overarching “Industry Missions.” These missions aim to change the future of global connectivity, with AI being a critical component.

The three broad “AI initiatives” (or “Industry Missions” where AI plays a central role) are:

- AI and Data Innovation: This mission focuses on the safe and widespread adoption of AI and data at scale within the telecommunications industry. It aims to help telcos accelerate, de-risk, and reduce the costs of applying AI technologies to cut operational expenses and drive revenue growth. This includes developing best practices, standards, data architectures, ontologies, and APIs.

- Autonomous Networks: This initiative is about unlocking the power of seamless end-to-end autonomous operations in telecommunications networks. AI is a fundamental technology for achieving higher levels of network automation, moving towards zero-touch, zero-wait, and zero-trouble operations.

- Composable IT and Ecosystems: While not solely an “AI initiative,” this mission focuses on simpler IT operations and partnering via AI-ready composable software. AI plays a significant role in enabling more agile and efficient IT systems that can adapt and integrate within dynamic ecosystems. It’s based on the TM Forum’s Open Digital Architecture (ODA). Eighteen big telcos are now running on ODA, while the same number of vendors are described by the TM Forum as “ready” to adopt it.

These initiatives are supported by various programs, tools, and resources, including:

- AI Operations (AIOps): Focusing on deploying and managing AI at scale, re-engineering operational processes to support AI, and governing AI operations.

- Responsible AI: Addressing ethical considerations, risk management, and governance frameworks for AI.

- Generative AI Maturity Interactive Tool (GAMIT): To help organizations assess their readiness to exploit the power of GenAI.

- AI Readiness Check (AIRC): An online tool for members to identify gaps in their AI adoption journey across key business dimensions.

- AI for Everyone (AI4X): A pillar focused on democratizing AI across all business functions within an organization.

Under the leadership of CEO Nik Willetts, a rejuvenated, AI-wielding TM Forum now underpins what many telcos do in business and operational support systems, the essential IT plumbing. The TM Forum rates automation using the same six-level scale as the car industry, where Level 0 means completely manual and Level 5 heralds the end of human intervention. Many telcos are on track for Level 4 in specific areas this year, said Willetts. China Mobile has already realized an 80% reduction in major faults, saving 3,000 person-years of effort and 4,000 kilowatt hours of energy each year, thanks to automation.

Outside of China, telcos and telco vendors are leaning heavily on technologies mainly developed by just a few U.S. companies to implement AI. A person remains in the loop for critical decision-making, but the justifications for taking any decision are increasingly provided by systems built on the core underlying technologies from those same few companies. As IEEE Techblog has noted, AI is still hallucinating – throwing up nonsense or falsehoods – just as domain-specific experts are being threatened by it.

Agentic AI substitutes interacting software programs for junior technicians, who are the future decision-makers. If Level 4 automation renders them superfluous, where will the future decision-makers come from?

Caroline Chappell, an independent consultant with years of expertise in the telecom industry, says there is now talk of what the AI pundits call “learning world models,” more sophisticated AI that grows to understand its environment much as a baby does. When mature, it could come up with completely different approaches to the design of telecom networks and technologies. At this stage, it may be impossible for almost anyone to understand what AI is doing, she said.

References:

Sources: AI is Getting Smarter, but Hallucinations Are Getting Worse

McKinsey: AI infrastructure opportunity for telcos? AI developments in the telecom sector

Dell’Oro: RAN revenue growth in 1Q2025; AI RAN is a conundrum

Dell’Oro Group just completed its 1Q-2025 Radio Access Network (RAN) report. Initial findings suggest that after two years of steep declines, market conditions improved in the quarter. Preliminary estimates show that worldwide RAN revenue, excluding services, stabilized year-over-year, resulting in the first growth quarter since 1Q-2023. Author Stefan Pongratz attributes the improved conditions to a favorable regional mix and easy comparisons (investment was very low in the same quarter last year), rather than to a change in the fundamentals that shape the RAN market.

Pongratz believes the long-term trajectory has not changed. “While it is exciting that RAN came in as expected and the full year outlook remains on track, the message we have communicated for some time now has not changed. The RAN market is still growth-challenged as regional 5G coverage imbalances, slower data traffic growth, and monetization challenges continue to weigh on the broader growth prospects,” he added.

Vendor rankings haven’t changed much in several years, as per this table:

Additional highlights from the 1Q 2025 RAN report:

– Strong growth in North America was enough to offset declines in CALA, China, and MEA.

– The picture is less favorable outside of North America. RAN, excluding North America, recorded a fifth consecutive quarter of declines.

– Revenue rankings did not change in 1Q 2025. The top 5 RAN suppliers (4-Quarter Trailing) based on worldwide revenues are Huawei, Ericsson, Nokia, ZTE, and Samsung.

– The top 5 RAN (4-Quarter Trailing) suppliers based on revenues outside of China are Ericsson, Nokia, Huawei, Samsung, and ZTE.

– The short-term outlook is mostly unchanged, with total RAN expected to remain stable in 2025 and RAN outside of China growing at a modest pace.

Dell’Oro Group’s RAN Quarterly Report offers a complete overview of the RAN industry, with tables covering manufacturers’ and market revenue for multiple RAN segments including 5G NR Sub-7 GHz, 5G NR mmWave, LTE, macro base stations and radios, small cells, Massive MIMO, Open RAN, and vRAN. The report also tracks the RAN market by region and includes a four-quarter outlook.

………………………………………………………………………………………………………………………………………………………………………………..

Separately, Pongratz says “there is great skepticism about AI’s ability to reverse the flat revenue trajectory that has defined network operators throughout the 4G and 5G cycles.”

The 3GPP AI/ML activities and roadmap are mostly aligned with the broader efficiency aspects of the AI RAN vision, primarily focused on automation, management data analytics (MDA), SON/MDT, and over-the-air (OTA) related work (CSI, beam management, mobility, and positioning).

Current AI/ML activities align well with the AI-RAN Alliance’s vision to elevate the RAN’s potential with more automation, improved efficiencies, and new monetization opportunities. The AI-RAN Alliance envisions three key development areas: 1) AI and RAN – improving asset utilization by using a common shared infrastructure for both RAN and AI workloads, 2) AI on RAN – enabling AI applications on the RAN, 3) AI for RAN – optimizing and enhancing RAN performance. Or from an operator standpoint, AI offers the potential to boost revenue or reduce capex and opex.

While operators generally don’t consider AI the end destination, they believe more openness, virtualization, and intelligence will play essential roles in the broader RAN automation journey.

Operators are not revising their topline growth or mobile data traffic projections upward as a result of AI growing in and around the RAN. Disappointing 4G/5G returns and the failure to reverse the flattish carrier revenue trajectory help explain the increased focus on what can be controlled: AI RAN is currently all about improving performance and efficiency and reducing opex.

Since the typical gains demonstrated so far are in the 10% to 30% range for specific features, the AI RAN business case will hinge crucially on the cost and power envelope—the risk appetite for growing capex/opex is limited.

The AI-RAN business case using new hardware is difficult to justify for single-purpose tenancy. However, if the operators can use the resources for both RAN and non-RAN workloads and/or the accelerated computing cost comes down (NVIDIA recently announced ARC-Compact, an AI-RAN solution designed for D-RAN), the TAM could expand. For now, the AI service provider vision, where carriers sell unused capacity at scale, remains somewhat far-fetched, and as a result, multi-purpose tenancy is expected to account for a small share of the broader AI RAN market over the near term.
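The arithmetic behind that business case can be sketched in a few lines. All figures in the example are hypothetical and in arbitrary currency units. The only number taken from the text is the 10% to 30% feature-gain range. The point is the break-even condition. The efficiency gain on the targeted cost, plus any revenue from selling spare capacity, must exceed the annualized added capex/opex of the accelerated hardware.

```python
def net_annual_benefit(targeted_cost: float, gain: float,
                       added_cost: float, tenant_revenue: float = 0.0) -> float:
    """Savings from an efficiency gain plus spare-capacity revenue, minus added cost."""
    return targeted_cost * gain + tenant_revenue - added_cost


def break_even_gain(targeted_cost: float, added_cost: float,
                    tenant_revenue: float = 0.0) -> float:
    """Efficiency gain at which the investment just pays for itself."""
    return (added_cost - tenant_revenue) / targeted_cost


# Hypothetical per-site numbers (arbitrary units per year).
targeted_cost = 10_000   # opex the AI features act on
added_cost = 2_500       # annualized cost of the accelerator, incl. power

for gain in (0.10, 0.20, 0.30):
    net = net_annual_benefit(targeted_cost, gain, added_cost)
    print(f"gain {gain:.0%}: single-purpose net = {net:+,.0f}")

print(f"break-even gain, single-purpose: {break_even_gain(targeted_cost, added_cost):.0%}")
print(f"break-even gain, with 1,500 of tenant revenue: "
      f"{break_even_gain(targeted_cost, added_cost, tenant_revenue=1_500):.0%}")
```

With these made-up inputs, a single-purpose deployment breaks even only at a 25% gain, near the top of the 10% to 30% range. Modest multi-tenant revenue pulls break-even down to 10%, the bottom of that range. This sensitivity is why the cost and power envelope matters so much.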

In short, improving something already done by 10% to 30% is not overly exciting. However, suppose AI embedded in the radio signal processing can realize more significant gains or help unlock new revenue opportunities by improving site utilization and providing telcos with an opportunity to sell unused RAN capacity. In that case, there are reasons to be excited. But since the latter is a lower-likelihood play, the base case expectation is that AI RAN will produce tangible value-add, and the excitement level is moderate — or as the Swedes would say, it is lagom.

…………………………………………………………………………………………………………………………………………………………………………………………………………………………………………….

Editor’s Note:

ITU-R WP 5D is working on aspects related to AI in the Radio Access Network (RAN) as part of its IMT-2030 (6G) recommendations. IMT-2030 is expected to consider an appropriate AI-native new air interface that uses, to the extent practicable, proven and demonstrated actionable AI to enhance the performance of radio interface functions such as symbol detection/decoding and channel estimation. An appropriate AI-native radio network would enable automated and intelligent networking services such as intelligent data perception and the supply of on-demand capability. Radio networks that support applicable AI services would be fundamental to the design of IMT technologies that serve various AI applications, and the proposed directions include on-demand uplink/sidelink-centric, deep edge, and distributed machine learning.

In summary:

- ITU-R WP5D recognizes AI as one of the key technology trends for IMT-2030 (6G).

- This includes “native AI,” which encompasses both AI-enabled air interface design and radio network for AI services.

- AI is expected to play a crucial role in enhancing the capabilities and performance of 6G networks.

…………………………………………………………………………………………………………………………………………………………………………………………………………………………………………….

References:

Dell’Oro: Private RAN revenue declines slightly, but still doing relatively better than public RAN and WLAN markets

ITU-R WP 5D reports on: IMT-2030 (“6G”) Minimum Technology Performance Requirements; Evaluation Criteria & Methodology

https://www.itu.int/dms_pubrec/itu-r/rec/m/R-REC-M.2160-0-202311-I!!PDF-E.pdf

McKinsey: AI infrastructure opportunity for telcos? AI developments in the telecom sector

A new report from McKinsey & Company offers a wide range of options for telecom network operators looking to enter the market for AI services. One high-level conclusion is that strategy inertia and decision paralysis might be the most dangerous threats. That’s largely based on telcos’ failure to monetize past emerging technologies like smartphones and mobile apps, cloud networking, 5G-SA (the true 5G), etc. For example, global mobile data traffic rose 60% per year from 2010 to 2023, while the global telecom industry’s revenues rose just 1% during that same time period.

“Operators could provide the backbone for today’s AI economy to reignite growth. But success will hinge on effectively navigating complex market dynamics, uncertain demand, and rising competition….Not every path will suit every telco; some may be too risky for certain operators right now. However, the most significant risk may come from inaction, as telcos face the possibility of missing out on their fair share of growth from this latest technological disruption.”

McKinsey predicts that global data center demand could rise as high as 298 gigawatts by 2030, from just 55 gigawatts in 2023. Fiber connections to AI-infused data centers could generate up to $50 billion globally in sales for facilities-based fiber carriers.
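The McKinsey growth figures above are easier to compare once they are compounded. The sketch assumes the 1% revenue figure is an annual rate. If it is cumulative over the period, the gap is even wider. The sketch also treats 55 GW and 298 GW as the 2023 and 2030 endpoints of data center demand.

```python
def growth_multiple(annual_rate: float, years: int) -> float:
    """How many times larger a quantity gets after compounding for `years`."""
    return (1 + annual_rate) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


years = 2023 - 2010
traffic_x = growth_multiple(0.60, years)      # mobile data traffic at 60%/yr
revenue_x = growth_multiple(0.01, years)      # industry revenue, assuming 1%/yr
dc_cagr = implied_cagr(55, 298, 2030 - 2023)  # data center demand, GW

print(f"traffic grew ~{traffic_x:,.0f}x, revenue ~{revenue_x:.2f}x over {years} years")
print(f"55 GW -> 298 GW by 2030 implies ~{dc_cagr:.1%} per year")
```

Traffic grew roughly 450-fold while revenue grew about 14%, which is the monetization gap McKinsey points to. The data center projection works out to growth of a little over 27% per year.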

Pathways to growth: four strategic options

- Connecting new data centers with fiber

- Enabling high-performance cloud access with intelligent network services

- Turning unused space and power into revenue

- Building a new GPU as a Service business.

“Our research suggests that the addressable GPUaaS [GPU-as-a-service] market addressed by telcos could range from $35 billion to $70 billion by 2030 globally.” Verizon, with its AI Connect service (described below), and, in Asia, Indosat Ooredoo Hutchison (IOH), Singtel, and SoftBank have launched their own GPUaaS offerings.

……………………………………………………………………………………………………………………………………………………………………………………………………………………………….

Recent AI developments in the telecom sector include:

- The AI-RAN Alliance, which promises to allow wireless network operators to add AI to their radio access networks (RANs) and then sell AI computing capabilities to enterprises and other customers at the network edge. Nvidia is leading this industrial initiative. Telecom operators in the alliance include T-Mobile and SoftBank, as well as Boost Mobile, Globe, Indosat Ooredoo Hutchison, Korea Telecom, LG UPlus, SK Telecom and Turkcell.

- Verizon’s new AI Connect product, which includes Vultr’s GPU-as-a-service (GPUaaS) offering. GPU-as-a-service is a cloud computing model that allows businesses to rent access to powerful graphics processing units (GPUs) for AI and machine learning workloads without having to purchase and maintain that expensive hardware themselves. Verizon also has agreements with Google Cloud and Meta to provide network infrastructure for their AI workloads, demonstrating a focus on supporting the broader AI economy.

- Orange views AI as a critical growth driver. They are developing “AI factories” (data centers optimized for AI workloads) and providing an “AI platform layer” called Live Intelligence to help enterprises build generative AI systems. They also offer a generative AI assistant for contact centers in partnership with Microsoft.

- Lumen Technologies continues to build fiber connections intended to carry AI traffic.

- British Telecom (BT) has launched intelligent network services and is working with partners like Fortinet to integrate AI for enhanced security and network management.

- Telus (Canada) has built its own AI platform called “Fuel iX” to boost employee productivity and generate new revenue. They are also commercializing Fuel iX and building sovereign AI infrastructure.

- Telefónica: Their “Next Best Action AI Brain” uses an in-house Kernel platform to revolutionize customer interactions with precise, contextually relevant recommendations.

- Bharti Airtel (India): Launched India’s first anti-spam network, an AI-powered system that processes billions of calls and messages daily to identify and block spammers.

- e& (formerly Etisalat in UAE): Has launched the “Autonomous Store Experience (EASE),” which uses smart gates, AI-powered cameras, robotics, and smart shelves for a frictionless shopping experience.

- SK Telecom (Korea): Unveiled a strategy to implement an “AI Infrastructure Superhighway” and is actively involved in AI-RAN (AI in Radio Access Networks) development, including their AITRAS solution.

- Vodafone: Sees AI as a transformative force, with initiatives in network optimization, customer experience (e.g., their TOBi chatbot handling over 45 million interactions per month), and even supporting neurodiverse staff.

- Deutsche Telekom: Deploys AI across various facets of its operations.

……………………………………………………………………………………………………………………………………………………………………..

A recent report from DCD indicates that new AI models that can reason may require massive, expensive data centers, and such data centers may be out of reach for even the largest telecom operators. Data centers are already communicating with each other over optical interconnects for multi-cluster training runs. “What we see is that, in the largest data centers in the world, there’s actually a data center and another data center and another data center,” a speaker quoted in the DCD report says. “Then the interesting discussion becomes – do I need 100 meters? Do I need 500 meters? Do I need a kilometer interconnect between data centers?”
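One physical input to the "100 meters or a kilometer?" question is propagation delay, which grows linearly with fiber length. The sketch below assumes a group index of about 1.47, a typical value for standard single-mode fiber. It ignores transceiver, switching, and serialization latency. Real interconnect design also weighs optics reach, power, and cost.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458
GROUP_INDEX = 1.47  # assumption: typical value for standard single-mode fiber


def fiber_delay_us(distance_m: float, group_index: float = GROUP_INDEX) -> float:
    """One-way propagation delay through fiber, in microseconds."""
    return distance_m * group_index / SPEED_OF_LIGHT_M_PER_S * 1e6


for distance in (100, 500, 1_000):
    one_way = fiber_delay_us(distance)
    print(f"{distance:>5} m: {one_way:.2f} us one-way, {2 * one_way:.2f} us round trip")
```

At roughly 5 microseconds per kilometer each way, a 1 km link adds about 10 microseconds per round trip. Whether that matters depends on how often a training job has to synchronize across sites.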

……………………………………………………………………………………………………………………………………………………………………..

References:

https://www.datacenterdynamics.com/en/analysis/nvidias-networking-vision-for-training-and-inference/

https://opentools.ai/news/inaction-on-ai-a-critical-misstep-for-telecos-says-mckinsey

Bain & Co, McKinsey & Co, AWS suggest how telcos can use and adapt Generative AI

Nvidia AI-RAN survey results; AI inferencing as a reinvention of edge computing?

The case for and against AI-RAN technology using Nvidia or AMD GPUs

Telecom and AI Status in the EU

Major technology companies form AI-Enabled Information and Communication Technology (ICT) Workforce Consortium

AI RAN Alliance selects Alex Choi as Chairman

AI Frenzy Backgrounder; Review of AI Products and Services from Nvidia, Microsoft, Amazon, Google and Meta; Conclusions

AI sparks huge increase in U.S. energy consumption and is straining the power grid; transmission/distribution as a major problem

Deutsche Telekom and Google Cloud partner on “RAN Guardian” AI agent

NEC’s new AI technology for robotics & RAN optimization designed to improve performance

MTN Consulting: Generative AI hype grips telecom industry; telco CAPEX decreases while vendor revenue plummets

Amdocs and NVIDIA to Accelerate Adoption of Generative AI for $1.7 Trillion Telecom Industry

SK Telecom and Deutsche Telekom to Jointly Develop Telco-specific Large Language Models (LLMs)

Telecom sessions at Nvidia’s 2025 AI developers GTC: March 17–21 in San Jose, CA

Nvidia’s annual AI developers conference (GTC) used to be a relatively modest affair, drawing about 9,000 people in its last year before the Covid outbreak. But the event now unofficially dubbed “AI Woodstock” is expected to bring more than 25,000 in-person attendees!

Nvidia’s Blackwell AI chips, the main showcase of last year’s GTC (GPU Technology Conference), have only recently started shipping in high volume following delays related to the mass production of their complicated design. Blackwell is expected to be the main anchor of Nvidia’s AI business through next year. Analysts expect Nvidia Chief Executive Jensen Huang to showcase a revved-up version of that family called Blackwell Ultra at his keynote address on Tuesday.

March 18th Update: The next Blackwell Ultra NVL72 systems, which have one-and-a-half times more memory and two times more bandwidth, will be used to accelerate building AI agents, physical AI, and reasoning models, Huang said. Blackwell Ultra will be available in the second half of this year. The Rubin AI chip is expected to launch in late 2026. Rubin Ultra will take the stage in 2027.

Nvidia watchers are especially eager to hear more about the next generation of AI chips called Rubin, which Nvidia has only teased in prior events. Ross Seymore of Deutsche Bank expects the Rubin family to show “very impressive performance improvements” over Blackwell. Atif Malik of Citigroup notes that Blackwell provided 30 times faster performance than the company’s previous generation on AI inferencing, which is when trained AI models generate output. “We don’t rule out Rubin seeing similar improvement,” Malik wrote in a note to clients this month.

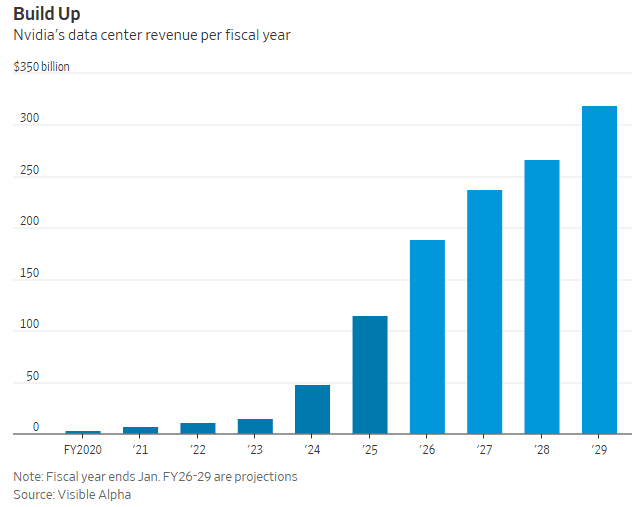

Rubin products aren’t expected to start shipping until next year. But much is already expected of the lineup; analysts forecast Nvidia’s data-center business will hit about $237 billion in revenue for the fiscal year ending in January of 2027, more than double its current size. The same segment is expected to eclipse $300 billion in annual revenue two years later, according to consensus estimates from Visible Alpha. That would imply an average annual growth rate of 30% over the next four years, for a business that has already exploded more than sevenfold over the last two.
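The 30% figure can be sanity-checked with compound-growth arithmetic. The sketch makes two assumptions, both interpretations rather than figures from Visible Alpha. First, the four-year window runs from the current fiscal year to the one ending in January 2029. Second, "more than double its current size" puts today's data-center revenue at no more than about half of $237 billion.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


current_upper_bound = 237 / 2   # $B; "more than double" means today is below this
target_fy2029 = 300             # $B; "eclipse $300 billion" means at least this

floor_rate = implied_cagr(current_upper_bound, target_fy2029, 4)
start_for_30pct = target_fy2029 / 1.30 ** 4

print(f"implied growth is at least ~{floor_rate:.0%} per year")
print(f"a 30% average rate corresponds to a starting point near ${start_for_30pct:.0f}B")
```

Under these readings, the consensus numbers imply growth of at least roughly 26% a year. Growth reaches 30% if the current base is closer to $105 billion, which is in line with the stated average.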

Nvidia has also been haunted by worries about competition with in-house chips designed by its biggest customers like Amazon and Google. Another concern has been the efficiency breakthroughs claimed by Chinese AI startup DeepSeek, which would seemingly lessen the need for the types of AI chip clusters that Nvidia sells for top dollar.

…………………………………………………………………………………………………………………………………………………………………………………………………………………….

Telecom Sessions of Interest:

Wednesday Mar 19 | 2:00 PM – 2:40 PM

Delivering Real Business Outcomes With AI in Telecom [S73438]

In this session, executives from three leading telcos will share their unique journeys of embedding AI into their organizations. They’ll discuss how AI is driving measurable value across critical areas such as network optimization, customer experience, operational efficiency, and revenue growth. Gain insights into the challenges and lessons learned, key strategies for successful AI implementation, and the transformative potential of AI in addressing evolving industry demands.

Thursday Mar 20 | 11:00 AM – 11:40 AM PDT

AI-RAN in Action [S72987]

Thursday Mar 20 | 9:00 AM – 9:40 AM PDT

How Indonesia Delivered a Telco-led Sovereign AI Platform for 270M Users [S73440]

Thursday Mar 20 | 3:00 PM – 3:40 PM PDT

Driving 6G Development With Advanced Simulation Tools [S72994]

Thursday Mar 20 | 2:00 PM – 2:40 PM PDT

Thursday Mar 20 | 4:00 PM – 4:40 PM PDT

Pushing Spectral Efficiency Limits on CUDA-accelerated 5G/6G RAN [S72990]

Thursday Mar 20 | 4:00 PM – 4:40 PM PDT

Enable AI-Native Networking for Telcos with Kubernetes [S72993]

Monday Mar 17 | 3:00 PM – 4:45 PM PDT

Automate 5G Network Configurations With NVIDIA AI LLM Agents and Kinetica Accelerated Database [DLIT72350]

Learn how to create AI agents using LangGraph and NVIDIA NIM to automate 5G network configurations. You’ll deploy LLM agents to monitor real-time network quality of service (QoS) and dynamically respond to congestion by creating new network slices. LLM agents will process logs to detect when QoS falls below a threshold, then automatically trigger a new slice for the affected user equipment. Using graph-based models, the agents understand the network configuration, identifying impacted elements. This ensures efficient, AI-driven adjustments that consider the overall network architecture.

We’ll use the Open Air Interface 5G lab to simulate the 5G network, demonstrating how AI can be integrated into real-world telecom environments. You’ll also gain practical knowledge on using Python with LangGraph and NVIDIA AI endpoints to develop and deploy LLM agents that automate complex network tasks.

Prerequisite: Python programming.
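The detect-and-remediate loop described in that lab can be sketched without an LLM, to show the control flow an agent would automate. This is a hypothetical, rule-based stand-in. It does not use LangGraph, NVIDIA NIM, or OpenAirInterface, and the log format and thresholds are invented. `SliceController.create_slice` is a placeholder for whatever orchestration API a real deployment exposes. In the lab's design, an LLM agent would replace the hard-coded rules and use a graph-based model of the network configuration to identify affected elements.

```python
import re
from dataclasses import dataclass, field

# Hypothetical log format: "ue_id=UE7 throughput_mbps=4.2 latency_ms=80"
LOG_PATTERN = re.compile(
    r"ue_id=(?P<ue>\S+)\s+throughput_mbps=(?P<tput>[\d.]+)\s+latency_ms=(?P<lat>[\d.]+)"
)


@dataclass
class QosPolicy:
    min_throughput_mbps: float = 10.0  # hypothetical threshold
    max_latency_ms: float = 50.0       # hypothetical threshold


@dataclass
class SliceController:
    """Placeholder for a real orchestration API; it only records requests."""
    requests: list[dict] = field(default_factory=list)

    def create_slice(self, ue_id: str, reason: str) -> None:
        # SST 1 (eMBB in 3GPP TS 23.501) is chosen arbitrarily for illustration.
        self.requests.append({"ue_id": ue_id, "sst": 1, "reason": reason})


def remediate(log_lines: list[str], policy: QosPolicy,
              controller: SliceController) -> list[dict]:
    """Scan QoS logs and request one new slice per degraded UE."""
    handled: set[str] = set()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if match is None:
            continue  # ignore lines that do not match the assumed format
        ue = match["ue"]
        tput, lat = float(match["tput"]), float(match["lat"])
        problems = []
        if tput < policy.min_throughput_mbps:
            problems.append(f"throughput {tput} Mbps")
        if lat > policy.max_latency_ms:
            problems.append(f"latency {lat} ms")
        if problems and ue not in handled:
            controller.create_slice(ue, "; ".join(problems))
            handled.add(ue)
    return controller.requests


logs = [
    "ue_id=UE1 throughput_mbps=25.0 latency_ms=20",
    "ue_id=UE2 throughput_mbps=4.2 latency_ms=80",
    "malformed line",
    "ue_id=UE2 throughput_mbps=3.0 latency_ms=90",
]
print(remediate(logs, QosPolicy(), SliceController()))
```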

………………………………………………………………………………………………………………………………………………………………………………………………………..

References:

Nvidia AI-RAN survey results; AI inferencing as a reinvention of edge computing?

The case for and against AI-RAN technology using Nvidia or AMD GPUs

FT: Nvidia invested $1bn in AI start-ups in 2024

Quartet launches “Open Telecom AI Platform” with multiple AI layers and domains

At Mobile World Congress 2025, Jio Platforms (JPL), AMD, Cisco, and Nokia announced the Open Telecom AI Platform, a new project designed to pioneer the use of AI across all network domains. It aims to provide a centralized intelligence layer that can integrate AI and automation into every layer of network operations.

The AI platform will be large language model (LLM) agnostic and use open APIs to optimize functionality and capabilities. By collectively harnessing agentic AI and using LLMs, domain-specific SLMs and machine learning techniques, the Telecom AI Platform is intended to enable end-to-end intelligence for network management and operations. The founding quartet of companies said that by combining shared elements, the platform provides improvements across network security and efficiency alongside a reduction in total cost of ownership. The companies each bring their specific expertise to the consortium across domains including RAN, routing, AI compute and security.

Jio Platforms will be the initial customer.

“Think about this platform as multi-layer, multi-domain. Each of these domains, or each of these layers, will have their own agentic AI capability. By harnessing agentic AI across all telco layers, we are building a multimodal, multidomain orchestrated workflow platform that redefines efficiency, intelligence, and security for the telecom industry,” said Mathew Oommen, group CEO, Reliance Jio.

“In collaboration with AMD, Cisco, and Nokia, Jio is advancing the Open Telecom AI Platform to transform networks into self-optimising, customer-aware ecosystems. This initiative goes beyond automation – it’s about enabling AI-driven, autonomous networks that adapt in real time, enhance user experiences, and create new service and revenue opportunities across the digital ecosystem,” he added.

On top of Jio Platforms’ agentic AI workflow manager is an AI orchestrator, which will work with whichever LLM is deemed best. “Whichever LLM is the right LLM, this orchestrator will leverage it through an API framework,” Oommen explained. He said that Jio Platforms could have its first product set sometime this year.
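Oommen's description of an orchestrator that uses "whichever LLM is the right LLM" through an API framework maps onto a familiar routing pattern: model back ends are registered behind one calling interface, and the orchestrator picks one per request. The sketch below is hypothetical and is not the Open Telecom AI Platform's design. The back-end names, domains, quality scores, and costs are invented, and each `call` function stands in for a real model endpoint.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ModelBackend:
    name: str
    domains: set[str]           # network domains the model is suited for
    quality: float              # hypothetical offline evaluation score, 0..1
    cost_per_1k_tokens: float   # hypothetical price
    call: Callable[[str], str]  # stand-in for a real model endpoint


class Orchestrator:
    """Routes each request to the best registered model for its domain."""

    def __init__(self) -> None:
        self.backends: list[ModelBackend] = []

    def register(self, backend: ModelBackend) -> None:
        self.backends.append(backend)

    def pick(self, domain: str, max_cost: Optional[float] = None) -> ModelBackend:
        candidates = [
            b for b in self.backends
            if domain in b.domains
            and (max_cost is None or b.cost_per_1k_tokens <= max_cost)
        ]
        if not candidates:
            raise LookupError(f"no model registered for domain {domain!r}")
        # Highest quality wins; ties go to the cheaper model.
        return max(candidates, key=lambda b: (b.quality, -b.cost_per_1k_tokens))

    def run(self, domain: str, prompt: str, max_cost: Optional[float] = None) -> str:
        return self.pick(domain, max_cost).call(prompt)


orch = Orchestrator()
orch.register(ModelBackend("general-llm", {"ran", "core", "care"}, 0.80, 1.00,
                           lambda p: f"[general-llm] {p}"))
orch.register(ModelBackend("ran-slm", {"ran"}, 0.90, 0.10,
                           lambda p: f"[ran-slm] {p}"))

print(orch.run("ran", "Explain the drop in cell 42 throughput"))
print(orch.run("core", "Summarize AMF alarms"))
```

Domain-specific small models and a general LLM sit behind one interface. That lets the orchestrator swap models without changing the workflows that call it.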

Under the terms of the agreement, AMD will provide high-performance computing solutions, including EPYC CPUs, Instinct GPUs, DPUs, and adaptive computing technologies. Cisco will contribute networking, security, and AI analytics solutions, including Cisco Agile Services Networking, AI Defense, Splunk Analytics, and Data Center Networking. Nokia will bring expertise in wireless and fixed broadband, core networks, IP, and optical transport. Finally, Jio Platforms Limited (JPL) will be the platform’s lead organizer and first adopter. It will also provide the initial deployment and a reference model for global telecom operators.

The Telecom AI Platform intends to share the results with other network operators (besides Jio).

“We don’t want to take a few years to create something. I will tell you a little secret, and the secret is Reliance Jio has decided to look at markets outside of India. As part of this, we will not only leverage it for Jio, we will figure out how to democratize this platform for the rest of the world. Because unlike a physical box, this is going to be a lot of virtual functions and capabilities.”

AMD represents a lower-cost alternative to Intel in central processing units (CPUs) and to Nvidia in graphics processing units (GPUs). For AMD, getting into a potentially successful telco platform is a significant win. Intel, its arch-rival in CPUs, has a major lead in telecom projects (e.g., cloud RAN and Open RAN), having invested massive amounts of money in 5G and other telecom technologies.

AMD’s participation suggests that this JPL-led group is looking for hardware that can handle AI workloads at a much lower cost than NVIDIA GPUs.

“AMD is proud to collaborate with Jio Platforms Limited, Cisco, and Nokia to power the next generation of AI-driven telecom infrastructure,” said Lisa Su, chair and CEO, AMD. “By leveraging our broad portfolio of high-performance CPUs, GPUs, and adaptive computing solutions, service providers will be able to create more secure, efficient, and scalable networks. Together we can bring the transformational benefits of AI to both operators and users and enable innovative services that will shape the future of communications and connectivity.”

Jio will surely be keeping a close eye on the cost of rolling out this reference architecture when the time comes, and optimizing it to ensure the telco AI platform is financially viable.

“Nokia possesses trusted technology leadership in multiple domains, including RAN, core, fixed broadband, IP and optical transport. We are delighted to bring this broad expertise to the table in service of today’s important announcement,” said Pekka Lundmark, President and CEO at Nokia. “The Telecom AI Platform will help Jio to optimise and monetise their network investments through enhanced performance, security, operational efficiency, automation and greatly improved customer experience, all via the immense power of artificial intelligence. I am proud that Nokia is contributing to this work.”

Cisco chairman and CEO Chuck Robbins said: “This collaboration with Jio Platforms Limited, AMD and Nokia harnesses the expertise of industry leaders to revolutionise networks with AI.

“Cisco is proud of the role we play here with integrated solutions from across our stack including Cisco Agile Services Networking, Data Center Networking, Compute, AI Defence, and Splunk Analytics. We look forward to seeing how the Telecom AI Platform will boost efficiency, enhance security, and unlock new revenue streams for service provider customers.”

If all goes well, the Open Telecom AI Platform could offer an alternative to Nvidia’s AI infrastructure, and give telcos in lower-ARPU markets a more cost-effective means of imbuing their network operations with the power of AI.

References:

https://www.telecoms.com/ai/jio-s-new-ai-club-could-offer-a-cheaper-route-into-telco-ai

Does AI change the business case for cloud networking?

For several years now, the big cloud service providers – Amazon Web Services (AWS), Microsoft Azure, and Google Cloud – have tried to get wireless network operators to run their 5G SA core network, edge computing, and various distributed applications on their cloud platforms. For example, Amazon’s AWS public cloud, Microsoft’s Azure for Operators, and Google’s Anthos for Telecom were intended to get network operators to run their core network functions in a hyperscaler cloud.

AWS had early success with Dish Network’s 5G SA core network, which runs all of its functions in Amazon’s cloud with fully automated network deployment and operations.

Conversely, AT&T has yet to commercially deploy its 5G SA Core network on the Microsoft Azure public cloud. Also, users on AT&T’s network have experienced difficulties accessing Microsoft 365 and Azure services. Those incidents were often traced to changes within the network’s managed environment. As a result, Microsoft has drastically reduced its early telecom ambitions.

Several pundits now say that AI will significantly strengthen the business case for cloud networking by enabling more efficient resource management, advanced predictive analytics, improved security, and automation, ultimately leading to cost savings, better performance, and faster innovation for businesses utilizing cloud infrastructure.

“AI is already a significant traffic driver, and AI traffic growth is accelerating,” wrote analyst Brian Washburn in a market research report for Omdia (owned by Informa). “As AI traffic adds to and substitutes conventional applications, conventional traffic year-over-year growth slows. Omdia forecasts that in 2026–30, global conventional (non-AI) traffic will be about 18% CAGR [compound annual growth rate].”

Omdia forecasts 2031 as “the crossover point where global AI network traffic exceeds conventional traffic.”

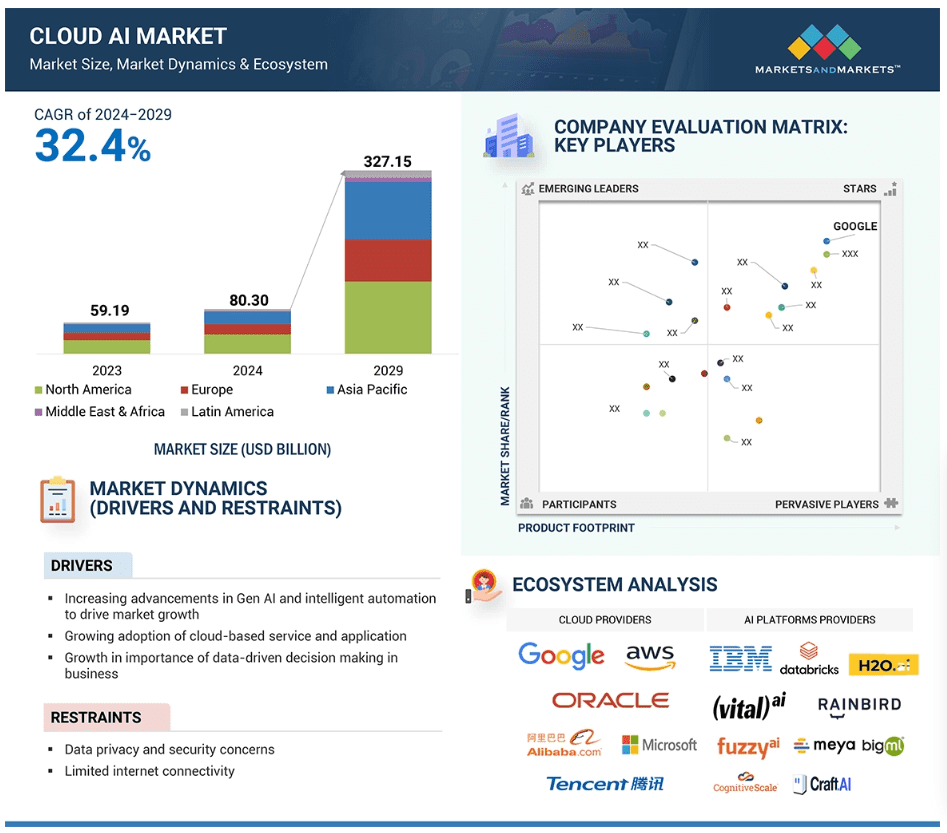

Markets & Markets forecasts the global cloud AI market (which includes cloud AI networking) will grow at a CAGR of 32.4% from 2024 to 2029.

AI is said to enhance cloud networking in these ways:

- Optimized resource allocation: AI algorithms can analyze real-time data to dynamically adjust cloud resources like compute power and storage based on demand, minimizing unnecessary costs.
- Predictive maintenance: By analyzing network patterns, AI can identify potential issues before they occur, allowing for proactive maintenance and preventing downtime.
- Enhanced security: AI can detect and respond to cyber threats in real time through anomaly detection and behavioral analysis, improving overall network security.
- Intelligent routing: AI can optimize network traffic flow by dynamically routing data packets to the most efficient paths, improving network performance.
- Automated network management: AI can automate routine network management tasks, freeing up IT staff to focus on more strategic initiatives.
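Several of the items above rely on anomaly detection. As a rough illustration only (not how any vendor actually implements it), the sketch below flags latency samples that sit more than three standard deviations from the average of a trailing window, a rolling z-score. It is a plain statistical rule standing in for the ML models vendors describe; the function name, window size, threshold, and latency values are all hypothetical.

```python
from statistics import mean, stdev


def flag_anomalies(samples, window=10, threshold=3.0):
    """Return indices of samples that deviate from the mean of the
    preceding `window` samples by more than `threshold` standard
    deviations (a rolling z-score). Requires window >= 2."""
    anomalies = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Perfectly flat history: any change at all is a deviation.
            if samples[i] != mu:
                anomalies.append(i)
            continue
        if abs(samples[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical per-second round-trip latencies (ms) on a single link.
latency_ms = [20, 21, 19, 20, 22, 21, 20, 19, 21, 20, 20, 21, 95, 20, 21]
print(flag_anomalies(latency_ms))  # [12]
```

A real system would need more than this: the spike at index 12 inflates the standard deviation of the next ten windows, so a second, smaller spike right after it could go unflagged.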

The pitch is that AI will enable businesses to leverage the full potential of cloud networking by providing a more intelligent, adaptable, and cost-effective solution. Well, that remains to be seen. Google’s new global industry lead for telecom, Angelo Libertucci, told Light Reading:

“Now enter AI,” he continued. “With AI … I really have a power to do some amazing things, like enrich customer experiences, automate my network, feed the network data into my customer experience virtual agents. There’s a lot I can do with AI. It changes the business case that we’ve been running.”

“Before AI, the business case was maybe based on certain criteria. With AI, it changes the criteria. And it helps accelerate that move [to the cloud and to the edge],” he explained. “So, I think that work is ongoing, and with AI it’ll actually be accelerated. But we still have work to do with both the carriers and, especially, the network equipment manufacturers.”

Google Cloud last week announced several new AI-focused agreements with companies such as Amdocs, Bell Canada, Deutsche Telekom, Telus and Vodafone Italy.

As IEEE Techblog reported here last week, Deutsche Telekom is using Google Cloud’s Gemini 2.0 in Vertex AI to develop a network AI agent called RAN Guardian. That AI agent can “analyze network behavior, detect performance issues, and implement corrective actions to improve network reliability and customer experience,” according to the companies.

And, of course, there’s all the buzz over AI RAN, and we plan to cover expected MWC 2025 announcements in that space next week.

https://www.lightreading.com/cloud/google-cloud-doubles-down-on-mwc
