AI

Analysis: Nvidia’s rumored new 6G AI-RAN – likely features/functions and industry impact

Executive Summary:

According to Light Reading, Nvidia is working on a GPU combo chip that would sit directly in the 6G radio unit [1.], extending its AI-RAN push from baseband/server into the radio itself. It’s reported to be a more hardware-integrated, sub-100W embedded design rather than just GPU acceleration in centralized RAN compute.

Note 1. 6G/IMT 2030 Radio Interface Technologies (RITs) have yet to be defined, let alone specified by 3GPP or ITU-R WP5D. They won’t be solidified until the end of 2030 so any specific silicon design won’t be completed until then or 2031!

……………………………………………………………………………………………………………………………………………………….

Light Reading’s headline frames it as a “radical new AI-RAN plan and they wrote that “the move was confirmed by knowledgeable sources, with Nvidia saying GPUs in more advanced radios will become “essential” in future. It marks a dramatic new development in the GPU giant’s “AI-RAN” strategy.”

If accurate, this would be a notable shift for Nvidia, because it would let them influence the whole RAN stack, not just centralized compute. That could matter for performance, power efficiency, and AI-native functions such as sensing, spectrum optimization, and real-time signal processing. Nvidia’s broader 6G messaging already emphasizes AI-native wireless, integrated sensing and communications, and spectrum agility as core themes.

The unconfirmed report fits Nvidia’s existing telecom roadmap rather than appearing out of nowhere. Nvidia has already announced an AI-native wireless stack for 6G with partners including Cisco, MITRE, Booz Allen, ODC, and T-Mobile, and it has promoted AI-RAN as a way to combine connectivity, computing, and sensing on one platform. It also aligns with the company’s recent partnership with Nokia, where Nvidia introduced the ARC-Pro 6G-ready accelerated computing platform and described it as a software-upgradable path from 5G-Advanced to 6G. That makes the rumored radio-chip move look like a vertical extension of the same strategy.

For wireless network operators, a radio-unit chip from Nvidia would be significant only if it improves cost, power, or flexibility versus incumbent RU silicon. The practical test will be whether it can deliver enough RF, baseband, and AI function integration to justify another architecture layer at the edge. It would also intensify competition in the radio-access supply chain and reinforce the trend toward AI-native, software-defined RANs. It also suggests Nvidia wants to shape not only the compute layer but the physical radio layer of 6G networks.

Possible AI Silicon Features and Functions:

Nvidia would most likely add AI-for-RAN features into radio silicon first, because those map directly to signal processing and link adaptation rather than to generic “AI at the edge.” Nvidia’s own AI-RAN materials emphasize embedding AI/ML into the radio signal-processing layer to improve spectral efficiency, coverage, capacity, and performance. Here are a few likely AI features/functions for the rumored 6G AI Nvidia super chip:

-

Neural channel estimation and equalization, to infer cleaner channel state from noisy RF observations and improve link reliability. Nvidia’s open-source Aerial release specifically calls out advanced neural models for channel estimation.

-

Real-time beam management, including beam selection, beam tracking, and beam refinement for massive MIMO and mmWave/upper-midband deployments. These are natural AI-RAN use cases because they depend on fast adaptation to changing propagation conditions.

-

Spectrum agility and interference mitigation, such as identifying jammed or congested resource blocks and dynamically avoiding them. NVIDIA and partners have already described spectrum agility applications that freeze only affected frequencies while keeping the rest of the system online.

-

Dynamic resource scheduling, using learned traffic and channel patterns to allocate PRBs, power, and compute more efficiently in real time. Nvidia describes AI-RAN as improving spectral efficiency and dynamic traffic handling through AI.

-

Integrated sensing and communications support, where the radio helps detect objects, motion, or environmental context in parallel with communication. Nvidia has already highlighted ISAC-style applications with camera/RF fusion and object tracking.

-

Edge inference hooks, letting the RU expose real-time PHY data to AI applications or a dApp-style framework. Nvidia’s open-source Aerial stack says third-party apps can access physical-layer data through secure APIs and modify RAN behavior in real time.

-

Self-optimization and closed-loop control, where the radio silicon learns local conditions and continuously retunes thresholds, coding, MCS selection, and precoding policies. That fits Nvidia’s broader framing of AI-native networks as software-defined and continuously adaptable.

The most plausible first wave is not a fully autonomous “AI radio,” but a hybrid RU chip that accelerates selected PHY functions and exposes telemetry/data paths to the rest of the AI-RAN stack. Nvidia’s current messaging emphasizes software-defined infrastructure, deterministic performance, and layered AI-RAN capabilities rather than replacing the entire RAN with a black-box model.

The real differentiator would be whether Nvidia can combine RF signal processing with its GPU/CUDA ecosystem, so the same platform handles channel learning, inference, and orchestration across RU/DU/CU tiers. That would let operators optimize for spectral efficiency and OPEX while still keeping a software-upgrade path to 6G. Radio electronics is constrained by power, latency, determinism, and certification, so Nvidia would need to prove these AI features help without destabilizing PHY timing. That is why the likely starting point is assistive AI inside the signal chain, not a fully learned end-to-end radio.

Image Credit: Nvidia

…………………………………………………………………………………………………………………………………………………………………………………………………………..

Competitive Analysis:

Nvidia’s reported move into a 6G radio-unit chip is most threatening to Marvell and Qualcomm at the silicon layer, while it is more of a strategic architecture challenge to Nokia and Ericsson at the system level. The immediate effect is less about a single chip and more about Nvidia trying to pull compute, connectivity, and AI deeper into the RAN value chain

Qualcomm is the closest direct competitor if Nvidia is trying to put silicon into the radio or near-radio layer. Qualcomm already has a Layer 1 strategy that combines silicon and software in SmartNIC/server-adjacent form factors, so Nvidia would be moving into a space where Qualcomm has both telecom credibility and established IP.

The risk for Qualcomm is that Nvidia can use its AI brand, CUDA ecosystem, and hyperscale relationships to redefine what “performance” means in RAN silicon, especially if AI-native functions become a buying criterion. The counterpoint is that Qualcomm still has a strong edge in wireless-specific silicon integration and standards heritage, which matters if the 6G radio path remains RF- and modem-centric.

Nokia looks less exposed in the short term because it is already partnering with Nvidia rather than treating it as a pure adversary. Nvidia and Nokia have publicly framed their relationship as an AI-native 5G-Advanced/6G platform effort, and Nokia says it will add NVIDIA-powered commercial AI-RAN products to its RAN portfolio.

Nonetheless, a Nvidia radio-chip push could still compress Nokia’s differentiation over time if more of the RAN stack becomes software-defined and GPU-centric. The strategic question is whether Nokia remains the integrator and operator-facing systems vendor, or whether Nvidia gradually becomes the architectural center of gravity.

Ericsson is the most structurally interesting case because it sits at the high end of global RAN share and has been more cautious about Nvidia as a Layer 1 option. Light Reading notes Ericsson is currently dismissive of Nvidia as a Layer 1 choice, even while the broader ecosystem explores AI-RAN collaboration.

For Ericsson, the threat is not immediate revenue loss from a single chip; it is erosion of the traditional assumption that RAN leadership comes from proprietary radio and baseband stacks. If Nvidia can make AI-native RAN a default design paradigm, Ericsson may be forced to defend its software and systems value rather than simply its box-selling model.

Samsung Electronics contacted Light Reading after their story was published to point out that it also works with AMD as a chip partner. “Samsung supports full Layer 1 (L1) processing using Intel’s telco CPUs (e.g., Xeon 6 Granite Rapids) and lookaside accelerator approach and in addition has successfully demonstrated full L1 processing on AMD’s CPUs without relying on dedicated L1 accelerators,” a Samsung spokesperson said via email.

Marvell is the most exposed chip supplier in this story because its telecom position is more concentrated in custom Layer 1 silicon. Light Reading specifically points out that Marvell is a critical supplier to Nokia in Layer 1, which makes a Nvidia radio-chip effort a direct substitution threat in portions of the stack.

If Nvidia succeeds, Marvell faces a two-sided squeeze: loss of design wins in telecom silicon and a narrative shift toward AI-native programmable platforms that favor Nvidia’s broader ecosystem. Marvell’s defense is that telecom operators still care about power, latency, and deterministic functionality, areas where custom silicon can remain more efficient than a generalized AI-compute approach.

…………………………………………………………………………………………………………………………………………………………………………

Summary Table:

| Company | Impact level | Why |

|---|---|---|

| Qualcomm | High | Direct silicon adjacency and overlapping Layer 1 ambitions. |

| Marvell | High | Telecom custom-silicon exposure, especially Layer 1. |

| Ericsson | Medium | Strategic and architectural threat more than immediate chip displacement. |

| Nokia | Medium to low near term | Partnered with Nvidia, so risk is more about future dependence and stack control. |

Source: Perplexity.ai

…………………………………………………………………………………………………………………………………………………………………………

Conclusions:

It’s unknown whether Nvidia’s rumored radio chip becomes a product, a reference design, or just an extension of its AI-RAN platform. If it ships, watch for operator trials, power-envelope disclosures, and whether it targets RU integration, DU acceleration, or a hybrid AI-RAN endpoint. If it stays at the partnership/reference-design level, the market impact will be more narrative than revenue-relevant.

Another unanswered question is whether Nokia and Ericsson keep treating Nvidia as a collaborator while preserving their own Physical layer control, or whether they start to see Nvidia as a platform owner in the making. That boundary will determine whether this is a tactical ecosystem play or the beginning of a deeper industry reset.

…………………………………………………………………………………………………………………………………………………………………………

References:

https://www.lightreading.com/6g/nvidia-has-a-radical-new-ai-ran-plan-a-6g-radio-unit-chip

https://www.lightreading.com/6g/analyst-insight-6g-coming-into-focus

https://www.nvidia.com/en-us/industries/telecommunications/ai-ran/

RAN Silicon Rethink- Part II; vRAN and General-Purpose Compute

Orange, Nokia, Nvidia, and Intel debate: ASICs vs. GPUs vs. General-Purpose CPUs for RAN Baseband Processing

RAN silicon rethink – from purpose built products & ASICs to general purpose processors or GPUs for vRAN & AI RAN

Dell’Oro: Analysis of the Nokia-NVIDIA-partnership on AI RAN

Nvidia pays $1 billion for a stake in Nokia to collaborate on AI networking solutions

Inside Nokia’s new AI Networking Innovation Lab

Analysis: Nvidia’s $2 billion investment in Marvell; NVLink Fusion ecosystem & RAN vendor silicon strategy

Marvell shrinking share of the RAN custom silicon market & acquisition of XConn Technologies for AI data center connectivity

Network X Americas: AT&T and Comcast reveal huge AI impact on network operations

Echoing a recent Cisco report, telecom leaders at the Network X Americas conference (held in Irving, TX last week) noted that AI is fundamentally shifting traffic patterns while having a very positive impact on network operations. With billions of connected sensors and devices (like autonomous vehicles generating 20GB of data per day), operators are forced to prioritize uplink capacity and low latency over traditional consumer downlink traffic.

AT&T’s network CTO, Yigal Elbaz, cited the robo-taxi as a bellwether for how AI is affecting network traffic. Each Waymo vehicle generates about 20 gigabytes of data per day, roughly 30 times the amount a typical mobile user consumes. Most of that traffic flows from the car to the cloud. “Every other week,” Elbaz noted, “a new flavor of a frontier AI model drops on us.”

“We already have about 700,000 changes on a daily basis in our network made by AI,” said Elbaz, noting that AT&T has built a proprietary foundation AI model because standard large language models (LLMs) don’t understand KPIs, network alarms or fiber deployment specifics. He cited a 20-25% cost reduction and 12-15% better results than general-purpose models.

In his keynote speech, Comcast EVP and Chief Network Officer Elad Nafshi described 200 edge compute centers capable of self-healing 77% of network events. He touted AI chipsets close enough to customers’ homes to pinpoint outside plant faults with 99.2% precision, and a partnership with Nvidia to push that edge platform further.

Nafshi highlighted the gap in network provider promises vs delivery with a hypothetical small-business use case example. A pizza shop operator, could materially change workflow and productivity if the service provider delivered an AI-enabled concierge—built on a task-optimized small language model—to manage order intake and customer interaction. In that scenario, the network evolves from a passive access pipe into an application-aware platform that augments business operations. The concept is credible from a technical standpoint, but remains largely theoretical until operators can effectively reach and educate SMB customers who still perceive connectivity as a fixed monthly expense.

Both AT&T and Comcast Israeli executives said this was more than modernization and discussed the changes in what a network does. The network is now a platform, not a pipe. Today’s network learns, adapts and increasingly acts on behalf of its customers. But I can’t help but wonder if the customers know… or if that network value will ever trickle down to the customers who need it most.

In a keynote panel session titled, ” Convergence in action – Competing, scaling and winning in the AI-driven connectivity market,” Josh Goodell, AT&T’s VP of Broadband and Converged Product Development, framed the company’s objective as becoming “the greatest simplifier of our customers’ lives” while instilling “connectivity confidence.” That positioning is notable for a sector that has historically under-communicated its value proposition beyond basic service metrics.

The broader industry narrative appears to be shifting. Historically, go-to-market strategies emphasized throughput benchmarks and promotional pricing. As Omdia’s Ruth Brown (panel session moderator) observed, packaging has been largely defensive, optimized around billing constructs rather than differentiated user experience. The emerging model instead centers on networks that operate contextually and autonomously—delivering value in ways that are largely invisible to the end user.

Derek Peterson, CTO of Boingo Wireless, articulated a parallel issue in venue networks, describing the “stadium problem.” Operators dimension infrastructure for peak ingress and then underutilize that capacity once users are inside the venue. The architectural question is no longer solely about capacity provisioning, but about service-layer innovation on top of that capacity. At Petco Park, Boingo leveraged existing network assets to enable pre-entry commerce, driving incremental revenue before fans pass through the gates. The infrastructure was not the constraint; the limiting factor was identifying and executing on higher-order use cases.

A similar disconnect persists in the industry’s framing of the digital divide. AT&T’s John Stankey and others have suggested the gap is nearing closure, citing expanded fiber footprints and fixed wireless access. While coverage metrics have improved, the divide has never been purely a function of infrastructure availability. Adoption is equally constrained by affordability and, critically, by perceived value. If connectivity continues to be positioned as a commoditized utility, the most economically vulnerable segments—those with the greatest need for digital enablement—remain the least likely to engage.

This is particularly relevant in an AI-driven economy. The users and small enterprises that could benefit most from intelligent, network-delivered services are often those least exposed to the evolving capabilities of the platform. The industry risks over-indexing on measurable deployment milestones while under-communicating the functional value of next-generation networks.

The Network X keynotes underscored that the technical roadmap is largely in place. Network operators are advancing toward networks capable of real-time traffic learning, proactive cybersecurity at the edge, and highly personalized in-home connectivity experiences. These capabilities represent a more compelling value proposition than traditional service tier comparisons.

However, the central challenge remains go-to-market execution. The industry has demonstrated that it can architect and deploy these capabilities at scale. It has yet to establish a clear, effective framework for articulating that value to end users and enterprises in a way that drives adoption.

As a final observation, the broader telecom ecosystem—illustrated by developments such as autonomous vehicle platforms—already depends on AI-enabled, highly distributed network intelligence. While the underlying infrastructure is incrementally aligning with these requirements, the industry dialogue around its broader economic and societal implications remains underdeveloped.

References:

Cisco report: Agentic AI to reshape WAN traffic, AI inference will be ~25% of total traffic by 2035

Will the wave of AI generated user-to/from-network traffic increase spectacularly as Cisco and Nokia predict?

Telecom operators investing in Agentic AI while Self Organizing Network AI market set for rapid growth

Analysis: Cisco, HPE/Juniper, and Nvidia network equipment for AI data centers

Cisco CEO sees great potential in AI data center connectivity, silicon, optics, and optical systems

The Financial Trap of Autonomous Networks: Scaling Agentic AI in the Telecom Core

Ericsson integrates Agentic AI into its NetCloud platform for self healing and autonomous 5G private networks

STL Partners webinar: Agentic AI needed for RAN autonomy & efficiency

Nokia to showcase agentic AI network slicing; Ericsson partners with Ookla to measure 5G network slicing performance

Agentic AI and the Future of Communications for Autonomous Vehicles (V2X)

Telecom data centers must be redesigned for the AI era with rack scale architectures, enhanced power & cooling requirements

Is the “far edge” a bridge to far to cross for AI inferencing? What about “Distributed AI Grids”?

T-Mobile US announces new broadband wireless and fiber targets, 5G-A with agentic AI and live voice call translation

Intel and AI chip startup SambaNova partner; SN50 AI inferencing chip max speed said to be 5X faster than competitive AI chips

CES 2025: Intel announces edge compute processors with AI inferencing capabilities

Cisco report: Agentic AI to reshape WAN traffic, AI inference will be ~25% of total traffic by 2035

Executive Summary:

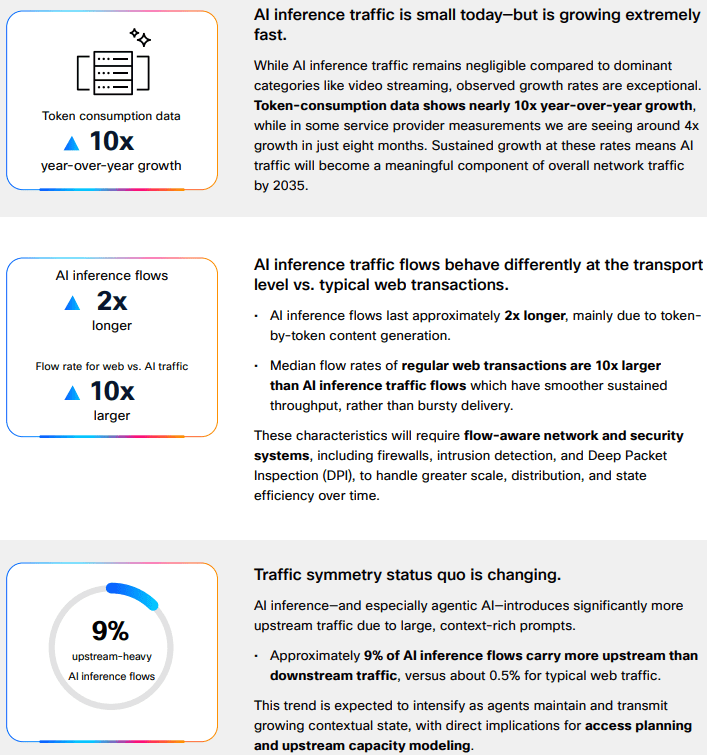

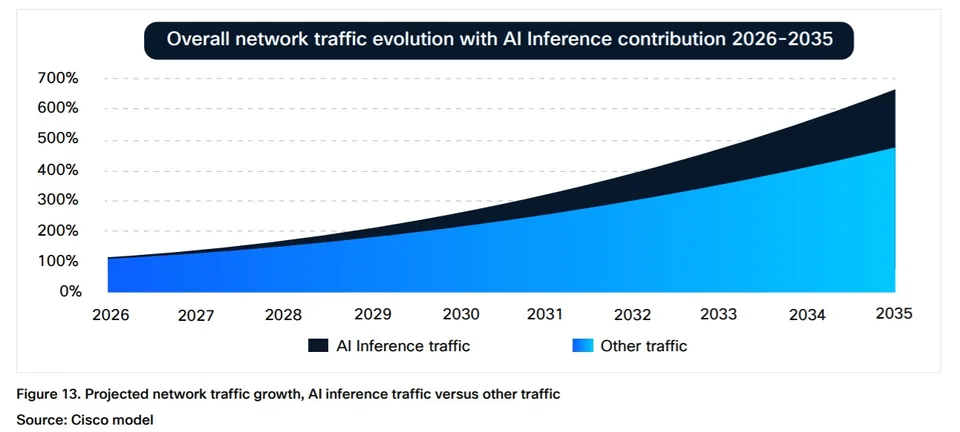

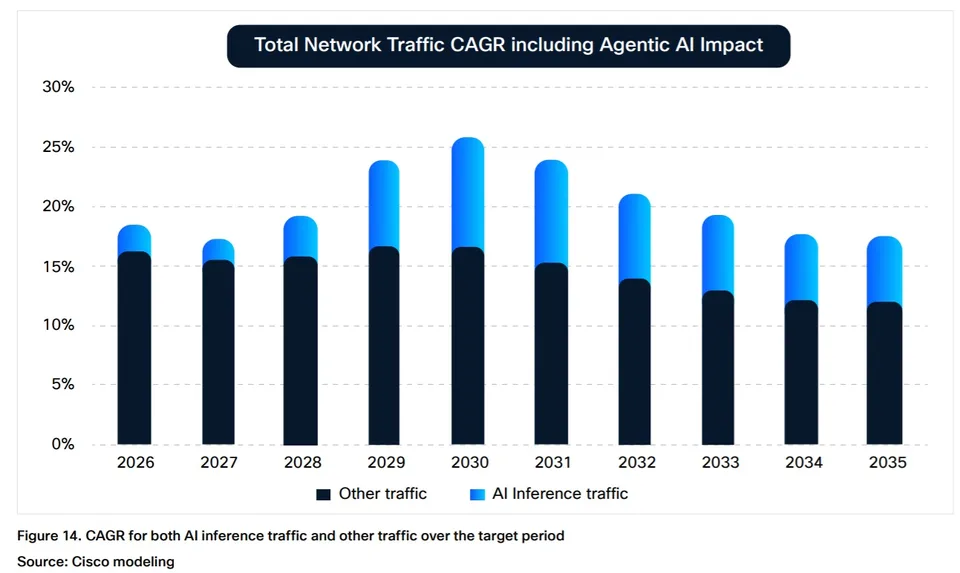

Consumer-driven AI traffic [1.] currently represents a marginal share of aggregate Internet traffic. However, accelerating adoption of agentic AI is expected to materially reshape traffic composition over the next decade. In its “AI Impact on Wide Area Networks” report, Cisco projects that AI will emerge as the dominant driver of network traffic growth. As consumer AI adoption approaches “near-universal usage,” AI and agentic AI are forecast to increase consumer-driven network traffic by approximately 6.6× by the mid-2030s (see chart below).

Cisco estimates that this AI expansion will account for roughly 63% of incremental traffic growth relative to non-AI scenarios. The study focuses specifically on WAN implications, rather than data center or GPU infrastructure, and provides guidance on network design and capacity planning. Methodologically, the report integrates real-world traffic observations (via Cisco Crosswork Assurance User Experience), third-party industry datasets, and controlled laboratory evaluations of AI agents to characterize how AI-generated traffic diverges from conventional web traffic patterns.

Token-consumption data shows nearly 10x year-over-year growth, while in some service provider measurements Cisco is seeing ~4x growth in just eight months. Sustained growth at these rates means AI traffic will become a meaningful component of overall network traffic by 2035.

Note 1. Consumer AI traffic has a few defining technical traits: it is still dominated by short text-based exchanges, but it is becoming more stateful, more upstream-heavy, and more latency-sensitive as users move from simple prompts to agentic workflows and multimodal interactions. Today’s consumer AI traffic is still overwhelmingly text-oriented, which is one reason the aggregate bandwidth impact remains modest despite rapid adoption. Comcast’s network observation is a useful real-world proxy: 97.1% of AI traffic was text-based, while images accounted for 2.6% and video only 0.3%. The key technical implication is that current traffic volumes are often limited more by conversation frequency and session behavior than by very large payloads, though that changes quickly as users adopt image, audio, and video generation.

Although AI inference traffic is currently “negligible” relative to dominant categories such as video streaming, Cisco projects it will comprise approximately 25% of total network traffic by 2035 (see chart below). At that point, AI traffic is expected to represent a “meaningful component” of overall network load. Importantly, AI-generated traffic exhibits distinct characteristics: inference flows are approximately twice the duration of typical web transactions, demonstrate higher upstream bandwidth demand, and operate at “software speed” rather than human interaction rates.

The emergence of AI agents as “power users” further amplifies these dynamics. Cisco notes that agent-executed tasks can generate up to 450% more traffic per task compared to human-driven interactions. This shift is expected to drive operator adoption of “flow-aware network and security systems” as traffic patterns become increasingly machine-driven and less predictable.

Cisco’s broader framing is that AI traffic “isn’t just adding traffic,” but is changing the shape of traffic, with inference flows running about twice as long as typical web transactions and, in some cases, generating up to 450% more traffic per task when an agent executes the workload. AI inference sessions tend to hold resources longer, create more sustained flows, and push operators to think in terms of flow-aware behavior rather than only peak-throughput sizing. Cisco also notes that about 9% of AI inference flows carry more upstream than downstream traffic, versus about 0.5% for typical web traffic, which is a meaningful shift for access and broadband networks. Cisco reports that approximately 9% of AI inference flows are upstream-dominant, compared to roughly 0.5% for traditional web traffic, with this divergence expected to widen alongside increased agentic AI utilization. In parallel, latency sensitivity is anticipated to become a more critical performance parameter for AI-driven applications.

Latency and symmetry:

AI traffic is also more sensitive to latency than many ordinary consumer web transactions because the user experience is often conversational and interactive, with the expectation of near-immediate turn-taking. Cisco describes AI inference as operating at “software speed” rather than human speed, which means small delays can be more noticeable and operationally important. At the same time, upstream demand becomes more significant because prompts, context, attachments, and agent-generated actions can increase return-path traffic, especially as multimodal inputs and agentic tool use expand.

Multimodal growth:

The biggest step-up in technical impact comes when consumer AI shifts from text-only prompting to multimodal generation and agent-driven workflows. In those cases, each task can involve multiple model calls, retrieval steps, tool invocations, and richer media payloads, which expands both flow count and bytes per session. Cisco’s study suggests that this is why AI traffic will increasingly require “flow-aware network and security systems,” because the traffic profile is not just larger, but structurally different from conventional browsing.

Infrastructure Implications:

Telecom infrastructure is becoming “increasingly intertwined with hyperscale infrastructure, not because operators are leading AI investment, but because they are becoming part of the ecosystem that supports it,” analyst firm MTN Consulting said in an April 27th research note. “Demand for optical transport, data-center interconnect, and edge infrastructure is rising as telecom networks carry growing volumes of cloud and AI-driven traffic,” the firm said.

“AI network traffic is already reshaping infrastructure needs. What we are seeing is clear: AI isn’t just adding traffic. It’s changing the shape of traffic,” Javier Antich, principal product management engineer in the CTO office of Cisco’s provider connectivity group, and Gurudatt Shenoy, SVP, product management, provider connectivity, explained in this blog post.

These shifts are beginning to influence access network evolution. Fiber networks already provide relatively symmetric throughput and low latency, while cable operators are advancing similar capabilities through DOCSIS upgrades. Mid-split and high-split architectures increase upstream spectrum allocation, enabling more balanced capacity profiles. Concurrently, Tier 1 operators such as Comcast and Charter Communications are introducing low-latency enhancements within DOCSIS networks.

Operational data reflects early-stage impacts. Comcast Chief Network Officer Elad Nafshi noted at the Cable Next-Gen event in March that approximately 97.1% of AI traffic on Comcast’s network remains text-based, with images accounting for 2.6% and video just 0.3%, indicating that bandwidth-intensive multimodal AI traffic has yet to scale materially.

Network design impact:

For broadband and access networks, the immediate engineering issues are upstream traffic capacity, queue behavior, and latency consistency rather than raw total throughput alone. Symmetry upgrades (such as DOCSIS mid-split and high-split for MSOs), along with low-latency capabilities, are relevant because consumer AI creates more return-path pressure and more time-sensitive sessions. In other words, the challenge is not simply to carry more bytes; it is to carry more interactive sessions with predictable performance, especially as multimodal and agentic usage scales.

………………………………………………………………………………………………………………………………………………………………………………………………………….

References:

Will the wave of AI generated user-to/from-network traffic increase spectacularly as Cisco and Nokia predict?

Telecom operators investing in Agentic AI while Self Organizing Network AI market set for rapid growth

Analysis: Cisco, HPE/Juniper, and Nvidia network equipment for AI data centers

Cisco CEO sees great potential in AI data center connectivity, silicon, optics, and optical systems

The Financial Trap of Autonomous Networks: Scaling Agentic AI in the Telecom Core

Ericsson integrates Agentic AI into its NetCloud platform for self healing and autonomous 5G private networks

STL Partners webinar: Agentic AI needed for RAN autonomy & efficiency

Nokia to showcase agentic AI network slicing; Ericsson partners with Ookla to measure 5G network slicing performance

Agentic AI and the Future of Communications for Autonomous Vehicles (V2X)

Telecom data centers must be redesigned for the AI era with rack scale architectures, enhanced power & cooling requirements

Is the “far edge” a bridge to far to cross for AI inferencing? What about “Distributed AI Grids”?

T-Mobile US announces new broadband wireless and fiber targets, 5G-A with agentic AI and live voice call translation

Intel and AI chip startup SambaNova partner; SN50 AI inferencing chip max speed said to be 5X faster than competitive AI chips

CES 2025: Intel announces edge compute processors with AI inferencing capabilities

Inside Nokia’s new AI Networking Innovation Lab

- Silicon & Compute: Collaborating with AMD to optimize enterprise AI workloads alongside Nokia data center switches.

- Testing & Infrastructure: Partnering with Keysight Technologies to emulate workloads across Ultra Ethernet Consortium (UEC) and RoCEv2 transports.

- Hardware & Servers: Integrating high-performance platforms from Lenovo and Supermicro.

- Data Storage & Cloud: Working with Weka and cloud builders like Nscale to eliminate storage bottlenecks during heavy computational training.

Nokia’s AI Networking Innovation Lab is built upon three fundamental pillars: Technology Innovation, Ecosystem Collaboration, and Validation. Image credit: Nokia

………………………………………………………………………………………………………………….

Technology Innovation: The lab provides a dedicated space for AI partners to experiment with next-gen solutions across the entire networking stack – driving emerging standards forward with pioneering approaches to new protocols, switching silicon, congestion control, real-time telemetry, and automation.

“Partnering with Nokia in the AI Networking Innovation Lab has enabled us to benchmark and optimize AI networks under real-world conditions…Together, we are helping accelerate AI network adoption by giving operators and hyperscalers the validated insights needed for confident, large-scale deployment.”

Ecosystem Collaboration: True progress depends on a strong ecosystem of technology providers – silicon manufacturers, GPU developers, system, storage and test vendors, and cloud platforms – that work together to create highly-compatible AI-ready solutions. This facilitates joint testing for interoperability, improves integration, and ensures roadmaps are aligned across different hardware, software, and orchestration layers.

Travis Karr, Corporate Vice President, HPC and Sovereign AI at AMD believes customer collaboration and an open ecosystem are fundamental to accelerating AI innovation:

“By co-developing solutions with partners, such as Nokia in their AI networking innovation lab, we ensure our AMD enterprise AI solutions are tested with Nokia data center switches on real-world workloads and network demands. An open, standards-driven approach empowers customers to integrate seamlessly across heterogeneous environments, avoiding lock-in and fostering industry-wide advancement in AI.”

Validation: This positions the lab as the testing ground for Nokia Validated Designs, where customers and partners rigorously validate multi-vendor data center architectures under authentic AI training and inference workloads. By testing failure scenarios, congestion behavior, and operational automation, the lab turns NVDs into proven, deployable solutions — enabling predictable performance, faster deployment, and reduced operational complexity and risk for organizations navigating the AI era.

Arno van Huyssteen, Vice President of Global Telecommunications for Nscale:

“Nokia is a strategic networking partner for Nscale as we build towards AI Grid, and the engineering rigour behind their Validated Designs reflects the kind of innovation needed to enable next-generation AI infrastructure. The depth of hardware, software and failure testing behind those blueprints is what will give operators the confidence to deploy complex AI environments faster, with fewer integration risks and less operational disruption. We’re excited to collaborate in the AI Networking Innovation Lab to help push the boundaries of AI-native networking and validate the next generation of solutions before they reach production.”

A primary focal point inside the lab is managing data center congestion. Unlike traditional cloud traffic, back-end AI networks feature high-density data synchronization across massive GPU clusters. The lab uses advanced automation, AIOps, and lossless Ethernet solutions—such as the Nokia 7220 IXR-H6 switches—to handle these intense uplink and synchronization demands safely.

The AI Networking Innovation Lab supports Nokia’s broader strategy to accelerate the next era of AI-driven connectivity. As demand for AI infrastructure continues to grow, data center networking has become one of the most critical foundations of the global AI ecosystem. Through this investment, Nokia is strengthening its capabilities in AI and cloud infrastructure while advancing its vision of AI-native networking.

Rudy Hoebeke, Vice President of Software Product Management at Nokia:

“The launch of Nokia’s AI Networking Innovation Lab marks a major milestone in our commitment to drive the next era of AI-native connectivity. As the industry continues to evolve with solutions like scale-across and AI-Grid, this lab is poised to accelerate AI networking technology that will not only support but optimize these emerging industry offerings. This center gives our customers and partners early access to new technologies, deeper collaboration with the world’s leading AI ecosystem players, and the confidence that their networks are validated under more realistic AI conditions. By accelerating innovation and reducing deployment risks, we’re enabling the industry to deliver faster, more reliable, and more sustainable AI experiences to people and businesses everywhere.”

………………………………………………………………………………………………………………………

References:

Analysis: Nokia’s strong growth in Optical Networks and AI network infrastructure

Orange, Nokia, Nvidia, and Intel debate: ASICs vs. GPUs vs. General-Purpose CPUs for RAN Baseband Processing

Nokia’s AI Applications Study: “Physical AI” may require RAN redesign to support high‑volume, low‑latency uplink traffic

Australia’s NBN and Nokia demonstrate multi-generation optical technologies concurrently over existing FTTP infrastructure

Nokia to showcase agentic AI network slicing; Ericsson partners with Ookla to measure 5G network slicing performance

Tampnet to expand 5G offshore connectivity in the Gulf of Mexico using Nokia AirScale 5G radios

Dell’Oro: Analysis of the Nokia-NVIDIA-partnership on AI RAN

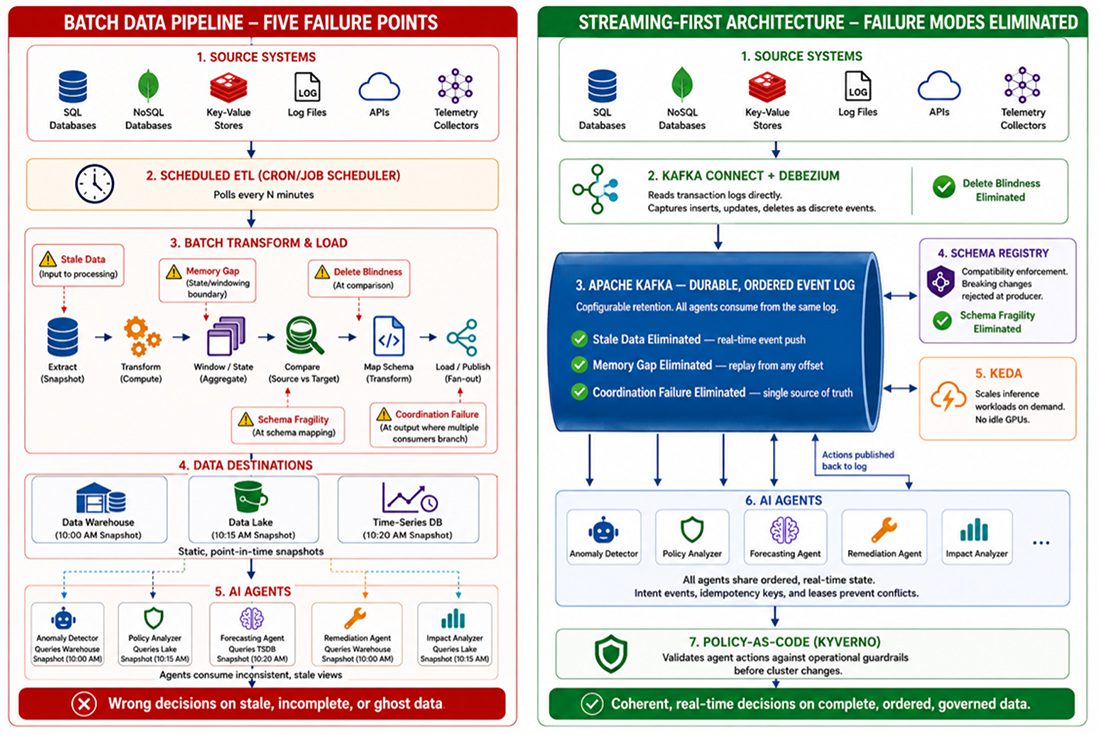

Why Batch Pipelines Break AI Agents: The Case For Streaming-First Network Operations

By Shazia Hasnie, Ph.D, editorial review by IEEE Techblog team member Sridhar Talari Rajagopal

Abstract:

The adoption of AI agents in network operations has exposed a critical architectural gap. Most enterprise data pipelines were designed for dashboards and reporting, not autonomous decision-making. When AI agents consume data from batch-oriented pipelines, five distinct failure modes emerge: stale data, memory gaps, delete blindness, schema fragility, and coordination failure. This article examines each failure mode, explains the underlying mechanism, and proposes architectural remedies grounded in streaming-first design principles. It also connects each technical failure to measurable business outcomes—extended downtime, recurring incidents, compliance exposure, silent decision degradation, and cascading impact. The result is both a diagnostic framework for I&O leaders and a financial argument for treating streaming data infrastructure as the prerequisite for autonomous operations.

Introduction: The Data Foundation Gap

Artificial intelligence is reshaping network operations. AI agents promise to detect anomalies, diagnose root causes, and execute remediation faster than human engineers. The industry has focused attention on models, GPUs, and orchestration frameworks. The data layer remains largely unexamined.

This is a critical oversight. Most enterprise data pipelines were built for human consumers. They serve dashboards, weekly reports, and historical analysis. Humans tolerate latency. Humans bring context. Humans notice when something looks wrong.

AI agents require something fundamentally different. They need real-time context. They need historical state. They need accurate representations of current reality. When these requirements are not met, agents do not complain. They act—on incomplete information, with incorrect assumptions, producing wrong outcomes.

The gap between what batch pipelines deliver and what agents require creates failure modes that most teams do not see until an agent makes the wrong decision. Recent analysis has identified the economic dimensions of this gap [1], while industry resources have begun documenting the specific failure patterns that arise when batch processing meets autonomous agents [6]. This article extends that work by identifying five distinct failure modes and proposing a streaming-first architectural response.

FIVE FAILURE MODES: ANATOMY OF BATCH-TO-AGENT MISMATCH

The following five failure modes represent the specific ways batch data pipelines undermine autonomous network operations. Each is examined through its mechanism—how the batch pipeline architecture produces the failure—its operational consequence, and the streaming-first architectural remedy that eliminates it. Together, they form a diagnostic taxonomy for any I&O team evaluating whether their data foundation is ready for Agentic AI.

Failure Mode 1: Stale Data

Mechanism: Batch telemetry pipelines poll, collect, and process data in cycles. Data is extracted on a schedule, transformed in bulk, and loaded into a destination—a warehouse, data lake, time-series database, or feature store that holds a static, point-in-time snapshot of the source. Between cycles, the pipeline holds no current state. An AI agent that spins up between cycles receives a snapshot of the past.

Consequence: The agent diagnoses an outage using telemetry from five minutes ago. The network state has changed during that interval. Routes have shifted. Traffic has been redirected. Thus, the agent’s diagnosis is based on a reality that no longer exists. Remediation actions applied to a past state can worsen the current incident. The agent becomes a liability rather than an asset. Industry documentation confirms that AI agents require continuous data freshness to function correctly [5].

Architectural Remedy: Streaming telemetry replaces cyclical polling with continuous event push. Data flows from source to consumer in real time, ingested directly into the streaming platform’s durable event log [2]. The agent consumes from a live stream, not a stale snapshot. Context acquisition takes milliseconds. The cognitive loop remains intact. This is not an add-on to the batch pipeline. It is a structural replacement of the ingestion layer.

Failure Mode 2: Memory Gap

Mechanism: Batch pipelines deliver windows of data—the last hour, the last day, the last processing cycle. They do not preserve the sequence of events that led to the current moment. Historical context is stripped away with each new extract. The pipeline knows what happened. It does not know what happened before.

Consequence: An agent responding to an interface flap cannot answer the most basic diagnostic question: has this happened before? It cannot correlate the current event with the three similar events that occurred in the preceding 24 hours. It cannot detect the pattern that would reveal a degrading optical module. Every incident appears isolated. Pattern recognition—the core value proposition of AI-driven operations—is structurally impossible. The distinction between streaming and batch architectures for these use cases has been well-documented [4].

Architectural Remedy: A durable event log with configurable retention serves as the agent’s memory [2]. Unlike a batch window, which discards history with each new extract, the event log preserves the ordered sequence of all events within the retention period. The agent seeks backward in the log on startup and replays the preceding window of telemetry. Pattern detection across time becomes native to the architecture. This is not a separate cache layered on top. It is the storage layer itself—immutable, ordered, and built for event replay from any offset.

Failure Mode 3: Delete Blindness

Mechanism: Batch pipeline’s Extract, Transform, Load (ETL) processes compare snapshots of source data. They do not watch the database transaction log. They identify what exists at two points in time and process the difference. When a record is deleted from the source system, the pipeline has no way of distinguishing between a row that was deleted and a row that was simply omitted due to extraction error, filtering logic, or schema mismatch. The absence of a row is not an event. It is a gap. Batch pipelines are not designed to interpret gaps as meaningful signals. The record simply vanishes from the next extract. The downstream consumer—an AI agent or any other system—has no way of knowing the record ever existed.

Consequence: The agent queries the downstream data store and finds no record for a deactivated account, a revoked certificate, or a cancelled change order. It cannot distinguish between “never existed” and “was deleted,” so it treats the absence as neutral.

The agent makes decisions on ghosts—data that no longer exists in source systems. In access control scenarios, this is not an operational error. It is a security incident. This specific failure mode has been identified in analyses of batch processing limitations for AI agents [6].

Architectural Remedy: Change data capture (CDC), implemented through Kafka Connect with Debezium connectors, reads the database transaction log directly [2], [8]. Debezium provides CDC source connectors for MySQL, PostgreSQL, MongoDB, SQL Server, and other databases — capturing inserts, updates, and deletes as discrete events with explicit operation types by tailing the database’s native transaction log. Nothing is invisible to the pipeline. The streaming architecture knows not only what exists but what ceased to exist. This is not an ETL workaround with soft-delete flags. It is a structural capability of the integration layer, converting database changes into first-class events the moment they occur.

Failure Mode 4: Schema Fragility

Mechanism: Source database schemas change over time. Columns are renamed, added, deprecated, or re-typed. Batch pipelines are configured for a specific schema at extraction time. When the source schema changes, the pipeline responds in one of two ways. It fails silently and drops the affected field from every subsequent extract. Or it fails loudly and stops processing entirely.

Silent failure is the more dangerous outcome. The pipeline continues delivering data. The consumer has no indication that a critical field is missing.

Consequence: The agent continues operating without a critical data input. It makes decisions with incomplete information. It has no awareness that its reasoning is compromised. The wrong decisions accumulate. By the time the missing field is discovered—often through an operational failure rather than a monitoring alert—the cost of remediation includes auditing and correcting every decision made during the degradation window.

Architectural Remedy: A schema registry with compatibility enforcement validates schema changes before they propagate to downstream consumers [2]. Streaming platforms can enforce backward and forward compatibility rules at the producer level. A breaking schema change is rejected before any data is published. The pipeline fails loudly and immediately. This is not a documentation standard or a code review checklist. It is a structural governance layer embedded in the streaming architecture itself, preventing silent field loss at the point of ingestion.

Failure Mode 5: Coordination Failure

Mechanism: When multiple AI agents operate on batch-derived data, each agent consumes a separate, potentially inconsistent snapshot. Agent A receives data from the 10:00 AM extract. Agent B receives data from the 10:15 AM extract. The extracts differ. Each agent holds a different version of reality. There is no shared, ordered log of events that all agents consume.

Consequence: Two agents respond to the same cascading failure. Agent A identifies a BGP routing issue and begins rerouting traffic. Agent B identifies a DNS resolution failure and begins modifying name server configurations. Neither agent knows the other acted. The redundant changes compete. The conflicting configurations create new instability. The original incident expands rather than resolves. What began as a single point of failure becomes a cascade that erodes trust in autonomous operations.

Architectural Remedy: A shared, ordered event log serves as a single source of truth for all agents in the system. Every agent consumes from the same log. Actions taken by one agent are published back to the log as events, immediately visible to all others [7]. Coordination becomes native to the architecture.

Visibility alone, however, does not prevent conflicting actions. Two agents may observe the same anomaly and both initiate remediation before either’s action becomes visible on the log. In practice, this is addressed through complementary mechanisms layered on the same event-driven model: action intent events that signal an agent is about to act, giving others a window to defer; idempotency keys that prevent duplicate remediation from causing harm; and lightweight leases for resources that should only be modified by one agent at a time. These mechanisms do not require a central coordinator. They are published to the same log, consumed by the same agents, and enforced through the same ordered stream.

This is not a separate orchestration layer or message bus bolted onto the side. It is the core of the streaming platform—a unified, ordered, multi-consumer event stream that provides both the shared state and the coordination primitives that eliminate the inconsistent snapshots batch architectures produce by default.

Batch-to-Streaming Reference Architecture — Five Failure Modes and Their Architectural Remedies

THE UNIFIED DIAGNOSTIC FRAMEWORK

The five failure modes translate into a practical audit that I&O leaders can apply to their own infrastructure. Each question corresponds to a specific architectural requirement.

The Five-Question Audit

- Can the data pipeline deliver real-time context to an agent the moment it wakes up? If not, the system is vulnerable to stale data failures.

- Can the agent access the preceding window of telemetry to detect patterns across events? If not, the system is vulnerable to memory gap failures.

- Does the pipeline capture deletes as explicit events with operation types? If not, the system is vulnerable to delete blindness.

- Does the pipeline detect schema changes before they propagate to downstream consumers? If not, the system is vulnerable to schema fragility.

- Do all agents share a single, ordered view of events with visibility into each other’s actions? If not, the system is vulnerable to coordination failure.

A negative answer to any one of these questions signals a data foundation that is not ready for autonomous operations. The model is not the bottleneck. The GPUs are not the bottleneck. The telemetry pipeline is.

THE MIGRATION PATH: FROM BATCH TO STREAMING-FIRST

Adopting a streaming-first architecture does not require abandoning existing batch investments overnight. For most organizations, the transition follows a coexistence model: streaming pipelines are introduced alongside batch pipelines, not as an immediate replacement.

The practical starting point is to identify the highest-value agent—the one whose decisions carry the greatest operational or financial consequence—and convert its data pipeline first. This agent is typically the one where stale data, memory gaps, or coordination failures have produced measurable incidents. Converting this single pipeline to streaming telemetry with a durable event log delivers a targeted operational improvement while the rest of the batch estate continues to function.

From there, adoption expands incrementally. Each additional agent is migrated as operational experience with the streaming platform grows. Teams develop competence in offset management, schema governance through the registry, and backpressure handling while batch pipelines continue to serve lower-priority consumers. The streaming and batch estates coexist for a transition period measured in months, not days.

This incremental approach also reveals where streaming delivers the greatest marginal benefit. Not every data flow requires real-time treatment. Dashboards fed by hourly batch extracts may serve their purpose indefinitely. The streaming investment should be directed at the pipelines that feed autonomous agents—the flows where the five failure modes carry real operational consequence. The goal is not to stream everything. It is to stream the right things first.

THE BUSINESS IMPACT: FROM TECHNICAL FAILURE TO FINANCIAL CONSEQUENCE

Technical failures in the data pipeline do not remain technical. They cascade into business outcomes that appear on budget reviews, SLA reports, and board presentations. Each failure mode carries a distinct financial consequence.

Stale Data → Extended Downtime

An agent diagnosing from stale telemetry makes incorrect decisions. Remediation applied to a past state can worsen the current incident. Mean Time to Resolution increases. For revenue-generating services, every minute of extended downtime translates to lost revenue and SLA penalty accrual.

Consider an illustrative model: a Tier-1 service provider processing $50M in customer transactions per hour, 5-minute stale-data induced misdiagnosis that extends an outage by 15 minutes represents $12.5M in direct revenue loss—not counting SLA penalties, regulatory scrutiny, or reputational harm. The cost of a single such incident can exceed the annual investment in the streaming infrastructure that would have prevented it. If even a portion of such incidents are eliminated by replacing the batch pipeline feeding the diagnostic agent with a streaming backbone, the infrastructure investment is recovered in a single avoided outage.

Memory Gap → Recurring Incidents

An agent without historical context cannot recognize chronic conditions. A flapping interface, a memory leak, or a degrading optical module triggers the same alert repeatedly. Each occurrence consumes GPU inference cycles. Each occurrence generates a ticket. Each occurrence may require human escalation. The cumulative cost of a single undiagnosed chronic issue, multiplied across an enterprise network over a year, represents operational expenditure that a stateful agent could eliminate.

Delete Blindness → Compliance and Security Exposure

An agent acting on deleted records makes authorization decisions based on invalid state. A deactivated account granted access. A revoked certificate treated as valid. In regulated industries, these errors are compliance violations with defined financial penalties and reporting obligations. The cost of a single access control error caused by ghost data can exceed the annual cost of the streaming infrastructure that would have prevented it.

Schema Fragility → Silent Decision Degradation

When a batch pipeline drops a critical field, the agent does not fail loudly. It continues operating with incomplete inputs. Decisions degrade silently. The cost includes not only the direct operational impact but the effort of auditing and correcting every decision made during the degradation window. Silent failure multiplies eventual remediation cost.

Coordination Failure → Cascading Impact

When multiple agents act on inconsistent views of reality, they create new problems. Redundant changes compete. Conflicting configurations destabilize the environment. The original incident expands. The cost includes extended resolution time, additional engineering effort, and eroded trust in autonomous operations. Organizational credibility is a balance sheet item that coordination failure depletes.

The Aggregated View

Taken together, the five failure modes represent a predictable drain on AI investment returns. An organization that deploys expensive GPU infrastructure, fine-tunes capable models, and implements event-driven orchestration [3]—but feeds all of it with a batch data pipeline—has built an autonomous operations capability on a foundation that guarantees suboptimal outcomes. The streaming backbone is not an incremental cost. It is the insurance policy that protects the returns on every other AI infrastructure investment.

CONCLUSION: STREAMING-FIRST AS THE ARCHITECTURAL PREREQUISITE

The five failure modes share a common root cause. Batch data pipelines were designed for human consumers who tolerate latency, bring context, and notice anomalies. AI agents tolerate nothing. They act on what they receive.

Each failure mode is addressable within a unified streaming data architecture. Streaming telemetry solves stale data by replacing cyclical polling with continuous event push. Durable event logs solve memory gaps by preserving the sequence of events with configurable retention, allowing agents to replay history and detect patterns across time. Change data capture—a structural component of the streaming architecture implemented through Kafka Connect and Debezium—solves delete blindness by reading database transaction logs directly, capturing inserts, updates, and deletes as discrete events with explicit operation types. A schema registry with compatibility enforcement solves schema fragility by validating schema changes before they propagate downstream, catching breaking changes at the source rather than discovering them after agent failure. A shared, ordered event log solves coordination failure by serving as a single source of truth that all agents consume, ensuring every agent operates on the same reality with visibility into every other agent’s actions—complemented by intent events, idempotency keys, and lightweight leases that prevent conflicting actions without a central coordinator.

These are not disparate tools. They are structural elements of a single streaming data architecture. Apache Kafka provides the durable, shared event log at the core. Kafka Connect provides the integration framework for change data capture, ingesting database changes as first-class events. Schema Registry provides the compatibility governance layer. Together, they form a complete data foundation where stale data, memory gaps, delete blindness, schema fragility, and coordination failure are eliminated by design—not patched after the fact.

These architectural components eliminate the data-layer failure modes. But real-time data also enables real-time action—and that speed demands an execution-layer governance framework. Policy-as-code engines ensure that agent decisions, even when based on perfect context and full state, are validated against operational guardrails before they become cluster changes. The streaming backbone delivers the context. The policy layer ensures that context is acted upon safely.

This streaming architecture is not an end in itself. It is the data foundation upon which event-driven network operations can be built. While the streaming backbone eliminates the data-layer failure modes, organizations that pair it with event-driven compute unlock an additional dimension of efficiency. When a telemetry event flows through the event log and an anomaly is detected, that same stream can trigger the Kubernetes Event-driven Autoscaling (KEDA) of inference workloads [3]—spinning up the right-sized model at the right moment, on the right context. The streaming backbone delivers the context. Event-driven orchestration delivers the compute. Together, they close the loop from detection to inference, ensuring the agent has both the data and the compute it needs without the waste of always-on infrastructure.

The barrier is not technology. Each of these architectural components is proven, open-source, and deployed in production environments today. The barrier is architectural awareness. Organizations that invest in a streaming-first data architecture will deploy AI agents that deliver on their promise. Organizations that do not will discover these failure modes in production—after the wrong decision is already made.

The streaming data architecture is not a performance upgrade for Agentic AI. It is the architectural prerequisite.

REFERENCES

[1] P. Madduri and A. L. Thakur, “The Financial Trap of Autonomous Networks: Scaling Agentic AI in the Telecom Core,” IEEE ComSoc Technology Blog, April 2026. [Online]. Available: https://techblog.comsoc.org/2026/03/30/the-financial-trap-of-autonomous-networks-scaling-agentic-ai-in-the-telecom-core/

[2] Apache Software Foundation, “Apache Kafka Documentation.” [Online].

Available: https://kafka.apache.org/42/getting-started/introduction/

[3] Cloud Native Computing Foundation, “KEDA: Kubernetes Event-driven Autoscaling.” [Online]. Available: https://keda.sh/

[4] Streamkap, “Streaming ETL vs. Batch ETL: A Decision Framework.” [Online].

Available: https://streamkap.com/resources-and-guides/streaming-etl-vs-batch-etl

[5] Streamkap, “Real-Time vs Batch Data for AI Agents: Why Freshness Matters.” [Online]. Available: https://streamkap.com/resources-and-guides/real-time-vs-batch-data-for-agents

[6] Streamkap, “Why AI Agents Can’t Use Batch Data.” [Online]. Available: https://streamkap.com/resources-and-guides/why-agents-cant-use-batch-data

[7] Redpanda, “Building safe, multi-agent AI systems in Redpanda Agentic Data Plane.” [Online]. Available: https://www.redpanda.com/blog/adp-governed-multi-agent-ai-cloud

[8] Debezium Community, “Debezium: Open-Source Change Data Capture,” Debezium Documentation. [Online]. Available: https://debezium.io/

ABOUT THE AUTHOR

Shazia Hasnie, Ph.D., is VP, Product Strategy and Innovation at Cuber AI, focused on Agentic Network Operations, AI-driven automation, and streaming data architectures. Her work explores the intersection of autonomous systems, cloud-native infrastructure, and the economic models that make AI operations sustainable at scale.

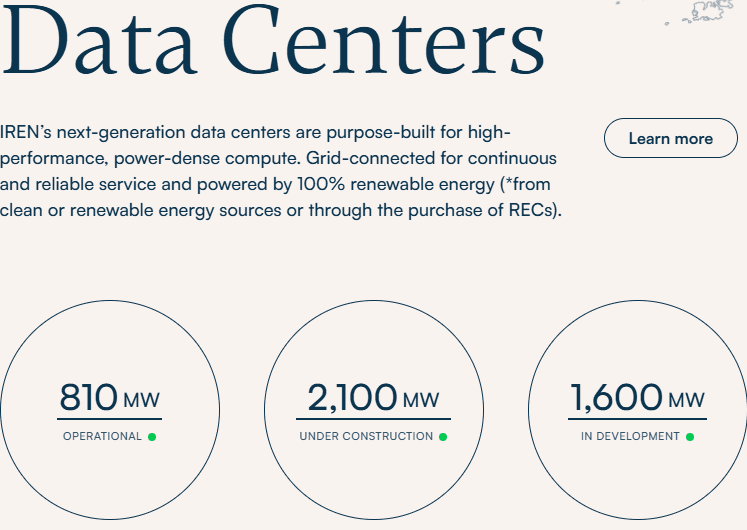

Nvidia strategic partnership with IREN targets 5G Watts AI infrastructure buildout + $2.1B investment option

Nvidia has announced a strategic partnership with cloud AI data center operator IREN [1.] to deploy up to 5G Watts (5GW) of AI infrastructure, driven by a $3.4 billion services contract and a $2.1 billion investment option for Nvidia. This collaboration aims to secure critical, high-density data center capacity for AI workloads while accelerating IREN’s transition into a major AI infrastructure provider. This strategic expansion targets up to 5GW of NVIDIA DSX-aligned AI infrastructure across IREN’s global pipeline. The roadmap centers on the 2GW Sweetwater campus in Texas, positioned to be the flagship deployment of NVIDIA’s DSX factory architecture. This integrated model synergizes NVIDIA’s reference designs with IREN’s core competencies in utility-scale power procurement, site development, and full-stack GPU cloud operations.

“AI factories are becoming foundational infrastructure for the global economy,” said Jensen Huang, founder and CEO of Nvidia. “Deploying these systems at scale requires deep integration across the full stack — compute, networking, software, power and operations. IREN brings the scale and infrastructure expertise to help accelerate the buildout of next-generation AI infrastructure globally. Together, we are building for the age of AI,” he added. Future deployments are expected to focus on IREN’s 2-gigawatt Sweetwater campus in Texas, which the companies expect to serve as a flagship deployment for Nvidia’s DSX architecture.

“This partnership combines NVIDIA’s AI systems and architecture leadership with IREN’s expertise across power, land, data centers, GPU deployment and infrastructure operations,” said Daniel Roberts, cofounder and co-CEO of IREN. “Together, we believe we can accelerate deployment of AI infrastructure and expand access to compute for AI-native and enterprise customers globally.”

References:

China vs U.S.: Race to Generate Power for AI Data Centers as Electricity Demand Soars

Fiber Optic Boost: Corning and Meta in multiyear $6 billion deal to accelerate U.S data center buildout

How will fiber and equipment vendors meet the increased demand for fiber optics in 2026 due to AI data center buildouts?

Big tech spending on AI data centers and infrastructure vs the fiber optic buildout during the dot-com boom (& bust)

Analysis: Cisco, HPE/Juniper, and Nvidia network equipment for AI data centers

Expose: AI is more than a bubble; it’s a data center debt bomb

Can the debt fueling the new wave of AI infrastructure buildouts ever be repaid?

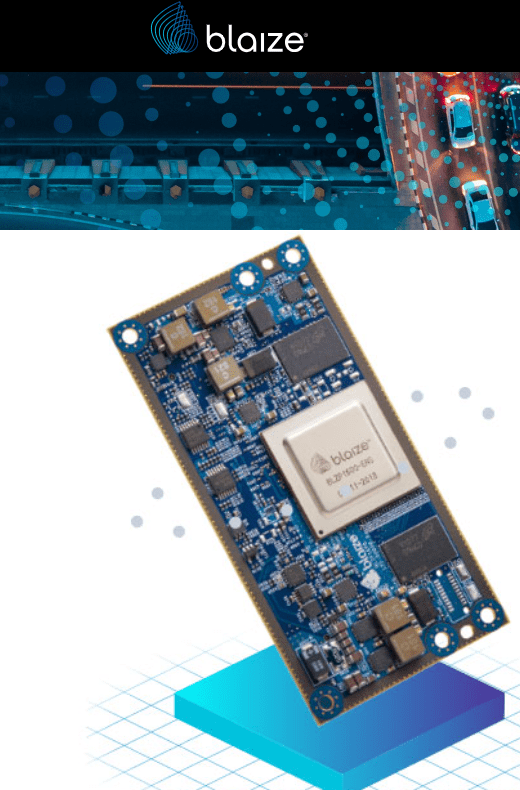

Blaize and Winmate Forge Strategic Partnership to Accelerate Edge AI Integration in Ruggedized Systems

Bridging the Edge Connectivity Gap:

While modern AI architecture has historically favored centralized data centers, mission-critical applications require real-time inference at the edge. For defense personnel in remote locations, maritime operations, or emergency medical responders, reliance on cloud-based processing is often non-viable due to bandwidth constraints and latency requirements.

Eldorado Hills, CA based Blaize Holdings, Inc. and Winmate Inc. (TAIWAN) have announced a Strategic Partnership Agreement aimed at generating approximately $15 million in business during its inaugural year. This collaboration integrates Blaize’s high-performance AI accelerators into Winmate’s industrial-grade ruggedized hardware ecosystem—including UAVs, handhelds, vehicle-mounted computers, and embedded systems—designed for mission-critical reliability in high-stress environments. Both organizations anticipate this agreement to be the foundation of a long-term, multi-year technological synergy.

The partnership addresses the “cloud dependency” bottleneck by leveraging Blaize’s GSP® (Graph Streaming Processor) architecture. These chips are engineered to industrial specifications, enabling sophisticated AI workloads to run locally on the device. When paired with Winmate’s ruggedized chassis—built to withstand extreme thermal fluctuations, high-velocity vibration, and dust ingress—the resulting systems provide high-compute AI capabilities in environments where traditional hardware fails.

- Border security and surveillance: Real-time threat detection and perimeter monitoring

- Mobile command and control: On-site intelligence and situational awareness for field teams

- Drones and unmanned systems: Autonomous navigation and mission execution for UAVs and ground vehicles

- Critical infrastructure: Continuous monitoring and predictive analytics for power, ports, and transportation

- Maritime domain awareness: Vessel tracking and anomaly detection at sea

- Field healthcare: Portable diagnostics and decision support in remote and disaster environments

Deal at a glance:

- First-year revenue: the parties intend to work in good faith to close approximately $15 million in business, expected to scale meaningfully in subsequent years

- Term: Three-year initial term, with automatic renewal

- Next steps: Joint engineering, sales, and marketing execution to bring integrated systems to market, with additional opportunities to be added through follow-on programs

-

- Task-Level Parallelism: The architecture leverages an on-chip hardware scheduler to analyze data dependencies in real-time. It executes deeper layers of a neural network as soon as previous layers produce sufficient intermediate results, minimizing the “idle time” typical of sequential processing.

- Performance-to-Power Ratio: The flagship Blaize 1600 SoC features 16 GSP cores delivering 16 TOPS (Tera Operations Per Second) of AI inference within a conservative 7W power envelope.

- Memory Efficiency: By streaming data through the processor and holding intermediate results in cache, the GSP reduces external DRAM access by up to 50x, which significantly lowers latency and overall system thermal output.

- Unified Development Platform: All hardware is supported by the Blaize Picasso SDK, which allows developers to port models from standard frameworks (like PyTorch or TensorFlow) into a streaming execution format without requiring low-level hardware manual coding.

Image Credit: Blaize Holdings

………………………………………………………………………………………………………………………

- Pathfinder P1600 SOM: This System-on-Module is the primary vehicle for integration into Winmate’s handhelds and drones. It operates as a standalone unit with dual ARM Cortex-A53 processors and integrated MIPI CSI camera interfaces for real-time sensor fusion.

- Mission-Ready Durability: These systems are engineered to meet MIL-STD-810H and IP65+ standards, ensuring that Blaize’s AI silicon remains stable under extreme vibration, thermal shock (operating in sub-zero or high-heat field conditions), and high-velocity impacts.

- Sovereign Edge Computing: By processing sensitive data locally on ruggedized handhelds or vehicle-mounted units, the partnership ensures data sovereignty, preventing critical telemetry or biometric data from ever leaving the device during field operations

“Our customers can’t wait, and they often can’t rely on the cloud. They need AI that runs where the work happens. Winmate makes some of the most capable rugged systems in the industry, and our chips are designed to run AI inside exactly those kinds of devices. This partnership turns a years-long vision into a practical, deployable answer for defense and critical infrastructure operators,” said Dinakar Munagala, CEO of Blaize, Inc.

“Our platforms are deployed on naval vessels, in border outposts, on industrial sites, and in disaster zones – environments where most hardware fails. With Blaize, we can now deliver those same systems with on-device AI built in, giving customers real-time intelligence wherever they operate,” said Ken Lu, Chairman and CEO of Winmate Inc.

-

- Latency: The necessity for near-zero response times in autonomous and diagnostic systems.

- Security: The requirement to process sensitive data locally to mitigate the risks associated with transmitting information over public or compromised networks.

About Blaize, Inc.

Blaize delivers a programmable AI platform, purpose-built for AI inference workloads in real-world environments. Its Hybrid AI architecture combines the Blaize GSP (Graph Streaming Processor) with GPU-based infrastructure, enabling AI inference workloads to run across edge, cloud, and data center. Blaize solutions support computer vision, multimodal AI, and sensor-driven applications across smart cities, industrial automation, telecommunications, retail, logistics, and defense. Blaize is headquartered in El Dorado Hills, California, with a global presence across North America, Europe, the Middle East, and Asia. Visit www.blaize.com or follow us on LinkedIn @blaizeinc.

About Winmate Inc.

Winmate Inc. is a publicly traded global leader in rugged computing systems, delivering industrial-grade platforms – including handhelds, tablets, vehicle-mounted units, panel PCs, and embedded modules – for demanding environments across defense, transportation, energy, healthcare, and industrial markets.

………………………………………………………………………………………………………………………………………………………………………………………………………………………..References:

Is the “far edge” a bridge to far to cross for AI inferencing? What about “Distributed AI Grids”?

Analysis: Edge AI and Qualcomm’s AI Program for Innovators 2026 – APAC for startups to lead in AI innovation

Will “AI at the Edge” transform telecom or be yet another telco monetization failure?

Private 5G networks move to include automation, autonomous systems, edge computing & AI operations

Orange, Nokia, Nvidia, and Intel debate: ASICs vs. GPUs vs. General-Purpose CPUs for RAN Baseband Processing

For Orange CTO Laurent Leboucher, the main attraction of AI today lies in its potential to improve the efficiency of 5G radio access networks (RANs). That helps explain Orange’s recent collaboration with Nokia and Nvidia. Orange already deploys Nokia’s purpose-built 5G network equipment and software at mobile sites in France and other markets. Until recently, it had little obvious need for Nvidia, the U.S. chip making king best known for the graphics processing units (GPUs) used to train large language models. But Nokia and Nvidia became closely aligned last October, when Nvidia took a 3% stake in Nokia as part of a $1 billion investment. Nokia is now developing AI RAN software designed to run on GPUs.

Leboucher’s interest is driven in part by concerns over the cost of custom silicon — the application-specific integrated circuits (ASICs) used in purpose-built 5G networks. “It creates an opportunity to bring a general-purpose chipset instead of an ASIC implementation,” he told Light Reading at last week’s FutureNet World event in London. “I think we could, at some point, benefit from the economies of scale of new chipsets. That could be Nvidia.”

The rationale is much easier to understand than arguments about 5G for autonomous vehicles. Chip manufacturing is already expensive, and both Nokia and Ericsson expect component costs to rise further this year amid relentless AI demand. At the same time, the RAN market remains relatively small and has contracted. According to market research firm Omdia, telco spending fell from $45 billion in 2022 to $35 billion last year and is expected to stay at that level. In that context, it is increasingly difficult to justify designing high-cost chips with limited reuse outside telecom.

Image Credit: Orange

Last year, Nvidia spent about $18.5 billion on research and development, generated nearly $216 billion in revenue, and reported a gross margin of more than 70%. Its financial strength is not in question. If telecom operators can use its GPUs for RAN software, they may face less pressure to secure the long-term economics of 5G and 6G development. That alone could be enough to support the case for Nvidia. The counterarguments are cost and power consumption. By design, custom silicon is optimized for a specific workload and will always outperform a more general-purpose processor at that task. An Nvidia GPU in the RAN could therefore be seen as excessive — like using a crop duster to water a hanging basket.

Leboucher, believes that Nokia and Nvidia are developing something far more compact than a typical data-center deployment. “It is not a Blackwell GPU,” he said, referring to Nvidia’s current hyperscaler-class product line. “I have an understanding it’s something which is a little bit smaller.” One of the first GPU-based products is expected to come on a card that Orange can insert into an existing Nokia AirScale chassis.

He is also interested in replacing traditional RAN algorithms with AI to improve spectral efficiency and overall performance. Through trials with Nokia and Nvidia, Orange wants to determine whether a GPU is actually required to capture the full benefit. “We can completely rethink the way we are doing algorithms today, using AI for the radio Layer 1,” he said, referring to the most compute-intensive part of the RAN software stack. Some of the “AI-RAN” narrative still sounds “a little bit like science fiction,” Leboucher admitted. “But I think there are some very interesting ideas behind that. We want to understand where we are.”

This is not the first time the industry has debated a shift from ASICs to general-purpose processors for RAN equipment. Alongside its purpose-built 5G portfolio, Ericsson already offers cloud RAN products based on Intel CPUs. Samsung is now focused on Intel-based virtual RAN and has recently predicted the end of purpose-built 5G. Even so, cloud and virtual RAN still account for only a small share of live 5G deployments. Huawei and Ericsson, the two largest RAN vendors, remain committed to custom silicon development.

Nvidia’s entry into the market has clearly given Leboucher and his team more to evaluate as RAN technology becomes more sophisticated. “We are introducing new requirements for radio networks, typically for beamforming, and we have to consider the need for quite powerful chipsets,” he said. “Whether the best way to keep going is using ASICs or a general-purpose architecture – I think this is a good time to ask the question. Before, it was too early.”

The answer could shape Orange’s next major RAN decisions. The operator is preparing for what Leboucher describes as a “refresh” of RAN equipment across several countries ahead of the expected 6G launch in 2030. For the first time, he said, Orange will include cloud RAN as a “major option” in its request for proposal.

The concern around Intel as an alternative to Nvidia is its still-fragile financial position. Before December, Intel had been trying to spin off its network and edge group (NEX), which develops RAN chips. Those plans were later shelved, but the company’s net loss widened to about $4.3 billion in the most recent first quarter, from $887 million a year earlier, while revenue rose only 7% year over year to $13.6 billion. Cristina Rodriguez, who had led NEX, left this month to join Coherent, and Intel has not yet named a successor. “The shares jumped 28% in after-hours trading, taking Intel firmly into meme-stock territory,” said Radio Free Mobile analyst Richard Windsor in a blog published after results came out on April 23. “I say meme-stock because there is no other way to describe it when the shares are on a 2026 PER [price-to-earnings ratio] of 137x, and its technology looks obsolete.”

Orange places significant value on separating hardware from software, allowing the same RAN software to run across multiple hardware platforms. Ericsson and Samsung both say the virtual RAN software they have built for Intel CPUs could, with relatively modest changes, be ported to AMD silicon using the same x86 architecture or to Arm-based CPUs.

By contrast, Layer 1 code written for Nvidia GPUs and the CUDA software stack would not be portable to other platforms, according to Ericsson. “I think the main challenge we see with that is we are trying very hard to keep our stack portable, to give hardware options,” Michael Begley, Ericsson’s head of RAN compute, told Light Reading at MWC Barcelona this year. “If you go all in on one, it’s great, but you’re all in on one, and you can’t offer those other options to the operators or the ecosystem.”

Leboucher acknowledges that risk. “The risk of lock-in exists, definitely,” he said. “We really want to stay open. At the same time, we know that benefiting from a very, very large-scale general-purpose architecture should improve the TCO [total cost of ownership]. At the end of the day, it will be a trade-off. But we would welcome an architecture where we have the capacity at some point to decide to swap if we need to swap.”

Nokia’s hope is that much of the Layer 1 software written for Nvidia GPUs will eventually be deployable on other GPU platforms. But Nvidia’s near-monopoly in that segment leaves the industry with few alternatives for now. There is also optimism inside Nokia that GPU-based code could later be adapted for capable CPUs, although Ericsson’s comments suggest that would be much harder. For telecom executives, the choices made over the next couple of years may be pivotal as 6G approaches.

………………………………………………………………………………………………………………………………………………………

References:

https://www.lightreading.com/5g/orange-weighs-nvidia-against-intel-for-5g-chips-ahead-of-new-rfp

RAN Silicon Rethink- Part II; vRAN and General-Purpose Compute

RAN silicon rethink – from purpose built products & ASICs to general purpose processors or GPUs for vRAN & AI RAN

Analysis: Nokia and Marvell partnership to develop 5G RAN silicon technology + other Nokia moves

Analysis: Nvidia’s $2 billion investment in Marvell; NVLink Fusion ecosystem & RAN vendor silicon strategy

Ericsson goes with custom silicon (rather than Nvidia GPUs) for AI RAN

Marvell shrinking share of the RAN custom silicon market & acquisition of XConn Technologies for AI data center connectivity

Custom AI Chips: Powering the next wave of Intelligent Computing

OpenAI and Broadcom in $10B deal to make custom AI chips

Will Google Cloud’s AI and data analytics revenue +TPU IP licensing income offset huge AI CAPEX to produce a decent ROI?

Big Tech AI spending binge results in massive job cuts!

Big Tech AI spending binge results in massive job cuts!

Executive Summary:

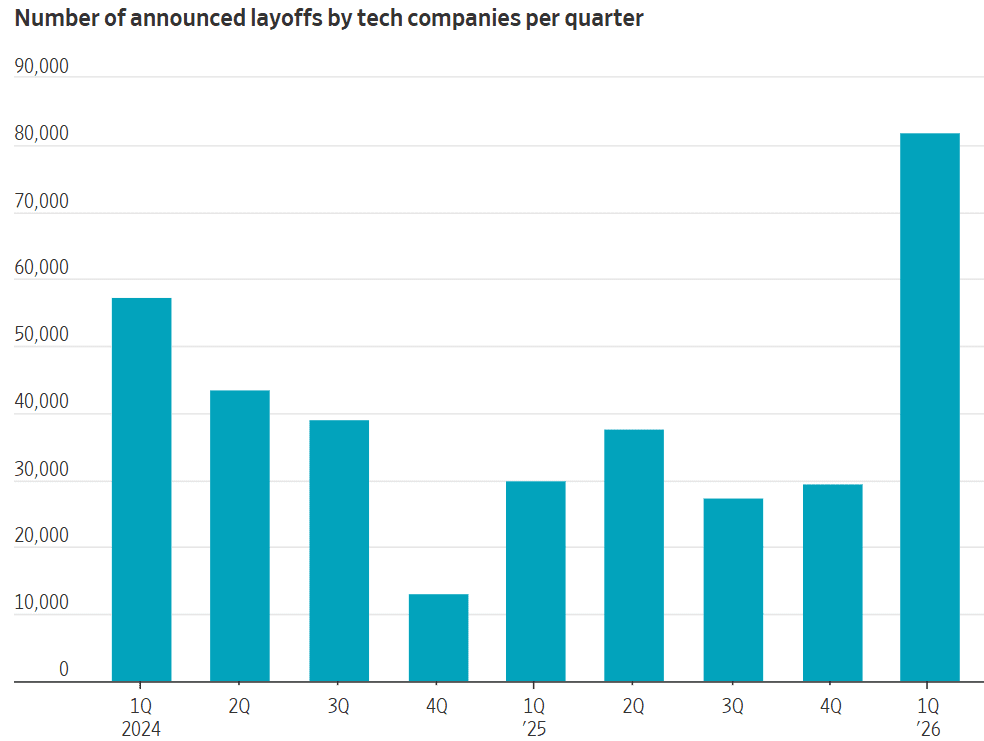

The tech industry is undergoing a massive structural realignment. Hyperscalers, Software as a Service (SaaS) vendors, and telecom network and equipment providers are aggressively slashing workforces to reallocate capital toward massive AI infrastructure investments. Alphabet, Meta, Amazon, and Microsoft are projected to spend a collective $674 billion in 2026—over double their 2024 levels. Most of that spending is AI related.

From the referenced WSJ article: