Author: Alan Weissberger

Ligado Networks and Ubiik to offer private LTE network using Band 54 spectrum at 1670-1675 MHz

U.S. satellite communications service provider Ligado Networks plans to offer a private LTE network using advanced metering infrastructure (AMI) from Taiwan-based Ubiik. Using Band 54 spectrum at 1670-1675 MHz, the private LTE network is intended for the utilities sector and other mission-critical customers. Band 54 is standardized for 3GPP-based cellular technologies and is available contiguously across the US.

Ubiik has had considerable success in Taiwan, including winning a $17 million tender from Taiwan Power Company (Taipower) at the start of 2023. The company has developed a private LTE base station for Band 54 spectrum under the brand goRAN; the 5 MHz block at 1670-1675 MHz, which uses time-division duplexing (TDD) rather than frequency-division duplexing, is presented as a useful private network addition for low-power IoT projects.

On October 6th, Ubiik partnered with Electricity Canada with the aim of contributing to Canada’s clean energy future through developments in wireless connectivity. Ubiik will collaborate with Electricity Canada’s members and partners to deliver pLTE networks for utilities in Canada, using its innovative goRAN™ LTE Base Station and LTE-M end devices that support the 1.8GHz, 900MHz, and 1.4GHz spectrum.

The goRAN™ base station integrates a full-software 3GPP Release 15 Radio Access Network (RAN) optimized for private networks, with multi-carrier standalone NB-IoT support as well as standalone LTE-M in 1.4MHz, 3MHz and 5MHz bandwidths, including VoLTE. It can operate as a Base Station connecting to an external Evolved Packet Core (EPC) via the S1 interface, as well as an Access Point with its built-in EPC and integrated HSS (external HSS via S6a is also supported).

…………………………………………………………………………………………………………………………….

It is a “golden opportunity” for critical industries, said Ubiik. TDD separates the uplink and downlink signals by allocating different time slots in the same frequency band, allowing for asymmetric flow for uplink and downlink transmission. Ligado Networks said: “[It] equips utility users with significant flexibility, as different ratios of uplink versus downlink slots may be used to address requirements of mission-critical applications.”
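To make the slot-ratio point concrete, here is a minimal sketch that tallies uplink and downlink subframes for the seven LTE TDD uplink-downlink configurations defined in 3GPP TS 36.211. The function names are illustrative assumptions, and nothing here is Ubiik or Ligado software; the announcement does not say which configurations the Band 54 base station will use.

```python
# LTE TDD uplink-downlink configurations (3GPP TS 36.211, frame structure type 2).
# Each 10 ms radio frame holds 10 subframes: D = downlink, U = uplink, S = special.
TDD_CONFIGS = {
    0: "DSUUUDSUUU",
    1: "DSUUDDSUUD",
    2: "DSUDDDSUDD",
    3: "DSUUUDDDDD",
    4: "DSUUDDDDDD",
    5: "DSUDDDDDDD",
    6: "DSUUUDSUUD",
}


def subframe_counts(config: int) -> dict:
    """Count downlink, uplink and special subframes in one radio frame."""
    pattern = TDD_CONFIGS[config]
    return {kind: pattern.count(kind) for kind in "DUS"}


def uplink_share(config: int) -> float:
    """Fraction of subframes in a frame that are pure uplink."""
    pattern = TDD_CONFIGS[config]
    return pattern.count("U") / len(pattern)


if __name__ == "__main__":
    # List configurations from most to least uplink-heavy.
    for cfg in sorted(TDD_CONFIGS, key=uplink_share, reverse=True):
        counts = subframe_counts(cfg)
        print(f"config {cfg}: {TDD_CONFIGS[cfg]}  "
              f"UL={counts['U']} DL={counts['D']} S={counts['S']}")
```

An uplink-heavy deployment such as meter monitoring would sit toward the top of that list, while a downlink-heavy one would sit toward the bottom. The special subframe also carries some downlink and uplink symbols, so the counts are only a first approximation of the capacity split.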

A news release said: “The Band 54 goRAN™ LTE Base Station will be particularly useful for utilities deploying private networks which is why the companies plan to showcase a demonstration version of the device during the 2023 Utility Broadband Alliance (UBBA) Summit & Plugfest Event in Minneapolis next week from October 10-12, 2023.”

Sachin Chhibber, chief technology officer at Ligado Networks, emphasized how the announcement represents another building block in the expansion of the ecosystem that uses Band 54 frequencies. He stated: “Ubiik’s goRAN base station is a significant enhancement to the opportunities the band affords to the critical infrastructure industry – especially utility and other enterprise organisations planning private networks… By eliminating the requirement to pair channels for uplink and downlink, we will be able to offer partners the flexibility to use the spectrum exactly how they need, and with greater efficiencies.”

Chhibber reiterated how specific attributes of Band 54 – particularly its Time Division Duplex (TDD) capabilities – equip utility users with significant flexibility, as different ratios of uplink versus downlink slots may be used to address requirements of mission-critical applications. “By eliminating the requirement to pair channels for uplink and downlink, we will be able to offer our partners the flexibility necessary to use the spectrum exactly how they need, and with greater efficiencies,” Chhibber noted. He added that uplink-heavy users such as utilities, which rely on the uplink for monitoring, for example, will be able to deploy tailor-made networks to achieve their priorities.

Tienhaw Peng, chief executive at Ubiik, said: “Given the scarcity of spectrum, being able to secure an optimal 5 MHz slice to build out a private network is a golden opportunity for critical infrastructure customers. Our goRAN base station offers the perfect mix of affordability and ease of deployment combined with the spectral efficiency, interoperability and security brought by LTE. With Ligado, we look forward to providing a solution-in-a-box for building an LTE network – by either utilising a user’s specified core network or one directly built into the base station.”

Peng also explained that the goRAN™ Base Station will integrate with chipsets supporting Band 54 and with a utility-hardened LTE endpoint module currently in development. Ubiik’s recent acquisition of utility networks provider Mimomax Wireless will provide North American utilities with additional expertise in the deployment of multiple large-scale wireless networks.

References:

Ligado Networks teams up with Ubiik to offer US utilities Band-54 private LTE at 1670-1675 MHz

Nokia Bell Labs claims new world record of 800 Gbps for transoceanic optical transmission

Nokia today announced it has set two new world records in submarine optical transmission, both of which will shape the next generation of optical networking equipment.

The first sets a new optical speed record for transoceanic distances. Nokia Bell Labs researchers were able to demonstrate an 800-Gbps data rate at a distance of 7865 km using a single wavelength of light. That distance is two times greater than what current state-of-the-art equipment can transmit at the same capacity and is approximately the geographical distance between Seattle and Tokyo. Nokia Bell Labs achieved this milestone at its optical research testbed in Paris-Saclay, France.

The second record was achieved by both Nokia Bell Labs and Nokia subsidiary Alcatel Submarine Networks (ASN), establishing a net throughput of 41 Tbps over 291 km via a C-band unrepeated transmission system. C-band unrepeated systems are commonly used to connect islands and offshore platforms to each other and the mainland proper. The previous record for these kinds of systems is 35 Tbps over the same distance. Nokia Bell Labs and ASN broke the record at ASN’s research testbed facility, also in Paris-Saclay.

Nokia Bell Labs and ASN presented the scientific findings behind both records on the 4th and 5th of October at the European Conference on Optical Communications (ECOC), held in Glasgow, Scotland.

Making lasers that blink faster:

Nokia Bell Labs and Alcatel Submarine Networks were able to achieve both world records through the innovation of higher-baud-rate technologies. “Baud” measures the number of times per second that an optical laser switches on and off, or “blinks”. Higher baud rates mean higher data throughput and will allow future optical systems to transmit the same capacities per wavelength over far greater distances. In the case of transoceanic systems, these increased baud rates will double the distance at which we could transmit the same amount of capacity, allowing us to efficiently bridge cities on opposite sides of the Atlantic and Pacific oceans. In the case of C-band unrepeated systems, higher baud would allow service providers connecting islands or off-shore platforms to achieve higher capacities with fewer transceivers and without the addition of new frequency bands.
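The link between baud rate and capacity can be sketched with a simple relation: the line rate is the symbol rate times the bits carried per symbol times the number of polarizations, and the net rate is what remains after coding overhead. The snippet below is a rough illustration under stated assumptions. The function names, the 100 and 200 GBd symbol rates and the 25% overhead are hypothetical and are not figures from Nokia's announcement.

```python
def net_bit_rate_gbps(baud_gbd: float, bits_per_symbol: float,
                      polarizations: int = 2, overhead: float = 0.0) -> float:
    """Net data rate in Gbps for one wavelength.

    baud_gbd        symbol rate in gigabaud
    bits_per_symbol bits encoded in each symbol per polarization
    polarizations   1 or 2 (coherent systems commonly use both)
    overhead        fractional coding overhead, e.g. 0.25 for 25%
    """
    line_rate = baud_gbd * bits_per_symbol * polarizations
    return line_rate / (1.0 + overhead)


def bits_per_symbol_needed(target_gbps: float, baud_gbd: float,
                           polarizations: int = 2, overhead: float = 0.0) -> float:
    """Bits per symbol required to hit a target net rate at a given baud rate."""
    return target_gbps * (1.0 + overhead) / (baud_gbd * polarizations)


if __name__ == "__main__":
    # Hypothetical symbol rates, chosen only to show the trend.
    for baud in (100.0, 200.0):
        need = bits_per_symbol_needed(800.0, baud)
        print(f"{baud:.0f} GBd -> {need:.1f} bits/symbol for 800 Gbps")
```

Doubling the symbol rate halves the number of bits each symbol must carry for the same capacity. Simpler modulation generally tolerates more noise, which is one way to read the claim that higher baud rates extend reach at a fixed capacity per wavelength.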

The research behind these two records will have significant impact on the next generation of submarine optical transmission systems. While future deployments of submarine fiber will take advantage of new fiber technologies like multimode and multicore, the existing undersea fiber networks can take advantage of next-generation higher-baud-rate transceivers to boost their performance and increase their long-term viability.

Sylvain Almonacil, Research Engineer at Nokia Bell Labs, said: “With these higher baud rates, we can directly link most of the world’s continents with 800 Gbps of capacity over individual wavelengths. Previously, these distances were inconceivable for that capacity. Furthermore, we’re not resting on our achievement. This world record is the next step toward next-generation Terabit-per-second submarine transmissions over individual wavelengths.”

Hans Bissessur, Unrepeated Systems Group leader at ASN, said: “These research advances show that we can achieve better performance over the existing fiber infrastructure. Whether these optical systems are crisscrossing the world or linking the islands of an archipelago, we can extend their lifespans.”

………………………………………………………………………………………………………………………….

According to TeleGeography, there were an estimated 1.4 million km of submarine cables in service globally at the start of 2023 and that number is rapidly increasing.

Recent highlights include Orange and ASN agreeing in July to construct the new Medusa cable system between multiple locations in North Africa and Southern Europe. In late September, Telecom Egypt agreed to extend Medusa all the way to the Red Sea.

On a slightly smaller scale, early last month, Telecom Italia Sparkle began offering commercial services on a stretch of the Blue cable system, linking Palermo with Genoa to Milan. It is part of the larger Blue and Raman system, being built in partnership with Google. Once completed, the Blue part will connect various locations on the Med – including Greece and Israel in addition to Italy – while the Raman part will connect Jordan, Saudi Arabia, Oman and eventually India.

Resources and additional information:

https://telecoms.com/524184/nokia-bell-labs-makes-submarine-cables-go-blinkin-fast/

Analysys Mason: 40 operational 5G SA networks worldwide; Sub-Sahara Africa dominates new launches

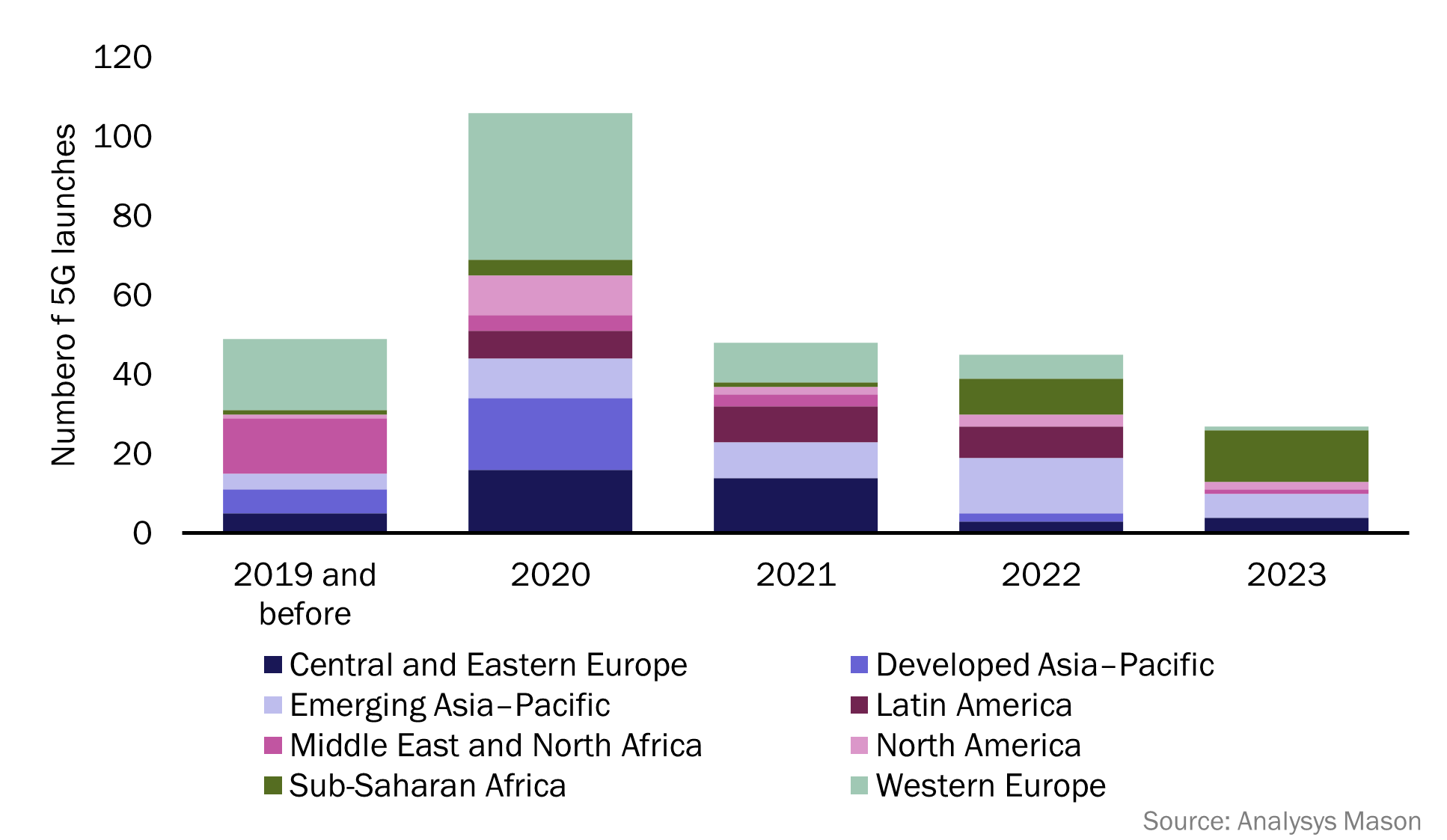

According to the latest edition of Analysys Mason’s 5G deployment tracker, 26 new 5G networks have been commercially launched across 22 countries so far in 2023, with an additional 55 5G networks either in deployment or scheduled for launch later this year.

Sub-Saharan Africa (SSA) has dominated 5G launch figures in 2023, with 13 new launches across 10 countries, accounting for over 48% of 5G launches during the period. Emerging Asia–Pacific (EMAP) has recorded 6 new 5G launches in 2023 so far, while Central and Eastern Europe (CEE) recorded 4. Additionally, North America (NA) recorded 2 new 5G launches, while Western Europe (WE) and the Middle East and North Africa (MENA) each reported one new 5G launch in the same period. 5G standalone (SA) launches for the last 12 months (August 2022–2023) have continued to grow steadily, with 11 new operators commercially launching 5G SA networks. Five of these launches have occurred in 2023, with 3 operators launching in WE and 2 launching in MENA.

The market research firm’s 5G deployment tracker includes 338 entries from 2018 to 1H 2023, with 274 confirmed launches of 5G networks and 40 commercial launches of 5G SA networks, worldwide.
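As a quick arithmetic check on the regional figures, here is a minimal sketch. The counts are taken from the text above, while the variable and function names are illustrative. The six regional counts sum to 27, one more than the headline figure of 26; the "over 48%" share quoted for SSA corresponds to 13 out of 27, whereas 13 out of 26 would be exactly 50%. The source does not explain the difference.

```python
# New 5G launches in 2023 by region, as quoted in the text above.
launches_2023 = {
    "SSA": 13,
    "EMAP": 6,
    "CEE": 4,
    "NA": 2,
    "WE": 1,
    "MENA": 1,
}


def regional_share(region: str, counts: dict) -> float:
    """Share of all listed launches that fall in the given region."""
    return counts[region] / sum(counts.values())


total = sum(launches_2023.values())
ssa_share = regional_share("SSA", launches_2023)

if __name__ == "__main__":
    print(f"Sum of regional launches: {total}")
    print(f"SSA share of that sum: {ssa_share:.1%}")
```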

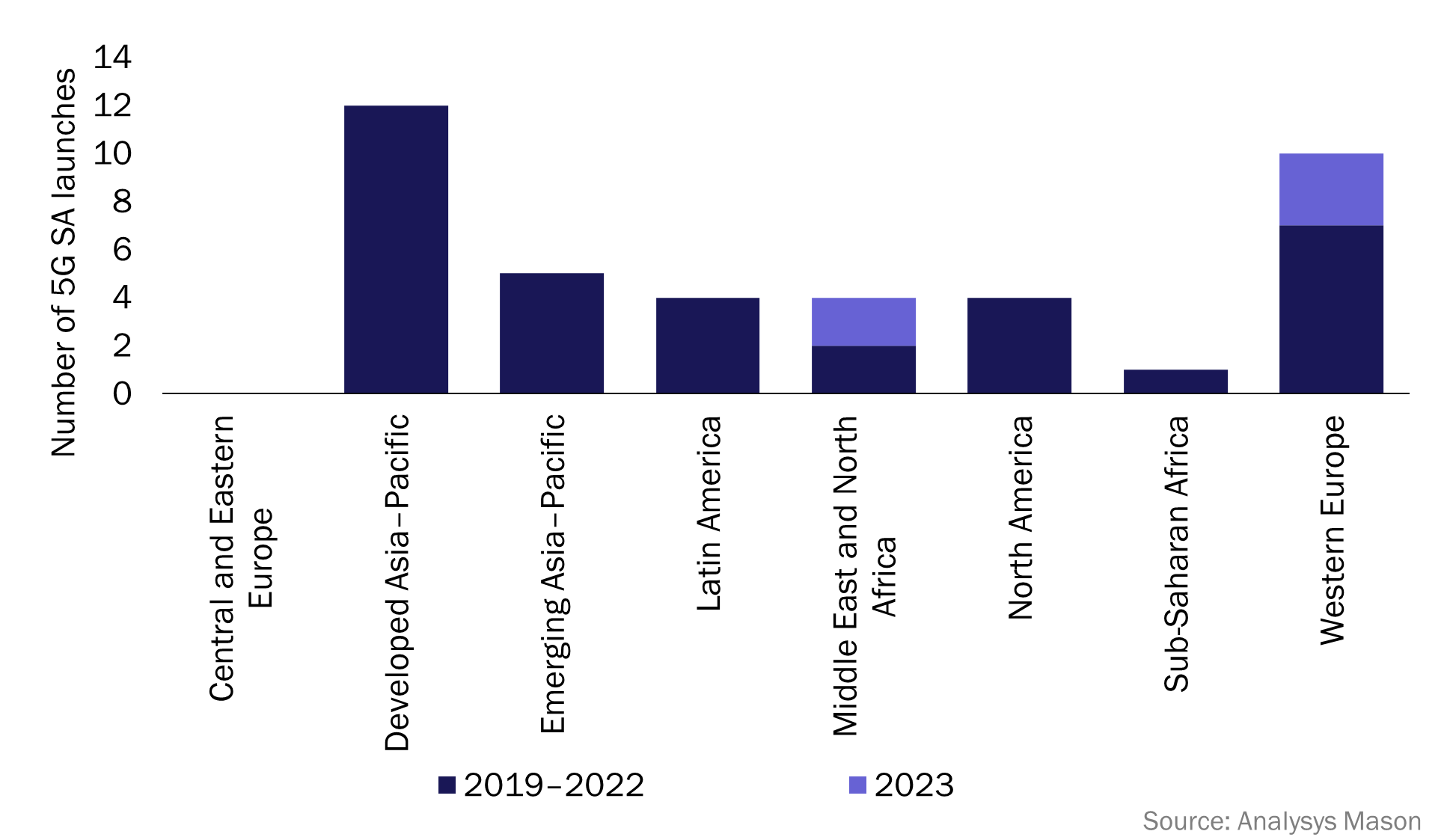

Figure 1: 5G network launches, worldwide, 2019 (and before)–2023

Network operators in SSA have long prioritised investment in 4G networks over 5G. This is due to the lower cost of 4G devices and infrastructure, and the high number of users on legacy networks, such as 2G and 3G, across the region. As a result, operators have prioritised the migration of these users to 4G networks over new 5G deployments. In 2021, 79.8% of all mobile connections in SSA were 2G or 3G connections, and there were only 6 operational 5G networks in the region. This changed in 2022, with operators launching 9 new 5G networks across the region. This number has continued to climb so far in 2023, with a total of 13 new 5G network launches since January.

SSA now accounts for over 48% of all 2023 5G launches, with the region now having more operational 5G networks than MENA, NA, Latin America (LATAM) and developed Asia–Pacific (DVAP). Airtel has launched the most 5G networks in SSA so far in 2023, with the group launching 4 new 5G networks in 4 different countries. These include:

- Kenya: Airtel became the second operator to launch a 5G network in Kenya, following Safaricom’s launch in October 2022. Airtel claims coverage across 370 areas including Mombasa, Nakuru, Nairobi and Kakamega.

- Nigeria: Airtel launched its 5G network in June 2023, with coverage in multiple areas including Abuja, Port Harcourt and Lagos. Airtel is the third operator to launch a 5G network in Nigeria, following MTN (2022) and Mafab (January 2023).

- Uganda: Airtel launched its 5G services in various areas of Kampala in August 2023, one month after MTN launched the first 5G network in Uganda.

- Zambia: Airtel became the second operator to launch a 5G network in Zambia in July 2023, following MTN’s 5G launch in November 2022.

Other notable launches across SSA include:

- French Guiana: Orange Caraibe and SFR Caraibe both launched their 5G networks in 2023 in the 3.5GHz band. These are the first 5G networks in French Guiana.

- The Gambia: QCell became the first operator to launch 5G in The Gambia in June 2023, launching in selected areas of the capital city, Banjul.

- South Africa: Telkom South Africa launched its 5G network in 2023, becoming the fourth operator to launch 5G in South Africa after Rain, MTN and Vodacom.

…………………………………………………………………………………………………………………………………

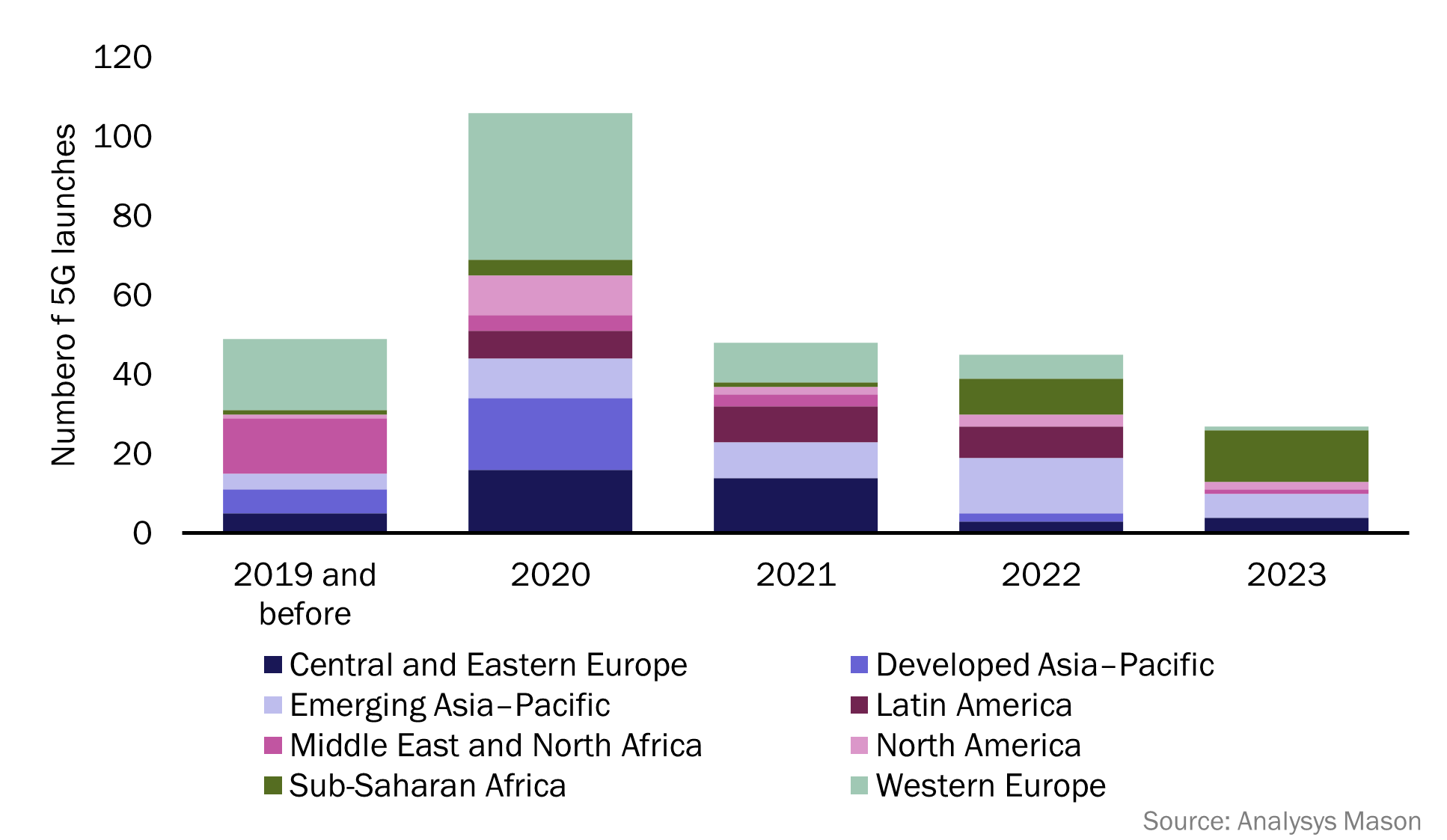

There are now 40 operational 5G SA networks worldwide, spanning 24 countries and 34 different operators. In the previous 12 months (from September 2022 to September 2023) there have been 11 new 5G SA launches, with 5 of these occurring in 2023. These 5 launches were spread across WE (3 new launches) and MENA (2 new launches). More 5G SA launches are expected in 2023, as launch figures have historically peaked in the second half of a calendar year. 5G SA launch figures are expected to accelerate over the next few years, and operators that have already launched 5G SA networks are likely to continue to expand their standalone coverage.

Analysys Mason predicts that by 2024, 5G SA will be the main source of revenue for vendors.

…………………………………………………………………………………………………………………………….

Editor’s Note: We strongly disagree with that 5G SA forecast, and we don’t know whether the “vendors” it refers to are those, like Ericsson, Nokia, Huawei, ZTE, Samsung and NEC, that also provide 5G RAN equipment. Other 5G SA core network vendors don’t make RAN equipment, e.g. Amazon AWS, Microsoft Azure, Cisco, VMware, Parallel Wireless and Mavenir.

In the U.S., only T-Mobile and Dish Network have deployed 5G SA core networks. AT&T and Verizon have been talking the talk about 5G SA core networks but have no commercial deployments. UScellular 5G SA is in test mode. UScellular’s CTO Mike Irizarry said it’s now testing Nokia’s 5G SA core, but doesn’t plan to rush headlong into the SA 5G future.

Also, because there are no specifications for 5G SA core network interoperability or roaming, 5G SA endpoint mobility will be extremely limited.

…………………………………………………………………………………………………………………………….

With three new launches so far in 2023, WE is beginning to compete with DVAP for the total number of 5G SA network launches. DVAP has led the total 5G standalone network launch figures since 2021 (see Figure 2), with 11 new launches in the last 2 years across Australia, Japan, Singapore and South Korea. Western Europe now accounts for 25% of all 5G SA networks worldwide, 5 percentage points less than DVAP which accounts for 30%. All regions have now launched at least one 5G SA network, excluding CEE (see Figure 2).

Figure 2: 5G SA launches, worldwide, 2019–2023

In 2023, notable deployments of standalone networks have included:

- Saudi Arabia: Zain launched the first 5G SA network in Saudi Arabia in March 2023, making Saudi Arabia the third country in MENA to have an operational 5G SA network, after Bahrain and Kuwait.

- Spain: Orange and Telefónica both launched 5G SA networks in 2023. These are the first two 5G SA networks in Spain.

- United Arab Emirates (UAE): E& (formerly Etisalat) launched its 5G SA network in February 2023, becoming the fourth operator to launch 5G SA services within MENA and the first in the UAE.

- United Kingdom: Vodafone launched the first 5G SA network in the UK, in June 2023, with coverage across Cardiff, Glasgow, London and Manchester. Vodafone has branded its 5G SA as ‘5G Ultra’.

References:

https://www.analysysmason.com/research/content/articles/5g-deployment-launches-rma18/

GSA 5G SA Core Network Update Report

ABI Research: Expansion of 5G SA Core Networks key to 5G subscription growth

Counterpoint Research: Ericsson and Nokia lead in 5G SA Core Network Deployments

Tech Mahindra and Microsoft partner to bring cloud-native 5G SA core network to global telcos

Omdia and Ericsson on telco transitioning to cloud native network functions (CNFs) and 5G SA core networks

Dell’Oro: RAN market declines at very fast pace while Mobile Core Network returns to growth in Q2-2023

Dell’Oro: RAN Market to Decline 1% CAGR; Mobile Core Network growth reduced to 1% CAGR

Dell’Oro: Mobile Core Network & MEC revenues to be > $50 billion by 2027

Dell’Oro: Market Forecasts Decreased for Mobile Core Network and Private Wireless RANs

Dell’Oro: Mobile Core Network market driven by 5G SA networks in China

Counterpoint Research – 5G SA Core Deployments Decelerate in H1 2023

UAE network operator “etisalat by e&” achieves 5G mmWave distance milestone

UAE network operator etisalat by e& today claimed the world’s first deployment of 5G mmWave covering more than 10 kilometres, as it highlighted the potential of the range to support fixed wireless access (FWA) and industrial applications over private networks. In a statement, e& explained the pilot used the 26GHz band and delivered high speeds. The test forms part of a push to address demand for mobile networks capable of delivering large amounts of data reliably and securely.

The UAE telco claimed its test demonstrated the network’s ability to uplink heavy video and real-time data transfer with faster speed and lower latency, supporting “industries operating over vast areas.”

Alongside citing opportunities for FWA, the operator highlighted the potential of private networks using the frequencies across various industry verticals, citing healthcare, manufacturing and public safety.

The implementation of 5G mmWave (FR2 only) network capability was carried out as part of etisalat by e&’s vision to deliver state-of-the-art technologies to society. The operator describes it as a global first: a 5G deployment on mmWave at 26GHz, FR2 only, covering more than 10 km at high speeds. The step is aimed at addressing consumer and enterprise demand for a mobile network solution that follows the highest standards of data security and digitalisation and can deliver large amounts of data reliably and securely.

The mmWave spectrum generally refers to frequencies above 24GHz, which can deliver extreme capacity, ultra-high throughput and ultra-low latency, with huge potential in multiple applications for consumers as well as enterprises.
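To give a feel for why 10 km is a long reach at these frequencies, the sketch below applies the standard free-space path loss formula to a 26GHz link. It is a simplified illustration only: it ignores atmospheric absorption, rain fade, antenna gains and obstructions, and the operator has not published its link budget. The 3.5GHz comparison frequency and the function name are assumptions chosen for illustration.

```python
import math


def free_space_path_loss_db(distance_km: float, frequency_ghz: float) -> float:
    """Free-space path loss in dB, with distance in km and frequency in GHz.

    FSPL = 20*log10(d_km) + 20*log10(f_GHz) + 92.45
    """
    return (20.0 * math.log10(distance_km)
            + 20.0 * math.log10(frequency_ghz)
            + 92.45)


if __name__ == "__main__":
    mmwave = free_space_path_loss_db(10.0, 26.0)
    midband = free_space_path_loss_db(10.0, 3.5)  # assumed comparison band
    print(f"26 GHz over 10 km:  {mmwave:.1f} dB")
    print(f"3.5 GHz over 10 km: {midband:.1f} dB")
    print(f"Extra loss at 26 GHz: {mmwave - midband:.1f} dB")
```

Under these idealised assumptions the 26GHz link loses roughly 17 dB more than a 3.5GHz link over the same distance, which has to be recovered through antenna gain, transmit power or a more robust modulation.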

The solution demonstrates the ability of 5G networks to enable uplink heavy video and real-time data transfer scenarios over a specific geographical area, effectively paving the way toward the digital transformation of industries operating over vast areas.

Marwan Bin Shakar, SVP Access Network Development, etisalat by e& said: “This deployment is a commitment to unleashing the full potential of 5G network and pushing the boundaries to redefine the world of connectivity. This is a significant milestone for 5G mmWave, especially that the demand for data has increased exponentially, and this plays a pivotal role in increasing network capacity. Our partnerships with technology leaders has also contributed to setting these benchmarks in the industry and bring advanced solutions to the country making sure we address customer digitalization’s requirements and enabling quicker time to market.”

This achievement will support the use of the 5G network for FWA subscribers, who can enjoy a fiber-like user experience over a wireless network. It is also expected to accelerate the adoption of 5G private network technology in other sectors, such as oil and gas, public safety, healthcare and manufacturing, giving them complete control over their user data through on-premise hosted MEC (multi-access edge computing) and letting them apply their own enterprise data and security policies to data delivered from a private 5G network.

References:

UAE’s “etisalat by e&” announces first software defined quantum satellite network

Important satellite network services to be discussed at WRC 23

Several agenda items for WRC‑23 concern fixed, mobile, broadcasting, and radiodetermination satellite services. ITU–R Study Group 4 is responsible for preparing these agenda items, aiming to ensure efficient use of the radio spectrum and satellite orbit systems and networks.

Non‑geostationary satellite orbit (non‑GSO) systems are one of the top priorities on the WRC‑23 agenda.

First, coexistence must be ensured between non‑GSO and geostationary satellite orbit (GSO) systems, with protection being ensured for both kinds of satellites. This requires accurate calculations of potential interference to and from non‑GSOs, allowing possible modifications to non‑GSO systems to be considered where needed. Improved rules for non‑GSOs should also cover those on orbital tolerances. These will be treated under the conference’s agenda items for satellite services (7A), milestone reporting (7B), and aggregate interference to GSOs (7J), along with a functional description for software tools to determine non‑GSO fixed-satellite service (FSS) system or network conformity (ITU–R Recommendation S.1503).

Satellite operators expect decisions at WRC‑23 to provide maximum flexibility in the use of spectrum allocations for certain purposes.

These include: earth stations in motion (ESIM) in the FSS, under agenda items 1.15 and 1.16; inter-satellite communications in the FSS, item 1.17; and FSS in the existing broadcasting-satellite service (BSS), item 1.19.

WRC‑23 discussions on these topics will aim to allow for more efficient spectrum use than is currently the case.

Amid rapid satellite development in recent years, non‑GSO systems have been deployed on a large scale. At the same time, new high-capacity satellites have gone into geostationary orbit.

On the regulatory side, the addition of a satellite component to the International Mobile Telecommunications (IMT‑2020) ecosystem has enabled satellite usage in cellular networks, along with new satellite services and other innovations.

Member States of the International Telecommunication Union (ITU) are increasingly raising the issue of sustainability, equitable access, and the rational use of GSO and non‑GSO spectrum resources. Resolution 219 of the ITU Plenipotentiary Conference (Bucharest, 2022) reflects these concerns.

WRC‑23 needs to continue giving high priority to establishing equitable access to satellite orbits. This means recognizing the special needs of developing countries, often including geographical challenges.

The development of innovative satellite technologies has now moved significantly ahead of regulations in the use of radio-frequency spectrum and satellite orbits. As this gap continues widening, ITU must find new approaches to keep international satellite regulation timely and relevant for the industry.

Technology is advancing so rapidly that some operators have begun to introduce new satellite technologies using GSO and non‑GSO satellites without waiting for conference decisions to regulate such use. Moreover, national administrations sometimes grant authorization for such uses in the absence of internationally agreed rules.

Concerns are growing about derogations from the ITU Radio Regulations, particularly under 4.4 of Article 4 — which allows national administrations to assign frequencies exceptionally, outside the Table of Frequency Allocations and other treaty requirements, as long as such assignments do not cause harmful interference to any existing radio services.

The conference will consider how to deal with the widespread use of 4.4, for non‑coordinated satellite networks. It should also clarify whether the derogation option under 4.4 should be available for all radio systems, or only non‑commercial systems.

Overall, WRC‑23 must clarify how administrations use the provision, when they have the right to invoke it, and which specific circumstances justify exceptional use of 4.4 on a temporary basis.

The Radio Regulations, containing the rules and regulations for the use of the radio-frequency spectrum and satellite orbits, are updated approximately every four years, in line with ITU’s associated conference cycle.

Perhaps the time has come to think about reducing the number of years between World Radiocommunication Conferences and simplifying the preparatory cycle and associated documentation. One way forward could be to reassess the current Conference Preparatory Meeting (CPM) format and to consider merging the two CPM sessions into one.

Given the rapid growth, transformation and innovation phase the satellite industry is now going through, WRC‑23 should instruct the ITU Radiocommunication Sector to conduct urgent studies on the potential for reusing frequency bands allocated to mobile services for non‑GSO satellite systems.

National administrations, as well as companies and organizations taking part as ITU Sector Members, need to jointly address these new issues, strengthen the ITU–R framework, and pursue global solutions for the benefit of all.

References:

Amazon launches first Project Kuiper satellites in direct competition with SpaceX/Starlink

Juniper Research: 5G Satellite Networks are a $17B Operator Opportunity

New developments from satellite internet companies challenging SpaceX and Amazon Kuiper

SatCom market services, ITU-R WP 4G, 3GPP Release 18 and ABI Research Market Forecasts

KDDI Partners With SpaceX to Bring Satellite-to-Cellular Service to Japan

European Union plan for LEO satellite internet system

GSMA- ESA to collaborate on new satellite and terrestrial network technologies

ABI Research and CCS Insight: Strong growth for satellite to mobile device connectivity (messaging and broadband internet access)

Telstra partners with Starlink for home phone service and LEO satellite broadband services

FT: A global satellite blackout is a real threat; how to counter a cyber-attack?

Spark New Zealand partnering with Lynk Global to offer a satellite-to-mobile service

Deutsche Telekom with AWS and VMware demonstrate a global enterprise network for seamless connectivity across geographically distributed data centers

Deutsche Telekom (DT) has partnered up with AWS and VMware to demonstrate what the German network operator describes as a “globally distributed enterprise network” that combines Deutsche Telekom connectivity services in federation with third party connectivity, compute, and storage resources at campus locations in Prague, Czech Republic and Seattle, USA and an OGA (Open Grid Alliance) grid node in Bonn, Germany.

The goal is to allow customers to book connectivity services directly from DT using a unified interface for the management of the network across its various locations.

The PoC demonstrates how the approach supports optimized resource allocation for advanced AI-based applications such as video analytics, autonomous vehicles and robotics. The demonstration use case is video analytics with distributed AI inference.

PoC setup:

The global enterprise network integrates Deutsche Telekom private 5G wireless solutions, AWS services and infrastructure, VMware’s multi-cloud telco platform, OGA grid nodes and Mavenir’s RAN/Core functions. Two 5G Standalone (SA) private wireless networks deployed at locations in Prague, Czech Republic and Seattle, USA are connected to a Mavenir 5G Core hosted on AWS Frankfurt Region leveraging the framework of the Integrated Private Wireless on AWS program. The convergence of the global network with local high-speed 5G connectivity is enabled by the AWS backbone and infrastructure.

The 5G SA private wireless network, with User Plane Function (UPF) and RAN hosted at the Seattle location, is operating on the VMware Telco Cloud Platform to enable low latency services. The VMware Service Management and Orchestration (SMO) is also deployed in the same location and serves as the global orchestrator. The SMO framework helps to simplify, optimize and automate the RAN, Core and its applications in a multi-cloud environment.

To demonstrate the benefit of this approach, the deployed PoC used a video analytics application where cameras were installed at both the Prague and Seattle locations and connected through a private wireless global enterprise network. Using this approach, operators were able to run AI components concurrently for immediate analysis and inferencing. This helps demonstrate the ability for customers to seamlessly connect devices across locations using the global enterprise network. Leveraging OGA architectural principles for Distributed Edge AI Networking, an OGA grid node was established on Dell infrastructure in Bonn, facilitating seamless connectivity across the European locations.

Statements:

“As AI gets engrained deeper in the ecosystem of our lives, it necessitates equitable access to compute and connectivity for everyone, everywhere across the globe. Multi-national enterprises are seeking trusted and sovereign compute & connectivity constructs that underpin an equitable and seamless access. Deutsche Telekom is excited to partner with the OGA ecosystem for co-creation on these essential constructs and the enablement of the Distributed Edge AI Networking applications of the future,” – Kaniz Mahdi, Group Chief Architect and SVP Technology Architecture and Innovation at Deutsche Telekom.

“VMware is proud to support this Proof of Concept – contributing know-how and a modern and scalable platform that aims to offer the agility required in distributed environments. VMware Telco Cloud Platform is suited to deliver the compute resources on-demand wherever critical customer workloads are needed. As a founding member of the Open Grid Alliance, VMware embraces both the principles of this initiative and the opportunity to collaborate more deeply with fellow alliance members AWS and Deutsche Telekom to help meet the evolving needs of global enterprise customers.” – Stephen Spellicy, vice president, Service Provider Marketing, Enablement and Business Development, VMware

References:

https://www.telekom.com/en/media/media-information/archive/global-enterprise-network-1050910

Deutsche Telekom Global Carrier Launches New Point-of-Presence (PoP) in Miami, Florida

AWS Integrated Private Wireless with Deutsche Telekom, KDDI, Orange, T-Mobile US, and Telefónica partners

Deutsche Telekom Achieves End-to-end Data Call on Converged Access using WWC standards

Deutsche Telekom exec: AI poses massive challenges for telecom industry

Amazon launches first Project Kuiper satellites in direct competition with SpaceX/Starlink

Amazon has finally joined the race to build massive constellations of satellites that can blanket the globe in internet connectivity, a move that puts the tech company in direct competition with SpaceX and its Starlink satellite Internet system. The first two prototype satellites for Amazon’s Project Kuiper space network launched aboard a United Launch Alliance (ULA) rocket from Cape Canaveral, Florida, at 2:06 p.m. ET Friday. The Protoflight launch is the first mission in a broader commercial partnership between ULA and Amazon to launch the majority of the Project Kuiper constellation.

“This is Amazon’s first time putting satellites into space, and we’re going to learn an incredible amount regardless of how the mission unfolds,” Rajeev Badyal, a vice president of technology for Project Kuiper at Amazon, said in a statement from the company before the launch. “We’ve done extensive testing here in our lab and have a high degree of confidence in our satellite design, but there’s no substitute for on-orbit testing,” he added.

“This initial launch is the first step in support of deployment of Amazon’s initiative to provide fast, affordable broadband service to unserved and underserved communities around the world,” said Gary Wentz, ULA vice president of Government and Commercial Programs. “We have worked diligently in partnership with the Project Kuiper team to launch this important mission that will help connect the world. We look forward to continuing and building on the partnership for future missions.”

United Launch Alliance cut off the livestream of the launch after the first stage of its rocket (the portion that provides the initial boost at liftoff) finished firing its engines. The company did confirm “mission success,” and said in a news release that it “precisely” delivered the satellites. Amazon could not immediately confirm contact with the satellites.

A ULA Atlas V rocket carrying the Protoflight mission for Amazon’s Project Kuiper lifts off from Space Launch Complex-41 at 2:06 p.m. EDT on October 6.

Photo by United Launch Alliance

………………………………………………………………………………………………………………………………………………………………………………………………………………………………………………….

If successful, the mission could queue up Amazon to begin adding hundreds more of the satellites into orbit, eventually building a network of more than 3,200 satellites that will work in tandem to beam internet connectivity to the ground.

Why wasn’t a rocket from Blue Origin, which is owned by Jeff Bezos, used to launch the Project Kuiper satellites? Because Blue Origin has yet to launch anything into orbit. Although its suborbital space tourism rocket, New Shepard, has made many flights, the New Glenn rocket that it has been developing for more than a decade to take payloads like Kuiper satellites to orbit is at least three years behind schedule. Its debut flight is penciled in for next year. In April 2022, Amazon announced a purchase of up to 83 launches, the largest commercial purchase of rocket launches ever. That includes 27 from Blue Origin and the rest from two other companies, Arianespace of France and United Launch Alliance of the United States. The contracts with the other companies also rely on new rockets that have not yet flown: the Ariane 6 from Arianespace and the Vulcan from United Launch Alliance.

The leading satellite Internet company is Starlink, the SpaceX subsidiary that has been growing rapidly since 2019. SpaceX has more than 4,500 active Starlink satellites in orbit and offers commercial and residential service to most of the Americas, Europe and Australia.

The space industry is in the midst of a revolution. Until relatively recently, most space-based telecommunications services were provided by large, expensive satellites in geosynchronous orbit, roughly 22,000 miles (about 35,800 km) above Earth. The drawback of this space-based internet strategy was that the extreme distance of the satellites created frustrating lag times. Now, companies including SpaceX, OneWeb and Amazon are looking to bring things closer to home.
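The lag described here is largely propagation delay, which can be estimated from orbital altitude alone. The sketch below is a back-of-the-envelope illustration, not a measurement. It assumes a satellite directly overhead, a geostationary altitude of 35,786 km, and an illustrative low-Earth-orbit altitude of 550 km (an assumption; actual constellation altitudes vary), and it ignores processing, queuing, and terrestrial backhaul. The function name `round_trip_ms` is hypothetical.

```python
# Rough propagation-delay estimate for a bent-pipe satellite link.
# Altitudes are illustrative assumptions, not figures from the article.
SPEED_OF_LIGHT_KM_S = 299_792.458
GEO_ALTITUDE_KM = 35_786.0   # geostationary altitude above the equator
LEO_ALTITUDE_KM = 550.0      # assumed example low-Earth-orbit altitude


def round_trip_ms(altitude_km: float) -> float:
    """Return the round-trip propagation delay in milliseconds.

    A request travels user -> satellite -> ground gateway, and the reply
    returns the same way, so the signal crosses the altitude four times.
    """
    one_hop_seconds = altitude_km / SPEED_OF_LIGHT_KM_S
    return 4 * one_hop_seconds * 1000.0


if __name__ == "__main__":
    for name, altitude in (("GEO", GEO_ALTITUDE_KM), ("LEO", LEO_ALTITUDE_KM)):
        print(f"{name}: about {round_trip_ms(altitude):.1f} ms round trip")
```

Under these assumptions the geostationary round trip comes to a little under half a second, while the low-orbit case stays below 10 ms, which is why lower orbits are attractive for interactive traffic.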

Even before those companies began to build their services, the satellite industry dreamed of delivering high-speed, space-based internet directly to consumers. There were several such efforts in the 1990s that either ended in bankruptcy or forced corporate owners to shift plans when expenses outweighed the payoffs.

Such widespread high-speed internet access could be revolutionary. As of 2021, nearly 3 billion people across the globe still lacked basic internet access, according to statistics from the United Nations. That’s because more common forms of internet service, such as underground fiber optic cables, had not yet reached certain areas of the world.

SpaceX is well ahead of the competition in terms of growing its service, and its efforts so far have occasionally thrust the company into geopolitical controversy. The company notably faced significant blowback in late 2022 and early 2023 for preventing Ukrainian troops on the front lines of the war with Russia from accessing Starlink services, which had been crucial to Ukraine’s military operations. (The company later reversed course, and SpaceX founder Elon Musk discussed the Ukraine controversy in a recent book.) It’s possible Amazon’s Project Kuiper constellation could become part of that conversation — facing similar geopolitical pressures — if the network proves successful.

“I’m also curious if Amazon plans dual-use capabilities where government/defense will be a major client. This may result in the targeting of Kuiper like that of Starlink in Ukraine,” said Gregory Falco, an assistant professor of mechanical and aerospace engineering at Cornell University, in a statement.

Despite the promises of a global internet access revolution, the massive satellite megaconstellations needed to beam internet across the globe are controversial. Already, there are thousands of pieces of space junk in low-Earth orbit. And the more objects there are in space, the more likely it is that disastrous collisions could occur, further exacerbating the issue.

The Federal Communications Commission, which authorizes space-based telecom services, recently began enhancing its space debris mitigation policies. The satellite industry has largely pledged to abide by recommended best practices, including deorbiting satellites as missions conclude.

In a May blog post, Amazon laid out its plans for sustainability, which include ensuring its satellites are capable of maneuvering while in orbit. Amazon also pledged to safely deorbit the first two test satellites at the end of their mission.

Separately, astronomers have repeatedly raised concerns about the impact all these satellites in low-Earth orbit have on the night sky, warning that these manmade objects can intrude upon and distort telescope observations and complicate ongoing research.

Amazon addressed those concerns in a statement to CNN, saying one of the two prototype satellites it launched Friday will test antireflective technology aiming to mitigate telescope interference. The company has also been consulting with astronomers from organizations such as the National Science Foundation, according to Amazon spokesperson Brecke Boyd. SpaceX has made similar commitments.

It remains to be seen how well Project Kuiper will compete with SpaceX’s Starlink. And while Starlink already has more than 1 million customers, documents recently obtained by the Wall Street Journal showed that the SpaceX megaconstellation hasn’t been as successful as once projected.

As far as consumer price points go: People can purchase a Starlink user terminal for a home for about $600 plus the cost of monthly service.

Amazon has said it hopes to produce Project Kuiper terminals for as low as about $400 per device, though the company has not yet begun demonstrating or selling the terminals. The company has not revealed a price for monthly Kuiper services.

SpaceX has had the clear advantage of using its own Falcon 9 rockets to launch batches of Starlink satellites to orbit.

Amazon does not have its own rockets. And while the Jeff Bezos-founded rocket company Blue Origin is working on a rocket capable of reaching orbit, the project is years behind schedule.

For now, Kuiper satellites are launching on rockets built by United Launch Alliance, a close partner of Blue Origin. In addition to ULA and Blue Origin, Amazon has a Project Kuiper launch contract with European launch provider Arianespace.

On August 28, the Cleveland Bakers and Teamsters Pension Fund, which owns a stake in Amazon, filed a lawsuit against the company over the launch contracts. The lawsuit alleges Amazon executives “consciously and intentionally breached their most basic fiduciary responsibilities” in part by forgoing the option of launching Project Kuiper satellites on rockets built by SpaceX, which the suit claims is “one of the most cost-effective launch providers.”

“The claims in this lawsuit are completely without merit, and we look forward to showing that through the legal process,” said an Amazon spokesperson.

If all goes to plan, Amazon said it intends to launch its first production satellites early next year and begin offering beta testing to initial customers by the end of 2024, according to a news release.

References:

https://www.nytimes.com/2023/10/06/science/amazon-project-kuiper-launch.html

Rogers Communications activates 5G service in Toronto subways

Canadian telco Rogers Communications has announced that it has activated 5G services in the busiest sections of the Toronto subway system for customers of all major Canadian mobile networks.

Rogers conducted extensive testing, including live calls with Toronto Maple Leafs defenceman Morgan Rielly, who FaceTimed his father while riding the subway underground.

In April, Rogers announced its plans to introduce full 5G connectivity services to the entire Toronto subway system, including access to 911 for all riders. Also in April, Rogers acquired the Canadian operations of BAI Communications, which had owned the rights to provide wireless service on the Toronto subway.

Rogers stated that it conducted extensive testing, including live calls, to prepare the network for all riders. As of October 2nd, customers of all major Canadian carriers can connect to 5G for voice calls, texting, and streaming within the Toronto Transit Commission (TTC) subway system in the following areas:

- On Line 1, including all stations and tunnels in the Downtown U, as well as Spadina and Dupont stations

- On Line 2, encompassing thirteen stations from Keele to Castle Frank, along with the tunnels between St George and Yonge stations.

“We are very pleased to bring 5G connectivity to all subway riders,” said Tony Staffieri, President and CEO of Rogers. “Our team has been working around the clock to introduce an immediate solution so all riders can connect when travelling on the busiest sections of the TTC subway system. I am so proud of our Rogers technology team who continue to bring innovation, ingenuity, and leading solutions to Canadians. Today’s announcement is another milestone in our plan to make wireless services available throughout the entire subway system.”

“Our dedicated team of technologists designed and introduced an immediate solution that added capacity, so Bell and Telus could join the network,” said Ron McKenzie, Chief Technology and Information Officer at Rogers. “For over 10 years, subway riders have been without mobile phone services and the Rogers team is pleased to step up and make 5G a reality for all riders today.”

References:

https://telecomtalk.info/rogers-5g-enhanced-network-toronto-ttc-subway/860378/

NEC completes Patara-2 subsea cable system in Indonesia

NEC Corp. announced that the Patara-2 submarine cable system connecting multiple islands across Indonesia is complete and operational. The subsea cable is owned by Telkom Indonesia, the country’s biggest digital telco, which is strongly committed to accelerating Indonesia’s digitalization.

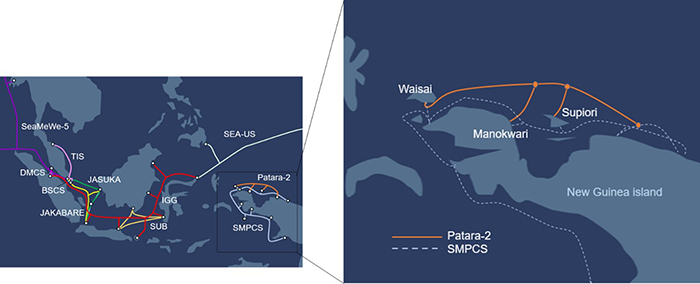

The Patara-2 is an optical fiber submarine cable system measuring approximately 1,200 kilometers, designed for 100 gigabits per second (Gbps) x 80 wavelengths (wl) x 2 fiber pairs (fp). In addition to the existing Sulawesi Maluku Papua Cable System (SMPCS) and others in Indonesia provided by NEC, this new cable system enhances connectivity among the cities of Waisai, Manokwari, and Supiori.
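The "100 Gbps x 80 wavelengths x 2 fiber pairs" figure quoted above multiplies out to a total design capacity. The short sketch below does that arithmetic. It assumes every wavelength on every fiber pair carries the full 100 Gbps line rate, and the function and constant names are hypothetical, chosen only for illustration.

```python
# Design-capacity arithmetic for a submarine cable system.
# Inputs are the figures quoted for Patara-2; names are illustrative.
LINE_RATE_GBPS = 100             # capacity per wavelength
WAVELENGTHS_PER_FIBER_PAIR = 80
FIBER_PAIRS = 2


def design_capacity_gbps(line_rate_gbps: int, wavelengths: int, fiber_pairs: int) -> int:
    """Total capacity, assuming every wavelength runs at the full line rate."""
    return line_rate_gbps * wavelengths * fiber_pairs


if __name__ == "__main__":
    total_gbps = design_capacity_gbps(
        LINE_RATE_GBPS, WAVELENGTHS_PER_FIBER_PAIR, FIBER_PAIRS
    )
    print(f"{total_gbps} Gbps = {total_gbps / 1000:.0f} Tbps")
```

Under that assumption the system totals 16,000 Gbps, or 16 Tbps, split evenly across the two fiber pairs.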

NEC’s major supply record in Indonesia and the Patara-2 cable system

……………………………………………………………………………………………………………………..

“Both the Patara-2 and SMPCS cable systems enable the network in the north of Papua to have a redundant configuration, providing highly reliable communications in Papua,” said Herlan Wijanarko, Director of Network & IT Solutions, Telkom.

“NEC, in cooperation with NEC Indonesia, is honored to provide advanced connectivity among Indonesian cities, and has been involved in a variety of submarine cable projects for Telkom since 1991, including IGG and SMPCS,” said Atsushi Kuwahara, Managing Director, Submarine Network Division, NEC Corporation. “We have laid more than 10 submarine cable systems in the region and are proud to continue contributing to the expansion of Indonesia’s connectivity.”

NEC has been a leading supplier of submarine cable systems for more than 50 years, and has built more than 400,000 km of cable, spanning the earth nearly 10 times. NEC is well-established as a reliable partner in the submarine cable field as a system integrator that provides all aspects of submarine cable operations, including the manufacture and installation of optical submarine cables and repeaters, provision of ocean surveys and route designs, delivery, training and testing. NEC subsidiary OCC Corporation manufactures optical submarine cables capable of withstanding water pressures at ocean depths beyond 8,000 meters.

NEC also recently completed a long-distance field trial on the IGG cable system owned by Telkom Indonesia. The trial used NEC’s latest XF3200 transponder, which the Japanese cable manufacturer says has the world’s highest level of transmission performance at 800 Gbit/s.

References:

https://www.nec.com/en/press/202310/global_20231002_01.html

https://www.telkom.co.id/sites

https://www.lightreading.com/cable-technology/nec-completes-indonesia-s-new-subsea-cable-system

Business Research Company: Global Wireless Mesh Network Market will increase by 12% in 2023

According to The Business Research Company’s Wireless Mesh Network Global Market Report 2023, the global wireless mesh network market (aka Wireless Access Point market) is poised for substantial growth, with the market size expected to expand from $7.41 billion in 2022 to $8.30 billion in 2023, marking an impressive Compound Annual Growth Rate (CAGR) of 12%.

This growth trend is projected to continue, with estimates suggesting that the market will reach $12.78 billion in 2027, at a CAGR of 11.4%. The growth is attributed to various factors, including government support, global population growth, urbanization, increased demand for smart connected devices, and the rising need for industrial automation.
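The growth rates quoted above can be checked against the dollar figures with the standard compound annual growth rate formula, (end / start)^(1 / years) - 1. The sketch below assumes the 11.4% figure is measured from the 2023 base over four years, which the report summary does not state explicitly. The function name `cagr` is illustrative.

```python
# Check the quoted growth rates against the market-size figures.
# Assumption: the 2027 projection compounds from the 2023 base (4 years).


def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.12 means 12%)."""
    return (end_value / start_value) ** (1 / years) - 1


if __name__ == "__main__":
    growth_2022_2023 = cagr(7.41, 8.30, 1)    # $ billions, one year
    growth_2023_2027 = cagr(8.30, 12.78, 4)   # $ billions, four years
    print(f"2022 to 2023: {growth_2022_2023:.1%}")
    print(f"2023 to 2027: {growth_2023_2027:.1%}")
```

Both results round to the quoted figures (12% and 11.4%), so the published numbers are internally consistent under that assumption.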

……………………………………………………………………………………………………………………………..

The global wireless mesh network market features a diverse array of players, resulting in a fairly fragmented landscape. LM Ericsson led the wireless mesh network market with a 9.5% share, followed by Hewlett Packard Enterprise (HPE), Cisco Systems Inc., Zebra Technologies Corporation, Hitachi, Ltd., Qualcomm Incorporated, TP-Link Technologies Co Ltd., Qorvo Inc., Netgear Inc., and Digi International Inc.

The global wireless mesh network market is segmented as follows:

- By Radio Frequency: Sub 1 GHz Band, 2.4 GHz Band, 4.9 GHz Band, 5 GHz Band

- By Mesh Design: Infrastructure Wireless Mesh, AD-HOC Mesh

- By Component: Product, Service

- By Application: Home Networking, Video Surveillance, Disaster Management and Rescue Operations, Medical Device Connectivity, Traffic Management

- By End-Use: Education, Government, Healthcare, Hospitality, Mining, Oil and Gas, Transportation and Logistics, Smart Cities and Smart Warehouses

The market segment showing the highest growth potential within the wireless mesh network market, categorized by radio frequency, is the 2.4 GHz band, expected to achieve $2.2 billion in global annual sales by 2027.

Asia-Pacific is the largest region in the wireless mesh network market, worth $2.4 billion in 2022. Growth in the region is driven by infrastructure upgrades, digitization efforts, and a growing internet penetration rate. The surge in adoption of the Internet of Things (IoT) and the increasing need for internet connectivity have also been instrumental. In 2022, Asia-Pacific had 2.6 billion internet users, representing 62% of the population, up from 1.7 billion (41% of the population) in 2017.

References:

https://www.thebusinessresearchcompany.com/report/wireless-mesh-network-global-market-report

Technavio: Wireless access point market to grow at a CAGR of 6% 2022-2027