Using AI, DeepSig Advances Open, Intelligent Baseband RAN Architectures

Using advanced AI techniques, DeepSig has reportedly managed to eliminate a mobile network’s pilot signal, thereby removing signaling overhead without degrading overall performance. Founded in 2016, the U.S.-based startup occupies a leading position at the intersection of artificial intelligence (AI) and the radio access network (RAN), developing data-driven models that could supplant traditional, human-engineered signal processing algorithms.

This work has become especially relevant as the telecom industry moves toward open and software-defined RAN architectures. DeepSig is now a visible contributor to OCUDU (Open Centralized Unit Distributed Unit), an open-source initiative announced by the Linux Foundation in collaboration with the U.S. Department of Defense and its FutureG ecosystem partners to accelerate open CU/DU development for 5G and early 6G systems. OCUDU is intended to establish a carrier-grade reference platform for baseband software, with support for AI-based algorithms and solutions embedded in the RAN compute stack.

As AI becomes a central theme across the telecom ecosystem, DeepSig has rapidly moved from relative obscurity to prominence through collaborations with major industry and government stakeholders. Most recently, the company emerged as a key contributor to OCUDU, which the Linux Foundation and the U.S. Department of Defense (DoD) announced ahead of MWC Barcelona 2026. The program’s goal is to introduce open-source software elements into the RAN baseband domain, an area historically dominated by proprietary offerings from Ericsson, Nokia, and Samsung. By lowering barriers to entry, OCUDU aims to foster innovation and enable smaller players like DeepSig to participate more freely in the U.S. baseband ecosystem.

Image Credit: DeepSig

DeepSig was identified, alongside Ireland-based Software Radio Systems (SRS), as one of two startups selected to deliver OCUDU’s initial software stack. “The National Spectrum Consortium had an RFQ for developing an open-source stack,” explained Jim Shea, DeepSig’s CEO. “SRS already had a capable baseline, but it needed to be elevated to carrier-grade—adding new features and strengthening reliability,” he added.

Meanwhile, major vendors Ericsson and Nokia were named “premier members” of the new OCUDU Ecosystem Foundation. While both could, in principle, leverage the platform to integrate third-party components into their baseband systems, industry observers remain skeptical that these incumbents will fully embrace open-source alternatives over their established proprietary stacks. In comments at MWC, Nokia CEO Justin Hotard characterized OCUDU as a welcome ecosystem evolution to accelerate innovation but clarified that “not everything necessarily needs to be open source.”

Driven in part by DoD interests, OCUDU reflects broader U.S. government ambitions to ensure that 5G and future 6G networks remain open to domestic innovation, particularly for defense and mission-critical use cases. For vendors like Ericsson and Nokia—who view defense markets as increasingly strategic—this alignment could bring both opportunity and complexity.

DeepSig’s trajectory extends beyond OCUDU. The company’s technology originated from research by Tim O’Shea, now CTO, during his tenure at Virginia Tech, where he explored deep learning’s application to wireless signal processing. “You can apply deep learning to enhance the way communication systems operate by replacing many of the traditional algorithms,” said Jim Shea. While these methods do not circumvent theoretical limits such as Shannon’s Law, small efficiency gains can yield substantial operational and economic benefits for cost-sensitive mobile operators.

As DeepSig and peers continue to redefine how intelligence is integrated into the RAN, their work signals a shift toward AI-native architectures—where machine learning, rather than handcrafted algorithms, becomes the foundation for next-generation network optimization.

References:

https://www.lightreading.com/5g/small-deepsig-is-at-heart-of-ai-ran-challenge-to-ericsson-nokia

Accelerating 5G vRAN, AI-RAN, and 6G on OCUDU, “the Linux of RAN”

AI-RAN Reality Check: hype vs hesitation, shaky business case, no specific definition, no standards?

Ericsson goes with custom silicon (rather than Nvidia GPUs) for AI RAN

Dell’Oro: RAN Market Stabilized in 2025 with 1% CAG forecast over next 5 years; Opinion on AI RAN, 5G Advanced, 6G RAN/Core risks

Dell’Oro: Analysis of the Nokia-NVIDIA-partnership on AI RAN

RAN silicon rethink – from purpose built products & ASICs to general purpose processors or GPUs for vRAN & AI RAN

Dell’Oro: AI RAN to account for 1/3 of RAN market by 2029; AI RAN Alliance membership increases but few telcos have joined

InterDigital led consortium to advance wireless spectrum coexistence & sharing

Telecom sessions at Nvidia’s 2025 AI developers GTC: March 17–21 in San Jose, CA

Sources: AI is Getting Smarter, but Hallucinations Are Getting Worse

Huawei FY2025: 2.2% YoY revenue increase; strategic pivot to AI and intelligent automotive solutions

Overview:

Huawei has released its 2025 audited financial results, reporting total revenue of CNY 880.9bn ($127.6bn) — a 2.2% YoY increase. The report highlights a significant expansion in profitability, with operating profit surging 22.1% to CNY 96.9bn ($14bn). That translated to an operating profit margin of 11%, up 180 bps from the 9.2% recorded in 2024.
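The margin figures above can be reproduced from the reported numbers. The following is a quick check in Python that uses only values quoted in this article; the variable names are illustrative.

```python
# Figures as quoted in the article (CNY billions).
revenue_2025 = 880.9
operating_profit_2025 = 96.9
margin_2024_pct = 9.2  # prior-year operating margin, as quoted

# Operating margin = operating profit / revenue.
margin_2025_pct = operating_profit_2025 / revenue_2025 * 100

# One percentage point equals 100 basis points (bps).
change_bps = (round(margin_2025_pct, 1) - margin_2024_pct) * 100

print(f"2025 operating margin: {margin_2025_pct:.1f}%")  # 11.0%
print(f"Change vs 2024: {change_bps:.0f} bps")            # 180 bps
```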

Image Credit: Imago/Alamy Stock Photo

……………………………………………………………………………………………….

“In 2025, Huawei’s overall performance remained steady,” said Sabrina Meng, Huawei’s Rotating Chairwoman. “I would like to thank our customers for your ongoing trust and support. Thanks also to consumers for choosing Huawei, as well as suppliers, partners, and developers around the world for working with us. Of course, we couldn’t do any of this without the support of every Huawei employee. Thank you for your hard work, and also your families for their steadfast support.”

In 2025, Huawei’s connectivity business weathered the impact of industry investment cycles, while its computing business continued to seize opportunities in AI. The consumer business worked to overcome formidable challenges, driving the HarmonyOS ecosystem to cross a new threshold in user experience. Huawei’s digital power business continued to place quality before all else. Huawei Cloud honed its competitiveness with a focus on core services, and the company’s intelligent automotive solutions grew rapidly.

………………………………………………………………………………………….

Pivot to Intelligent Automotive Solutions:

Huawei is aggressively diversifying and placing a massive strategic bet on the automotive sector to drive future growth. Its Intelligent Automotive Solutions business is experiencing explosive growth, with revenue increasing by over 400% in 2024 to 26.35 billion yuan ($3.62bn).

In 2025, the unit surged another 72% to CNY 45 billion (approx. $6.2bn). Huawei does not manufacture its own cars directly but operates as a top-tier supplier and technology partner (similar to “Bosch”) via its Harmony Intelligent Mobility Alliance (HIMA). Huawei continues to invest heavily in its “future-oriented” auto and AI businesses.

Revenue Breakdown by Segment & Geography:

- Infrastructure & Solutions: Remains the primary anchor, contributing 42.6% of total revenue (up 2.6% YoY).

- Consumer Business: Accounted for 39.1% of revenue, maintaining a steady recovery with 1.6% YoY growth.

- Intelligent Automotive Solutions (IAS): The high-growth outlier, with revenues spiking 72.1% YoY to CNY 45bn, now representing 5.1% of the total portfolio.

- Geographic Mix: Domestic China operations generated ~70% of revenue. International footprints were led by EMEA (18.3%), followed by Asia-Pacific (5.7%) and the Americas (4.2%).
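As a rough cross-check of the breakdown above, the quoted shares can be converted into implied segment revenue. This sketch assumes the percentages all refer to the CNY 880.9bn total reported earlier; the dictionary keys are simply labels taken from the list.

```python
total_revenue_cny_bn = 880.9  # FY2025 total, as quoted in the overview

# Revenue shares quoted in the breakdown (percent of total).
shares_pct = {
    "Infrastructure & Solutions": 42.6,
    "Consumer Business": 39.1,
    "Intelligent Automotive Solutions": 5.1,
}

# Implied absolute revenue per segment.
implied_cny_bn = {
    segment: total_revenue_cny_bn * pct / 100
    for segment, pct in shares_pct.items()
}

for segment, value in implied_cny_bn.items():
    print(f"{segment}: ~CNY {value:.1f}bn ({shares_pct[segment]}%)")

# Share of revenue from segments that the list does not itemize.
unlisted_pct = 100 - sum(shares_pct.values())
print(f"Segments not itemized here: ~{unlisted_pct:.1f}%")
```

The implied automotive figure lands close to the CNY 45bn stated for IAS, which suggests the shares and the total are mutually consistent.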

R&D Intensity and Ecosystem Strategy:

Huawei continues to maintain one of the industry’s highest reinvestment rates. R&D expenditure rose 7% last year to CNY 192.3bn ($27.9bn), or 21.8% of annual revenue.

In sharp contrast, Ericsson—whose portfolio remains heavily centered on 5G—reduced its R&D outlay by 9% to SEK 48.9 billion (about $5.2 billion). At 21% of sales, Ericsson’s R&D intensity was largely in line with Huawei’s. Nokia, meanwhile, outpaced both rivals in relative terms, allocating 23% of revenue—roughly €4.6 billion ($5.3 billion)—to R&D, up 7% year over year. Most of that increase stemmed from the February 2025 acquisition of optical systems vendor Infinera, which expanded Nokia’s technology base and R&D footprint.

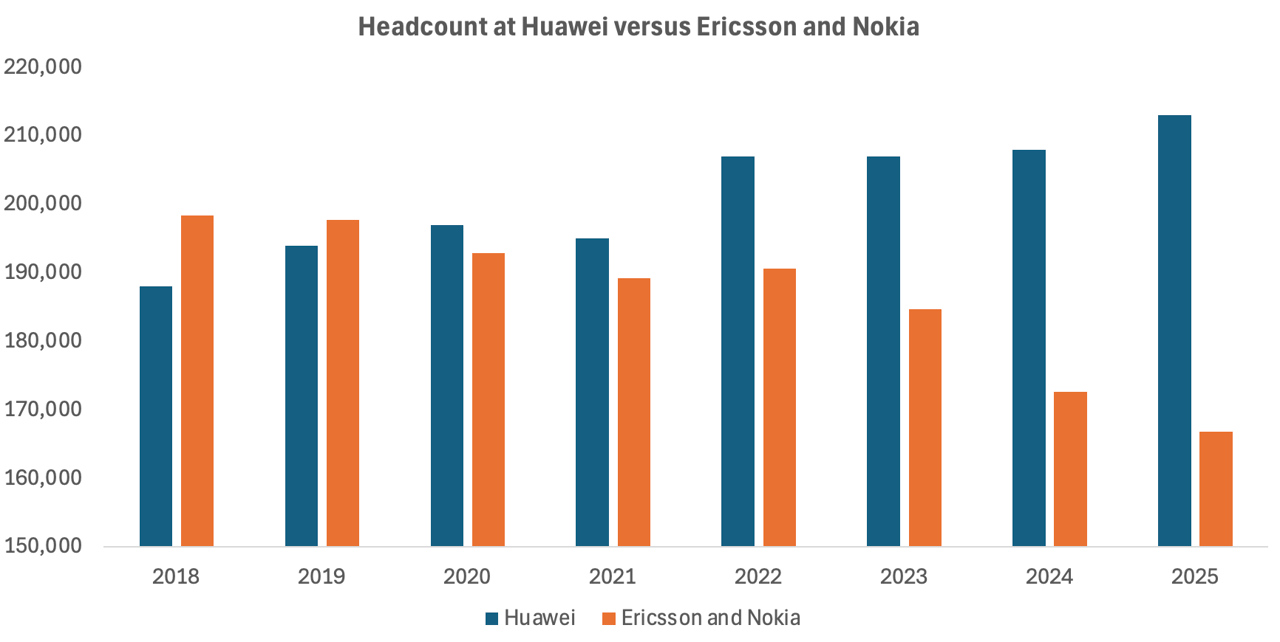

The bigger divergence lies in workforce trends. As reported by Light Reading, Ericsson and Nokia have collectively shed nearly 28,000 positions since 2022, equivalent to about 15% of their combined headcount that year. While growing automation and AI integration have arguably improved operational efficiency, the scale of these reductions also reflects a cooling investment climate among operators. With telco spending on 5G deployments tapering off, Europe’s two large network equipment vendors are continuing layoffs.

In contrast, Huawei’s workforce has continued to increase as it has pushed into new industrial sectors. Since 2021, when Huawei suffered its worst-ever sales decline, the Chinese behemoth has added about 18,000 employees to its payroll, according to annual reports. Around 5,000 of them were recruited last year, including 1,000 in R&D alone. That brought Huawei’s headcount to 213,000 employees in 2025.

The increased hiring boosted overall operating costs, including R&D expenditure, by 7.2%, to about RMB 334 billion ($48.5 billion).

Source & Graph Credit: Light Reading

………………………………………………………………………………………………………………….

Moving forward, China’s largest IT vendor’s roadmap prioritizes:

- Full-Stack AI Integration: Embedding AI and carrier-grade security across the entire product lifecycle and network architecture.

- Strategic Domain Expansion: Increasing CapEx and R&D in connectivity, cloud, and autonomous driving.

- Ecosystem Sovereignty: Scaling the Ascend (AI), Kunpeng (Computing), and HarmonyOS ecosystems to drive vendor-agnostic collaboration and industry-wide adoption

Meng stressed, “We are moving toward a future that is full of uncertainty, so we have to remain true to our strategy and maintain strategic focus. We will translate strategy to execution, keep cultivating the developer ecosystem, and pursue high-quality development.”

………………………………………………………………………………………………………………….

References:

https://www.huawei.com/en/news/2026/3/annual-report-2025

https://www.lightreading.com/5g/huawei-sales-growth-plummeted-in-2025-as-it-gained-5-000-workers

Huawei unveils AI Centric Network roadmap, U6 GHz products, 5G Advanced strategy and SuperPoD cluster computing platforms

Huawei, Qualcomm, Samsung, and Ericsson Leading Patent Race in $15 Billion 5G Licensing Market

Huawei Cloud Review and Global Sales Partner Policies for 2026

Huawei’s Electric Vehicle Charging Technology & Top 10 Charging Trends

Huawei to Double Output of Ascend AI chips in 2026; OpenAI orders HBM chips from SK Hynix & Samsung for Stargate UAE project

Omdia on resurgence of Huawei: #1 RAN vendor in 3 out of 5 regions; RAN market has bottomed

Huawei launches CloudMatrix 384 AI System to rival Nvidia’s most advanced AI system

U.S. export controls on Nvidia H20 AI chips enables Huawei’s 910C GPU to be favored by AI tech giants in China

AI-RAN Reality Check: hype vs hesitation, shaky business case, no specific definition, no standards?

Introduction:

The narrative surrounding “AI-RAN” — a term thrust into the spotlight by Nvidia — may have left many believing that boatloads of GPUs are already powering baseband compute in RAN equipment across the world’s seven million mobile sites. In truth, the reality is far more nascent.

Among major RAN vendors, Nokia stands alone in adapting baseband software for GPU acceleration. Yet even Nokia does not anticipate commercial readiness until late 2026, as confirmed by its Chief Technology Officer, Pallavi Mahajan, during the company’s MWC press conference earlier this year. For now, no operator has announced a commercial deployment — despite the buzz around trials.

Early Movers, Limited Momentum:

Much of the current AI-RAN activity centers on two operators: T-Mobile US and Japan’s SoftBank. At MWC, T-Mobile’s Executive Vice President of Innovation and ex-CTO, John Saw, acknowledged the limited availability of deployable solutions, quipping that he hoped Nokia would deliver an AI-RAN product within the year. Nokia CEO Justin Hotard quickly assured him that such a milestone was indeed on track.

Still, the debut of a GPU-based RAN stack does not imply an imminent large-scale rollout. Without tangible network performance or cost advantages over existing virtualized or disaggregated RAN approaches, operators are unlikely to move past controlled trials.

SoftBank, while often positioned as an AI-RAN pioneer, remains cautious. As Ryuji Wakikawa, Vice President of its Advanced Technology Division, outlined last year, the operator aims to deploy only a handful of AI-RAN sites over the next fiscal cycle. Transitioning from testing to carrying live commercial traffic, he emphasized, demands a significant maturity leap in quality and feature completeness.

Beyond Hype: Limited Commercial Engagement:

Elsewhere, Indonesia’s Indosat Ooredoo Hutchison (IOH) was heralded in 2025 as the first operator in Southeast Asia pursuing AI-RAN. More than a year later, authoritative sources indicate IOH’s work remains confined to its research facility in Surabaya, with no near-term plans for GPU investment at cell sites until measurable value is demonstrated.

The challenge for Nokia — and for GPU-backed AI-RAN broadly — is convincing operators that general-purpose accelerators offer sufficient performance or efficiency gains for most RAN workloads. T-Mobile and SoftBank continue evaluating both Nokia and Ericsson, whose AI-RAN philosophies diverge sharply. Nokia is developing GPU-based baseband software, while Ericsson maintains its focus on custom silicon and CPU architectures.

Divergent Architectures and Use Cases:

Ericsson contends that no core RAN performance enhancements intrinsically require GPUs. Its ongoing collaboration with Nvidia leverages the latter’s Grace CPU technology rather than its GPU portfolio, reserving GPU acceleration only for compute-intensive functions like forward error correction (FEC).

If Ericsson’s premise holds, GPUs in the RAN become justifiable only when supporting AI inference workloads. Even then, inference at every radio site remains improbable. A more incremental strategy — deploying GPUs selectively at edge locations where AI workloads justify their cost — may prove more practical.

This modular approach aligns with existing virtual RAN deployments based on Intel CPUs, which already include native FEC acceleration. “It is an off-the-shelf card that you can slide right into an HPE or Dell or Supermicro server,” said Alok Shah, the vice president of network strategy for Samsung Networks. “That gets you the edge functionality you are looking for.”

Rethinking the Economic Case for AI RAN:

Initially, Nvidia positioned GPUs for AI-RAN as viable only if broadly utilized for AI inference across the RAN. Following its strategic alignment with Nokia, however, the company has softened its stance — now suggesting that appropriately sized, power-efficient GPUs could make sense even when dedicated solely to baseband computation.

For now, the global RAN landscape remains far from GPU-saturated. AI-RAN remains an exploratory frontier, one testing not only the technical feasibility of GPUs at the edge but also the business case for re-architecting a trillion-dollar telecom infrastructure around them.

The AI models suitable for RAN environments must be compact and efficient, far slimmer than those that drive data center-scale AI. There’s no room for the massive, parameter-heavy neural networks that justify a GPU’s cost or energy appetite. In that light, a GPU looks less like a breakthrough and more like a mismatch — a chainsaw brought to a task better handled with a sharp pair of scissors.

Evaluating the Case for AI-RAN Acceleration:

The central question is whether GPUs can deliver meaningful benefits over custom silicon or conventional CPUs for RAN compute. Ericsson’s engineers argue that AI models deployed at the RAN must remain relatively lightweight, with far fewer parameters than those used in large-scale data centers. Excessive model complexity could introduce signaling delays unacceptable in real-time radio environments. In this context, deploying a GPU for such workloads might seem disproportionate — a high-powered tool for a low-demand task.

The most compelling defense of GPU-based RAN acceleration came from Ronnie Vasishta, Nvidia’s Senior Vice President for Telecom, who told Light Reading last summer, “The world is developing on Nvidia.” His point underscores a shift in semiconductor economics: the cost and risk of building dedicated silicon for a mature and shrinking RAN market make general-purpose processors — supported by large-volume ecosystems — increasingly attractive alternatives.

Intel’s difficulties further illustrate this dynamic. Despite $53 billion in revenue during 2025, the former microprocessor king barely broke even, following a $19 billion loss the previous year. A major restructuring cut its headcount by nearly 24,000, and its planned spinoff of the Network and Edge division, which serves telecom infrastructure customers, was ultimately abandoned in December. Nvidia, the world’s most valuable company, may be eager to step into that space, but the economic logic seems upside down. Wireless network operators are looking to reduce costs, not import data center economics into the RAN.

Ecosystem or Echo Chamber?

Nvidia’s Aerial platform and CUDA-based software ecosystem do present a compelling story: open infrastructure, modular APIs, and space for smaller developers to innovate alongside giants like Nokia. On paper, it’s an alluring image of democratized RAN software. In practice, it ties the industry even more tightly to a vertically integrated, proprietary ecosystem.

Nokia appears comfortable with that trade-off. Nokia CTO Pallavi Mahajan’s recent blog post framed AI-RAN as a vehicle for “software speed and innovation.” Mahajan added, “Nokia’s AI-RAN initiative begins with a simple observation: AI is changing not only how networks are operated, but also the nature of the traffic they carry. AI workloads have already reached massive scale, with mobile devices accounting for more than half of AI interactions. Large language model interactions introduce richer uplink flows and burstier patterns as devices continuously send context to models.”

Indeed, that may be true someday. But for now, most wireless network operators need stable, cost-efficient networks, not AI-driven complexity or GPU-level power draw.

Image Credit: Nokia

Conclusions:

The uncomfortable truth is that AI-RAN feels more like a vendor-driven experiment than an operator-driven demand. Until someone proves that GPUs in the RAN deliver a measurable payoff — in performance, cost, or operational simplicity — the whole concept risks joining the long list of telecom “game-changers” that never made it past the trial stage. The hype cycle is predictable; the economics are not. Unless that equation changes, the real intelligence may be knowing when not to deploy AI RAN.

………………………………………………………………………………………………………………

In a Substack post today, Sebastian Barros writes: What Does AI-RAN Even Mean?

Despite the crazy hype, there is no definition for AI-RAN. Today it is at best a vibe, a dangerous reality for an industry that moves on strict standards that are currently completely absent.

The AI RAN hype is crazy right now. But despite the endless talk and vendor announcements, there is no actual technical definition of what it even means. As wild as it sounds for an industry built on strict 3GPP and O-RAN standards (those are specs, not standards), AI RAN is currently just a vendor interpretation designed to move hardware. Moreover, telecom has been using AI in the RAN before it was even cool. In fact, we were among the first industries to use neural networks in signal processing back in the 80s.

The problem is that treating AI-RAN as a marketing narrative rather than a rigid standard actively stalls progress. When the definition of AI-RAN is as different as night and day depending on which OEM you ask, it becomes impossible for any Telco to accurately model TCO or make solid CAPEX decisions.

Editor Notes:

- ITU-R’s IMT-2030 framework (ITU-R Recommendation M.2160-0 for IMT-2030) calls for an AI-native new air interface and AI-enhanced radio networks, but does not mention Nokia’s AI RAN.

- 3GPP Release 18 and later have study/work items on AI/ML for RAN functions such as energy saving, load balancing, mobility optimization, and AI/ML on the RAN air interface, but again no specifics have been discussed let alone agreed upon.

- 3GPP Release 19 continues and expands this work, with reporting that it builds on Release 18’s normative work and adds new AI/ML-based use cases for NG-RAN. In other words, 3GPP does have AI-RAN-related specs in progress and some normative features, but they are distributed across multiple RAN work items rather than packaged as one standalone “AI RAN” specification.

- AI RAN Alliance “is dedicated to driving the enhancement of RAN performance and capability with AI.” However, it has not yet produced any implementable specifications for AI RAN, and only four carriers are “executive members”: Vodafone, T-Mobile, SK Telecom, and SoftBank (which is a conglomerate).

In Japan, NTT Docomo holds the largest cellular market share, with KDDI second, followed by SoftBank and the rapidly expanding Rakuten Mobile.

References:

https://www.lightreading.com/5g/ai-ran-lots-of-talk-little-action-no-guarantees

https://www.nokia.com/blog/ai-ran-bringing-software-speed-innovation-into-the-radio-network/

Ericsson goes with custom silicon (rather than Nvidia GPUs) for AI RAN

Dell’Oro: RAN Market Stabilized in 2025 with 1% CAG forecast over next 5 years; Opinion on AI RAN, 5G Advanced, 6G RAN/Core risks

Dell’Oro: Analysis of the Nokia-NVIDIA-partnership on AI RAN

RAN silicon rethink – from purpose built products & ASICs to general purpose processors or GPUs for vRAN & AI RAN

Dell’Oro: AI RAN to account for 1/3 of RAN market by 2029; AI RAN Alliance membership increases but few telcos have joined

Fiber Broadband Association Middle Mile WG: how to use “Digital Infrastructure Networks” for coordinated fiber backbone investments

The Fiber Broadband Association (FBA) today released guidance from its Middle Mile Working Group (WG) which outlines how states can strengthen digital infrastructure through coordinated fiber backbone investment. Fiber is the foundation of AI, powering the high-capacity, low-latency, secure connectivity that links data centers, cloud infrastructure, and the communities that depend on them. To meet rising national demand, the U.S. must scale fiber deployment 2.3x by 2029. This goal requires accelerated infrastructure builds and strong coordination among states, utilities, and industry partners.

Digital Infrastructure Networks are strategic fiber optic systems that connect the core internet backbone to last-mile broadband providers. By strengthening these middle-mile connections, states can reduce the cost of broadband deployment, improve network resiliency, and expand connectivity to unserved and underserved communities.

“Middle-mile infrastructure is what allows broadband networks to scale,” said Sachin Gupta, Chair of the Middle Mile Working Group and Vice President of Business and Technology Strategies at Centranet. “When high-capacity fiber backbones are located closer to underserved communities, providers can extend last-mile networks more affordably, reach more locations, operate more efficiently, and better serve communities across the state.”

Among the recommendations:

- Coordinate infrastructure projects across agencies to streamline deployment and reduce unnecessary construction

- Implement “dig once” policies that install conduit or fiber whenever roads or utility corridors are opened for construction

- Leverage state-owned assets, including rights-of-way, existing fiber routes, and utility infrastructure

- Modernize permitting and coordination processes to accelerate broadband builds

FBA will further explore these strategies during two Middle Mile Working Group breakout sessions at Fiber Connect 2026, taking place Tuesday morning. The sessions include:

- Rural Collaboration, Infrastructure Planning, and Sustaining Affordable, High-Performance Middle Mile Broadband

- Unlocking New Middle Mile Opportunities for ISPs and Community Networks

……………………………………………………………………………………………………………………………………………………………………………………………………………………………………

Technical Topology: The DWDM Advantage:

- Massive Spectral Efficiency: Multiplexing up to 96+ channels onto a single fiber, with each wavelength supporting 100G, 400G, or 800G data rates.

- Scalable Architecture: Capacity can be increased incrementally by lighting new wavelengths without forklift upgrades or additional trenching.

- Resilient Topologies:

  - Ring Networks: Often preferred for regional backhaul, utilizing Optical Add/Drop Multiplexers (OADMs) to provide self-healing 1+1 protection and sub-50ms failover.

  - Mesh Networks: The gold standard for reliability, offering multiple diverse paths to ensure uptime even during multiple fiber cuts.

- Long-Haul Performance: Utilizing Erbium-Doped Fiber Amplifiers (EDFAs) and Raman amplification to maintain signal integrity over spans exceeding 1,000 km without electronic regeneration.
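To put the spectral-efficiency bullet in concrete terms, the sketch below multiplies the channel count by each per-wavelength rate cited above. It is a simple upper-bound calculation under stated assumptions: it ignores FEC and framing overhead, and it assumes all 96 channels can run at the given rate, which may not hold in practice because higher-rate wavelengths typically occupy wider spectrum. The function name is illustrative.

```python
channels = 96                 # channel count cited above ("96+")
rates_gbps = [100, 400, 800]  # per-wavelength rates cited above

def fiber_capacity_tbps(num_channels: int, rate_gbps: int) -> float:
    """Aggregate capacity of one fiber in Tbps (simple product, no overhead)."""
    return num_channels * rate_gbps / 1000

for rate in rates_gbps:
    capacity = fiber_capacity_tbps(channels, rate)
    print(f"{channels} x {rate}G = {capacity:.1f} Tbps")
```

The same arithmetic shows why lighting additional wavelengths is attractive: capacity grows linearly with channel count without touching the fiber plant.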

References:

Learn more: fiberconnect.fiberbroadband.org. FBA also publishes its research and a Fiber Forward Weekly newsletter.

Digital Infrastructure Networks: Meeting the Broadband Challenge for State Governments

Australia’s NBN and Nokia demonstrate multi-generation optical technologies concurrently over existing FTTP infrastructure

Automating Fiber Testing in the Last Mile: An Experiment from the Field

U.S. fiber rollouts now pass ~52% of homes and businesses but are still far behind HFC

Highlights of FiberConnect 2024: PON-related products dominate

Fiber Broadband Association: 1.4M Fiber Miles Needed for 5G in Top 25 U.S. Metros

AT&T expands its fiber-optic network amid slowdown in mobile subscriber growth

The Financial Trap of Autonomous Networks: Scaling Agentic AI in the Telecom Core

By Pavan Madduri with Ajay Lotan Thakur

The telecom industry wants autonomous, self-healing networks, but nobody is looking at the GPU bill. Running Agentic AI 24/7 “just in case” will bankrupt your IT department and ruin your ESG goals. The only way to survive the autonomous era is ruthless, event-driven orchestration that scales cognitive compute to absolute zero.

Introduction – The Compute Crisis:

The Compute Crisis Nobody is Talking About

Everyone in telecom right now is obsessed with “self-healing” autonomous networks. The vendor pitch sounds amazing. Just drop in some Agentic AI, let it watch your data plane, and watch it fix anomalies without a human ever touching a keyboard. But there’s a massive trap hiding underneath all that hype, and enterprise architects are completely ignoring it. It comes down to the raw physics of AI compute.

Unlike your standard microservices, which just run deterministic, compiled code on cheap CPU cycles, Agentic AI needs massive foundation models. To actually reason through a network failure, these models have to load gigabytes of weights into Video RAM and generate tokens. You need dedicated GPUs for this. We aren’t talking about cheap, stateless API calls here. These are the most expensive, power-hungry workloads in your entire datacenter.

If a telco tries to run an autonomous core the old-fashioned way by keeping high-end GPU nodes spinning 24/7 just in case a BGP route flaps, their cloud bill is going to wipe out any operational savings the AI was supposed to deliver.

The reality is that autonomy is no longer just a software problem. It’s a financial one. The telcos that actually win will not be the ones with the smartest AI. They will be the ones who figure out how to build a strict “scale-to-zero” environment. They need to spin up that expensive cognitive compute exactly when it is needed, and kill it the exact second the job is done.
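The financial argument can be made concrete with a back-of-the-envelope comparison. Every input in this sketch is hypothetical (node count, hourly price, and active hours are placeholders, not vendor pricing), and it deliberately ignores the cost of any warm standby capacity.

```python
HOURS_PER_MONTH = 730  # average number of hours in a month

def monthly_gpu_cost(nodes: int, hourly_rate_usd: float, billed_hours: float) -> float:
    """Cost of `nodes` GPU nodes, each billed for `billed_hours` in the month."""
    return nodes * hourly_rate_usd * billed_hours

# All inputs below are assumptions chosen only for illustration.
nodes = 4
hourly_rate_usd = 30.0         # assumed on-demand price per GPU node
incident_hours_per_month = 20  # assumed time spent actively reasoning

always_on = monthly_gpu_cost(nodes, hourly_rate_usd, HOURS_PER_MONTH)
scale_to_zero = monthly_gpu_cost(nodes, hourly_rate_usd, incident_hours_per_month)
idle_share = 1 - scale_to_zero / always_on

print(f"Always-on:     ${always_on:,.0f}/month")
print(f"Scale-to-zero: ${scale_to_zero:,.0f}/month")
print(f"Idle share of the always-on bill: {idle_share:.1%}")
```

With these placeholder numbers, nearly all of the always-on spend pays for idle time, which is the "idle tax" the article describes.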

Why Traditional Auto-scaling is Broken for AI:

When platform engineers first see the compute costs of running these AI agents, their first instinct is usually just to slap standard Kubernetes Horizontal Pod Autoscaling (HPA) on the cluster and call it a day. But standard HPA was built for stateless web servers, not massive cognitive engines. If you try to use it for Agentic AI in a telecom core, you’re going to fail for two big reasons.

The Cold-Start Penalty: Traditional autoscaling is entirely reactive. It sits around waiting for a CPU to hit 80% before it decides to scale up. In telecom, SLAs are measured in sub-milliseconds. If you wait for an anomaly to spike your CPU, then provision a new GPU node, pull a massive AI container image, and load the model weights into VRAM, you are talking about minutes of delay. By the time your AI agent actually wakes up to fix the problem, you have already breached your SLA.

CPU Utilization is a Liar: For AI workloads, standard hardware metrics are completely misleading. A GPU could be pegged at 90% utilization just thinking through a minor log warning, while a massive, critical network failure is stuck waiting in the queue. If your scaling logic is tied to hardware metrics instead of the actual severity of the event queue, you are just going to burn budget scaling blindly.
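A small sketch may help illustrate why a utilization reading and a queue-based signal can disagree. The `Anomaly` class and the 1-to-5 severity scale are assumptions made for this example, not part of any real telemetry schema.

```python
from dataclasses import dataclass

@dataclass
class Anomaly:
    name: str
    severity: int  # hypothetical scale: 1 (minor) to 5 (critical)

def queue_pressure(queue: list[Anomaly]) -> int:
    """Severity-weighted backlog: one critical event outweighs several minor ones."""
    return sum(a.severity for a in queue)

# The hardware metric is identical in both scenarios below.
gpu_utilization_pct = 90

quiet_queue = [Anomaly("log warning", 1)]
urgent_queue = [Anomaly("log warning", 1), Anomaly("5G slice degradation", 5)]

# Same utilization reading, very different scaling signals.
print(gpu_utilization_pct, queue_pressure(quiet_queue))   # 90 1
print(gpu_utilization_pct, queue_pressure(urgent_queue))  # 90 6
```

A scaler keyed to utilization sees no difference between the two cases, while a scaler keyed to the weighted queue does.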

We have to abandon reactive resource metrics entirely and move to event-driven orchestration.

The Fix – Event-Driven Orchestration:

If standard HPA is broken for this, what is the fix? You have to completely decouple the infrastructure from the workload using strict, event-driven orchestration.

Instead of keeping baseline infrastructure running just to maintain a state, you treat cognitive compute as 100% ephemeral. You don’t scale based on how hard the CPU is working. You scale based on the exact depth and severity of the anomaly queue.

To actually build this, architects need purpose-built event-driven scalers like KEDA (Kubernetes Event-driven Autoscaling). KEDA lets your cluster completely bypass those reactive hardware metrics and listen directly to the network’s data plane.

But how do you avoid the cold-start latency of booting a fresh GPU pod? KEDA addresses this by reacting to the event queue length itself rather than waiting for an existing pod’s CPU to max out. By the time a traditional HPA notices a CPU spike, the system is already overwhelmed. (To solve this exact issue in production, I open-sourced a custom KEDA scaler specifically designed to scrape and react to native GPU metrics, allowing the orchestrator to scale cognitive workloads preemptively. You can view the architecture on GitHub.)

KEDA intercepts the telemetry trigger at the source. When paired with a warm pool of paused GPU nodes and pre-pulled container images, it can bring a pod from zero to active far faster than a cold start, because node provisioning and image pulls are already off the critical path. The infrastructure is anticipating the load based on the queue, not reacting to the stress of it.

Here is what the workflow actually looks like when you do it right:

- The Trigger: Telemetry picks up a severe anomaly, like a sudden 5G slice degradation, and pushes an event straight to a message broker like Kafka.

- The Scale-Up: KEDA intercepts that exact metric and instantly provisions a dedicated, GPU-backed AI pod from a warm standby pool.

- The Execution: The Agentic AI loads into VRAM, figures out the blast radius of the anomaly, and executes a fix, usually by reconciling the state through a GitOps controller.

- The Kill Switch: The absolute millisecond that the event queue clears and the network is stable, the orchestrator aggressively terminates the pod and gives the GPU back to the node pool.
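The four steps above can be sketched as a small in-memory simulation. This is only a sketch: `WarmGpuPool` and `handle_events` are hypothetical names, a Python `deque` stands in for the message broker, and no real KEDA or Kubernetes call is involved.

```python
from collections import deque


class WarmGpuPool:
    """Hypothetical stand-in for a warm pool of paused GPU nodes."""

    def __init__(self, size):
        self.free = size

    def acquire(self):
        if self.free == 0:
            raise RuntimeError("no warm GPU available")
        self.free -= 1

    def release(self):
        self.free += 1


def handle_events(events, pool):
    """Run the four workflow steps and return an audit log of what happened."""
    queue = deque(events)        # 1. Trigger: events arrive from the broker
    log = []
    pod_active = False
    while queue:
        if not pod_active:       # 2. Scale-up: zero to one pod on first event
            pool.acquire()
            pod_active = True
            log.append("scale-up")
        event = queue.popleft()  # 3. Execution: reason about the anomaly
        log.append(f"remediate:{event}")
    if pod_active:               # 4. Kill switch: queue drained, free the GPU
        pool.release()
        log.append("scale-to-zero")
    return log


pool = WarmGpuPool(size=1)
print(handle_events(["slice-degradation"], pool))
# ['scale-up', 'remediate:slice-degradation', 'scale-to-zero']
```

With an empty event list the function returns an empty log and never touches the pool, which is the scale-to-zero property the workflow depends on.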

You only pay the premium GPU tax during moments of active reasoning. The 24/7 idle tax is gone.

Architecting the Scale-to-Zero Core:

To make this scale-to-zero dream a reality, you have to fundamentally change how you handle network observability. The biggest mistake I see architects make is tightly coupling their monitoring tools with their AI execution layer. If your observability stack is running on the same hardware as your AI engine, you are literally wasting premium GPU compute just to watch logs.

You need a strict, physical separation of concerns:

The Watchers (The Lightweight Control Plane):

Your network data plane needs to be monitored by lightweight, CPU-efficient edge collectors like Prometheus or OpenTelemetry. These sit right at the edge, continuously eating millions of telemetry data points and BGP state changes. Because they don’t do any complex reasoning, they run incredibly cheap on standard CPU nodes.

The Thinkers (The Heavyweight Execution Plane):

Your expensive AI models are completely isolated in a separate, GPU-backed node pool that literally defaults to zero instances.

When the Watchers spot an anomaly, they don’t try to fix it. They just fire an alert to KEDA. KEDA then wakes up the Thinkers, spinning up the exact number of GPU pods needed to handle that specific blast radius. By decoupling the watchers from the thinkers, you guarantee that not a single cycle of GPU compute is wasted on baseline monitoring.
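To make the split concrete, here is a minimal sketch of the two roles. Everything in it is an assumption for illustration: the `Sample` fields, the 2% packet-loss threshold, and the rule of one GPU pod per two affected slices are hypothetical, not values taken from any real deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    slice_id: str
    packet_loss_pct: float


def watch(samples, loss_threshold_pct=2.0):
    """Watcher: cheap CPU-side filter that only flags breaching slices."""
    return sorted({s.slice_id for s in samples
                   if s.packet_loss_pct > loss_threshold_pct})


def thinkers_needed(alerts, slices_per_pod=2):
    """Thinkers: GPU pods to wake; zero when the Watchers raised no alerts."""
    return -(-len(alerts) // slices_per_pod)  # ceiling division


healthy = [Sample("slice-a", 0.1), Sample("slice-b", 0.3)]
degraded = healthy + [Sample("slice-c", 4.2), Sample("slice-d", 7.5),
                      Sample("slice-e", 3.1)]

print(thinkers_needed(watch(healthy)))   # 0
print(thinkers_needed(watch(degraded)))  # 2
```

The Watcher does a threshold comparison and nothing more, so it has no reason to run on GPU hardware. The Thinker count is derived purely from the alerts it emits.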

The Bottom Line:

Autonomous telecom networks are going to happen. But trying to brute-force the infrastructure provisioning is a fast track to bankrupting your IT department. The smartest Agentic AI in the world is useless if you can’t afford the cloud bill to run it.

Furthermore, this isn’t just about protecting the IT budget. Running idle GPUs 24/7 creates a massive, unnecessary carbon footprint. By enforcing a scale-to-zero architecture, telcos can drastically reduce the energy consumption of their autonomous networks, turning a massive ESG liability into a sustainable operational model.

Autonomy is no longer just a software engineering problem. It is an infrastructure balancing act. If Agentic AI is going to survive in the telecom core, we have to ditch legacy threshold scaling and embrace strict, event-driven orchestration.

Tools like KEDA give us the ability to build networks that are both cognitively brilliant and financially ruthless. We can spin up massive intelligence at the exact millisecond of failure and scale right back to zero the moment the network is healed.

References and Further Reading:

- Unlocking Energy Saving in Telecom Networks: A Path to a Sustainable Future – A deep dive into the operational and ESG mandates driving energy efficiency in modern telecom infrastructure.

- KEDA Documentation: Kubernetes Event-driven Autoscaling – Technical specifications for decoupling workload scaling from standard CPU/Memory metrics.

- keda-gpu-scaler – An open-source custom KEDA scaler I developed to enable event-driven autoscaling specifically tied to native GPU telemetry and queue depth.

Building and Operating a Cloud Native 5G SA Core Network

How Network Repository Function Plays a Critical Role in Cloud Native 5G SA Network

HPE Aruba Launches “Cloud Native” Private 5G Network with 4G/5G Small Cell Radios

…………………………………………………………………………………………….

About the Author:

Pavan Madduri is a Cloud-Native Architect, CNCF Golden Kubestronaut, and active IEEE researcher specializing in enterprise infrastructure automation, Agentic SREs, and Kubernetes networking. He designs scalable, zero-trust cloud environments and frequently writes about the intersection of AI governance and cloud-native infrastructure.

Connect with Pavan Madduri on [LinkedIn].

Disclaimer: The author acknowledges the use of AI-assisted tools for structural formatting, language refinement, and copyediting during the drafting of this article. The core architectural concepts, technical opinions, and engineering strategies remain entirely original.

ABI Research: mobile network spending to fall 29% in 2026-31

According to ABI Research, global mobile network infrastructure spending is projected to peak at ~$92 billion in 2026–2027 before falling 29% to $65 billion by 2031. This decline reflects the maturation of 5G deployments and a shift in operator focus toward 6G, with reduced demand for traditional Radio Access Network (RAN) equipment.
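As a quick sanity check on these figures, the short Python calculation below applies the 29% decline to the $92 billion peak and derives an implied average annual rate. The four-year span (a 2027 peak to 2031) is an assumption made for the example. ABI Research gives the peak as 2026–2027 and does not state an annual rate.

```python
peak_billion = 92.0   # approximate peak spending, from the forecast
decline = 0.29        # total decline from the peak, from the forecast
years = 4             # assumption: peak year 2027 through 2031

projected = peak_billion * (1 - decline)
annual_rate = (1 - decline) ** (1 / years) - 1

print(f"2031 spending: ~${projected:.1f}B")         # ~$65.3B
print(f"implied annual change: {annual_rate:.1%}")  # -8.2%
```

The result is consistent with the $65 billion cited for 2031 and corresponds to an average decline of about 8% per year under the four-year assumption.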

“5G deployments have seen significant growth over the years, with industry estimates placing the current number of launched 5G networks at over 350 globally,” said Matthias Foo, Principal Analyst at ABI Research. “By the end of 2025, global 5G population coverage is expected to reach 60%, driven in part by rapid deployments in India, where more than 500,000 5G Base Transceiver Stations have been installed within three years.”

As 5G rollouts mature, RAN equipment vendors are beginning to report slower growth. Even as advanced deployments such as 5G-Advanced emerge in markets like the United States, China, and Saudi Arabia, overall infrastructure demand is stabilizing following years of rapid expansion.

Recent financial results from major vendors reinforce this trend.

- Ericsson reported flat RAN growth in 2025 and expects a similar outlook for 2026.

- Nokia also posted flat performance in its Mobile Networks business.

- ZTE reported a 5.9% Year-on-Year decline in its Carriers’ Networks segment in the first half of 2025.

Following the release of their respective financial reports, both Ericsson and Nokia said they expect the RAN market to be more or less flat this year. Nokia is focusing on data center networking, while Ericsson is concentrating on mission critical communications, defense, and enterprise networking.

ABI says some near-term growth is still expected in 2026, supported by ongoing deployments in markets such as Malaysia, India, Argentina, Peru, and Vietnam.

Open RAN adoption is forecast to grow at a 26.5% CAGR through 2031, accounting for approximately 23% of the installed base. However, despite high-profile announcements from operators and vendors, the market is still expected to remain largely dominated by incumbent suppliers rather than by the new entrants that were initially expected to gain share.

These findings are from ABI Research’s Indoor, Outdoor, and IoT Network Infrastructure market data report, part of its 5G, 6G & Open RAN research service. The report provides detailed forecasts, market share analysis, and insights into key infrastructure investment trends.

Research Highlights:

- mMIMO market tracker across regions and by configurations.

- DAS revenue forecasts by region, technology, and verticals.

- Small cell market tracker for both indoor and outdoor infrastructure.

………………………………………………………………………………………………

Dell’Oro Group is slightly less pessimistic than ABI Research. In January, it forecast that global RAN revenues will grow at a 1% CAGR for the remainder of the 2020s, supported by ongoing 5G investments. Stefan Pongratz said at the time, though, that downside risks still outweigh the upside potential, the most notable being slowing data growth.

References:

Dell’Oro: RAN Market Stabilized in 2025 with 1% CAG forecast over next 5 years; Opinion on AI RAN, 5G Advanced, 6G RAN/Core risks

Dell’Oro: RAN market stable, Mobile Core Network market +14% Y/Y with 72 5G SA core networks deployed

RAN Silicon Rethink- Part II; vRAN and General-Purpose Compute

Will “AI at the Edge” transform telecom or be yet another telco monetization failure?

Ericsson goes with custom silicon (rather than Nvidia GPUs) for AI RAN

Omdia on resurgence of Huawei: #1 RAN vendor in 3 out of 5 regions; RAN market has bottomed

Omdia: Huawei increases global RAN market share due to China hegemony

Dell’Oro Group: RAN Market Grows Outside of China in 2Q 2025

Dell’Oro: AI RAN to account for 1/3 of RAN market by 2029; AI RAN Alliance membership increases but few telcos have joined

Australia’s NBN and Nokia demonstrate multi-generation optical technologies concurrently over existing FTTP infrastructure

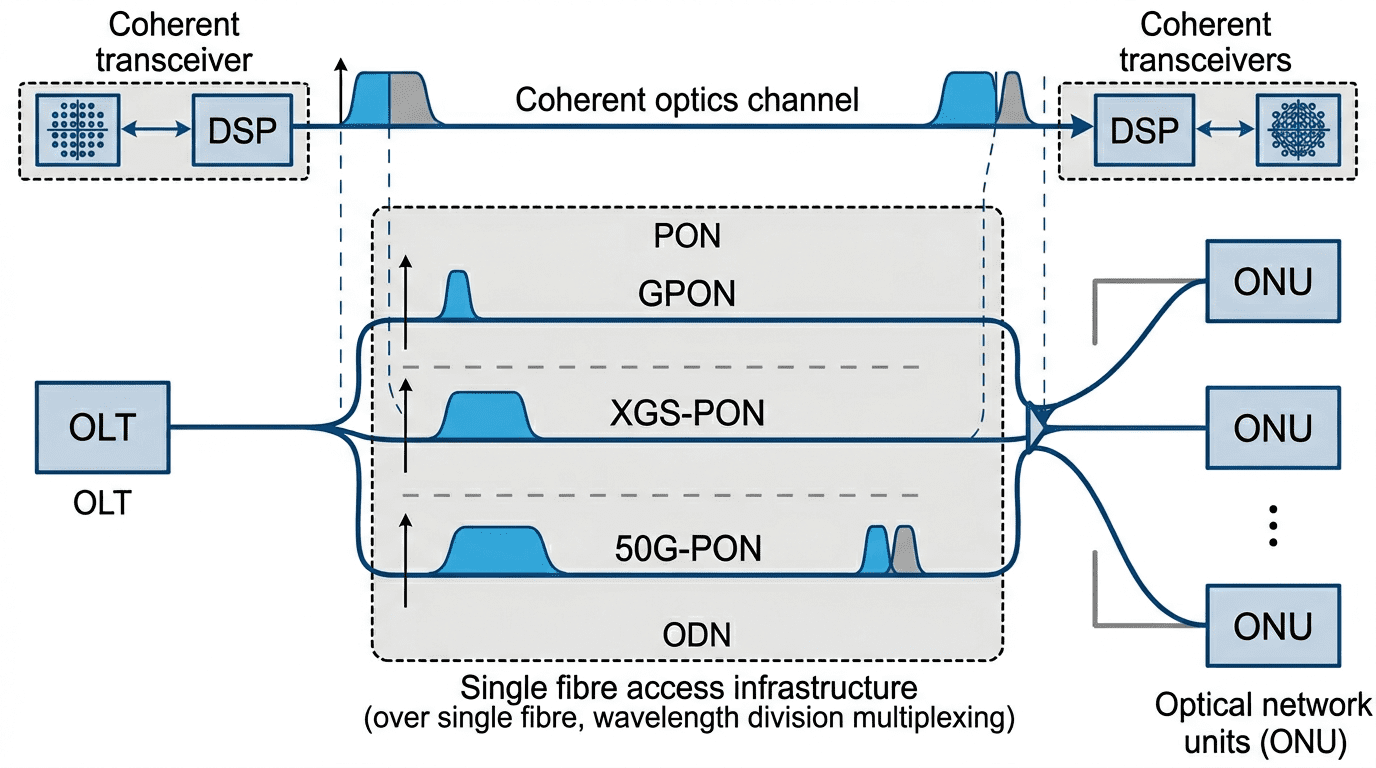

NBN Co, in collaboration with Nokia, has successfully conducted a laboratory demonstration of multiple generations of optical access and coherent transmission technologies operating concurrently over its existing Fiber‑to‑the‑Premises (FTTP) network. The technical trial validates the long‑term scalability of NBN Co’s national full‑fibre infrastructure and its capacity to accommodate the sustained growth of residential, enterprise, and industrial data demand anticipated over the coming decades.

The “Supercharging Fibre” trial, presented at the Broadband Forum Spring Member Meeting—held in Australia for the first time and hosted by NBN Co—demonstrated aggregate transmission rates exceeding 230 Gbit/s using multiple optical technologies over a single physical fiber link in a controlled laboratory environment. The experimental setup also established a pathway toward achieving terabit‑class capacities in future trials through the evolution of optical modulation formats and channel aggregation techniques.

A key outcome of the trial was the successful integration of coherent optical transmission with multiple generations of passive optical network (PON) technologies—GPON, XGS‑PON, and 50G‑PON—operating simultaneously over the same fiber infrastructure currently in service across Australia. Coherent optics, traditionally deployed within metropolitan, core, and data center interconnect networks, employ advanced modulation and digital signal processing to deliver extended reach, low latency, and high spectral efficiency. Their introduction into the access network domain represents a significant step toward the convergence of access and transport technologies, offering an efficient route to enhanced capacity and service flexibility without extensive physical network replacement.

The demonstration (see illustration below) underscores the technical viability of leveraging existing passive optical infrastructure to support future bandwidth requirements driven by the proliferation of cloud computing, immersive digital experiences, artificial intelligence applications, and industrial IoT systems. The results further illustrate the potential of FTTP systems to evolve into a highly scalable, future‑ready broadband platform capable of sustaining national connectivity objectives.

Image Credit: Perplexity.ai

…………………………………………………………………………………………………………………………………………………………………………………………………………………………………………..

By 31 December 2025, more than 1 million customers had transitioned from copper‑based services to high‑speed full‑fiber connections, positioning FTTP as NBN Co’s dominant fixed‑line technology at approximately 35% of total connections. The company achieved its commitment to enable 10 million premises, representing about 90% of the NBN fixed‑line footprint, to order multi‑gigabit‑capable wholesale broadband services. Ongoing upgrade activities encompass over 228,000 premises, as part of an initiative to extend full‑fiber access to 95% of the remaining ~622,000 copper‑served locations by 2030.

These developments reflect NBN Co’s strategic focus on access network modernization and underscore the continuing evolution of optical access technologies toward achieving the performance, flexibility, and resilience required to support Australia’s transition to a digital and cloud‑centric economy.

About NBN Co:

NBN Co was established in 2009 by the Commonwealth of Australia as a Government Business Enterprise (GBE) with a clear direction – to design, build and operate a wholesale broadband access network for Australia.

And we’ve done just that – creating a network that criss-crosses a country, and allowing internet retailers to provide reasonably priced broadband services to consumers and businesses.

The network is the digital backbone of Australia and is constantly evolving to keep communities and businesses connected and our nation productive.

References:

https://www.nbnco.com.au/corporate-information/about-nbn-co

https://www.broadband-forum.org/events/spring-2026-member-meeting/

Dell’Oro: Optical Transport Systems market +15% year-over-year in 3Q2025 driven by Cloud Service Providers

AI wireless and fiber optic network technologies; IMT 2030 “native AI” concept

Point Topic: FTTP broadband subs to reach 1.12bn by 2030 in 29 largest markets

Nokia and Hong Kong Broadband Network Ltd deploy 25G PON

Nokia’s launches symmetrical 25G PON modem

Google Fiber planning 20 Gig symmetrical service via Nokia’s 25G-PON system

Ericsson and Forschungszentrum Jülich MoU for neuromorphic computing use in 5G and 6G

Ericsson and major European research center Forschungszentrum Jülich are collaborating to develop technologies for the continued evolution of 5G and for the future introduction of 6G (IMT 2030) networks. The organizations signed a Memorandum of Understanding (MoU) on March 24, 2026. The project aims to leverage JUPITER, Europe’s first “exascale” supercomputer, to design and test new artificial intelligence solutions for the complex demands of 6G. The partnership will explore AI models and methods to enhance Ericsson’s core network, network management, and Radio Access Network (RAN).

Important objectives include exploring ultra-efficient, “brain-inspired” computing approaches like neuromorphic computing [1.] to handle intense network tasks and strengthen Europe’s digital infrastructure. Modern mobile networks rely heavily on Massive MIMO, a technology where many devices communicate simultaneously via numerous antennas. By exploring novel system architecture approaches like neuromorphic computing, researchers aim to speed up optimization and reduce energy use versus classical methods.

Note 1. Neuromorphic computing is a brain-inspired engineering approach that mimics biological neural networks using analog or digital electronic circuits. It combines memory and processing in one place—similar to neurons and synapses—to achieve extreme energy efficiency, speed, and learning capabilities, moving beyond the limitations of traditional computing architecture. Unlike traditional AI that uses continuous data, neuromorphic systems use “spikes”—discrete events in time—to mimic how neurons communicate. Such systems only consume significant power when processing data (“spiking”), making them ideal for ultra-low-power edge computing, unlike traditional computers that are always on. They can process complex, real-world data (like vision or touch) much faster and with far less power than traditional computers.
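The spiking behavior described in Note 1 can be illustrated with a leaky integrate-and-fire neuron, a common textbook model. This is a simplified sketch, not the Ericsson or Jülich design, and the `threshold` and `leak` values are arbitrary choices for the example.

```python
def lif_spikes(inputs, threshold=1.0, leak=0.9):
    """Return the time steps at which a leaky integrate-and-fire neuron spikes."""
    potential = 0.0
    spikes = []
    for t, current in enumerate(inputs):
        potential = potential * leak + current  # leak, then integrate input
        if potential >= threshold:
            spikes.append(t)   # emit a discrete event in time
            potential = 0.0    # reset after firing
    return spikes


print(lif_spikes([0.0, 0.6, 0.6, 0.0, 0.0, 1.2]))  # [2, 5]
print(lif_spikes([0.0] * 10))                      # []
```

The second call shows the property the note highlights: with no input there are no spikes, so an event-driven system has nothing to process while idle.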

…………………………………………………………………………………………………………………………………………………………………………………………..

The alliance will study operational strategies like heat recovery to boost energy efficiency in HPC and cloud deployments. The collaboration involves systematic benchmarking of AI methods – including the application of neuromorphic AI – across Ericsson products to assess execution speed, scalability to large datasets, information retention, and storage efficiency. In addition, the partnership will provide insights into the feasibility of cloud strategies based on concepts from the EuroHPC ecosystem, which is establishing a world-class supercomputing infrastructure.

Professor Laurens Kuipers, a member of the Executive Board of Forschungszentrum Jülich, said: “This collaboration has the potential to make a significant contribution to a more sustainable digital future. By combining our excellence in high-performance computing and our research into novel, neuro-inspired computing approaches with Ericsson’s expertise in telecommunications, we aim to develop more energy-efficient network solutions and strengthen a sovereign European digital infrastructure.”

Image Credit: Forschungszentrum Jülich / Kurt Steinhausen

……………………………………………………………………………………………………………………………………….

Nicole Dinion, Head of Architecture and Technology, Cloud Software and Services, Ericsson said: “The future of mobile networks is deeply intertwined with AI and the need for unparalleled energy efficiency. Our collaboration with Forschungszentrum Jülich, for years a global leader in supercomputing and applied physics, combines their research and computing power with our expertise in all domains of telecoms technology. We will explore architectures that define the next generation of telecommunication.”

The collaboration covers several areas of research:

- AI methods for Ericsson products across the full portfolio: systematic benchmarking of approaches to assess execution speed, scalability to large datasets, information retention, and storage efficiency. Where security and commercial conditions permit, the teams may also use JUPITER for large-scale model training, leveraging its compute resources.

- Energy-efficient computing for AI inference at the radio and edge: developing and prototyping highly efficient solutions for tasks such as radio channel estimation and Massive MIMO – a key technology in modern mobile networks, in which many devices communicate simultaneously via numerous antennas. This includes exploring novel system architecture approaches like neuromorphic computing (e.g., memristors) to speed up optimization and reduce energy use versus classical methods.

- HPC and cloud architectures and operations for AI: researching and implementing Modular Supercomputing Architecture (MSA) concepts from exascale work at Forschungszentrum Jülich – in particular, at the Jülich Supercomputing Centre (JSC) – and studying operational strategies, such as heat recovery, to boost energy efficiency in HPC and cloud deployments.

The collaboration will provide insights into the feasibility of cloud strategies based on concepts from the EuroHPC ecosystem, which is establishing a world-class supercomputing infrastructure with leading European centers such as the JSC.

ABOUT FORSCHUNGSZENTRUM JÜLICH:

Shaping change: This is what drives us at Forschungszentrum Jülich. As a member of the Helmholtz Association with more than 7,000 employees, we conduct research into the possibilities of a digitized society, a climate-friendly energy system, and a resource-efficient economy. We combine natural, life, and engineering sciences in the fields of information, energy, and the bioeconomy with specialist expertise in simulation and data science. www.fz-juelich.de

References:

https://www.ericsson.com/en/blog/2026/1/ai-future-will-be-defined-by-the-intelligent-digital-fabric

https://www.ibm.com/think/topics/neuromorphic-computing

China vs U.S.: Race to Generate Power for AI Data Centers as Electricity Demand Soars

AI infrastructure spending boom: a path towards AGI or speculative bubble?

Big tech spending on AI data centers and infrastructure vs the fiber optic buildout during the dot-com boom (& bust)

Will billions of dollars big tech is spending on Gen AI data centers produce a decent ROI?

Expose: AI is more than a bubble; it’s a data center debt bomb

Sovereign AI infrastructure for telecom companies: implementation and challenges

Analysis: Cisco, HPE/Juniper, and Nvidia network equipment for AI data centers

Networking chips and modules for AI data centers: Infiniband, Ultra Ethernet, Optical Connections

Custom AI Chips: Powering the next wave of Intelligent Computing

Groq and Nvidia in non-exclusive AI Inference technology licensing agreement; top Groq execs joining Nvidia

Analysis and Impact of Blockbuster FCC ban on foreign made WiFi routers

On March 23rd, the Federal Communications Commission (FCC) updated its Covered List to prohibit the sale of foreign-made consumer-grade (WiFi) routers in the U.S. The FCC’s Covered List is a list of communications equipment and services that are deemed to pose an unacceptable risk to the national security of the U.S. or the safety and security of U.S. persons. This FCC decision follows a determination by an Executive Branch interagency body, which concluded those devices pose unacceptable risks to U.S. national security and the safety of its citizens. The new FCC restriction applies strictly to new foreign-made router models, meaning retailers can continue marketing previously approved units and consumers can operate their existing equipment without interruption.

Impact:

TP-Link, Netgear, and Asus are currently among the top-selling Wi-Fi router brands in the U.S. consumer market. Estimates for early 2026 indicate that TP-Link alone holds approximately 35% of the U.S. consumer router market share, while Netgear and Asus collectively account for another 25%. The TP-Link Archer AXE75 is frequently rated the best router for most users due to its Wi-Fi 6E speed and reasonable price.

AXE5400 Tri-Band Gigabit Wi-Fi 6E Router

…………………………………………………………………………………………………………………………………

Linksys and Ubiquiti are American-based companies, but their hardware is produced by contract manufacturers overseas in locations like China, Vietnam, and Taiwan. Similarly, Amazon eero and Google Nest mesh routers are not made in the U.S.

Hence, these companies’ ability to sell new WiFi router models in the U.S. now faces strict regulatory hurdles.

Quotes:

FCC Chairman Brendan Carr said: “I welcome this Executive Branch national security determination, and I am pleased that the FCC has now added foreign-produced routers, which were found to pose an unacceptable national security risk, to the FCC’s Covered List. Following President Trump’s leadership, the FCC will continue to do our part in making sure that US cyberspace, critical infrastructure, and supply chains are safe and secure.”

Bogdan Botezatu, director of Threat Research at cybersecurity firm Bitdefender, says this ban is a step to harden the cybersecurity readiness of U.S. households, given ongoing geopolitical tensions. “Consumer routers sit at the edge of every home network, which makes them an attractive target and a strategic risk if compromised at scale,” he says. Asked whether the threat is genuine, Botezatu says the risk is real, though there’s no easy way to prove intent. “[Internet of Things] devices, including routers, are a weak point across the internet.”

“Virtually all (WiFi) routers are made outside the United States, including those produced by US-based companies like TP-Link, which manufactures its products in Vietnam,” a spokesperson from TP-Link tells WIRED. “It appears that the entire router industry will be impacted by the FCC’s announcement concerning new devices not previously authorized by the FCC.”

- Reduced Product Availability: New, high-performance routers manufactured outside the U.S. will not receive the necessary approval to be imported or sold, restricting future consumer choices.

- Higher Costs: The ruling “has the potential to significantly disrupt the U.S. consumer router market,” likely resulting in increased prices for consumers as companies grapple with new regulatory requirements.

- Shift in Manufacturing: Router manufacturers, including those targeting the U.S. market, will likely need to shift production to the U.S. to satisfy security concerns and bypass the ban, says PC Magazine.

- Security Focus: The ban targets vulnerabilities in foreign hardware and firmware.

- No Impact on Existing Devices: Consumers can continue to use routers they currently own.

References:

https://www.wired.com/story/us-government-foreign-made-router-ban-explained/

U.S. Weighs Ban on Chinese made TP-Link router and China Telecom

China backed Volt Typhoon has “pre-positioned” malware to disrupt U.S. critical infrastructure networks “on a scale greater than ever before”

WSJ: T-Mobile hacked by cyber-espionage group linked to Chinese Intelligence agency

Trump and FCC crack down on China telecoms; supply chain security at risk

RAN Silicon Rethink- Part II; vRAN and General-Purpose Compute

Overview:

The global Radio Access Network (RAN) market has experienced a significant decline, dropping by nearly $10 billion in annual product revenue between 2022 and 2024, from roughly $45 billion to about $35 billion by the end of last year (source: Omdia).

- As the IEEE Techblog previously reported, Nokia is gradually moving away from its long-held reliance on custom RAN baseband (BBU) silicon from Marvell [1.] as it pivots to use Nvidia’s GPUs, as part of the latter’s $1B investment in Nokia in October 2025.

Note 1. Nokia uses Marvell RAN silicon in its 5G ReefShark portfolio. The companies collaborate to develop custom OCTEON SoC (System-on-a-Chip) and Infrastructure Processors, which are used to boost 5G AirScale base station performance.

- Samsung has long partnered with Marvell Technology on purpose-built 5G baseband silicon. However, rising development costs and a contracting market for proprietary RAN hardware are reshaping that strategy. The economic case for new, custom RAN chipsets is becoming weaker as operators accelerate network virtualization.

- In sharp contrast, Ericsson continues to defend its investment in proprietary silicon architectures while maintaining a flexible approach for operators that prefer virtualized or cloud RAN implementations running on standard central processing units (CPUs). At present, those solutions rely exclusively on Intel processors, though Ericsson notes its software is being engineered with portability in mind to support future hardware diversity.

Samsung’s Silicon Strategy:

Among RAN equipment vendors accessible to operators across North America and much of Europe, Samsung now stands as the principal alternative to the two Nordic RAN equipment suppliers, following the exclusion of Huawei and ZTE from many Western markets.

The South Korean conglomerate has become the global frontrunner in virtualized RAN (vRAN) deployments. Whereas custom silicon once dominated RAN infrastructure design, Samsung’s strategy has notably inverted that paradigm: vRAN is now its mainstream offering, and purpose-built hardware has moved to the periphery.

By the close of last year, Samsung reported supporting approximately 53,000 vRAN sites worldwide — a significant share of which lies within Verizon’s U.S. footprint. The company also disclosed major European developments, including Vodafone’s planned rollout across Germany and other markets, which will rely entirely on vRAN technology. For Samsung, discussions of bespoke, purpose-built 5G infrastructure have become increasingly rare.

According to Alok Shah, Vice President of Network Strategy at Samsung Networks, this transition reflects both the rising cost of developing custom silicon and the performance enhancements achieved by general-purpose CPU platforms.

“We’re still selling our purpose-built BBUs to a number of customers, but I do believe that it’s a matter of time,” Shah told Light Reading during MWC Barcelona, when asked if Samsung envisions an eventual phaseout of its proprietary baseband hardware portfolio.

Virtualized RAN Gains Momentum:

Transitioning to virtualized RAN (vRAN) allows network equipment vendors to capitalize on the scale economies of commercial data-center silicon. Samsung has established commercial vRAN contracts with Verizon and Vodafone, reflecting growing operator confidence in software-defined architectures.

“Virtual RAN performance has reached parity,” Shah said. “I know not all of our competitors feel that way, but that’s certainly how we feel. And the cost of building that modem is pretty high, even for a company like Samsung that’s really good at semiconductors,” he added.

Intel’s Granite Rapids Xeon platform exemplifies this shift to vRAN. The processor’s increased core density enables operators to cut hardware footprints; in many configurations, a single server can now support workloads that previously required two. Several network operators have confirmed this performance improvement during field evaluations.

Samsung and Ericsson continue to explore additional CPU suppliers. AMD’s latest multicore x86 processors offer up to 84 cores, compared with 72 in Intel’s Granite Rapids. However, offloading Forward Error Correction (FEC)—one of the most compute-intensive RAN processes—remains a challenge. Intel’s vRAN Boost feature integrates a dedicated hardware accelerator for FEC, while AMD currently lacks a direct equivalent.

Samsung has also evaluated Arm-based platforms, which increasingly support efficient software migration from x86. Nvidia’s Grace CPU, built on Arm architecture, has emerged as a potential candidate, especially when paired with its GPUs for selective Layer 1 acceleration.

Samsung’s roadmap aligns with a gradual and selective introduction of GPU acceleration. The company demonstrated GPU-based beamforming optimization during MWC, illustrating how AI can refine radio energy targeting. However, Samsung executives maintain that the latest Intel CPUs also provide sufficient capacity to host AI inference workloads directly. “Granite Rapids has plenty of capacity to support AI algorithms on-platform,” noted Shah.

While Nokia is building a GPU-compatible Layer 1 to accelerate computationally intensive baseband functions—including FEC—Samsung’s approach appears incrementally narrower, focusing on targeted AI for RAN optimization rather than complete GPU offload. GPUs may ultimately support AI at the Edge applications—so-called AI and RAN—where telecom operators leverage deployed GPUs for latency-sensitive inference services.

The degree to which such applications will reside within RAN sites remains uncertain. Some operators suggest that edge inference may instead remain within core network clusters that can meet latency requirements more efficiently.

Samsung’s architecture already supports GPU integration through commercial off-the-shelf (COTS) servers from manufacturers such as HPE, Dell, and Supermicro—aligning with broader cloud-native RAN trends. “It’s an off-the-shelf card that can be integrated directly into standard servers,” said Shah.

For now, Intel remains Samsung’s primary compute partner for commercial vRAN products. “We haven’t had an instance where customers are pushing for a second platform—it’s primarily a matter of commercial interest,” Shah added. The direction is clear: Samsung, like other leading vendors, is prioritizing scalable, general-purpose compute over bespoke 5G silicon as vRAN deployment accelerates.

……………………………………………………………………………………………………………………………………………………………………

References:

https://www.lightreading.com/5g/samsung-eyes-death-of-purpose-built-5g-but-has-no-ai-ran-fears