Cloud networking

The Financial Trap of Autonomous Networks: Scaling Agentic AI in the Telecom Core

By Pavan Madduri with Ajay Lotan Thakur

The telecom industry wants autonomous, self-healing networks, but nobody is looking at the GPU bill. Running Agentic AI 24/7 “just in case” will bankrupt your IT department and ruin your ESG goals. The only way to survive the autonomous era is ruthless, event-driven orchestration that scales cognitive compute to absolute zero.

Introduction – The Compute Crisis:

The Compute Crisis Nobody is Talking About

Everyone in telecom right now is obsessed with “self-healing” autonomous networks. The vendor pitch sounds amazing. Just drop in some Agentic AI, let it watch your data plane, and watch it fix anomalies without a human ever touching a keyboard. But there’s a massive trap hiding underneath all that hype, and enterprise architects are completely ignoring it. It comes down to the raw physics of AI compute.

Unlike your standard microservices, which just run deterministic, compiled code on cheap CPU cycles, Agentic AI needs massive foundation models. To actually reason through a network failure, these models have to load gigabytes of weights into Video RAM and generate tokens. You need dedicated GPUs for this. We aren’t talking about cheap, stateless API calls here. These are the most expensive, power-hungry workloads in your entire datacenter.

If a telco tries to run an autonomous core the old-fashioned way by keeping high-end GPU nodes spinning 24/7 just in case a BGP route flaps, their cloud bill is going to wipe out any operational savings the AI was supposed to deliver.

The reality is that autonomy is no longer just a software problem. It’s a financial one. The telcos that actually win will not be the ones with the smartest AI. They will be the ones who figure out how to build a strict “scale-to-zero” environment. They need to spin up that expensive cognitive compute exactly when it is needed, and kill it the exact second the job is done.
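To make the financial argument concrete, here is a rough back-of-the-envelope sketch. Every number in it (the hourly GPU rate, node counts, incident volume, minutes per incident) is a hypothetical placeholder rather than a real quote or measurement, and it ignores what a warm standby pool would cost.

```python
HOURS_PER_MONTH = 730  # average number of hours in a month


def always_on_cost(gpu_nodes: int, hourly_rate: float) -> float:
    """Monthly cost of keeping GPU nodes running 24/7."""
    return gpu_nodes * hourly_rate * HOURS_PER_MONTH


def scale_to_zero_cost(incidents_per_month: int, minutes_per_incident: float,
                       gpu_nodes_per_incident: int, hourly_rate: float) -> float:
    """Monthly cost when GPU nodes are billed only while an incident is handled."""
    active_hours = incidents_per_month * minutes_per_incident / 60
    return active_hours * gpu_nodes_per_incident * hourly_rate


# Hypothetical inputs, chosen only for illustration.
RATE = 30.0  # assumed USD per GPU-node hour

idle = always_on_cost(gpu_nodes=4, hourly_rate=RATE)
burst = scale_to_zero_cost(incidents_per_month=200, minutes_per_incident=6,
                           gpu_nodes_per_incident=2, hourly_rate=RATE)

print(f"always-on:     ${idle:,.0f} per month")
print(f"scale-to-zero: ${burst:,.0f} per month")
```

Plug in your own rates and incident volumes. The point is only that the always-on bill scales with calendar hours, while the scale-to-zero bill scales with incident minutes.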

Why Traditional Auto-scaling is Broken for AI:

When platform engineers first see the compute costs of running these AI agents, their first instinct is usually just to slap standard Kubernetes Horizontal Pod Autoscaling (HPA) on the cluster and call it a day. But standard HPA was built for stateless web servers, not massive cognitive engines. If you try to use it for Agentic AI in a telecom core, you’re going to fail for two big reasons.

The Cold-Start Penalty: Traditional autoscaling is entirely reactive. It sits around waiting for a CPU to hit 80% before it decides to scale up. In telecom, SLAs are measured in sub-milliseconds. If you wait for an anomaly to spike your CPU, then provision a new GPU node, pull a massive AI container image, and load the model weights into VRAM, you are talking about minutes of delay. By the time your AI agent actually wakes up to fix the problem, you have already breached your SLA.

CPU Utilization is a Liar: For AI workloads, standard hardware metrics are completely misleading. A GPU could be pegged at 90% utilization just thinking through a minor log warning, while a massive, critical network failure is stuck waiting in the queue. If your scaling logic is tied to hardware metrics instead of the actual severity of the event queue, you are just going to burn budget scaling blindly.

We have to abandon reactive resource metrics entirely and move to event-driven orchestration.
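The cold-start penalty is easy to see once you add up the stages. The sketch below uses made-up stage timings and a made-up response budget purely for illustration. They are assumptions, not measurements from any real cluster.

```python
def startup_seconds(stages: dict) -> float:
    """Total time before the AI agent can start reasoning."""
    return sum(stages.values())


def breaches_budget(stages: dict, budget_seconds: float) -> bool:
    """True if the startup path alone already exceeds the response budget."""
    return startup_seconds(stages) > budget_seconds


# Hypothetical stage timings in seconds (placeholders, not measurements).
COLD_START = {
    "provision GPU node": 180.0,
    "pull container image": 90.0,
    "load model weights into VRAM": 45.0,
}
WARM_POOL = {
    "resume paused GPU node": 5.0,        # node already exists
    "load model weights into VRAM": 20.0  # image already pre-pulled
}

BUDGET = 60.0  # assumed response budget in seconds

for name, path in (("cold start", COLD_START), ("warm pool", WARM_POOL)):
    print(name, startup_seconds(path), "breach" if breaches_budget(path, BUDGET) else "ok")
```

Whatever your real numbers are, the structure is the same: every stage you can remove ahead of time comes straight off the time to first action.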

The Fix – Event-Driven Orchestration:

If standard HPA is broken for this, what is the fix? You have to completely decouple the infrastructure from the workload using strict, event-driven orchestration.

Instead of keeping baseline infrastructure running just to maintain a state, you treat cognitive compute as 100% ephemeral. You don’t scale based on how hard the CPU is working. You scale based on the exact depth and severity of the anomaly queue.
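As a minimal sketch of what "scale on depth and severity" could mean, the function below turns a severity-weighted queue into a pod count. The severity labels, the weights, the per-pod capacity and the pod cap are all hypothetical choices for illustration, not values from KEDA or from any production system.

```python
import math

# Hypothetical weights: informational events never justify a GPU pod.
SEVERITY_WEIGHT = {"info": 0, "warning": 1, "critical": 5}


def desired_gpu_pods(anomaly_queue, load_per_pod=5, max_pods=8):
    """Map severity-weighted queue depth to a GPU pod count (0 when idle)."""
    load = sum(SEVERITY_WEIGHT[event["severity"]] for event in anomaly_queue)
    if load == 0:
        return 0  # nothing worth reasoning about: stay at zero
    return min(max_pods, math.ceil(load / load_per_pod))


pending = [
    {"id": "slice-7-degraded", "severity": "critical"},
    {"id": "bgp-flap-edge-3", "severity": "warning"},
    {"id": "log-noise", "severity": "info"},
]
print(desired_gpu_pods(pending))  # weighted load 6, capacity 5 per pod
```

Note that hardware utilization never appears in the calculation. An empty or purely informational queue yields zero pods no matter how busy any GPU happens to be.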

To actually build this, architects need purpose-built event-driven scalers like KEDA (Kubernetes Event-driven Autoscaling). KEDA lets your cluster completely bypass those reactive hardware metrics and listen directly to the network’s data plane.

But how do you avoid the cold-start latency of booting a fresh GPU pod? KEDA solves this by reacting to the event queue length itself rather than waiting for an existing pod’s CPU to max out. By the time a traditional HPA notices a CPU spike, the system is already overwhelmed. (To solve this exact issue in production, I open-sourced a custom KEDA scaler specifically designed to scrape and react to native GPU metrics, allowing the orchestrator to scale cognitive workloads preemptively. You can view the architecture on [GitHub].)

KEDA intercepts the telemetry trigger at the source. When paired with a warm pool of paused GPU nodes and pre-pulled container images, KEDA can scale a pod from zero to active in milliseconds. The infrastructure is anticipating the load based on the queue, not reacting to the stress of it.

Here is what the workflow actually looks like when you do it right:

- The Trigger: Telemetry picks up a severe anomaly, like a sudden 5G slice degradation, and pushes an event straight to a message broker like Kafka.

- The Scale-Up: KEDA intercepts that exact metric and instantly provisions a dedicated, GPU-backed AI pod from a warm standby pool.

- The Execution: The Agentic AI loads into VRAM, figures out the blast radius of the anomaly, and executes a fix, usually by reconciling the state through a GitOps controller.

- The Kill Switch: The absolute millisecond that the event queue clears and the network is stable, the orchestrator aggressively terminates the pod and gives the GPU back to the node pool.

You only pay the premium GPU tax during moments of active reasoning. The 24/7 idle tax is gone.
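The workflow above can be sketched as a KEDA ScaledObject with a Kafka trigger and a floor of zero replicas. The snippet builds the manifest as a Python dictionary and prints it as JSON, which Kubernetes accepts alongside YAML. The Deployment name, topic, consumer group and broker address are hypothetical, and the interval values are illustrative rather than recommended.

```python
import json


def build_scaled_object(deployment, topic, consumer_group, brokers, max_pods=4):
    """Build a KEDA ScaledObject that scales a Deployment on Kafka consumer lag."""
    return {
        "apiVersion": "keda.sh/v1alpha1",
        "kind": "ScaledObject",
        "metadata": {"name": f"{deployment}-scaler"},
        "spec": {
            "scaleTargetRef": {"name": deployment},
            "minReplicaCount": 0,        # scale to zero when the queue is empty
            "maxReplicaCount": max_pods,
            "pollingInterval": 5,        # seconds between trigger checks
            "cooldownPeriod": 30,        # seconds of quiet before returning to zero
            "triggers": [{
                "type": "kafka",
                "metadata": {            # trigger metadata values are strings
                    "bootstrapServers": brokers,
                    "consumerGroup": consumer_group,
                    "topic": topic,
                    "lagThreshold": "1",
                },
            }],
        },
    }


# All names below are hypothetical placeholders.
manifest = build_scaled_object(
    deployment="anomaly-agent",
    topic="network-anomalies",
    consumer_group="anomaly-agent-group",
    brokers="kafka.example.internal:9092",
)
print(json.dumps(manifest, indent=2))
```

One practical caveat: `pollingInterval` and `cooldownPeriod` are expressed in seconds, so the scale-down in this sketch is prompt rather than instantaneous. How low you can safely set them is something to validate in your own environment.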

Architecting the Scale-to-Zero Core:

To make this scale-to-zero dream a reality, you have to fundamentally change how you handle network observability. The biggest mistake I see architects make is tightly coupling their monitoring tools with their AI execution layer. If your observability stack is running on the same hardware as your AI engine, you are literally wasting premium GPU compute just to watch logs.

You need a strict, physical separation of concerns:

The Watchers (The Lightweight Control Plane):

Your network data plane needs to be monitored by lightweight, CPU-efficient edge collectors like Prometheus or OpenTelemetry. These sit right at the edge, continuously eating millions of telemetry data points and BGP state changes. Because they don’t do any complex reasoning, they run incredibly cheap on standard CPU nodes.

The Thinkers (The Heavyweight Execution Plane):

Your expensive AI models are completely isolated in a separate, GPU-backed node pool that literally defaults to zero instances.

When the Watchers spot an anomaly, they don’t try to fix it. They just fire an alert to KEDA. KEDA then wakes up the Thinkers, spinning up the exact number of GPU pods needed to handle that specific blast radius. By decoupling the watchers from the thinkers, you guarantee that not a single cycle of GPU compute is wasted on baseline monitoring.
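A Watcher, in this sense, can be little more than a threshold check that forwards events. The sketch below is a simplified stand-in: the in-memory queue takes the place of a real message broker, and the telemetry fields, slice names and 5% loss threshold are hypothetical.

```python
import queue


def watch(samples, alert_queue, loss_threshold=0.05):
    """CPU-only check: forward threshold breaches, never attempt a fix."""
    for sample in samples:
        if sample["packet_loss"] >= loss_threshold:
            alert_queue.put({
                "slice": sample["slice"],
                "severity": "critical",
                "packet_loss": sample["packet_loss"],
            })


alerts = queue.Queue()  # stand-in for the broker that the scaler listens to

telemetry = [
    {"slice": "embb-1", "packet_loss": 0.001},
    {"slice": "urllc-2", "packet_loss": 0.12},   # degraded slice
    {"slice": "mmtc-3", "packet_loss": 0.004},
]
watch(telemetry, alerts)
print(alerts.qsize(), "alert(s) queued for the Thinkers")
```

Nothing in this path touches a model or a GPU. The only output is an event, and the depth of that event queue is what wakes the Thinkers.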

The Bottom Line:

Autonomous telecom networks are going to happen. But trying to brute-force the infrastructure provisioning is a fast track to bankrupting your IT department. The smartest Agentic AI in the world is useless if you can’t afford the cloud bill to run it.

Furthermore, this isn’t just about protecting the IT budget. Running idle GPUs 24/7 creates a massive, unnecessary carbon footprint. By enforcing a scale-to-zero architecture, telcos can drastically reduce the energy consumption of their autonomous networks, turning a massive ESG liability into a sustainable operational model.

Autonomy is no longer just a software engineering problem. It is an infrastructure balancing act. If Agentic AI is going to survive in the telecom core, we have to ditch legacy threshold scaling and embrace strict, event-driven orchestration.

Tools like KEDA give us the ability to build networks that are both cognitively brilliant and financially ruthless. We can spin up massive intelligence at the exact millisecond of failure and scale right back to zero the moment the network is healed.

References and Further Reading:

- Unlocking Energy Saving in Telecom Networks: A Path to a Sustainable Future – A deep dive into the operational and ESG mandates driving energy efficiency in modern telecom infrastructure.

- KEDA Documentation: Kubernetes Event-driven Autoscaling – Technical specifications for decoupling workload scaling from standard CPU/Memory metrics.

- keda-gpu-scaler – An open-source custom KEDA scaler I developed to enable event-driven autoscaling specifically tied to native GPU telemetry and queue depth.

Building and Operating a Cloud Native 5G SA Core Network

How Network Repository Function Plays a Critical Role in Cloud Native 5G SA Network

HPE Aruba Launches “Cloud Native” Private 5G Network with 4G/5G Small Cell Radios

…………………………………………………………………………………………….

About the Author:

Pavan Madduri is a Cloud-Native Architect, CNCF Golden Kubestronaut, and active IEEE researcher specializing in enterprise infrastructure automation, Agentic SREs, and Kubernetes networking. He designs scalable, zero-trust cloud environments and frequently writes about the intersection of AI governance and cloud-native infrastructure.

Connect with Pavan Madduri on [LinkedIn].

Disclaimer: The author acknowledges the use of AI-assisted tools for structural formatting, language refinement, and copyediting during the drafting of this article. The core architectural concepts, technical opinions, and engineering strategies remain entirely original.

Does AI change the business case for cloud networking?

For several years now, the big cloud service providers – Amazon Web Services (AWS), Microsoft Azure, and Google Cloud – have tried to get wireless network operators to run their 5G SA core networks, edge computing and various distributed applications on their cloud platforms. For example, Amazon’s AWS public cloud, Microsoft’s Azure for Operators, and Google’s Anthos for Telecom were all intended to get network operators to move their core network functions into a hyperscaler cloud.

AWS had early success with Dish Network’s 5G SA core network which has all its functions running in Amazon’s cloud with fully automated network deployment and operations.

Conversely, AT&T has yet to commercially deploy its 5G SA Core network on the Microsoft Azure public cloud. Also, users on AT&T’s network have experienced difficulties accessing Microsoft 365 and Azure services. Those incidents were often traced to changes within the network’s managed environment. As a result, Microsoft has drastically reduced its early telecom ambitions.

Several pundits now say that AI will significantly strengthen the business case for cloud networking by enabling more efficient resource management, advanced predictive analytics, improved security, and automation, ultimately leading to cost savings, better performance, and faster innovation for businesses utilizing cloud infrastructure.

“AI is already a significant traffic driver, and AI traffic growth is accelerating,” wrote analyst Brian Washburn in a market research report for Omdia (owned by Informa). “As AI traffic adds to and substitutes conventional applications, conventional traffic year-over-year growth slows. Omdia forecasts that in 2026–30, global conventional (non-AI) traffic will be about 18% CAGR [compound annual growth rate].”

Omdia forecasts 2031 as “the crossover point where global AI network traffic exceeds conventional traffic.”

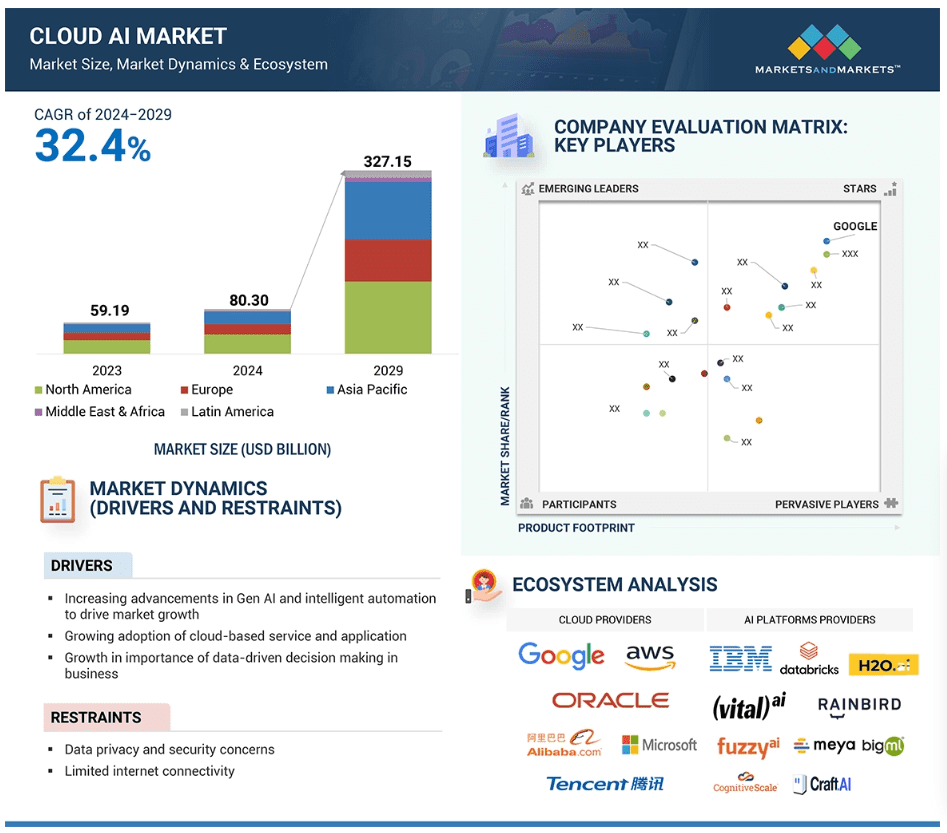

Markets & Markets forecasts the global cloud AI market (which includes cloud AI networking) will grow at a CAGR of 32.4% from 2024 to 2029.
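To put those growth rates in perspective, a CAGR compounds: a quantity growing at rate r for n years ends up (1 + r)^n times its starting size. The short sketch below applies that to the two figures quoted above. Treating 2026–30 as four compounding periods and 2024–2029 as five is an assumption about how the forecasters count.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Total growth factor after compounding `cagr` for `years` periods."""
    return (1 + cagr) ** years


# Assumed period counts: 2026->2030 is 4 periods, 2024->2029 is 5 periods.
conventional_traffic = growth_multiple(0.18, 4)
cloud_ai_market = growth_multiple(0.324, 5)

print(f"conventional traffic: {conventional_traffic:.2f}x")
print(f"cloud AI market:      {cloud_ai_market:.2f}x")
```

Under those assumptions, 18% a year is a bit less than a doubling over the period, while 32.4% a year is roughly a fourfold increase.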

AI is said to enhance cloud networking in these ways:

- Optimized resource allocation: AI algorithms can analyze real-time data to dynamically adjust cloud resources like compute power and storage based on demand, minimizing unnecessary costs.

- Predictive maintenance: By analyzing network patterns, AI can identify potential issues before they occur, allowing for proactive maintenance and preventing downtime.

- Enhanced security: AI can detect and respond to cyber threats in real-time through anomaly detection and behavioral analysis, improving overall network security.

- Intelligent routing: AI can optimize network traffic flow by dynamically routing data packets to the most efficient paths, improving network performance.

- Automated network management: AI can automate routine network management tasks, freeing up IT staff to focus on more strategic initiatives.

The pitch is that AI will enable businesses to leverage the full potential of cloud networking by providing a more intelligent, adaptable, and cost-effective solution. Well, that remains to be seen. Google’s new global industry lead for telecom, Angelo Libertucci, told Light Reading:

“Now enter AI,” he continued. “With AI … I really have a power to do some amazing things, like enrich customer experiences, automate my network, feed the network data into my customer experience virtual agents. There’s a lot I can do with AI. It changes the business case that we’ve been running.”

“Before AI, the business case was maybe based on certain criteria. With AI, it changes the criteria. And it helps accelerate that move [to the cloud and to the edge],” he explained. “So, I think that work is ongoing, and with AI it’ll actually be accelerated. But we still have work to do with both the carriers and, especially, the network equipment manufacturers.”

Google Cloud last week announced several new AI-focused agreements with companies such as Amdocs, Bell Canada, Deutsche Telekom, Telus and Vodafone Italy.

As IEEE Techblog reported here last week, Deutsche Telekom is using Google Cloud’s Gemini 2.0 in Vertex AI to develop a network AI agent called RAN Guardian. That AI agent can “analyze network behavior, detect performance issues, and implement corrective actions to improve network reliability and customer experience,” according to the companies.

And, of course, there’s all the buzz over AI RAN and we plan to cover expected MWC 2025 announcements in that space next week.

https://www.lightreading.com/cloud/google-cloud-doubles-down-on-mwc

Nvidia AI-RAN survey results; AI inferencing as a reinvention of edge computing?

The case for and against AI-RAN technology using Nvidia or AMD GPUs

Generative AI in telecom; ChatGPT as a manager? ChatGPT vs Google Search

Cisco to lay off more than 4,000 as it shifts focus to AI and Cybersecurity

Reuters reports that Cisco Systems will cut thousands of jobs in its second round of layoffs this year. The number of people affected could be similar to or slightly higher than the 4,000 employees Cisco laid off in February, and will likely be announced as early as Wednesday with the company’s fourth-quarter results.

The San Jose, CA headquartered networking company plans to shift its product focus to higher-growth areas, such as AI and cybersecurity. Its current set of products and services is listed here.

Cisco has been contending with weakening demand and persistent supply chain issues in its core business – routers and switches – that are used by ISPs and enterprise private networks. Two reasons for that are: 1) the major cloud service providers design their own switches/routers or use bare metal switches (made by ODMs in Taiwan and China), and 2) enterprise private/virtual private networks are being replaced by cloud network solutions.

- Global enterprise network sales have been declining. Dell’Oro Group reported sales contractions in Branch Routing and Campus Switching in 4Q-2023 and that is expected to continue throughout most of 2024. On premises data centers (which use Cisco Ethernet switches) are not growing. In its place……

- Enterprise spending on cloud infrastructure services is growing by leaps and bounds. It’s now nearing $80 billion per quarter. Cloud customers increased their spending on cloud services by $14.1 billion to $79.1 billion in 2Q-2024, an increase of 22% year-over-year. It’s the third consecutive quarter in which the year-over-year growth rate was 20% or more, with generative AI being one of the factors behind the market acceleration.

……………………………………………………………………………………………………………………………………………

As a result of stagnant sales of its core networking products, Cisco has been pursuing a strategy aimed at diversifying its revenue streams. One of the most significant moves in this direction was the $28 billion acquisition of Splunk, a cybersecurity firm, which was finalized in March. This purchase is expected to boost Cisco’s subscription-based services, reducing its dependence on one-time hardware sales, which have been increasingly susceptible to market volatility.

Cisco’s major shift towards AI is a key part of its long-term strategy. In May, the company reiterated its ambitious goal of achieving $1 billion in AI-related product orders by 2025. This target is supported by a $1 billion fund launched in June, aimed at investing in AI startups such as Cohere, Mistral AI, and Scale AI. Over the past few years, Cisco has made over 20 AI-focused acquisitions and investments, highlighting its commitment to integrating AI into its product offerings.

……………………………………………………………………………………………………………………………………………..

Over 126,000 employees have been laid off across 393 tech companies since the start of the year, according to data from tracking website Layoffs.fyi. That surely reflects their need to cut costs to balance huge investments in AI, analytics and related technologies.

……………………………………………………………………………………………………………………………………………..

References:

https://www.cisco.com/c/en/us/products/index.html#~products-by-technology

Cisco to Implement Second Round of Layoffs Amidst Strategic Shift to AI and Cybersecurity

Worldwide Enterprise Network Spending Follows Roller Coaster Trajectory

Cisco restructuring plan will result in ~4100 layoffs; focus on security and cloud based products

Forbes: Cloud is a huge challenge for enterprise networks; AI adds complexity

Survey data and discussions with enterprise networking professionals reveal they are still grappling with many networking issues spawned by the expansion of the cloud – the most common of which include securing connections for remote work, implementing zero-trust security strategies, and integrating myriad cloud and wide-area networks (WANs).

For example, in Futuriom’s latest survey of 196 enterprise IT and networking professionals, more than 80% said the complexity of connecting the wide variety of networks was a large challenge. At nearly 70% of responses, expertise and knowledge was the second-largest challenge (multiple responses were allowed), and cost was cited by 60%. Please refer to survey highlights below.

Contributing to that complexity is the ephemeral nature of both cloud connectivity and hybrid work. Workers are now moving around more than ever, and cloud services can change and scale nearly every day (or minute).

Survey Highlights:

- Survey respondents indicate strong demand for SD-WAN and SASE managed services. Our survey data and discussions with end users indicate that SD-WAN/SASE technology helps professionals with network and security challenges, including the growing complexity created by distributed applications, cloud connectivity, and sprawling security risks.

- Managing network complexity is the largest challenge driving managed services demand. When asked about the largest challenges in managing WANs, 85% of respondents identified complexity, followed by expertise and knowledge (68%). Rounding out the responses were cost (60%) and time (47%). (Multiple responses were allowed.)

- Hybrid work and the need for zero-trust network access (ZTNA) are key drivers of SD-WAN/SASE technology. In the survey, 98% of respondents said that hybrid work has increased demand for SASE and ZTNA. When we asked respondents if ZTNA is a crucial component of SASE and SD-WAN offerings, 92% said yes.

- Hybrid (cloud/edge deployment) and single-pass architectures will be important components of SASE/SD-WAN services going forward. When respondents were asked if they wanted a hybrid solution that can accommodate networking and security both on premises and using cloud points of presence (PoPs), 98% said yes. In addition, 94% of respondents said they prefer a single-pass architecture.

- There will continue to be a diversity of SD-WAN/SASE deployment models. The two most popular models for deployment are best-of-breed combination (34%) and single-vendor (23%), but survey results show a wide diversity of deployment models.

AI increases complexity as enterprises need to figure out how to store, connect, and move their data in hybrid clouds that will leverage AI.

This complexity, along with the rapid shift to hybrid work spurred by COVID, has triggered a wave of innovation in networking – perhaps more innovation than we have seen in decades. Startups are drawing large funding rounds. Best-of-breed established networking players such as Arista Networks, Extreme Networks, Juniper Networks, and HPE are building new networking and security products and chipping away at the market share of market leader Cisco. Cisco is responding in kind. Sources tell me they think Cisco’s acquisition of Valtix may be the most interesting in years.

All of this sets the stage for the most dynamic networking environment I’ve seen in decades. And it’s only going to get more interesting, as the AI and hybrid work wave makes networking more crucial.

The melding of security and networking remains hot. In the software-defined wide-area networking (SD-WAN) and Secure Access Service Edge (SASE) market, potential Initial Public Offering (IPO) companies such as Aryaka Networks, Cato Networks, and Versa Networks are building out product suites to help secure remote workers and cloud connectivity. These companies will also help enterprises connect to cloud on-ramps and consolidate security functions with a SASE approach. Versa last October tanked up with $120 million in funding in what it called a “pre-IPO round.”

Many of the cloud networking startups that are included in the Futuriom 50 list of promising cloud innovators are using this chaotic moment to shore up strategies, raise money — or both.

For example, just this week, cloud-native networking start-up Arrcus announced that Hitachi Ventures would invest additional capital, raising its Series D to $65 million before it closes. Arrcus says its Arrcus Connected Edge (ACE) platform will be more economical for cloud providers and service providers deploying services such as 5G and AI. It claims it is growing revenue 100% year-over-year.

Other cloud networking startups are also going after AI. DriveNets recently announced that its Network Cloud-AI solution, which uses cloud-based Ethernet-based networking to boost the performance of AI clouds, is in trials with major hyperscalers.

Cost optimization, one of the strongest themes of the year in cloud technology, is another focus for cloud networking players. Cloud networking pioneer Aviatrix has beefed up security and cost-optimization features and launched a distributed firewall to help enterprises reduce the costs of cloud networking infrastructure. Prosimo last week made an interesting play to get its application-layer cloud networking suite in the hands of more users by launching a free, introductory-level version of its product called MCN Foundation.

Yes, there is a trend to all these announcements. They are focused on return on investment (ROI) and cost savings. This is the right message for the era we are in. Enterprise tech planners not only want to shift to more flexible cloud-based services, they need to do so to save money.

For example, in its new product release, Prosimo said customers can achieve a 30%-50% reduction in total cost of ownership (TCO) by optimizing cloud network connectivity. With its distributed firewall, Aviatrix says network pros will save money by reducing the expense of additional firewall instances, which many enterprises must buy to support additional cloud connectivity and scaling. (But they may not want to stack firewall upon firewall into the cloud, which after all can function as a firewall itself.) DriveNets says its trials have reduced the idle time of AI clouds by as much as 30%.

Integrating all of this stuff isn’t easy either. That’s the value proposition of Itential, a plucky Georgia-based startup with a set of low-code automation tools that streamline networking for integrations in hybrid networking and cloud environments.

It’s no coincidence that the marketing messages have all shifted toward ROI, which is the mother’s milk of technology. It’s the reason we all use cloud-based software-as-a-service and iPhones instead of minicomputers and rotary dial phones. Innovation is about efficiency.

This makes me very optimistic about cloud networking – and the networking market in general. After decades of stagnation, the cloud has woken up the industry. In addition to innovation, there is also a surge in competition — which will put more efficient and affordable technology into the hands of the users.

References:

Networking Startups Jump On Cloud Costs And AI (forbes.com)

https://www.futuriom.com/articles/news/results-from-our-sd-wan-sase-managed-services-survey/2023/06

Generative AI in telecom; ChatGPT as a manager? ChatGPT vs Google Search

Generative AI could put telecom jobs in jeopardy; compelling AI in telecom use cases

Allied Market Research: Global AI in telecom market forecast to reach $38.8 by 2031 with CAGR of 41.4% (from 2022 to 2031)

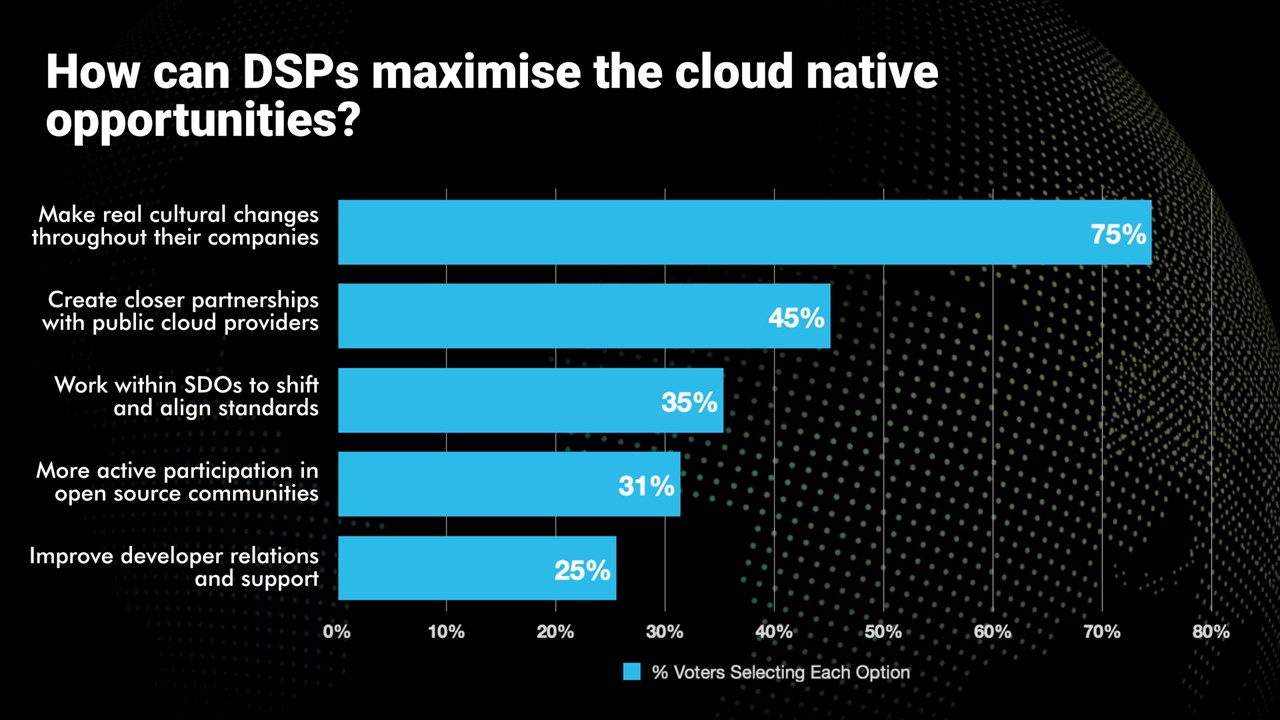

Telecom TV Poll: How to maximize cloud-native opportunities?

The adoption of cloud-native methodologies, processes and tools has been a challenge for communications service providers (CSPs), aka telcos or network operators.

- Telcos are embracing cloud-native processes and tools

- It’s part of their evolution towards being digital service providers

- But the cloud-native journey is still in its early stages

- Real cultural change is needed if telcos are to capitalise fully on the cloud-native opportunities

Alongside a session, Why cloud native is essential to delivering the automation, agility and innovation needed to support new services, at Telecom TV’s DSP Leaders World Forum event in Windsor, UK, a poll was taken. The following question was asked, “How can Digital Service Providers maximize the cloud-native opportunities?” Respondents were able to select all the options they deemed relevant. Here are the results:

Please check out the upcoming Cloud Native Telco Summit session on cloud-native application development to see what the industry experts have to say.

Omdia and Ericsson on telco transitioning to cloud native network functions (CNFs) and 5G SA core networks

Huawei Connect 2022: It’s Cloud Native everything!

Banned in the U.S., China Telecom Americas launches eSurfing Cloud services in Brazil

China Telecom do Brasil (“CTB”) today announced the launch of eSurfing Cloud services in Brazil. Through on-demand purchases that aim to simplify the process for more targeted service, the new offering provides businesses with the flexibility of accessing public and private cloud services, combined with the security and control of private cloud.

CTB’s eSurfing Cloud services enable enterprises in Brazil to take advantage of the latest cloud technologies, with the added benefit of local support and expertise. With this new offering, businesses in Brazil can optimize their cloud environments, reduce costs, and improve efficiency, all while maintaining high levels of security and compliance. The eSurfing Cloud services in São Paulo will allow customers to connect on a global multi-cloud network of more than nine public cloud nodes, 30 proprietary edge cloud nodes, and more than 200 CDN nodes.

“We are excited to bring our world-class cloud solutions to businesses in Brazil,” said Luis Fiallo, the officer of China Telecom do Brasil. “Our eSurfing Cloud services deliver flexible and scalable solutions that can meet the unique evolving needs of businesses in the region. The launch of this new offering is our continued commitment to helping our customers achieve their business goals and succeed in today’s digital landscape.”

Brazil is one of the most active cloud markets in Latin America, with high demand for the critical services that connect LATAM to the global market. Cloud adoption in Brazil has increased nearly 40% since 2019 and is expected to grow nearly 19% by 2033. While eSurfing Cloud provides customers with access to public cloud, private cloud, hybrid cloud, and edge cloud, its advantages in cloud-network integration, security, and extensive customization make it the choice digital transformation accelerator for businesses of any size.

About China Telecom do Brasil:

China Telecom do Brasil is the largest subsidiary of China Telecom Americas in Latin America and a leading provider of Internet and cloud computing services in Brazil. With a focus on customer satisfaction, the company delivers reliable, scalable, and secure solutions that enable businesses to connect their networks within Brazil and internationally, while thriving in today’s digital landscape. The company is the largest Chinese Internet provider in Brazil with network POPs and backbone connecting the state of Sao Paulo, State of Rio De Janeiro, State of Parana and State of Rio Grande do Sul to the China Telecom global network.

SOURCE: China Telecom Americas

………………………………………………………………………………………………………………………………………

China Telecom still banned in U.S.:

In recent years, the Federal Communications Commission (FCC) has raised mounting concerns about Chinese telecom companies that won permission to operate in the United States decades ago. On October 26, 2021, the FCC revoked and terminated China Telecom Americas’ authority to provide telecom services in the United States. The FCC said that China Telecom (Americas) “is subject to exploitation, influence and control by the Chinese government.”

On December 20, 2022, a U.S. federal appeals court rejected China Telecom Corp’s challenge to the order withdrawing the company’s authority to provide services in the United States.

…………………………………………………………………………………………………………………………………………

References:

https://www.fcc.gov/document/fcc-revokes-china-telecom-americas-telecom-services-authority

Analysis and Implications: China’s 3 Major Telecom Operators to be delisted by NYSE

Swisscom, Ericsson and AWS collaborate on 5G SA Core for hybrid clouds

Swiss network operator Swisscom has announced a proof-of-concept (PoC) collaboration with Ericsson, running the Ericsson 5G SA Core on AWS. The objective is to explore hybrid cloud use cases with AWS, beginning with 5G core applications. The plan is for more applications to then gradually be added as the trial continues. With each cloud strategy (private, public, hybrid, multi) bringing its own drivers and challenges, the idea here seems to be to enable the operator to take advantage of the specific characteristics of both hybrid and public cloud.

The PoC reconfirms Swisscom and Ericsson’s view of hybrid cloud’s potential as a complement to existing private cloud infrastructure. Both Swisscom and Ericsson are on a common journey with AWS to explore how use cases can benefit telecom operators.

The PoC will examine use cases that take advantage of the particular characteristics of hybrid and public cloud, in particular the flexibility and elasticity they can offer to customers, which can mean deployment efficiencies for use cases where capacity is not constantly needed. For example, when maintenance activities are undertaken in Swisscom’s private cloud, or when there are traffic peaks, AWS can be used to offload and complement the private cloud.

Swisscom had already been collaborating with AWS on migrating its 5G infrastructure towards standalone 5G, and it has also used the hyperscaler’s public cloud platform for its IT environments. While concerns linger [1.] among some operators about the use of public cloud in telecoms infrastructure (especially core networks), hybrid cloud is seemingly gaining momentum as a transitional approach.

Note 1. Telco concerns over public cloud:

- In a recent survey by Telecoms.com, more than four in five industry respondents cited security concerns about running telco applications in the public cloud, including 37% who find it hard to make the business case for public cloud as private cloud remains vital in addressing security issues. This also means that any efficiency gains are offset by the IT environment and the network running over two cloud types.

- Many in the industry also fear vendor lock-in and lack of orchestration from public cloud providers. Around a third of industry experts from the same survey find it a compelling reason not to embrace and move workloads to the public cloud unless applications can run on all versions of public cloud and are portable among cloud vendors.

- There’s also a lack of interoperability and interconnectedness with public clouds. The services of different public cloud vendors are neither interconnected nor interoperable for the same types of workloads. According to some network operators, this concern is one of the reasons they avoid public cloud.


Quotes:

Mark Düsener, Executive Vice President Mobile Network & Services at Swisscom, says: “By bringing the Ericsson 5G Core onto AWS we will substantially change the way our networks will be built and operated. The elasticity of the cloud in combination with a new magnitude in automatization will support us in delivering even better quality more efficiently over time. In order to shape this new concept, we as Swisscom believe strategic and deep partnerships like the ones we have with Ericsson and AWS are the key for success.”

Monica Zethzon, Head of Solution Area Core Networks, Ericsson says: “5G innovation requires deep collaboration to create the foundations necessary for new and evolving use cases. This Proof-of-Concept project with Swisscom and AWS is about opening up the routes to innovation by using hybrid cloud’s flexible combination of private and public cloud resources. It demonstrates that through partnership, we can deliver a hybrid cloud solution which meets strict telecoms industry requirements and security while making best use of HCP agility and cloud economy of scale.”

Fabio Cerone, General Manager AWS Telco EMEA at AWS, says: “With this move, Swisscom is opening the door to cloud native networks, delivering full automation and elasticity at scale, with the ability to innovate faster and make 5G impactful to their customers. We are committed to working closely with partners, such as Ericsson, to explore new use cases and strategies that best support the needs of customers like Swisscom.”

“How to deploy software in different cloud environments – at a high level, it is hard making that work in practice,” said Per Narvinger, the head of Ericsson’s cloud software and services unit. “You have hyperscalers with their offering and groups trying to standardize and people trying to do it their own way. There needs to be harmonization of what is wanted.”

https://telecoms.com/520337/swisscom-ericson-and-aws-collaborate-on-hybrid-cloud-poc-on-5g-core/

https://telecoms.com/520055/telcos-and-the-public-cloud-drivers-and-challenges/

AWS Telco Network Builder: managed network automation service to deploy, run, and scale telco networks on AWS

Omdia and Ericsson on telco transitioning to cloud native network functions (CNFs) and 5G SA core networks

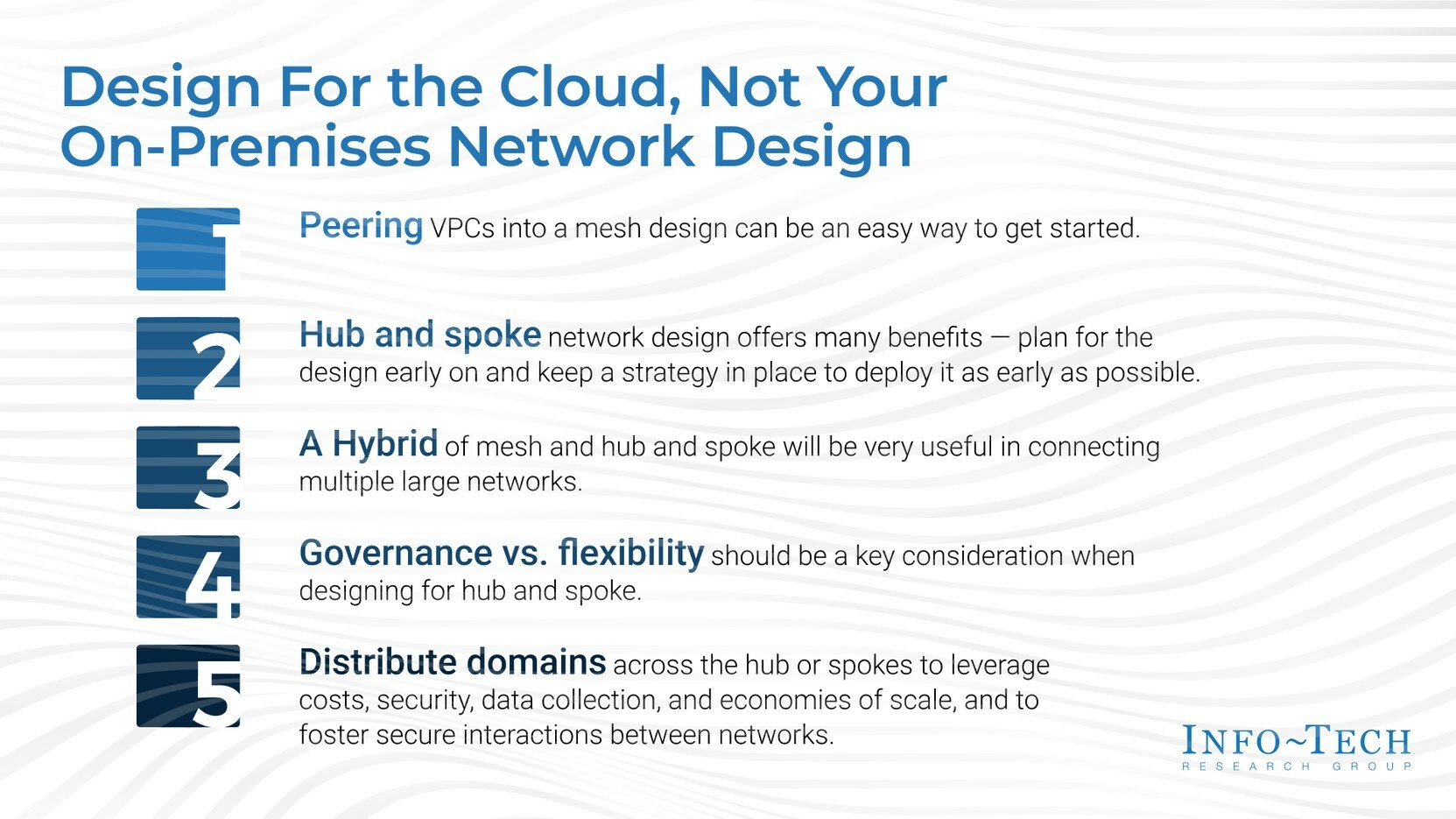

Info-Tech: Cloud Network Design Must Evolve to Meet Both Current and Future Organizational Needs

Cloud adoption among organizations has increased dramatically over the past few years, both in the range of services used and the extent to which they are employed. However, network builders tend to overlook the vulnerabilities of network topologies, which leads to complications down the road, especially since the structures of cloud network topologies are not all of the same quality. To help organizations build a network design that suits their current needs and future state, global IT research and advisory firm Info-Tech Research Group has published its latest advisory deck, Considerations for a Hub and Spoke Model When Deploying Infrastructure in the Cloud.

The new research deck states that for organizations considering migrating their resources to the cloud, careful planning and decision making is required. This includes selecting the right topology, designing the cloud infrastructure for efficient management, and providing access to shared services. The advisory deck further highlights that one of the main challenges of cloud infrastructure planning is finding the right balance between governance and flexibility, which is often overlooked.

“Evaluating and selecting the right cloud network topology is crucial for optimizing performance. It also enables easier management and resource provisioning,” says Nitin Mukesh, senior research analyst at Info-Tech Research Group. “An ‘as the need arises’ strategy will not work efficiently since network design changes can significantly impact data flows and application architectures, which becomes more complicated as the number of cloud-hosted services grows. Designing a network strategy early on will give more control over networks and prevent the need for significant infrastructure changes later.”

Info-Tech’s research indicates that when organizations move to the cloud, many often retain the mesh networking topology from their on-prem design, or they choose to implement the mesh design using peering technologies in the cloud without considering the potential changes in business needs. Although there are various network topologies for on-prem infrastructure, the network design team may not be aware of the best approach in cloud platforms for their requirements, or a cloud networking strategy may even go overlooked during the migration.

The new resource explores a hub and spoke model for organizations deciding between governance and flexibility in network design. A hub and spoke network design involves connecting multiple networks to a central network, or a hub, that facilitates intercommunication between them. The hub can be used by multiple workloads for hosting services and managing external connectivity.

Other networks connected to the hub through network peering are called spokes and host workloads. Communications between workloads, servers, or services on the spokes pass through the hub, where they are inspected and routed. The spokes can be centrally managed from the hub using IT rules and processes. This design allows for a larger number of virtual networks to be interconnected, with only one peered connection needed to communicate with any other network in the system.
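The scaling argument behind that last point can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function names are hypothetical, and it assumes that a full mesh peers every workload network directly with every other one, while a hub and spoke design gives each spoke exactly one peering to a single hub.

```python
def full_mesh_peerings(networks: int) -> int:
    """Peerings needed when every network peers directly with every other one."""
    return networks * (networks - 1) // 2


def hub_and_spoke_peerings(spokes: int) -> int:
    """Peerings needed when each spoke peers only with one central hub (assumed)."""
    return spokes


if __name__ == "__main__":
    # Compare the two designs for a growing number of workload networks.
    for n in (5, 10, 50):
        print(
            f"{n} networks: mesh={full_mesh_peerings(n)} peerings, "
            f"hub and spoke={hub_and_spoke_peerings(n)} peerings"
        )
```

Mesh peering grows quadratically with the number of networks, while hub and spoke grows linearly, which is one way to read the claim that the design lets a larger number of virtual networks be interconnected. The trade-off, as the text notes, is that spoke-to-spoke traffic passes through the hub.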

Organizations that choose to deploy the hub and spoke model face a dilemma in choosing between governance and flexibility for their networks. Info-Tech recommends that organizations consider the following design options when developing a cloud network strategy:

- PEERING: Peering Virtual Private Clouds (VPCs) into a mesh design can be an easy way to get onto the cloud, but it shouldn’t be the networking strategy for the long run.

- HUB AND SPOKE: Hub and spoke network design offers more benefits than any other network strategy, too many for it to be adopted only when the need arises. Organizations should plan for the design and strategize to deploy it as early as possible.

- HYBRID: A mesh and hub and spoke hybrid can be instrumental in connecting multiple large networks, especially when they need to access the same resources without having to route the traffic over the internet.

- GOVERNANCE VS. FLEXIBILITY: Governance vs. flexibility should be a key consideration when designing for hub and spoke to leverage the best out of the infrastructure.

- DOMAINS: Distribute domains across the hub or spokes to leverage costs, security, data collection, and economies of scale and foster secure interactions between networks.

The firm advises that the advantages of using a hub and spoke model far exceed those of using a mesh topology in the cloud. However, organizations, especially large ones, are complex entities, and choosing only one model may not serve all business needs. In such cases, a hybrid approach may be the best strategy.

To learn more, download the complete Considerations for a Hub and Spoke Model When Deploying Infrastructure in the Cloud advisory deck.

Info-Tech Research Group is one of the world’s leading information technology research and advisory firms, proudly serving over 30,000 IT professionals. The company produces unbiased and highly relevant research to help CIOs and IT leaders make strategic, timely, and well-informed decisions. For 25 years, Info-Tech has partnered closely with IT teams to provide them with everything they need, from actionable tools to analyst guidance, ensuring they deliver measurable results for their organizations.

Media professionals can register for unrestricted access to research across IT, HR, and software and over 200 IT and Industry analysts through the ITRG Media Insiders Program. To gain access, contact [email protected].

SOURCE Info-Tech Research Group

……………………………………………………………………………………………………………………

References:

For more information about Info-Tech Research Group or to access the latest research, visit infotech.com and connect via LinkedIn and Twitter.

Canalys: Cloud marketplace sales to be > $45 billion by 2025

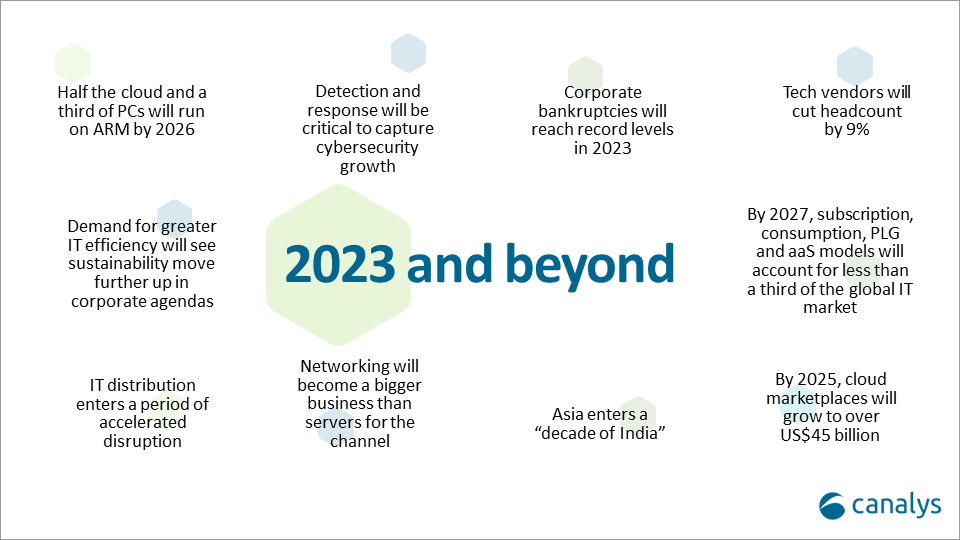

Canalys now expects that by 2025, cloud marketplaces will grow to more than $45 billion, representing an 84% CAGR. That was one of the market research firm’s predictions for 2023 and beyond (see chart below).

Cloud marketplaces [1.] are accelerating as a route to market for technology, led by hyperscale cloud vendors such as Alibaba, Amazon Web Services, Microsoft, Google and Salesforce, which are pouring billions of development dollars into the sector.

Note 1. A cloud marketplace is an online storefront operated by a cloud service provider. A cloud marketplace provides customers with access to software applications and services that are built on, integrate with or complement the cloud service provider’s offerings. A marketplace typically provides customers with native cloud applications and approved apps created by third-party developers. Applications from third-party developers not only help the cloud provider fill niche gaps in its portfolio and meet the needs of more customers, but they also provide the customer with peace of mind by knowing that all purchases from the vendor’s marketplace will integrate with each other smoothly.

…………………………………………………………………………………………………………………………………………………………………….

“The marketplace route to market is on fire and cannot be ignored by any channel leader,” said Canalys Chief Analyst, Jay McBain. “Marketplaces grew more in the first three months of the pandemic than in the previous decade and have just kept growing,” he added.

“We under-called it,” explained Steven Kiernan, vice president at Canalys. “Cloud marketplaces are accelerating at such a dizzying speed that we’ve doubled our pre-pandemic forecast.”

Some software vendors that are active on marketplaces, in particular cybersecurity vendors, are publicly reporting as much as 600% year-on-year growth via this channel, according to McBain.

In addition, the hyperscalers are now reporting growing numbers of billion-dollar customer commitments through enterprise cloud consumption credits, which cover more than just software.

The large cloud marketplaces have lowered fees from upwards of 20% down to 3%, enabling vendors to fund multi-partner offers inside the transaction.

Private equity is investing billions more in marketplace development firms such as AppDirect, Mirakl, Vendasta and CloudBlue to enable hundreds of niche marketplaces across different buyers, industries, geographies, customer segments, product areas and business models.

Canalys Chief Analyst, Alastair Edwards:

“The rise of this route to market represents a threat to both resellers and two-tier distribution. But as more complex technologies are consumed via marketplaces, end customers are also turning to trusted partners to help them discover, procure and manage marketplace purchases. The hyperscalers are increasingly recognizing the value of channel partners, allowing them to create customized vendor offers for end-customers, and supporting the flow of channel margins through their marketplaces. Hyperscalers’ cloud marketplaces are becoming a growing force in global IT distribution as a result.”

By 2025, Canalys conservatively forecasts that almost a third of marketplace procurement will be done via channel partners on behalf of their end customers.

Canalys key predictions for 2023 and beyond:

About Canalys:

Canalys is an independent analyst company that strives to guide clients on the future of the technology industry and to think beyond the business models of the past. We deliver smart market insights to IT, channel and service provider professionals around the world. We stake our reputation on the quality of our data, our innovative use of technology and our high level of customer service.

References:

https://canalys.com/newsroom/cloud-marketplace-forecast-2023

https://www.canalys.com/resources/Canalys-outlook-2023-predictions-for-the-technology-industry

https://www.techtarget.com/searchitchannel/definition/cloud-marketplace

Canalys: Global cloud services spending +33% in Q2 2022 to $62.3B

AWS, Microsoft Azure, Google Cloud account for 62% – 66% of cloud spending in 1Q-2022

IDC: Cloud Infrastructure Spending +13.5% YoY in 4Q-2021 to $21.1 billion; Forecast CAGR of 12.6% from 2021-2026

Microsoft acquires Lumenisity – hollow core fiber high speed/low latency leader

Executive Summary:

Microsoft announced it has acquired Lumenisity® Limited, a leader in next-generation hollow core fiber (HCF) solutions. Lumenisity’s innovative and industry-leading HCF product can enable fast, reliable and secure networking for global, enterprise and large-scale organizations.

The acquisition will expand Microsoft’s ability to further optimize its global cloud infrastructure and serve Microsoft’s Cloud Platform and Services customers with strict latency and security requirements. The technology can provide benefits across a broad range of industries including healthcare, financial services, manufacturing, retail and government.

Organizations within these sectors could see significant benefit from HCF solutions as they rely on networks and datacenters that require high-speed transactions, enhanced security, increased bandwidth and high-capacity communications. For the public sector, HCF could provide enhanced security and intrusion detection for federal and local governments across the globe. In healthcare, because HCF can accommodate the size and volume of large data sets, it could help accelerate medical image retrieval, facilitating providers’ ability to ingest, persist and share medical imaging data in the cloud. And with the rise of the digital economy, HCF could help international financial institutions seeking fast, secure transactions across a broad geographic region.

Types of Hollow Core Fiber:

Various types of hollow-core photonic bandgap fibers:

(a) Photonic crystal fiber featuring small hollow core surrounded by a periodic array of large air holes.

(b) Microstructured fiber featuring medium-sized hollow core surrounded by several rings of small air holes separated by nano-size bridges.

(c) Bragg fiber featuring large hollow core surrounded by a periodic sequence of high and low refractive index layers.

Lumenisity HCF benefits:

Lumenisity’s hollow core fiber technology replaces the standard glass core in a fiber cable with an air-filled chamber. According to Microsoft, light travels through air 47% faster than through glass. Lumenisity’s next generation of HCF uses a proprietary design in which light propagates in an air core, which has significant advantages over traditional cable built with a solid core of glass, including:

- Increased overall speed and lower latency as light travels through HCF 47% faster than standard silica glass.[1]

- Enhanced security and intrusion detection due to Lumenisity’s innovative inner structure.

- Lower costs, increased bandwidth and enhanced network quality due to elimination of fiber nonlinearities and broader spectrum.

- Potential for ultra-low signal loss enabling deployment over longer distances without repeaters.
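The 47% figure is consistent with simple refractive-index arithmetic. The sketch below is a back-of-the-envelope check, not Lumenisity’s or Microsoft’s own calculation. The group index values (about 1.468 for standard silica fiber, about 1.0003 for an air core) are assumed typical values that do not come from the article, and the 100 km distance is purely illustrative.

```python
C_KM_PER_S = 299_792.458   # speed of light in vacuum, km/s
N_SILICA = 1.468           # assumed group index of standard silica fiber
N_AIR_CORE = 1.0003        # assumed group index of an air-filled core


def one_way_latency_us(distance_km: float, group_index: float) -> float:
    """Propagation delay in microseconds over a fiber with the given group index."""
    return distance_km * group_index / C_KM_PER_S * 1e6


def speedup_percent(n_slow: float, n_fast: float) -> float:
    """How much faster light travels in the low-index medium, in percent."""
    return (n_slow / n_fast - 1) * 100


if __name__ == "__main__":
    distance_km = 100.0  # hypothetical link length
    glass = one_way_latency_us(distance_km, N_SILICA)
    air = one_way_latency_us(distance_km, N_AIR_CORE)
    print(f"Speed advantage of air core: {speedup_percent(N_SILICA, N_AIR_CORE):.1f}%")
    print(f"One-way delay over {distance_km:.0f} km: glass {glass:.1f} us, air core {air:.1f} us")
    print(f"Latency reduction: {(1 - air / glass) * 100:.1f}%")
```

Under these assumptions the speed advantage comes out at roughly 47%, which corresponds to about a one-third reduction in propagation delay. "47% faster" and "47% lower latency" are therefore not the same claim.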

Lumenisity was formed in 2017 as a spinoff from the world-renowned Optoelectronics Research Centre (ORC) at the University of Southampton to commercialize breakthroughs in the development of hollow core optical fiber. In 2021 and 2022, the company won the Best Fibre Component Product for their NANF® CoreSmart® HCF cable in the European Conference on Optical Communication (ECOC) Exhibition Industry Awards. As part of the Lumenisity acquisition, Microsoft plans to utilize the organization’s technology and team of industry-leading experts to accelerate innovations in networking and infrastructure.

Lumenisity said: “We are proud to be acquired by a company with a shared vision that will accelerate our progress in the hollow-core space. This is the end of the beginning, and we are excited to start our new chapter as part of Microsoft to fulfill this technology’s full potential and continue our pursuit of unlocking new capabilities in communication networks.”

………………………………………………………………………………………………………………………………………………………………..

Analysis:

The purchase is also noteworthy in light of Microsoft’s other recent acquisitions in the telecommunications sector, which include Affirmed Networks, Metaswitch Networks and AT&T’s core network operations (including 5G SA Core Network).

Microsoft isn’t the only company interested in HCF technology and Lumenisity. Both BT in the UK and Comcast in the US have tested Lumenisity’s offerings.

Comcast announced in April that, working with Lumenisity, it was able to support speeds in the range of 10 Gbit/s to 400 Gbit/s over a 40 km “hybrid” connection in Philadelphia that combined legacy fiber with the new hollow core fiber.

“As we continue to develop and deploy technology to deliver 10G, multigigabit performance to tens of millions of homes, hollow core fiber will help to ensure that the network powering those experiences is among the most advanced and highest performing in the world,” said Comcast networking chief Elad Nafshi in the release issued in April.

References:

https://www.datacenterdynamics.com/en/news/microsoft-acquires-hollow-core-fiber-firm-lumenisity

Comcast Deploys Advanced Hollowcore Fiber With Faster Speed, Lower Latency