Author: Alan Weissberger

U.S. Home Internet prices DECLINE amidst fierce competition between wireless carriers and cablecos

Home internet prices in the U.S. are being driven down by fierce competition between mobile carriers offering Fixed Wireless Access (FWA) and cable companies offering legacy Hybrid Fiber Coax (HFC) connections. Lower prices are a welcome break for consumers at a time when prices are rising for many other essential products. According to the Labor Department, the price of home internet service fell 3.1% in May from a year earlier, while the overall consumer price index rose 2.4%.
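One way to read those two numbers together is to deflate the internet price change by the CPI change, which gives the price change relative to the overall price level. The Python sketch below assumes both figures are year-over-year changes for the same month; the helper name `real_change` is hypothetical and not anything the Labor Department publishes.

```python
def real_change(nominal_change: float, cpi_change: float) -> float:
    """Return a price change relative to the overall price level.

    Both arguments are fractions (e.g. -0.031 for -3.1%).
    """
    return (1 + nominal_change) / (1 + cpi_change) - 1


# Assumed inputs: home internet -3.1% year over year, overall CPI +2.4% (May).
internet_real = real_change(-0.031, 0.024)
print(f"Home internet price change vs. overall CPI: {internet_real:.1%}")
```

Under those assumptions the result is roughly -5.4%: home internet got about 5.4% cheaper relative to the average consumer basket, a wider gap than the 3.1% nominal decline alone suggests.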

The WSJ reports that major home internet service providers, including Verizon, Comcast/Xfinity and T-Mobile, have launched a flurry of price-lock guarantees, promising steady rates for as long as five years. Cable company Charter, which is acquiring Cox, unveiled a three-year price lock last year.

Cable companies have struggled to retain broadband internet subscribers since mobile carriers began offering more affordable 5G fixed wireless access (FWA) internet service in 2018. FWA, which relies on over-the-air transmission to cell towers rather than HFC cable, brought competition into markets where cable companies had long enjoyed being the only game in town. Now both types of providers are growing more aggressive to attract and keep customers.

“The cable companies went from gaining subscribers and raising rates every year to declining subscribers and giving people price locks,” said John Hodulik, a UBS analyst. “They’re seeing churn rise in their broadband subscriber base. And they’re trying to nip that in the bud.” Fixed wireless can sometimes cost half as much as a cable internet plan. Though network congestion and other connectivity problems affect some users, the lower price point has been luring cable customers away.

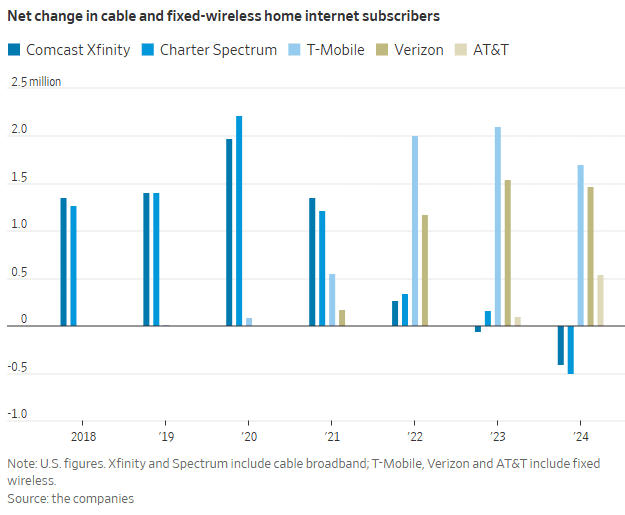

T-Mobile, Verizon and AT&T added a combined 3.7 million FWA customers in 2024. In sharp contrast, Comcast’s Xfinity and Charter’s Spectrum lost more than 900,000 home internet subscribers, as depicted in the graph above.

“Our pricing wasn’t breaking through in the marketplace,” said Steve Croney, chief operating officer for Comcast’s connectivity and platforms business. He said the company’s five-year price lock, introduced in April, competes well against the telecom companies’ offerings.

Frank Boulben, chief revenue officer at Verizon’s consumer group, said his company has been trying to address the “pain points” customers have with cable companies, such as price hikes. That’s why the telco is emphasizing FWA as well as its FiOS fiber-to-the-home (FTTH) service, although Boulben said Verizon would focus on selling fiber service to customers as it becomes available to them.

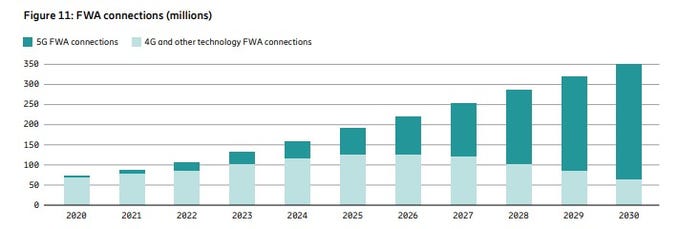

Is FWA the ONLY real killer application for 5G, even though it was NOT one of the envisioned use cases? Ericsson’s recently released Mobility Report says FWA will account for more than 35% of all new fixed broadband connections, with FWA connections expected to reach 350 million by the end of 2030. The report states that more than half of all network service providers (wireless telcos) that offer FWA now do so with “speed-based monetization benefits enhanced by 5G.”

About 80% of the global network operators sampled by Ericsson currently offer FWA, with the fastest growth among CSPs (communications service providers) offering 5G-enabled, speed-based tariff plans. These plans let operators offer a range of subscriber packages with different downlink and uplink data options over 5G FWA. As with fiber plans, speed tiers are “increasing monetization opportunities for CSPs compared to earlier generations of FWA.” Fifty-one percent of operators with FWA offerings now include these speed-based options, up from 40% in June 2024. That is an 11-percentage-point gain, or a 27.5% relative increase. The June 2024 figure had itself grown 50% from June 2023.

Source: Ericsson Mobility Report
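The Ericsson figures above mix two kinds of change: going from 40% to 51% is an 11-percentage-point gain, while 27.5% is the relative increase. The short Python sketch below computes both, plus the June 2023 share implied by the stated 50% growth. That last number is an inference from the quoted figures, not one Ericsson reports here, and the helper names are illustrative only.

```python
def pct_point_change(old_share: float, new_share: float) -> float:
    """Absolute change between two percentage shares, in percentage points."""
    return new_share - old_share


def relative_change(old_share: float, new_share: float) -> float:
    """Relative change between two shares, as a fraction of the old share."""
    return new_share / old_share - 1


# Share of FWA operators offering speed-based tiers (percent).
june_2024_share = 40.0
current_share = 51.0

print(f"{pct_point_change(june_2024_share, current_share):.0f} percentage points")
print(f"{relative_change(june_2024_share, current_share):.1%} relative increase")

# Assumption: the June 2024 share was exactly 50% above the June 2023 share.
implied_june_2023_share = june_2024_share / 1.5
print(f"Implied June 2023 share: {implied_june_2023_share:.1f}%")
```

Quoting the 11-point gain alongside the 27.5% relative figure avoids the common confusion between the two.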

…………………………………………………………………………………………………………………………………………………………………..

“We are at an inflection point, where 5G and the ecosystem are set to unleash a wave of innovation,” said Erik Ekudden, Ericsson Senior Vice President and Chief Technology Officer. “The recent advancements in 5G standalone (SA) networks, coupled with the progress in 5G-enabled devices, have led to an ecosystem poised to unlock transformative opportunities for connected creativity. Service providers have recognized this potential of 5G and are beginning to monetize it through innovative service offerings that extend beyond merely selling data plans. To fully realize the potential of 5G, it is essential to continue deploying 5G SA and to further build out mid-band sites. 5G SA capabilities serve as a catalyst for driving new business growth opportunities.”

Fixed wireless doesn’t work everywhere. Besides congestion, weak signals can make coverage spotty. If your cell phone doesn’t pick up 5G coverage smoothly, fixed wireless from the same company probably won’t work either.

Verizon, AT&T and T-Mobile are winning converts to FWA at a faster pace than many anticipated, said Jonathan Chaplin, a managing partner at equity research firm New Street Research. Charter agreed to buy Cox last month for $21.9 billion in equity and to assume $12 billion of Cox’s outstanding debt, in part to gain the scale to better compete with fixed wireless access. However, fixed-wireless growth can’t last indefinitely. The wireless networks on which it runs will eventually hit capacity, limiting how many subscribers carriers can add. Chaplin estimates the networks can support around 19 million total fixed-wireless subscribers, a level he predicts carriers will reach in about five years, accounting for the network expansions they have announced. When that limit is reached, cable companies may regain the upper hand and keep growing their fiber customer base, Chaplin said.

The big three wireless carriers (AT&T, Verizon and T-Mobile) have all been investing in fiber-based wired networks via build-outs and acquisitions. AT&T is bringing new customers in via FWA, with the long-term goal of converting them to fiber-based service, said Erin Scarborough, who runs that company’s broadband and connectivity initiatives.

References:

https://www.telecoms.com/5g-6g/ericsson-says-fwa-is-boosting-telco-monetization-opportunities

https://www.ericsson.com/en/reports-and-papers/mobility-report

https://www.consumeraffairs.com/news/cable-vs-wireless-war-is-driving-prices-down-062525.html

Dell’Oro: 4G and 5G FWA revenue grew 7% in 2024; MRFR: FWA worth $182.27B by 2032

Latest Ericsson Mobility Report talks up 5G SA networks and FWA

T-Mobile posts impressive wireless growth stats in 2Q-2024; fiber optic network acquisition binge to complement its FWA business

5G Advanced offers opportunities for new revenue streams; 3GPP specs for 5G FWA?

FWA a bright spot in otherwise gloomy Internet access market

Google Cloud targets telco network functions, while AWS and Azure are in holding patterns

Overview:

Network operators have used public clouds for analytics and IT, including their business and operational support systems, but the vast majority have been reluctant to rely on hyperscaler public clouds to host their network functions. However, there have been a few exceptions:

1. AWS counts Boost Mobile, Dish Network, Swisscom and Telefónica Germany as network operators running part of their 5G networks in its public cloud. In a cloud-native 5G standalone (SA) core network, the network functions are virtualized and run as software rather than on dedicated hardware.

a] Dish Network is using Nokia’s cloud-native, 5G standalone core software, which is deployed on the AWS public cloud. This includes software for subscriber data management, device management, packet core, voice and data core, and integration services. Dish uses several AWS services, including Regions, Local Zones and Outposts, to host its 5G core network and related components.

b] Swisscom is migrating its core applications, including OSS/BSS and portions of its 5G core, to AWS, according to Business Wire. This is part of a broader digital transformation strategy to modernize its infrastructure and services.

c] Telefónica Germany (O2 Telefónica) has moved its 5G core network to Amazon Web Services (AWS). The move, made in collaboration with Nokia, makes it the first telecom company to switch an existing 5G core to a public cloud provider. It launched its 5G cloud core, built entirely in the cloud, in July 2024, initially serving around one million subscribers.

2. Microsoft’s Azure cloud is running the 5G core networks of AT&T and the Middle East’s Etisalat. According to AT&T and Microsoft, AT&T is using Microsoft’s Azure Operator Nexus platform to run its 5G core network, including both standalone (SA) and non-standalone (NSA) deployments. The move is part of a strategic partnership under which AT&T is shifting its 5G mobile network to the Microsoft cloud. However, AT&T’s 5G core network is not yet commercially available nationwide.

3. Ericsson has partnered with Google Cloud to offer 5G core as a service (5GCaaS) on Google Cloud’s infrastructure. This allows operators to deploy and manage their 5G core network functions on Google’s cloud rather than relying solely on traditional on-premises infrastructure. This on-demand service, recently launched with Google, seems aimed mainly at smaller telcos keen to avoid big upfront costs, or at specific scenarios. For much bigger needs, Google markets a competitor to AWS Outposts under the Google Distributed Cloud (GDC) brand.

A serious concern with this Ericsson-Google offering is cloud provider lock-in, i.e. a telco would not be able to move the 5GCaaS provided by Ericsson to an alternative cloud platform. Going “native,” in this case, meant building on top of Google-specific technologies, which rules out any prospect of a “lift and shift” to AWS, Microsoft or someone else, said Eric Parsons, Ericsson’s vice president of emerging segments in core networks, on a recent call with Light Reading.

……………………………………………………………………………………………………………………………………………………………………….

Google Cloud for Network Functions:

Angelo Libertucci, Google’s global head of telecom, told Light Reading that the “timing is right” for a Google campaign targeting telco networks after years of sluggish industry progress. “The pressures that telcos are dealing with – the higher capex, lower ARPU [average revenue per user], competitiveness – it’s been a tough two years and there have been a number of layoffs, at least in North America,” he told Light Reading at last week’s Digital Transformation World event in Copenhagen.

“We run the largest private network on the planet,” said Libertucci. “We have over 2 million miles of fiber.” Services for more than a billion users are supported “with a fraction of the people that even the smallest regional telcos have, and that’s because everything we do is automated,” he claimed.

“There haven’t been that many network functions that run in the cloud – you can probably name them on less than four fingers,” he said. “So we don’t think we’ve really missed the boat yet on that one.” Indeed, most network functions are still deployed on telco premises (aka central offices).

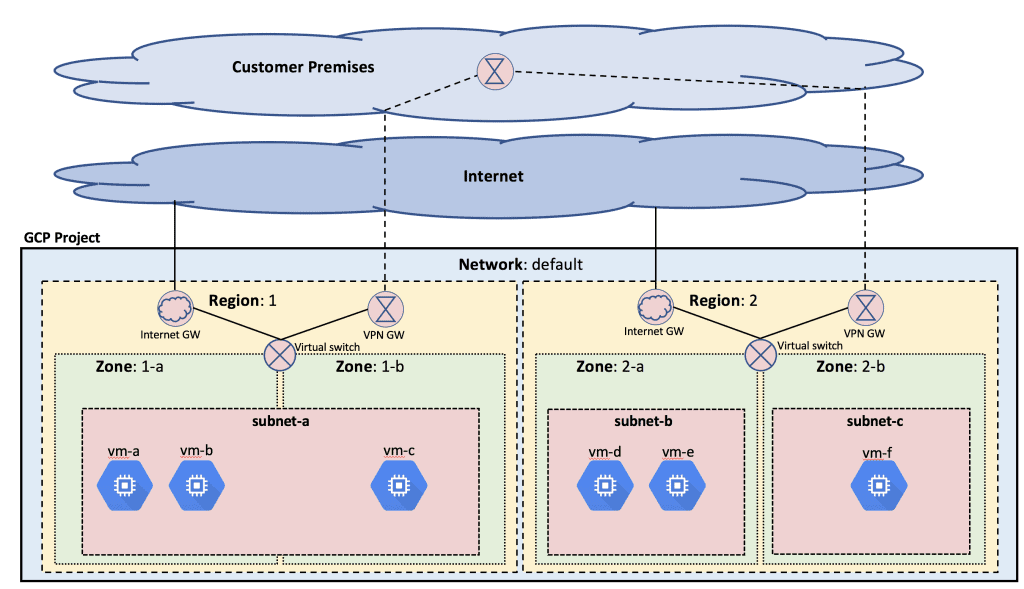

Image Credit: Google Cloud Platform

Deutsche Telekom partnered with Google earlier this year to build an agentic AI called RAN Guardian. Built using Gemini 2.0 in Vertex AI from Google Cloud, the agent can analyze network behavior, detect performance issues and take corrective action without manual intervention, with the aim of improving network reliability, reducing operational costs and enhancing customer experience. Deutsche Telekom keeps the network data at its own facilities but relies on interconnection to Google Cloud for these functions.

Libertucci framed the choice operators now face: “Do I then decide to keep it (network functions and data) on-prem and maintain that pre-processing pipeline that I have? Or is there a cost benefit to just run it in cloud, because then you have all the native integration? You don’t have any interconnect, you have all the data for any use case that you ever wanted or could think of. It’s much easier and much more seamless.” Such autonomous networking, in his view, is now the killer use case for the public cloud.

Yet many telco executives believe that public cloud facilities are incapable of handling certain network functions. European telcos, including BT, Deutsche Telekom, Orange and Vodafone, have invested in their own private cloud platforms for telco workloads. Also, regulators in some countries may block operators from using public clouds. BT said this year that local legislation now prevents it from using the public cloud for network functions. European authorities increasingly talk of the need for a “sovereign cloud” under the full control of local players.

Google does claim to have a set of “sovereign cloud” products that ensure data is stored in the country where the telco operates. “We have fully air-gapped sovereign cloud offerings with Google Cloud binaries that we’ve done in partnership with telcos for years now,” said Libertucci. The open question is whether these offerings will always meet the definition of sovereign cloud. “If sovereign means you can’t use an American-owned organization, then that’s another part of the definition that somehow we will have to find a way to address,” he added. “If you are cloud-native, it’s supposed to be easier to move to any cloud, but with telco it’s not that simple because it’s a very performance-oriented workload,” said Libertucci.

Libertucci thinks operators are therefore likely to assign whole regions to specific combinations of public cloud providers and telco vendors, as they have done on the network side. “You see telcos awarding a region to Huawei and another to Ericsson with complete separation between them. They might choose to go down that route with network vendors as well and so you may have an Ericsson and Google part of the network.”

“We’re a platform company, we’re a data company and we’re an AI company,” said Libertucci. “I think we’re happy now with being a platform others develop on.”

………………………………………………………………………………………………………………………………………………………………………………….

Cloud RAN Disappoints:

Outside a trial with Ericsson almost two years ago, there is not much sign of Google activity in cloud RAN, the use of general-purpose chips and cloud platforms to support RAN workloads. “So far, no one’s really pushed us down into that area,” said Libertucci. AWS, by contrast, has this year begun to show off an Outposts server built around one of its own Graviton central processing units for cloud RAN. Currently, however, it does not appear to be supporting a cloud RAN deployment for any telco.

………………………………………………………………………………………………………………………………………………………………………………

References:

https://www.lightreading.com/cloud/google-preps-public-cloud-charge-at-telecom-as-microsoft-wobbles

Deutsche Telekom and Google Cloud partner on “RAN Guardian” AI agent

Key Objectives of WG Technology Aspects at ITU-R WP 5D meeting June 24-July 3, 2025

ITU-R WP 5D is responsible for the overall radio system aspects of the terrestrial component of International Mobile Telecommunications (IMT) systems, comprising the current IMT-2000, IMT-Advanced and IMT-2020 as well as IMT for 2030 and beyond. Note that WP 5D’s work covers only terrestrial radio interfaces. It does not include the 5G or 6G core network or satellite network access.

ITU-R WP5D Technology Aspects Working Group (WG) consists of several Sub Working Groups (SWGs):

- SWG IMT SPECIFICATIONS

- SWG EVALUATION

- SWG RADIO ASPECTS

- SWG IMT UNWANTED EMISSIONS

- SWG IMT COORDINATION

Key objectives of WG Technology Aspects at their June 24-July 3, 2025 meeting include:

- Continue revising Recommendation ITU-R M.2150-2 (5G) and Recommendation ITU-R M.2012-6 (IMT-Advanced, aka 4G), including consideration of further revisions based on contributions;

- Continue working on revision of Document IMT-2030/2 “Process” – submission, evaluation process and consensus building process for IMT-2030;

- Start to work on candidate technology submission template for IMT-2030 (6G);

- Continue working on Report ITU-R M.[IMT-2030.TECH PERF REQ] – minimum requirements related to technical performance for IMT-2030 radio interface(s);

- Continue working on Report ITU-R M.[IMT-2030.EVAL] – Guidelines for evaluation of radio interface technologies for IMT-2030;

- Continue working on Report ITU-R M.[IMT-TROPO DUCT MITIGATION] – Mitigation of interference for IMT network under tropospheric ducting effect;

- Continue working on the documents of unwanted emission characteristics of base/mobile stations using the terrestrial radio interfaces of IMT-2020.

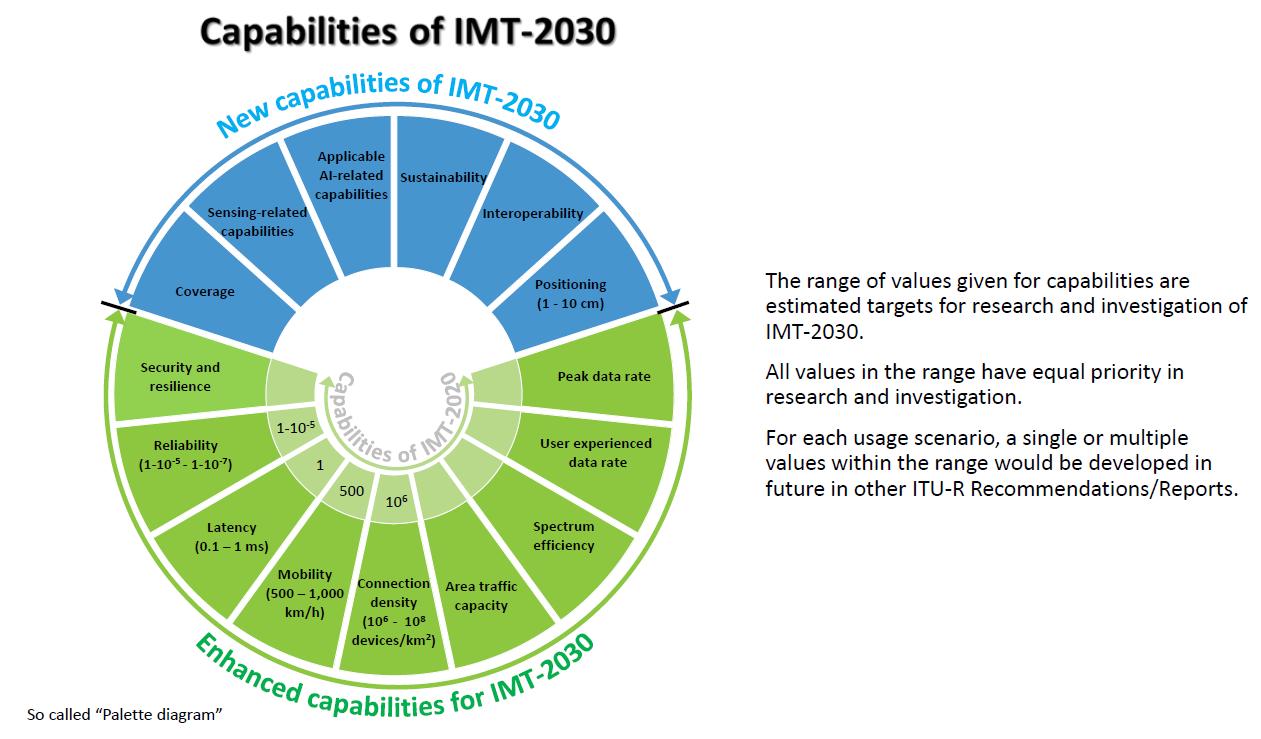

Backgrounder on IMT 2030 (6G):

Recommendation ITU-R M.2160, “Framework and overall objectives of the future development of IMT for 2030 and beyond,” identifies IMT-2030 capabilities intended to make IMT-2030 (6G) more capable, flexible, reliable and secure than previous IMT systems in providing diverse and novel services across six usage scenarios: immersive communication, hyper reliable and low latency communication (HRLLC), massive communication, ubiquitous connectivity, artificial intelligence and communication, and integrated sensing and communication (ISAC).

IMT-2030 can be considered from multiple perspectives, including users, manufacturers, application developers, network operators, verticals, and service and content providers. Therefore, it is recognized that technologies for IMT-2030 can be applied in a variety of deployment scenarios and can support a range of environments, service capabilities, and technology options.

IMT-2030 is also expected to be built on overarching aspects that act as design principles applicable to all usage scenarios. These design principles include, but are not limited to, sustainability; security and resilience; connecting the unconnected to provide universal and affordable access to all users regardless of location; and ubiquitous intelligence to improve overall system performance.

………………………………………………………………………………………………………………………………………………………………………………………………………….

References:

ITU-R WP 5D reports on: IMT-2030 (“6G”) Minimum Technology Performance Requirements; Evaluation Criteria & Methodology

Highlights of 3GPP Stage 1 Workshop on IMT 2030 (6G) Use Cases


Ericsson and e& (UAE) sign MoU for 6G collaboration vs ITU-R IMT-2030 framework

ITU-R: IMT-2030 (6G) Backgrounder and Envisioned Capabilities

ITU-R WP5D invites IMT-2030 RIT/SRIT contributions

NGMN issues ITU-R framework for IMT-2030 vs ITU-R WP5D Timeline for RIT/SRIT Standardization

NGMN: 6G Key Messages from a network operator point of view

IMT-2030 Technical Performance Requirements (TPR) from ITU-R WP5D

Draft new ITU-R recommendation (not yet approved): M.[IMT.FRAMEWORK FOR 2030 AND BEYOND]

ZTE’s AI infrastructure and AI-powered terminals revealed at MWC Shanghai

ZTE Corporation unveiled a full range of AI initiatives under the theme “Catalyzing Intelligent Innovation” at MWC Shanghai 2025, spanning AI + networks, AI applications and AI-powered terminals. In several demonstrations, ZTE showcased its advancements in AI phones and smart homes. Leveraging its underlying capabilities, the company says it is committed to providing full-stack solutions, from infrastructure to application ecosystems, for operators, enterprises and consumers, with the goal of co-creating an era of AI for all.

ZTE’s Chief Development Officer Cui Li outlined the vendor’s roadmap for building intelligent infrastructure and accelerating artificial intelligence (AI) adoption across industries during a keynote session at MWC Shanghai 2025. During her speech, Cui highlighted the growing influence of large AI models and the critical role of foundational infrastructure. “No matter how AI technology evolves in the future, the focus will remain on efficient infrastructure, optimized algorithms and practical applications,” she said. The Chinese vendor is deploying modular, prefabricated data center units and AI-based power management, which she said reduce energy use and cooling loads by more than 10%. These developments are aimed at delivering flexible, sustainable capacity to meet growing AI demands, the ZTE executive said.

ZTE is also advancing “AI-native” networks that shift from traditional architectures to heterogeneous computing platforms with embedded AI capabilities. This, Cui said, marks a shift from AI as a support tool to autonomous agents shaping operations. She emphasized the role of high-quality, secure data and efficient algorithms in building more capable AI. “Data is like fertile ‘soil’. Its volume, purity and security decide how well AI as a plant can grow,” she said, adding that “every digital application, including AI, depends on efficient and green infrastructure.”

ZTE is heavily investing in AI-native network architecture and high-efficiency computing:

- AI-native networks – ZTE is redesigning telecom infrastructure with embedded intelligence, modular data centers and AI-driven energy systems to meet escalating AI compute demands.

- Smarter models, better data – With advanced training methods and tools, ZTE is pushing the boundaries of model accuracy and real-world performance.

- Edge-to-core deployment – ZTE is integrating AI across consumer, home and industry use cases, delivering over 100 applied solutions across 18 verticals under its “AI for All” strategy.

ZTE has rolled out a full range of innovative solutions for network intelligence upgrades.

- AIR RAN solution: deeply integrates AI to improve energy efficiency, maintenance efficiency and user experience, driving 5G’s transition toward value creation

- AIR Net solution: a high-level autonomous network solution with three engines that advance network operations toward “Agentic Operations”

- AI-optical campus solution: addresses network pain points in various scenarios for higher operational efficiency in cities

- HI-NET solution: a high-performance, highly intelligent transport network solution enabling “terminal-edge-network-computing” synergy, with innovations including the industry’s first integrated sensing-communication-computing CPE, full-band OTNs, highest-density 800G intelligent switches and the world’s leading AI-native routers

Through technological innovations in wireless and wired networks, ZTE is building an energy-efficient, wide-coverage, and intelligent network infrastructure that meets current business needs and lays the groundwork for future AI-driven applications, positioning operators as first movers in digital transformation.

In the home terminal market, ZTE AI Home establishes a family-centric vDC and employs mixture-of-experts (MoE) AI agents to deliver personalized services for each household member. Supported by an AI network, home-based computing power, AI screens and AI companion robots, ZTE AI Home aims to provide a seamless and engaging experience, with 24/7, all-around, warm-hearted care for every family member. The product highlights include:

- AI FTTR: Serving as a thoughtful life assistant, it is equipped with a household knowledge base to proactively understand and optimize daily routines for every family member.

- AI Wi-Fi 7: Featuring the industry’s first omnidirectional antenna and smart roaming solution, it ensures high-speed and stable connectivity.

- Smart display: It acts like an exclusive personal trainer, leveraging precise semantic parsing technology to tailor personalized services for users.

- AI flexible screen & cloud PC: Multi-screen interactions cater to diverse needs for home entertainment and mobile office, creating a new paradigm for smart homes.

- AI companion robot: Backed by smart emotion recognition and bionic interaction systems, the robot safeguards children’s healthy growth with emotionally intelligent connections.

ZTE will anchor its product strategy on “Connectivity + Computing.” Collaborating with industry partners, the company says it is committed to driving industrial transformation and achieving computing and AI for all, thereby contributing to a smarter, more connected world.

References:

ZTE reports H1-2024 revenue of RMB 62.49 billion (+2.9% YoY) and net profit of RMB 5.73 billion (+4.8% YoY)

ZTE reports higher earnings & revenue in 1Q-2024; wins 2023 climate leadership award

Malaysia’s U Mobile signs MoU’s with Huawei and ZTE for 5G network rollout

China Mobile & ZTE use digital twin technology with 5G-Advanced on high-speed railway in China

Dell’Oro: RAN revenue growth in 1Q2025; AI RAN is a conundrum

Dell’Oro: RAN market still declining with Huawei, Ericsson, Nokia, ZTE and Samsung top vendors

Dell’Oro: Global RAN Market to Drop 21% between 2021 and 2029

Deloitte and TM Forum: How AI could revitalize the ailing telecom industry?

IEEE Techblog readers are well aware of the dire state of the global telecommunications industry. In particular:

- According to Deloitte, the global telecommunications industry is expected to have revenues of about US$1.53 trillion in 2024, up about 3% over the prior year. Both in 2024 and out to 2028, growth is expected to be higher in Asia Pacific and in Europe, the Middle East and Africa (EMEA) than in the Americas, where growth is expected to be around 1% annually.

- Telco sales were less than $1.8 trillion in 2022 vs. $1.9 trillion in 2012, according to Light Reading. Collective investments of about $1 trillion over a five-year period had brought a lousy return of less than 1%.

- Last year (2024), spending on radio access network infrastructure fell by $5 billion, more than 12% of the total, according to analyst firm Omdia, imperilling the kit vendors on which telcos rely.

Deloitte believes generative (gen) AI will have a huge impact on telecom network providers:

Telcos are using gen AI to reduce costs, become more efficient, and offer new services. Some are building new gen AI data centers to sell training and inference to others. What role does connectivity play in these data centers?

There is a gen AI gold rush expected over the next five years. Spending estimates range from hundreds of billions to over a trillion dollars on the physical layer required for gen AI: chips, data centers and electricity. Close to another hundred billion US dollars will likely be spent on the software and services layer. Telcos should focus on the opportunity to participate by connecting all of those different pieces of hardware and software. And shouldn’t telcos, whose business is all about connectivity, be able to profit in some way?

There are gen AI markets for connectivity. Inside the data centers, there are miles of mainly copper (and some fiber) cables for transmitting data from board to board and rack to rack. This market is worth billions of dollars in 2025, but much of this connectivity is provided by data center operators and chipmakers and has never been provided by telcos.

There are also massive, long-haul fiber networks ranging from tens to thousands of miles long. These connect (for example) a hyperscaler’s data centers across a region or continent, or even stretch along the seabed, connecting data centers across continents. Sometimes these new fiber networks are being built to support sovereign AI—that is, the need to keep all the AI data inside a given country or region.

Historically, those fiber networks were massive expenditures, built by only the largest telcos or (in the undersea case) built by consortia of telcos, to spread the cost across many players. In 2025, it looks like some of the major gen AI players are building at least some of this connection capacity, but largely on their own or with companies that are specialists in long-haul fiber.

Telcos may want to think about how they can remain relevant in this part of the connectivity space rather than ceding it to the gen AI behemoths. For context, big tech players are estimated to spend over US$100 billion on network capex between 2024 and 2030, representing 5% to 10% of their total capex in that period, up from a historical 4% to 5%.
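The capex shares quoted above also imply the overall scale of big tech spending. A minimal Python sketch, assuming exactly US$100 billion of network capex (the estimate says "over" that amount, so the totals are floors for each share), divides network capex by its share of total capex; the function name is hypothetical.

```python
def implied_total_capex(network_capex_bn: float, network_share: float) -> float:
    """Total capex implied when network capex is a given share of it."""
    return network_capex_bn / network_share


NETWORK_CAPEX_BN = 100.0  # assumption: US$100B for 2024-2030 (estimate says "over")

totals_bn = {
    share: implied_total_capex(NETWORK_CAPEX_BN, share) for share in (0.05, 0.10)
}
for share, total in totals_bn.items():
    print(f"Network at {share:.0%} of capex -> total of about ${total / 1000:.1f} trillion")
```

Under this assumption, the estimate implies big tech capex on the order of US$1 trillion to US$2 trillion over 2024-2030, with networking a 5% to 10% slice of it.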

The opportunities could be greater in connecting billions of consumers and enterprises. Telcos already serve these large markets, and as consumers and businesses start sending larger amounts of data over wireline and wireless networks, that growth might translate into higher revenues. A recent research report suggests that direct gen AI data traffic could reach the exabyte range by 2033.

The immediate challenge is that many gen AI use cases for both consumer and enterprise markets are not exactly bandwidth hogs: in 2025, they tend to be text-based (so small file sizes), and users may expect answers in seconds rather than milliseconds, which limits how telcos can monetize the traffic. Users will likely pay a premium for ultra-low latency, but if latency isn’t an issue, they are unlikely to do so.


A longer-term challenge is on-device edge computing. Even if users start doing a lot more with creating, consuming and sharing gen AI video in real time (requiring much larger file transmission and lower latency), the majority of devices (smartphones, PCs, wearables, or Internet of Things (IoT) devices in factories and ports) are expected to soon have onboard gen AI processing chips. These gen AI accelerators, combined with emerging small language models, may mean that network connectivity is less of an issue. Instead of a consumer recording a video, sending the raw image to the cloud for AI processing and having the cloud send it back, the image could be enhanced or altered locally, with less need for high-speed or low-latency connectivity.

Of course, small models might not work well. The chips on consumer and enterprise edge devices might not be powerful enough, or might be too power-inefficient and deliver unacceptably short battery life. In that case, telcos may be lifted by a wave of gen AI usage. But that’s unlikely to be in 2025, or even 2026.

Another potential source of gen AI monetization is what’s being called AI Radio Access Network (AI RAN). At the top of every cell tower are a bunch of radios and antennas. There is also a powerful processor, or processors, for controlling those radios and antennas. In 2024, a consortium (the AI-RAN Alliance) was formed to explore adding the same kind of generative AI chips found in data centers or enterprise edge servers (a mix of GPUs and CPUs) to every tower. The idea is that these chips could run the RAN, help make it more open, flexible and responsive, dynamically configure the network in real time, and perform gen AI inference or training as a service with any spare capacity, generating incremental revenue. A number of original equipment manufacturers (OEMs, including ones that currently account for over 95% of RAN sales), telcos and chip companies are now part of the alliance. Some expect AI RAN to be the logical successor to Open RAN, built on top of it, and perhaps even to be what 6G turns out to be.

…………………………………………………………………………………………………………………………………………………………………………….

The TM Forum has three broad “AI initiatives,” which are part of its overarching “Industry Missions.” These missions aim to change the future of global connectivity, with AI as a critical component.

The three broad “AI initiatives” (or “Industry Missions” where AI plays a central role) are:

- AI and Data Innovation: This mission focuses on the safe and widespread adoption of AI and data at scale within the telecommunications industry. It aims to help telcos accelerate, de-risk, and reduce the costs of applying AI technologies to cut operational expenses and drive revenue growth. This includes developing best practices, standards, data architectures, ontologies, and APIs.

- Autonomous Networks: This initiative is about unlocking the power of seamless end-to-end autonomous operations in telecommunications networks. AI is a fundamental technology for achieving higher levels of network automation, moving towards zero-touch, zero-wait, and zero-trouble operations.

- Composable IT and Ecosystems: While not solely an “AI initiative,” this mission focuses on simpler IT operations and partnering via AI-ready composable software. AI plays a significant role in enabling more agile and efficient IT systems that can adapt and integrate within dynamic ecosystems. It's based on the TM Forum's Open Digital Architecture (ODA). Eighteen big telcos are now running on ODA, while the same number of vendors are described by the TM Forum as “ready” to adopt it.

These initiatives are supported by various programs, tools, and resources, including:

- AI Operations (AIOps): Focusing on deploying and managing AI at scale, re-engineering operational processes to support AI, and governing AI operations.

- Responsible AI: Addressing ethical considerations, risk management, and governance frameworks for AI.

- Generative AI Maturity Interactive Tool (GAMIT): To help organizations assess their readiness to exploit the power of GenAI.

- AI Readiness Check (AIRC): An online tool for members to identify gaps in their AI adoption journey across key business dimensions.

- AI for Everyone (AI4X): A pillar focused on democratizing AI across all business functions within an organization.

Under the leadership of CEO Nik Willetts, a rejuvenated, AI-wielding TM Forum now underpins what many telcos do in business and operational support systems, the essential IT plumbing. The TM Forum rates automation on the same kind of scale the car industry uses for self-driving, with six levels running from 0 (completely manual) to 5 (the end of human intervention). Many telcos are on track for Level 4 in specific areas this year, said Willetts. Thanks to automation, China Mobile has already realized an 80% reduction in major faults, saving 3,000 person-years of effort and 4,000 kilowatt hours of energy each year.
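Because the scale runs from 0 to 5, it has six levels. The enum below lists them using the level names commonly cited in TM Forum autonomous-network material (L0 manual through L5 full autonomy). The `human_role` descriptions are a simplified paraphrase and an assumption, not TM Forum definitions, so check TM Forum's own documents for the formal wording.

```python
# TM Forum-style autonomous network levels (names as commonly cited; verify
# against TM Forum documents). The human_role text is a simplified assumption.
from enum import IntEnum


class AutonomyLevel(IntEnum):
    L0_MANUAL = 0       # Manual operation & maintenance
    L1_ASSISTED = 1     # Assisted operation & maintenance
    L2_PARTIAL = 2      # Partial autonomous network
    L3_CONDITIONAL = 3  # Conditional autonomous network
    L4_HIGH = 4         # High autonomous network
    L5_FULL = 5         # Full autonomous network


def human_role(level: AutonomyLevel) -> str:
    """Rough reading of where people sit at each level (illustrative only)."""
    if level <= AutonomyLevel.L1_ASSISTED:
        return "humans operate; tools assist"
    if level <= AutonomyLevel.L3_CONDITIONAL:
        return "system acts in defined scenarios; humans supervise"
    if level == AutonomyLevel.L4_HIGH:
        return "system closes the loop in a domain; humans handle exceptions"
    return "no human intervention"


print(len(AutonomyLevel))  # six levels, 0 through 5
for lvl in AutonomyLevel:
    print(lvl.value, lvl.name, "-", human_role(lvl))
```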

Outside of China, telcos and telco vendors are leaning heavily on technologies mainly developed by just a few U.S. companies to implement AI. A person remains in the loop for critical decision-making, but the justifications for taking any decision are increasingly provided by systems built on the core underlying technologies from those same few companies. As IEEE Techblog has noted, AI is still hallucinating – throwing up nonsense or falsehoods – just as domain-specific experts are being threatened by it.

Agentic AI substitutes interacting software programs for junior technicians, the future decision-makers. If AI Level 4 renders them superfluous, where do the future decision-makers come from?

Caroline Chappell, an independent consultant with years of expertise in the telecom industry, says there is now talk of what the AI pundits call “learning world models,” more sophisticated AI that grows to understand its environment much as a baby does. When mature, it could come up with completely different approaches to the design of telecom networks and technologies. At this stage, it may be impossible for almost anyone to understand what AI is doing, she said.

References:

AI is Getting Smarter, but Hallucinations Are Getting Worse

McKinsey: AI infrastructure opportunity for telcos? AI developments in the telecom sector

Ericsson revamps its OSS/BSS with AI using Amazon Bedrock as a foundation

At this week’s TM Forum-organized Digital Transformation World (DTW) event in Copenhagen, Ericsson gave its business and operations support systems (BSS/OSS) portfolio a complete AI makeover. The revamp aims to improve operational efficiency, boost business growth, and elevate customer experiences. It includes a Gen-AI Lab, where telcos can try out their latest BSS/OSS-related ideas; a Telco Agentic AI Studio, where developers are invited to build generative AI products for telcos; and a range of Ericsson’s own Telco IT AI apps. Underpinning all this is the Telco IT AI Engine, which handles various BSS/OSS orchestration tasks.

Ericsson is investing to enable CSPs to make a real impact with AI, intent and automation. AI is now embedded throughout the portfolio, and the other updates span five critical, interlinked areas of a CSP’s operational transformation, each with a clear rationale and vision for the value it generates. Ericsson cites benefits for telcos in each area:

- Data – Make your data more useful. Introducing Telco DataOps Platform. An evolution from the existing Ericsson Mediation, the platform enables unified data collection, processing, management, and governance, removing silos and complexity to make data more useful across the whole business, and fuel effective AI to run their business and operations more smoothly.

- Cloud and IT – Stay ahead of the business. Introducing Ericsson Intelligent IT Suite. A holistic end-to-end approach supporting OSS/BSS evolution designed for Telco scale to accelerate delivery, streamline operations, and empower teams with the tools to unlock value from day one and beyond. It enables CSPs to embrace innovative transformative approaches that deliver real-time business agility and impact to stay ahead of business demands in rapidly evolving OSS/BSS landscapes.

- Monetization – Make sure you get paid. Introducing Ericsson Charging and Billing Evolved. A cloud-native monetization platform that enables real-time charging and billing for multi-sided business models. It is powered by cutting-edge AI capabilities that make it easy to accelerate partner-led growth, launch and monetize enterprise services efficiently, and capture revenue across all business lines at scale.

- Service Orchestration – Deliver as fast as you can sell. Upgraded Ericsson Service Orchestration and Assurance with Agentic AI: Uses AI and intent to automatically set up and manage services based on a CSP’s business goals, providing a robust engine for transforming to autonomous networks. It empowers CSPs to cut out manual steps and provides the infrastructure to launch and scale differentiated connectivity services.

- Core Commerce – Be easy to buy from. AI-enabled core commerce. Streamline selling with intelligent offer creation. Key capabilities include efficient offering design through a Gen-AI-capable product configuration assistant and guided selling using an intelligent telco-specific CPQ for seamless ‘Quote to Cash’ processes, supported by a CRM-agnostic approach. CSPs can launch tailored enterprise solutions faster and co-create offers with partners, all while delivering seamless omni-channel experiences.
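Ericsson does not describe how its intent engine works internally. As a generic illustration of what “intent-based” orchestration means, the sketch below compares a declared service goal with the observed state and emits corrective actions. The `Intent` and `Observed` types, the slice name and the action strings are all hypothetical and are not Ericsson's API.

```python
# Generic, hypothetical intent-based orchestration check: declared goal vs
# observed state -> list of corrective actions. Not Ericsson's implementation.
from dataclasses import dataclass
from typing import List


@dataclass
class Intent:
    service: str
    max_latency_ms: float
    min_throughput_mbps: float


@dataclass
class Observed:
    latency_ms: float
    throughput_mbps: float


def plan_actions(intent: Intent, state: Observed) -> List[str]:
    """Return the (hypothetical) actions needed to bring the service back within intent."""
    actions = []
    if state.latency_ms > intent.max_latency_ms:
        actions.append(f"{intent.service}: move traffic to lower-latency path")
    if state.throughput_mbps < intent.min_throughput_mbps:
        actions.append(f"{intent.service}: scale up capacity")
    return actions  # an empty list means the intent is currently met


gold = Intent("enterprise-slice-gold", max_latency_ms=20, min_throughput_mbps=500)
print(plan_actions(gold, Observed(latency_ms=35, throughput_mbps=620)))
```

The point of the intent model is that the CSP states *what* it wants (latency and throughput targets) and the orchestrator works out *how*; in a real system the action planning would be far richer than two `if` statements.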

Grameenphone, a Bangladeshi telco with more than 80 million subscribers, is an Ericsson BSS/OSS customer. “They can’t do massive investments in areas that aren’t going to give a return,” said Jason Keane, head of Ericsson’s business and operational support systems portfolio, who noted the low average revenue per user (ARPU) in the Bangladeshi telecom market. The technologies developed by Ericsson are helping Grameenphone’s subscribers with top-ups, bill payments and operational issues.

“What they’re saying is we want to enable our customers to have a fast, seamless experience, where AI can help in some of the interaction flows between external systems,” Keane said. “AI itself isn’t free. You’ve got to pay your consumption, and it can add up if you don’t use it correctly.”

To date, very few companies have seen financial benefits from AI in the form of either higher sales or lower costs. The ROI just isn’t there. If organizations end up spending more on AI systems than they would on manual effort to achieve the same results, the money is wasted. Another issue is the poor quality of telco data, much of which can’t be used effectively to train AI agents.
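Whether AI pays off can be framed as a break-even calculation: AI carries a fixed platform cost plus per-task consumption (and often some human review), while manual work scales linearly with volume. The sketch below uses invented prices, token counts and labour rates purely to show the shape of the comparison.

```python
# Hypothetical break-even comparison: AI-assisted vs manual handling of a task.
# Every price, token count and labour rate here is an invented placeholder.
from typing import Optional


def ai_cost_per_task(tokens_per_task: float, usd_per_million_tokens: float,
                     review_minutes: float, hourly_rate: float) -> float:
    """Consumption cost plus any human review that still happens per task."""
    usage = tokens_per_task / 1_000_000 * usd_per_million_tokens
    review = review_minutes / 60 * hourly_rate
    return usage + review


def manual_cost_per_task(minutes: float, hourly_rate: float) -> float:
    return minutes / 60 * hourly_rate


def break_even_tasks(platform_fee: float, ai_marginal: float,
                     manual_marginal: float) -> Optional[float]:
    """Monthly volume above which AI is cheaper; None if AI never pays off."""
    if ai_marginal >= manual_marginal:
        return None
    return platform_fee / (manual_marginal - ai_marginal)


if __name__ == "__main__":
    rate = 30.0     # hypothetical loaded labour cost, USD/hour
    ai = ai_cost_per_task(20_000, 10.0, review_minutes=1, hourly_rate=rate)
    manual = manual_cost_per_task(6, rate)
    fee = 5_000.0   # hypothetical fixed monthly platform fee
    for tasks in (500, 10_000):
        total_ai = fee + tasks * ai
        total_manual = tasks * manual
        print(f"{tasks:>6} tasks: AI ${total_ai:,.0f} vs manual ${total_manual:,.0f}")
    print(f"break-even ≈ {break_even_tasks(fee, ai, manual):,.0f} tasks/month")
```

At low volumes the fixed fee dominates and manual effort is cheaper; above the break-even volume the comparison reverses. If per-task consumption or review time creeps up to the manual cost, `break_even_tasks` returns `None`, which is the "money wasted" case described above.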

Ericsson’s booth at the DTW Ignite 2025 event in Copenhagen

………………………………………………………………………………………………………………………………………………………..

Ericsson appears to have relied heavily on Amazon Web Services (AWS) for the technologies it is showcasing at DTW this week. Amazon Bedrock, a managed service for building generative AI applications on foundation models, is the foundation of the Gen-AI Lab and the Telco Agentic AI Studio. “We had to pick one, right?” said Keane. “I picked Amazon. It’s a good provider, and this is the model I do my development against.”

Regarding AI’s threat to the jobs of OSS/BSS workers, Light Reading’s Iain Morris wrote:

“Wider adoption by telcos of Ericsson’s latest technologies, and similar offerings from rivals, might be a big negative for many telco operations employees. At most immediate risk are the junior technicians or programmers dealing with basic code that can be easily handled by AI. But the senior programmers had to start somewhere, and even they don’t look safe. AI enthusiasts dream of what the TM Forum calls the fully autonomous network, when people are out of the loop and the operation is run almost entirely by machines.”

Ericsson has realized its OSS and BSS tools need to address the requirements of network operators that have already adopted, or soon will adopt, cloud-native processes, run cloud-based horizontal IT platforms, and make extensive use of AI to automate back-office processes. These operators also want to introduce autonomous network operations that reduce manual intervention and the time needed to address problems, while adding greater agility (as long as the right foundations are in place).

Mats Karlsson, Head of Solution Area Business and Operations Support Systems, Ericsson says: “What we are unveiling today illustrates a transformative step into industrializing Business and Operations Support Systems for the autonomous age. Using AI and automation, as well as our decades of knowledge and experience in our people, technology, processes – we get results. These changes will ensure we empower CSPs to unlock value precisely when and where it can be captured. We operate in a complex industry, one which is evidently in need of a focus on no nonsense OSS/BSS. These changes, and our commitment to continuous evolution for innovation, will help simplify it where possible, ensuring that CSPs can get on with their key goals of building better, more efficient services for their customers while securing existing revenue and striving for new revenue opportunities.”

Ahmad Latif Ali, Associate Vice President, EMEA Telecommunications Insights at IDC says: “Our recent research, featured in the IDC InfoBrief “Mapping the OSS/BSS Transformation Journey: Accelerate Innovation and Commercial Success,” highlights recurring challenges organizations faced in transformation initiatives, particularly the complex and often simultaneous evolution of systems, processes, and organizational structures. Ericsson’s continuous evolution of OSS/BSS addresses these key, interlinked transformation challenges head-on, paving the way for automation powered by advanced AI capabilities. This approach creates effective pathways to modernize OSS/BSS and supports meaningful progress across the transformation journey.”

References:

McKinsey: AI infrastructure opportunity for telcos? AI developments in the telecom sector

Telecom sessions at Nvidia’s 2025 AI developers GTC: March 17–21 in San Jose, CA

Quartet launches “Open Telecom AI Platform” with multiple AI layers and domains

Goldman Sachs: Big 3 China telecom operators are the biggest beneficiaries of China’s AI boom via DeepSeek models; China Mobile’s ‘AI+NETWORK’ strategy

Generative AI in telecom; ChatGPT as a manager? ChatGPT vs Google Search

Allied Market Research: Global AI in telecom market forecast to reach $38.8 billion by 2031 with CAGR of 41.4% (from 2022 to 2031)

The case for and against AI in telecommunications; record quarter for AI venture funding and M&A deals

SK Group and AWS to build Korea’s largest AI data center in Ulsan

Amazon Web Services (AWS) is partnering with the SK Group to build South Korea’s largest AI data center. The two companies are expected to launch the project later this month and will hold a groundbreaking ceremony for the 100MW facility in August, according to state news service Yonhap.

Scheduled to begin operations in 2027, the AI Zone will empower organizations in Korea to develop innovative AI applications locally while leveraging world-class AWS services like Amazon SageMaker, Bedrock, and Q. SK Group expects to bolster Korea’s AI competitiveness and establish the region as a key hub for hyperscale infrastructure in Asia-Pacific through AI initiatives.

AWS provides on-demand cloud computing platforms and application programming interfaces (APIs) to individuals, businesses and governments on a pay-per-use basis.

The data center will be built on a 36,000-square-meter site in the Mipo industrial complex in Ulsan, 305 kilometers southeast of Seoul. It will house 60,000 graphics processing units (GPUs) and have a power capacity of 100 megawatts, making it the country’s first AI infrastructure of such scale, the sources said.

Ryu Young-sang, chief executive officer (CEO) of SK Telecom Co., had announced the company’s plan to build a hyperscale AI data center equipped with 60,000 GPUs in collaboration with a global tech partner, during the Mobile World Congress (MWC) 2025 held in Spain in March.

SK Telecom plans to invest 3.4 trillion won (US$2.49 billion) in AI infrastructure by 2028, with a significant portion expected to go to the data center project. SK Telecom, South Korea’s biggest mobile operator and 31% owned by SK Group, will manage the project. “They have been working on the project, but the exact timeline and other details have yet to be finalized,” an SK Group spokesperson said.

The AI data center will be developed in two phases, with the initial 40MW phase to be completed by November 2027 and the full 100MW capacity to be operational by February 2029, the Korea Herald reported Monday. Once completed, the facility, powered by 60,000 graphics processing units, will have a power capacity of 103 megawatts, making it the country’s largest AI infrastructure, sources said.
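The reported figures allow a quick sanity check on the facility's power budget. The sketch below uses only the numbers quoted above (100 MW, 103 MW, a 40 MW first phase and 60,000 GPUs). The per-GPU figure is total facility power divided by GPU count, so it includes cooling and other overhead, and the phase-1 GPU count assumes GPUs are installed in proportion to power, which is an assumption rather than a reported number.

```python
# Back-of-envelope power budget for the planned Ulsan AI data center,
# using only the figures reported in the text.

TOTAL_MW = 100.0         # full build (Feb 2029)
REPORTED_ALT_MW = 103.0  # Korea Herald figure for the completed facility
PHASE1_MW = 40.0         # first phase (Nov 2027)
GPUS = 60_000


def kw_per_gpu(facility_mw: float, gpus: int) -> float:
    """Facility power divided by GPU count, in kW. Includes cooling, networking
    and other overhead, so a GPU's own draw is lower than this figure."""
    return facility_mw * 1_000 / gpus


phase1_share = PHASE1_MW / TOTAL_MW
# Assumption: GPUs are installed in proportion to power in phase 1.
phase1_gpus = GPUS * phase1_share

print(f"{kw_per_gpu(TOTAL_MW, GPUS):.2f} kW per GPU at 100 MW, "
      f"{kw_per_gpu(REPORTED_ALT_MW, GPUS):.2f} kW at 103 MW")
print(f"phase 1: {phase1_share:.0%} of full power, ~{phase1_gpus:,.0f} GPUs if proportional")
```

Either way the facility budget works out to roughly 1.7 kW per GPU, so the 100 MW and 103 MW figures are close enough not to change the picture.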

SK Group appears to have chosen Ulsan as the site, considering its proximity to SK Gas’ liquefied natural gas combined heat and power plant, ensuring a stable supply of large-scale electricity essential for data center operations. The facility is also capable of utilizing LNG cold energy for data center cooling.

SKT last month released its revised AI pyramid strategy, targeting AI infrastructure including data centers, GPUaaS and customized data centers. It is also developing personal agents A. and Aster for consumers and AIX services for enterprise customers.

Globally, it has found partners through the Global Telecom Alliance, which it co-founded, and is collaborating with US firms Anthropic and Lambda.

SKT’s AI business unit is still small, however, recording just KRW156 billion ($115 million) in revenue in Q1, two-thirds of it from data center infrastructure. Its parent SK Group, which also includes memory chip giant SK Hynix and energy firm SK Innovation, reported $88 billion in revenue last year.
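As a consistency check, the won and dollar figures quoted for SK Telecom imply similar exchange rates, and the "two-thirds" share can be turned into an absolute number. The sketch below uses only the figures quoted in the text.

```python
# Consistency check on the KRW/USD figures quoted for SK Telecom.

def implied_krw_per_usd(krw: float, usd: float) -> float:
    return krw / usd


capex_rate = implied_krw_per_usd(3.4e12, 2.49e9)   # 3.4 trillion won = US$2.49 billion
revenue_rate = implied_krw_per_usd(156e9, 115e6)   # KRW156 billion = $115 million

# "two-thirds of it from data center infrastructure"
dc_revenue_krw = 156e9 * 2 / 3

print(f"implied rates: {capex_rate:,.0f} and {revenue_rate:,.0f} KRW per USD")
print(f"Q1 data center infrastructure revenue ≈ KRW{dc_revenue_krw / 1e9:.0f} billion")
```

Both implied rates land in the mid-1,300s, so the two conversions agree with each other, and roughly KRW104 billion of the unit's Q1 revenue came from data center infrastructure.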

AWS, the world’s largest cloud services provider, has been expanding its footprint in Korea. It currently runs a data center in Seoul and began constructing its second facility in Incheon’s Seo District in late 2023. The company has pledged to invest 7.85 trillion won in Korea’s cloud computing infrastructure by 2027.

“When SK Group’s exceptional technical capabilities combine with AWS’s comprehensive AI cloud services, we’ll empower customers of all sizes, and across all industries here in Korea to build and innovate with safe, secure AI technologies,” said Prasad Kalyanaraman, VP of Infrastructure Services at AWS. “This partnership represents our commitment to Korea’s AI future, and I couldn’t be more excited about what we’ll achieve together.”

Earlier this month, AWS launched its Taiwan cloud region, its 15th in Asia-Pacific, with plans to invest $5 billion in local cloud and AI infrastructure.

References:

https://en.yna.co.kr/view/AEN20250616004500320?section=k-biz/corporate

https://www.koreaherald.com/article/10510141

https://www.lightreading.com/data-centers/aws-sk-group-to-build-korea-s-largest-ai-data-center

Big tech firms target data infrastructure software companies to increase AI competitiveness

- Meta announced Friday a $14.3 billion deal for a 49% stake in data-labeling company Scale AI. Its 28-year-old co-founder and CEO will join Meta as an AI advisor.

- Salesforce announced plans last month to buy data integration company Informatica for $8 billion. It will enable Salesforce to better analyze and assimilate scattered data from across its internal and external systems before feeding it into its in-house AI system, Einstein AI, executives said at the time.

- IT management provider ServiceNow said in May it was buying data catalogue platform Data.world, which will allow ServiceNow to better understand the business context behind data, executives said when it was announced.

- IBM announced it was acquiring data management provider DataStax in February to manage and process unstructured data before feeding it to its AI platform.
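If the $14.3 billion buys exactly 49% of Scale AI's equity, the implied valuation of the whole company follows from one division. This is a simplification that ignores deal structure and share classes.

```python
# Implied valuation of Scale AI from Meta's reported stake purchase.
# Simplification: assumes the price applies uniformly to 49% of all equity.
stake_price_usd = 14.3e9
stake_fraction = 0.49

implied_valuation_usd = stake_price_usd / stake_fraction
print(f"implied valuation ≈ ${implied_valuation_usd / 1e9:.1f} billion")
```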

Hutchison Telecom is deploying 5G-Advanced in Hong Kong without 5G-A endpoints

Hutchison Telecom-Hong Kong is deploying 3GPP’s 5G-Advanced (5G-A) in high-traffic venues in Hong Kong, including the Hong Kong Convention and Exhibition Centre, the West Kowloon Cultural District and the new $3.9 billion Kai Tak Sports Park. However, 5G-A endpoints [1.] (such as smartphones and tablets) aren’t likely to arrive until next year, according to Hutchison Executive Director and CEO Kenny Koo. For now, the Hong Kong 5G-A deployment is therefore mostly symbolic, although Hutchison is doing some commercial business with 5G-A hotspots.

Hutchison used 5G-A modems to provide coverage for the annual Art Basel visual arts fair in March, enabling organizers to offer free Wi-Fi to visitors. It has also found a small niche in pop-up stores. In a demo earlier this month, 5G-A modems registered download and upload speeds of 3.1 Gbit/s and 370 Mbit/s, respectively.
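To put the demo speeds in perspective, the sketch below computes ideal transfer times for a hypothetical 1 GB file (decimal units), ignoring protocol overhead and radio conditions, along with the download-to-upload ratio.

```python
# Ideal transfer times at the 5G-A demo speeds quoted in the text.

DOWN_MBPS = 3_100  # 3.1 Gbit/s demo download
UP_MBPS = 370      # 370 Mbit/s demo upload


def seconds_to_transfer(size_gigabytes: float, rate_mbps: float) -> float:
    """GB -> megabits (x 8,000, decimal units), divided by Mbit/s.
    Ignores protocol overhead, retransmissions and radio conditions."""
    return size_gigabytes * 8_000 / rate_mbps


size_gb = 1.0  # hypothetical file size
print(f"download: {seconds_to_transfer(size_gb, DOWN_MBPS):.1f} s")
print(f"upload:   {seconds_to_transfer(size_gb, UP_MBPS):.1f} s")
print(f"download/upload ratio: {DOWN_MBPS / UP_MBPS:.1f}:1")
```

The roughly 8:1 asymmetry is typical of downlink-heavy cellular configurations, which matters for uses such as live event streaming that push video upstream.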

Note 1. Only a handful of 5G-A endpoint devices are available in mainland China, where operators are reporting 5G-A commercial networks in hundreds of cities – in the 3.5GHz, 4.9GHz and 2.1GHz bands.

“2025 marks the 5th anniversary of 5G launch. In December 2024, our 5G customer penetration rate reached 54%. At this important stage, we are comprehensively enhancing our 5G coverage and capacity while continuously optimizing user experience. Limited-time upgrade offers are also tailored to encourage customers to upgrade to 5G. Together, we are advancing into the new era of 5.5G (aka 5GA). In support of the development of the Northern Metropolis, we have taken the initiative to actively enhance 5G network coverage in the district as the flow of people and vehicles surges. This ensures that commuters travelling between the northwest New Territories and Kowloon can enjoy a smoother network experience at major transportation hubs, including Tai Lam Tunnel and the Kam Sheung Road section of the MTR Tuen Ma Line. In addition, we are helping to boost the mega event economy by activating 5.5G network hotspots at major event venues in Hong Kong including Kai Tak Sports Park, the West Kowloon Cultural District and the Hong Kong Convention and Exhibition Centre. Customers enjoy an improved experience at high-traffic hotspots compared with the original 5G coverage, with enhanced network speed, increased capacity and low latency performance provided by 5G broadband.”

“We try to position ourselves as a market leader in the technology evolution,” Koo told Light Reading. He said the 5G-A ecosystem “was not yet ready” because of the lack of devices that can support the 26 GHz and 28 GHz bands. “iPhone, Samsung and Huawei handsets do not support 5.5G in those bands,” he said, using the company’s preferred branding of 5G-Advanced.

Author’s Note: Koo did not mention that 5G-A has yet to be standardized by ITU-R as part of IMT 2020 RIT/SRIT aka the ITU-R M.2150 recommendation. 5G Advanced is included in 3GPP Release 18 and is expected to be part of M.2150 issue 3, now being developed by ITU-R WP 5D.

Hutchison’s subscriber base grew 17% to 4.6 million, mostly due to prepaid gains, while 5G penetration increased 8 percentage points to 54%. Koo said the company has been able to sustain that growth this year because of demand from inbound travelers from mainland China. “They like our prepaid cards,” he added. Last year, Hutchison’s roaming revenue increased 30% to 684 million Hong Kong dollars (US$87 million), and it now accounts for nearly a fifth of total service revenue.
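The growth figures above can be unwound to estimate the earlier baselines. The sketch assumes the 30% and 17% figures are simple year-over-year growth rates. Because roaming is "nearly" a fifth of service revenue, dividing by 0.20 gives a lower bound on total service revenue rather than an exact figure.

```python
# Unwinding Hutchison's reported growth figures to earlier baselines.
# Assumes the percentages are simple year-over-year growth rates.

roaming_now_hkd_m = 684.0                 # HK$ million, after +30%
roaming_prior_hkd_m = roaming_now_hkd_m / 1.30

# Roaming is "nearly a fifth" (slightly under 20%) of service revenue,
# so roaming / 0.20 is a lower bound on total service revenue.
service_revenue_floor_hkd_m = roaming_now_hkd_m / 0.20

subs_now_m = 4.6                          # million subscribers, after +17%
subs_prior_m = subs_now_m / 1.17

penetration_now_pct = 54
penetration_prior_pct = penetration_now_pct - 8  # +8 percentage points, not +8%

print(f"prior roaming revenue ≈ HK${roaming_prior_hkd_m:,.0f}m")
print(f"service revenue > HK${service_revenue_floor_hkd_m:,.0f}m")
print(f"prior subscribers ≈ {subs_prior_m:.2f}m; prior 5G penetration {penetration_prior_pct}%")
```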

eSIM is also a growing market for Hutchison. “There are a lot of travel SIM portals selling eSIM solutions right to consumers,” Koo said. The popularity of its eSIM product means Hutchison’s addressable market has expanded well beyond Hong Kong to reach mobile customers worldwide.

About Hutchison Telecommunications Hong Kong:

Hutchison Telecommunications Hong Kong Limited (“HTHK”) has launched 5G broadband services in both the consumer and enterprise markets, providing high-speed indoor and outdoor internet access. Leveraging a robust 5G network, HTHK has also extended the deployment of 5G solutions including 5G 4K live broadcasting, virtual reality and real-time data transmission to various verticals. HTHK plays a prominent role in developing a new economy ecosystem, channeling the latest technologies into innovations that set market trends and steer industry development.

……………………………………………………………………………………………………………………………………………………………

References:

https://www.lightreading.com/5g/hutchison-joins-5g-advanced-race

https://doc.irasia.com/listco/hk/hthkh/press/p250529.pdf

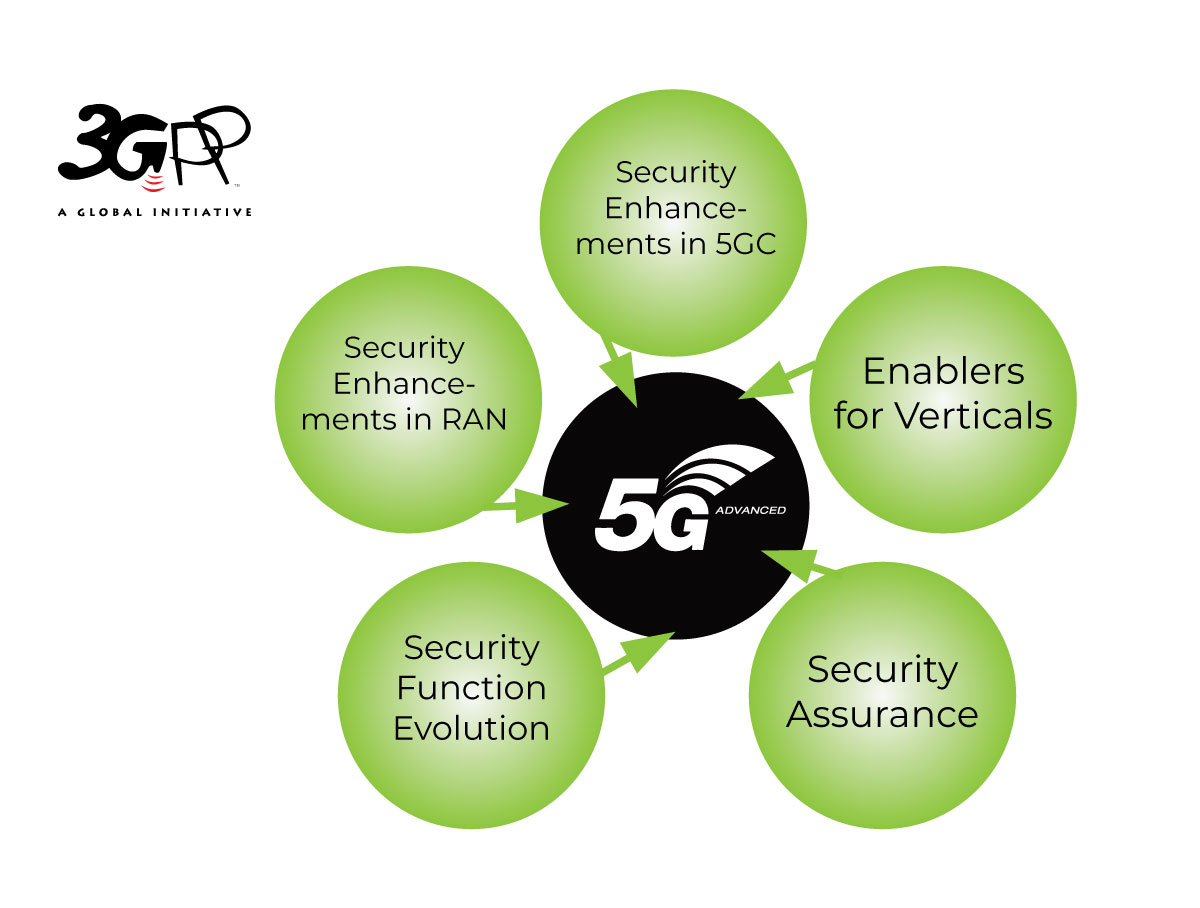

5G Advanced offers opportunities for new revenue streams; 3GPP specs for 5G FWA?

What is 5G Advanced and is it ready for deployment any time soon?

Huawei pushes 5.5G (aka 5G Advanced) but there are no completed 3GPP specs or ITU-R standards!

Nokia exec talks up “5G Advanced” (3GPP release 18) before 5G standards/specs have been completed

ITU-R recommendation IMT-2020-SAT.SPECS from ITU-R WP 5B to be based on 3GPP 5G NR-NTN and IoT-NTN (from Release 17 & 18)

Nile launches a Generative AI engine (NXI) to proactively detect and resolve enterprise network issues

Nile is a private, venture-funded technology company specializing in AI-driven network and security infrastructure services for enterprises and government organizations. Nile has pioneered the use of AI and machine learning in enterprise networking. Its latest generative AI capability, Nile Experience Intelligence (NXI), proactively resolves network issues before they impact users or IT teams, automating fault detection, root cause analysis, and remediation at scale. This approach reduces manual intervention, eliminates alert fatigue, and ensures high performance and uptime by autonomously managing networks.

Significant innovations include:

- Automated site surveys and network design using AI and machine learning

- Digital twins for simulating and optimizing network operations

- Edge-to-cloud zero-trust security built into all service components

- Closed-loop automation for continuous optimization without human intervention

Today, the company announced the launch of Nile Experience Intelligence (NXI), a novel generative AI capability designed to proactively resolve network issues before they impact IT teams, users, IoT devices, or the performance standards defined by Nile’s Network-as-a-Service (NaaS) guarantee. As a core component of the Nile Access Service [1.], NXI uniquely enables Nile to take advantage of its comprehensive, built-in AI automation capabilities. NXI allows Nile to autonomously monitor every customer deployment at scale, identifying performance anomalies and network degradations that impact reliability and user experience. While others market their offerings as NaaS, only the Nile Access Service with NXI delivers a financially backed performance guarantee—an unmatched industry standard.

………………………………………………………………………………………………………………………………………………………………

Note 1. Nile Access Service is a campus Network-as-a-Service (NaaS) platform that delivers both wired and wireless LAN connectivity with integrated Zero Trust Networking (ZTN), automated lifecycle management, and a unique industry-first performance guarantee. The service is built on a vertically integrated stack of hardware, software, and cloud-based management, leveraging continuous monitoring, analytics, and AI-powered automation to simplify deployment, automate maintenance, and optimize network performance.

………………………………………………………………………………………………………………………………………………………………………………………………….

“Traditional networking and NaaS offerings based on service packs rely on IT organizations to write rules that are static and reactive, which requires continuous management. Nile and NXI flipped that approach by using generative AI to anticipate and resolve issues across our entire install base, before users or IT teams are even aware of them,” said Suresh Katukam, Chief Product Officer at Nile. “With NXI, instead of providing recommendations and asking customers to write rules that involve manual interaction—we’re enabling autonomous operations that provide a superior and uninterrupted user experience.”

Key capabilities include:

- Proactive Fault Detection and Root Cause Analysis: predictive modeling-based data analysis of billions of daily events, enabling proactive insights across Nile’s entire customer install base.

- Large Scale Automated Remediation: leveraging the power of generative AI and large language models (LLMs), NXI automatically validates and implements resolutions without manual intervention, virtually eliminating customer-generated trouble tickets.

- Eliminate Alert Fatigue: NXI eliminates alert overload by shifting focus from notifications to autonomous, actionable resolution, ensuring performance and uptime without IT intervention.
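Nile describes NXI as generative-AI based and does not publish its internals. For readers unfamiliar with closed-loop operation, the sketch below shows the generic detect, remediate, validate pattern, using a simple rolling z-score as a stand-in detector. The metric, threshold and remediation function are hypothetical, and this is not Nile's method.

```python
# Generic closed-loop sketch: detect an anomaly, remediate, then validate.
# A rolling z-score stands in for the detection model; names are hypothetical.
from statistics import mean, stdev
from typing import Callable, List


def is_anomalous(history: List[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it is more than `threshold` sample standard deviations
    away from the mean of recent history (a rolling z-score)."""
    if len(history) < 2:
        return False  # not enough data to judge
    sigma = stdev(history)
    if sigma == 0:
        return latest != history[-1]  # flat history: any change is unusual
    return abs(latest - mean(history)) / sigma > threshold


def closed_loop(history: List[float], latest: float,
                remediate: Callable[[], None], measure: Callable[[], float]) -> str:
    """Detect -> remediate -> validate; escalate only if the fix did not work."""
    if not is_anomalous(history, latest):
        return "healthy"
    remediate()
    after = measure()
    return "resolved" if not is_anomalous(history, after) else "escalate to human"


if __name__ == "__main__":
    # Hypothetical client retry rates (%) for one access point, then a spike.
    retries = [2.1, 1.9, 2.3, 2.0, 2.2, 1.8, 2.1]
    state = {"retry_rate": 9.5}

    def change_channel() -> None:  # hypothetical remediation action
        state["retry_rate"] = 2.0

    print(closed_loop(retries, state["retry_rate"], change_channel,
                      lambda: state["retry_rate"]))
```

The validation step is what makes the loop "closed": a fix is only considered done once the metric is back in range, and a human is pulled in only when it is not.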

Unlike rules-based systems dependent on human-configured logic and manual maintenance, NXI is:

- Generative AI and self-learning powered, eliminating the need for static, manually created rules that are prone to human error and require ongoing maintenance.

- Designed for scale, NXI already processes terabytes of data daily and effortlessly scales to manage thousands of networks simultaneously.

- Built on Nile’s standardized architecture, enabling consistent AI-driven optimization across all customer networks at scale.

- Closed-loop automated, no dashboards or recommended actions for customers to interpret, and no waiting on manual intervention.

Katukam added, “NXI is a game-changer for Nile. It enables us to stay ahead of user experience and continuously fine-tune the network to meet evolving needs. This is what true autonomous networking looks like—proactive, intelligent, and performance-guaranteed.”

From improved connectivity to consistent performance, Nile customers are already seeing the impact of NXI. For more information about NXI and Nile’s secure Network as a Service platform, visit www.nilesecure.com.

About Nile:

Nile is leading a fundamental shift in the networking industry, challenging decades-old conventions to deliver a radically new approach. By eliminating complexity and rethinking how networks are built, consumed, and operated, Nile is pioneering a new category designed for a modern, service-driven era. With a relentless focus on simplicity, security, reliability, and performance, Nile empowers organizations to move beyond the limitations of legacy infrastructure and embrace a future where networking is effortless, predictable, and fully aligned with their digital ambitions.

Nile is recognized as a disruptor in the enterprise networking market, offering a modern alternative to traditional vendors like Cisco and HPE. Its model enables organizations to reduce total cost of ownership by more than 60% and reclaim IT resources while providing superior connectivity. Major customers include Stanford University, Pitney Bowes, and Carta.

The company has received several industry accolades, including the CRN Tech Innovators Award (2024) and recognition in Gartner’s Peer Insights Voice of the Customer Report. Nile has raised over $300 million in funding, including a $175 million Series C round in 2023 to fuel expansion.

References:

https://nilesecure.com/company/about-us