China ITU filing to put ~200K satellites in low earth orbit while FCC authorizes 7.5K additional Starlink LEO satellites

- Purpose: The planned systems are intended to provide global broadband connectivity, data relay, and positioning services, directly competing with U.S. efforts like SpaceX’s Starlink network.

- Filing Entities: The primary filings were submitted by the state-backed Institute of Radio Spectrum Utilization and Technological Innovation, along with other commercial and state-owned companies like China Mobile and Shanghai Spacecom.

- Status: These filings are an initial step in a long international regulatory process and serve as a claim to limited spectrum and orbital slots. They do not guarantee all satellites will ultimately be built or launched. The actual deployment will be a gradual process over many years.

- Context: The move is part of an escalating “space race” to dominate the LEO environment. Early filings are crucial for securing priority access to orbital resources and avoiding signal interference. The sheer scale of the Chinese proposal would, if realized, dwarf most other planned constellations.

- Regulations: Under ITU rules, operators must bring a filed system into use within seven years of the initial filing and then deploy set percentages of the satellites by later milestone dates to retain their rights.

- Shanghai Yuanxin (Qianfan), currently China’s most advanced LEO satellite operator, has submitted a regulatory request for an additional 1,296 satellites.

- Telecommunications giant China Mobile is planning two separate constellations totaling 2,664 satellites.

- ChinaSat, the established state-owned satellite provider, is focusing on a 24-satellite medium-Earth orbit (MEO) system.

- GalaxySpace, a private satellite manufacturer based in Beijing, has applied for 187 satellites, and China Telecom has applied for 12.
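
To make the milestone rule concrete, here is a minimal Python sketch. It assumes the schedule adopted at WRC-19 in ITU Resolution 35 for non-geostationary systems: 10%, 50% and 100% of the filed satellites must be deployed within 2, 5 and 7 years after the end of the seven-year regulatory period that starts with the filing. Rounding up to whole satellites and the function name `milestone_schedule` are illustrative choices, and the real procedures add reporting details, tolerances and filing-specific dates that this sketch ignores.

```python
# Sketch only: assumes the WRC-19 Resolution 35 milestone schedule for
# non-GSO constellations; real ITU procedures include details omitted here.
import math

REGULATORY_PERIOD_YEARS = 7  # period to bring the filing into use

# (years after the end of the regulatory period, percent of filed satellites)
MILESTONES = [(2, 10), (5, 50), (7, 100)]


def milestone_schedule(filed_satellites: int) -> list[tuple[int, int]]:
    """Return (years after filing, minimum satellites deployed) per milestone."""
    schedule = []
    for years_after_period, percent in MILESTONES:
        # Round up: a fraction of a satellite cannot count toward a milestone.
        required = math.ceil(filed_satellites * percent / 100)
        schedule.append((REGULATORY_PERIOD_YEARS + years_after_period, required))
    return schedule


if __name__ == "__main__":
    # ~200,000 is the rounded total from the headline; 1,296 is Qianfan's request.
    for size in (200_000, 1_296):
        print(size, milestone_schedule(size))
```

The same helper applies to any of the filings listed above; only the satellite count changes.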

Image Credit: Klaus Ohlenschlaeger/Alamy Stock Photo

“This gives SpaceX what they need for the next couple of years of operation. They’re launching a bit over 3,000 satellites a year, so 7,500 satellites being authorized is potentially enough for SpaceX to do what they want to do until late 2027,” said Tim Farrar, satellite analyst and president at TMF Associates.

SpaceX has plans for a larger direct-to-device (D2D) satellite constellation that would use the AWS-4 and H-block spectrum it is acquiring from EchoStar. SpaceX is awaiting FCC approval for the US$17 billion deal, but the spectrum is not expected to be transferred until the end of November 2027.

The FCC noted that the changes will allow the Starlink system to serve more customers and deliver “gigabit speed service.” Along with permission for another tranche of satellites, the FCC has set new parameters for frequency use and lower orbit altitudes. The modified authorizations will also apply to new satellites to be launched.

Starlink’s LEO satellite network competitors include Amazon Leo, OneWeb and AST SpaceMobile.

………………………………………………………………………………………………………………………………………………………..

References:

U.S. BEAD overhaul to benefit Starlink/SpaceX at the expense of fiber broadband providers

Huge significance of EchoStar’s AWS-4 spectrum sale to SpaceX

Telstra selects SpaceX’s Starlink to bring Satellite-to-Mobile text messaging to its customers in Australia

SpaceX launches first set of Starlink satellites with direct-to-cell capabilities

SpaceX has majority of all satellites in orbit; Starlink achieves cash-flow breakeven

Amazon Leo (formerly Project Kuiper) unveils satellite broadband for enterprises; Competitive analysis with Starlink

NBN selects Amazon Project Kuiper over Starlink for LEO satellite internet service in Australia

GEO satellite internet from HughesNet and Viasat can’t compete with LEO Starlink in speed or latency

Amazon launches first Project Kuiper satellites in direct competition with SpaceX/Starlink

Vodafone and Amazon’s Project Kuiper to extend 4G/5G in Africa and Europe

New Linux Foundation white paper: How to integrate AI applications with telecom networks using standardized CAMARA APIs and the Model Context Protocol (MCP)

The Linux Foundation’s CAMARA project [1.] released a significant white paper, “In Concert: Bridging AI Systems & Network Infrastructure through MCP: How to Build Network-Aware Intelligent Applications.” The open source software organization says, “Telco network capabilities exposed through APIs provide a large benefit for customers. By simplifying telco network complexity with APIs and making the APIs available across telco networks and countries, CAMARA enables easy and seamless access.”

Note 1. CAMARA is an open source project within the Linux Foundation to define, develop and test network APIs. CAMARA works in close collaboration with the GSMA Operator Platform Group to align API requirements and publish API definitions. Harmonization of APIs is achieved through the fast, agile creation of working code with developer-friendly documentation. API definitions and reference implementations are free to use (Apache 2.0 license).

…………………………………………………………………………………………………………………………………………………………….

The white paper outlines how the Model Context Protocol (MCP) and CAMARA’s network APIs can provide AI systems with real-time network intelligence, enabling the development of more efficient and network-aware applications. This is seen as a critical step toward future autonomous networks that can manage and fix their own data discrepancies.

CAMARA facilitates the development of operator-agnostic network APIs, adhering to a “write once” paradigm to mitigate fragmentation and provide uniform access to essential network capabilities, including Quality on Demand (QoD), Device Location, Edge Discovery, and fraud prevention signals. The new technical paper details an architecture where an MCP server functions as an abstraction layer, translating CAMARA APIs into MCP-compliant “tools” that AI applications can seamlessly discover and invoke. This integration bridges the historical operational gap between AI systems and the underlying communication networks that power modern digital services. By leveraging MCP integration, AI agents can dynamically access the latest API capabilities upon release, circumventing the need for continuous code refactoring and ensuring immediate utilization of emerging network functionalities without implementation bottlenecks.

“AI agents increasingly shape the digital experiences people rely on every day, yet they operate disconnected from network capabilities – intelligence, control, and real-time source of truth,” said Herbert Damker, CAMARA TSC Chair and Lead Architect, Infrastructure Cloud at Deutsche Telekom. “CAMARA and MCP bring AI and network infrastructure into concert, securely and consistently across operators.”

The paper includes practical example scenarios for “network-aware” intelligent applications/agents, including:

- Intelligent video streaming with AI-powered quality optimization

- Banking fraud prevention using network-verified security context

- Local/edge-optimized AI deployment informed by network and edge resource conditions
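
The architecture described above, an MCP server that exposes CAMARA APIs as tools an AI agent can discover and call, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not CAMARA's reference implementation: it handles only the MCP JSON-RPC methods `tools/list` and `tools/call`, omits the transport and initialization handshake, and replaces the operator's network API with a local stub. The tool name `check_sim_swap`, the stub `fake_sim_swap_check` and its test phone number are hypothetical; the `phoneNumber`/`maxAge` argument names are loosely modeled on CAMARA's SIM Swap API, the kind of signal the banking fraud scenario would rely on. A production server would more likely be built with an official MCP SDK and call the operator's authenticated CAMARA endpoint.

```python
# Minimal sketch of an MCP-style server exposing one network API as a tool.
# Hypothetical: the tool name, the stub backend, and the test data.
import json
from typing import Any


def fake_sim_swap_check(phone_number: str, max_age_hours: int) -> dict[str, Any]:
    """Stand-in for an operator's SIM swap check; max_age_hours is ignored here."""
    recently_swapped = {"+15550001111"}  # made-up number for illustration
    return {"swapped": phone_number in recently_swapped}


# Tool registry: what the model sees (name, description, JSON Schema)
# plus a handler that translates tool arguments into the network API call.
TOOLS: dict[str, dict[str, Any]] = {
    "check_sim_swap": {
        "description": "Report whether the SIM behind a phone number was swapped recently.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "phoneNumber": {"type": "string"},
                "maxAge": {"type": "integer", "description": "Look-back window in hours"},
            },
            "required": ["phoneNumber"],
        },
        "handler": lambda args: fake_sim_swap_check(args["phoneNumber"], args.get("maxAge", 240)),
    },
}


def _reply(req_id: Any, **payload: Any) -> str:
    """Wrap a result or error in a JSON-RPC 2.0 response envelope."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id, **payload})


def handle_request(raw: str) -> str:
    """Answer the MCP methods tools/list and tools/call; reject everything else."""
    req = json.loads(raw)
    req_id = req.get("id")
    if req["method"] == "tools/list":
        # Advertise tools without exposing the internal handler.
        tools = [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]
        return _reply(req_id, result={"tools": tools})
    if req["method"] == "tools/call":
        params = req.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _reply(req_id, error={"code": -32602, "message": "Unknown tool"})
        output = tool["handler"](params.get("arguments", {}))
        content = [{"type": "text", "text": json.dumps(output)}]
        return _reply(req_id, result={"content": content, "isError": False})
    return _reply(req_id, error={"code": -32601, "message": "Method not found"})


if __name__ == "__main__":
    call = {"jsonrpc": "2.0", "id": 1, "method": "tools/call",
            "params": {"name": "check_sim_swap", "arguments": {"phoneNumber": "+15550001111"}}}
    print(handle_request(json.dumps(call)))
```

Adding another CAMARA API in this design means adding one registry entry; agents pick it up through `tools/list` without changes on their side, which is the "write once" benefit the paper describes.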

In addition to the architecture and use cases, the paper outlines CAMARA’s objectives for supporting MCP, which cover areas such as security guidelines, standardized MCP tooling for CAMARA APIs, and the quality requirements and success factors needed for production-grade implementations. The white paper is available for download on the CAMARA website.

Collaboration with the Agentic AI Foundation

The release of this work aligns with a major ecosystem milestone: MCP now lives under the Linux Foundation’s newly formed Agentic AI Foundation (AAIF), a sister initiative that provides neutral, open governance for key agentic AI building blocks. The Linux Foundation announced AAIF on December 9, 2025, with founding project contributions including Anthropic’s MCP, Block’s goose, and OpenAI’s AGENTS.md. AAIF’s launch emphasizes MCP’s role as a broadly adopted standard for connecting AI models to tools, data, and applications, with more than 10,000 published MCP servers cited by the Linux Foundation and Anthropic.

“With MCP now under the Linux Foundation’s Agentic AI Foundation, developers can invest with confidence in an open, vendor-neutral standard,” said Arpit Joshipura, general manager, Networking, Edge and IoT at the Linux Foundation. “CAMARA’s work demonstrates how MCP can unlock powerful new classes of network-aware AI applications.”

“The Agentic AI Foundation calls for trustworthy infrastructure. CAMARA answers that call. As AI shifts from conversation to orchestration, agentic workflows demand synchronization with reality,” said Nick Venezia, CEO and Founder, Centillion.AI, CAMARA End User Council Representative to the TSC. “We provide the contextual lens that allows AI to verify rather than infer, moving from guessing to knowing.”

References:

https://camaraproject.org/news/

IEEE/SCU SoE May 1st Virtual Panel Session: Open Source vs Proprietary Software Running on Disaggregated Hardware

Linux Foundation creates standards for voice technology with many partners

LF Networking 5G Super Blue Print project gets 7 new members

OCP – Linux Foundation Partnership Accelerates Megatrend of Open Software running on Open Hardware

Private 5G networks move to include automation, autonomous systems, edge computing & AI operations

A new report from PrivateLTEand5G.com analyzes the rapid evolution and expansion of global private cellular networks. The market research firm states that organizations worldwide shifted decisively from Private 5G feasibility trials to large-scale, operationally integrated deployments. The defining theme is no longer just connectivity, but intelligent automation, with private 5G – often in 5G Standalone (SA) configurations – powering sophisticated applications including autonomous vehicle fleets, AI-driven quality control, remote machinery operation, and comprehensive digital twins.

“The year 2025 marked a significant acceleration in the private cellular network market,” said Ashish Jain, Co-founder of KAIROS Pulse and PrivateLTEand5G.com. “Private network deployments are increasingly focused on enabling intelligent automation rather than simply providing connectivity. We’re seeing autonomous haulage systems in complex mining environments, AI-powered video analytics for safety, and private networks actively replacing legacy systems like Wi-Fi, DECT, and pagers in mission-critical healthcare and utilities operations.”

The report documents 70+ verified private network deployments across tens of countries, providing granular intelligence for organizations navigating this rapidly evolving landscape in 2026. It also offers industry-specific insights on key private cellular use cases:

- Manufacturing & Industrial: Smart factories deploying 5G for AI-driven quality control, digital twins, and autonomous logistics

- Ports & Logistics: Real-time cargo tracking, autonomous vehicle coordination, and crane digitalization at the world’s busiest terminals

- Transportation Infrastructure: Airports, railways, and smart mobility deploying mission-critical connectivity

- Healthcare & Education: Private networks enabling telemedicine, campus safety, and immersive learning

- Energy & Mining: Remote operations, predictive maintenance, and worker safety in extreme environments

Featured deployments span dozens of countries and showcase groundbreaking implementations such as:

- Autonomous Operations: Aker BP’s fully autonomous private 5G on North Sea oil platforms; Air New Zealand’s drone-based automated inventory management

- AI-Driven Manufacturing: BMW’s Debrecen facility with AI quality control and 1,000 industrial robots; Hyundai’s RedCap wireless vehicle inspection technology

- Mission-Critical Replacement: Austria’s Gesundheit Burgenland replacing pagers and DECT across five hospitals; Memphis utility’s CBRS network modernizing grid operations

- Broadcast Innovation: BT’s deployment of multiple network slices for the Emirates Sail Grand Prix; T-Mobile’s dedicated 5G network for the MLB All-Star Game

- Transportation Transformation: Deutsche Bahn’s first commercial 5G railway network; Maersk’s fleet-wide LTE across 450 ships

“While industrial sectors like manufacturing, mining, and logistics continue to lead adoption, the use cases have evolved substantially,” Jain noted. “The rise of Neutral Host networks is solving connectivity challenges for public-facing venues like stadiums and airports, while advanced 5G features like network slicing enable demanding applications such as live 4K broadcast production.”

The report emphasizes that the availability of dedicated spectrum – from CBRS in the United States to licensed bands in Europe and Asia – remains a critical enabler, providing the deterministic reliability required for autonomous and mission-critical systems being deployed.

The complete report is available for download at PrivateLTEand5G.com. The company says “it offers essential insights for enterprise technology leaders, telecom operators, system integrators, and innovation teams planning private cellular network strategies.”

………………………………………………………………………………………………………………………………………………………………………………………………………………..

Here’s what Google Gemini has to say about recent Private 5G network developments:

- Intelligent Automation & AI Operations: The focus has moved beyond simple connectivity. Private 5G, often in Standalone (SA) configurations, is now the backbone for sophisticated applications like autonomous vehicle fleets, remote-controlled machinery, and AI-driven quality control in industries such as manufacturing and mining. AI and Machine Learning (ML) are also being used for predictive maintenance and real-time network orchestration.

- Edge Computing Integration: To support the massive data generated by IoT devices and AI applications, data processing is moving closer to the source (the edge) to reduce latency and improve efficiency. This synergy is crucial for real-time decision-making in critical operations.

- 5G-Advanced Features (3GPP Release 18): Commercialization of 5G-Advanced is underway, building on and enhancing critical industrial features first introduced in earlier 5G releases, including:

  - URLLC (Ultra-Reliable, Low-Latency Communications): Essential for replacing wired connections in mission-critical control systems (achieving millisecond response times).

  - 5G RedCap (Reduced Capability): A cost-efficient technology for lower-power IoT and industrial sensors, bridging the gap between basic IoT needs and full 5G capabilities.

  - Network Slicing: This allows enterprises to create multiple virtual networks on a single physical infrastructure, each tailored with specific performance parameters (e.g., bandwidth, latency) for different applications.

- Open RAN and Virtualization: The adoption of Open Radio Access Network (Open RAN) and virtualized RAN solutions is increasing, reducing infrastructure costs, preventing vendor lock-in, and allowing for greater vendor diversity.

- Hybrid Networks: Enterprises are increasingly combining private 5G with existing Wi-Fi 6/7 networks and public cellular coverage (neutral host systems) to provide seamless indoor and outdoor connectivity across large campuses and remote areas.

- Enhanced Security: Private 5G inherently offers better security than public networks through dedicated, isolated environments and SIM-based authentication. New solutions from companies like Palo Alto Networks and Trend Micro are focusing on extending security visibility across both IT and operational technology (OT) domains.

- Market Growth: The private 5G market is experiencing rapid acceleration, projected to grow at a CAGR of over 40% through the rest of the decade.

- Key Industries: Manufacturing, logistics, mining, utilities, and healthcare are leading the adoption, leveraging private 5G for use cases such as factory automation, connected robotics, remote patient monitoring, and autonomous vehicles.

- Regional Markets:

  - North America: Dominates the market in spending and innovation, driven by spectrum access like CBRS (Citizens Broadband Radio Service).

  - Asia-Pacific: The fastest-growing region, with China leading in large-scale deployments due to state-funded initiatives.

  - Europe: Seeing significant interest, with countries like Germany, the UK, and Sweden allocating dedicated local spectrum for industrial use.

- Simplified Deployment: To overcome the complexity and skill shortages associated with deployments, vendors are offering “network-in-a-box” solutions and “5G-as-a-service” (5GaaS) models, which shift costs from capital expenditures (CapEx) to operational expenditures (OpEx).
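
The network slicing item above can be made concrete with a small Python sketch. In 3GPP 5G (TS 23.501), a slice is identified by an S-NSSAI: a 1-octet Slice/Service Type (SST), where the standardized values 1, 2 and 3 denote eMBB, URLLC and massive IoT, plus an optional 3-octet Slice Differentiator (SD). The slice names, SD values and latency/bandwidth targets below are hypothetical, as is the rule of mapping each application to the least demanding slice that still meets its needs; real deployments express this through operator policy (for example, URSP rules) rather than application code.

```python
# Sketch: hypothetical enterprise slices keyed by 3GPP S-NSSAI values.
from dataclasses import dataclass
from typing import Optional

# Standardized SST values from 3GPP TS 23.501.
SST_EMBB, SST_URLLC, SST_MIOT = 1, 2, 3


@dataclass(frozen=True)
class Snssai:
    sst: int                  # Slice/Service Type, 8 bits
    sd: Optional[int] = None  # optional Slice Differentiator, 24 bits

    def __post_init__(self) -> None:
        if not 0 <= self.sst <= 0xFF:
            raise ValueError("SST must fit in one octet")
        if self.sd is not None and not 0 <= self.sd <= 0xFFFFFF:
            raise ValueError("SD must fit in three octets")

    def to_hex(self) -> str:
        """SST octet followed by the SD octets, if present."""
        return f"{self.sst:02x}" if self.sd is None else f"{self.sst:02x}{self.sd:06x}"


@dataclass(frozen=True)
class Slice:
    name: str
    snssai: Snssai
    max_latency_ms: float      # latency the slice is engineered to stay under
    min_bandwidth_mbps: float  # bandwidth the slice guarantees per flow


# Hypothetical profiles for one private 5G campus.
SLICES = [
    Slice("video-analytics", Snssai(SST_EMBB, 0x000001), 50.0, 200.0),
    Slice("robot-control", Snssai(SST_URLLC, 0x000002), 5.0, 10.0),
    Slice("sensors", Snssai(SST_MIOT), 1000.0, 0.1),
]


def pick_slice(latency_ms: float, bandwidth_mbps: float) -> Optional[Slice]:
    """Return the slice meeting both needs with the loosest latency target, or None."""
    fits = [s for s in SLICES
            if s.max_latency_ms <= latency_ms and s.min_bandwidth_mbps >= bandwidth_mbps]
    # Keep scarce low-latency slices free for the applications that need them.
    return max(fits, key=lambda s: s.max_latency_ms, default=None)
```

For instance, a robot arm needing 10 ms and 5 Mbps lands on the URLLC slice, while a sensor tolerating two seconds lands on the massive IoT slice even though every slice would technically satisfy it.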

………………………………………………………………………………………………………………………………………………………………………………………………………………………..

References:

https://www.privatelteand5g.com/reports/private-cellular-network-deployments-report-2026/

SNS Telecom & IT: Private 5G Market Nears Mainstream With $5 Billion Surge

SNS Telecom & IT: Private 5G and 4G LTE cellular networks for the global defense sector are a $1.5B opportunity

SNS Telecom & IT: Private 5G Network market annual spending will be $3.5 Billion by 2027

OneLayer Raises $28M Series A funding to transform private 5G networks with enhanced security

Verizon partners with Nokia to deploy large private 5G network in the UK

HPE Aruba Launches “Cloud Native” Private 5G Network with 4G/5G Small Cell Radios

Tata Consultancy Services: Critical role of Gen AI in 5G; 5G private networks and enterprise use cases

Keysight Technologies Demonstrates 3GPP Rel-19 NR-NTN Connectivity in Band n252

Keysight Technologies, Inc. has demonstrated the first end-to-end New Radio Non-Terrestrial Network (NR-NTN) connection in 3GPP band n252 under Release 19 specifications, achieved in collaboration with Samsung Electronics using Samsung’s next-generation commercial NR modem chipset (part number not stated). The live trial, conducted at CES 2026 in Las Vegas, validated satellite-to-satellite (SAT-to-SAT) mobility and cross-vendor interoperability, establishing a key milestone for direct-to-cell (D2C) satellite communications and NTN commercialization.

The successful validation of band n252 marks the first public confirmation of this spectrum band in an operational NTN system. Band n252 is expected to be a foundational component for upcoming low Earth orbit (LEO) constellations targeting global broadband and IoT coverage. This result demonstrates tangible progress toward large-scale NTN integration supporting ubiquitous, standards-based connectivity for consumers, connected vehicles, IoT devices, and critical communications.

Together with earlier demonstrations in bands n255 and n256, Keysight and Samsung have now validated all major NR-NTN FR1 frequency bands end-to-end. This consolidation enables ecosystem participants—including modem vendors, satellite network operators, and device manufacturers—to analyze cross-band mobility, inter-satellite handovers, and radio performance under consistent, controlled NTN emulation conditions.

The demonstration leveraged Keysight’s NTN Network Emulator Solutions to replicate multi-orbit LEO scenarios, emulate SAT-to-SAT mobility, and execute complete end-to-end routing while supporting live user traffic over the NTN link. When paired with Samsung’s chipset, the setup verified standards compliance, user throughput performance, and multi-vendor interoperability, providing a high-fidelity validation environment that accelerates system testing and time-to-market for NR-NTN deployments targeted for global scaling in 2026.

This integration underscores the readiness of 3GPP Release 19-compliant NTN technologies to transition from proof-of-concept trials to operational field testing, supporting the broader industry goal of realizing seamless terrestrial–non-terrestrial 5G networks within the Rel-19 framework and paving the way for future 6G NTN evolution.

For network operators, device OEMs, and satellite providers, this consolidation of NTN FR1 coverage provides a reference environment to evaluate cross‑band handovers, inter‑satellite mobility, and multi‑vendor interoperability before field deployment. By moving live NR‑NTN testing with commercial‑grade silicon into an emulated LEO constellation environment, the solution is positioned to reduce integration risk, compress trial timelines, and accelerate commercialization of direct‑to‑cell NTN services anticipated to scale from 2026.

Peng Cao, Vice President and General Manager of Keysight’s Wireless Test Group, said:

“Together with Samsung’s System LSI Business, we are demonstrating the live NTN connection in 3GPP band n252 using commercial-grade modem silicon with true SAT-to-SAT mobility. With n252, n255, and n256 now validated across NTN, the ecosystem is clearly accelerating toward bringing direct-to-cell satellite connectivity to mass-market devices. Keysight’s NTN emulation environment enables chipset and device makers a controlled way to prove multi-satellite mobility, interoperability, and user-level performance, helping the industry move from concept to commercialization.”

About Keysight Technologies:

At Keysight (NYSE: KEYS), we inspire and empower innovators to bring world-changing technologies to life. As an S&P 500 company, we’re delivering market-leading design, emulation, and test solutions to help engineers develop and deploy faster, with less risk, throughout the entire product life cycle. We’re a global innovation partner enabling customers in communications, industrial automation, aerospace and defense, automotive, semiconductor, and general electronics markets to accelerate innovation to connect and secure the world. Learn more at Keysight Newsroom and www.keysight.com.

………………………………………………………………………………………………………………………………………….

References:

https://www.telecoms.com/satellite/samsung-and-keysight-show-off-continuous-ntn-connectivity

Telecom operators investing in Agentic AI while Self Organizing Network AI market set for rapid growth

Telecom companies are planning to use Agentic AI [1.] for customer experience and network automation. A recent RADCOM survey shows 71% of network operators plan to deploy agentic AI in 2026, while 14% have already begun, prioritizing areas that directly influence trust and customer satisfaction: security and fraud prevention (57%) and customer service and support (56%). The top use cases are automated customer complaint resolution and autonomous fault resolution.

Operators are betting on agentic AI to remove friction before customers feel it, with the highest-value use cases reflecting this shift, including:

- 57% – automated customer complaint resolution

- 54% – autonomous fault resolution before it impacts service

- 52% – predicting experience to prevent churn

This technology is shifting networks from simply detecting issues to preventing them before customers notice. In contact centers, 2026 is expected to see a rise in human and AI agent collaboration to improve efficiency and customer service.

Note 1. Agentic AI refers to autonomous artificial intelligence systems that can perceive, reason, plan, and act independently to achieve complex goals with minimal human intervention, going beyond simple command-response to manage multi-step tasks, use various tools, and adapt to new information for proactive automation in dynamic environments. These intelligent agents function like digital coworkers, coordinating internally and with other systems to execute sophisticated workflows.

……………………………………………………………………………………………………………………………………………………………………………………………

ResearchAndMarkets.com has just published a “Self-Organizing Network Artificial Intelligence (AI) Global Market Report 2025.” The market research firm says that the self-organizing network AI [2.] market is forecast to expand from $5.19 billion in 2024 to $6.18 billion in 2025, at a CAGR of 19.2%. This surge is driven by the integration of machine learning and AI in telecom networks, investment in smart network management, and growing demand for features like self-healing and self-optimization, as well as predictive maintenance technologies. Further growth is expected to be driven by the expansion of 5G, increasing automation demands, and AI integration for network optimization. Opportunities include AI-driven RRM and predictive maintenance. Asia-Pacific emerges as the fastest-growing region, boosting telecom innovation amid global trade shifts.

Note 2. Self-organizing network AI leverages software, hardware, and services to dynamically optimize and manage telecom networks, applicable across various network types and deployment modes. The market encompasses a broad range of solutions, from network optimization software to AI-driven planning products, underscoring its expansive potential.

Looking further ahead, the market is expected to reach $12.32 billion by 2029, with a CAGR of 18.8%. Key drivers during this period include heightened demand for automation, increased 5G deployments, and growing network densification, accompanied by rising data traffic and subscriber numbers. Trends such as AI-driven network automation advancements, machine learning integration for real-time optimization, and the rise of generative AI for analytics are reshaping the landscape.
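
These growth figures can be cross-checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The short Python sketch below uses only the dollar figures quoted from the report; the function names are illustrative. Recomputing from the rounded figures gives about 19.1% for 2024 to 2025, close to the quoted 19.2%, and about 18.8% for 2025 to 2029, matching the report.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1 / years) - 1


def project(start: float, rate: float, years: float) -> float:
    """Value after compounding `start` at `rate` per year for `years` years."""
    return start * (1 + rate) ** years


if __name__ == "__main__":
    # Market size in US$ billions, as quoted from the report.
    print(f"2024-2025: {cagr(5.19, 6.18, 1):.1%}")
    print(f"2025-2029: {cagr(6.18, 12.32, 4):.1%}")
    print(f"2029 at 18.8%: ${project(6.18, 0.188, 4):.2f}B")
```

The small gap on the first figure comes from rounding the dollar amounts to two decimals, not from a different method.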

The expansion of 5G networks plays a pivotal role in propelling this growth. These networks, characterized by high-speed data and ultra-low latency, significantly enhance the capabilities of self-organizing network AI. The integration facilitates real-time data processing, supporting automation, optimization, and predictive maintenance, thereby improving service quality and user experience. A notable development in 2023 saw UK outdoor 5G coverage rise to 85-93%, reflecting growing demand and technological advancement.

Huawei Technologies and other major tech companies are pioneering innovative solutions like AI-driven radio resource management (RRM), which optimizes network performance and enhances user experience. These solutions rely on AI and machine learning for dynamic spectrum and network resource management. For instance, Huawei’s AI Core Network, introduced at MWC 2025, marks a substantial leap in intelligent telecommunications, integrating AI into core systems for seamless connectivity and real-time decision-making.

Strategic acquisitions are also shaping the market, exemplified by Amdocs Limited acquiring TEOCO Corporation in 2023 to bolster its network optimization and analytics capabilities. This acquisition aims to enhance end-to-end network intelligence and operational efficiency.

Leading players in the market include Huawei, Cisco Systems Inc., Qualcomm Incorporated, and many others, driving innovation and competition. Europe held the largest market share in 2024, with Asia-Pacific poised to be the fastest-growing region through the forecast period.

References:

Operator Priorities for 2026 and Beyond: Data, Automation, Customer Experience

https://uk.finance.yahoo.com/news/self-organizing-network-artificial-intelligence-105400706.html

Ericsson integrates agentic AI into its NetCloud platform for self healing and autonomous 5G private network

Agentic AI and the Future of Communications for Autonomous Vehicles (V2X)

IDC Report: Telecom Operators Turn to AI to Boost EBITDA Margins

Omdia: How telcos will evolve in the AI era

Palo Alto Networks and Google Cloud expand partnership with advanced AI infrastructure and cloud security

Arm Holdings unveils “Physical AI” business unit to focus on robotics and automotive

- Cloud and AI: Focused on data center and AI infrastructure solutions.

- Edge: Encompassing mobile devices, personal computing, and related technologies.

- Physical AI: Integrating its automotive business with robotics initiatives.

- Market Opportunity: Acknowledged the significant growth potential in robotics, from industrial automation to humanoid robots, driven by AI advancements.

- Synergy with Automotive: Combined robotics and automotive within the unit due to shared technical needs, such as power efficiency, safety, and sensor technology.

- Strategic Reorganization: Positioned Physical AI as a third core business line, alongside Cloud & AI and Edge (mobile/PC), to better focus resources and expertise.

- Customer Demand: Responding to existing customers (like automakers and robotics firms such as Boston Dynamics) who are integrating more AI into physical devices.

- Enhancing Real-World Impact: Aims to deliver solutions that fundamentally improve labor, productivity, and potentially GDP, moving AI from data centers to physical interactions.

2026 Consumer Electronics Show Preview: smartphones, AI in devices/appliances and advanced semiconductor chips

Marvell shrinking share of the RAN custom silicon market & acquisition of XConn Technologies for AI data center connectivity

Groq and Nvidia in non-exclusive AI Inference technology licensing agreement; top Groq execs joining Nvidia

The Internet of Things (IoT) explained along with ARM’s role in making it happen!

Marvell shrinking share of the RAN custom silicon market & acquisition of XConn Technologies for AI data center connectivity

Samsung and Nokia currently use Marvell’s OCTEON Fusion baseband processors and OCTEON Data Processing Units (DPUs) in their 5G Radio Access Network (RAN) equipment.

- OCTEON Fusion Processors: Samsung uses these baseband processors in its 5G base stations, particularly for massive MIMO (Multiple-Input Multiple-Output) deployments that require significant compute power for complex beamforming algorithms.

- OCTEON and OCTEON Fusion Families: Samsung has leveraged multiple generations of these processors for baseband and transport processing solutions.

- Customized OCTEON Silicon: Nokia uses customized Marvell OCTEON silicon across key applications, including multi-RAT (Radio Access Technology) RAN and transport.

- OCTEON Fusion Processors: These are used for baseband processing in Nokia’s 5G products.

- OCTEON TX2 and OCTEON 10 Families: These infrastructure processors are used for demanding tasks like packet processing, security, and edge inferencing within Nokia’s 5G infrastructure.

- OCTEON 10 Fusion: Nokia is working with the latest generation of this 5nm baseband platform, which supports use cases from radio units (RU) to distributed units (DU) for both traditional and Open RAN architectures.

……………………………………………………………………………………………………………………………………………………………………………….

Meanwhile, the total global RAN market has been declining for years as network operators slash investment in network equipment and cut jobs. According to Omdia (owned by Informa):

- Global RAN equipment sales fell from $45 billion in 2022 to $40 billion in 2023 and just $35 billion in 2024. Nokia’s mobile networks business group suffered an operating loss of €64 million (US$75 million) on sales of €5.3 billion ($6.2 billion) for the first nine months of 2025.

- For its 2023 fiscal year (ending in January 2023), Marvell’s carrier division made almost $1.1 billion in revenues, more than 18% of total company sales. Two years later, annual revenues had slumped to just $338.2 million, less than 6% of turnover.

- Marvell’s carrier sales have recently improved in fiscal 2026, rising 88% year-over-year for the first nine months to $436.3 million. However, that is still only half of what Marvell made during the first nine months of fiscal 2024, and interest in the RAN has seemingly evaporated.

- Samsung’s share of the shrinking RAN market has declined. Amid contraction of the entire addressable market, revenues generated by Samsung Networks fell from 5.39 trillion South Korean won ($3.74 billion) in 2022 to just KRW2.82 trillion ($1.95 billion) in 2024. For the first nine months of 2025, Samsung reported network sales of KRW2.1 trillion ($1.46 billion). But it has also lost market share, which dipped from 6.1% in 2023 to 4.8% in 2024, according to Omdia.

- Ericsson has two development tracks – one for purpose-built RAN products based partly on its own custom RAN silicon and the other for an Intel-based virtual RAN. In contrast to Samsung, Ericsson’s purpose-built RAN portfolio today accounts for nearly all of its sales.

- Ericsson’s senior managers increasingly talk about virtualization as a means of developing one set of software for multiple hardware platforms. The hope is that software originally designed for Intel’s processors could be redeployed on CPUs from AMD or from Arm licensees with minimal code changes. Such optionality, combined with the narrowing performance gap between CPUs and purpose-built RAN silicon, would make it hard for Ericsson to justify investment in its own custom silicon.
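As a quick sanity check on the figures above, the percentage changes can be re-derived directly from the quoted numbers. This minimal Python sketch uses only values stated in the bullets; the helper names are illustrative, and the results are simple ratios, not additional Omdia data.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new (negative means a decline)."""
    return (new - old) / old * 100.0


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate in percent over the given number of years."""
    return ((end / start) ** (1.0 / years) - 1.0) * 100.0


# Omdia global RAN equipment sales, US$ billions (as quoted above)
ran = {2022: 45.0, 2023: 40.0, 2024: 35.0}
print(f"RAN 2022->2023: {pct_change(ran[2022], ran[2023]):.1f}%")  # -11.1%
print(f"RAN 2023->2024: {pct_change(ran[2023], ran[2024]):.1f}%")  # -12.5%
print(f"RAN CAGR 2022->2024: {cagr(ran[2022], ran[2024], 2):.1f}%")  # -11.8%

# Samsung Networks revenue, KRW trillions
print(f"Samsung Networks 2022->2024: {pct_change(5.39, 2.82):.1f}%")  # -47.7%

# An 88% year-over-year rise to $436.3M implies a prior-year base of 436.3 / 1.88
print(f"Implied Marvell carrier base: ${436.3 / 1.88:.1f}M")  # $232.1M
```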

…………………………………………………………………………………………………………………………………………………………………………….

Today, Marvell announced it will acquire XConn Technologies for $540 million to boost AI/data center connectivity. In late 2025, the company announced the acquisition of Celestial AI for up to $5.5 billion to expand its optical interconnects for next-gen data centers, solidifying its position in infrastructure semiconductors.

Adding XConn’s PCIe and CXL switching technology (see illustrations below) fills gaps in Marvell’s silicon portfolio and enables the company to expand into higher-speed interconnects such as PCIe Gen 6.

XConn Technologies XC50256 chip: 256 lanes with 2,048 GB/s of total switching capacity
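The 2,048 GB/s figure is consistent with simple lane arithmetic, under the assumption (not stated in the caption) that each of the 256 lanes runs at PCIe 5.0 / CXL 2.0 signalling of 32 GT/s and that capacity is counted in both directions. The sketch below shows that the raw rate reproduces the headline number, while subtracting 128b/130b encoding overhead gives a slightly lower figure.

```python
# Assumption: every lane runs at PCIe 5.0 / CXL 2.0 signalling (32 GT/s, NRZ).
LANES = 256
GT_PER_S = 32.0

# 32 GT/s at one bit per transfer = 32 Gb/s = 4 GB/s per lane, per direction (raw).
RAW_GB_PER_LANE = GT_PER_S / 8
# PCIe 3.0 through 5.0 use 128b/130b line encoding: 128 of every 130 bits are payload.
ENCODED_GB_PER_LANE = RAW_GB_PER_LANE * 128 / 130


def switch_capacity(lanes: int, per_lane_gb: float, bidirectional: bool = True) -> float:
    """Aggregate switching capacity in GB/s; full duplex doubles the one-way figure."""
    one_way = lanes * per_lane_gb
    return one_way * 2 if bidirectional else one_way


print(switch_capacity(LANES, RAW_GB_PER_LANE))             # 2048.0
print(round(switch_capacity(LANES, ENCODED_GB_PER_LANE)))  # 2016
```

If the vendor figure instead reflects a different lane speed or counting convention, the same helper applies with other inputs.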

…………………………………………………………………………………………………………………………………….

XC50256 CXL 2.0 Switch Chip

……………………………………………………………………………………………………………………………………………………………

As AI workloads scale, data center system design is evolving from single-rack deployments to larger, multi-rack configurations. These next-generation platforms increasingly require a high-bandwidth, ultra-low-latency scale-up fabric such as UALink to efficiently connect large numbers of XPUs and enable more flexible resource sharing across the system.

UALink is a new open industry standard purpose-built for scale-up connectivity, enabling efficient, high-speed communication so multiple accelerators can operate together as a single, larger system. UALink builds on decades of PCIe ecosystem innovation and incorporates proven high-speed I/O techniques to meet the bandwidth, latency, and reach requirements of next-generation accelerated infrastructure.

Combined, Marvell and XConn will have a significantly larger, integrated team to fully address the rapidly emerging opportunity in UALink switching and to comprehensively support the growing list of customers and partners who want to work with Marvell on their next-generation AI platforms.

About Marvell:

To deliver the data infrastructure technology that connects the world, we’re building solutions on the most powerful foundation: our partnerships with our customers. Trusted by the world’s leading technology companies for over 30 years, we move, store, process and secure the world’s data with semiconductor solutions designed for our customers’ current needs and future ambitions. Through a process of deep collaboration and transparency, we’re ultimately changing the way tomorrow’s enterprise, cloud and carrier architectures transform—for the better.

About XConn Technologies:

XConn is the innovation leader in next-generation interconnect technology for high-performance computing and AI applications. The company is the industry’s first to deliver a hybrid switch supporting both CXL and PCIe on a single chip. Privately funded, XConn is setting the benchmark for data center interconnect with scalability, flexibility, and performance. For more information visit: https://www.xconn-tech.com

……………………………………………………………………………………………………………………………………………………………

References:

https://www.lightreading.com/5g/fragile-samsung-deal-with-marvell-shows-challenge-for-ran-chipmakers

RAN silicon rethink – from purpose built products & ASICs to general purpose processors or GPUs for vRAN & AI RAN

Dell’Oro: Analysis of the Nokia-NVIDIA-partnership on AI RAN

Intel FlexRAN™ gets boost from AT&T; faces competition from Marvell, Qualcomm, and EdgeQ for Open RAN silicon

Analysis: Nokia and Marvell partnership to develop 5G RAN silicon technology + other Nokia moves

Samsung and Marvell develop SoC for Massive MIMO and Advanced Radios

China gaining on U.S. in AI technology arms race- silicon, models and research

Omdia on resurgence of Huawei: #1 RAN vendor in 3 out of 5 regions; RAN market has bottomed

2026 Consumer Electronics Show Preview: smartphones, AI in devices/appliances and advanced semiconductor chips

The Consumer Electronics Show (CES), held in Las Vegas, NV, in the first week of January each year, is one of the largest and most significant tech trade shows in the world. It’s attended by all the major established tech companies, as well as numerous up-and-coming companies from around the world. The CES 2026 show floor officially opens on Tuesday, January 6th, for four days, but companies have already started making announcements. LG’s CLOiD is a laundry-folding, milk-fetching home robot, SwitchBot’s AI-powered Obboto lamp looks like a desktop version of the Las Vegas Sphere, the wooden Mui Board adds sleep tracking, and Clicks’ new Communicator is a smartphone alternative that reminds us of a classic BlackBerry. Watch for more smartphone announcements from the likes of Samsung, LG, and Motorola, infused with AI, of course.

- Samsung Galaxy Z TriFold: Samsung is expected to use CES 2026 to bring its first tri-folding smartphone, the Galaxy Z TriFold, to the US market and provide hands-on demonstrations. This device uses two hinges to unfold into a large, 10-inch tablet-sized display.

- New Motorola Foldable: Motorola is anticipated to unveil a new book-style foldable phone, distinct from its existing clamshell Razr models. Physical invites sent to the media suggest it may feature a unique wood finish.

- AI Integration: Artificial intelligence will be a dominant theme, integrated into new laptops and home appliances, and is expected to be a core feature of the new foldable phones, enhancing user experiences and functionality. Samsung has framed its entire CES presence around a “unified AI approach” across its devices.

- Advanced Display Concepts: Beyond immediate product launches, Samsung Display is likely to showcase experimental and futuristic display panels, which often preview technologies that will appear in consumer devices in the coming years, such as wider aspect ratio foldables and potentially holographic displays.

- Smart Glasses and Wearables: Though not strictly phones, AI-powered smart glasses and other wearables are expected to have a significant presence at the show, building on their modest comeback in 2025.

Photo credit: James Martin/CNET

Major smartphone manufacturers like Apple typically host their own separate launch events and do not make major announcements at CES. The focus at CES is generally on innovative form factors, new technologies, and general tech trends rather than mainstream flagship phone releases.

………………………………………………………………………………………………………………………………………………………………………………

- AI Accelerators: AMD is expected to provide updates on its next-generation Instinct MI400 series, designed to compete with Nvidia in the AI training segment.

- Consumer CPUs/APUs: Expect showcases of AMD’s new Ryzen AI 400 “Gorgon Point” APUs for laptops and a refresh of Zen 5 X3D desktop CPUs (specifically the Ryzen 7 9850X3D and Ryzen 9 9950X3D).

- Data Center/Server: AMD may provide updates on its next-generation Zen 6 “Venice” EPYC CPUs for the data center market.

- GPUs: No new consumer discrete GPU launches (Radeon RX 9000 series) are expected at this show, with the focus remaining on existing RDNA 4 models and integrated graphics.

- Consumer Processors: Intel will highlight its upcoming “Panther Lake” (Core Ultra Series 3) chips, its first processors using the highly anticipated 18A manufacturing process. These chips will power new laptops and PCs demonstrated by partners like Dell, HP, Lenovo, and Samsung.

- GPUs: Intel is expected to share details on its “Crescent Island” Xe3 discrete GPU, positioned as a cost-effective option for inference workloads, and potentially the mid-tier Arc B770 “Battlemage” GPU.

- AI Hardware: Nvidia’s focus will likely be on next-generation AI accelerators beyond its current Blackwell architecture, data center roadmaps, and the company’s push into “physical AI” systems like robotics and self-driving cars.

- Consumer GPUs: No new high-end consumer GPU launches (RTX 50 series) are expected at CES, as these are typically reserved for separate events like Nvidia’s GTC conference.

- PC Processors: Qualcomm is expected to showcase laptops featuring its Snapdragon X2 Elite and X2 Elite Extreme chips, emphasizing their performance and power efficiency for AI PCs.

- Samsung Electronics is hosting “The First Look” event to unveil its vision for AI-driven customer experiences across its device ecosystem.

- Visual Semiconductor will showcase its “glasses-free 3D” (GF3D) display technology for both large home displays and smartphones.

- The overall theme of CES 2026 across all companies is the pervasive integration of AI into consumer and industrial devices, from wearables and robots to enterprise machines.

………………………………………………………………………………………………………………………………………………………………………………

References:

https://spectrum.ieee.org/ces-2026-preview

https://www.cnet.com/tech/ces-2026-preview-expectations/

Research & Markets: WiFi 6E and WiFi 7 Chipset Market Report; Independent Analysis

Custom AI Chips: Powering the next wave of Intelligent Computing

Nvidia’s networking solutions give it an edge over competitive AI chip makers

Superclusters of Nvidia GPU/AI chips combined with end-to-end network platforms to create next generation data centers

Networking chips and modules for AI data centers: Infiniband, Ultra Ethernet, Optical Connections

Huawei to Double Output of Ascend AI chips in 2026; OpenAI orders HBM chips from SK Hynix & Samsung for Stargate UAE project

OpenAI and Broadcom in $10B deal to make custom AI chips

U.S. export controls on Nvidia H20 AI chips enables Huawei’s 910C GPU to be favored by AI tech giants in China

Technavio: Silicon Photonics market estimated to grow at ~25% CAGR from 2024-2028

LG Electronics demos “6G” power amplifier and adaptive beamforming technology at South Korea trade show

Huawei to compete in global consumer electronics market with world’s first “5G” TV

Sebastian Barros: Using telecom networks for weather sensing requires a strategic telco shift from connectivity providers to ecosystem orchestrators

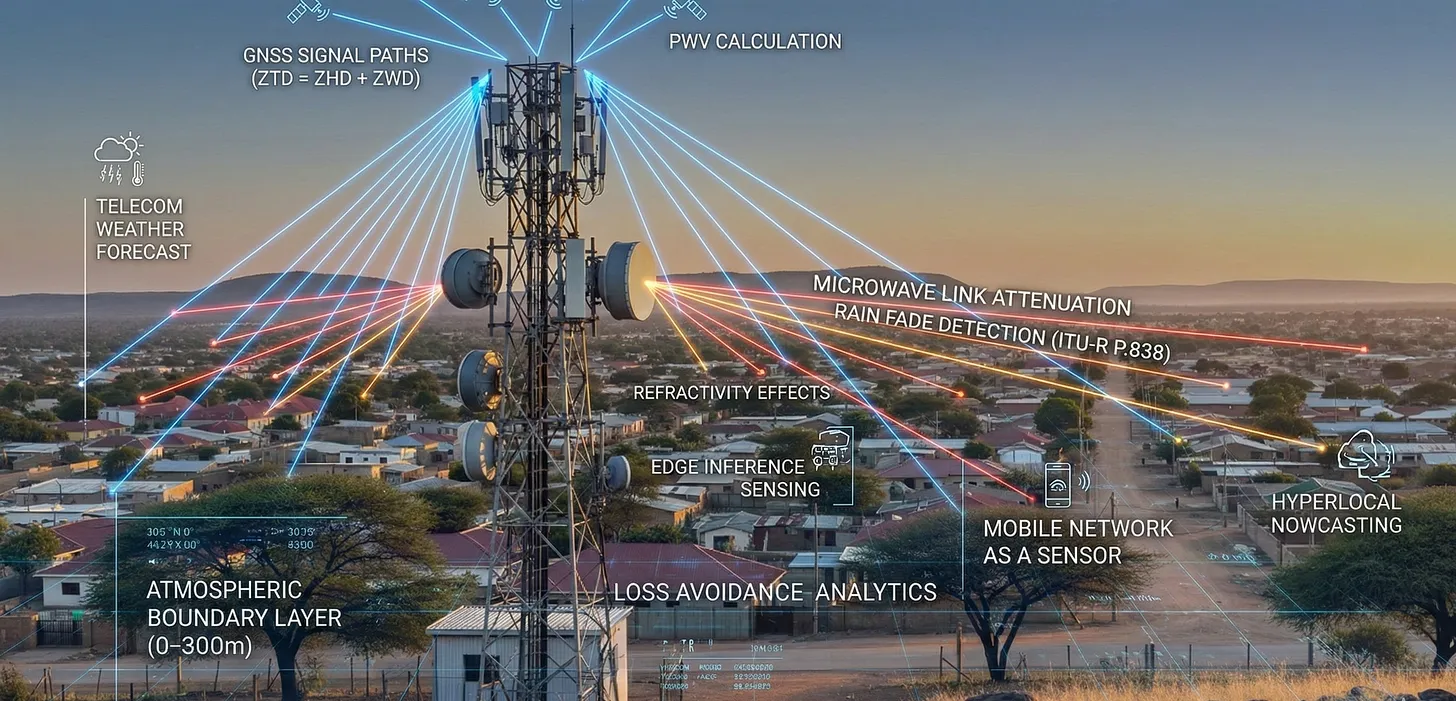

Telecom networks can support weather sensing by treating existing microwave links and 4G/5G signals as virtual sensors, notes Sebastian Barros on Substack. Rain, humidity, and temperature change the strength and timing of those signals, so the networks’ vast infrastructure can effectively become a dense, real-time atmospheric monitoring system for improved forecasting and disaster alerts. By analyzing signal attenuation, telecom networks can create high-resolution weather maps that complement traditional methods like radar. Yet very few network operators or vendors have attempted to use telecommunications infrastructure for dense atmospheric sensing. The data exists but is rarely activated, processed, or disclosed.
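Rain attenuation on a microwave link is commonly modelled with the power law γ = k·R^α (dB/km), as in Recommendation ITU-R P.838, where R is the rain rate in mm/h and k and α depend on frequency and polarization. A minimal sketch of inverting that law for one link follows. The coefficients in the example are hypothetical placeholders, not values from the P.838 tables, and a real system would also correct for effects such as wet-antenna attenuation.

```python
def rain_rate_mm_per_h(measured_loss_db: float, dry_baseline_db: float,
                       path_km: float, k: float, alpha: float) -> float:
    """Estimate rain rate R (mm/h) on one link by inverting gamma = k * R**alpha.

    measured_loss_db: total path loss observed during the event
    dry_baseline_db:  path loss on the same link in dry weather
    k, alpha:         frequency/polarization-dependent coefficients (caller supplies them)
    """
    # Excess loss attributed to rain; clamp small negative noise to zero
    excess_db = max(0.0, measured_loss_db - dry_baseline_db)
    # Specific attenuation, assuming rain is uniform along the whole path
    gamma_db_per_km = excess_db / path_km
    if gamma_db_per_km == 0.0:
        return 0.0
    return (gamma_db_per_km / k) ** (1.0 / alpha)


# Hypothetical 5 km link with placeholder coefficients (k=0.1, alpha=1.0):
# 5 dB of excess loss -> 1 dB/km -> (1 / 0.1) ** 1 = 10 mm/h
print(rain_rate_mm_per_h(125.0, 120.0, 5.0, k=0.1, alpha=1.0))
```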

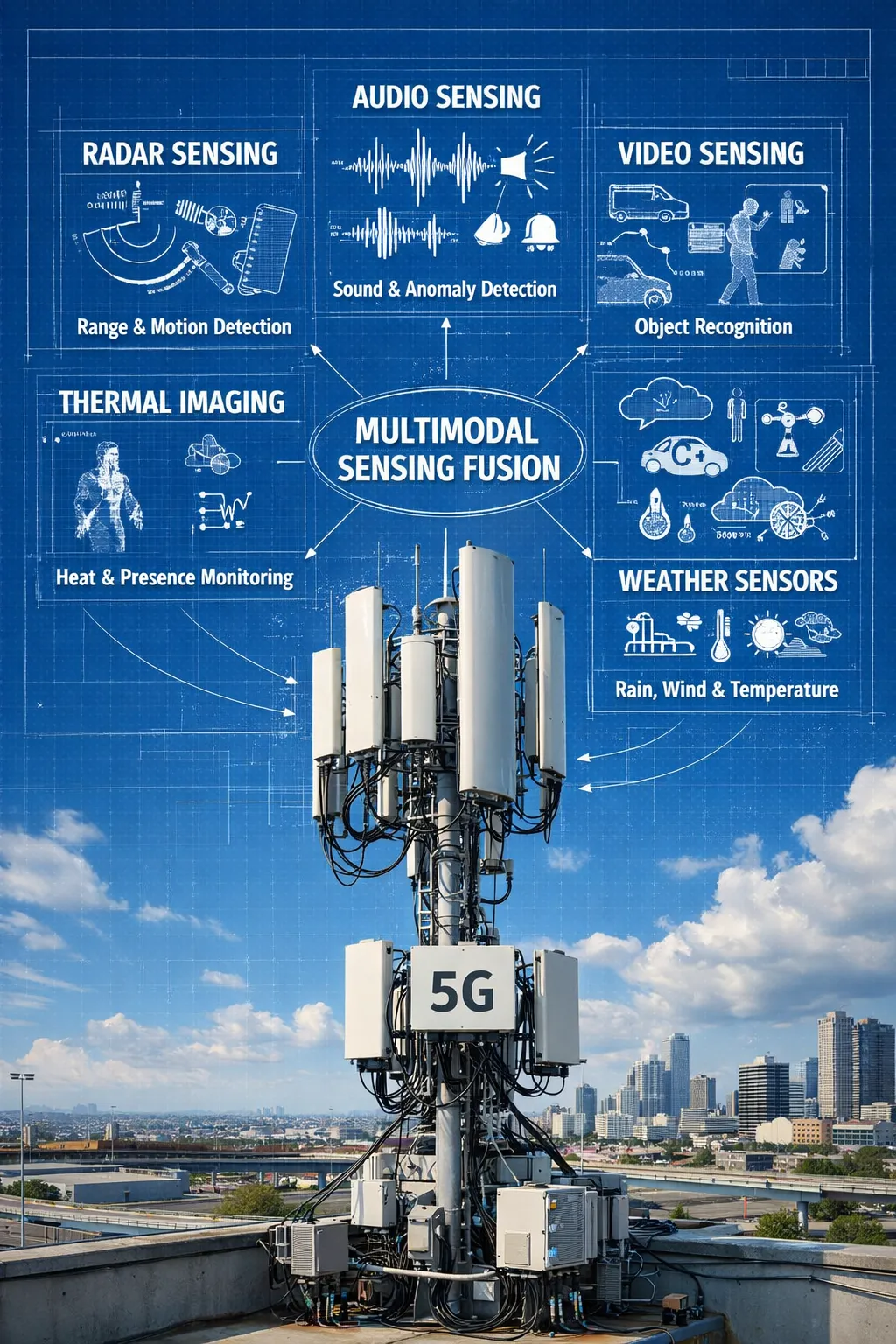

Atmospheric estimation based on the Global Navigation Satellite System (GNSS) [1.], rain attenuation on microwave links, and radio refractivity effects have all been studied for more than 20 years. The physics is well understood and already embedded in network planning and synchronization systems. Some eight million radio sites span cities, roads, ports, factories, and borders. Every site has power, compute, backhaul, antennas, timing, and regulatory protection. Today, the network only provides connectivity. Integrated Sensing and Communication (ISAC) starts to change that: it repurposes radio waves for radar-like sensing, including presence detection, range, velocity, and Doppler shift.
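The radar-like quantities mentioned above follow from two textbook relations. Assuming a monostatic setup (transmitter and receiver co-located at one site, which is only one of several possible ISAC configurations), range comes from the round-trip delay and radial velocity from the Doppler shift. The carrier frequency, delay, and Doppler values below are hypothetical.

```python
C = 299_792_458.0  # speed of light, m/s


def range_from_delay(round_trip_s: float) -> float:
    """Monostatic range: the echo travels out and back, so halve the path length."""
    return C * round_trip_s / 2.0


def radial_velocity(doppler_hz: float, carrier_hz: float) -> float:
    """Monostatic radial velocity v = f_D * c / (2 * f_c); positive means approaching."""
    return doppler_hz * C / (2.0 * carrier_hz)


# Hypothetical 3.5 GHz carrier, echo delayed by 1 microsecond, 700 Hz Doppler shift
print(f"{range_from_delay(1e-6):.1f} m")           # 149.9 m
print(f"{radial_velocity(700.0, 3.5e9):.1f} m/s")  # 30.0 m/s
```

The factor of two in both formulas reflects the two-way path; a bistatic geometry would need different expressions.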

……………………………………………………………………………………………………………………………………………………………………………

Note 1. Global Navigation Satellite System (GNSS) is the umbrella term for satellite constellations like the U.S.’s GPS, Russia’s GLONASS, the EU’s Galileo, and China’s BeiDou, which provide global positioning, navigation, and timing (PNT) services. GNSS receivers use signals from these orbiting satellites to calculate precise locations on Earth, offering increased accuracy and reliability compared to relying on a single system, enabling applications from smartphone navigation to autonomous vehicles and precision agriculture.

……………………………………………………………………………………………………………………………………………………………………………

- Receiver: Your receiving device (phone, car navigation unit, etc.) picks up signals from several of these satellites.

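- Calculation: By measuring the time it takes for signals to arrive from at least four satellites, the receiver performs trilateration calculations to pinpoint your position. Four satellites are needed because the receiver must solve for its own clock offset in addition to its three position coordinates.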
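The four-satellite requirement can be seen in a minimal position solver. The sketch below assumes idealised pseudoranges (no ionospheric, tropospheric, or satellite-clock errors) and solves for x, y, z and the receiver clock bias with Gauss-Newton iterations. The satellite and receiver coordinates are hypothetical Earth-centred values chosen only for illustration; real receivers typically use more satellites and a weighted least-squares fit.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def solve4(a, b):
    """Solve the 4x4 system a @ x = b by Gaussian elimination with partial pivoting."""
    n = 4
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for j in range(col, n + 1):
                m[r][j] -= factor * m[col][j]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][j] * x[j] for j in range(r + 1, n))) / m[r][r]
    return x


def gnss_fix(sats, pseudoranges, iterations=10):
    """Estimate receiver position (m) and clock offset (s) from four pseudoranges.

    Model: pseudorange_i = |sat_i - pos| + bias, where bias = c * clock_offset (m).
    """
    est = [0.0, 0.0, 0.0, 0.0]  # start at Earth's centre with zero clock bias
    for _ in range(iterations):
        jac, resid = [], []
        for (sx, sy, sz), rho in zip(sats, pseudoranges):
            dx, dy, dz = est[0] - sx, est[1] - sy, est[2] - sz
            dist = math.sqrt(dx * dx + dy * dy + dz * dz)
            # Partial derivatives of the modelled pseudorange w.r.t. (x, y, z, bias)
            jac.append([dx / dist, dy / dist, dz / dist, 1.0])
            resid.append(rho - (dist + est[3]))
        step = solve4(jac, resid)
        est = [e + s for e, s in zip(est, step)]
    return est[:3], est[3] / C


# Hypothetical geometry: receiver on Earth's surface, four well-spread satellites
truth = (6_371_000.0, 0.0, 0.0)
bias_m = 3_000.0  # about 10 microseconds of receiver clock error
sats = [
    (26_000_000.0, 0.0, 0.0),
    (20_000_000.0, 15_000_000.0, 0.0),
    (20_000_000.0, -8_000_000.0, 14_000_000.0),
    (20_000_000.0, -8_000_000.0, -14_000_000.0),
]
rhos = [math.dist(s, truth) + bias_m for s in sats]
pos, clock_offset_s = gnss_fix(sats, rhos)
print([round(v, 3) for v in pos], clock_offset_s)
```

With only three satellites the system would have four unknowns and three equations, which is why the clock bias forces a fourth measurement.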

Telecom networks can expose not just connectivity but structured awareness: object motion, crowd flow, anomalies, risks, and environmental state. In real time, across every continent. The infrastructure already exists. What’s missing is the architecture and the will to build the sensing layer on top of it. With dense, real-time sensing already in place, telecom can expose environmental intelligence through Open Gateway APIs, just as it exposes location or quality today. No new hardware. No new towers. Just activation, inference at the edge, and exposure.
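One way to picture “inference at the edge, and exposure” is to aggregate per-link estimates (such as rain rates derived from link attenuation) into coarse grid cells before anything leaves the network. The sketch below is purely illustrative: the cell size, field names, and record shape are hypothetical and do not correspond to any Open Gateway or CAMARA API definition.

```python
import math
from collections import defaultdict
from statistics import mean


def grid_cell(lat: float, lon: float, cell_deg: float = 0.05):
    """Snap a coordinate to a fixed lat/lon grid (hypothetical cells of a few km)."""
    return math.floor(lat / cell_deg), math.floor(lon / cell_deg)


def aggregate_links(link_estimates, cell_deg=0.05):
    """Group (mid_lat, mid_lon, rain_mm_per_h) link estimates into per-cell summaries.

    Returns a list of hypothetical exposure records, one per occupied grid cell.
    """
    cells = defaultdict(list)
    for lat, lon, rate in link_estimates:
        cells[grid_cell(lat, lon, cell_deg)].append(rate)
    return [
        {
            "cell": list(cell),
            "link_count": len(rates),
            "mean_rain_mm_per_h": round(mean(rates), 2),
            "max_rain_mm_per_h": max(rates),
        }
        for cell, rates in sorted(cells.items())
    ]


# Hypothetical link midpoints and per-link rain-rate estimates
links = [(40.713, -74.003, 4.0), (40.722, -74.012, 6.0), (40.801, -73.905, 0.5)]
for record in aggregate_links(links):
    print(record)
```

Aggregating before exposure keeps raw per-link data inside the network, which is one plausible reason to do the inference at the edge.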

- Data Siloing: The data produced by ISAC currently remains locked within the physical (PHY) and Media Access Control (MAC) layers of the network. It is primarily used for internal network optimization and is not exposed to external applications or platforms.

- Lack of Abstraction and APIs: There are no standard abstraction layers or Application Programming Interfaces (APIs) that would allow external systems (e.g., weather services, autonomous navigation systems, urban infrastructure management) to access and interpret the raw sensing data.

- Absence of Data Fusion Standards: There is no standard methodology to fuse the output from ISAC with data from other sensing modalities (e.g., vision, audio, thermal sensors). This prevents the creation of a comprehensive, multimodal sensing mesh.

- Missing Marketplace: The lack of standardized access and integration means there is no marketplace for this valuable data, which stifles innovation and collaboration across different industries that could benefit from real-world awareness information.
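The missing fusion step can be illustrated with one textbook technique, inverse-variance weighting, which combines independent estimates of the same quantity so that more certain sources count more. This is only an illustration under stated assumptions (independent, unbiased errors with known variances); it is not a standard the article refers to, and the ISAC and radar numbers are hypothetical.

```python
def fuse_inverse_variance(estimates):
    """Fuse independent (value, variance) estimates of the same quantity.

    Each estimate is weighted by 1 / variance; the fused variance is
    1 / sum(weights), which is never larger than the smallest input variance.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, estimates)) / total
    return fused, 1.0 / total


# Hypothetical rain-rate estimates for one grid cell: mm/h, with variances in (mm/h)^2
isac_estimate = (8.0, 4.0)    # from link attenuation (noisier)
radar_estimate = (10.0, 1.0)  # from weather radar
print(fuse_inverse_variance([isac_estimate, radar_estimate]))  # (9.6, 0.8)
```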

References:

https://sebastianbarros.substack.com/p/telecom-built-the-worlds-best-weather

https://sebastianbarros.substack.com/p/telco-network-as-a-sensor-is-a-huge

https://www.linkedin.com/feed/update/urn:li:activity:7413260481743769600/

https://www.euspa.europa.eu/eu-space-programme/galileo/what-gnss

2025 Year End Review: Integration of Telecom and ICT; What to Expect in 2026

Smart electromagnetic surfaces/RIS: an optimal low-cost design for integrated communications, sensing and powering

Deutsche Telekom: successful completion of the 6G-TakeOff project with “3D networks”

Roles of 3GPP and ITU-R WP 5D in the IMT 2030/6G standards process

Setting the Record Straight: Many pundits and tech media outlets have been buzzing about 6G. One of many examples is today’s featured Light Reading post titled, “Looking ahead: Ready or not, here comes 6G.” There are also a plethora of 6G alliances and consortiums that are working on proposed 6G technologies, long before the 3GPP specifications or ITU-R IMT 2030/6G standards have been completed. We endeavor to provide an accurate summary of the standardization work in this article, including the delineation of activities between ITU-R and 3GPP.

Executive Summary:

As we’ve explained in numerous IEEE Techblog posts (see References below), ITU-R establishes the technical requirements and minimum performance objectives for IMT 2030 (6G) Radio Interface Technologies (RITs) and Sets of RITs (SRITs). As with IMT 2020 (5G RITs/SRITs), 3GPP develops the actual RIT/SRIT specifications, which are then contributed to ITU-R WP 5D (via ATIS), where they are discussed and agreed upon as a candidate ITU-R IMT 2030 RIT/SRIT for the forthcoming recommendation (i.e., standard). Other IMT 2030 RITs/SRITs might also be considered by WP 5D.

Author’s Note on IMT 2020: In addition to the 3GPP 5G-NR specs included in the IMT 2020 standard (ITU-R M.2150), there were two others: ETSI/DECT 5G-SRIT, which has not been widely deployed, and 5Gi/LMLC, which has not been deployed at all. The ITU-R standard for IMT 2020 Frequency Arrangements is ITU-R M.1036, which provides templates and guidelines for implementing IMT in the WRC-identified bands, while Recommendation ITU-R M.2150 details the radio interfaces.

5G and 6G Frequency Bands and Arrangements: In addition to IMT 2020/5G and IMT 2030/6G RITs/SRITs, ITU-R WP 5D develops the associated 5G and 6G Frequency Arrangements, based on the inputs received from the most recent ITU-R World Radiocommunication Conference (WRC). The most recent WRC, WRC-23, held in November and December 2023 in Dubai, UAE, did not definitively identify specific frequency bands for IMT-2030 (6G) deployment; rather, it agreed on frequency bands to be studied as potential candidates for WRC-27 (in 2027) and beyond. So we don’t yet know which frequencies will be used for IMT 2030/6G.

5G and 6G Non-Radio Specifications: As with IMT 2020, 3GPP will develop all the non-radio specifications for IMT 2030/6G, but it likely will not send them to ITU-T for standardization, as it did not do so for IMT 2020. Those non-radio specs include: mobile core network (and the role AI will play), signaling, security (including cryptography), network management (including AI), integrated sensing and communications, distributed access points, digital models for network optimization and planning, extending MEC, etc.

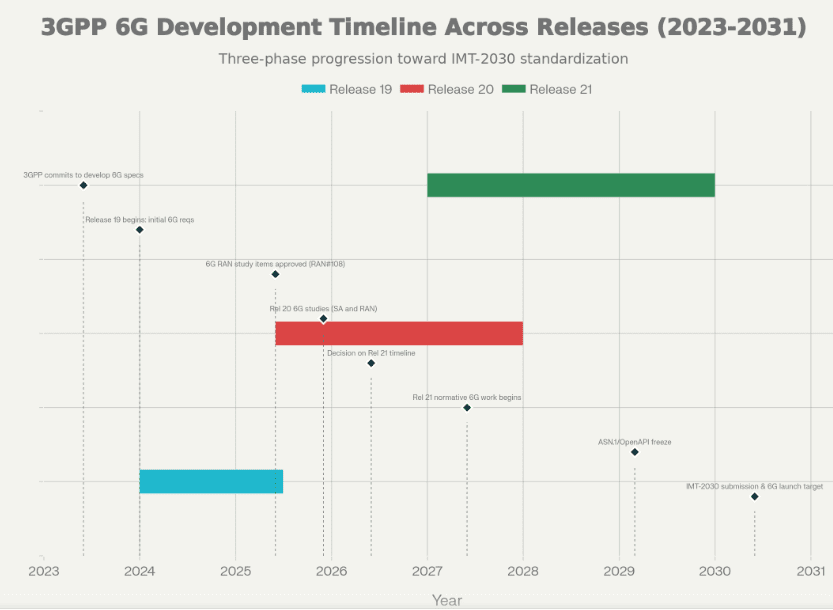

3GPP 6G Status: 3GPP’s specification work on 6G is currently in the early stages, focusing on requirements, architecture, and key technology enablers with a projected timeline that targets initial 6G specifications by 2028–2030 for commercial systems. The work is organized through 3GPP’s releases:

- Release 19 initiating 6G requirement studies in 2024.

- Release 20 expanding on 6G architecture and technology exploration in 2025 in parallel with 5G advanced.

- Release 21 delivering the first concrete 6G specifications, and subsequent releases refining features and interoperability toward mass deployment around 2030. The timeline for the actual spec work for this release is to be decided by June 2026.

The overall plan aligns with ITU’s timeline for 6G ecosystem readiness and commercial availability by roughly 2030, though exact finish dates depend on evolving technical, regulatory, and market conditions.

3GPP Release 20 concurrent work on 5G Advanced and 6G: This approach reflects a general view that 6G should be more of an evolution than a revolution. The big three equipment vendors, Ericsson, Huawei and Nokia, see 5G Advanced as a foundation for 6G, but in their own specific ways. “This dual focus on both 5G Advanced and 6G we needed to ensure that there is a continuity and to create a framework that allows the two tracks to complement but not compete with each other,” said Puneet Jain, Chair of 3GPP’s Service and Systems Aspects working group and senior principal engineer for technical standards at Intel, in a video interview.

Examples of 5G Advanced capabilities that are expected to be further developed in 6G include: the integration of machine learning into the RAN and core networks, energy efficiency, low latency, advanced MIMO techniques and satellite integration, according to 5G Americas. The latest 5G Advanced specs also address integrated sensing and communication (ISAC), which is considered to be an important new capability for 6G.

……………………………………………………………………………………………………………………………………………………….

Here’s a concise technical summary of the 6G standardization work in both ITU-R and 3GPP:

Scope and objectives:

- Establish 6G service requirements and use cases, including extreme data rates, ultra-low latency, high reliability, and enhanced AI-driven network management, while ensuring backward compatibility where feasible.

- Define a scalable, flexible air interface and spectrum strategy to support wide bandwidths (including frequencies above 7 GHz and potential THz considerations) and diverse deployment scenarios.

Core architectural themes:

- Enhanced cloud-native, end-to-end network architecture with distributed computing, edge capabilities, and AI/ML-driven orchestration for dynamic resource management.

- Native support for integrated space-air-ground networks and network slicing to enable heterogeneous service delivery and global coverage.

Air interface and radio aspects:

- Investigations into wider channel bandwidths, robust channel coding hybrids (LDPC and Polar codes as baselines with extensions), and numerology that can span multiple bands while maintaining scalable, energy-efficient designs.

- Emphasis on robust coverage, especially at cell edges, and efficient use of mid-band and higher-frequency spectrum to balance performance and practical deployment considerations.

Mobility and latency:

- Aiming for lower end-to-end latency, improved reliability, and advanced mobility support to enable new immersive and industrial applications, while ensuring interoperability with 5G and future network layers.

Security and privacy:

- Early attention to security-by-design within the 6G architecture, including native protection of data planes and resilient identity/authentication mechanisms across heterogeneous networks.

Timeline and milestones (progress up to projected completion dates):

- 2024: Initiation of 6G work in Release 19, focused on initial study items for 6G service requirements in 3GPP SA1.

- 2025–2026: Continued refinement of use cases, service requirements, and architectural concepts; preparatory work for Release 20 items.

- 2027–2028: First concrete 6G specifications anticipated in Release 21, with core radio and network framework definitions and initial protocol stack extensions.

- 2029–2030: Further releases (Release 22 onward) expand interoperability, optimization, and ecosystem compatibility, moving toward early trials and commercial deployment.

- Target commercial availability: Roughly 2030, with initial systems expected to appear earlier in some pilots or regional deployments, depending on market and regulatory readiness.

Public summaries and industry analyses triangulate this timeline and the major themes from 3GPP plenaries and RAN/SA working group discussions, with dates anchored to 2024–2028 planning and the stated aim of Release 21 delivering the first 6G specifications. Draft documents and working group study item description (SID) proposals provide insight into technical directions (coding, bandwidth, channel modeling, and spectrum considerations), though these are interim and subject to change as the standardization process evolves.

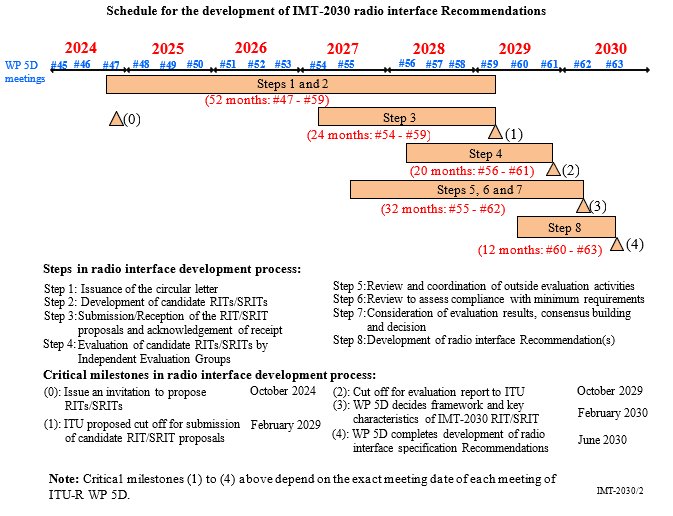

Submission of IMT 2030 RIT/SRIT proposals may begin at the 54th meeting of ITU-R WP 5D, currently planned for February 2027. The final deadline for submissions is 16:00 hours UTC, 12 calendar days prior to the start of the 59th meeting of WP 5D in February 2029.
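The deadline rule above is simple date arithmetic. The sketch below computes it for a given meeting start date; the date used is a hypothetical placeholder, since the exact start date of the 59th WP 5D meeting is not given here.

```python
from datetime import date, datetime, time, timedelta, timezone


def submission_deadline(meeting_start: date) -> datetime:
    """Return 16:00 UTC on the day 12 calendar days before the meeting starts."""
    deadline_day = meeting_start - timedelta(days=12)
    return datetime.combine(deadline_day, time(16, 0), tzinfo=timezone.utc)


# Hypothetical start date for WP 5D meeting #59 (February 2029)
print(submission_deadline(date(2029, 2, 20)).isoformat())  # 2029-02-08T16:00:00+00:00
```

Counting calendar days (rather than working days) means the deadline can fall on a weekend or in the previous month.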

The evaluation of the proposed RITs/SRITs by the independent evaluation groups and the consensus-building process will be performed throughout this time period and thereafter. Subsequent calendar schedules will be decided according to the submissions of proposals.

After ITU-R WP 5D approves a draft recommendation (e.g., for IMT 2030), it goes through a formal approval process before it becomes a standard. It is sent to ITU-R SG 5 for approval; then all ITU member states review the draft, and if there is near-unanimous approval, it is adopted as an official ITU-R recommendation (standard). That ITU-R approval process takes roughly three to four months. Therefore, we expect the ITU-R IMT 2030 RIT/SRIT standard to be approved in late 2030 to early 2031, with early 6G deployments beginning at that time.

………………………………………………………………………………………………………………………………………..

References:

https://www.itu.int/en/ITU-R/study-groups/rsg5/rwp5d/imt-2030/Pages/default.aspx

https://www.lightreading.com/6g/looking-ahead-ready-or-not-here-comes-6g

https://www.3gpp.org/specifications-technologies/releases/release-20

https://www.5gamericas.org/5g-advanced-overview/

ITU-R WP5D IMT 2030 Submission & Evaluation Guidelines vs 6G specs in 3GPP Release 20 & 21

ITU-R WP 5D Timeline for submission, evaluation process & consensus building for IMT-2030 (6G) RITs/SRITs

ITU-R WP 5D reports on: IMT-2030 (“6G”) Minimum Technology Performance Requirements; Evaluation Criteria & Methodology

AI wireless and fiber optic network technologies; IMT 2030 “native AI” concept

Highlights of 3GPP Stage 1 Workshop on IMT 2030 (6G) Use Cases

Should Peak Data Rates be specified for 5G (IMT 2020) and 6G (IMT 2030) networks?

GSMA Vision 2040 study identifies spectrum needs during the peak 6G era of 2035–2040

Highlights and Summary of the 2025 Brooklyn 6G Summit

NGMN: 6G Key Messages from a network operator point of view

Nokia and Rohde & Schwarz collaborate on AI-powered 6G receiver years before IMT 2030 RIT submissions to ITU-R WP5D

Verizon’s 6G Innovation Forum joins a crowded list of 6G efforts that may conflict with 3GPP and ITU-R IMT-2030 work

Nokia Bell Labs and KDDI Research partner for 6G energy efficiency and network resiliency

Deutsche Telekom: successful completion of the 6G-TakeOff project with “3D networks”

Market research firms Omdia and Dell’Oro: impact of 6G and AI investments on telcos

Qualcomm CEO: expect “pre-commercial” 6G devices by 2028

Ericsson and e& (UAE) sign MoU for 6G collaboration vs ITU-R IMT-2030 framework

KT and LG Electronics to cooperate on 6G technologies and standards, especially full-duplex communications

Highlights of Nokia’s Smart Factory in Oulu, Finland for 5G and 6G innovation

Nokia sees new types of 6G connected devices facilitated by a “3 layer technology stack”

Rakuten Symphony exec: “5G is a failure; breaking the bank; to the extent 6G may not be affordable”

India’s TRAI releases Recommendations on use of Tera Hertz Spectrum for 6G

New ITU report in progress: Technical feasibility of IMT in bands above 100 GHz (92 GHz and 400 GHz)