Author: Alan Weissberger

Nokia’s AI Applications Study: “Physical AI” may require RAN redesign to support high‑volume, low‑latency uplink traffic

According to Nokia, AI-generated traffic in most mobile networks is at an early stage, with application maturity and adoption by consumers and enterprises only at the start of a broader AI super cycle. The Finland-based company analyzed more than 50 AI applications and came to three conclusions: higher uplink traffic, overall data growth, and increasing sensitivity to delay in conversational services such as chat and voice. Also, the mobile network industry is moving toward “AI-RAN” or “6G-native” structures that embed AI into the network, transforming radio sites into “robotic” nodes capable of edge inference and handling these new demands.

Do those findings require a structural change in Radio Access Network (RAN) design? Let’s take a fresh look.

Mobile networks traditionally support a heterogeneous mix of traffic, ranging from high-throughput video streaming to low-bandwidth, delay-tolerant messaging. Network operators typically address escalating capacity demands through infrastructure expansion and overprovisioning, relying on best-effort delivery—a model that has proven remarkably resilient. However, capacity alone is insufficient for new use cases.

The transition from circuit-switched voice to packet-switched (voice/video/data) IP traffic required a redesign to accommodate variable packet sizes instead of predictable, continuous voice patterns. The proliferation of Internet of Things (IoT) devices introduced requirements for massive machine-type communications (mMTC), driving the development of LTE-M and NB-IoT to optimize for deep indoor penetration and power efficiency. Conversely, consumer web-based services and video streaming scale seamlessly by adding RAN and core capacity. Existing AI applications, such as generative AI chatbots, follow this model, making current RAN architectures adequate for the present load.

A paradigm shift is emerging with Physical AI [1.], which enables machines like autonomous vehicles and robots to interact with the environment in real time. Unlike traditional video streaming, these applications cannot leverage buffering to absorb network jitter. In Physical AI, high-definition video frames and sensor data must arrive within stringent time-to-live (TTL) constraints to remain actionable. This shifts the focus from average throughput to consistent low latency. Maintaining this strict QoS, particularly in the uplink, requires abandoning best-effort, overprovisioned models in favor of guaranteed scheduling, which necessitates substantial reserved capacity or specialized AI-RAN functionalities.
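
The TTL constraint described above can be illustrated with a small sketch. It assumes each frame carries a capture timestamp and a per-frame time budget; the `Frame` fields, the `actionable_frames` helper, and the 20 ms budget are hypothetical names and values chosen for illustration, not part of Nokia's study.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    seq: int            # frame sequence number
    captured_ms: float  # capture time at the device, in milliseconds
    ttl_ms: float       # assumed budget during which the frame is still actionable


def actionable_frames(frames, now_ms):
    """Keep only frames whose age is still within their TTL budget."""
    return [f for f in frames if now_ms - f.captured_ms <= f.ttl_ms]


frames = [
    Frame(seq=1, captured_ms=0.0, ttl_ms=20.0),
    Frame(seq=2, captured_ms=10.0, ttl_ms=20.0),
    Frame(seq=3, captured_ms=25.0, ttl_ms=20.0),
]

# At t = 28 ms, frame 1 is 28 ms old (stale); frames 2 and 3 are still usable.
fresh = actionable_frames(frames, now_ms=28.0)
print([f.seq for f in fresh])
```

A buffered video player would simply play frame 1 late. In a control loop, a late frame has no value, which is why the focus moves from average throughput to per-frame delay.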

Note 1. Physical AI combines sensors, perception, decision-making, and actuators so machines can understand their environment and take physical (real world) action. Physical AI is used by robots, vehicles, drones, industrial machines, and smart infrastructure that generate and consume real-time sensor, video, and control traffic. These systems need tight coupling between low latency, high reliability, and continuous feedback loops because decisions in software immediately affect physical motion or control. Physical AI is different from typical generative AI because the output is not text or images; it is real-world action. That makes network performance critical, especially for uplink-heavy, latency-sensitive traffic where delays can affect safety, control accuracy, and operational efficiency.

“Physical AI introduces the possibility that large-volume uplink video with strict latency requirements will become a meaningful part of mobile traffic, creating both a design challenge and a monetization opportunity,” says Harish Viswanathan, Head of the Radio Systems Research Group at Nokia.

Image Credit: Techslang

Delivering uplink video with sub‑20 ms end-to-end latency can require provisioning three to four times the average uplink capacity. While this level of redundancy is manageable for low-bandwidth services such as voice or control signaling, it becomes prohibitively expensive when supporting high-throughput video streams.

As device densities increase, the required headroom for reserved capacity grows disproportionately, significantly constraining network scalability and driving up cost per bit. This makes Physical AI traffic—characterized by real-time sensor and video inputs for machine analysis—fundamentally different from conventional services, and unsuited to existing best‑effort transport models.
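
To make the cost argument concrete, here is a back-of-the-envelope sketch. It assumes the reserved capacity is simply the average aggregate load multiplied by a headroom factor of 3 to 4. The device counts and bit rates are hypothetical, and the linear model is a simplification: the text above suggests headroom grows faster than linearly with device density.

```python
def reserved_capacity(devices, avg_rate, headroom_factor):
    """Capacity to reserve = average aggregate load x headroom factor.

    The result is in the same unit as avg_rate (e.g., Mbps or kbps).
    """
    return devices * avg_rate * headroom_factor


# Hypothetical cell with 10 cameras, each averaging 8 Mbps of uplink video.
video_low = reserved_capacity(10, 8.0, 3.0)   # 3x headroom, in Mbps
video_high = reserved_capacity(10, 8.0, 4.0)  # 4x headroom, in Mbps

# Hypothetical comparison: 10 voice calls at 24 kbps each, with 4x headroom.
voice_kbps = reserved_capacity(10, 24, 4)

print(f"video: reserve {video_low:.0f} to {video_high:.0f} Mbps for an 80 Mbps average load")
print(f"voice: reserve {voice_kbps} kbps")
```

Under these assumptions the same multiplier costs under 1 Mbps for voice but hundreds of Mbps for video, which is the asymmetry the paragraph above describes.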

To address these challenges, telecom operators are expected to adopt a multi‑layer approach encompassing network architecture, traffic management, and service monetization.

At the Application layer, not all traffic requires identical latency treatment. When video or sensor data is processed by AI rather than consumed by humans, only semantically relevant information may need immediate uplink transmission. This emerging paradigm, known as semantic communication, allows for significant data reduction while preserving information integrity within latency‑critical loops.
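
A toy illustration of the idea follows. Real semantic communication schemes typically rely on learned encoders; this rule-based filter is only a stand-in, and the labels, confidence threshold, and `select_for_uplink` helper are hypothetical.

```python
def select_for_uplink(detections, relevant_labels, min_confidence=0.5):
    """Return only the detections the remote AI loop is assumed to need immediately."""
    return [
        d for d in detections
        if d["label"] in relevant_labels and d["confidence"] >= min_confidence
    ]


# Hypothetical on-device perception output for one camera frame.
detections = [
    {"label": "pedestrian", "confidence": 0.92},
    {"label": "tree", "confidence": 0.88},
    {"label": "vehicle", "confidence": 0.40},
    {"label": "vehicle", "confidence": 0.75},
]

urgent = select_for_uplink(detections, {"pedestrian", "vehicle"})
print([d["label"] for d in urgent])
```

Instead of uploading the full frame, the device sends two small records. Everything else can be dropped or sent later over a best-effort path.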

Within the network domain, established mechanisms such as Quality of Service (QoS) and network slicing remain essential. QoS enables prioritization of specific traffic classes, while slicing supports logically isolated virtual networks with guaranteed service-level attributes—latency, jitter, bandwidth, and reliability.
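
The prioritization half of that statement can be sketched as a strict-priority queue. This is a minimal illustration, not how any particular RAN scheduler works: the class numbers are hypothetical (they are not 3GPP 5QI values), and slicing, which adds isolation between tenants, is not modeled.

```python
import heapq
from itertools import count


class PriorityScheduler:
    """Strict-priority queue: lower class number is served first, FIFO within a class."""

    def __init__(self):
        self._heap = []
        self._order = count()  # tie-breaker that preserves arrival order

    def enqueue(self, traffic_class, packet):
        heapq.heappush(self._heap, (traffic_class, next(self._order), packet))

    def dequeue(self):
        _, _, packet = heapq.heappop(self._heap)
        return packet

    def __len__(self):
        return len(self._heap)


sched = PriorityScheduler()
sched.enqueue(2, "web-page")            # best-effort traffic
sched.enqueue(0, "robot-control")       # latency-critical control
sched.enqueue(1, "uplink-video-frame")  # latency-sensitive video
sched.enqueue(0, "robot-control-2")

served = [sched.dequeue() for _ in range(len(sched))]
print(served)
```

Strict priority protects the critical class but can starve lower classes under load, which is one reason operators pair it with reserved capacity or slicing.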

At the service and business model level, supporting low-latency, bandwidth-intensive applications reshapes network economics. Operators must evolve beyond best‑effort pricing structures toward differentiated service tiers or performance-based charging models aligned with enterprise and industrial use cases.

For the RAN, Physical AI underscores the need for greater programmability and elasticity. Future RAN designs will depend on dynamic resource allocation, real-time traffic classification, and AI-driven orchestration to balance throughput, latency, and reliability at scale.

As Physical AI deployments expand—from autonomous mobility to precision manufacturing and tele‑robotics—managing high‑volume, low‑latency uplink traffic will become a defining capability for next‑generation network strategy and differentiation. Unlike conventional mobile data, Physical AI cannot rely on buffering to manage traffic spikes. The requirement for continuous video and sensor data to arrive within strict time limits to inform real-time actions makes traditional “best-effort” network approaches inefficient and costly.

- Uplink-Centric Demand: Physical AI shifts the network requirement from downlink-heavy (human consumption) to uplink-heavy (machine-generated) traffic.

- Strict Latency & Throughput: Maintaining consistent low latency (e.g., around 20 milliseconds) for high-volume video uploads can require 3x to 4x more capacity than average, making overprovisioning unsustainable.

- Need for Programmable Architectures: To support this, RAN must move toward more flexible, AI-native architectures that prioritize critical data and provide deterministic, rather than best-effort, performance.

- Semantic Communication: To reduce data volume while maintaining performance, the RAN will need to adopt semantic communication—transmitting only the essential data needed for the AI to make decisions.

………………………………………………………………………………………………………………………………………………………..

References:

https://www.nokia.com/asset/215147/

https://telcomagazine.com/news/nokia-report-points-to-ai-driven-shift-in-mobile-traffic

Arm Holdings unveils “Physical AI” business unit to focus on robotics and automotive

Is the “far edge” a bridge to far to cross for AI inferencing? What about “Distributed AI Grids”?

The Financial Trap of Autonomous Networks: Scaling Agentic AI in the Telecom Core

Ericsson and Intel collaborate to accelerate AI-Native 6G; other AI-Native 6G advancements at MWC 2026

NVIDIA and global telecom leaders to build 6G on open and secure AI-native platforms + Linux Foundation launches OCUDU

Comparing AI Native mode in 6G (IMT 2030) vs AI Overlay/Add-On status in 5G (IMT 2020)

AI-RAN Reality Check: hype vs hesitation, shaky business case, no specific definition, no standards?

Analysis: Nvidia’s $2 billion investment in Marvell; NVLink Fusion ecosystem & RAN vendor silicon strategy

NVIDIA just announced a $2 billion investment in custom silicon developer Marvell Technology (NASDAQ:MRVL). This comes right on the heels of its $2 billion investments in Lumentum and Coherent and its $1 billion investment in Nokia.

- NVIDIA is also deepening its relationship with Marvell within its NVLink Fusion ecosystem. NVLink is NVIDIA’s proprietary scale-up networking system. Scale-up refers to connecting computing components within a rack rather than between racks.

- NVLink Fusion essentially allows customers to connect non-NVIDIA components to NVIDIA components within the same rack. Thus, customers can mix and match technologies from different vendors when they make a purchase. However, each platform does need to have at least one NVIDIA component.

- NVLink Fusion is in opposition to the UALink consortium, of which NVIDIA is not a member. Key NVIDIA competitors like Broadcom (NASDAQ:AVGO) and Advanced Micro Devices (NASDAQ:AMD) back UALink. Companies in this group have the same goal as NVIDIA does for NVLink Fusion: to allow customers to easily connect their devices together within racks.

- UALink’s goal is to reduce NVIDIA’s power by providing an alternative to NVLink Fusion. One of the key benefits to data center operators, which buy AI chips, is avoiding vendor lock-in. By being able to source components from a wide range of companies, there is greater competitive pressure, and thus more room to negotiate. Building AI infrastructure solely on NVLink grants NVIDIA massive bargaining power.

Photo Credit: Marvell Technology

Marvell has been a member of both NVLink and UALink, one of the few major chip companies that can make this claim. Now, NVIDIA is more formally recognizing Marvell’s place within NVLink, potentially expanding its ability to win customers. Meanwhile, Marvell strengthens its standing in the AI market. From Marvell’s perspective, the deal has significant benefits. Even though Marvell was already a part of NVLink Fusion, the company’s place within this ecosystem is now elevated. Not all companies in NVLink Fusion have received a multi-billion-dollar investment from NVIDIA or their own dedicated announcement.

These factors suggest that NVIDIA is particularly confident in Marvell’s solutions and that it will put in more effort to sell them to customers. NVIDIA now has 2 billion more reasons to do just that. This is particularly noteworthy, as MediaTek and Alchip Technologies are also in NVLink Fusion, and compete with Marvell in custom silicon.

In fact, Alchip has been the source of considerable volatility in Marvell shares over the recent past, as some investors believed that the firm would siphon off much of the custom chip business that Marvell has built with Amazon. However, Marvell’s last earnings report helped to significantly quell these fears. Additionally, Marvell will add $2 billion to its balance sheet. That is significant: the company ended last quarter with cash and equivalents of just $2.64 billion, so the investment adds meaningful financial flexibility.

The announcement also outlines new products outside of custom silicon that NVIDIA and Marvell will collaborate on. This includes scale-up networking components, optical interconnect solutions, and silicon photonics. This comes just two months after Marvell completed its acquisition of Celestial AI, which it calls a “pioneer in optical interconnect technology for scale-up connectivity.”

Expanding the language of the partnership to include these very products suggests that NVLink Fusion could offer a significant pathway to grow this recently acquired business. Overall, between the $2 billion investment, the dedicated announcement, and the expanded partnership scope, Marvell now looks like the custom silicon provider of choice within NVLink Fusion.

NVIDIA is telling its customers that its technology and Marvell’s can integrate seamlessly. This could encourage future customers who want to use both companies’ products to do so on NVLink. As a result, Marvell could see revenue benefits by getting in on deals it otherwise might not have participated in. This logic extends to customers interested in Marvell’s optical interconnect and scale-up solutions.

……………………………………………………………………………………………………………………………………………………………………………………………………………………………..

RAN Silicon Strategy:

- Ericsson continues to prioritize its in-house custom ASICs, dismissing claims that the R&D required is unsustainable. Michael Begley, Ericsson’s Head of RAN Compute, noted at MWC that their 30-year legacy of iterative development creates a cost efficiency that a “blank sheet” competitor couldn’t match. Despite this, Ericsson is diversifying through its Cloud RAN portfolio. Their vRAN software, currently optimized for Intel x86 architectures, is being architected for portability across AMD and Nvidia (Arm-based) platforms. While Ericsson frames this as addressing varied customer requirements, industry observers view it as a strategic hedge against the shifting hardware landscape.

- Conversely, Nokia is signaling a pivot away from proprietary hardware. Following a significant investment from Nvidia, Nokia’s leadership has articulated a shift toward general-purpose hardware and decoupled software models. Although Nokia remains contractually tied to Marvell for custom silicon through the mid-2030s, the potential to offload intensive Massive MIMO processing to Nvidia GPUs suggests a technical path toward phased-out reliance on traditional custom ASICs.

- Furthermore, Ericsson utilizes look-aside acceleration via custom ASICs, while Nokia commits to in-line acceleration using SmartNICs for improved performance and TCO. Ericsson prioritizes in-house silicon for efficiency, whereas Nokia is shifting toward general-purpose hardware and Nvidia-backed GPU acceleration.

- Meanwhile, Samsung’s RAN silicon strategy has fundamentally inverted the traditional industry model: virtualized RAN (vRAN) is now its primary offering, with purpose-built custom silicon moved to the periphery. As of early 2026, Samsung is the global leader in vRAN deployments, surpassing 53,000 active sites. For vRAN, Samsung is aggressively diversifying its silicon partners beyond Intel to include AMD (x86) and NVIDIA (Arm-based Grace CPUs) to prevent hardware lock-in. The South Korean company is positioning its vRAN as the “AI-native” foundation for 5G-Advanced and 6G, aiming for fully autonomous “autopilot” networks by 2027 through its CognitiV NOS (Network Operations Suite).

- Samsung is leveraging its internal silicon foundry to develop 2nm Exynos chips and Silicon Photonics (“Dream Chip”), with a mass production target of 2028 to integrate optical transmission directly into AI accelerators and RAN modules. Its new Network in a Server (NIS) solution consolidates Core, vCU, vDU, and AI agents into a single 1U server, targeted at private 5G and enterprise edge use cases. While Samsung has pivoted heavily toward a vRAN-first strategy, it still maintains a portfolio of purpose-built (non-vRAN) equipment that utilizes custom ASICs. However, the role of these chips is shifting from a primary focus to a supporting role as the industry moves toward general-purpose hardware.

- Legacy and Specialized Support: Samsung continues to sell and support its traditional Baseband Units (BBUs), which are powered by proprietary silicon developed in partnership with companies like Marvell. These ASICs are still used for high-density, performance-critical deployments where the operator has not yet chosen standard CPUs.

- Hardware Acceleration: In non-vRAN scenarios, custom ASICs handle the most computationally intensive Layer 1 (L1) tasks, such as beamforming for Massive MIMO and FEC (Forward Error Correction).

- Phased-Out Trajectory: Samsung executives have acknowledged that the era of proprietary hardware is likely nearing its end. Alok Shah, VP of Network Strategy at Samsung, has noted that while they still provide purpose-built BBUs, it is only a “matter of time” before vRAN becomes the universal standard.

……………………………………………………………………………………………………………………………………………………….

References:

https://www.lightreading.com/5g/nvidia-backed-marvell-pitches-one-chip-to-rule-the-ran

RAN Silicon Rethink- Part II; vRAN and General-Purpose Compute

RAN silicon rethink – from purpose built products & ASICs to general purpose processors or GPUs for vRAN & AI RAN

AI-RAN Reality Check: hype vs hesitation, shaky business case, no specific definition, no standards?

Ericsson goes with custom silicon (rather than Nvidia GPUs) for AI RAN

AT&T and Ericsson boost Cloud RAN performance with AI-native software running on Intel Xeon 6 SoC

Analysis: Rakuten Mobile and Intel partnership to embed AI directly into vRAN

Is the “far edge” a bridge to far to cross for AI inferencing? What about “Distributed AI Grids”?

Analysis: Edge AI and Qualcomm’s AI Program for Innovators 2026 – APAC for startups to lead in AI innovation

Will “AI at the Edge” transform telecom or be yet another telco monetization failure?

Is the “far edge” a bridge to far to cross for AI inferencing? What about “Distributed AI Grids”?

How Far is the Far Edge?

As major telcos size up distributed edge sites for a possible AI inferencing model, they’re trying to determine how far out the right place is in their networks to invest in AI computing capacity. According to Light Reading, the “far edge” is a divisive option for inferencing. According to Omdia, owned by Informa, the far edge includes: radio access network (RAN) cell sites, aggregation hubs, exchange offices, optical line terminal (OLT) nodes, and Tier 2 metro hubs.

Many telcos are struggling to define how far the edge is from customer premises and how to serve various use cases with compute and intelligence. It seems that a 5G SA core with network slicing would be mandatory to support multiple unique use cases, each with different QoS requirements.

According to Omdia’s Telco Edge Computing Survey last year, just 15% of telcos ranked the network far edge as the top location for most AI inferencing, while even fewer (11%) said the network near edge (which includes central offices, headend sites and large telco data centers) would be the main spot. The results showed AI inferencing is expected to be handled mostly on the end devices themselves and at the enterprise edge (e.g., offices, campus or manufacturing sites).

Kerem Arsal, Omdia senior principal analyst for telco enterprise and wholesale, predicted in a research note that this year will see telcos split into camps of “believers” and “doubters” of the far edge.

Image Credit: Sphere

…………………………………………………………………………………………………………………………………………………………………………………………………………………..

AT&T VP Yigal Elbaz, speaking at the recent New Street Research and BCG Global Connectivity Leaders Conference, expressed a cautious view on AI compute at the “far edge,” questioning how far the edge truly needs to extend to serve specific use cases effectively. He said the following (Source: Light Reading):

“The proliferation of compute and high-performing compute across the nation, in all metros is just happening, with a software layer on top of this [and] with the tools that developers need. So, I am not sure that there’s much value in extending that compute all the way to the far edge just to save another millisecond or two milliseconds of latency.”

Elbaz said AT&T’s fiber and wireless networks can provide the “deterministic experience” needed between any new use cases and help them to “intelligently connect to the right model that they use, the context or the infrastructure that they need because that’s going to be heavily distributed across the US.”

“There’s no doubt that AI is going to be embedded into wireless networks, and we’re going to call it AI-native and combine the physical space with the intelligence of the network. This is all true,” said Elbaz.

………………………………………………………………………………………………………………………………………………………………………………………………………………………..

Distributed AI Grids:

- Ethernet with RDMA (RoCE): The foundation is built on Nvidia Spectrum-X Ethernet, which utilizes RDMA over Converged Ethernet (RoCE). This allows for direct memory access between edge GPUs (e.g., Nvidia RTX PRO 6000 Blackwell Server Edition) and the network core, bypassing CPU overhead to achieve near-line-rate performance.

- Scale-Across Networking: Using Nvidia Spectrum-XGS, the architecture extends standard RoCE to scale across geographically distributed sites. This creates a unified “AI Factory Grid” where remote edge nodes function as a single, programmable compute substrate.

- Silicon One Routing: Cisco’s Silicon One-based routing is utilized for AI-optimized traffic management, providing the high-speed, high-density throughput required for token-intensive inference workloads.

- Zero Trust & Secure Pathways: The interconnect includes a Zero Trust security layer embedded directly into the fabric. It utilizes localized traffic breakout and policy-enforced pathways to ensure that sensitive IoT and video data (such as public safety feeds) remain within the customer’s secure domain at the network edge.

- Orchestration Control Plane: A workload-aware control plane manages these protocols to intelligently route tasks based on real-time KPIs (latency, cost-per-token, and data sovereignty), ensuring that “mission-critical” inference happens at the optimal node.

Key risks of this approach include:

- Proprietary Software Lock-in: Integrating network functions into a proprietary ecosystem (like Nvidia’s CUDA or AI Aerial) can create a “subscription trap,” where software is inseparable from specific hardware, making it nearly impossible to swap vendors without a total architectural overhaul.

- Data Fragmentation: Deploying AI across a distributed grid often leads to fragmented data sets across legacy and new multi-vendor platforms, which can result in inaccurate AI models and increased operational complexity.

- Standardization Lag: While industry bodies like the GSMA are pushing for Open Telco AI standards, the rapid deployment of proprietary AI systems often outpaces these frameworks, leading to entrenched, incompatible systems that require significantly more resources to reconcile later.

- Integration with Legacy Systems: Modern “agentic AI” and AI-native stacks often struggle to orchestrate processes across siloed legacy infrastructure, creating rigid operational environments that prevent the seamless flow of data needed for automated network troubleshooting.
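
The workload-aware routing described in the Orchestration Control Plane bullet can be sketched as a two-step selection: apply the hard constraints (latency bound, data sovereignty), then pick the cheapest eligible node. This is a minimal sketch under stated assumptions. The `Node` fields, node names, and KPI values are hypothetical, and no vendor's control plane is implied to work this way.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    region: str                # used as a simple data-sovereignty constraint
    latency_ms: float          # measured round-trip latency to the workload source
    cost_per_1k_tokens: float  # assumed inference cost at this node


def pick_node(nodes, max_latency_ms, allowed_regions):
    """Filter by hard constraints (latency, sovereignty), then choose the cheapest node."""
    eligible = [
        n for n in nodes
        if n.latency_ms <= max_latency_ms and n.region in allowed_regions
    ]
    if not eligible:
        return None
    return min(eligible, key=lambda n: n.cost_per_1k_tokens)


nodes = [
    Node("far-edge-paris", "EU", 4.0, 0.9),
    Node("metro-frankfurt", "EU", 12.0, 0.5),
    Node("central-virginia", "US", 95.0, 0.2),
]

relaxed = pick_node(nodes, max_latency_ms=20.0, allowed_regions={"EU"})
strict = pick_node(nodes, max_latency_ms=5.0, allowed_regions={"EU"})
print(relaxed.name, strict.name)
```

With a 20 ms budget the cheaper metro node wins. Only a 5 ms budget forces the workload out to the far edge, which mirrors the question AT&T raises about whether the last few milliseconds justify the investment.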

Bottom Line: While the AI Grid may offer a more viable roadmap than AI-RAN, there is insufficient industry discourse regarding the strategic risks of a global, geographically distributed computing platform—as Nvidia defines it—reliant on a single-vendor hardware stack. Although Nvidia currently maintains undisputed market dominance, historical precedents such as Intel serve as a cautionary tale; long-term dominance is never guaranteed, and even market leaders face potential obsolescence. Furthermore, Nvidia’s practice of providing capital injections to entities that subsequently re-invest those funds back into Nvidia’s own ecosystem raises significant concerns regarding market sustainability and long-term financial health.

References:

https://www.lightreading.com/ai-machine-learning/at-t-cto-casts-doubt-on-ai-compute-at-the-far-edge

https://www.lightreading.com/5g/nvidia-lines-up-ai-grid-as-orange-cto-echoes-the-ai-ran-doubts

Edge AI Computing Explained: Key Concepts and Industry Use Cases

Will “AI at the Edge” transform telecom or be yet another telco monetization failure?

Analysis: Edge AI and Qualcomm’s AI Program for Innovators 2026 – APAC for startups to lead in AI innovation

Private 5G networks move to include automation, autonomous systems, edge computing & AI operations

Nvidia AI-RAN survey results; AI inferencing as a reinvention of edge computing?

Nvidia’s networking solutions give it an edge over competitive AI chip makers

IDC Survey of Networking Leaders: Enterprise AI progress stalls despite ambitious goals

New IDC research released in April 2026 highlights a growing disconnect between ambitious enterprise AI goals and the reality of their technical execution. The 2026 IDC AI in Networking Special Report (see the LinkedIn video) [1.] found that organizations expecting to move from early and selective AI use for business and IT initiatives to more advanced deployments largely haven’t. The result is a widening gap between intent and execution that is becoming harder to ignore. It is driven by a mismatch between ambitious goals and the realities of legacy infrastructure, which cannot handle the data demands of production-grade models.

Despite high expectations, many organizations have seen their AI progress stall over the last 18 months, with “select use” adopters failing to advance to more “substantial” deployments. A critical shortage of AI-experienced personnel, combined with lagging security and governance controls, has caused widespread “pilot paralysis” across most enterprises. To overcome this, organizations are shifting toward “AI factories” to create a repeatable, governed pipeline for deploying AI.

Note 1. IDC’s 2026 AI in Networking Special Report is a report driven by a worldwide survey of 500+ enterprise network executives and experts. The report covers both the impact and plans for supporting AI workloads across the network and using AI-powered networking solutions. The focus of this research is comprehensive, covering datacenters, cloud services, multi-cloud environments, network core and edge, and network management.

…………………………………………………………………………………………………………………………………………………………………………………………………………………………………………

Mark Leary, IDC research director, Network Observability and Automation:

“Many solution suppliers are prioritizing a platform approach to the challenges associated with moving AI workloads into production. This survey of networking leaders highlights the shift in preference from platforms to best-in-class solutions when supporting AI workloads across their networks. As certain functional requirements intensify, as IT staff experience and expertise build, and as platforms fall short in delivering expected advantages, IT organizations are more willing to take on the added responsibilities associated with assembling their own mix of best-in-class solutions. For the supplier, the challenge is to avoid developing and delivering a platform that is classified as a jack-of-all-trades and master of none.”

“Agentic AI is to have a profound effect on the network infrastructure and on networking staff. Two years ago, AI assistants were labeled leading edge when they offered natural language processing for operator interactions and network management guidance driven by technical manual content. How things have changed! Agentic AI is no longer just a passive informer and instructor but an active intelligent virtual network engineer. Agents gather and process comprehensive network data, develop deep and precise insights, and determine and, increasingly, execute needed network management actions. Whether fixing a network problem, activating a network service, optimizing a network configuration, or responding to a developing network condition, agentic AI solutions are proving more and more useful across the entire network and the entire set of tasks required to engineer and operate the network.”

While this IDC Survey Spotlight offers only an overview of responses relating to agentic AI, detailed results are available by geographic region, select country, company size, major vertical industries, respondent role, and the AI maturity level of the respondent’s organization.

…………………………………………………………………………………………………………………………………………………………………………………………………………………………………………

Organizations are pursuing AI in networking across two categories:

1. Supporting AI workloads across network infrastructure, and

2. Applying AI to network operations.

But in both cases, progress is constrained by persistent challenges. “2026 is when organizations find out if AI in networking delivers real operational impact—or remains stuck in pilot mode,” Leary said in the referenced LinkedIn Video.

Source: IDC

……………………………………………………………………………………………………………………………

Security remains the top concern among enterprises, both as a barrier to deployment and a primary use case for AI itself. “You have to fight AI with AI from a network security perspective,” said Brandon Butler, senior research manager at IDC. “There’s a realization that nefarious actors are leveraging AI themselves. The pressure is already on the network. The question now is whether organizations can keep up with what AI is demanding of their infrastructure,” he added.

Integration with existing systems and a shortage of skilled talent follow close behind. “Most folks don’t feel their staff can fully evaluate and select the right solutions,” Leary said. As a result, many organizations are turning outward for help:

- 81% say they are increasing spending on managed service providers (MSP) to support AI initiatives.

- 89% of data centers expect to increase bandwidth by at least 11% within the next year, driven by AI workloads.

- That demand extends beyond individual facilities, with 91% expecting similar growth in inter-data center connectivity, highlighting the strain on distributed architectures.

- Nearly half of respondents (46%) prefer AI systems that can both determine and execute network actions autonomously.

- Another 41% favor a guided approach, while 13% prefer no AI involvement.

Cloud environments are seeing sharper increases in AI use. Organizations anticipate an average 49% rise in bandwidth for cloud connectivity over the next year. “The cloud is almost always involved,” Leary said. “The biggest group mixes one cloud platform with one or more data centers.”

Beyond the data center and cloud, the network edge is emerging as the next major growth area. Today, 27% of organizations have deployed AI workloads at the edge, and 54% plan to do so within two years. Butler said: “Folks who are leveraging AI more extensively are already pushing workloads to the edge. We see this as a leading indicator of where the market is going.”

“Two years in a row, the largest group said they want AI to both determine and execute actions. It was honestly surprising,” he added.

Enterprise edge bandwidth is projected to grow by an average of 51% in the next year. As AI becomes more distributed, network teams will need to manage greater complexity across environments while maintaining performance and security.

…………………………………………………………………………………………………………………………………………………………………………….

When assessing expected ROI from AI in networking, IDC survey respondents focused on elevating IT capabilities, with 31% prioritizing superior service levels and 30% focusing on operational efficiency. These outcomes ranked above worker productivity and revenue, suggesting that leaders are strategically utilizing AI to enhance foundational operational workflows. Notably, reducing operating costs ranked seventh, suggesting a focus on strategic value rather than immediate expense reduction.

Source: IDC

……………………………………………………………………………………………………

IDC Research identified specific applications—from automated configuration validation to AI-enhanced threat response—as catalysts for measurable performance gains and the organizational trust essential for broader implementation. For network executives, this phased approach represents the most strategic methodology for achieving long-term operational objectives.

“It doesn’t have to be handing the keys of your kingdom to AI to really get some benefits from these AI tools,” Butler concluded.

……………………………………………………………………………………………………………………………………………………………………………………….

References:

https://www.networkworld.com/article/4152655/ai-for-it-stalls-as-network-complexity-rises.html

Inside TM Forum’s Catalyst project “Living Networks – Phase III”

TM Forum’s [1.] Catalyst project “Living Networks – Phase III” brings together a broad ecosystem of communications service providers and technology innovators to advance autonomous, resilient, and energy-efficient network operations. It will be showcased at DTW Ignite 2026, taking place June 23–25 in Copenhagen.

Note 1. TM Forum is a global alliance of 800+ organizations across the connectivity ecosystem. Members include the top 10 Communication Service Providers, top three hyperscalers, leading Network Equipment Providers, and a wide range of vendors, consultancies, and system integrators.

Image Credit: TM Forum

………………………………………………………………………………………………..

“Living Networks – Phase III” builds on earlier Catalyst work focused on intent-based automation and traffic resilience. According to TM Forum and project materials, Phase III advances that foundation toward governed, adaptive intelligence. It introduces a cloud-native, Kubernetes-based architecture with stronger data governance to help networks predict failures, optimize resources, and support programmable, platform-based business models such as Connectivity-as-a-Service.

The project is designed to help operators improve resilience, reduce operational effort, and lower energy consumption. It also enables them to scale autonomous operations safely across increasingly complex multi-domain environments.

Digital Global Systems (DGS), a company that uses AI/ML for RF awareness and spectrum optimization, is collaborating with a distinguished group of global Catalyst participants, including Beyond Now, Chunghwa Telecom, Globetom, Infosim, Julius-Maximilians-Universität Würzburg, MTN Nigeria, MTN South Africa, NTT Group, Orange, Telekomunikasi Indonesia International, Seacom, and Telecom Italia. TM Forum identifies several of these operators as project champions, underscoring the depth of CSP engagement behind the initiative.

The Catalyst project aligns closely with DGS’s mission to bring AI-powered intelligence to complex communications environments. DGS develops real-time RF Awareness and spectrum optimization technologies that help operators detect issues early, improve reliability, and make communications infrastructure more resilient and efficient.

“Telecom networks are becoming too dynamic and too essential to be managed with yesterday’s operating models,” said Armando Montalvo, CTO of Digital Global Systems. “By participating in Living Networks – Phase III with leading CSPs and technology innovators from around the world, DGS is helping advance a future in which networks become more autonomous, more resilient, and more responsive to both operational demands and business opportunities. This kind of collaboration is exactly what the industry needs to move from automation experiments to real, scalable transformation.”

“Living Networks – Phase III demonstrates what becomes possible when CSPs, research institutions, and specialized technology providers work together around a common vision for autonomous networks,” said Dr. David Hock, Director of Research at Infosim. “The collaboration within this Catalyst is especially powerful because it connects innovation with practical operational outcomes, helping the industry move toward more trusted, scalable, and intelligent network automation.”

The Catalyst focuses on a set of pressing industry challenges, including rising service demands, sustainability pressures, operational complexity, and the business impact of network outages. By combining AI, digital twins, multi-domain orchestration, and stronger governance over data and automation workflows, the team aims to show how operators can reduce mean time to repair and improve SLA performance. It also highlights how operators can create new monetization opportunities across partner ecosystems.

DGS said the project is another example of how collaboration across the telecom ecosystem can accelerate innovation beyond what any one company can achieve alone. At DTW Ignite in Copenhagen this June, the team will demonstrate how communications networks can evolve from static infrastructure into adaptive, intelligent platforms. These platforms will support the next generation of digital services.

About Digital Global Systems (DGS):

Digital Global Systems (DGS) delivers AI-driven RF awareness and spectrum optimization solutions that power resilient communications for governments, industries, and communities worldwide. With more than 725 issued and pending patents, DGS helps nations and enterprises rebuild stronger, smarter, and more connected.

References:

Deloitte and TM Forum : How AI could revitalize the ailing telecom industry?

GSMA, ETSI, IEEE, ITU & TM Forum: AI Telco Troubleshooting Challenge + TelecomGPT: a dedicated LLM for telecom applications

Broadband Forum new work areas to enable broadband services & apps

Verizon’s 6G Innovation Forum joins a crowded list of 6G efforts that may conflict with 3GPP and ITU-R IMT-2030 work

TM Forum Survey: Communications Service Providers Struggle with Business Case for NFV & Digital Transformation

Will 2026 be the “Year of the AI Ontology” for telecoms?

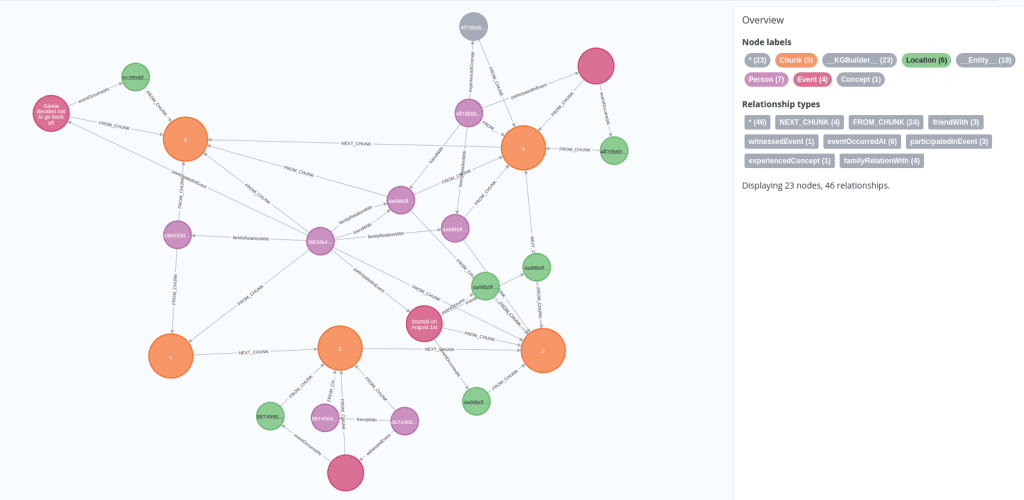

Overview:

For the telecommunications industry, many pundits say 2026 will be the year of “AI Ontology [1.],” primarily because a standardized knowledge plane is now seen as the “ultimate driver” for reaching higher levels of network autonomy. Industry experts from companies like Telstra and Amdocs emphasize that for agentic AI to move from isolated pilots to enterprise-scale operations, it requires a structured, explainable, and typed world model—an ontology—to unify data across fragmented systems.

Note 1. An ontology in AI is a formal, machine-readable framework that defines the concepts, properties, and relationships within a specific domain to enable knowledge sharing, reasoning, and semantic understanding. It structures data into a network of “things” (classes) rather than just files, acting as a “Rosetta stone” that allows AI systems to understand context, infer conclusions, and act on data.
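
To make the note above concrete, here is a minimal sketch of an ontology as typed classes and relationships, with one inference step. The class names (`NetworkElement`, `Router`, `EdgeRouter`), the `connects_to` relation, and the instance names are hypothetical illustrations, not taken from any operator or TM Forum model.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Ontology:
    """Toy ontology: classes with single inheritance plus typed relations."""
    parents: dict[str, Optional[str]] = field(default_factory=dict)
    instances: dict[str, str] = field(default_factory=dict)
    relations: set[tuple[str, str, str]] = field(default_factory=set)

    def add_class(self, name: str, parent: Optional[str] = None) -> None:
        self.parents[name] = parent

    def add_instance(self, name: str, cls: str) -> None:
        if cls not in self.parents:
            raise KeyError(f"unknown class: {cls}")
        self.instances[name] = cls

    def relate(self, subject: str, predicate: str, obj: str) -> None:
        self.relations.add((subject, predicate, obj))

    def is_a(self, instance: str, cls: str) -> bool:
        """Infer class membership by walking up the subclass chain."""
        current = self.instances[instance]
        while current is not None:
            if current == cls:
                return True
            current = self.parents[current]
        return False


# Hypothetical domain model (all names are assumptions for illustration).
onto = Ontology()
onto.add_class("NetworkElement")
onto.add_class("Router", parent="NetworkElement")
onto.add_class("EdgeRouter", parent="Router")
onto.add_instance("er-01", "EdgeRouter")
onto.add_instance("cell-17", "NetworkElement")
onto.relate("cell-17", "connects_to", "er-01")

# Nobody stated that er-01 is a NetworkElement; it follows from the hierarchy.
print(onto.is_a("er-01", "NetworkElement"))  # True
print(onto.is_a("cell-17", "Router"))        # False
```

Production ontologies typically use richer formalisms such as OWL or property graphs. The point here is only that class membership can be inferred from structure rather than stored file by file.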

…………………………………………………………………………………………………

Several network providers are adopting a “standardized, ontology-driven knowledge plane” to enable agentic AI to operate across traditionally siloed network systems. This shift in 2026 is driven by the need for Level 4 and 5 network autonomy, where agents require a common language to reason about network states and business intents.

1. Mark Sanders, Telstra’s chief architect, talked about the emergence of a structured, explainable knowledge plane that removes silo barriers between agents, freeing them up to become the workhorses of network automation. “We think for the autonomous network to reach level four or five is going to require a standardized, ontology-driven approach on the knowledge plane,” said Sanders at a recent Ericsson conference, touting this approach as the ultimate driver in next-level autonomous networks.

2. For BT, agentic AI is already yielding tangible results in IT service desks, especially as organizations shift from assistance to execution, according to Girish Mahajan, senior leader for mobile AI data/automation. In particular, AI agents have reduced trouble ticket resolution times. “It has reduced the time of the manual effort, and it has also increased efficiency of the service desk,” he said. However, the same autonomy that drives value also introduces unpredictability.

“The outcome of agentic AI is something unpredictable because it’s continuously adapting during execution,” he said, adding a call for better design principles. “We need reflection-based architecture, and we need better AI/human collaboration. AI agents should learn from their actions and should work along with humans in their day-to-day.”

3. For Vodafone, work has revolved around lighthouse projects: small-scale efforts to demonstrate the value of a larger business use case.

“It’s quite a mundane use case around energy cost recovery. So obviously, energy is a huge operational expense for our industry,” said Simon Norton, digital/OSS engineering director, Vodafone Group. “It’s very complex, especially when you’re working in that multi-market environment, to manually compare line by line with energy bills against your own data sets.”

Vodafone’s AI agents, therefore, have been automatically ingesting bills and comparing them to identify any tariff anomalies.

“It’s mundane but actually super valuable,” said Norton, who stressed operators should find a project with a clear value proposition and get it out into production quickly. “You build the credibility, you start to get the funding into the system, and it buys you the time to work on that longer-term strategy.”

………………………………………………………………………………………..

- From Assistant to Doer: AI is evolving from a “helper” that provides insights to a “doer” that autonomously observes, decides, and executes actions within governed boundaries.

- Multi-Agent Orchestration: 2026 will see the rise of coordinated multi-agent ecosystems. These systems require an ontology to ensure that a “planner agent” can accurately break down goals for specialized “worker agents” without semantic confusion.

- Intent-Based Orchestration: To ensure network stability, telcos are adopting intent-based orchestration layers. These layers use ontologies to provide the deterministic, model-driven framework necessary to ground agent actions in real-world business intent.

- Network Autonomy: CSPs are aiming for TM Forum Level 3 or 4 autonomy by late 2026, using agents to turn intent into outcomes in live networks.

- Operational Leverage: Rather than massive headcount cuts, agentic AI is providing “operational leverage,” allowing teams to manage growing network complexity with the same workforce.

- Measurable ROI: Investments are focusing on high-impact areas like autonomous incident handling (30-40% cost reduction) and predictive maintenance (up to 40% fewer outages).

- Structured Knowledge Plane: Operators are shifting toward a standardized, ontology-driven knowledge plane to remove silo barriers between agents. This allows multiple specialized agents to collaborate on “broader, bigger outcomes” like root cause analysis across billing, CRM, and network systems.

- Enabling Agentic Autonomy: While 2025 focused on “agentic AI” as a buzzword, 2026 is about the foundational infrastructure—specifically graph-based data systems and digital twins—that gives agents the “executable semantics” they need to plan and act safely.

- Unified Truth for Agents: Without a central ontology, horizontal AI platforms often suffer from “agent drift,” where different agents interpret the same business logic (e.g., “unlimited plan”) differently, leading to billing and provisioning errors.
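
The “agent drift” problem in the last bullet can be sketched in a few lines. In this hypothetical example, a billing agent and a provisioning agent both resolve the term “unlimited plan” through one shared, typed definition instead of each hard-coding its own interpretation. The field names and the 50 GB throttle threshold are assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PlanDefinition:
    """Single shared definition of a commercial term (hypothetical fields)."""
    name: str
    data_cap_gb: Optional[float]        # None means no hard cap
    throttle_after_gb: Optional[float]  # None means never throttled


# One source of truth that every agent consults (values are assumptions).
SHARED_ONTOLOGY = {
    "unlimited plan": PlanDefinition("unlimited plan", None, 50.0),
}


def billing_agent(term: str, usage_gb: float) -> str:
    """Decide on overage charges using the shared definition."""
    plan = SHARED_ONTOLOGY[term]
    over_cap = plan.data_cap_gb is not None and usage_gb > plan.data_cap_gb
    return "charge overage" if over_cap else "no overage"


def provisioning_agent(term: str, usage_gb: float) -> str:
    """Decide on throttling using the same shared definition."""
    plan = SHARED_ONTOLOGY[term]
    throttled = (plan.throttle_after_gb is not None
                 and usage_gb > plan.throttle_after_gb)
    return "throttle" if throttled else "full speed"


print(billing_agent("unlimited plan", 80.0))       # no overage
print(provisioning_agent("unlimited plan", 80.0))  # throttle
```

Because both agents read the same record, a change to the plan's definition propagates to billing and provisioning together rather than drifting apart.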

Ericsson’s View:

Hassan Iftikhar, Ericsson’s head of product domain data & analytics, called for better hyperscaler collaboration on scale, foundational cloud, and AI capabilities.

“The AI tooling, the security framework, we use those to industrialize and put agents into production… It’s pretty much an ecosystem that works together,” he said. At the panel, the data head revealed the vendor’s role in the agentic ecosystem through the use case of one operator needing help with catalog management, as well as scarce developer skills.

“They wanted to take the pain out of product configuration. So we designed a multi-agentic system where it basically helps product managers and marketers to configure and publish new instances through an actual language. So very complex catalog engineering, which can take weeks, is reduced to hours where you can search for reuse and launch.”

Iftikhar also revealed an OSS tool to help one operator’s engineers to diagnose and resolve issues within their operational instances – resulting in an agent that was seemingly too autonomous for the client.

“We put this use case together, basically taking an intent from an operations engineer, such as data diagnostics, and into it, we built the ability to take remediation actions automatically. What we sort of decided from that was a bit of a step too far to just throw that to an operations department for it to autonomously take steps. So we actually had to go in and build guardrails to limit that capability to a human oversight capability.”

“I think what we learned is that we have to sort of build that confidence in the team step by step before we can actually go to fully autonomous operation. Our learning from adjusting that use case was to be practical and adapt very quickly to what the business really needs.”

…………………………………………………………………………………

References:

https://www.sdxcentral.com/analysis/has-telco-already-faced-the-year-of-ai-agents/

The Financial Trap of Autonomous Networks: Scaling Agentic AI in the Telecom Core

Telecom operators investing in Agentic AI while Self Organizing Network AI market set for rapid growth

Nokia to showcase agentic AI network slicing; Ericsson partners with Ookla to measure 5G network slicing performance

T-Mobile US announces new broadband wireless and fiber targets, 5G-A with agentic AI and live voice call translation

Ericsson integrates Agentic AI into its NetCloud platform for self healing and autonomous 5G private networks

Agentic AI and the Future of Communications for Autonomous Vehicles (V2X)

AWS to deploy AI inference chips from Cerebras in its data centers; Anapurna Labs/Amazon in-house AI silicon products

Using AI, DeepSig Advances Open, Intelligent Baseband RAN Architectures

Using advanced AI techniques, DeepSig has reportedly managed to eliminate a mobile network’s pilot signal, thereby removing signaling overhead without degrading overall performance. Founded in 2016, the U.S.-based startup occupies a leading position at the intersection of artificial intelligence (AI) and the radio access network (RAN), developing data-driven models that could supplant traditional, human-engineered signal processing algorithms.

This work has become especially relevant as the telecom industry moves toward open and software-defined RAN architectures. DeepSig is now a visible contributor to OCUDU (Open Centralized Unit Distributed Unit), an open-source initiative announced by the Linux Foundation in collaboration with the U.S. Department of Defense and its FutureG ecosystem partners to accelerate open CU/DU development for 5G and early 6G systems. OCUDU is intended to establish a carrier-grade reference platform for baseband software, with support for AI-based algorithms and solutions embedded in the RAN compute stack.

As AI becomes a central theme across the telecom ecosystem, DeepSig has rapidly moved from relative obscurity to prominence through collaborations with major industry and government stakeholders. Most recently, the company emerged as a key contributor to OCUDU, which the Linux Foundation and the U.S. Department of Defense (DoD) announced ahead of MWC Barcelona 2026. The program’s goal is to introduce open-source software elements into the RAN baseband domain, an area historically dominated by proprietary offerings from Ericsson, Nokia, and Samsung. By lowering barriers to entry, OCUDU aims to foster innovation and enable smaller players like DeepSig to participate more freely in the U.S. baseband ecosystem.

Image Credit: DeepSig

DeepSig was identified, alongside Ireland-based Software Radio Systems (SRS), as one of two startups selected to deliver OCUDU’s initial software stack. “The National Spectrum Consortium had an RFQ for developing an open-source stack,” explained Jim Shea, DeepSig’s CEO. “SRS already had a capable baseline, but it needed to be elevated to carrier-grade—adding new features and strengthening reliability,” he added.

Meanwhile, major vendors Ericsson and Nokia were named “premier members” of the new OCUDU Ecosystem Foundation. While both could, in principle, leverage the platform to integrate third-party components into their baseband systems, industry observers remain skeptical that these incumbents will fully embrace open-source alternatives over their established proprietary stacks. In comments at MWC, Nokia CEO Justin Hotard characterized OCUDU as a welcome ecosystem evolution to accelerate innovation but clarified that “not everything necessarily needs to be open source.”

Driven in part by DoD interests, OCUDU reflects broader U.S. government ambitions to ensure that 5G and future 6G networks remain open to domestic innovation, particularly for defense and mission-critical use cases. For vendors like Ericsson and Nokia—who view defense markets as increasingly strategic—this alignment could bring both opportunity and complexity.

DeepSig’s trajectory extends beyond OCUDU. The company’s technology originated from research by Tim O’Shea, now CTO, during his tenure at Virginia Tech, where he explored deep learning’s application to wireless signal processing. “You can apply deep learning to enhance the way communication systems operate by replacing many of the traditional algorithms,” said Jim Shea. While these methods do not circumvent theoretical limits such as Shannon’s Law, small efficiency gains can yield substantial operational and economic benefits for cost-sensitive mobile operators.

As DeepSig and peers continue to redefine how intelligence is integrated into the RAN, their work signals a shift toward AI-native architectures—where machine learning, rather than handcrafted algorithms, becomes the foundation for next-generation network optimization.

References:

https://www.lightreading.com/5g/small-deepsig-is-at-heart-of-ai-ran-challenge-to-ericsson-nokia

Accelerating 5G vRAN, AI-RAN, and 6G on OCUDU, “the Linux of RAN”

AI-RAN Reality Check: hype vs hesitation, shaky business case, no specific definition, no standards?

Ericsson goes with custom silicon (rather than Nvidia GPUs) for AI RAN

Dell’Oro: RAN Market Stabilized in 2025 with 1% CAG forecast over next 5 years; Opinion on AI RAN, 5G Advanced, 6G RAN/Core risks

Dell’Oro: Analysis of the Nokia-NVIDIA-partnership on AI RAN

RAN silicon rethink – from purpose built products & ASICs to general purpose processors or GPUs for vRAN & AI RAN

Dell’Oro: AI RAN to account for 1/3 of RAN market by 2029; AI RAN Alliance membership increases but few telcos have joined

InterDigital led consortium to advance wireless spectrum coexistence & sharing

Telecom sessions at Nvidia’s 2025 AI developers GTC: March 17–21 in San Jose, CA

Sources: AI is Getting Smarter, but Hallucinations Are Getting Worse

Huawei FY2025: 2.2% YoY revenue increase; strategic pivot to AI and intelligent automotive solutions

Overview:

Huawei has released its 2025 audited financial results, reporting total revenue of CNY 880.9bn ($127.6bn) — a 2.2% YoY increase. The report highlights a significant expansion in profitability, with operating profit surging 22.1% to CNY 96.9bn ($14bn). That translated to an operating profit margin of 11%, up 180 bps from the 9.2% recorded in 2024.
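
As a quick sanity check on the figures above, the sketch below recomputes the 2025 operating margin and backs out the implied 2024 margin from the two reported growth rates. The variable names are illustrative, and the 2024 values are derived from the rounded percentages rather than taken from the report.

```python
# Reported 2025 figures (CNY bn) and year-over-year growth rates.
revenue_2025 = 880.9
op_profit_2025 = 96.9
revenue_growth = 0.022
op_profit_growth = 0.221

margin_2025 = op_profit_2025 / revenue_2025

# Implied 2024 values, derived by reversing the growth rates (approximate).
revenue_2024 = revenue_2025 / (1 + revenue_growth)
op_profit_2024 = op_profit_2025 / (1 + op_profit_growth)
margin_2024 = op_profit_2024 / revenue_2024

# One basis point is 0.01 percentage points.
change_bps = (margin_2025 - margin_2024) * 10_000

print(f"2025 margin: {margin_2025:.1%}")  # 11.0%
print(f"2024 margin: {margin_2024:.1%}")  # 9.2%
print(f"change: {change_bps:.0f} bps")
```

The unrounded change comes out slightly under 180 bps. The 180 bps figure follows from subtracting the rounded margins (11.0% minus 9.2%).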

Image Credit: Imago/Alamy Stock Photo

……………………………………………………………………………………………….

“In 2025, Huawei’s overall performance remained steady,” said Sabrina Meng, Huawei’s Rotating Chairwoman. “I would like to thank our customers for your ongoing trust and support. Thanks also to consumers for choosing Huawei, as well as suppliers, partners, and developers around the world for working with us. Of course, we couldn’t do any of this without the support of every Huawei employee. Thank you for your hard work, and also your families for their steadfast support.”

In 2025, Huawei’s connectivity business weathered the impact of industry investment cycles, while its computing business continued to seize opportunities in AI. The consumer business worked to overcome formidable challenges, driving the HarmonyOS ecosystem to cross a new threshold in user experience. Huawei’s digital power business continued to place quality before all else. Huawei Cloud honed its competitiveness with a focus on core services, and the company’s intelligent automotive solutions grew rapidly.

………………………………………………………………………………………….

Pivot to Intelligent Automotive Solutions:

Huawei is aggressively diversifying and placing a massive strategic bet on the automotive sector to drive future growth. Its Intelligent Automotive Solutions business is experiencing explosive growth, with revenue increasing by over 400% in 2024 to 26.35 billion yuan ($3.62bn).

In 2025, the unit surged another 72% to CNY 45 billion (approx. $6.2bn). Huawei does not manufacture its own cars directly but operates as a top-tier supplier and technology partner (similar to “Bosch”) via its Harmony Intelligent Mobility Alliance (HIMA). Huawei continues to invest heavily in its “future-oriented” auto and AI businesses.

Revenue Breakdown by Segment & Geography:

- Infrastructure & Solutions: Remains the primary anchor, contributing 42.6% of total revenue (up 2.6% YoY).

- Consumer Business: Accounted for 39.1% of revenue, maintaining a steady recovery with 1.6% YoY growth.

- Intelligent Automotive Solutions (IAS): The high-growth outlier, with revenues spiking 72.1% YoY to CNY 45bn, now representing 5.1% of the total portfolio.

- Geographic Mix: Domestic China operations generated ~70% of revenue. International footprints were led by EMEA (18.3%), followed by Asia-Pacific (5.7%) and the Americas (4.2%).

R&D Intensity and Ecosystem Strategy:

Huawei continues to maintain one of the industry’s highest reinvestment rates. Its R&D expenditure rose 7% last year to CNY 192.3bn ($27.9bn), or 21.8% of annual revenue.

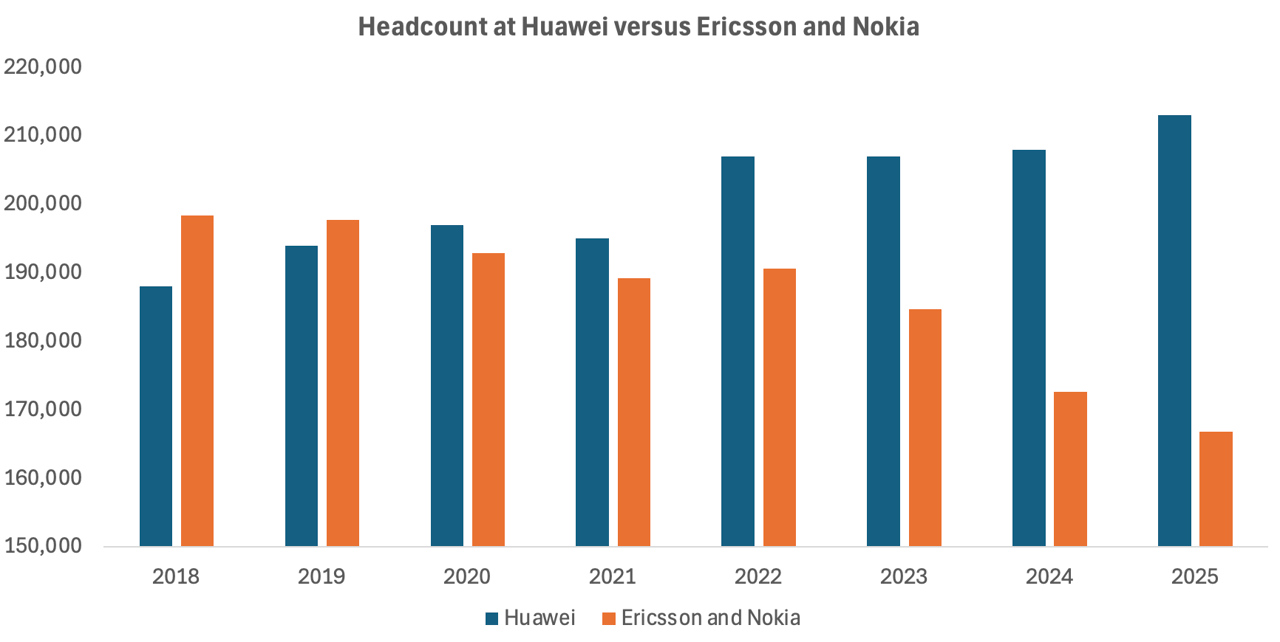

In sharp contrast, Ericsson—whose portfolio remains heavily centered on 5G—reduced its R&D outlay by 9% to SEK 48.9 billion (about $5.2 billion). At 21% of sales, Ericsson’s R&D intensity was largely in line with Huawei’s. Nokia, meanwhile, outpaced both rivals in relative terms, allocating 23% of revenue—roughly €4.6 billion ($5.3 billion)—to R&D, up 7% year over year. Most of that increase stemmed from the February 2025 acquisition of optical systems vendor Infinera, which expanded Nokia’s technology base and R&D footprint.
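
Because the three vendors report in different currencies, R&D intensity (R&D spend divided by revenue) is the comparable metric. The sketch below recomputes Huawei's ratio from the reported figures and backs out the revenue implied by the rounded percentages quoted for Ericsson and Nokia. Those implied revenues are approximations derived here, not reported numbers, and the function names are illustrative.

```python
def rd_intensity(rd_spend: float, revenue: float) -> float:
    """R&D spend as a fraction of revenue (same currency for both inputs)."""
    return rd_spend / revenue


def implied_revenue(rd_spend: float, intensity: float) -> float:
    """Revenue implied by a spend figure and a (rounded) intensity."""
    return rd_spend / intensity


# Huawei: both inputs are reported figures in CNY bn.
huawei = rd_intensity(192.3, 880.9)

# Ericsson and Nokia: only spend and a rounded percentage are quoted,
# so the revenue values below are rough approximations.
ericsson_sales_sek_bn = implied_revenue(48.9, 0.21)
nokia_sales_eur_bn = implied_revenue(4.6, 0.23)

print(f"Huawei R&D intensity: {huawei:.1%}")  # 21.8%
print(f"Ericsson implied sales: ~SEK {ericsson_sales_sek_bn:.0f}bn")
print(f"Nokia implied sales: ~EUR {nokia_sales_eur_bn:.0f}bn")
```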

The huge divergence lies in workforce trends. As reported by Light Reading, Ericsson and Nokia have collectively shed nearly 28,000 positions since 2022, equivalent to about 15% of their combined headcount that year. While growing automation and AI integration have arguably improved operational efficiency, the scale of these reductions also reflects a cooling investment climate among operators. With telco spending on 5G deployments tapering off, Europe’s two large network equipment vendors are continuing layoffs.

In contrast, Huawei’s workforce has continued to increase as it has pushed into new industrial sectors. Since 2021, when Huawei suffered its worst-ever sales decline, the Chinese behemoth has added about 18,000 employees to its payroll, according to annual reports. Around 5,000 of them were recruited last year, including 1,000 in R&D alone. That resulted in 213,000 Huawei employees in 2025.

The increased hiring boosted overall operating costs, including R&D expenditure, by 7.2%, to about RMB 334 billion ($48.5 billion).

Source & Graph Credit: Light Reading

………………………………………………………………………………………………………………….

Moving forward, China’s largest IT vendor’s roadmap prioritizes:

- Full-Stack AI Integration: Embedding AI and carrier-grade security across the entire product lifecycle and network architecture.

- Strategic Domain Expansion: Increasing CapEx and R&D in connectivity, cloud, and autonomous driving.

- Ecosystem Sovereignty: Scaling the Ascend (AI), Kunpeng (Computing), and HarmonyOS ecosystems to drive vendor-agnostic collaboration and industry-wide adoption.

Meng stressed, “We are moving toward a future that is full of uncertainty, so we have to remain true to our strategy and maintain strategic focus. We will translate strategy to execution, keep cultivating the developer ecosystem, and pursue high-quality development.”

………………………………………………………………………………………………………………….

References:

https://www.huawei.com/en/news/2026/3/annual-report-2025

https://www.lightreading.com/5g/huawei-sales-growth-plummeted-in-2025-as-it-gained-5-000-workers

Huawei unveils AI Centric Network roadmap, U6 GHz products, 5G Advanced strategy and SuperPoD cluster computing platforms

Huawei, Qualcomm, Samsung, and Ericsson Leading Patent Race in $15 Billion 5G Licensing Market

Huawei Cloud Review and Global Sales Partner Policies for 2026

Huawei’s Electric Vehicle Charging Technology & Top 10 Charging Trends

Huawei to Double Output of Ascend AI chips in 2026; OpenAI orders HBM chips from SK Hynix & Samsung for Stargate UAE project

Omdia on resurgence of Huawei: #1 RAN vendor in 3 out of 5 regions; RAN market has bottomed

Huawei launches CloudMatrix 384 AI System to rival Nvidia’s most advanced AI system

U.S. export controls on Nvidia H20 AI chips enables Huawei’s 910C GPU to be favored by AI tech giants in China

AI-RAN Reality Check: hype vs hesitation, shaky business case, no specific definition, no standards?

Introduction:

The narrative surrounding “AI-RAN” — a term thrust into the spotlight by Nvidia — may have left many believing that boatloads of GPUs are already powering baseband compute in RAN equipment across the world’s seven million mobile sites. In truth, the reality is far more nascent.

Among major RAN vendors, Nokia stands alone in adapting baseband software for GPU acceleration. Yet even Nokia does not anticipate commercial readiness until late 2026, as confirmed by its Chief Technology Officer, Pallavi Mahajan, during the company’s MWC press conference earlier this year. For now, no operator has announced a commercial deployment — despite the buzz around trials.

Early Movers, Limited Momentum:

Much of the current AI-RAN activity centers on two operators: T-Mobile US and Japan’s SoftBank. At MWC, T-Mobile’s Executive Vice President of Innovation and ex-CTO, John Saw, acknowledged the limited availability of deployable solutions, quipping that he hoped Nokia would deliver an AI-RAN product within the year. Nokia CEO Justin Hotard quickly assured him that such a milestone was indeed on track.

Still, the debut of a GPU-based RAN stack does not imply an imminent large-scale rollout. Without tangible network performance or cost advantages over existing virtualized or disaggregated RAN approaches, operators are unlikely to move past controlled trials.

SoftBank, while often positioned as an AI-RAN pioneer, remains cautious. As Ryuji Wakikawa, Vice President of its Advanced Technology Division, outlined last year, the operator aims to deploy only a handful of AI-RAN sites over the next fiscal cycle. Transitioning from testing to carrying live commercial traffic, he emphasized, demands a significant maturity leap in quality and feature completeness.

Beyond Hype: Limited Commercial Engagement:

Elsewhere, Indonesia’s Indosat Ooredoo Hutchison (IOH) was heralded in 2025 as the first operator in Southeast Asia pursuing AI-RAN. More than a year later, authoritative sources indicate IOH’s work remains confined to its research facility in Surabaya, with no near-term plans for GPU investment at cell sites until measurable value is demonstrated.

The challenge for Nokia — and for GPU-backed AI-RAN broadly — is convincing operators that general-purpose accelerators offer sufficient performance or efficiency gains for most RAN workloads. T-Mobile and SoftBank continue evaluating both Nokia and Ericsson, whose AI-RAN philosophies diverge sharply. Nokia is developing GPU-based baseband software, while Ericsson maintains its focus on custom silicon and CPU architectures.

Divergent Architectures and Use Cases:

Ericsson contends that no core RAN performance enhancements intrinsically require GPUs. Its ongoing collaboration with Nvidia leverages the latter’s Grace CPU technology rather than its GPU portfolio, reserving GPU acceleration only for compute-intensive functions like forward error correction (FEC).

If Ericsson’s premise holds, GPUs in the RAN become justifiable only when supporting AI inference workloads. Even then, inference at every radio site remains improbable. A more incremental strategy — deploying GPUs selectively at edge locations where AI workloads justify their cost — may prove more practical.

This modular approach aligns with existing virtual RAN deployments based on Intel CPUs, which already include native FEC acceleration. “It is an off-the-shelf card that you can slide right into an HPE or Dell or Supermicro server,” said Alok Shah, the vice president of network strategy for Samsung Networks. “That gets you the edge functionality you are looking for.”

Rethinking the Economic Case for AI RAN:

Initially, Nvidia positioned GPUs for AI-RAN as viable only if broadly utilized for AI inference across the RAN. Following its strategic alignment with Nokia, however, the company has softened its stance — now suggesting that appropriately sized, power-efficient GPUs could make sense even when dedicated solely to baseband computation.

For now, the global RAN landscape is far from GPU-saturated. AI-RAN remains an exploratory frontier, one testing not only the technical feasibility of GPUs at the edge but also the business case for re-architecting a trillion-dollar telecom infrastructure around them.

The AI models suitable for RAN environments must be compact and efficient, far slimmer than those that drive data center-scale AI. There’s no room for the massive, parameter-heavy neural networks that justify a GPU’s cost or energy appetite. In that light, a GPU looks less like a breakthrough and more like a mismatch — a chainsaw brought to a task better handled with a sharp pair of scissors.

Evaluating the Case for AI-RAN Acceleration:

The central question is whether GPUs can deliver meaningful benefits over custom silicon or conventional CPUs for RAN compute. Ericsson’s engineers argue that AI models deployed at the RAN must remain relatively lightweight, with far fewer parameters than those used in large-scale data centers. Excessive model complexity could introduce signaling delays unacceptable in real-time radio environments. In this context, deploying a GPU for such workloads might seem disproportionate — a high-powered tool for a low-demand task.

The most compelling defense of GPU-based RAN acceleration came from Ronnie Vasishta, Nvidia’s Senior Vice President for Telecom, who told Light Reading last summer, “The world is developing on Nvidia.” His point underscores a shift in semiconductor economics: the cost and risk of building dedicated silicon for a mature and shrinking RAN market make general-purpose processors — supported by large-volume ecosystems — increasingly attractive alternatives.

Intel’s difficulties further illustrate this dynamic. Despite $53 billion in revenue during 2025, the former microprocessor king barely broke even, following a $19 billion loss the previous year. A major restructuring cut its headcount by nearly 24,000, and its planned spinoff of the Network and Edge division, which serves telecom infrastructure customers, was ultimately abandoned in December. Nvidia, the world’s most valuable company, may be eager to step into that space, but the economic logic seems upside down. Wireless network operators are looking to reduce costs, not import data center economics into the RAN.

Ecosystem or Echo Chamber?

Nvidia’s Aerial platform and CUDA-based software ecosystem do present a compelling story: open infrastructure, modular APIs, and space for smaller developers to innovate alongside giants like Nokia. On paper, it’s an alluring image of democratized RAN software. In practice, it ties the industry even more tightly to a vertically integrated, proprietary ecosystem.

Nokia appears comfortable with that trade-off. Nokia CTO Pallavi Mahajan’s recent blog post framed AI-RAN as a vehicle for “software speed and innovation.” The post added, “Nokia’s AI-RAN initiative begins with a simple observation: AI is changing not only how networks are operated, but also the nature of the traffic they carry. AI workloads have already reached massive scale, with mobile devices accounting for more than half of AI interactions. Large language model interactions introduce richer uplink flows and burstier patterns as devices continuously send context to models.”

Indeed, that may be true someday. But for now, most wireless network operators need stable, cost-efficient networks, not AI-driven complexity or GPU-level power draw.

Image Credit: Nokia

Conclusions:

The uncomfortable truth is that AI-RAN feels more like a vendor-driven experiment than an operator-driven demand. Until someone proves that GPUs in the RAN deliver a measurable payoff — in performance, cost, or operational simplicity — the whole concept risks joining the long list of telecom “game-changers” that never made it past the trial stage. The hype cycle is predictable; the economics are not. Unless that equation changes, the real intelligence may be knowing when not to deploy AI RAN.

………………………………………………………………………………………………………………

In a Substack post today, Sebastian Barros writes: What Does AI-RAN Even Mean?

Despite the crazy hype, there is no definition for AI-RAN. Today it is at best a vibe, a dangerous reality for an industry that moves on strict standards that are currently completely absent.

The AI RAN hype is crazy right now. But despite the endless talk and vendor announcements, there is no actual technical definition of what it even means. As wild as it sounds for an industry built on strict 3GPP and O-RAN standards (those are specs, not standards), AI RAN is currently just a vendor interpretation designed to move hardware. Moreover, telecom has been using AI in the RAN since before it was cool. In fact, we were among the first industries to use neural networks in signal processing back in the 80s.

The problem is that treating AI-RAN as a marketing narrative rather than a rigid standard actively stalls progress. When the definition of AI-RAN is as different as night and day depending on which OEM you ask, it becomes impossible for any Telco to accurately model TCO or make solid CAPEX decisions.

Editor Notes:

- ITU-R’s IMT-2030 framework (Recommendation ITU-R M.2160-0) calls for an AI-native new air interface and AI-enhanced radio networks, but does not mention Nokia’s AI RAN.

- 3GPP Release 18 and later have study/work items on AI/ML for RAN functions such as energy saving, load balancing, mobility optimization, and AI/ML on the RAN air interface, but again no specifics of an “AI RAN” as such have been discussed, let alone agreed upon.

- 3GPP Release 19 continues and expands this work, with reporting that it builds on Release 18’s normative work and adds new AI/ML-based use cases for NG-RAN. In other words, 3GPP does have AI-RAN-related specs in progress and some normative features, but they are distributed across multiple RAN work items rather than packaged as one standalone “AI RAN” specification.

- The AI RAN Alliance “is dedicated to driving the enhancement of RAN performance and capability with AI.” However, it has not yet produced any implementable specifications for AI RAN, and only four carriers are “executive members”: Vodafone, T-Mobile, SK Telecom, and SoftBank (which is a conglomerate).

In Japan, NTT Docomo holds the largest cellular market share, with KDDI second, followed by SoftBank and the rapidly expanding Rakuten Mobile.

References:

https://www.lightreading.com/5g/ai-ran-lots-of-talk-little-action-no-guarantees

https://www.nokia.com/blog/ai-ran-bringing-software-speed-innovation-into-the-radio-network/

Ericsson goes with custom silicon (rather than Nvidia GPUs) for AI RAN

Dell’Oro: RAN Market Stabilized in 2025 with 1% CAG forecast over next 5 years; Opinion on AI RAN, 5G Advanced, 6G RAN/Core risks

Dell’Oro: Analysis of the Nokia-NVIDIA-partnership on AI RAN

RAN silicon rethink – from purpose built products & ASICs to general purpose processors or GPUs for vRAN & AI RAN

Dell’Oro: AI RAN to account for 1/3 of RAN market by 2029; AI RAN Alliance membership increases but few telcos have joined

Fiber Broadband Association Middle Mile WG: how to use “Digital Infrastructure Networks” for coordinated fiber backbone investments

The Fiber Broadband Association (FBA) today released guidance from its Middle Mile Working Group (WG) which outlines how states can strengthen digital infrastructure through coordinated fiber backbone investment. Fiber is the foundation of AI, powering the high-capacity, low-latency, secure connectivity that links data centers, cloud infrastructure, and the communities that depend on them. To meet rising national demand, the U.S. must scale fiber deployment 2.3x by 2029. This goal requires accelerated infrastructure builds and strong coordination among states, utilities, and industry partners.

Digital Infrastructure Networks are strategic fiber optic systems that connect the core internet backbone to last-mile broadband providers. By strengthening these middle-mile connections, states can reduce the cost of broadband deployment, improve network resiliency, and expand connectivity to unserved and underserved communities.

“Middle-mile infrastructure is what allows broadband networks to scale,” said Sachin Gupta, Chair of the Middle Mile Working Group and Vice President of Business and Technology Strategies at Centranet. “When high-capacity fiber backbones are located closer to underserved communities, providers can extend last-mile networks more affordably, reach more locations, operate more efficiently, and better serve communities across the state.”

Among the recommendations:

- Coordinate infrastructure projects across agencies to streamline deployment and reduce unnecessary construction

- Implement “dig once” policies that install conduit or fiber whenever roads or utility corridors are opened for construction

- Leverage state-owned assets, including rights-of-way, existing fiber routes, and utility infrastructure

- Modernize permitting and coordination processes to accelerate broadband builds

FBA will further explore these strategies during two Middle Mile Working Group breakout sessions at Fiber Connect 2026, taking place Tuesday morning. The sessions include:

- Rural Collaboration, Infrastructure Planning, and Sustaining Affordable, High-Performance Middle Mile Broadband

- Unlocking New Middle Mile Opportunities for ISPs and Community Networks

……………………………………………………………………………………………………………………………………………………………………………………………………………………………………

Technical Topology: The DWDM Advantage:

- Massive Spectral Efficiency: Multiplexing up to 96+ channels onto a single fiber, with each wavelength supporting 100G, 400G, or 800G data rates.

- Scalable Architecture: Capacity can be increased incrementally by lighting new wavelengths without forklift upgrades or additional trenching.

- Resilient Topologies:

  - Ring Networks: Often preferred for regional backhaul, utilizing Optical Add/Drop Multiplexers (OADMs) to provide self-healing 1+1 protection and sub-50ms failover.

  - Mesh Networks: The gold standard for reliability, offering multiple diverse paths to ensure uptime even during multiple fiber cuts.

- Long-Haul Performance: Utilizing Erbium-Doped Fiber Amplifiers (EDFAs) and Raman amplification to maintain signal integrity over spans exceeding 1,000 km without electronic regeneration.
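To put the channel counts above in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes exactly 96 lit channels (the list says "96+") and treats the per-wavelength figures as raw line rates, ignoring framing and FEC overhead. The function names and the 10 Tb/s demand figure are hypothetical, chosen only for illustration.

```python
# Illustrative arithmetic only. The channel count (96) and per-wavelength
# rates (100G/400G/800G) come from the list above; everything else is an
# assumption made for this sketch.

CHANNELS = 96                  # assumed: exactly 96 wavelengths on one fiber
RATES_GBPS = (100, 400, 800)   # per-wavelength line rates named in the text


def fiber_capacity_tbps(channels: int, rate_gbps: int) -> float:
    """Raw aggregate capacity of one fiber in Tb/s, with every channel at rate_gbps."""
    return channels * rate_gbps / 1000


def channels_needed(demand_gbps: int, rate_gbps: int) -> int:
    """Number of wavelengths that must be lit to carry demand_gbps (ceiling division)."""
    return -(-demand_gbps // rate_gbps)


if __name__ == "__main__":
    for rate in RATES_GBPS:
        print(f"{CHANNELS} x {rate}G = {fiber_capacity_tbps(CHANNELS, rate)} Tb/s")

    # Hypothetical demand of 10 Tb/s, served with 400G wavelengths.
    print("Wavelengths to light:", channels_needed(10_000, 400))
```

Under these assumptions a single fiber scales from 9.6 Tb/s at 100G per wavelength to 76.8 Tb/s at 800G. The `channels_needed` helper mirrors the "light new wavelengths" model of incremental growth: capacity is added one wavelength at a time rather than by trenching new fiber.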

References:

Learn more: fiberconnect.fiberbroadband.org

Digital Infrastructure Networks: Meeting the Broadband Challenge for State Governments

Australia’s NBN and Nokia demonstrate multi-generation optical technologies concurrently over existing FTTP infrastructure

Automating Fiber Testing in the Last Mile: An Experiment from the Field

U.S. fiber rollouts now pass ~52% of homes and businesses but are still far behind HFC

Highlights of FiberConnect 2024: PON-related products dominate

Fiber Broadband Association: 1.4M Fiber Miles Needed for 5G in Top 25 U.S. Metros

AT&T expands its fiber-optic network amid slowdown in mobile subscriber growth